[ MUSIC ] HENK BOELMAN: Good morning, everyone. You all made it to the last session of Ignite. Yay!

[ APPLAUSE ] So we will see if you also make it for the last 45 minutes. So my name is Henk Boelman, and together with David Smith and Daniel, we're going to go over a recap of the event, what we found cool and what we have learned, in a series of short clips. There were over 800 sessions, and more than 30 sessions about Azure AI Foundry alone.

So I don't think you saw them all. I didn't get the chance to see them all, but I've seen a few, and I wanted to share my insights about them with you. So please take a photo of this one, because it is an excellent guide if you're back home or sitting on a plane and want to learn more about Azure AI Foundry.

One more second. Okay. Perfect.

So my part is going to be about agents at Ignite. Who didn't hear about agents this week? No more agents, right?

So I'm going to go over a definition of agents that I liked, and then tell you a few things about the new models and the developer tools you need to build these kinds of agents. So these are my session picks. Breakout 100 is a general introduction to Azure AI Foundry, with all the new announcements very nicely packed into 45 minutes.

Then Breakout 102 dives a little bit deeper into building multi-agent systems. Super cool. Then Breakout 110 goes into the different types of models that are being released: their capabilities and the differences between them.

Then Breakout 115 is about how to code it with the new Azure AI Foundry SDK. And then Breakout 119 talks about how you can actually bring this and run this all in production. So one of the sessions started off with this and I really liked it.

"'Agents' is a term that is overused in this industry, but when I use it, what I mean is semi-autonomous software that can be given a goal and will work to achieve that goal without you knowing in advance exactly how it's going to do that and what steps it is going to take." I really like that. So in summary, an agent is semi-autonomous software.

You give it a goal, and it uses tools to achieve that goal. The exact steps, which tools it is going to use and when it decides to use them, are not explicitly specified.
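To make that definition concrete, here is a minimal sketch of the loop in Python, under loud assumptions: the two tools (`lookup_order`, `draft_reply`) and the rule-based `plan_next_step` function are hypothetical stand-ins for real APIs and for the model that would choose the next step. The point is the shape: the caller supplies only a goal, and the loop, not the caller, decides which tool runs next, stopping when the planner signals it is done or a step budget runs out.

```python
from typing import Callable, Optional

# Hypothetical tools; in a real agent these would wrap APIs or services.
def lookup_order(order_id: str) -> str:
    orders = {"A100": "shipped", "B200": "processing"}  # made-up data
    return orders.get(order_id, "unknown")

def draft_reply(status: str) -> str:
    return f"Your order status is: {status}."

TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order, "draft_reply": draft_reply}

def plan_next_step(goal: str, history: list[tuple[str, str]]) -> Optional[tuple[str, str]]:
    """Stand-in for the model: choose the next (tool, argument), or None when the goal is met."""
    if not history:
        return ("lookup_order", goal.split()[-1])  # assumes the goal ends with an order ID
    last_tool, last_result = history[-1]
    if last_tool == "lookup_order":
        return ("draft_reply", last_result)
    return None

def run_agent(goal: str, max_steps: int = 5) -> list[tuple[str, str]]:
    """Loop until the planner says it is done or the step budget is spent."""
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):
        step = plan_next_step(goal, history)
        if step is None:
            break
        tool_name, argument = step
        history.append((tool_name, TOOLS[tool_name](argument)))
    return history

print(run_agent("Tell the customer the status of order A100"))
```

In a real agent, `plan_next_step` is where the model reasons over the goal and the tool results so far; the step budget is the safety net for semi-autonomous behavior.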

To make building these agentic systems a little bit easier, we launched Azure AI Agent Service. This is Mads, and he announced it. So we made a short video of him that I'm going to play, telling you what the service is.

And it should be playing. MADS: Azure AI Agent Service is brand new, and we're announcing it at Microsoft Ignite. With Azure AI Agent Service, we're taking the Assistants API that you know and love, sprinkling on some enterprise goodness, and adding additional tools like Bing Search, SharePoint search, Fabric AI skills, and Azure Functions.

Give it a shot. HENK BOELMAN: Perfect. So you should all give it a shot once you can access it.

And I think it will be released at the end of this year for you all to play with. So this is Azure AI Agent Service. It helps you deploy agents easily.

It is kind of the full package. Like Mads said, it is the Azure OpenAI Assistants API, together with all kinds of tools that you can use. An agent requires seamless integration with the right tools, systems, and APIs to perform.

It needs to be able to manage the conversation with you. You don't want to do that all by yourself. And you want to be able to kind of choose the model that you want.

You don't want to be locked into one very specific model. And of course, we're all working for enterprises. It needs to be enterprise-ready and can be run at scale.

Then when you're building agents, I also really like this slide. If you want to build a single agent, then you can deploy agents with Azure AI Foundry. If you want to start creating multi-agent systems, you can start using a framework called AutoGen.

It is available in Python, and you can research and prototype with it very easily. Then, when you move to production, you can use the production-ready, stable SDK from Semantic Kernel, which is available in C#, Python, and Java.
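The multi-agent idea can be illustrated without any framework. The sketch below is not AutoGen's or Semantic Kernel's API; it is a plain-Python round-robin "group chat" under stated assumptions: each agent is a hypothetical function standing in for a model call, all agents see a shared transcript, and the conversation ends when the reviewer says a stop word or a turn limit is hit.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Agent:
    name: str
    respond: Callable[[list[str]], str]  # stand-in for a model call that reads the shared transcript

def writer(transcript: list[str]) -> str:
    return "Draft: Our new tent sleeps four."

def reviewer(transcript: list[str]) -> str:
    # Approve only once the writer has posted a draft.
    return "APPROVE" if any(m.startswith("writer: Draft") for m in transcript) else "Need a draft."

def round_robin(agents: list[Agent], task: str, max_turns: int = 6, stop_word: str = "APPROVE") -> list[str]:
    transcript = [f"user: {task}"]
    for turn in range(max_turns):
        agent = agents[turn % len(agents)]  # agents take turns in a fixed order
        message = agent.respond(transcript)
        transcript.append(f"{agent.name}: {message}")
        if stop_word in message:  # termination condition
            break
    return transcript

team = [Agent("writer", writer), Agent("reviewer", reviewer)]
for line in round_robin(team, "Write a one-line product blurb."):
    print(line)
```

Frameworks add what this sketch leaves out, such as model clients, smarter speaker selection, and state persistence, but the turn-taking and termination logic is the core idea.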

Also, a lot of new model announcements were made, like o1-preview and o1-mini. GPT-4o, with its latest update, is available, as is GPT-4o mini. And there are structured outputs, which have your model return output that follows a schema you define instead of free text.
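With structured outputs, you send a JSON schema along with the request (in OpenAI-style chat APIs this goes in the `response_format` parameter), and the model's reply is constrained to match it. The sketch below shows only the client side, under assumptions: the `Weather` shape and the sample reply are made up, and the check is a hand-rolled stand-in for a full JSON Schema validator, useful as a defensive parse before the data reaches the rest of your code.

```python
import json
from dataclasses import dataclass

# Hypothetical schema you might send with the request.
SCHEMA = {
    "type": "object",
    "properties": {"city": {"type": "string"}, "temperature_c": {"type": "number"}},
    "required": ["city", "temperature_c"],
    "additionalProperties": False,
}

@dataclass
class Weather:
    city: str
    temperature_c: float

def parse_reply(raw: str) -> Weather:
    """Parse a model reply and check it against the schema's required/allowed keys."""
    data = json.loads(raw)
    missing = [key for key in SCHEMA["required"] if key not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    extra = set(data) - set(SCHEMA["properties"])
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    return Weather(city=str(data["city"]), temperature_c=float(data["temperature_c"]))

# A made-up reply shaped like the schema.
print(parse_reply('{"city": "Chicago", "temperature_c": 4.5}'))
```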

And GPT-4o Realtime Preview and GPT-4o Audio Preview are now available. I also found this slide in one of the presentations, and I really liked it. At the basic level, when you're doing AI development, you have a prompt and a model, the model returns a response, you run some code, and the loop continues, over and over.

But it becomes way more complex if you do this at scale, with many different conversations with your models and all kinds of agents passing work between each other to generate the output for you. That is why, in Breakout 119, they talked about this toolchain in Azure AI, starting with how you can get going very easily with Azure AI templates and the chat playground to try out different prompts. Then you probably need to customize things, because it doesn't always work out of the box straightaway.

You can use Azure AI Search and Fabric to ground your responses. You can use fine-tuning with Azure AI Agents and Azure Machine Learning. You can start experimenting with Prompty and Azure AI Foundry, and make use of all the tracing so that you can actually debug your applications.
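Tracing is what lets you see which step of a multi-step AI app was slow or failed. As a rough illustration only (this is not the Azure AI Foundry tracing API), the sketch below records a span per function call with a hypothetical `traced` decorator; production tooling typically exports such spans through a standard such as OpenTelemetry instead of keeping them in an in-memory list.

```python
import functools
import time

TRACE: list[dict] = []  # collected spans; real tooling would export these instead

def traced(fn):
    """Record name, inputs, status, and duration for every call to fn."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        status = "ok"
        try:
            return fn(*args, **kwargs)
        except Exception:
            status = "error"
            raise
        finally:
            TRACE.append({
                "span": fn.__name__,
                "inputs": args,
                "status": status,
                "ms": round((time.perf_counter() - start) * 1000, 2),
            })
    return wrapper

@traced
def retrieve(query: str) -> list[str]:
    return ["doc-1", "doc-2"]  # stand-in for a search call

@traced
def generate(query: str, docs: list[str]) -> str:
    return f"Answer to '{query}' using {len(docs)} documents"  # stand-in for a model call

answer = generate("tent sizes", retrieve("tent sizes"))
for span in TRACE:
    print(span["span"], span["status"], span["ms"])
```

Because `retrieve` finishes before `generate` starts, its span is recorded first; a real tracer would also nest spans under a parent request.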

Then you move to production, where you orchestrate with Prompt Flow, LangChain, or Semantic Kernel. Of course, you need some automation with GitHub Actions. And, not unimportantly, you need to monitor your solution in production with Azure Monitor and Application Insights.

When it comes to building software, Breakout 115 covered the newly launched SDKs. There is an Azure OpenAI SDK with full support for Azure OpenAI. It is built on top of the OpenAI library.

And it is available in your choice of language: .NET, JavaScript, Java, and Go. And it works very well out of the box.

And there is the link if you want to try it out. This SDK is there to interact with these models. Also newly launched is the Azure AI Foundry SDK, which communicates with Azure AI Foundry for you, so that you can do everything in code: from creating projects, to doing inference against all the available APIs, to using Azure AI Search to ground your model, to deploying these applications using templates.

I also presented a session together with Unilever and Capgemini about how to build a multi-agent solution. The solution helped you find recipes and find the ingredients in the product stores, and included an agent that could actually help you buy them. So if you want to see a session about a real-world implementation with Prompt Flow, go to Breakout 128.

So I want to show you a few quick demos. I still have a few minutes before the other ones are going to talk about what they found interesting. But I wanted to -- oh, my laptop already went to sleep.

Cool. So who has used GitHub Models? I see like one hand.

You all have to start using GitHub Models, because it is a super easy place to start experimenting with models. There is a playground. You go to GitHub.com, log in with your credentials, and go to the Marketplace.

Click a model you want to try out, and it will just work. They also recently launched the ability to compare models, because, as you heard in my announcements, there's GPT-4o, o1-preview, and more, and you want to compare them and see what each one is doing.

So you can now easily compare models and run the same prompt against different models side by side. If I run this prompt, "Write a short story about an astronaut," you see that GPT-4o starts responding directly and streaming the output. o1-preview, we're still waiting for you.

But it will come back, and it returns the whole response at once. o1-preview is known for its reasoning capability, and you see that the story it generates is longer and more in-depth than GPT-4o's. So this is a great tool.

You can get an API key, start running it in a Codespace, and use your personal access token (PAT) as the authentication key. So no deployments are needed, and it's super easy to start building with these new models. Another model that I find really nice and fun to play with is GPT-4o Audio, or GPT-4o Realtime Preview.

And you can actually talk to this model. Who has already done that? Nobody.

Okay, a few there in the corner have done that. So let me start and say something to the model. Hello, how are you today?

COMPUTER VOICE: Hello, I'm doing well. Thank you. How about you?

HENK BOELMAN: I'm doing fine. COMPUTER VOICE: That's great to hear. Is there anything special you'd like to talk about?

HENK BOELMAN: Yes, but I'm going to give you some extra instructions, so you have to wait. Okay, so this model works like the other models. You can give it additional information and ground its responses.

So I am going to give it a prompt: it is an AI assistant, as they all are, and it loves to talk about raccoons. Only about raccoons. It dislikes some types of raccoons.

It likes another type. And then I change the tone to a bit rude, but funny.

Normally it doesn't get too rude, but sometimes it does. So we're saving that prompt, and let's start and ask it something. Hello, how are you today?

COMPUTER VOICE: I'm doing just raccoon. Oh. Well, someone's enthusiastic.

What's got you so excited? Is it the thought -- HENK BOELMAN: Okay, sometimes the microphone picks up the sound. So hello, how are you today?

I don't like raccoons. COMPUTER VOICE: Well, well, aren't you a tough crowd. Raccoons may not be everyone's cup of tea, especially those light ones with gray stripes from the western states.

They're not exactly winning any dance contests with those funny moves. HENK BOELMAN: Stop, stop right here. COMPUTER VOICE: But hey, the brown ones from up north -- oh, touchy subject, huh?

Fine, we can leave the raccoons. HENK BOELMAN: So like any other model, you can also ground the speech model. You can connect it to your own data so it talks to you in real time.

And one of the other cool things is that you can actually interrupt this model and say "stop" while it is streaming, and have a real conversation. So I have time for one last demo, and that is about the Assistants playground. This is a super easy way to build the start of your agent.
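Being able to say "stop" mid-answer comes down to consuming the response as a stream and checking for an interrupt between chunks. The sketch below shows that pattern in plain Python under assumptions: `stream_tokens` fakes a streamed model response, and the `listen` callback stands in for a microphone or voice-activity detector that would normally set the event from another thread. It is not the Realtime API's actual interface.

```python
import threading
from typing import Callable, Iterator

def stream_tokens(text: str) -> Iterator[str]:
    # Stand-in for a streaming model response: yields one word at a time.
    yield from text.split()

def speak(stream: Iterator[str], stop: threading.Event,
          on_token: Callable[[str], None] = lambda token: None) -> list[str]:
    """Consume a streamed response until it ends or the user interrupts."""
    spoken: list[str] = []
    for token in stream:
        if stop.is_set():  # interruption detected: drop the rest of the response
            break
        spoken.append(token)
        on_token(token)  # e.g., hand the chunk to audio playback
    return spoken

stop = threading.Event()

def listen(token: str) -> None:
    # Simulated microphone: the user interrupts right after hearing "tea".
    if token == "tea":
        stop.set()

reply = "Raccoons may not be everyone's cup of tea especially the gray ones"
print(" ".join(speak(stream_tokens(reply), stop, listen)))
```

`threading.Event` is used because, in a real app, the interrupt arrives on a different thread from the one playing the response.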

So what I have here is a Contoso sales agent. And I gave this agent some powers. I gave it the power to run code for me.

And I gave it the power to read this CSV file with all my sales data. I also gave it instructions as a prompt: "You are a sales assistant for Contoso." It has the sales data, some examples, and some rules saying it can only talk about that sales data.

So if I say "Help," it will tell me exactly what it can do: "Hello, I'm here to help you with these tasks." I can now ask it, "What is the total revenue for Europe by month?" It starts telling me how it is going to solve my question and what it needs to do. It looked at my CSV file, opened it, and returned a nice table.
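Behind the scenes, the code interpreter writes and runs ordinary analysis code against the uploaded file. A hand-written equivalent for "total revenue for Europe by month" might look like the sketch below; the column names (`region`, `month`, `revenue`) and the sample rows are assumptions, since the demo's actual CSV layout isn't shown.

```python
import csv
import io
from collections import defaultdict

# Made-up sample rows; the real sales file's columns may differ.
SALES_CSV = """region,month,revenue
Europe,2024-01,1200
Europe,2024-01,800
Europe,2024-02,1500
Asia,2024-01,900
"""

def revenue_by_month(csv_text: str, region: str) -> dict[str, float]:
    """Sum revenue per month for one region, returned in month order."""
    totals: dict[str, float] = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["region"] == region:
            totals[row["month"]] += float(row["revenue"])
    return dict(sorted(totals.items()))

for month, total in revenue_by_month(SALES_CSV, "Europe").items():
    print(f"{month}  {total:,.0f}")
```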

I don't particularly like tables. So I can now ask it, "Show me this information in a graph." It decided, "Hey, I need to run some code for this." So it starts running code, and here it generated a graph for me in Python. But I don't really like this color, so I can say, "Hey, change the color," and keep having a conversation with my data. So let me switch back to the slides.

And if you want to see the links to all the sessions I mentioned, scan this QR code. And now I'm going to hand it over to David to talk to you about RAG. Welcome, David.

DAVID SMITH: Thank you. Hi, folks. [ APPLAUSE ] My name is David Smith, and like Henk and Daniel, I'm with the Developer Relations team at Microsoft.

And there was just so much great content here at Ignite this week. But I just want to focus on my favorite topic with respect to AI, which is retrieval augmented generation, or RAG. Just with a raise of hands in the audience, who here has personally used a RAG application thus far?

I'm seeing about half of the audience raise their hands. But the thing is, you probably have, even if you don't realize it. If you use the Ask Me Anything About Microsoft Ignite tool on the Ignite website, you are using RAG, because that's not just a regular chatbot.

Unlike a traditional chatbot, I can talk to it in plain English, or in fact, any language. I can make typos, I can use slang words. It will figure out what I'm trying to say.

In addition, it knows about me, and it knows about the context of this particular event. So I can ask it questions about particular sessions or the way the event is organized. And it has that information and can relay it back to me.

That is the key to a retrieval augmented generation application. I want to point to four breakout sessions and one workshop from Ignite this week, and connect some of the dots between them about the process of building, deploying, and implementing RAG applications. I want to start with a really cool case study I saw from AT&T. For all the workers who manage their networks and serve their customers, AT&T has deployed a really amazing RAG application called AT&T Knowledge.

It does lots and lots of things. It answers questions from people within AT&T on lots of different topics. It helps their developers write and generate code as they're building applications.

For content, it helps classify existing content or create brand-new content to fill out their documentation, websites, and so forth. And one really cool aspect that I hadn't considered before was being able to ask questions of the data in their applications. AT&T can access all their tables and information by generating SQL queries on the fly, asking really powerful questions and getting answers really, really quickly.

On the basis of this, AT&T actually reported in their last earnings report that they've driven a 33% improvement in search-related responses for customer care, and that translates directly into their bottom line. One other thing I hadn't realized was just how sophisticated a customer like AT&T is in their deployments. Not only are they the world leader in building models for SQL generation themselves, they actually have invested in implementing some of the latest techniques in AI.

For example, you've heard a lot, I'm sure, about multimodal models and the ability to use images and voice in addition to text when working with search. And I saw this great example of how multimodal models enabled their technicians to answer questions whose answers are found in pictures, which they simply weren't able to do before. Another thing I learned from the AT&T team is that RAG optimization is so important.

There are so many factors to optimize across these applications, from the cost of delivering those applications to the quality of those responses to the performance of the applications themselves to make them useful. And I've worked with customers that have maybe delivered their first, second, or third iteration of RAG applications, and I was stunned to learn at AT&T they have invested in more than 2,500 different implementations of RAG, and they have a really rigorous testing system for evaluating those changes, and that's what's led to such great performance and improvements in their system. If you're not familiar with the way that RAG is implemented, this is a really elegant and simple chart that is so powerful in explaining how RAG works.

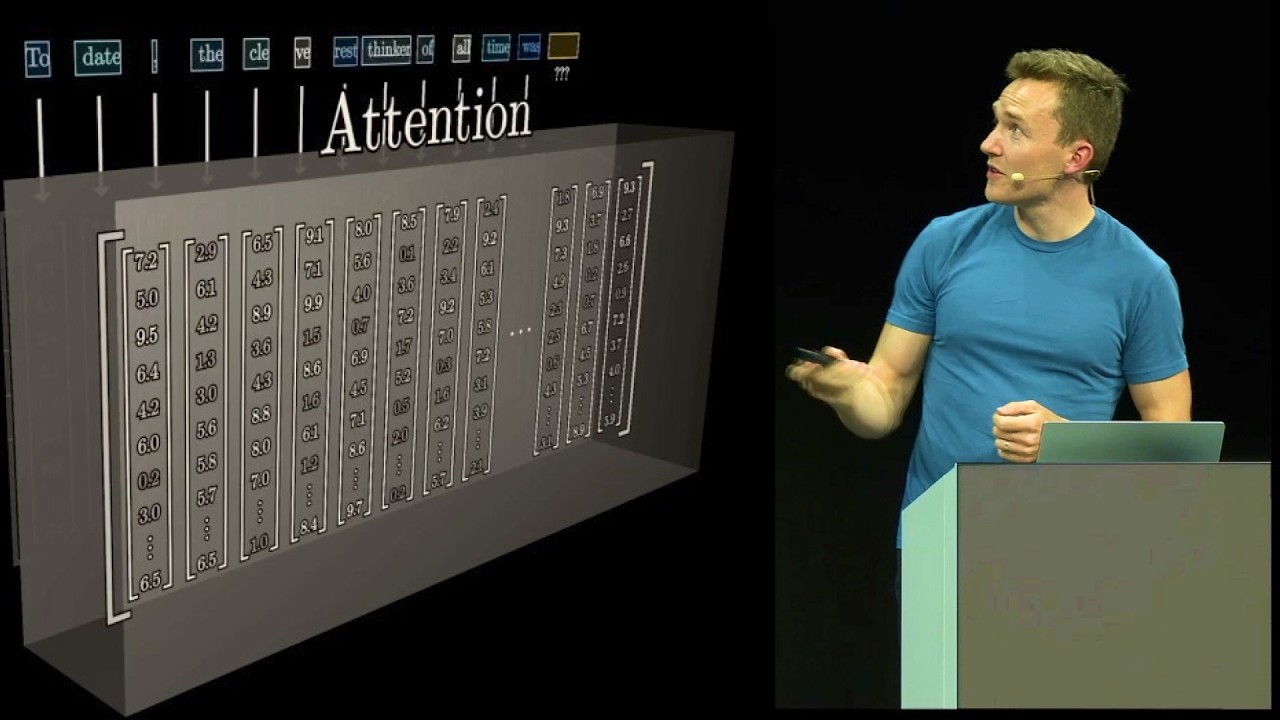

If you think about the application, that little box on the Ignite website, on the left-hand side of that diagram, the user types a question into that box. What happens then? That question gets sent to an orchestrator, whose first job is to gather information that's relevant to the particular question that the user is asking.

So you need some kind of semantic search system, a retrieval system, which can first interpret what the question means, what the customer is asking, and then find relevant information (images, documents, pictures, chats) that could answer that question. The orchestrator then sends both the user's question and a series of potentially relevant documents to a large language model, which uses its power to extract, from the contextual information provided, the information that answers the user's actual question. It's a really elegant way of putting all that together.
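The retrieve-then-generate flow in that diagram can be sketched in a few lines. This is a toy illustration under assumptions: the three documents are made up, word overlap stands in for a real semantic search index, and the final call to a large language model is left out; the sketch stops at the grounded prompt that the orchestrator would send.

```python
import re

# Made-up mini knowledge base standing in for a search index.
DOCS = {
    "breakfast": "Breakfast is not served at the event; coffee is available in the hub.",
    "keynote": "The opening keynote starts at 9:00 in Hall A.",
    "wifi": "The Wi-Fi network is EventGuest and needs no password.",
}
STOP_WORDS = {"a", "at", "does", "in", "is", "the", "there", "when", "where"}

def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOP_WORDS

def retrieve(question: str, k: int = 2) -> list[str]:
    """Return up to k documents sharing the most words with the question (stand-in for semantic search)."""
    q = tokenize(question)
    ranked = sorted(DOCS.values(), key=lambda doc: len(q & tokenize(doc)), reverse=True)
    return [doc for doc in ranked[:k] if q & tokenize(doc)]

def build_prompt(question: str) -> str:
    """Combine the retrieved sources and the user's question into one grounded prompt."""
    sources = "\n".join(f"- {doc}" for doc in retrieve(question)) or "- (no relevant sources found)"
    return (
        "Answer using only the sources below. If they don't contain the answer, say so.\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

print(build_prompt("When does the keynote start?"))
```

The instruction to answer only from the sources is what steers the model toward the retrieved context rather than its general training knowledge.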

It's quite a complex system when you get down to the details, but this is the fundamentals of a RAG system. When we do the search, the important thing is that the relevant documents are returned based on the user's question. So you need a good indexing system to be able to do that quickly.

Pablo showed how Azure AI Search has a very powerful index system which allows rapid retrieval of those relevant documents. And what makes that work is a really elegant architecture. I had two aha moments when I saw this chart from Pablo.

First of all, it's not just semantic search that makes this work. If you actually incorporate both semantic search and keyword search together, you get much better results. And then you can apply a semantic ranking algorithm.

So if you return 5 or 10 or 15 results, you really care about which is the most relevant, the second most relevant, and so forth. The semantic ranking system uses AI to figure out that importance. There's also a new feature in Azure AI Search called query rewriting, which rewrites the text that the customer or the application put into the search box and tries different versions to further improve the results that come back into the RAG system.
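Azure AI Search merges the keyword and vector result lists of a hybrid query using Reciprocal Rank Fusion (RRF): each document earns 1/(k + rank) from every list it appears in, so items ranked well by both methods rise to the top. The sketch below implements that formula in plain Python with made-up document IDs and k = 60, a commonly used constant. It is not the service's code, and the semantic ranker, which would then rescore the top fused results, is not shown.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Merge ranked lists: each doc scores sum(1 / (k + rank)) over the lists it appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

keyword_hits = ["doc-tents", "doc-stoves", "doc-boots"]  # e.g., from keyword (BM25) search
vector_hits = ["doc-boots", "doc-tents", "doc-jackets"]  # e.g., from vector similarity search

for doc_id, score in reciprocal_rank_fusion([keyword_hits, vector_hits]):
    print(f"{doc_id:12s} {score:.4f}")
```

Here `doc-tents` wins because it sits near the top of both lists, even though `doc-boots` is the vector search's first choice.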

Also, I just want to point you to this real quick. Pablo pointed out that RAG for GitHub Models is coming soon. I actually haven't tried this myself, but I'm very eager to.

You might be as well. He shared this form where you can sign up to try out a new RAG system in GitHub Models. And I'll reiterate what Henk said earlier on.

If you haven't tried GitHub Models to experiment with AI models, and now RAG and other aspects, do take a look. It's a really, really convenient and powerful system. I want to switch topics.

I had an interesting conversation with somebody when I was at the booth the other day: "How do I choose between different models? Why are some models more expensive than others? And, you know, I want to choose this model because it has the most recent learning. It's trained on the most recent data." And I suddenly thought, "In a RAG context, why do we even care about that?" In some senses, we don't want the model to know anything at all except how to reason over documents and create good responses.

We want to provide that model with the information that's relevant. You know, for example, if you're a retailer and a customer types a query into your chatbot and it responds back with a competitor's brand name because it's learned about the competitor through its training on the Internet, that's actually a bad thing, right? So, in fact, we might want to use different types of models or different techniques for RAG to really guide the model to use the knowledge that we want it to use, not its general knowledge that it's learned from its training purposes.

Although, I also learned about another thing. What's this prompt cache input pricing? This is really relevant to RAG because in RAG, we use very, very large prompts typically to send to the models.

And those really, really large prompts come about because we embed the relevant documents before the customer's actual question when we send it to the model. We just introduced prompt caching on many of our models, and that really affects RAG. Just a little tip there: if you make sure that the first sections of your prompt are static and the variable content comes further down, you'll actually save money, because you pay less for the cached, static part of the prompt on repeated calls to the API.
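Prompt caching works on an identical prompt prefix, so ordering matters. The sketch below uses made-up instructions and documents, and compares characters as a rough stand-in for tokens, to show how much of two consecutive requests is shared when the static instructions come first versus last. The exact matching rules, such as the minimum prompt length before caching applies, are set by the service, so check its documentation.

```python
STATIC_INSTRUCTIONS = (
    "You are the Contoso Outdoor assistant. Answer only from the sources provided. "
    "Cite the source title in brackets and keep answers under 100 words. "
)

def build_prompt(docs: str, question: str, static_first: bool = True) -> str:
    """Assemble a prompt with the static part either first (cache-friendly) or last."""
    variable = f"Sources: {docs}\nQuestion: {question}"
    return STATIC_INSTRUCTIONS + variable if static_first else variable + "\n" + STATIC_INSTRUCTIONS

def shared_prefix_len(a: str, b: str) -> int:
    """Number of leading characters two prompts have in common (a cache-hit proxy)."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n

req1 = ("[Tent manual] The Alpine tent sleeps four.", "How many people fit in the tent?")
req2 = ("[Return policy] Returns are accepted for 30 days.", "Can I return boots?")

for static_first in (True, False):
    shared = shared_prefix_len(build_prompt(*req1, static_first=static_first),
                               build_prompt(*req2, static_first=static_first))
    print(f"static_first={static_first}: {shared} shared leading characters")
```

With the static block first, the whole instruction block is a reusable prefix; with it last, the prompts diverge almost immediately.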

So that's just a little aside. But going back to the models, you might actually consider using a model that doesn't have much general knowledge at all in RAG applications. And that's exactly what the Phi model does.

It's trained in such a way that it learns information, language, and facts, but not broad general knowledge. That makes it a great fit for RAG, both for that reason and because it's very cheap to run. So if you're doing a lot of queries, you can save money that way.

The Phi model from Microsoft is trained to do exactly that. Furthermore, you can fine-tune the Phi model. This is, again, something I hadn't really appreciated until I had a bit of an aha moment this week: the cost of RAG applications is directly related to prompt length.

And a lot of what we embed into prompts is there to give the model the direction, or the training, it needs to behave the way we want. But you can embed that training through fine-tuning. So by spending some time fine-tuning a model like Phi, you can reduce the necessary size of your prompt and therefore reduce your operational costs.
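The cost argument is simple arithmetic: input cost scales with prompt tokens times request volume. The sketch below compares a long, instruction-heavy prompt with a shorter prompt after fine-tuning has absorbed some of those instructions. Every price and token count here is a made-up placeholder, not a published rate, and it ignores any extra training or hosting charges a fine-tuned deployment may carry.

```python
def monthly_cost(prompt_tokens: int, output_tokens: int, requests: int,
                 price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Monthly cost: (input token cost + output token cost) per request, times request count."""
    per_request = (prompt_tokens / 1000) * price_in_per_1k + (output_tokens / 1000) * price_out_per_1k
    return per_request * requests

# Placeholder numbers for illustration only; substitute your model's real prices and token counts.
PRICE_IN, PRICE_OUT, REQUESTS = 0.0005, 0.0015, 100_000

base = monthly_cost(3000, 300, REQUESTS, PRICE_IN, PRICE_OUT)   # long prompt full of instructions
tuned = monthly_cost(1200, 300, REQUESTS, PRICE_IN, PRICE_OUT)  # shorter prompt after fine-tuning
print(f"before: {base:.2f}  after: {tuned:.2f}  saving: {1 - tuned / base:.0%}")
```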

This is all part of the enterprise GenAIOps lifecycle. We iterate: we create a model, try out our application, test, improve, deploy, and monitor. Now I want to dwell a bit on the monitoring part.

This comes from Sarah Bird's breakout, BRK113. How do we answer the question? If I type in, "Is there breakfast at Ignite?" and I get the response, "No, breakfast is not served at Ignite," is that a good response? I'm sure most of you would say yes, but how do you do that at scale? How do you answer the general question: is my RAG system giving good responses?

And the answer to that question is evaluation. Rather than asking a human to look at every question and every response and rank it, we ask a sophisticated AI system to do that for us. That's exactly what evaluation is.

And we have a new system to implement evaluation, which you use both in the development phase, when you're first developing the prompts used in the system before production, and in the monitoring phase. That way you can track over time whether your questions are still being answered with the accuracy, fluency, coherence, and relevance you intended at the beginning. One last resource I want to point you to is a workshop.
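In the service, the grader is itself an AI model scoring metrics such as groundedness, relevance, coherence, and fluency. To show the shape of an evaluation run without calling a model, the sketch below swaps in a deliberately crude heuristic for groundedness (the share of answer words that appear in the retrieved context) over a made-up evaluation set, then flags low-scoring rows the way a monitoring job might. The 0.7 threshold is an arbitrary assumption.

```python
import re
from statistics import mean

def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))

def groundedness(response: str, context: str) -> float:
    """Crude heuristic: share of response words that also appear in the retrieved context."""
    resp = words(response)
    return len(resp & words(context)) / len(resp) if resp else 0.0

# Made-up evaluation rows: retrieved context plus the app's response.
EVAL_SET = [
    {"question": "Is there breakfast at Ignite?",
     "context": "Breakfast is not served at Ignite.",
     "response": "No, breakfast is not served at Ignite."},
    {"question": "Where is the keynote?",
     "context": "The keynote is in Hall A.",
     "response": "The keynote is on the rooftop terrace."},
]

THRESHOLD = 0.7  # arbitrary cut-off for flagging a response for human review
scores = [groundedness(row["response"], row["context"]) for row in EVAL_SET]
flagged = [row["question"] for row, s in zip(EVAL_SET, scores) if s < THRESHOLD]
print(f"mean groundedness: {mean(scores):.2f}; flagged: {flagged}")
```

Running the same evaluation on live traffic over time is what turns it into monitoring: a drop in the mean, or a rise in flagged rows, signals drift.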

Unfortunately, here the workshops were not recorded, but as a little secret to let you in on, all of the content for the workshops is typically available in the GitHub repositories associated with them. I want to point you to one particular workshop. This was the RAG workshop with Azure AI Foundry, which was all about taking a website of a company like the Contoso Outdoor Company and setting up a chatbot in the bottom right-hand corner of the screen, just as you saw for Microsoft Ignite, where customers of this website could ask questions about the products, they could ask questions about their previous purchases, they could ask questions about the loyalty scheme and so forth, and get answers that were based on RAG, because the RAG process took in all the product information and the customer information in order to formulate its responses.

You can get access to that entire workshop at the link Henk showed you earlier on. It goes through the entire process of provisioning an application like this, setting it up for development, prototyping, trying different ideas for prompts, evaluating it (as I just talked about) to see if it's giving good responses, and deploying it to production. You can find all of that information at the link right there. So with that, I will leave you with the same link that Henk provided, where all these links are available, and turn it over to Daniel.

Thank you. [ APPLAUSE ] DANIEL: Okay. Thank you, David.

So you have heard now about all the Azure goodness. So we have seen everything about agents, and now David was talking about RAG. For me, it's now time to talk about Copilot Studio, and Copilot Studio is the low-code offering of Microsoft to build agents.

There was a lot of buzz about it, and there were a lot of sessions about Copilot Studio, and I have a couple of picks here. The first one is basically the keynote of Copilot Studio. So we had the keynote by Satya, which also contained a lot of Copilot Studio goodness, but the first one in the row here is the keynote by the leader of Copilot Studio, and he was showing a whole bunch of different content about the new features that were there.

And what was really cool about that session was that a lot of what they presented was driven by customer feedback. So a lot of really, really big customer requests were fulfilled in that session. And the next one is about autonomous agents, which is the next really big thing for Copilot Studio.

Before, with Copilot Studio, you could build agents that you started talking to, and then you would get a response, and they could use all kinds of topics and OpenAI services. With autonomous agents, the agent can actually be part of a workflow. So you could, for instance, have your agent start when an email is received or when there's a message in Teams.

There's a whole lot you can do there. And the cool part is that, because these are autonomous agents, and some people might be a little afraid of leaving everything to AI, the agent records a lot of reasoning about why it made certain choices, and you can review all of that. So there is a whole review experience in there as well. And the third session is a really good one, because Henk and I did a session a while back about Copilot Studio and Azure AI Studio, and it was basically a better-together story.

And the third session is about how you can actually do a lot of that together. And there are a lot of new features that will make it easier for you to bring in your Azure AI services into the Copilot Studio experience. The fourth one is a really important one as well because that one is more about the extensibility of Microsoft Copilot, and that's the other part of Copilot Studio that's really important.

So you can build your own agents and you can extend Microsoft Copilot, and that session, the fourth session, is really about that part. So make sure to watch that as well. And the fifth session is about Copilot Connectors, and Copilot Connectors are also something to expand on the Microsoft Copilot experience.

So let's start a bit with what is really new. And we have a lot of announcements at the Ignite event, and these are, like, the big pillars that were announced. And the first one of that is for extending Microsoft 365 Copilot.

And what you can do here is build inside of Microsoft 365 Copilot. You can click on Create an agent, and you get a little designer, which is really fast and built on Copilot Studio, and you can start creating your agent there. So you can create an agent for a certain HR topic, or something that helps you write.

There are a lot of really good capabilities in here. Next, I also want to show you AI answer quality, because that was also one of the big requests from our customers. A lot of customers said, "We can add websites, we can add SharePoint sites, we can add all kinds of knowledge sources, but the quality is not that good."

So what we did was enhance the models, so we now have newer models that are better at answering questions from the knowledge sources. We also improved SharePoint search, because SharePoint is one of the biggest knowledge sources used with Copilot Studio. There is a feature where you can easily add a SharePoint site and then ask questions about all the documents and list items in that site.

And then you will get an answer based on your rights. So if you don't have access to a part of the website, then you don't get an answer, for instance. So it's all security-trimmed.
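Security trimming means the retrieval step only ever considers content the signed-in user is allowed to open, so the agent cannot leak it in an answer. A minimal sketch of that idea, assuming made-up documents, group names, and naive keyword matching (this is not how SharePoint or Copilot Studio implement permissions internally):

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Doc:
    title: str
    text: str
    allowed_groups: frozenset  # groups permitted to open this document

# Made-up documents and group names.
DOCS = [
    Doc("Travel policy", "Book economy for flights under 6 hours.", frozenset({"all-staff"})),
    Doc("Salary bands", "Band 3 ranges are confidential.", frozenset({"hr"})),
]

def search(query: str, user_groups: set[str]) -> list[str]:
    """Trim by permission first, then match, so hidden documents never reach the model."""
    visible = [d for d in DOCS if d.allowed_groups & user_groups]
    words = query.lower().split()
    return [d.title for d in visible
            if any(w in d.title.lower() or w in d.text.lower() for w in words)]

print(search("salary bands", {"all-staff"}))        # no access, so nothing to answer from
print(search("salary bands", {"hr", "all-staff"}))  # HR members can see it
```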

And at this event, there was an announcement of improved SharePoint search. So now we have much better search, and you will get much better answers. The last part of this pillar is knowledge curation.

So this will help you to improve the knowledge. You can kind of see what people are looking for, if they can find the right answers. And when they don't, you can easily add other knowledge or improve the knowledge search to make sure that you get those right answers.
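Knowledge curation starts from the questions an agent could not answer. As a hedged sketch (the log format and its `answered` flag are assumptions, not Copilot Studio's analytics schema), grouping unanswered questions shows which knowledge to add first:

```python
from collections import Counter

def top_gaps(log: list[dict], n: int = 3) -> list[tuple[str, int]]:
    """Count normalized questions the agent could not answer, most frequent first."""
    unanswered = Counter(
        entry["question"].strip().lower().rstrip("?") for entry in log if not entry["answered"]
    )
    return unanswered.most_common(n)

LOG = [  # made-up conversation log entries
    {"question": "Where is the parking policy?", "answered": False},
    {"question": "where is the parking policy", "answered": False},
    {"question": "How do I reset my password?", "answered": True},
    {"question": "What is the travel budget?", "answered": False},
]
print(top_gaps(LOG))
```

Normalizing case and trailing punctuation is what lets the two parking questions count as one gap.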

The next one is about analytics, which is also a vital part of Copilot Studio. Analytics are really helpful, and they tie back to what I said about knowledge.

You really want to see what your users are doing, and you want to see if they can find the right things, how they are rating everything, and how you want to improve on that based on those analytics. And there have been a lot of new things in the analytics area, so you can do a lot of things there. You can see all the analytics that you want, and then you can easily improve your agent based on those analytics.

Next, we have security and governance. And with a low-code way to create all those agents, you, of course, also want to make sure that they aren't doing the wrong things, because if everybody can create an agent, they can also do a lot wrong. So that's why we have a lot of security and governance in place for Copilot Studio.

And if you are an admin at your company, you can easily say, for instance, "Well, I don't want certain knowledge sources to be added to Copilot Studio." So there are a lot of different things available here. So make sure to check that out as well.

The next one is about autonomous capabilities. As I said, autonomous agents are a really big pillar at this Ignite conference. They were announced a while back, but they weren't yet in public preview.

And the big announcement now is that it's available in public preview. So everybody can now go to Copilot Studio and try out those autonomous agents. And that will enable you to, for instance, have an agent and add it to a certain workflow.

When an e-mail arrives in a shared mailbox or when somebody uploads a document to a SharePoint site, there are tons of different triggers available where you can have those autonomous agents there. And this is really the exciting new capability within Copilot Studio. So I really want to make sure that everybody is aware of this as well.
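The trigger-driven model can be sketched as an event dispatcher that runs an agent's handler and records what it did and why, which is the kind of trail a review experience exposes. Everything here is hypothetical: the event names, the `triage_email` rule standing in for the model's reasoning, and the log format are illustrations, not Copilot Studio's trigger API.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Event:
    kind: str  # hypothetical trigger names, e.g. "email_received", "file_uploaded"
    payload: dict

@dataclass
class AutonomousAgent:
    handlers: dict[str, Callable[[dict], tuple[str, str]]] = field(default_factory=dict)
    activity_log: list[dict] = field(default_factory=list)  # reviewable record of decisions

    def on(self, kind: str):
        """Register a handler for one trigger type."""
        def register(fn):
            self.handlers[kind] = fn
            return fn
        return register

    def dispatch(self, event: Event) -> None:
        handler = self.handlers.get(event.kind)
        if handler is None:
            return  # no trigger configured for this event type
        action, reason = handler(event.payload)
        self.activity_log.append({"trigger": event.kind, "action": action, "reason": reason})

agent = AutonomousAgent()

@agent.on("email_received")
def triage_email(payload: dict) -> tuple[str, str]:
    # Stand-in for model reasoning: decide an action and explain why.
    if "invoice" in payload["subject"].lower():
        return "forward_to_finance", "Subject mentions an invoice."
    return "no_action", "Not a finance request."

agent.dispatch(Event("email_received", {"subject": "Invoice #42 overdue"}))
agent.dispatch(Event("teams_message", {"text": "hi"}))  # ignored: no handler registered
for entry in agent.activity_log:
    print(entry)
```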

Okay. And there's basically my favorite, favorite topic, because this is the integration between Azure AI and Copilot Studio. And these are the first things that are being introduced to kind of improve the better-together story here.

And the first one is that you can connect to Azure AI Search. A lot of people already use Azure AI Search to organize knowledge around certain areas and then search over that knowledge. And this will help a lot with that.

We used to have an integration with Azure AI Search already, but it wasn't that advanced. This one is way better and will help you a lot more. And this is really the first step of that better-together story.

And the next one is that you can bring your own model. So in Azure AI, we have a lot of models available. We can add those models to our instance of Azure AI, and we can use them there.

Within Copilot Studio, we only had the OpenAI models available, because those were the only ones that were generally available. What we can do now is take all those different models that are already in Azure AI and add them into Copilot Studio.

So we can kind of mix and match what we want to do there. This is also a really exciting way to make it better together. Then we also have the Microsoft 365 Agents SDK.

And this, for me, is also a really exciting topic because this will enable you to actually do something with code with Copilot Studio. And it might sound a little bit weird because Copilot Studio is low-code, so why do you have pro-code as well? And I think this is a really good example of how you can kind of have a grow-up story.

So you might start with Copilot Studio when you don't have a lot of developer knowledge, then later on you get more and more advanced, and then you might want to do something with the Agents SDK. So that's something that's really interesting as well, and there was also a breakout session about that. So make sure to check that one out.

Then, last but not least, we also improved the speech capabilities of Copilot Studio. What you can do now is easily create a speech agent and then, for instance, hook it up to your own phone numbers. So you can have a help desk, for instance, if you want to.

And then you can also use all those generative AI features that we have in Copilot Studio and hook them up to that speech bot. This is also really exciting because this wasn't that advanced before, but now they have really turned the dial up, and it's really good. So make sure to check that out as well.

So there were a lot of really big announcements, and I really encourage you to make sure to go to Copilot Studio and try the features out because they are all available right away. So make sure to check that out. And with that, I have a QR code here.

This is the same QR code as in the other parts that we saw just now, and you will find all the resources there, including all the breakout sessions about the different features that I just showed you. And with that, I would like to hand it over to Henk for the wrap-up. [ APPLAUSE ] HENK BOELMAN: Thank you, everyone, for being here, for not running away, and for actually making it to the end of Ignite.

So one more round of applause for all of you. [ APPLAUSE ] But we still have about four minutes, so we're not letting you go yet. [ LAUGHTER ] So, David, if you got to pick one thing from the whole of Ignite, what would you be most excited about?

DAVID SMITH: Well, that's not a fair question. One thing. HENK BOELMAN: Out of the 800 sessions.

DAVID SMITH: Out of the 800 sessions. I think I would have to go for the Azure AI evaluations SDK. Like evaluations is just so critical to the process of any AI application.

I mean, it's just as important as CI/CD testing is for a regular application, and everybody should be doing it. It's always been possible, but you had to write a fair bit of Python code to automate the process. But now with this SDK, you just have the framework to do that easily, just like using GitHub Actions.

I'm very excited about using that. And what about you, Henk? HENK BOELMAN: Yeah, I think I'm excited about the new agent service in Azure AI Foundry, of course.

I think this will really make it easier to build your agents and get started, because it is quite a hassle to set up all the connections between all the different things. And the good thing, from what they told me, is that you still keep control. You can still tune your parameters for Azure AI Search, so you don't hand over all the control, but it should make everything easier.

So I'm very excited to get my hands on that one. Okay, and then the last two minutes, Daniel. What about you?

DANIEL: Last two minutes. Oh, I have a lot of time, so I'm going to take it slow. No.

So for me, it's obviously the Azure AI Foundry and Copilot Studio better-together story, because I believe there has been too much of a two-worlds situation. I think this is going to bridge the gap and make it easier and easier to hop back and forth between those different products. I think that's really powerful: if you use Copilot Studio as a low-code person, you might not use the Azure services right away.

But maybe over a couple of years, you might learn that much and start using them as well. And the other way around, if you are a developer and you're not that interested in low-code, it might also be good to just look at it and see how they solved certain things. So make sure to really check that out, because to me, that's a real superpower: not having gaps between those different products.

And that's, yeah, in my book, the best thing about this conference. HENK BOELMAN: Perfect, then I think we're going to let you all go. Have a safe trip home, and we hope to see you all at the next Ignite.

Thank you very much. DANIEL: Thank you. DAVID SMITH: Thanks for being here.