Hello, my name is Kevin and I'm a Senior Research Manager at Cambridge English Language Assessment. And I'm Kevin's colleague, Gad. Thank you for joining us on this webinar.

To get us warmed up for our topic today, we'll start off with a few questions about assessing writing. We're going to show you three examples of assessment practices and for each one of these, we'd like you to tell us, is this a good example of assessment practice or an example of bad assessment practice? For this first one, we'd like you to vote using the polling function.

Here we go: a writing test that includes the question "What is one plus one?" Please vote using the poll section on your screens.

A for an example of good assessment practice, and B for an example of bad assessment practice. Please vote now using those buttons. While your responses are coming through to us, I'll just read the example again for you.

A writing test that includes the question "What is one plus one?" And the question we asked you to vote on was: is this a good or bad example of a writing test? We can see a lot of your answers coming through now.

We're at about 82% of you saying that it's bad. And yes, this was an example of bad assessment practice. Most of you didn't think it was a good idea.

And in fact, toward the end, about 80% of you got that one correct. Well, let's move on to the second example. This time we'd like you to type good or bad in the chat box, so this time we're using the chat box.

Here's the second question. A writing task where we know who the intended reader is. Is this task a good or bad example of a writing test?

Please type your answer in the chat box. Type good or bad. Again, a writing task where we know who the intended reader is, if you can type good or bad in the chat box now.

That would be good. And I wasn't trying to give a clue, by the way, on what the correct answer is. But your answers are coming in thick and fast, and I can see that just about all of you say it is an example of good assessment practice.

Now, let's look at a third example. We're going to go back to voting for this one, so we'll be using the polling buttons again. To help students improve their writing, a teacher administers a test and only provides a letter grade. Is this an example of good or bad writing assessment?

Use A and B to vote this time. I'll just go over that again as your responses are coming in: in a situation where we are trying to help students improve their writing, a teacher only provides a letter grade. Is this an example of good or bad writing assessment?

Let's see what you said. So I'm looking at the poll now. And it's even more overwhelming this time.

Most of you have said this is a bad example, in fact, 90% of you have said this is a bad example and we would agree with you. Well, it seems from your answers to these three questions that you have a pretty good intuition about what makes for good writing tests. The three questions actually correspond to the three parts of our webinar today.

The sections are: first, what it is we're trying to assess; second, how we go about eliciting the thing we're trying to assess; and finally, how we go about evaluating the piece of writing that we have collected. When we want to assess writing, we first need to know what we are assessing. Take the one plus one example you just looked at.

Well, it wasn't assessing writing, was it? And once we know what we're assessing, we need to know how to go about doing that. That involves eliciting an appropriate sample of writing, and specifying who the reader is may be one part of that. And once we have a writing sample in our hands, we need to think about an appropriate way of evaluating that writing: whether giving it a letter grade is suitable, or whether some other way might in fact be better.

So we will look at each of these three in more detail now. Kevin? So first of all, let's take a look at what to assess.

We're going to begin this section with a poll again, so please get ready to vote. The question we'd like you to vote on is: which of the following is not an aspect of writing ability? I repeat, not an aspect of writing ability. Is it A, writing the letter A;

B, typing speed; C, writing a magazine article; or D, spelling? Please vote now.

So out of the options on the screen, which one is not part of writing ability? It's interesting to see on the poll that many of you are voting for typing speed as not being an aspect of writing ability. This actually relates to a question that was asked earlier about handwriting: "What about a student's handwriting that is terrible?"

Well, writing the letter "A" is something that relates to handwriting. And that's part of what we'd be looking at when we're trying to assess writing. So most of you correctly voted for B.

Which is great, because that is indeed not an aspect of writing ability. We do hope this question made you think a little and realise something about the scope of writing. When we think of writing, we tend to automatically think of long extended texts like books.

But if we think a bit more carefully and remember that there are beginner writers, then we realise that the ability to use a pen to form the letter A on the page is also writing; that is the aspect of handwriting we just mentioned. And in between writing a book and this aspect of handwriting, there is a range of things that also count as part of writing, such as being able to spell words correctly, writing text messages and even filling in application forms.

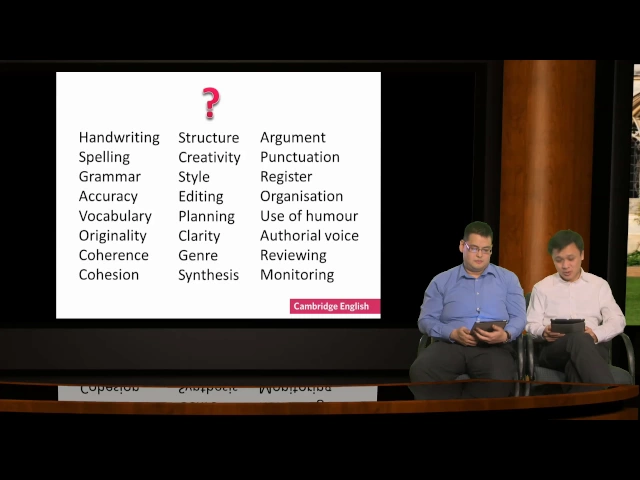

And behind those different kinds of writing are a range of skills, a number of which we have listed on the slide. So when we talk about assessing writing, we need to ask ourselves, what exactly do we mean by writing? What underlying skills are we interested in?

What sub skills are we trying to test? If we include all aspects of writing ability that we know about, there would be a long list of skills and it is probably quite difficult to try and assess them all at once. Let's try to learn a little bit more about you guys.

Please take a look at the list now. Choose one aspect of writing ability that is relevant to you and type it into the chat box. If you want, you can also type in why you think that aspect of writing ability is relevant for the learners that you teach.

Let me just repeat what I'd like you to do: look at the skills on the screen and pick just one that is relevant to your learners. You might have many, but just pick one for now. It might be one that your students find particularly difficult. Let's take a look at which skills you are interested in.

So lots and lots of different options are coming through. I can see that coherence is one in particular. Some of you are mentioning slightly more advanced aspects of writing like synthesis and argument, but there's a good range of things there.

I'm actually quite glad that you're coming up with things not just to do with vocabulary and grammar, and mentioning all these other things, including coherence, the one that Kevin mentioned. At the end of the day, what you choose to assess is not a random decision, of course, and there are quite a few things that need to be considered in order to arrive at an answer. So let's talk briefly about some of them.

First, it's important to consider who is being assessed. The kind of writing someone will need, and that you as a teacher will need to focus on, will depend on such things as the learner's age, profession and the like. Second, some skills are more suitable for assessment at particular levels of competency. For example, here's a question I'd like you to vote on.

One item on the list of skills that we saw earlier is register. In this particular question, what I'd like to ask you is: should register be a focus of assessment among beginning writers, those at A1 and A2 on the Common European Framework? So please vote yes or no using the polling function.

Again, we're thinking right now about the concept of register. Should this be a focus when we're assessing beginning writers? Okay, I'll give you a few seconds to cast your votes, because some people are on a slower connection, so we'll give them some time to weigh in.

But at the moment, as you can see for yourselves from the results of the poll, roughly three-quarters of you think that no, it should not be a focus. And I'm quite glad to see that that is your answer. As you can imagine, at this level, learners are just building up their knowledge of vocabulary and grammatical structures.

So they probably won't quite have the range yet to consider register in depth. They may well know that a few words are slang or that some words are more formal, but from a pedagogical perspective, it's probably better to focus on register later on. On the other hand, spelling, for example, may well be an issue at various levels, but it should probably receive greater emphasis at the lower levels.

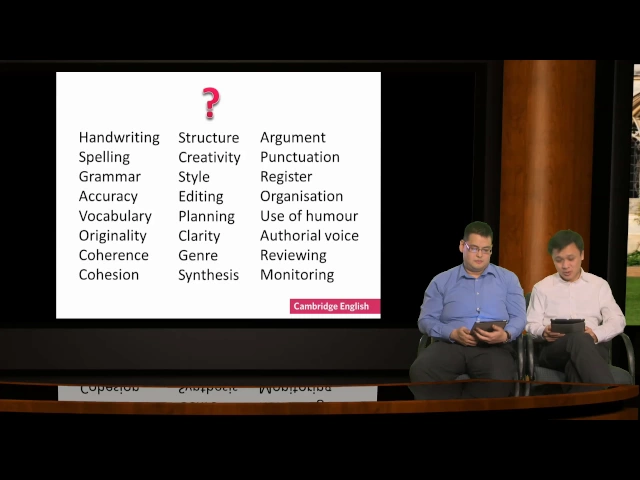

Later on, when learners are at the level where they can produce extended texts, we need to think about what sub skills are involved, because extended texts just have a lot more going on in them. We thought about this a little at Cambridge English, and at one point we looked at various models and conceptions of writing ability. What we found was that no matter who the theorist was, they generally came up with the same components of writing.

Extended writing is always about producing a message, and in order to do this, the writer needs to think about what they want to say. In other words, there is a cognitive aspect to writing.

Then they need to be able to have the words to express these thoughts, which is the linguistic aspect of writing ability, and depending on what they're writing and who they're writing to and for what reason, they would need to adjust their writing so that it is appropriate for the audience and the message. And this is what we would call the socio-linguistic aspect of writing. And as you might imagine, these different dimensions do interact with one another and depend on one another.

So when we write, we need to think, then decide what language we need and what is best for a particular situation in order to convey the message most effectively. Of course, as we said earlier, each of these aspects is composed of other sub skills. The linguistic dimension obviously includes things we've already mentioned, like vocabulary and grammar, among other things.

Well, let's think a little bit more about the cognitive dimension of writing. In the chat box, please tell me what you think are some of the sub skills encompassed by it. Again, in the chat box, could you share your ideas about this aspect of writing ability, the cognitive aspect?

What are some of the sub skills that are subsumed under this? Okay, and in this one, I want you to type in the chat box so there is no poll for this one. Okay, I see one of you at least typing about organisation, how you organise your writing.

Yeah, that requires the cognitive faculty. Creativity and planning, indeed, creativity is probably a cognitive aspect. Choosing your arguments, very good as well.

Obviously the arguments are formed inside your head. So I'm quite glad you are seeing that the cognitive aspect of writing ability does in fact cover quite a number of different sub skills, some or all of which you might want to assess at some point in your teaching. Moving on, you also want to think about why the test takers are being assessed.

In a large scale exam setting, the focus will likely be on proficiency or achievement. So the evaluation will more likely focus on macro skills. On the other hand, in the classroom context, assessment is more likely to be supporting instruction.

So you might want to focus on a particular sub skill in a bit more detail, as well as on the process of writing. Writing is, after all, not just about the product or the end result. In some cases, the process of writing may include collaboration with other people to produce a text.

As you might imagine, assessing the process is often easier to do in the classroom. In large-scale external examinations, it is probably less appropriate to have test takers collaborate and produce a piece of text together. It can also be very difficult to assess someone's planning or editing in the large-scale setting, particularly because the writing process can go through review and edit stages more than once.

These stages might be reflected in the quality of the product that gets submitted at the end, but they are generally not assessed directly in an exam. On the other hand, it is possible to assess how students plan and monitor their work in the classroom. This can be useful for encouraging more engagement with these aspects of writing.

So to summarise, to assess writing properly, we need to think carefully about what we're assessing and that is influenced by considerations of who we are assessing and for what purpose. Having thought a little about what to assess, let's move on and think about how we might go about getting a sample of writing so that we can assess these aspects of writing ability. If you haven't already, please download the handout, which we will be referring to soon.

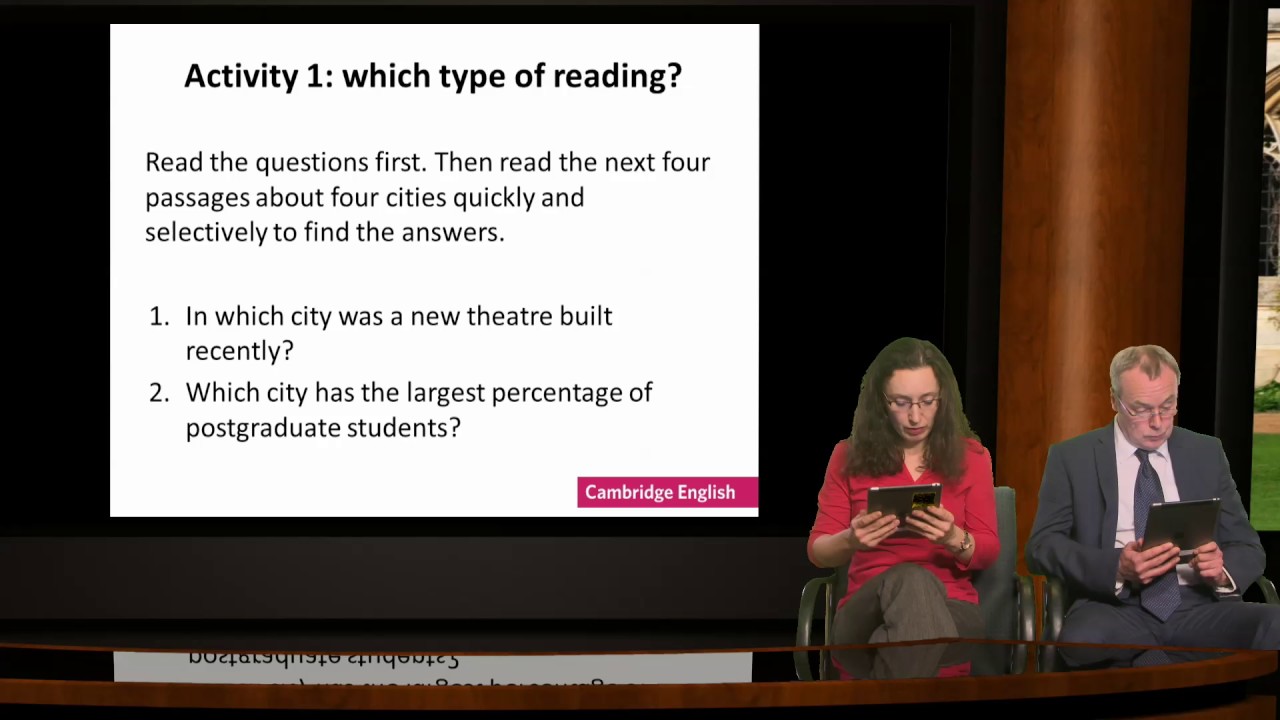

You'll see it under the offer section on your screen. Now we said earlier that it's important to consider who you are assessing, so let's take a look at some example tasks. In the handout, you will see two example tasks.

In the chat box, please indicate what type of learner you think each of these writing tasks is suitable for. Please share your ideas in the chat box. And here's the question again: what type of learner is each of these writing tasks suitable for?

And again, for those of you who do not have the handout, it's under the offer section, but you can also see the tasks on screen. I am beginning to see your answers. Quite a number of you are writing that task A is for young learners.

Some of you are saying beginners. And for task B, a lot of you are saying that it's for business students. Okay.

Lots of answers are coming in, and I think most of them are in that region. Some of you are saying that task A is for beginners; it is probably more appropriate to say it's for younger learners. It's worth thinking about this: if you are assessing beginner learners who are adults, what might the task have to look like? But for the tasks we see on the screen, task A is indeed for younger learners.

It would be suitable to engage them with pictures as well as with topics that are close to their experience. And you've also said that a task like Task B that covers situations encountered in an office would probably be more appropriate for adults or people learning business English. In addition to age, we can also think about other characteristics of the person being assessed.

Earlier, we mentioned application forms as an example. If the person is an adult, then perhaps making them fill in a job application form is more appropriate. On the other hand, if they are students, then an application to join the school English club is probably more appropriate.

You can also adjust the task according to their level. If they are absolute beginners, then on the application form you may just ask them to fill in their name, address, and contact details. On the other hand, if they know more English and are at a higher level, they can be asked about their reason for joining the club and what their expectations are.

These tasks would make them produce slightly more writing, and they'd also be far more challenging. And sometimes you want to target and assess a particular skill. For example, you might want your students to learn about paragraphing, so you might give them a block of text and ask them to insert paragraph breaks in suitable places.

If you use a passage of real text, you could use a similar task to discuss how breaking the text in different places might be more or less appropriate. Now, we're sure you can think of many other ways to test different aspects of writing ability. So let's briefly take one item from the list of sub skills we looked at earlier.

Planning. Could you indicate in the chat box how you might assess learners' ability to make a plan before producing a piece of writing? Again, in the chat box, please share your ideas on how you might go about assessing the ability to plan one's writing.

And because there is a lag on the live stream, I'll give you some time in order to type in your ideas. Somebody said a mind map, yeah, that might be a good idea for the very early stages of coming up with ideas. There's brainstorming.

Outlining. Yeah, outlining is a very standard thing we do in writing quite a bit, so it's good to see a lot of you come up with that. Could you come up with something other than mind maps and outlines and brainstorming?

A lot of you are saying brainstorming as well. If I could see some other ones, it would be great. Topic sentences.

Yeah, topic sentences are probably a possibility as well, because they help learners think about what the main point is in what they're trying to say. So that could be one component in such a lesson.

I think you've gotten the hang of it, and it's good that you've come up with quite a number of different approaches to testing this aspect of writing ability. When it comes to extended writing, you might remember that we said earlier there are four main aspects to this ability. So let's think about this a little.

How might we construct a writing task that elicits all four aspects? If the instructions that you include in the task simply say to write, you might get some words. So that would cover the linguistic aspect.

If you're a bit more detailed in your instructions and say to write about a particular topic, then you'd also get more of a message. If you specify that it's to be written in a particular genre and for a specific audience, then you're able to assess the appropriateness of some of the choices that they make; that is, the socio-linguistic aspect of writing skill.

And finally, if you say what the purpose of the task is and the various parts that need to be included, then you can make sure that they have to engage their cognitive faculties to think about the topics and produce a well-structured response. So the point is for your writing task, you need to put enough detail in the instructions to make sure you elicit all aspects of the ability that you are interested in. So we don't expect you to read this example, but in this task, it says, read Andrew's letter and the notes you have made, then write a letter to Andrew using all of your notes.

Is there something in this example that says to write, yes, there is. Write about something? Yes, again.

Write in a particular genre to a particular audience? Yes, because they are instructed to write a letter to their friend, Andrew. Write about a number of different things?

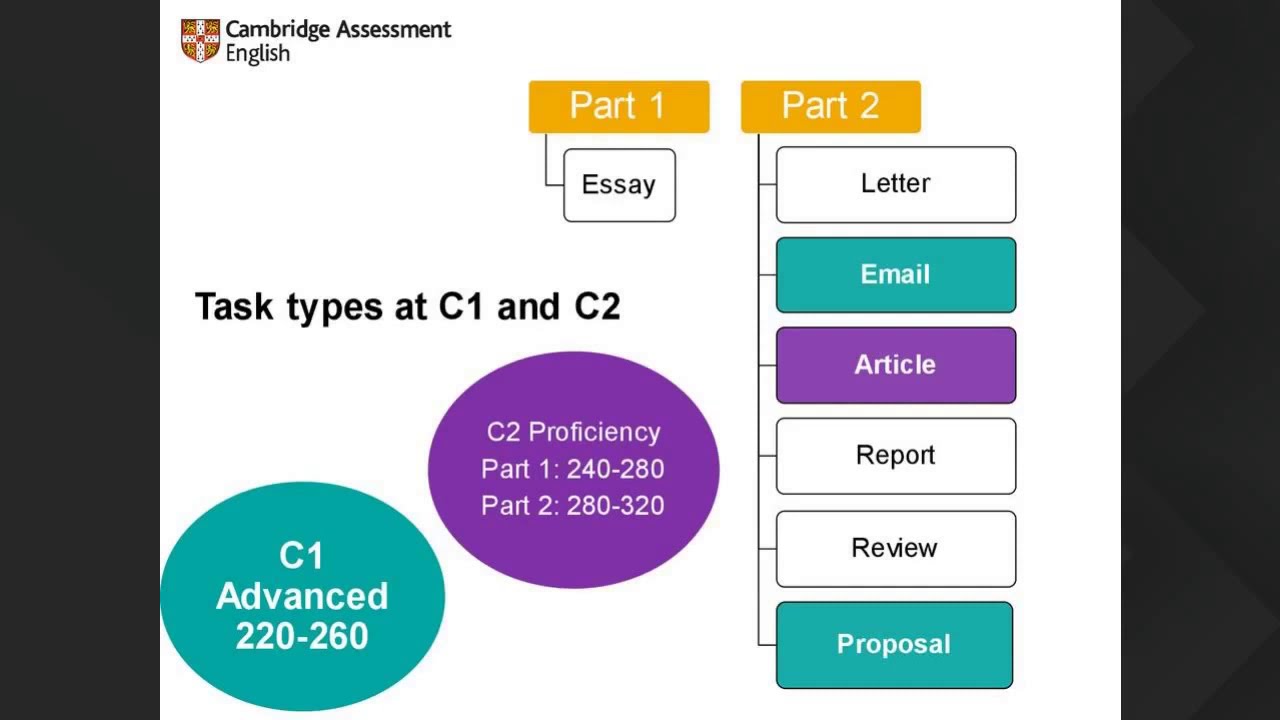

Yes, as well. Now, there are a few more important things to consider, which we'll go over by asking you some multiple-choice quiz questions, so please get ready to vote. The type of text, or genre, is an important consideration because the type of language we use differs depending on the kind of writing we're producing.

So here's the question we'll be voting on. Let's say you want to assess students on a business English course. Which of the following genres do you think would be the least appropriate? Is it A, a memo; B, a story; C, a report; or D, a newsletter?

Please vote now using the polling function, and again, the question is, which of the following genres is the least appropriate for assessing business English? Is it the memo, the story, a report, or a newsletter? And I can see from your votes that it seems to be a pretty constant 90 or 92% of you saying, story is the least appropriate.

Indeed, companies may very, very occasionally, you know, come up with a story of how they started or grew, but in general, of the four choices, that is the genre that's least frequently used in business contexts. Let's try another example. Let's think this time about academic writing, which of the following do you think is the least appropriate for assessing academic English?

In this case, the options in the poll, which are coming up now, are: 1, an essay; 2, a report; 3, an email; or 4, a newspaper article. Please vote now.

And again, what we're thinking about now is writing in an academic context: which genre do we think is the least appropriate for this context? Now, this one is quite interesting. A lot of you are actually saying that email is not very useful in the academic context.

Perhaps second place goes to the newspaper article. It does depend on your definition of the academic context. If you're just thinking, for example, about the lecture hall, then indeed email is not a good fit, because you don't want to be writing an email at that point.

But if you think about the academic context as all the kinds of writing that people do while they are at college or university, then you quickly realise that email is actually quite important in that context. Essays and reports are also things students write, so it's the newspaper article that is least likely to be encountered. So there you go.

When you study, you have to write essays and reports, and you might write an email to your tutor about an academic problem, but generally a newspaper article is not what we are thinking of in academic writing. The next thing to think about is that it's important to know the purpose for writing. For example, if you are working in customer service, you're unlikely to write a letter of complaint and far more likely to write a response to somebody else's complaint.

The language you use in these two situations would be very different because the writing tasks aim to do different things. In other words, they have differing purposes, even though they are both examples of the same genre: they are both letters.

When setting a task, you can make sure that the purpose for writing matches the role of the writer. Doing this will help you to elicit an appropriate writing sample. Then it's also important to determine who the audience is.

We write very differently when we write to people we know and to people we don't know. We also write differently when it's private communication addressed to just one person and when it's a public document addressed to the entire world. Of course, when designing an assessment task, we would consider both the purpose and the audience together because not all purposes fit with all possible audiences.

The examples we've got here are from a business English context, and the differences between these audiences could be more or less important, depending on the purpose included with the task. Think about some of the audiences suitable for your group of students and their level of competency. In a school setting, possible audiences might include friends, teachers, or even classmates.

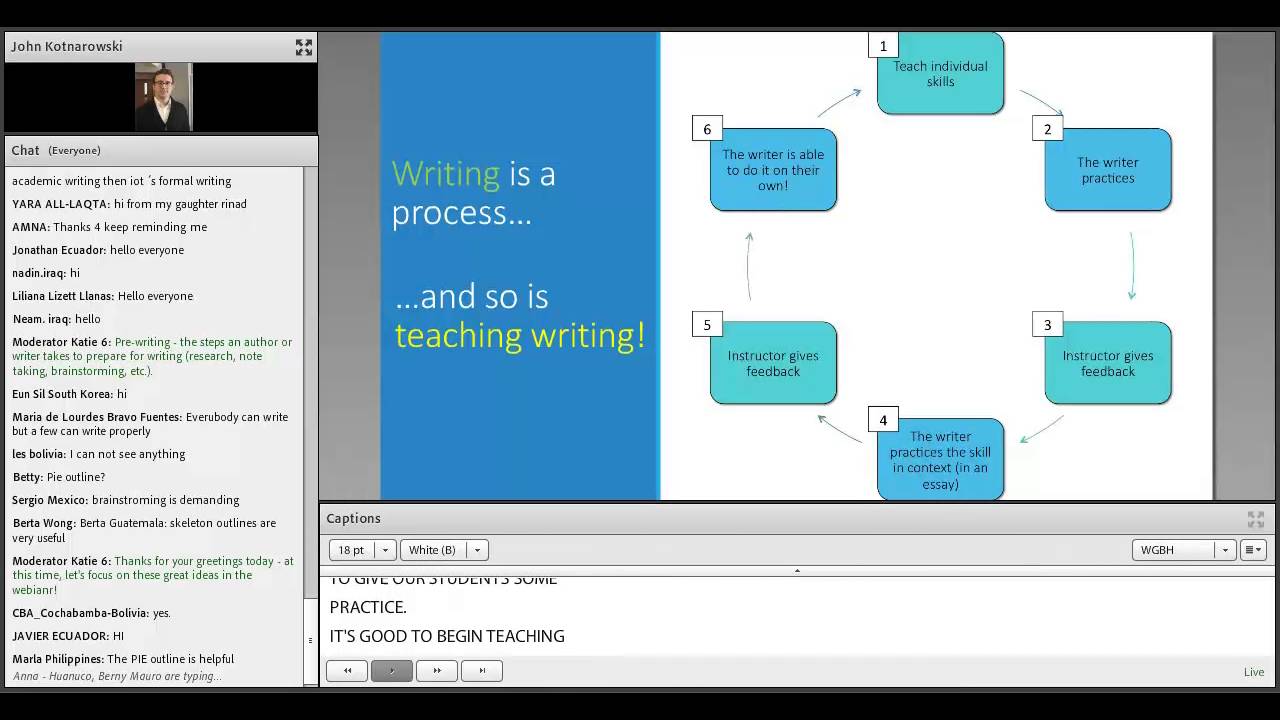

Now, earlier, we also mentioned the writing process as one aspect of writing that teachers may want to focus on. In fact, you can see some of these different stages represented on the screen right now, albeit in very abstract terms.

If that is the focus, then you might want to consider breaking the writing process down into its component steps and setting appropriate tasks so that you can evaluate your learners' skills in the separate parts. Earlier, you talked about planning, brainstorming and mind maps. There are other things too, like paraphrasing, or the ability to draft and then revise one's writing in response to feedback, perhaps from a teacher or from other learners.

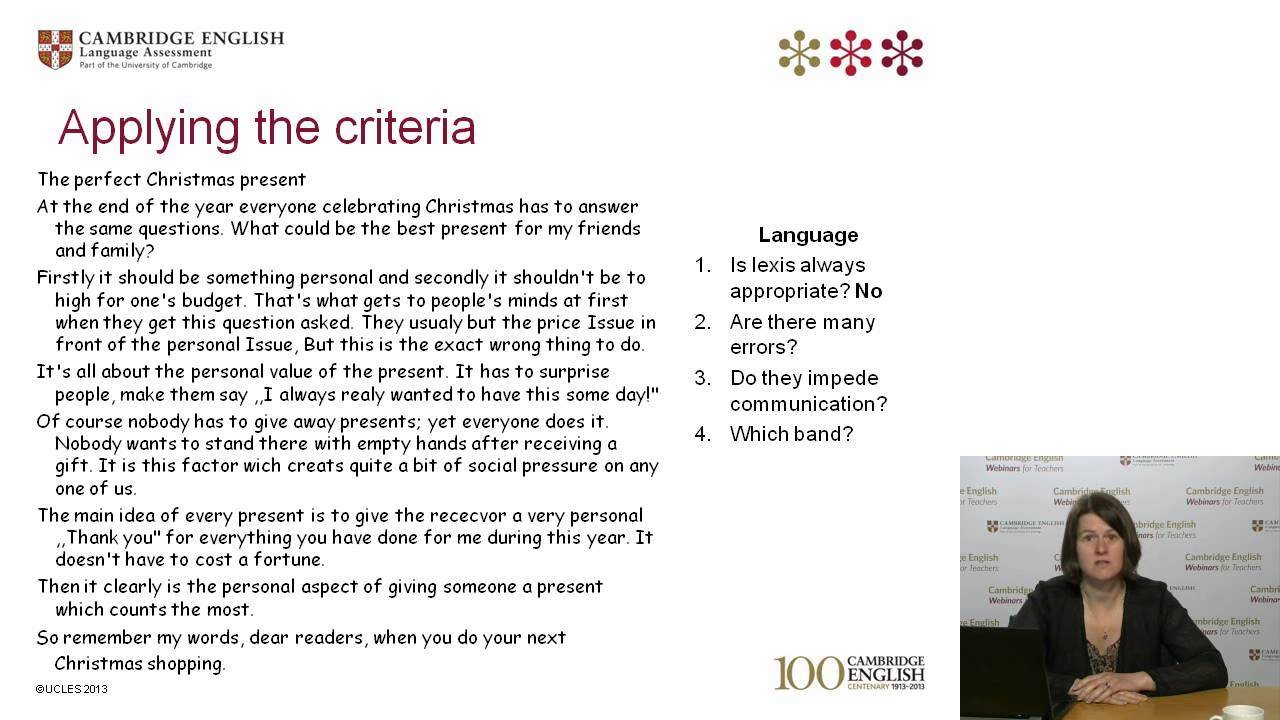

Again, if these are part of what you're trying to teach in your writing courses, then you might want to think about how you might go about assessing each one of them. So we've looked at how we elicit writing, and having elicited some samples of writing, you now need to evaluate them. Let's look at some of the different ways we can evaluate tasks.

In some cases, the approach to marking can be suggested by the task itself. For example, this A2-level task, which we looked at earlier and which you can find in your handout, asks for a simple written response to the question "What are the children doing now?" Responses saying "eating" could be marked correct.

Things like organisation are not tested here, so it appears to be quite a simple task that's easy to evaluate. Having said that, you can change how you score many simple tasks based on which aspects of writing you are trying to assess. You might, for example, need the spelling to be accurate before you award a mark.

Let us imagine that a student responded with the word "eat", and only the word "eat". This is close to the correct answer of "eating", but not in the correct form. On the other hand, a response where the candidate writes "sitting at the dinner table" would demonstrate quite advanced writing ability, but it isn't similar to the response we were originally thinking of.

Let's also look back at this task that we suggested for slightly more advanced learners earlier on, the one where we were introducing paragraph breaks. For this type of task, there are lots of options available. You might give marks for the most appropriate paragraph breaks.

You might want to give some detailed feedback about the appropriateness of their choices. You could even use it as a discussion tool and talk about how breaking the text in different places might affect the way that the text is interpreted by the reader. And of course, you can give feedback about mistakes.

You can point out paragraphs where they've broken them up and they're either too long or possibly too short. Now, when it comes to extended writing, there are more things going on at the same time, so there are a range of different ways to go about marking. Indeed, some of you put in the chat box that you need skills, but that is actually just one approach.

If you look at your handouts now, you'll see a sheet listing some options for evaluating extended writing. These can range from the detailed, like giving comments and feedback, to the more summative, such as giving one single score or a few scores. The purpose and context of assessment can suggest which one is more appropriate.

So, for example, let's think about the first item here, would peer feedback be more appropriate in classroom assessment or in large scale assessment? Please use the chat box to type in your response. Again, do you think peer feedback would be more appropriate in the classroom context or in large scale testing contexts?

Again, I'm going to give you a bit of time to type in your answers. But I think you can see what's happening in the chat as well, and the vast majority of you are typing in classroom contexts as the more appropriate one. Yep.

Indeed, I would tend to agree with you. Imagine, for example, that you were using a large-scale test to determine entry to university, such as IELTS, which we produce, and the way you scored it was to ask the learner's peers to give feedback to the admissions officer.

As you might imagine, if you did this, every single student would probably be described as excellent, and the poor admissions officer would be no further along because they could not use that piece of information to determine whether a person should be admitted or not. So, as we can see, peer feedback is probably more appropriate in classroom contexts than in high-stakes testing contexts. Now, let's go to the bottom of this table and think about giving a single score.

Is it more appropriate for a classroom test or a large scale test? And again, please type your response into the chat box. So once again, this time we're thinking now of giving a single score.

Do you think this should appear more often in the classroom context or in the large scale testing context? And I can see your answers start to come in now. And I think you kind of get the idea, whereas peer feedback is more appropriate for the classroom context, in a large scale context, you're more likely to give a single score.

And there's obviously a reason for this, right? In general, large-scale tests are more summative in nature, and therefore it is more useful to the person using the test to get just one score rather than having to read everything. So in general, the approaches to marking near the top are more appropriate for classroom contexts, and the ones near the bottom are more appropriate for large-scale achievement and summative decision-making contexts.

Now, remember, it's not that one type of testing is better than the other; it's that they are different contexts with different purposes, and so you approach them in different ways. Even if it is just you and your students, for an end-of-course assessment you would probably prefer a score rather than detailed feedback.

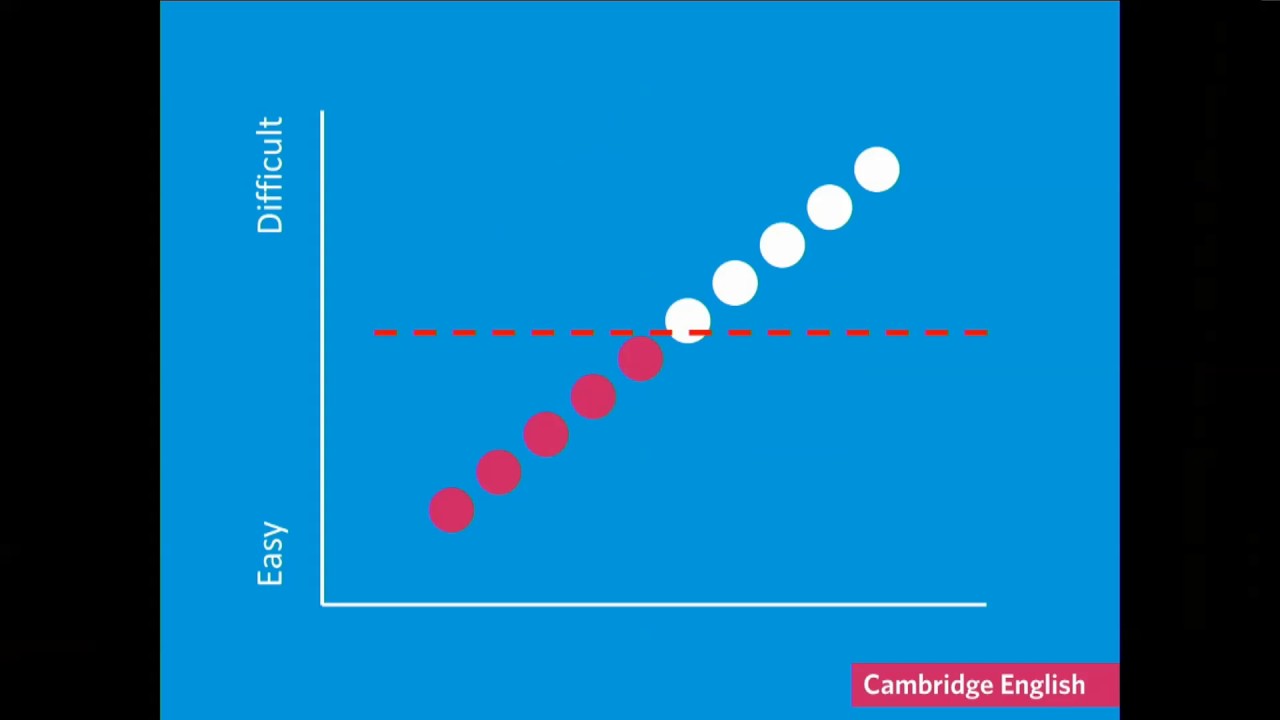

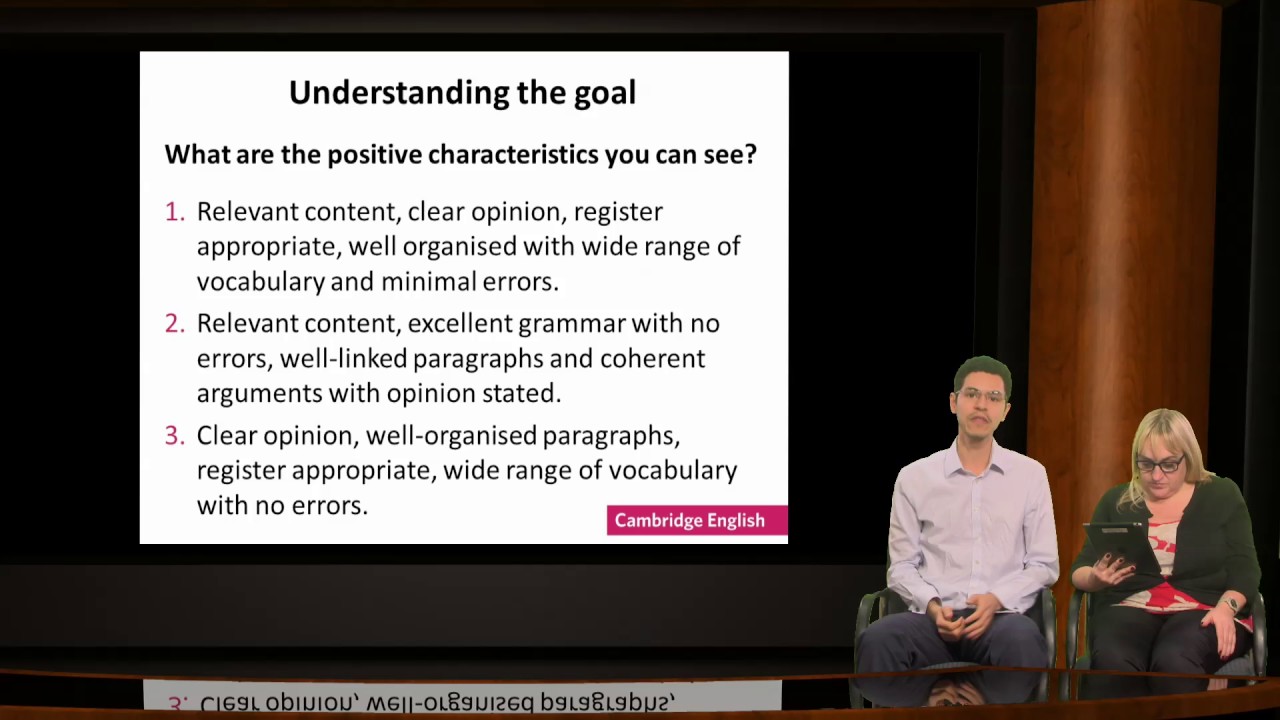

When we compare providing a single score for an assessment, sometimes referred to as a holistic score, with providing a number of different scores, which we refer to as analytic scores, some people point to a difference. For example, we might say that when we read a piece of text, we read it as a whole rather than in separate parts.

We don't read it along a number of different dimensions, so some would say that holistic scoring is more in keeping with how we read. On the other hand, when we assign a score, what is scored is what is given value, so scoring signals to learners and their teachers what they need to pay attention to.

In the two examples on screen, both represent eight as the total score, but the sub scores tell a slightly different story: they show that one part of the overall performance is stronger than another. This can support learning by identifying areas that need a little extra attention.

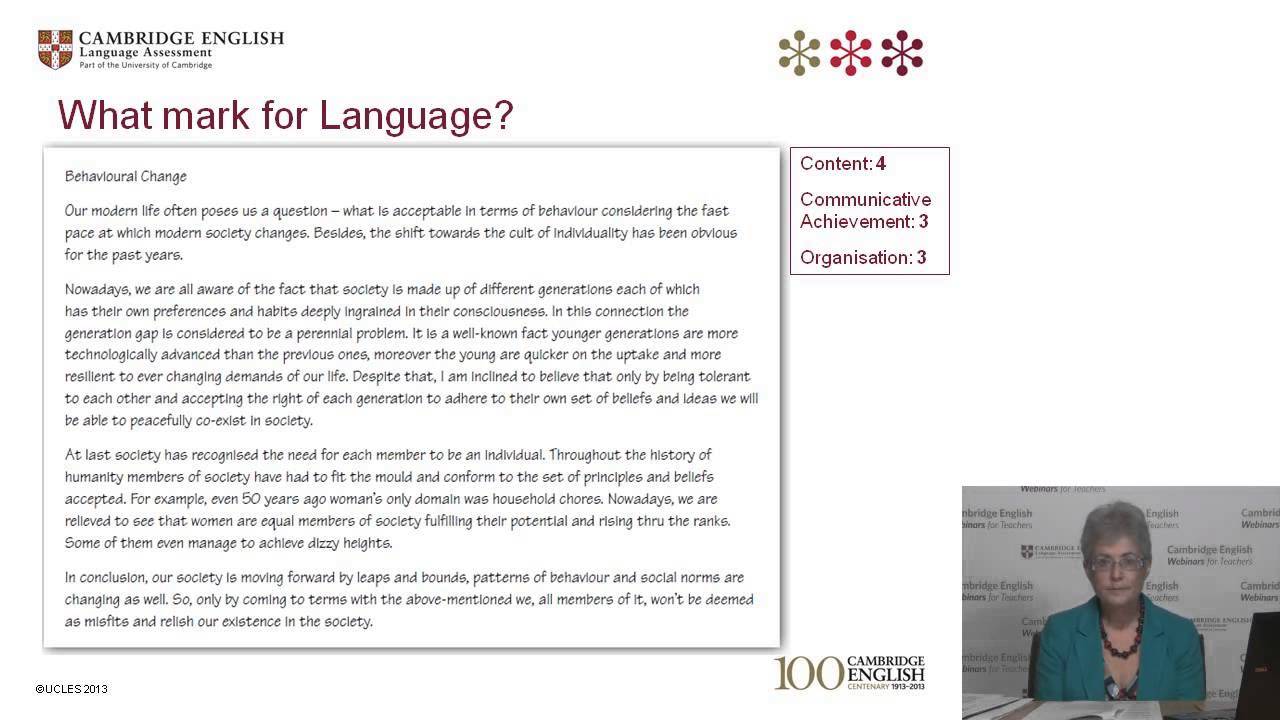

In our case at Cambridge English Language Assessment, we sometimes provide a single score and sometimes a number of sub scores depending on the situation. For the longer pieces of writing where more is going on, we can use our diagram on the sub skills involved in writing to help us. Remember that there are four main aspects of writing ability.

Well, it should be no surprise that we ended up with a mark scheme with four criteria. You can find the mark scheme for level B2 in your handout. Each of the criteria corresponds to one of the four dimensions from the diagram.

Content corresponds to being able to produce a message. Communicative achievement deals with audience awareness and register; in other words, the socio-linguistic aspect. The way a text is organised gives us a clue about what the writer is thinking and trying to do, which is the cognitive aspect. And language obviously fits with the linguistic component.

In this way, we hope to encourage learners and their teachers to work not just on the accuracy of their language, for example, but also to pay attention to larger matters such as the features of the type of writing or how it is organised. It would be a good idea to discuss these criteria with your students so they know exactly what they need to pay attention to and learn to self-assess. The criteria can also indicate where a student's performance is particularly strong or a little weaker. And speaking of self-assessment, checklists can also be quite useful.

In fact, one of you, Nicoletta, comments that multiple scores are useful for teachers, but students don't like them because they prefer a single score. Well, this is where we need to help learners understand that learning about writing is not just about getting a score, but about getting better. And they could very well do this by learning to self-assess, using checklists like the one on screen.

Now, you can see that this checklist, which we found online, was written in simplified language so that learners themselves or their peers can use it if necessary. In the third row, for example, it says, "I keep my tall letters tall and my letters sitting on the line." As you might imagine, that is to help beginner writers write neatly on the page.

There's another point lower down about using daring words they haven't used before. Some learners are afraid of making mistakes, so they try to stay on the safe side and just use the words they already know. But we know that people learn language by using it.

And this checklist item positively supports learning by presenting daring as a good thing rather than focusing on mistakes. So as you can see, this is quite a nicely written checklist that should help support your learners' further writing development, and it's well worth engaging them in these kinds of activities. Now, if you think about it, checklists are a way of providing feedback on things that typically cause learners problems when they write.

But if you want to be even more specific, you as a teacher can provide extended feedback, like the marked-up piece of writing we see on screen, and point out things that are specific to the individual. As with checklists, this feedback can be used by learners to further improve their writing, so writing it in a way that learners can act upon is a good idea.

Now, in this regard, research shows that the level of the learner matters. If they're relative beginners, they won't know what they don't know, so they will need to be told more explicitly what their mistakes are. On the other hand, for more advanced learners, it is better to engage their metacognitive faculties, perhaps by asking them questions that help them think about what they have written and what they need to improve.

This will then enable them to assess themselves autonomously in the future. It's the equivalent of teaching them to fish rather than giving them a fish. So, to recap what we've covered in today's webinar: we talked about what to assess when we want to assess writing.

After establishing how important it is to think about what it is that we're assessing, we then outlined how we might assess those particular skills: both how we might elicit a sample of writing and how we evaluate that piece of writing once we've elicited it. The important thing to note is that, throughout, the who, what and why will differ from situation to situation, and they have been central to much of what we've looked at.

In other words, depending on the people we're assessing, what we're assessing and why we're assessing, our assessment will be correspondingly different. It is therefore important to think about all these different dimensions in the practice of assessing writing. We hope that this session has been helpful for you, and we do have some time to answer any further questions you might have.

I'm going to hand over to Nancy now, who will talk to you about some further support that we have for teachers. Please type your questions into the chat box, and we'll do our best to answer as many as we can.

Well, thank you, Nancy. And here are some questions that you have asked. Özlem asks, how do we obtain objectivity in grading academic essays?

Two teachers grade the papers separately, but sometimes there is still a great difference. The first thing I'd say is to think about whether you need objectivity in the first place. If you're working in a classroom context, maybe it's more important to give qualitative feedback than to ensure you get the same score.

But if you do need to get the same score, then setting up meetings where you mark papers together and agree on particular approaches to marking will probably help to improve the degree of agreement among teachers. If you agree on the criteria you're looking for, then you're more likely to get comparable scores. So I'm going to answer a question from Bernadette about what to do about repeated mistakes after sharing criteria, checklists and practice.

And actually, a few different questions have referred to repeated mistakes. Of course, the things you mention, sharing criteria, checklists and practice, are the first steps that we would take. But if you think back to some of the things we talked about in the webinar, you might try more targeted exercises on the very specific areas where learners are making repeated mistakes.

So, for example, if the mistakes are often about paragraphing, you might try some of those tasks that ask students to insert paragraph breaks. In that way, you can tailor the feedback specifically to the common mistakes they are making. You might also give some model answers that show really good examples of the particular things they keep getting wrong.

Grezelle is asking how much importance we should give to grammatical accuracy: should it be 50% or less? My response is to go back again to what you're trying to work on. If your lesson is focused on grammar, then maybe it should be 100%.

But if you are assessing a range of things, then maybe less. At the end of the day, it depends on what you are trying to assess, and then you weight it accordingly. And Latif asks, how can I add an element of enjoyment to the writing task?

Now, that's certainly something that we think you in the classroom are better placed to do than we are in the large-scale language testing setting, where tasks are done within an exam. You could look at the topics available to you and think really clearly about who it is that you're assessing and what kind of topics your students might want to write about. There might be something suitable there in tailoring the topics rather than relying on the tasks that we use in our examinations.

And Angela is asking whether I think there is some form of assessment that's versatile across different situations. Well, if you're able to set up an assessment that includes the process of writing and also requires people to produce a product, then everything about writing, from beginning to end, would be included, and you can then focus on particular aspects of it if you so desire. So I would say that, in general, getting learners to produce real writing earlier rather than later would be quite a good idea.

And Maksoot asks what to do if you have a large number of papers to assess in a limited time. That's a concern we didn't cover much in the webinar, but sometimes you do have to think about the practical issues involved. For example, with extended feedback, if you have a lot of learners to provide feedback for, it might not be practical to use extended feedback, or possible to write enough on every script.

In that case, you might decide to use a checklist or a simple letter grade to give some very quick feedback. You can also use Write&Improve, which Nancy has told you about. If your students submit their answers to Write&Improve, the website will provide feedback on individual words and grammatical structures they haven't gotten right, and on how good their sentences are. You can then focus on giving them higher-level feedback.

Having said that, I think that's all the time that we have today for our webinar. Thank you very much for attending. We hope you found it useful.

Our next webinar, coming up next month, is on Understanding Reading Assessment. We hope to see you there. Thank you very much and goodbye.