[Music] okay so today we want to just uh establish I guess the basic properties of the derivative um as a map between nor infecta spaces having last time basically spent the whole lectur trying to figure out what the definition was um so let's just recall the defition so I I'll kind of try and use this as a standard setup that if I have a map it'll be between Norm Vector spaces X and Y and then U Is An Open set in X and F will be a function defined on that open set taking values in

y um sorry then if you've got a point in a sorry if a is a point in U um we say the function is differentiable at a if there is a linear map um let's call it t for the moment um I'll use this sort of caligraphic L to denote linear Maps or the space of linear Maps between two Norm Vector spaces um that has the following property that if you write F so you can either write sorry this pen isn't very good either write this in terms of sort of the value of the pertubation

like you see F of a plus h which I think is what I did last time um or just write it as f ofx is the value at a plus the linear map applied to the difference plus then a quantity which again if you write this in terms of a plus h maybe Define this as Epsilon of H um so if you look at this equation when X is not equal to a um this equation just tells you what this must be so this whole quantity is the error in approximating F ofx by this sort

of aine linear thing and I'm saying that the error after you divide it by the norm of xus a is the quantity Epsilon um satisfies this error divided by the norm of x - A goes to zero which we just take as the definition of Epsilon of a that should say um as x ts to a okay so it's saying that there is a linear map such that this sort of apine linear approximation to f um is at least um a better approximation to F ofx than anything that tends to well than any error that

is at least as big as the norm of x - A so this quantity goes to zero faster if you like than the norm of x - A goes to zero okay and then if such a linear map exists we call it the derivative as I said last time I think sometimes referred to as the total derivative to distinguish it from when you say partial derivatives of F at a okay um and we checked last time that um yeah I think I'll tend to write well ideally I'll write with a pen that works at some

point um lowercase D or uppercase D um F to denote the total derivative so and then with a subscript for the value that you were taking the derivative at just because this is a linear map so um we'll want to apply it to a vector so having the point you're taking der as a subscript is slightly easier notation to read okay um sorry yes DF at some vector v is just equal to the partial D sorry the directional derivative of F at a in the direction V so this thing was thing that was easier to

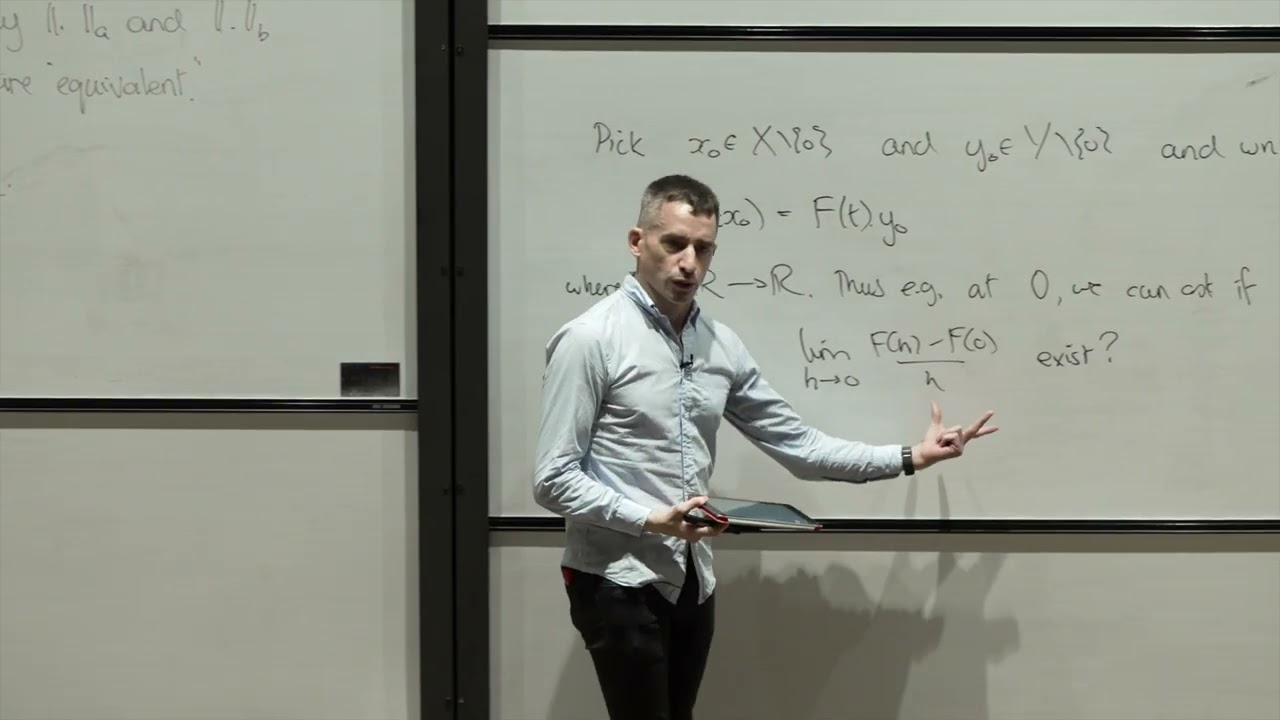

Define it was just the limit of T goes to zero of f of a + T * V minus F of a all over T So this directional derivative is the thing that you sort of get led to most directly from the ratio definition of derivative in dimension one um and where it makes sense you get the notion of a directional derivative but where the total derivative exists it recovers for you all the directional derivatives just by evaluating that linear map on the direction in question okay so I said this has sort of the standard
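As a numerical sanity check of the definition (a minimal sketch, not part of the lecture), here is a NumPy example with a hypothetical map $f(x, y) = (x^2 y,\ \sin x + y^3)$ from $\mathbb{R}^2$ to $\mathbb{R}^2$, whose derivative at each point is its Jacobian matrix. It checks that $Df_a(v)$ agrees with a difference-quotient approximation of the directional derivative, and that the error ratio $\|f(a+h) - f(a) - Df_a(h)\| / \|h\|$ shrinks as $h \to 0$. The names `f`, `Df`, `directional_derivative` and `error_ratio` are illustrative; the symmetric difference quotient is used only because it is numerically more accurate than the one-sided one.

```python
import numpy as np

def f(p):
    # Hypothetical example map f: R^2 -> R^2.
    x, y = p
    return np.array([x**2 * y, np.sin(x) + y**3])

def Df(p):
    # Its Jacobian: the matrix of the linear map Df_p in the standard bases.
    x, y = p
    return np.array([[2 * x * y, x**2],
                     [np.cos(x), 3 * y**2]])

def directional_derivative(func, a, v, t=1e-6):
    # Symmetric difference quotient approximating lim_{t->0} (f(a + t v) - f(a)) / t.
    return (func(a + t * v) - func(a - t * v)) / (2 * t)

def error_ratio(func, jac, a, h):
    # ||f(a + h) - f(a) - Df_a(h)|| / ||h||, which must tend to 0 as h -> 0.
    return np.linalg.norm(func(a + h) - func(a) - jac(a) @ h) / np.linalg.norm(h)

a = np.array([1.0, 2.0])
v = np.array([0.3, -0.7])
print(directional_derivative(f, a, v), Df(a) @ v)  # these should agree closely
for s in (1e-1, 1e-2, 1e-3):
    print(s, error_ratio(f, Df, a, s * v))  # shrinks roughly in proportion to s
```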

As I said, this has the standard properties of the derivative, so let's at least see the most basic of those. Suppose $X$, $Y$ and $U$ are as above, so $X$ and $Y$ are normed vector spaces and $U$ is open in $X$.

First, if $f, g\colon U \to Y$ are both differentiable at $a$, then $f + g$ is differentiable at $a$ and its derivative is (you'll be shocked to hear) the sum of the derivatives: $D(f + g)_a = Df_a + Dg_a$. That's one of the algebra-of-limits results you'd want for the derivative.

Second, and a little more subtle: suppose $\lambda\colon U \to \mathbb{R}$ takes values in the scalars and $f\colon U \to Y$ takes values in the normed vector space $Y$. The derivative of $\lambda$ is a linear map from $X$, the vector space $U$ sits inside, to $\mathbb{R}$, so it is an element of the dual space of $X$; the derivative of $f$ is a linear map from $X$ to $Y$. If $D\lambda_a$ and $Df_a$ exist, then $D(\lambda f)_a$ exists and

$$D(\lambda f)_a(v) = D\lambda_a(v)\, f(a) + \lambda(a)\, Df_a(v).$$

It's worth checking that this makes sense syntactically. $D\lambda_a$ is a linear map from $X$ to the scalars, so applying it to a vector gives a scalar, which you can multiply by the vector $f(a)$. In the second term, $\lambda(a)$ is a scalar and $Df_a(v)$ is a vector in $Y$, so again it makes sense to multiply them.

Third, this point is really there to notice that the subtleties in the definition all lie in the fact that the domain has dimension bigger than one. Suppose $b_1, \dots, b_m$ is a basis of the target space $Y$ (so $Y$ is finite dimensional), and let $\delta_1, \dots, \delta_m$ be the dual basis, that is, the basis of $Y^*$, the linear maps from $Y$ to the reals, with $\delta_i(b_j) = 1$ if $i = j$ and $0$ otherwise. Then $f$ is differentiable at $a$ if and only if all of its coordinate functions $f_i$, $i = 1, \dots, m$, are differentiable at $a$. To cash this out a bit more: every element of $Y$ is a linear combination of the basis vectors, so writing $f(x)$ in terms of this basis gives

$$f(x) = \sum_{i=1}^{m} f_i(x)\, b_i,$$

where the coefficients $f_i$ are scalar-valued functions; equivalently, $f_i(x) = \delta_i(f(x))$. The statement is that $f$ is differentiable precisely when its component functions are, and then the total derivative of $f$ at $a$ is

$$Df_a = \sum_{i=1}^{m} D(f_i)_a\, b_i.$$

Remember that each $D(f_i)_a$ is a linear map from $X$ to the scalars, so taking the scalar $D(f_i)_a(v)$ and multiplying it by $b_i$ gives a vector in $Y$, and the sum of those vectors is $Df_a(v)$.
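The product rule in the second part can be checked numerically. In this sketch (assumptions: NumPy, and hypothetical functions $\lambda(x, y) = xy + e^y$ and $f(x, y) = (x + y,\ x^2,\ \cos y)$), the matrix of $D(\lambda f)_a$ predicted by the formula, namely $f(a)\,\nabla\lambda(a)^{\mathsf T} + \lambda(a)\,Jf(a)$ with $Jf$ the Jacobian of $f$, is compared with a finite-difference Jacobian of $\lambda f$.

```python
import numpy as np

def lam(p):
    # Hypothetical scalar-valued function lambda: R^2 -> R.
    x, y = p
    return x * y + np.exp(y)

def grad_lam(p):
    # D(lambda)_p as a row vector (an element of the dual space of R^2).
    x, y = p
    return np.array([y, x + np.exp(y)])

def f(p):
    # Hypothetical vector-valued function f: R^2 -> R^3.
    x, y = p
    return np.array([x + y, x**2, np.cos(y)])

def Df(p):
    # Jacobian of f (3 x 2).
    x, y = p
    return np.array([[1.0, 1.0],
                     [2 * x, 0.0],
                     [0.0, -np.sin(y)]])

def numerical_jacobian(func, a, t=1e-6):
    # Column j is a central-difference directional derivative along e_j.
    cols = []
    for j in range(a.size):
        e = np.zeros_like(a)
        e[j] = 1.0
        cols.append((func(a + t * e) - func(a - t * e)) / (2 * t))
    return np.column_stack(cols)

a = np.array([0.5, -1.0])
# D(lambda f)_a v = (D lambda_a v) f(a) + lambda(a) Df_a v, which as a matrix is
# outer(f(a), grad lambda(a)) + lambda(a) * Df(a).
product_rule = np.outer(f(a), grad_lam(a)) + lam(a) * Df(a)
numeric = numerical_jacobian(lambda p: lam(p) * f(p), a)
print(np.max(np.abs(product_rule - numeric)))  # small: only finite-difference error remains
```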

these things are particularly difficult to prove which is what you'd hope if the definition was right um so uh let's take one um if uh f and g are differentiable at a then what do we get f ofx is just the F of the value of the function at a plus the linear map which is derivative of a * x - A plus the norm of x - A times an error and similarly G sorry get the same expression for G um times some other error Factor Epsilon 2 and we know that Epsilon 1 of

X and Epsilon 2 of x tend to zero which is what we Define their value to be at zero sorry as X tends to a okay so then just adding these two expressions it gives you that f+ g at X is f+ g at a plus the sum of the derivatives applied to x - A plus the norm of x - A Time Epsilon 1 of X Plus Epsilon 2 of X okay so then this is exactly what we need in order for this to be the derivative of this provided this thing goes to zero

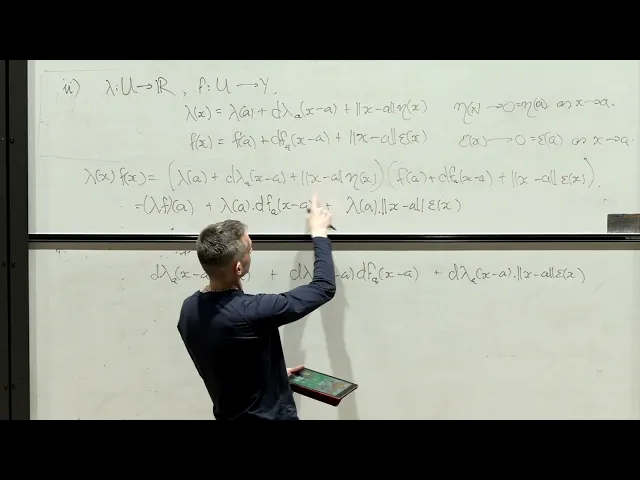

as X goes to a but that's just standard algebra of limits okay so I that's basically trivial but it's just the model of what you have to do so thinking about that for the second part what happens if we take um Lambda um a scalar valued function sorry not on all of X necessarily f a vector valued function then we know that if Lambda is differentiable uh I have this identity and if f is differentiable sorry I have this identity um let's call it Epsilon where Epsilon of X tends to zero which is what we

Define Epsilon to be at a um as x ts to a and we want to understand how Lambda time F behaves well then we just have to multiply these two expressions together but um most of what you get is easy to control from what we already know about F and Lambda but there's one term that we have to think about so Lambda of x * f of x is then just Lambda of a plus d Lambda of a x - A plus the norm of x - A A of X all time F of a

the derivative of f a * applied to x - a rather and the norm of x - A times Epsilon of X Okay so we've got 3x3 is nine terms to deal with um Lambda of a * F of a is the value of Lambda time F at a we want that one um then you get Lambda of a times DF at a appli to xus a that's one of the terms I'm declaring is in the derivative of the product um and then I get Lambda of a times this and that's not in what I

was calling part of the derivative but that's okay because certainly as um X goes to a this is the norm of x - A Time something that's a scalar times something going to zero so this will go to zero um this this we can put in an error term um what about the next factor that gives us the derivative of Lambda at a * a x - A Time F of a that's the other part of what I was declaring the derivative will be um then I get the product of the two derivatives which again

makes sense because this is scalar valued and this is Vector valued um and that's the one that we need to think about for a second um but let's just write down everything else we get that as the final term from that that term and then finally we get this times all those terms uh so let's okay so now that's a lot of terms If We Gather things according to how we want them this is the value of the product then I get uh this term well let me leave it like that okay so those are

the two cross terms that we got that are linear in xus a uh so that is the candidate for the derivative and then I claim we have the norm of xus a times everything else well what is everything else you get Lambda of a Time Epsilon of x uh from that term we've got D Lambda of a time x - A again time Epsilon of X which is this term and then we have I guess all of these terms which is uh a of X time F of a plus a of X time DF of

a and then sorry 8 of X Epsilon of X okay so what we ah and then the one term I've left out is the one which is D Lambda of a at x - A * DF the derivative of F at a * DX - A sorry sorry DF at a applied to x - A okay so now this term this is going to zero this is a constant this is going to zero this is a linear map applied to xus a so we know this is less than the the norm of that linear map

time x - a norm so uh this actually goes to zero as X goes to a and this goes to zero as X goes to a so the product certainly does um this goes to zero and this is a constant uh so they're both tending to zero as X goes to a or rather the product is uh and then this goes to zero and this is bounded so this is bounded by the modulus of sorry the absolute value of this thing times the norm of the derivative time x - A so again this is going

to zero and this is going to zero as X goes to a and then every term here is going to zero so the product certainly is okay so all of the things inside this bracket are straightforward to analyze and it's just this that we need to think about but we just use the same thing that we used here to see that um if you look at oh sorry I should have said it's just plus this term and we need to know that this term if you like divided by Norm x - A still goes to

zero so it's this divided by this um but I can look at for example just put the norm of xus a inside one of the two linear factors and you get this so the norm of this is bounded here by just the supremium norm of Lambda because this Vector has Norm one and then this using the same B same uh Bound for a linear map applied to a vector is the norm is bounded by the norm of the linear map times the norm of the vector okay so we had um the derivative of a scalar

valued function multiplied by the derivative of the linear Vector valued function and the point is that if you divide by the norm of x - A you could put that inside one factor and then the other factor is still going to zero okay so it's basically just saying the product of two linear things has to go to zero um even after you divide by the norm of the vector going to zero okay so the problem sheet asks you to essentially use the same argument to see that anytime you have a map in two Vector variables

that is linear in both so it's a bilinear map then the derivative then such a thing is differentiable and the derivative has the same form as it does here okay so that tells you if you like basic algebra of limits things still work for this Vector value derivative um the other thing that I used in the sense of I argued you want it to be true of the derivative um is that if a function is differentiable at a point it is continuous at that point okay so let's just check that is true um so suppose
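As an illustration of the problem-sheet statement (a sketch, taking matrix multiplication $B(A, C) = AC$ on $3 \times 3$ matrices as the bilinear map, an assumption chosen for concreteness), the candidate derivative at $(A, C)$ is $(H, K) \mapsto B(H, C) + B(A, K)$, mirroring the product rule. The error is exactly $B(H, K)$, which is quadratic in the increment, so the error ratio goes to zero linearly.

```python
import numpy as np

rng = np.random.default_rng(0)

def B(A, C):
    # Matrix multiplication: a bilinear map M_3 x M_3 -> M_3.
    return A @ C

def DB(A, C, H, K):
    # Candidate derivative at (A, C), same shape as the product rule: B(H, C) + B(A, K).
    return B(H, C) + B(A, K)

A, C = rng.standard_normal((3, 3)), rng.standard_normal((3, 3))
H, K = rng.standard_normal((3, 3)), rng.standard_normal((3, 3))
for s in (1e-1, 1e-2, 1e-3):
    dH, dK = s * H, s * K
    err = B(A + dH, C + dK) - B(A, C) - DB(A, C, dH, dK)
    # Norm of the increment (dH, dK), using the Frobenius norm on each factor.
    step = np.sqrt(np.linalg.norm(dH) ** 2 + np.linalg.norm(dK) ** 2)
    # err is exactly B(dH, dK) = s^2 HK, so err / step shrinks linearly in s.
    print(s, np.linalg.norm(err) / step)
```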

The other thing I used, when arguing what you'd want to be true of the derivative, is that if a function is differentiable at a point then it is continuous at that point. So let's check that.

Suppose $f\colon U \to Y$ is defined on an open set $U$ in a normed vector space $X$, taking values in a normed vector space $Y$, and $Df_a$ exists. Then for every $\epsilon > 0$ there is a $\delta > 0$ (shrunk if necessary so that the open ball $B(a, \delta)$ sits inside $U$) such that whenever $x$ is within $\delta$ of $a$,

$$\|f(x) - f(a)\| \le \big(\|Df_a\| + \epsilon\big)\,\|x - a\|,$$

where $\|Df_a\|$ is the operator norm of the linear map that is the derivative. In particular, $\|f(x) - f(a)\| \to 0$ as $x \to a$, because that is certainly true of the right-hand side. So this inequality says that, provided $x$ is close enough to $a$, the distance from $f(x)$ to $f(a)$ is bounded by the norm of the derivative of $f$ at $a$, plus an error you can make as small as you like, times the distance from $x$ to $a$.

The thing to be slightly careful about is that this does not say $f$ is Lipschitz near $a$. It only says that the distance between $f(x)$ and $f(a)$ is bounded by a constant times the distance between $x$ and $a$. You don't know, for example, that if $x$ and $y$ are close enough to $a$ then $\|f(x) - f(y)\|$ is bounded by some constant times $\|x - y\|$.

The proof is just unrolling the definition. We have

$$f(x) - f(a) = Df_a(x - a) + \|x - a\|\,\epsilon_1(x),$$

where $\epsilon_1(x) \to 0$ as $x \to a$, which is what we define $\epsilon_1(a)$ to be. (Calling both things $\epsilon$ wasn't a great idea, so call this one $\epsilon_1$ to distinguish it.) That means I can make $\|\epsilon_1(x)\|$ as small as I like so long as I insist $x$ is close enough to $a$: choose $\delta > 0$ small enough that $B(a, \delta) \subseteq U$ and $\|\epsilon_1(x)\| < \epsilon$ whenever $\|x - a\| < \delta$. Then for $x$ in this ball, the triangle inequality and the definition of the operator norm (which says $\|Df_a(x - a)\| \le \|Df_a\|\,\|x - a\|$) give

$$\|f(x) - f(a)\| \le \|Df_a(x - a)\| + \|\epsilon_1(x)\|\,\|x - a\| \le \|Df_a\|\,\|x - a\| + \epsilon\,\|x - a\|,$$

which is exactly what we wanted.
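To see the Lipschitz caveat concretely, here is a standard one-variable example (chosen for illustration, not taken from the lecture): $f(x) = x^2 \sin(1/x^2)$ with $f(0) = 0$ is differentiable at $0$ with $f'(0) = 0$, so the lemma's bound holds at $a = 0$, but difference quotients between two nearby nonzero points are unbounded. The sketch picks points $x$, $y$ where $\sin(1/x^2) = 0$ and $\sin(1/y^2) = 1$; the helper `ratios` is hypothetical.

```python
import math

def f(x):
    # f(x) = x^2 sin(1/x^2) with f(0) = 0. Since |f(x) - f(0)| <= x^2,
    # f is differentiable at 0 with f'(0) = 0.
    return 0.0 if x == 0 else x * x * math.sin(1.0 / (x * x))

def ratios(k):
    # Points chosen so that 1/x^2 = 2*pi*k (sin = 0) and 1/y^2 = 2*pi*k + pi/2 (sin = 1).
    u = 2 * math.pi * k
    x = 1 / math.sqrt(u)
    y = 1 / math.sqrt(u + math.pi / 2)    # y < x, both close to 0 for large k
    at_a = abs(f(x) - f(0.0)) / x         # ratio against a = 0: at most |x|
    between = abs(f(x) - f(y)) / (x - y)  # ratio between two nearby points
    return x, at_a, between

for k in (10, 1000, 100000):
    x, at_a, between = ratios(k)
    # at_a shrinks with x, while `between` grows roughly like sqrt(k).
    print(f"k={k}: x={x:.2e}, ratio to f(0)={at_a:.2e}, ratio between x and y={between:.1f}")
```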

So that establishes that if you're differentiable, you're continuous. The thing to note is that, since $f$ being differentiable at $a$ roughly means that $f$ has a good linear approximation near $a$, you should expect $f$ to be continuous at $a$ provided its derivative is continuous; that's really the crucial point here. So if $X$ is not finite dimensional, we require that the derivative is not just a linear map but a bounded linear map, that is, a continuous one. In other words, the definition of being differentiable is that you have a good bounded linear approximation.

Again, I think this was on the first problem sheet: the way we constructed things, the values of the linear map that is the derivative are just the directional derivatives, so they are uniquely determined by the function. If a function is differentiable at a point, this gives you the values of the derivative there, so the question of whether such a linear map exists is purely an existence question. And even if a linear map with those values exists, that doesn't mean the function is differentiable, as we saw last time, because that linear map may simply not be a good enough approximation. If it is a good enough approximation in this sense, then the lemma tells you that the continuity of $f$ at $a$ follows from the continuity of its derivative. In finite dimensions that is automatic for a linear map, but in infinite dimensions it isn't, so you just impose it as part of the definition.
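For the remark that a linear candidate built from the directional derivatives need not be a derivative, here is one standard example (hedged: not necessarily the one used last lecture): $f(x, y) = x^3 y / (x^6 + y^2)$ with $f(0, 0) = 0$. Every directional derivative at the origin is $0$, so the only candidate is the linear map $T = 0$, yet $f = 1/2$ along the curve $y = x^3$, so $f$ is not even continuous at the origin and, by the lemma, not differentiable there. The Python sketch below just evaluates these quantities; `difference_quotient` is an illustrative helper.

```python
def f(x, y):
    # Illustrative example: f(x, y) = x^3 y / (x^6 + y^2), with f(0, 0) = 0.
    if x == 0 and y == 0:
        return 0.0
    return x**3 * y / (x**6 + y**2)

def difference_quotient(v, t):
    # (f(t v) - f(0)) / t; its limit as t -> 0 is the directional derivative at the origin.
    return (f(t * v[0], t * v[1]) - f(0.0, 0.0)) / t

directions = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (2.0, -3.0)]
for v in directions:
    # Algebraically this equals t v1^3 v2 / (t^4 v1^6 + v2^2) (or 0 if v2 = 0), so it tends to 0.
    print(v, [difference_quotient(v, t) for t in (1e-2, 1e-4, 1e-6)])

# Along the curve y = x^3, f(s, s^3) = s^6 / (2 s^6) = 1/2, however small s is.
print([f(s, s**3) for s in (1e-1, 1e-2, 1e-3)])
```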

The other standard tool you use when studying differentiable functions of one variable is the chain rule. With the definition of the vector-valued derivative set up as it is, the chain rule holds for vector-valued functions with basically the same proof. The only difference is that in the vector case we really have to use this lemma, whereas in one variable the corresponding step is easy to use without really noticing it.

The theorem is as follows. Let $X$, $Y$ and $Z$ be normed vector spaces, let $U \subseteq X$ and $V \subseteq Y$ be open, and let $f\colon U \to Y$ and $g\colon V \to Z$ be functions such that $f$ is differentiable at some point $a \in U$, with $b = f(a) \in V$, and $g$ is differentiable at $b$. Then the composition $g \circ f$ is differentiable at $a$, and

$$D(g \circ f)_a = Dg_{f(a)} \circ Df_a.$$

The derivative is the only thing it could sensibly be: it needs to be a linear map from $X$ to $Z$, and you have a linear map from $X$ to $Y$ and one from $Y$ to $Z$, given by the derivatives of $f$ and $g$ respectively. So the only thing available is the composition of those two linear maps, and the chain rule says that's the right answer.

I just wrote down the composition; in this generality it's slightly messy to say precisely where it is defined. Its domain is the intersection of the preimage of $V$ with $U$. But since $Df_a$ exists, $f$ is continuous at $a$, so points near $a$ are mapped near $b$. Since $V$ is open, the preimage $f^{-1}(V) = \{x \in U : f(x) \in V\}$ is therefore a neighborhood of $a$, so $g \circ f$ is defined on some open ball around $a$, which is all you need in order to ask whether or not it is differentiable at $a$.
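In coordinates, the chain rule says the Jacobian of $g \circ f$ at $a$ is the matrix product of the Jacobian of $g$ at $b = f(a)$ with the Jacobian of $f$ at $a$. A minimal NumPy sketch with hypothetical maps $f\colon \mathbb{R}^2 \to \mathbb{R}^3$ and $g\colon \mathbb{R}^3 \to \mathbb{R}^2$ compares that product with a finite-difference Jacobian of the composition.

```python
import numpy as np

def f(p):
    # Hypothetical f: R^2 -> R^3.
    x, y = p
    return np.array([x * y, x + y**2, np.sin(x)])

def Df(p):
    x, y = p
    return np.array([[y, x],
                     [1.0, 2 * y],
                     [np.cos(x), 0.0]])

def g(q):
    # Hypothetical g: R^3 -> R^2.
    u, v, w = q
    return np.array([u * w, np.exp(v)])

def Dg(q):
    u, v, w = q
    return np.array([[w, 0.0, u],
                     [0.0, np.exp(v), 0.0]])

a = np.array([0.4, -0.8])
b = f(a)
chain = Dg(b) @ Df(a)  # matrix of Dg_b composed with Df_a: (2x3)(3x2) = 2x2

# Central-difference Jacobian of g o f at a, one column per standard basis vector.
t = 1e-6
numeric = np.column_stack(
    [(g(f(a + t * e)) - g(f(a - t * e))) / (2 * t) for e in np.eye(2)]
)
print(np.max(np.abs(chain - numeric)))  # should be tiny (finite-difference error only)
```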

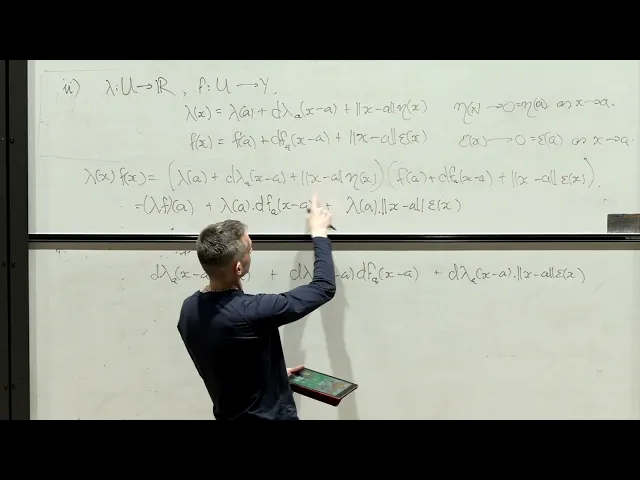

uh so that's just the vectors in X whose images lie in v um is a neighborhood of a so in other words it makes sense to ask if the composition is um differentiable at a uh sorry that's yeah that's fine um so just what do we know in these situations just like in everything that we did in the LMA a second ago you just write out what you're given so f is differentiable at a implies that f ofx is approximated by this Aline linear thing plus the norm of x minus a time Epsilon 1 a

function from U to Y and G of Y is G of B which is f of a plus the derivative of g at B applied to Yus B plus the norm of that uh Vector between Y and B times Epsilon 2 of Y let's say where Epsilon one of X tends to zero which is Epsilon one of a as X goes to a and Epsilon 2 of Y tends to zero which is Epsilon 2 of b as y goes to B okay so we want to understand the composition of G and F uh so we

just substitute in the first Formula into the second formula essentially G of f ofx is G of B which is G of f of a plus the derivative of g at B applies to well F ofx minus F of a um plus the norm of f ofx minus F of a time Epsilon 2 of f ofx - F of a oh sorry just f of x okay so now um that's just writing yal F ofx now substitute in the first formula for f ofx minus F of a gives us the derivative of g at B

applied to the derivative of F at a on the vector x - A plus the derivative of g at B applied to EPS one of x * the norm of x - A and then still plus this term here okay so this is the value of the composition oh sorry that's a not an X at the point a this is the linear map applied to xus a that we're claiming should be the derivative so in order for that to be true so thus um the derivative of G composed with F at a is equal to

the derivative of g at B composed with the derivative of F at a if and only if the error when you divide by Norm of x - A goes to zero so that's saying 1 over Norm of x - A times this stuff goes to zero as X goes to a okay so there's two terms here um one of which is easier than the other so it's enough obviously to check their Su goes to zero to check that each term goes to zero for one we've got the Norms cancelling um one is just equal to

the derivative of g at B on Epsilon one of X and this is a linear map Epson 1 is continuous at a so as X tends to a this to Epson one of a which is zero so this is a composition of continuous Maps so it tends to its value the value of the composition which is just the linear map applied to Epsilon one of a which is zero so that's equal to zero so that's the if you like straightforward one the slightly more subtle one is this one so two you've got the norm of

f ofx - F of a / x - A Time Epsilon 2 of f ofx okay now if you look at this term um Epsilon 2 goes to its value at Epsilon 2 of B which is zero so this goes to zero since Epsilon y goes to zero as y goes to B and F ofx goes to F of a um as X goes to a because F being differentiable implies f is continuous okay so this term the second term we need to use dilemma that um differentiability implies continuity you need to both use continuity

here and then in this Factor so by the LMA um there's a Delta greater than zero such that if x is within Delta of a then this thing is bounded by the operator Norm of the derivative of F at a plus one let's say okay so we need to use sort of the precise statement of the LMA on continuity to show that this ratio is bounded by something well anything you like as close to that's bigger than the operator Norm of the derivative and then this term goes to zero so the product goes to zero

So that proves the chain rule, and the proof really is: write down what you know, look at what you need in order to prove the theorem, which comes down to showing that a certain limit exists, and then check that the limit is indeed zero. For one term that is basically immediate from the definitions, but for the other you really need the argument showing that existence of the derivative implies continuity at the point, and in fact implies something a little better than continuity. If $f$ is merely continuous at $a$, it is not the case that $\|f(x) - f(a)\| / \|x - a\|$ has to stay bounded: just take the square root function in the single-variable case.

If you write out the proof of the chain rule from Prelims, one hint that the definition of the derivative in higher dimensions is a good one is that what you do in that proof is rewrite the one-variable definition in the form of the many-variable definition. The subtle part of controlling the chain rule is understanding how the products of the errors behave, and you get a term just like this one. In the one-variable case that ratio tends to $|f'(a)|$, so you have a factor you know is bounded because it converges to the derivative. Here you don't get that, because the derivative is a linear map, so there isn't a single value the ratio tends to. But you get roughly the same statement: the ratio is bounded, not by $\|Df_a\|$ on the nose, but by anything slightly larger than it. The same bound would hold if you used $|f'(a)|$ in the one-variable case.
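The contrast between "continuous" and "differentiable" in part (2) of the proof can be seen numerically. This short sketch (using `math.sqrt` and `math.sin` as the example functions, an assumption for illustration; `quotient_ratio` is a hypothetical helper) evaluates $|f(x) - f(a)| / |x - a|$ at $a = 0$ for shrinking steps: for the square root the ratio equals $1/\sqrt{x}$ and blows up, while for $\sin$, which is differentiable at $0$, it stays bounded and in fact tends to $|\sin'(0)| = 1$.

```python
import math

def quotient_ratio(func, a, x):
    # |func(x) - func(a)| / |x - a|, the kind of factor bounded in part (2) of the proof.
    return abs(func(x) - func(a)) / abs(x - a)

steps = [1e-2, 1e-4, 1e-6, 1e-8]
# sqrt is continuous at 0, but the ratio is 1/sqrt(h): unbounded as h -> 0.
sqrt_ratios = [quotient_ratio(math.sqrt, 0.0, h) for h in steps]
# sin is differentiable at 0, so near 0 the ratio stays below |sin'(0)| + 1 = 2
# (in fact it tends to |sin'(0)| = 1).
sin_ratios = [quotient_ratio(math.sin, 0.0, h) for h in steps]
print(sqrt_ratios)
print(sin_ratios)
```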

Oh wait, I just realised I forgot to prove the third part of the lemma. We proved the two algebra-of-limits statements, but not the statement that everything reduces to scalar-valued functions in the target. So, the proof of part (3). Write $f_i(x) = \delta_i(f(x))$, with $\delta_1, \dots, \delta_m$ the dual basis to $b_1, \dots, b_m$. If $f$ is differentiable at $a$, then $f_i = \delta_i \circ f$ is differentiable, with derivative $\delta_i \circ Df_a$, the linear functional composed with the derivative of $f$ at $a$. You can view this as an example of the chain rule: the best linear approximation to a linear map is, obviously, the map itself. If $T$ is linear, then $T(x) = T(a) + T(x - a)$ on the nose, with no error term, which shows that $DT_a = T$, the linear map you started with. So $D(\delta_i \circ f)_a = \delta_i \circ Df_a$ is a case of the chain rule, but a much easier one, and you should check that it follows immediately from the definition once you know that $\delta_i$ is bounded, i.e. $|\delta_i(w)| \le \|\delta_i\|\,\|w\|$ for every $w \in Y$. So one direction is trivial: if $f$ is differentiable, then its components are differentiable.

For the other direction, if each $\delta_i \circ f$ is differentiable at $a$, that means

$$f_i(x) = f_i(a) + D(f_i)_a(x - a) + \|x - a\|\,\epsilon_i(x),$$

where $\epsilon_i(x) \to 0$ as $x \to a$. Then we can just add these together: take this identity of scalars for each $i = 1, \dots, m$, multiply it by the vector $b_i$, and sum. Moving the value at $a$ and the linear terms to the left-hand side, and then using the triangle inequality, gives

$$\Big\|f(x) - f(a) - \sum_{i=1}^{m} D(f_i)_a(x - a)\, b_i\Big\| = \|x - a\|\,\Big\|\sum_{i=1}^{m} \epsilon_i(x)\, b_i\Big\| \le \|x - a\| \sum_{i=1}^{m} |\epsilon_i(x)|\,\|b_i\|,$$

where the $\epsilon_i(x)$ are scalars, hence the absolute values. Each $\epsilon_i(x) \to 0$ as $x \to a$, so $\sum_i |\epsilon_i(x)|\,\|b_i\|$ is a finite sum of things going to zero times constants, and it goes to zero. That says exactly that $Df_a$ is the linear map $\sum_i D(f_i)_a\, b_i$, which is what was in the statement. So if the components are differentiable, then the function is differentiable.
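Part (3) of the lemma can be illustrated with a basis of $Y = \mathbb{R}^2$ that is not the standard one. In this sketch (hypothetical basis $b_1 = (1, 1)$, $b_2 = (1, -1)$ and hypothetical map $f$), the dual basis $\delta_i$ is read off as the rows of the inverse of the matrix whose columns are the $b_i$. The derivatives of the components $f_i = \delta_i \circ f$ are computed by finite differences; reassembling $\sum_i D(f_i)_a\, b_i$ recovers the Jacobian of $f$ at $a$, and each $D(f_i)_a$ matches $\delta_i \circ Df_a$.

```python
import numpy as np

# Hypothetical basis b_1 = (1, 1), b_2 = (1, -1) of Y = R^2, stored as the columns of Bmat.
Bmat = np.array([[1.0, 1.0],
                 [1.0, -1.0]])
# Dual basis: delta_i is the i-th row of Bmat^{-1}, because Bmat^{-1} @ Bmat = I
# says exactly that delta_i(b_j) is 1 when i == j and 0 otherwise.
Dual = np.linalg.inv(Bmat)

def f(p):
    # Hypothetical f: R^2 -> R^2.
    x, y = p
    return np.array([x**2 + y, x * np.exp(y)])

def Df(p):
    # Jacobian of f in the standard bases.
    x, y = p
    return np.array([[2 * x, 1.0],
                     [np.exp(y), x * np.exp(y)]])

def component(i, p):
    # f_i(p) = delta_i(f(p)): the i-th coefficient of f(p) in the basis b_1, b_2.
    return Dual[i] @ f(p)

a = np.array([1.5, 0.2])
t = 1e-6
# Row i: a central-difference gradient of the scalar function f_i at a.
component_derivs = np.array([
    [(component(i, a + t * e) - component(i, a - t * e)) / (2 * t) for e in np.eye(2)]
    for i in range(2)
])
# Reassemble Df_a = sum_i D(f_i)_a b_i: each term is (column b_i) outer (row D(f_i)_a).
reassembled = sum(np.outer(Bmat[:, i], component_derivs[i]) for i in range(2))
print(np.allclose(reassembled, Df(a), atol=1e-6))              # True
print(np.allclose(component_derivs, Dual @ Df(a), atol=1e-6))  # True: D(f_i)_a = delta_i o Df_a
```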

So really, the thing to understand in the definition of the derivative is the case where $X$ is higher dimensional; the dimension of $Y$ is not really an issue.

I think it's probably best to stop there for today. Next time we'll talk about partial derivatives and get a fix for the fact that having all the directional derivatives didn't guarantee that you were differentiable. The fix is that if you have all the directional derivatives, or even just the derivatives in the directions of the coordinate axes, and they are continuous, then you are differentiable. For the rest of the course, the condition of being continuously differentiable is the one to work with. It is obviously stronger than being differentiable, but it is the most robust: functions that are merely differentiable can still behave slightly weirdly, whereas continuously differentiable functions are the ones you can bring to a dinner party, since they behave as you expect. The main theorem of the course (which we don't actually prove) is the inverse function theorem, which says that if you're continuously differentiable and your derivative is invertible, then locally you're invertible too. That's what we'll get to next week.
