so um you know as I as I mentioned earlier we're talking about best episode selection here for Lee squares but we can just as well do this for logistic regression or for any other type of model um and in particular when we're talking about other types of models we don't usually talk about residual sum of squares instead we talk about something that's called the deviance which is negative two times the log likelihood and in the in the case of of um least squares the deviance and the residual sum of squares are equivalent but for other

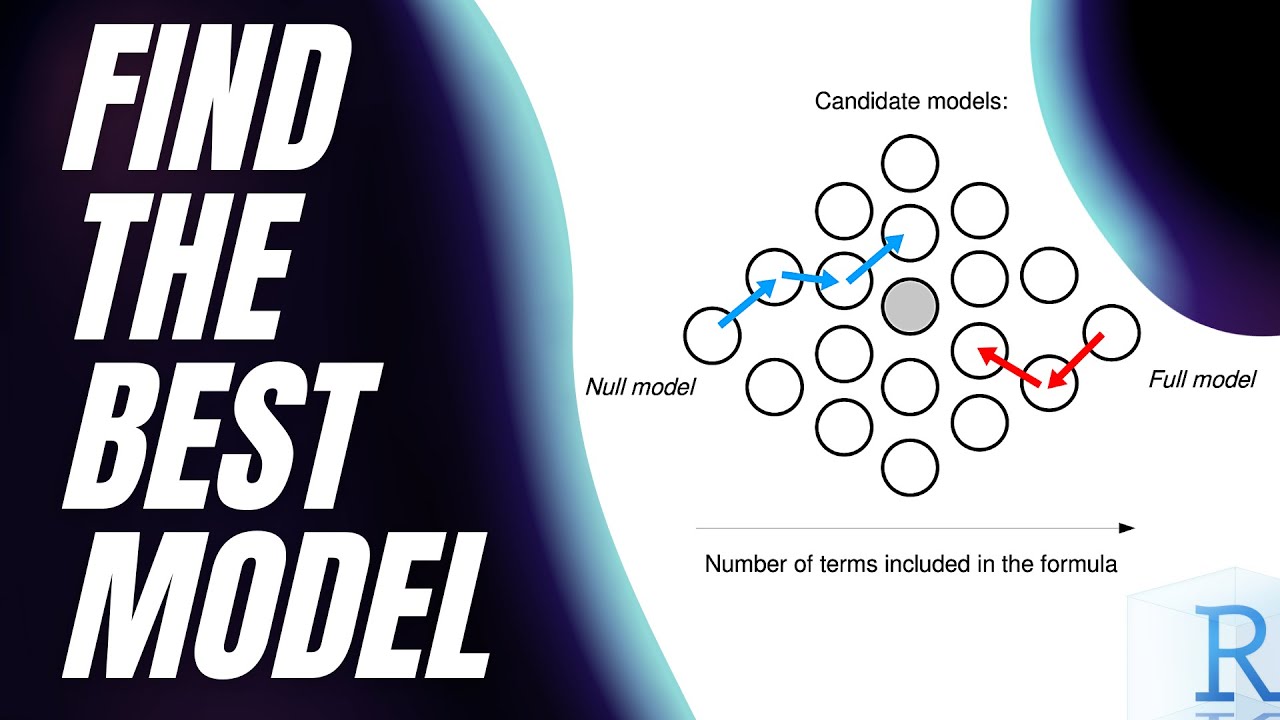

model types the deviance is really just a generalization of residual sum of squares but here we're going to talk about residual sum of squares for Simplicity so you know in that in that figure we were looking at a couple slides ago with the credit data we saw that there were all of those gray dots and I mentioned that there's actually two to the 10 gray dots in that picture and and the idea is that if I have a if I have access to p predictors and I want to consider every possible sub model I'm actually

looking at 2 to the p subsets and that is an absolutely huge number so just to put this in perspective you know like 2 to the 10 that's like a thousand so that's okay fitting a thousand models if you've got a decent computer is no problem but 2 to the 40 is a really big number and so the idea is that um when when p is large we're really not going to be able to do best subset selection and 40 predictors isn't large like I work I work on data where there's easily tens or hundreds

of thousands of predictors and so Beth that sub selection just does not scale for many of the types of problems that people are interested in today I think the limit for most packages to do subset selection is about 30 or 40. and beyond that they just say I can't I can't look at all the combinations right protection also suffers from statistical problems and the idea is like if if I'm considering gosh what is this this is a trillion models if I have 40 predictors and I'm considering a trillion models um I'm going to overfit the

data I'm just looking at so many models I'm going to choose a model that looks really really great on this data by chance but it's not going to look great on an independent test set that I didn't use in in training the model and so I'm going to have a problem with overfitting and so for these two reasons computational and statistical best upset selection isn't really great unless p is extremely small and in this setting we can use stepwise methods which sort of are the same idea as best subset selection but they look at a

more restricted set of models so instead of looking at two to the P models they look at more like P Squared models and of course 2p is much more than P Squared for for any P of any reasonable size so this point that the element is actually pretty somewhat counterintuitive especially people in computer science might think it's always best to do the the most exact computation you can if you can do the optimization over all models it's usually felt to be better but Danielle said something that's actually quite somewhat counterintuitive that actually to avoid fitting

too hard it's good to actually not look at all possible models but only a subset of the models so for its stepwise regression which we'll look at is Step wise and fourth step wise in particular doesn't try to look at all possible models despite the fact that in in in cases when p is less than 40 so you can look at all possible models it may not be the best thing to do that so for step wise on purpose um looks only at a subset of the models and that can actually be helpful yes I

mean best upset selection what would P be if you're going to use it and what would be your limit where if p is larger than that number you wouldn't want to go beyond that subset uh I wouldn't want to use best subset maybe not Beyond 10 or 20 say yeah I don't think I would probably even use it for 20 . I think I would use the subset if I've got a handful of predictors and if I've got 10 I wouldn't be using that anymore most likely yeah so the point is it's not always best

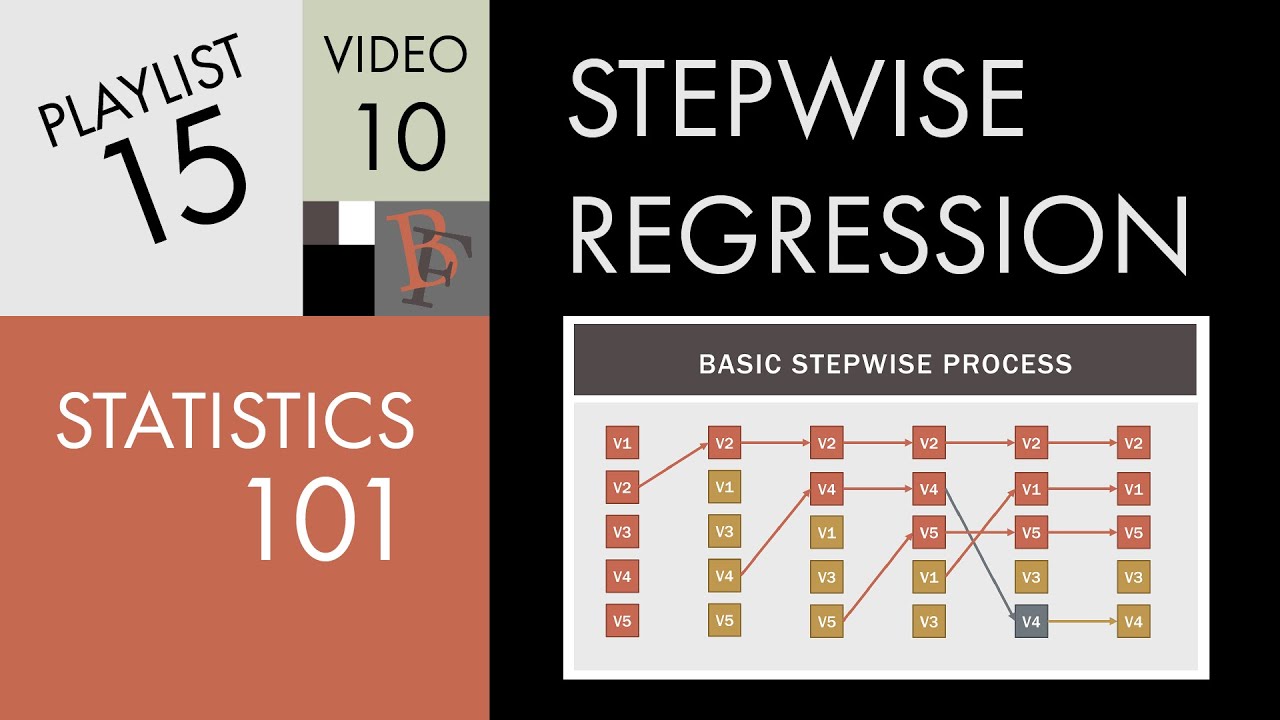

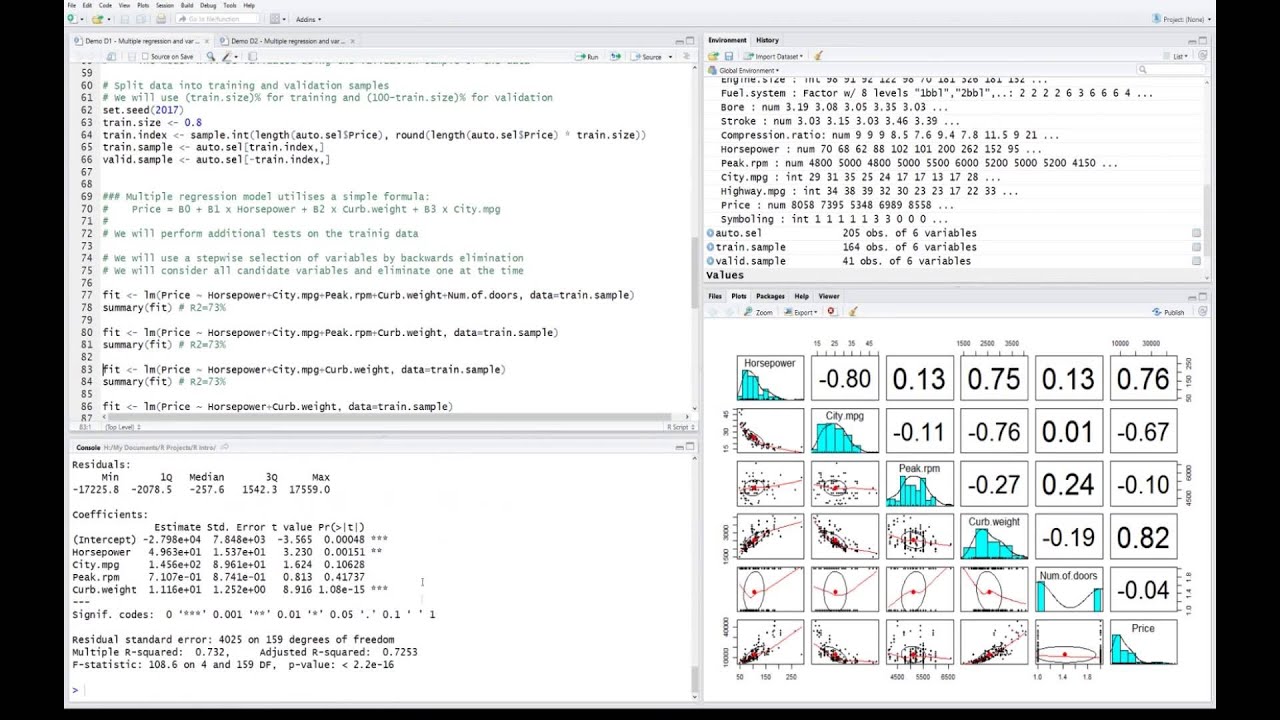

to do a full search even when you can do it because you pay a price in variance so so nice methods forward and backward stepwise and these are really pretty similar um but there's just one fundamental difference which is whether you're starting with a model with no predictors or you're starting with a model with all the predictors so in forward stepwise selection we start with a model that contains no predictors this is just a model with only the intercept it's what we were calling the the null model m0 earlier and then we're going to add

predictors to the model one at a time until all of the predictors are in the model so this sounds like best subset selection but there's actually a really major difference which is that at each step we're not looking at every single possible model in the universe that contains key predict K predictors we're just looking at the models that contain the K minus one predictors that we already chose in the previous step plus one more so at the Kate step we're looking at a much much more restricted set of models than than we are in in

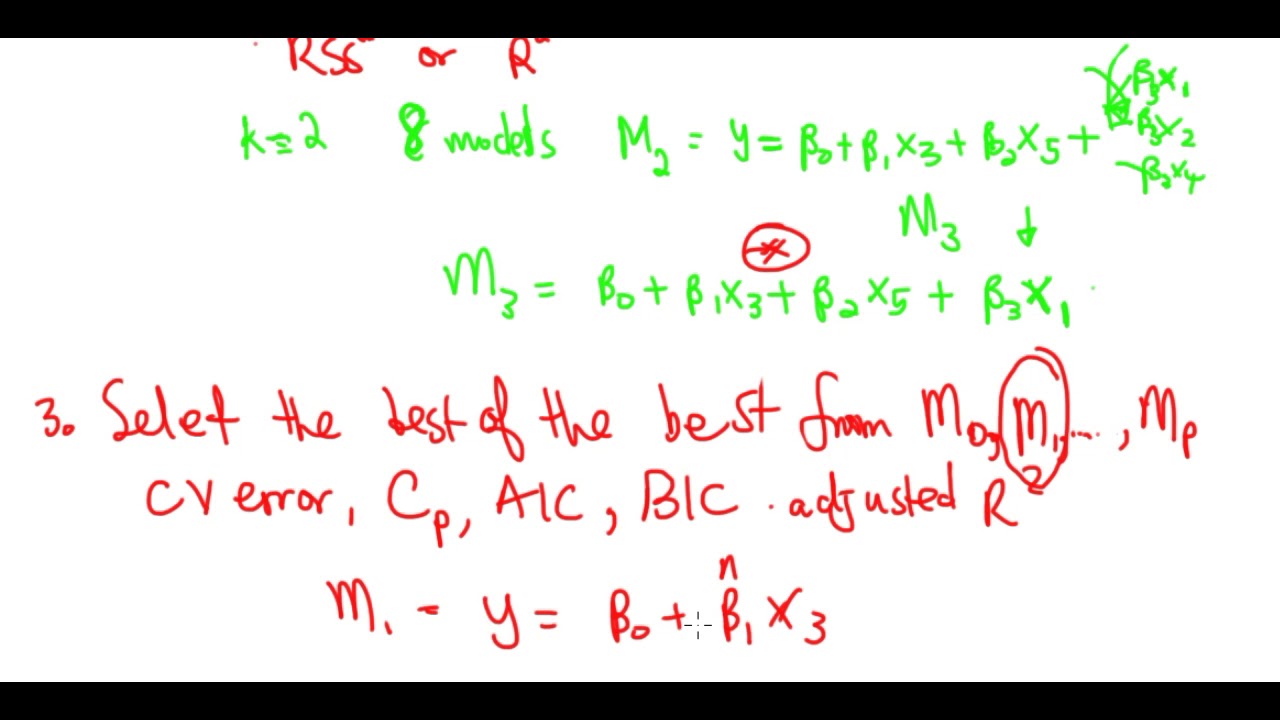

best subset selection in particular at each step we're just going to choose the variable that gives the biggest Improvement to the model we just had a moment earlier so the idea behind forward stepwise selection is that once again we start with this model m0 it's the null model and it just contains an intercept so you're just I'm going to predict the mean for every observation and then we're going to try to create a model M1 and the model M1 is going to just contain one more predictor than m0 so we're just going to take m0

and we're going to look for the best predictor to add that's going to lead to the smallest RSS or the largest r squared so that gives us M1 and now in order to get M2 we're going to take M1 and we're going to consider adding every predictor that we can one we're going to consider adding all P minus 1 possible predictors to M1 and we're going to see which of those P minus 1 predictors gives us the best model M2 and to get M3 we look at M2 we consider adding all P minus two predictors

that aren't in M2 we choose the best one and that gives us M3 and so on so the really key thing here is that in this step this is different from what we were doing in in the best subset selection case because here we're not looking at every possible model containing K predictors instead we're just looking at every possible model that contains one more predictor than M K minus one where we're going to take the model we just got and we're just going to add one predictor to it to get a slightly bigger model so

just like in best subset selection we're going to get p plus one models from m0 to MP but the difference is that these models are nested so MP M1 contains the predictor in m0 plus one more M2 contains M1 plus one more predictor M3 contains the predictors in M2 plus one more and so on these models are nested in a way that wasn't the case for a best subset selection so after we get these P plus one models we're going to choose among them and as I mentioned earlier we can't use RSS or r squared

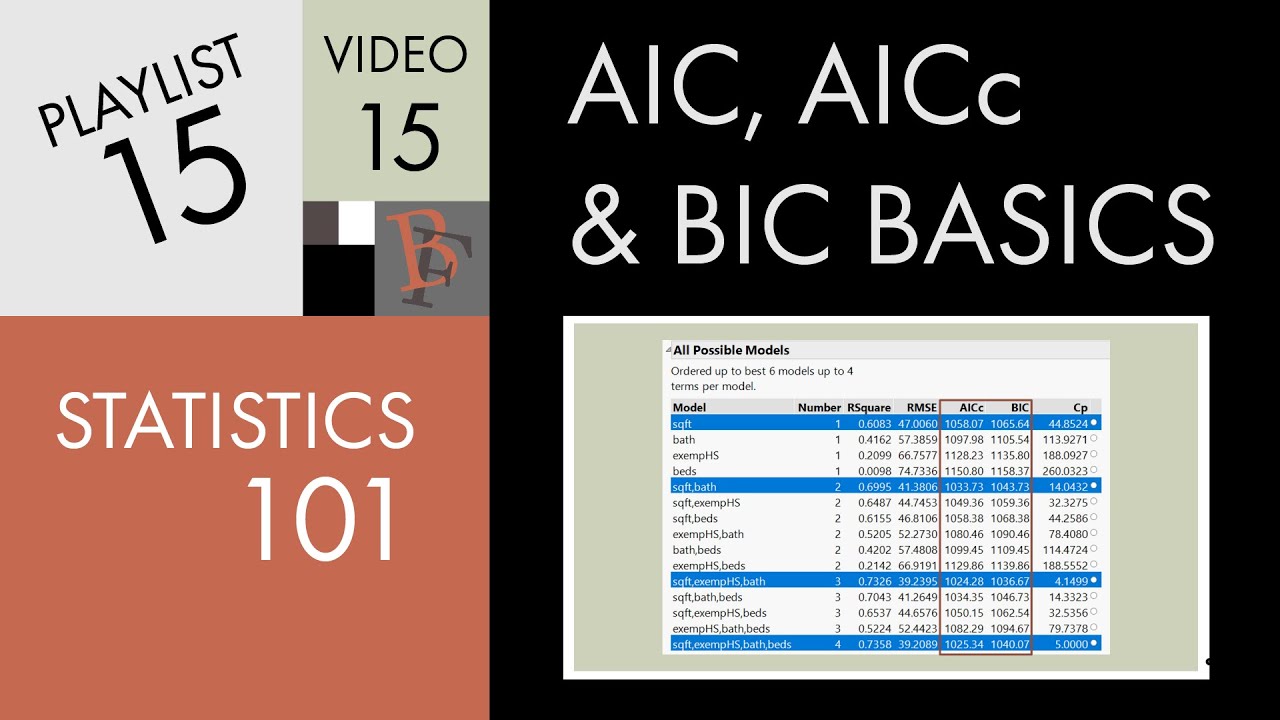

to choose among these P plus one models because they all have different sizes and in a few minutes we'll talk about some approaches like cross-validation and AIC and Bic to choose among these P plus one models so we we've said that um that forward stepwise selection has a computational advantage over best subset selection and just to really reiterate why that's true in best subset selection we're considering two to the P models which as we said is like a trillion when P equals 40. but in contrast in forward stepwise selection for m0 we're just considering one

model um and to get M1 we're considering adding P different predictors so we're considering P models for M2 we're considering adding P minus one additional predictors so that's P minus one models and so on and so actually when we do forward still stepwise selection we're considering around p-squared models and so P Squared is much less than 2 to the P so we're considering far fewer predictors when we do forward step wise and here's another picture to help see what's going on and see if I can draw it but if we think of the RSS picture

is because the function of model size remember this is RSS and for best subset remember it of course we said the career keeps going down it might flatten out um but for so this is for best subset I saw that before step Wise It's also going to have the same shape but um it's not going to go down it's going to be it might be it's going to be above that curvature so I'll start in the same place because for one variable it's going to pick the the same best variable but it's going to be

typically above that curve I'm not doing it very well until the very end whoops that wasn't these are meant to join at the end so the point is that forward step wise is not doing a search among all possible models so for a given model size it's going to have an RSS that might be above typically will be above that for Ford for best subset right so yeah I mean that relates to the fact that um forward step wise isn't actually guaranteed to find the best possible model out of all two to the P models

that the best subsets the best subset considers so like if you look at Rob's picture um right here the forward step wise and best subset curves oops have the same RSS because they each contain just the null model and then over here these two models are the same because forward step wise and best subset are each considering the model with all P predictors so those are the same but in between there's this Gap and the reason for the Gap is because best subset selection is going to find the best model with let's say k predictors

but forward step wise might not and the reason that it might not is because it could be that the best model containing K predictors is not a superset of the best model contained in K minus 1 predictors so we can actually see an example of that if we look a little more carefully at the credit data so um this table shows the results that you get if you fit if you look for m0 M1 M2 M3 and M4 on the credit data so this is M1 M2 M3 and M4 and so if we do best

subset selection then M1 just contains rating the rating variable and if we do forward step wise M1 just contains the rating variable so so far so good if we look at M2 then best subsets M2 contains rating and income and same with forward stepwise is M2 if we look at M3 best subset has rating income student and same thing with Ford stepwise rating income student but when we get to M4 things suddenly change because now in the context of a model with four variables the best that we can do is cards income student and limit

but that is not a model that forward stepwise can give us because remember forward step wise can only give us a model that contains rating income student and one other variable so forward stepwise is going to give us rating income student and it's going to add in limit when we ask for the best model with four variables and in contrast best substet isn't going to have rating it's instead going to have cards and it's lost rating and so this M4 the best subset gives us is going to have a smaller residual sum of squares it's

a different model than we would have gotten with forward stepwise um but you know just because best subset has a better model on the training data it doesn't mean that it's really going to be a better model overall in the context of test data which is what we really care about well one more point about that so you might wonder why this happens well it only happens when the correlation between the features right it's pretty easy to show that if the variables we had no correlation then uh the the variables chosen by the two methods

would be exactly the same because the correlation between the features which is typically there you can get a discrepancy between best subset and forward stepwise