I think we'll get started. So, welcome, everybody. Today we'll be reading Amazon's Chronos paper, which they published, I think, about a month ago now. I'm SallyAnn, I work on the product team here at Arize. And Amber, do you want to do a quick intro? Yeah, hi everyone, my name is Amber. I'm an ML growth lead here at Arize. You probably see me a lot on the community side. I do a lot of conferences, events, and workshops, and I also create a lot of content, so if there's any content that you want

to see in the generative AI space, you can just message me directly in the Arize community Slack. I'm always looking for new ideas or what people are interested in, content-wise. Amazing. Yeah, as Amber mentioned before, if you have any questions as we're going along, feel free to drop them in the chat or the Q&A. We'll be peeking at them as we go, and we'll definitely try to reserve some time at the end to go through them. Before we get into our paper today, I did want

to do a little announcement about our in-person conference, Observe. It's happening July 11th this year. We're going to be in San Francisco at Shack15, a really super cool venue, so I'm excited for that. We do have a special promo for all of you joining today: you can use promo code Chronos to get 50% off the standard tickets, so it's a really great deal. Sarah's going to drop the link in the chat for you all to register, so come join us. It's going to be an awesome event. Cool, all right.

So, for today's agenda, we're going to do a little time series 101, just a really quick refresh of what the current time series landscape is. Then we're going to go through the Chronos framework. Amber's done a really awesome test of Chronos versus ARIMA. They share stats in the paper, but it's always fun to try it out for yourself. She's also done a lot of anecdotal research to see what people are saying about it, so we'll get into that, and

then we have an overview of research from our founder, Aparna, who's done a lot of research on whether LLMs can do time series, so we'll walk through that at the end. At a high level, what we're trying to do today is understand: do Chronos and LLMs have the capacity to be the gold star for time series models, or will the traditional models continue to hold that place? If anybody's familiar with Greek mythology, that is what this is taking inspiration from. So I'm excited to walk through it today and

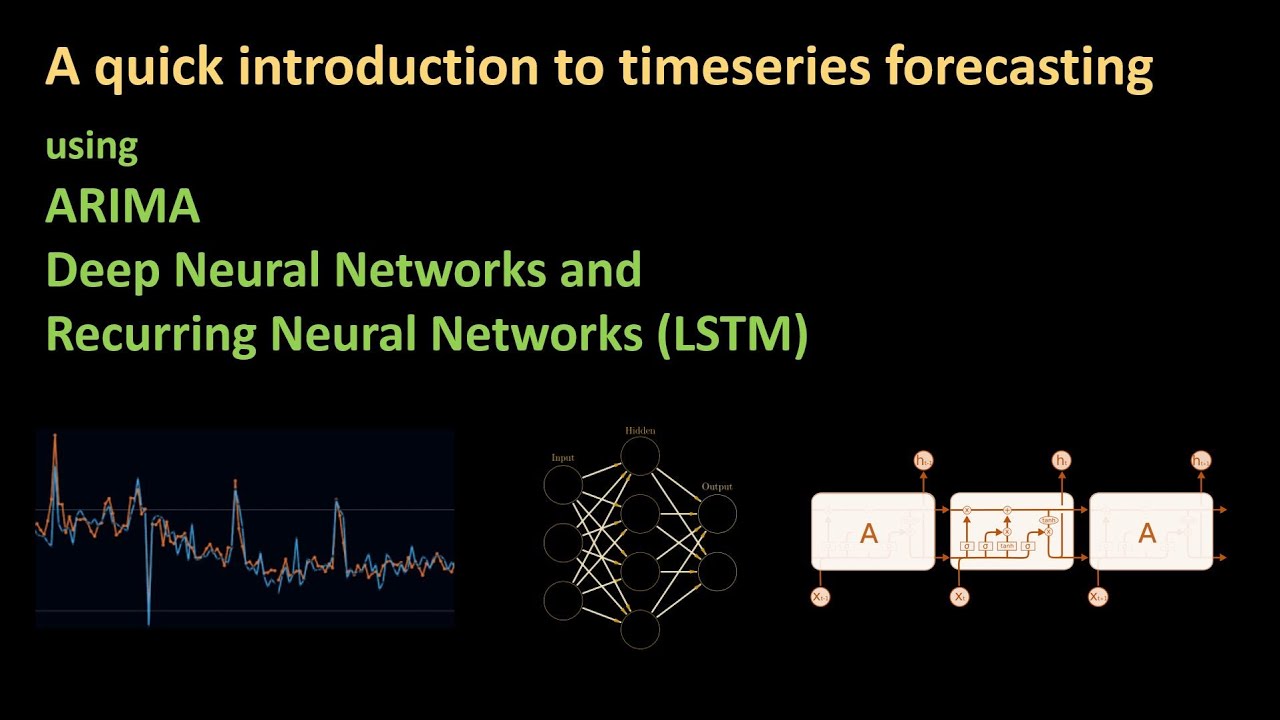

see what we find. So, time series 101. I thought it was important; maybe people haven't really touched time series since school, or have been sticking with the same model for a long time, so I wanted to call out some of our classical models and deep learning models. Classical models are usually called local models, since they independently fit a unique model for every time series. We have ETS, ARIMA, and Theta; these are commonly used forecasting techniques. I'd

say ARIMA is probably the most popular. It's a really reliable model that folks seem to love to use. And as I note there, these models are really effective for series with clear trends and seasonality, which is going to be important when you're dealing with this kind of wild time series data. There are also deep learning models that exist and are used. These are more like global models, because they look at multiple time series in the dataset. They capture those complex patterns and

relationships, and they're really powerful in environments with really large datasets. So classical models analyze each time series independently, while deep learning looks at the whole dataset. The output is very similar for both: classical models will usually focus on predicting the forecasted value, while deep learning provides a density function of future values. So that's just a TL;DR on time series. I think it sets the scene well for the benchmark that we're comparing against. And with that, let's

get into Chronos. Oh, sorry, I forgot about this: LLM-based forecasters. Something that's interesting is that I feel like this paper is getting a lot of attention; I've been seeing it a lot more than some of the papers that came before it. In case you're not aware, LLM-based forecasting is a really hot research topic right now. There are a lot of people in the time series community who have been using the same model; we've been using ARIMA

for years, it's reliable, and as soon as LLMs came on the scene they were like, what does this mean for time series? One of the approaches we've seen before is to use pre-trained LLMs to forecast through prompting. If you've ever heard of PromptCast, it's a way to prompt a pre-trained LLM to look at time series: we make the numerical data strictly textual and pass it through the prompt. Then there's another line of research that focuses on adapting LLMs to time series via fine-tuning. So this is LLMTime,

Time-LLM, and GPT4TS. These are basically the two main approaches we've seen so far. The challenge with these is that the first approach requires a lot of prompt engineering: you've got to get your prompt really well positioned to actually get accurate results. If you're doing the second approach, it's going to take a lot of fine-tuning; each new forecasting task requires you to fine-tune the model, and that's really expensive in a lot of different ways. And then they're,

you know, resource intensive: they depend on large models, which increases computational demands, and they can have prolonged inference time. So those are the growing interests, approaches, and challenges. I think the key thing to call out here is where Chronos is a little bit different: the Amazon research group had the realization that LLMs and time series forecasting share a similar goal, which is to decode sequential data patterns to forecast future events. So that is kind of

the cornerstone of all of this research, and what inspired them to go and create this Chronos framework. Amber, before we move on to the framework, anything you want to add? Yeah, definitely. A lot of people in the time series community, a lot of statisticians, say that this tabular use case is kind of the last frontier for deep learning. And there are differences between looking at a purely statistical method for time series forecasting versus something that

has content understanding to it. What we're seeing with large language models, and especially with RAG use cases, is this ability to add context, so you're making things content-specific. It's like, okay, the LLM is actually understanding what you're trying to get from it, and it can provide explanations. So I think that's a big thing with time series, and SallyAnn and I will get more into this. But SallyAnn, from your perspective, on the format versus content debate, where do you

see LLM forecasters fitting into that? Yeah, it's tough, because with normal time series models, the idea of being able to add additional context, maybe knowledge that you have about the data, is not something you can do with ARIMA, right? So it could potentially be a really powerful thing that gives LLM forecasters the edge over those traditional approaches, because that's just not something that will ever work for them. So I think context could potentially be the real secret to all this,

the secret sauce, if you will. I see a lot of potential with that, and I think that's common with a lot of LLM use cases: the ability to frame the data in a way that gives the LLM more context is just really powerful. Yeah, I'm curious what you think, though. Yeah, no, definitely, and I think maybe that could be on the horizon. It'd be amazing to have a time series model explain, oh, we're actually predicting a drop here because of, I don't know, maybe weather patterns that reoccur

every few years. I remember Walmart Labs coming in and talking about how they sell out of strawberry Pop-Tarts every time there's a major storm in certain areas, so they need to stock up on those, and they wouldn't even have realized that was part of their model until someone very specifically looked at it. But if you ask an LLM to explain, you know, give me five interesting facts about things we have to stock up on that we

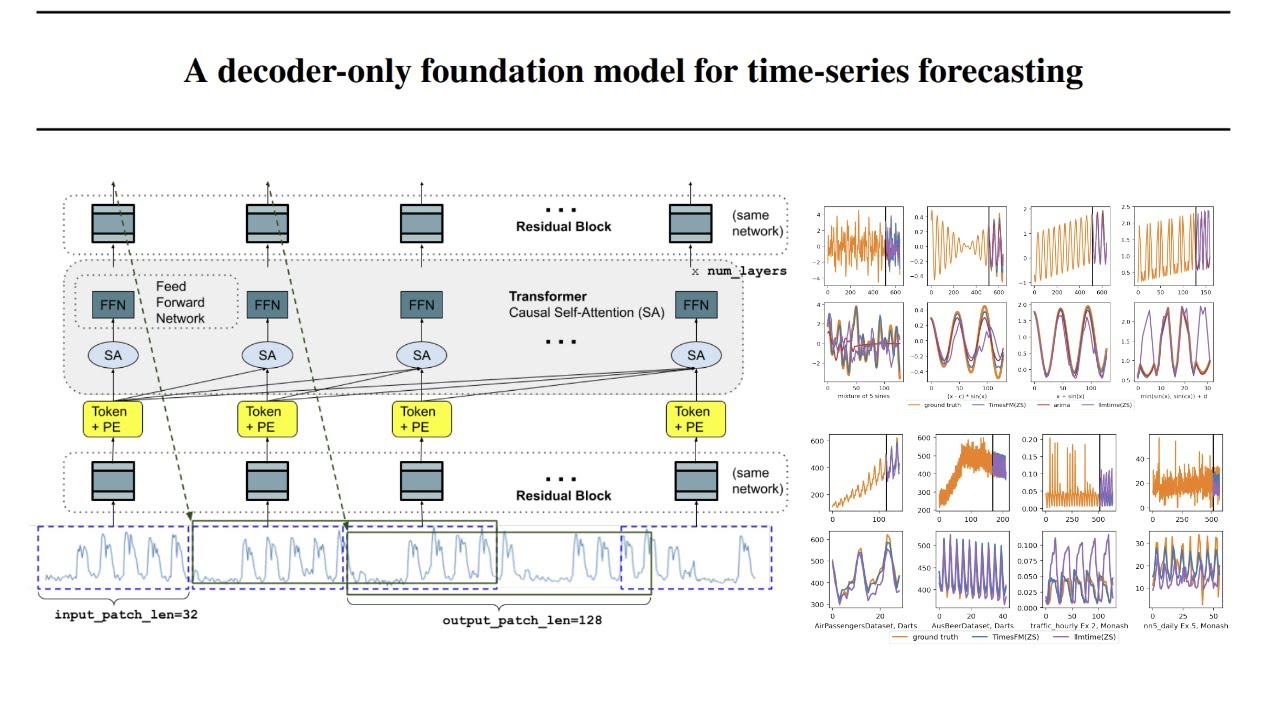

don't normally stock up on, I think that would be really interesting. And yeah, we'll get more into the results, SallyAnn, because I think right now we're obviously not there yet; we're still trying to build forecasters to compete with things like ARIMA. But yeah, let's show them how it works, SallyAnn. All right, let's get into the framework a little bit. This is a visual that's right from the paper. It gives a really high-level overview of how all of this works. So I think the

idea that time series models predict sequential patterns, and so do LLMs: when I read that in the paper, I had a bit of a "duh" moment. I had been playing around with this, and we've had some colleagues who have tested the idea of prompting an LLM for time series, and it just feels so weird to take time series data, format it as text, and send it to an LLM. It just felt wrong, and

I was never surprised when it performed badly. But when I saw Amazon's approach, I was like, oh, it actually makes so much sense to do it this way. What they do is essentially this: we have our time series, they do mean scaling on it, and then they quantize it. Don't worry, we're going to get into that in a little more detail. From this quantization they get the context tokens, which are the actual tokens that are then passed in for training. So

for training they use Google's T5, and that gives you these predicted probabilities and the next token ID, which is essentially the forecasted value. At inference, something very similar happens: we take the context tokens, except now we get sampled tokens, and what's interesting is that you then have to dequantize and unscale to get back to the forecasted value. Remember, in the beginning that's how we set up the training data, so you have to reverse it to get back to your ultimate prediction.
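As a rough illustration of the scale, quantize, dequantize, unscale round trip described here, the following is a minimal NumPy sketch. It follows the talk's description (mean scaling, then percentile bins with token ids 1 to 100); the function names, the bin-center decoding rule, and the example series are assumptions made for illustration, and the paper's actual bin layout and vocabulary size may differ.

```python
import numpy as np

def mean_scale(series):
    """Divide by the mean absolute value: same shape, smaller range."""
    series = np.asarray(series, dtype=float)
    scale = np.mean(np.abs(series))
    if scale == 0:
        scale = 1.0  # assumption: leave an all-zero series unchanged
    return series / scale, scale

def fit_percentile_bins(scaled, n_bins=100):
    """Bin edges at evenly spaced percentiles, plus one center per bin."""
    edges = np.percentile(scaled, np.linspace(0, 100, n_bins + 1))
    centers = (edges[:-1] + edges[1:]) / 2
    return edges, centers

def quantize(scaled, edges):
    """Map each scaled value to a token id in 1..n_bins."""
    # Only interior edges are used, so out-of-range values fall
    # into the first or last bin instead of getting an invalid id.
    return np.digitize(scaled, edges[1:-1]) + 1

def dequantize(tokens, centers, scale):
    """Reverse both steps: token id -> bin center -> original units."""
    return centers[np.asarray(tokens) - 1] * scale

# Hypothetical "number of purchases" series, roughly in the 30-to-70 range.
t = np.arange(120)
purchases = 50 + 15 * np.sin(2 * np.pi * t / 12) + 0.05 * t

scaled, scale = mean_scale(purchases)
edges, centers = fit_percentile_bins(scaled)
tokens = quantize(scaled, edges)
recon = dequantize(tokens, centers, scale)

print(tokens[:8])
print("max round-trip error:", np.abs(recon - purchases).max())
```

The round trip is lossy by design: every value in a bin decodes to that bin's center, so the reconstruction error is bounded by half the widest bin (in original units). In the actual model the tokens fed to `dequantize` would be sampled from the language model rather than taken from the input.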

So this is the high level; we'll break down these steps in the next few slides, but it's a really interesting approach, and we'll make it clearer in the coming steps. The first thing I mentioned was scaling. There are different scaling approaches you can take; they use mean scaling here. Scaling the data keeps its shape but restricts it to a smaller range, and if you think of LLMs, that's going to be important: there's less of a range for the LLM to have to attend to. So this is a

really important step. This is a visual comparison; you can see this is just some generated data. I did use GPT-4 to help me generate some data to plot this out; it's a secret weapon. You can see the number of purchases, just as an example, plotted over time. The original ranges from about 30 to 70, and then we get the scaled time series data, so the range decreases to, you know,

zero to 1.4 instead of 30 to 70. So, super important. The next step is to take that scaled data and quantize it. Again, there are different approaches you can take here, but what Chronos does is take the scaled time series data, which is what we're visualizing up here, and create a time series of fixed tokens. They're using percentile binning, so the tokens can really only go from 1 to 100. And the really key point

of both of these steps is that they make it easier to represent the values as a fixed set for the LLM. If we skipped all of that, it would just be a lot less performant. So these are really important steps, and as I mentioned before, they come back at the end, when this process gets reversed. Yeah, and SallyAnn, we have a brief question; I know you're in the middle of it. Someone asked: they understand tokenization of words for a vocab, but can you

elaborate a bit on the tokenization of time series? Maybe just a little example: if you're given, I don't know, prices, how does that get tokenized through these steps? Let's go to the paper, maybe; I think there's a good example of it in there. Let's see, where is it? Sorry, y'all, let me see if I can find it. Yeah, no problem. While SallyAnn looks for that: I feel like the tokenization of words has become so logical to us,

and the sequence is already understood because of whatever context that sentence is put in. And this is also why LLMs traditionally aren't very good at regression tasks: it's difficult to create context or meaning behind purely numeric values. 100%. I think what we'll do is this: I know there's a visual in that paper somewhere that I'd love to show, so while you're showing your research I'm going to find it, and then we'll circle back to this question at the end, because I think it

would be helpful to show. Okay, perfect, so we'll circle back to your question soon. Oh, I'm clicking the wrong direction here. All right, so this is just breaking down everything we covered in a little more detail. Chronos enhances the traditional language model frameworks by exploiting the sequential similarities between language and time series. These are the core components we just talked about, the scaling and quantization. But, excuse me, one thing that I thought was really interesting was the fact that Chronos doesn't take

any of the temporal data into consideration, and I feel like that's something that traditionally is super important for time series, but Chronos ignores all those indicators. Amber, I'd love to hear your thoughts on that, because I know you spent some time playing around with this. Any ideas on the differences that could cause between Chronos and your traditional time series models? Sorry, which part, SallyAnn? Oops, the temporal features, the exclusion of them. Oh yeah, right. So I think this kind of goes

to use case as well. I do think Chronos did a better job than some previous deep learning time series models, though they're really only benchmarking against other deep learning time series models. But say you have a use case where you only care about a forecast that has an hourly component or a daily component. Take Chick-fil-A, for example: they're closed on Sundays, so they don't really need something that's going to include Sundays in a prediction,

because they're not going to have data for it. Say Chronos is really good at predicting month-over-month sales; that could be good, but if I'm a machine learning engineer and I don't care about month-to-month, and I need it on a much more granular scale, then I'm not going to use it. Or maybe I care about seasonality, or I don't have trends. It's very use-case specific here. And so, you know, what

we might get is LLM leaderboards for time series models. Yeah, it's a really interesting approach. I think we'll get into that a little more later on, what the use cases are and what the unknowns are for this model. Okay, for training, I think I already mentioned this, they use Google's T5. They employ categorical cross-entropy loss, so we're really viewing forecasting as a classification problem to learn these distributions, which is again a very, very interesting approach. It kind of

turns everything around. Back in undergrad and grad school, when we learned about time series, if we had been told that it was going to be turned into a classification problem, my brain might have exploded, but it's the world we live in now with these large language models. Another really important call-out for the training is that they enhanced training diversity using TSMix and KernelSynth. I think this is really important to call out, because we see in some of the research that

our founders and other people have done how important it is to have diverse training sets, and to try your best to make sure that the training datasets are not the same as the benchmarking datasets, so that when the model does zero-shot forecasting on new, unknown data, it can actually perform. So it's great to see. These techniques are really interesting; I definitely encourage you to check out the paper and see exactly how they do that.
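For a rough feel of the two augmentation ideas named here, the sketch below mixes existing series with random convex weights (in the spirit of TSMix) and draws a synthetic series from a Gaussian process prior with a composed kernel (in the spirit of KernelSynth). This is an illustrative approximation, not the paper's implementation: the Dirichlet parameter, the kernel choices, and all hyperparameters are assumptions, and the input series are made up.

```python
import numpy as np

rng = np.random.default_rng(0)

def tsmix(series_list, alpha=1.5):
    """Blend mean-scaled series of equal length with random convex weights."""
    scaled = []
    for s in series_list:
        s = np.asarray(s, dtype=float)
        scaled.append(s / max(np.mean(np.abs(s)), 1e-8))
    # Dirichlet weights are non-negative and sum to 1 (alpha is an assumption).
    weights = rng.dirichlet(alpha * np.ones(len(scaled)))
    return sum(w * s for w, s in zip(weights, scaled))

def rbf_kernel(t, length_scale):
    """Smooth, slowly varying component."""
    diff = t[:, None] - t[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def periodic_kernel(t, period):
    """Repeating (seasonal) component."""
    diff = np.abs(t[:, None] - t[None, :])
    return np.exp(-2.0 * np.sin(np.pi * diff / period) ** 2)

def kernel_synth(n_points=64):
    """Draw one synthetic series from a GP prior with a composed kernel."""
    t = np.arange(n_points, dtype=float)
    # A product of valid kernels is itself a valid kernel.
    cov = rbf_kernel(t, 20.0) * periodic_kernel(t, 12.0)
    cov += 1e-6 * np.eye(n_points)  # small jitter for numerical stability
    return rng.multivariate_normal(np.zeros(n_points), cov)

# Two hypothetical source series on very different scales.
t = np.arange(48)
seasonal = 10 + np.sin(2 * np.pi * t / 12)
trending = 100 + 0.5 * t

mixed = tsmix([seasonal, trending])
synthetic = kernel_synth()
print(mixed[:5])
print(synthetic[:5])
```

Scaling each series before mixing keeps one large-magnitude series from dominating the blend, which is why the mixing step operates on scaled sequences.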

TSMix blends scaled sequences from existing series, and KernelSynth creates synthetic series from Gaussian processes. It's really interesting to see how they employed those, and that's how they got their training dataset. For the forecasting approach, the model is fed context tokens and generates a sequence of sampled future tokens; that was the last image in the visual we looked at before. These are then dequantized and unscaled to get the final prediction. So, you know, they do all the scaling to

get the tokens into these finite values, and then we have to reverse that so we can get back to the original scale of the data we're looking at. A really interesting approach there. So that's the Chronos framework at a high level. I'll pass it to you, Amber, and we can get into the research that you've done and into understanding how it performs. Yeah, we also have a few questions, so let's maybe have a bit of a discussion here,

and then, SallyAnn, I'll also have the paper up, so if you find that example, we can show the one that Nesh asked about. So, Abas asked: is Chronos good for intermittent time series? For some of these questions, I'll just show how to play around with it. I bet if you ask the Chronos team versus someone who does intermittent time series, the answer may vary. I'm sure you could get it to work; I'm showing two

different examples for the granularity of the time that's being looked at. Jazz asked: would it work if you have missing values, NaNs, in the data sequence? SallyAnn, did that come up at all for you? I know with missing data there are ways to get around it, obviously, with traditional techniques like smoothing or different EDA approaches, but I'm not sure if you came into contact with NaNs in the data sequence.

Yes, they did. They employed PAD tokens; I forget what it stands for, but they essentially use this PAD token to pad time series of different lengths and to replace any missing values. They also used end-of-sequence tokens to denote the end of the sequence. So I think those two techniques that they mention in the paper are probably how they overcome any NaNs in the data. Awesome. And then the next one, well, he pretty much mentioned something that we're going to talk

about more, but this is absolutely correct. The training data is quantized and binned, and forecasting becomes classification, which, SallyAnn, like you mentioned, is definitely not how we were taught time series analysis. That can mean you're not going to forecast novel values, and that's very true. It almost goes a little bit with Pan's question: is it valid for multivariate time series? Which is interesting, because I think what

some discussions are saying is that if you apply deep learning and apply this LLM-like layer, it could be good for multimodal use cases. So it's going to be less good for the things you really like doing with traditional statistical time series forecasting. I don't think these techniques should try to beat time series at its own game. But in terms of research, and in terms of expanding capabilities, that's really what it's about. There are some big claims for these LLM time

series methods, but most of the time the claims fall short of traditional methods. And this is what we were discussing, SallyAnn: there could be additional things that you get from using something like this versus traditional methods. It's not going to cost less money, we can say that, to use these methods. Yeah, it's really interesting. Just to clarify that last question we got, how does it become a classification problem: they talk a bit about their

objective function there, and how it really works is that they're essentially trying to minimize the cross-entropy between the predicted distribution and the distribution of the quantized ground truth, in a sense. So it's performing regression via classification, not just a full classification problem. I would say that's essentially what it is. They're explicitly trying to minimize the cross-entropy between them; it's not trying to see that this bin is closer to that bin, it's just trying to understand that distribution. So hopefully

that adds a little more clarity on how it's doing it by classification. Yes, awesome. I realized that was a question and not a comment; glad you said that, SallyAnn. Awesome. So SallyAnn just went through the tokenization, the training, and a bit on the inference, the processes for how they're tackling it. Again, this is kind of the next step for the language of time series, if you will. And so what I did, and anyone could go to the

Chronos forecasting GitHub, and the first thing I want to do, and we've talked about this in how to read AI research papers effectively: once you understand the paper, once you can compare the techniques, and the audience is already asking really great, relevant questions for their own use cases and the traditional use cases they've seen, the next step is to just start testing. And if a team doesn't provide the ability to recreate their experiments, I just don't really believe anything that goes on in the paper.

For the paper put out by this team, the Amazon team did put out the model, so you can run your own benchmarks. But yeah, anytime someone's talking up their own results, either reproduce them or wait and see if someone else in the field is going to reproduce them for you. I am running on GPUs. I did try it on CPU; it worked the first time, and then I ran out of space. So if you want to run this, I would

recommend using a GPU for it. You don't need the GPU for the ARIMA version of the experiment. For Chronos, they have these different models; obviously they talk about the tiny one, which is 8 million parameters, and the large, which is 700 million parameters. It is pretty amazing that we have models this large for time series. Five or six years ago, I would not have expected models this size for time series, and it's still becoming more and more effective. So it is

really cool to see this research being done. I used the small model here, so 46 million parameters, and the example they had was passengers, I think passengers on planes over time. This was just the example dataset they used. So this is passengers over a time period, and you obviously see a trend, you see the seasonality, you see an increase, and the question is: can Chronos, the small model, predict this next peak? And so

it does do a good job. Now again, this is the example that Amazon put in the GitHub notebook, so the first step is being able to recreate what Amazon showed, and okay, I could recreate that. The next step is comparing on a different dataset. A popular open-source forecasting dataset is the Walmart sales forecasting dataset. There's a lot of really good work done on this on Kaggle; I believe it was a Kaggle competition. I got this data from this

GitHub notebook. This person really dives in; the notebook is huge, with machine learning workflows and traditional cleaning and time series techniques, so if you want to run that, you can. I just ran the results for it, and it's not computationally dense, so you can run this notebook yourself. Looking at the Walmart data, essentially we just care about the weekly sales forecast over time, which looks like this. You do have some periods of, you know, negative sales, not

many. Walmart is making a lot of money, and this is, I think, 2012 data, so it trends upwards here, just to give an idea of the range. So let's predict the next, I think I have a prediction length of just 25. If you want to expand it further than a prediction length of about 60 points, it's going to break, so just note that. Predicting the next 25 or 50 is fine, and a good number of samples is 10 to 30

samples here. So I ran the forecast, and just to give an understanding of how much data there is compared to what's being forecast, this is the forecast portion. I'm going to zoom in, but just so you understand how much compute is going into predicting the next 25 points: it's a lot of compute to get this prediction. Now, this is over a long period of time; this is each week over, I think, a few years of data. And so when

we run Chronos, this is the very end of our data, and when we run the Chronos prediction it actually looks pretty good. Just looking at it anecdotally, it's like, okay, it actually does a decent prediction. The prediction interval might be a little high, given that this is essentially money, the value of sales. The forecast is definitely within those bounds, and it looks pretty good. But then we have to actually compare it to reality, and

so when we overlay the actual data, the difference is about half the sales that were made in this time. For the compute and for the process, it's fine. But when we run the ARIMA notebook, just to see how close that is, these are much higher numbers; I mean, the actual versus predicted is pretty crazy, and if I smoothed this out to show the actual residuals, it would be very, very close. So this is just

a little toy example with some real-world data. Overall, Chronos would not win the Kaggle competition; that is, I think, sadly the main thing to say. And SallyAnn, if there's anything we want to get into here, we can; otherwise we could either go back to the paper or talk about some of the discussions around Chronos. There was another GitHub repo, which I didn't have time to run through, but I saw it posted. It's,

I believe, from Nixtla, that's the company, and they found that Chronos is about 10% less accurate and 500% slower than classical statistical models. You can run these experiments yourself; they did provide the notebook. I haven't been able to go through it, but a lot of people agree that it's going to be a lot slower, and mostly these models are about fast inference. And the accuracy, we kind of just saw: yeah, that's probably at least 10% less accurate. Yeah, totally. Actually, this

kind of reminds me, I have a slide of community impressions. Oh yeah. This is my new favorite segment. If you were here for the Claude 3 paper, that's when I started pulling things from the community, and I'm really excited to have these, because I think it's a really good pulse, and it calls out some of the things that, sorry, I keep doing that, that we've brought up. So one thing, and I think this is kind of shown in the

sentiment of some of the questions we've gotten here: this person says, am I missing something? We tokenize text because it isn't numbers, and now we're tokenizing numbers into less precise numbers; what is going on with this? I thought that was a great call-out, because at first it does seem counterintuitive to do this. So it counters my initial remark that this makes perfect sense, which I thought was interesting. There is one specific, oh, this one was really classic, I think:

it said that what they really should have said in this paper was, "we used LLM techniques to see if time series forecasting gets better; so far, not really." That nails it perfectly. And this is the real one I was looking to show: this person calls out the fact that you get comparable results, but look at the resources you have to use, trading in the CPU-based approach for GPUs and these massive models. From a cost perspective, that just doesn't seem realistic, and I think

that's the point you were just getting at, Amber: they're just so large, it takes so much, and it's going to have latency and all this, so it doesn't really feel like we're quite there yet. Something I also thought was interesting was that somebody called out the lack of interpretability. That's something that always sticks with me, because right now we don't have true explainability for large language models; we really don't know exactly how they're coming to their conclusions. So when you have a

lot of money on the line, like you typically do with a forecasting use case, choosing a model that you can't really interpret is something that definitely stands out to me. And this is also an interesting call-out, where I think it counters everything that you and I have been saying: for some people, if they can pay a price to get better results, they'll do it. So, really interesting mixed results from the community, but I just wanted to call out the

GPU comment, since that was your note there. Yeah, no, those are exactly the four takeaways that I had written down from people: less accurate, slower, less interpretable, and more money. The interpretability is, I think, really a key area, because with stats you can essentially derive what's going on from what you're seeing, and know how each thing is predicted and why. And I think that's where research could get really cool around language models,

because like the same way that you could ask a data scientist to say like what like what's happening here and they have to figure it out um an LLM could do that when provided the data so it's not going to be interpretable from a like statistics perspective like not from a derivation perspective where you can go in and figure out why this is happening it is going to it can be interpretable from an observability um perspective like where you can ask the model why it's you know predicting an increase um or why it's not

increasing or decreasing at all because maybe it's like yeah well you know if I we just stay here um it's it's a safe bet um and understand like why it's actually making those predictions yeah that's a totally good call out I know we only have a few minutes left we do have a few more questions um for the question about how we do tokenization I couldn't find the visual I thought existed in the paper I thought there was one um but the the scaling and um the quantization that I was discussing in the beginning that's

just the detail on how it's done so we're taking those infinite values and through the scaling and more importantly through the quantization that's how we're getting those fixed values of tokens um so I typed that out there but hopefully that clears things up um let's see we have some in the Q&A how does Chronos compare with Google's TimesFM um that one I don't think I have I didn't come across that in my investigations and while reading the paper um Amber did you happen to come across Google's time they mentioned a few that just wasn't

oh um yeah I I was looking at that one um I didn't see any specific mention on that we pretty much saw like Lag-Llama was very poor performing and was it time um the time one performed pretty well GPT time TimeGPT um some uh GPT4TS yes all these names um yeah so um but um a lot of the like this is coming out now like kind of these benchmarks against each other and again use case specific um someone asked about is there a way to put in features to improve the

Chronos prediction um Salan you looked excited well I think that's kind of the question that you and I were just heading the beginning of like the whole idea of adding context in so I think they didn't really explicitly say that there's a way to add in features but when I hear that I kind of think of adding more context to the prompt so in theory I think it's totally doable but love to kind of hear what what your take on it is yeah yeah it's interesting because the example and I think someone also just asked

for like is there like another um example uh to show I could also I'll send out this notebook to the community um but the example they showed was just like a prediction of number of pass like number of passengers over time which was like a perfect time series sequence um like um you know it had had pretty perfect um components and I don't I couldn't find like where that came from I don't know if it was just uh generated but like that's essentially an example and it only had um you know the the one feature

so would more features help my guess would be yes like there's so many time series examples of leveraging features especially features that have that seasonality component which help with your predictions um but yeah like I mean this one was a zero shot um like kind of the whole point it was zero shot so I would guess any like additional um like thought methods uh would help and any additional features or content would help with the prediction yeah it would be interesting with some of those prompt-based uh like PromptCast like kind of comparing that

and playing around with the prompt that's used there and comparing it I wonder if that could be a good test for us to see if that that theory does edge out without using it um cool I think the the last question in the Q&A at least is what exactly gets assigned a token is it one point or a sequence of data points um I think you're referring to kind of like that initial context um token that gets passed into the model and that is going to be the whole sequence of data points you can kind

of think of it as when you know you're prompting ChatGPT tell me something and then it's going to kind of predict um the next uh token based off of your initial uh question there we're doing the same thing except we're taking time series as our prompt in a way um or our our initial input and then we're predicting the next token which is a data point um from there so that's kind of how it works so we we do the scaled um the scaling and the quantization to get our um token and then we're

predicting the next token that is then dequantized and unscaled to get that final value um so that's a little bit more on that process there and um like if you play around with the model yourself you can um select that prediction length like how much you like how long essentially um of a sequence you want predicted from um your examples and you can select you probably you know change that um uh window size um or you know the number of exam because you could put in the number of examples um and you can put

in the prediction length and so you can also uh you know chunk uh essentially like that data uh I haven't played around with that but yeah it would be interesting to see like because it correctly predicted like the next sequence for the example they had with those passengers like number of passengers um but if it was only given like the you know half a sequence um like or sorry half a like a period um would it be able to do that like if you stopped at the last amplitude would it be able to do a

full prediction which I really don't know um yeah but that that's an interesting question and that's why I think there's so much research around it as well overall uh you know we have one minute left like overall San like what are your takeaway thoughts from Chronos yeah I feel like I'm gonna I'm gonna share my screen one more time I should have uh should have kept it up here but my final takeaway is really um the fact that no I do not think um is it sharing I can't get to work um no I don't

think Chronos is gonna reign supreme right now I think there's a lot of work that needs to be done in uh kind of this field to understand how we can uh more I think efficiently really um leverage LLMs I think they're just way too costly the performance isn't edging out something that's kind of simple and straightforward that everybody's been using for a really long time so it's exciting it's fun to see these new models and new applications come out um but it's definitely not there yet in my opinion yeah I agree um I think I'm

glad we read the paper I'm glad we experimented with it I think it's really cool seeing all this research being done um by teams that can afford like all the computation costs and they're putting out research on it so it's really neat to see um the claim of like it not sacrificing accuracy I I don't believe that I think accuracy is sacrificed compared to traditional methods and if uh and it really all depends you know when you see these papers coming out look at the benchmarks being used yeah um because right now it's really cool

research I love seeing it do I recommend people change out their forecasting models with something like this no but if you have the compute you could you could play around and see absolutely yeah and I'm going to post um one more thread it's um our our Aparna our founder did more research on LLMs and I think she has a TL;DR there that was like nope don't trust your stock prices with LLMs so read that out if you want more context but yeah Amber I think you summarized it perfectly awesome thanks sanne thanks everyone for

joining if you have additional questions you can ask them in our Arize Community Slack absolutely thanks everyone thanks everyone bye
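
The tokenization answer in the Q&A (scale the series, quantize each point into a fixed set of tokens, then dequantize and unscale a predicted token to get a value) can be illustrated with a minimal sketch. This is not the paper's code. The function names, the mean-absolute-value scaling, the uniform bins, the bin range of -15 to 15, and the bin count of 4094 are all assumptions chosen for illustration, and the passenger-style numbers are made up.

```python
from bisect import bisect_right


def mean_scale(series):
    """Divide by the mean absolute value (assumed scaling scheme)."""
    scale = sum(abs(x) for x in series) / len(series)
    if scale == 0:
        scale = 1.0  # avoid dividing by zero on an all-zero series
    return [x / scale for x in series], scale


def make_bins(low, high, num_bins):
    """Uniform bins over [low, high]: returns bin centers and inner edges."""
    width = (high - low) / num_bins
    centers = [low + (i + 0.5) * width for i in range(num_bins)]
    edges = [low + i * width for i in range(1, num_bins)]
    return centers, edges


def quantize(scaled, edges):
    """Map each scaled value to a token id; out-of-range values land in the end bins."""
    return [bisect_right(edges, x) for x in scaled]


def dequantize(tokens, centers, scale):
    """Map token ids back to bin centers, then undo the scaling."""
    return [centers[t] * scale for t in tokens]


# Hypothetical context window, one token per data point.
series = [112.0, 118.0, 132.0, 129.0, 121.0, 135.0]
scaled, scale = mean_scale(series)
centers, edges = make_bins(-15.0, 15.0, 4094)  # assumed range and bin count
tokens = quantize(scaled, edges)
restored = dequantize(tokens, centers, scale)
print(tokens)
print(restored)
```

In this sketch each data point becomes one token, and the whole tokenized window plays the role of the prompt. A model would predict the next token id, and `dequantize` would turn that id back into a value on the original scale, with a rounding error of at most half a bin width times the scale.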
