China just released a new large language model that changes everything because it cracked one of the holy grails of AI. I think the DeepSeek R1 breakthrough will go down in history as one of the most important moments in artificial intelligence, and in this video I'll explain why, what this breakthrough could mean for American tech giants like Nvidia, Microsoft, and Google, and how it could drastically change our daily lives in the very near future. Your time is valuable, so let's get right into it. On January 20th, which is the same day that Donald Trump was

inaugurated, China released DeepSeek R1, a breakthrough large language model that sent shock waves across the entire AI industry. "Let's talk about DeepSeek, because it is mind-blowing and it is shaking this entire industry to its core." "We should take the development out of China very, very seriously." "What we found is that DeepSeek, which is the leading Chinese AI lab, their model is actually the top performing, or roughly on par with the best American models." "If the United States can't lead in this technology, we're going to be in a very bad place geopolitically." Even

though DeepSeek R1's performance is on par with Silicon Valley's best AI models, like OpenAI o1 and Google Gemini, there are three key factors that elevate it far above them. First, it was trained for around $5.5 million, or roughly 2.75% of the $200 million that it cost OpenAI to train GPT-4. As a result, DeepSeek's API pricing is around 25 times cheaper than OpenAI's. Or, to put it in a different perspective, DeepSeek's budget is roughly 100,000 times smaller than the budget for Project Stargate. Second, DeepSeek achieved these results in under 3

million GPU hours over roughly 2 months, while models like Llama took Meta Platforms over 30 million GPU hours to train and fine-tune. And third, DeepSeek was allegedly trained using Nvidia's H800 GPUs, which were built with reduced capabilities to comply with trade restrictions on sales to China before being banned by US sanctions altogether. By the way, I think those sanctions have backfired in a big way and have only made China more clever and resourceful than if they had access to Nvidia's best chips in the first place. Either way, what makes DeepSeek R1 so compelling

isn't just its performance. It's that a small Chinese company with fewer than 200 employees could spend less than $6 million to achieve it. And with that come some pretty tough questions, like: Why is America spending $500 billion on Project Stargate? How come Nvidia is worth around $3 trillion, or nearly 10% of America's entire GDP? And what happens when not just every country or tech company, but every person, can access the most powerful AI on Earth, essentially for free? To answer these questions, we need to understand how DeepSeek achieved these results in the first place.
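As a quick sanity check on the comparisons above, here is a minimal Python sketch that recomputes the ratios. Every figure is simply the number quoted in this video, not an independently verified value, and the variable names are made up for illustration.

```python
# All figures are the ones quoted in the video; none are verified here.
DEEPSEEK_TRAINING_COST_USD = 5.5e6   # "around $5.5 million"
GPT4_TRAINING_COST_USD = 200e6       # "$200 million"
STARGATE_BUDGET_USD = 500e9          # "$500 billion"
DEEPSEEK_GPU_HOURS = 3e6             # "under 3 million", so an upper bound
LLAMA_GPU_HOURS = 30e6               # "over 30 million", so a lower bound

# DeepSeek's training cost as a fraction of the quoted GPT-4 cost.
cost_share = DEEPSEEK_TRAINING_COST_USD / GPT4_TRAINING_COST_USD

# How many DeepSeek-sized training budgets fit into Project Stargate.
stargate_multiple = STARGATE_BUDGET_USD / DEEPSEEK_TRAINING_COST_USD

# How many times more GPU hours the quoted Llama figure is.
gpu_hour_multiple = LLAMA_GPU_HOURS / DEEPSEEK_GPU_HOURS

print(f"Share of GPT-4 training cost: {cost_share:.2%}")
print(f"Stargate budget / DeepSeek budget: {stargate_multiple:,.0f}x")
print(f"Llama GPU hours / DeepSeek GPU hours: {gpu_hour_multiple:.0f}x")
```

Because the GPU-hour figures are bounds rather than exact counts, the last ratio should be read as "at least" that multiple.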

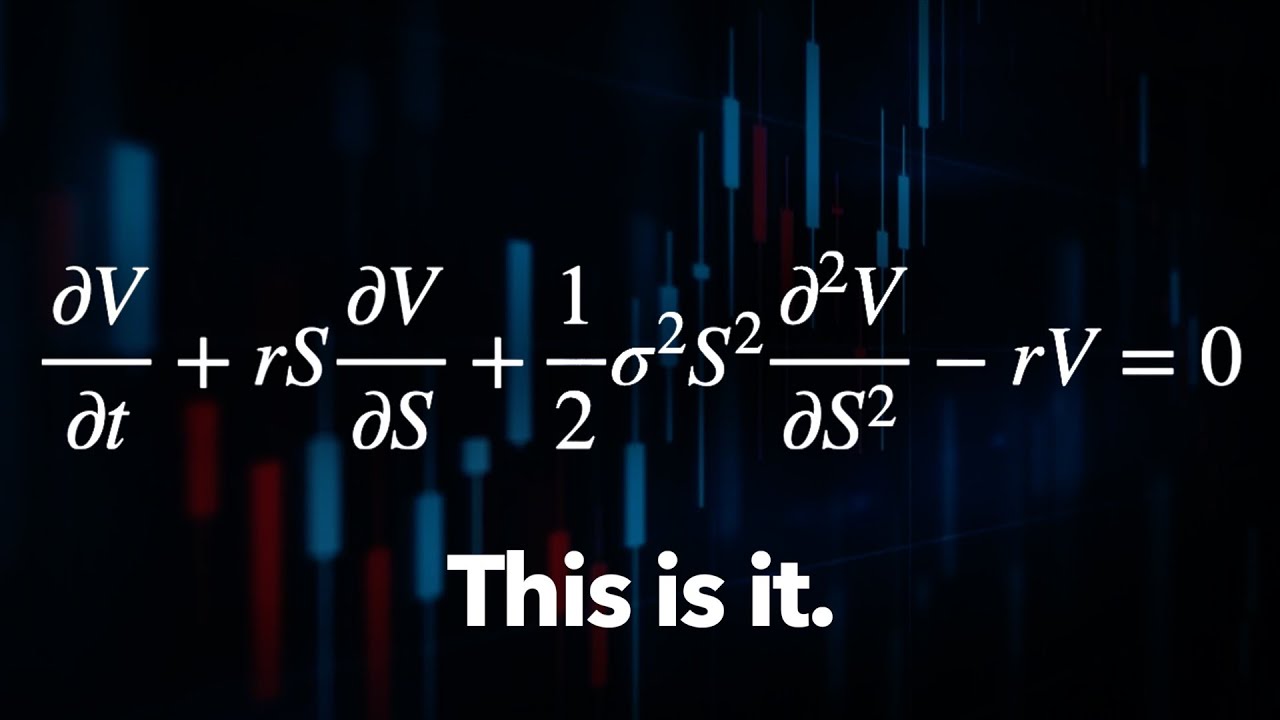

I spent the last week working my way through the DeepSeek-V3 technical report and the R1 reinforcement learning paper, so let me break it all down for you. First, while most AI labs use 32-bit floating-point numbers, or FP32, DeepSeek developed a few clever math techniques to use 8-bit numbers to do most of the heavy lifting and only use FP32 where it's needed. The big trade-off for using 8-bit numbers instead of 32-bit is lower precision, but they save a massive amount of memory, which means needing way fewer GPUs overall. Another breakthrough

comes from the way that DeepSeek predicts tokens. Most transformer-based large language models predict the next token one at a time, but DeepSeek can predict multiple tokens while still being 85 to 90% as accurate as other models. So again, they're sacrificing some accuracy to effectively double their inference speed. In my opinion, one of the biggest breakthroughs is what DeepSeek calls multi-head latent attention, or MLA. That's quite the mouthful, so let's work through this one together. When you compress an image, you throw out any data that you decide you don't need in order

to save on memory. The more you choose to throw away, the more memory you save, but if you throw away too much data, or the wrong data, you can affect the overall quality of the image. That means that there's a sweet spot for data compression, where you can save a good amount of memory while still keeping the data quality sufficiently high. DeepSeek's MLA system is able to compress tokens first, which makes them use far less memory, and then train on the compressed values. That's actually a huge deal for two separate reasons. First, one of

the reasons that AI models need to run on tens of thousands of GPUs at once is that transformers store a huge number of tokens in memory, way more memory than what's on a single GPU. So this MLA system provides a massive boost in memory efficiency, which again means the model needs fewer GPUs overall. But second, compressing the data first means that the DeepSeek model itself is only learning on the actually important parts of the data. Since all the noisy parts just got thrown out during compression, none of the computational capacity is wasted on useless

data, which provides a huge boost to the model's performance, not just its memory use. DeepSeek's papers highlight a few more important optimizations to different steps, like how to route a specialized problem to a smaller expert model and use its answer without sacrificing performance, or how to share parameters between the main model and the smaller mixture-of-experts models. The end result of stacking all of these techniques is around a 45x efficiency improvement, which is why DeepSeek's API pricing can be so cheap compared to OpenAI's and Anthropic's. There's one more huge breakthrough that

I need to talk about, because it's one of the holy grails of AI, and then we can get into what all this means for the $500 billion Project Stargate, Nvidia's $3 trillion valuation, and the AI landscape as a whole. But first, let me point out how fast the AI market was already expected to grow before the entire industry starts incorporating these breakthroughs into their own models. According to Market.us, the global artificial intelligence market is expected to more than 8x in size over the next 8 years, which is a compound annual growth rate of 30%

through 2033. But many of the companies building next-generation AI applications are not publicly traded. Think about the '90s and early 2000s: companies like Amazon and Google went public very early in their growth cycle, but today they're waiting an average of 10 years or longer to go public. That means investors like us can miss out on most of the returns from the next Amazon, the next Google, the next Nvidia. That's where the Fundrise Innovation Fund, which is making this video possible, can help you. They give you access to invest in some of the best tech

companies before they go public. Venture capital is usually only for the ultra-wealthy, but Fundrise's Innovation Fund gives everyday investors access to some of the top private pre-IPO companies on Earth, with an access point starting at $10. They have an impressive track record already, investing over $110 million into some of the largest, most in-demand AI and data infrastructure companies. So if you want access to some of the best late-stage companies before they IPO, check out the Fundrise Innovation Fund with my link below today. All right, there's one more massive breakthrough from

DeepSeek that we need to talk about, because it's considered one of the holy grails of AI. DeepSeek figured out a way to have the model develop reasoning capabilities by itself, meaning nobody explicitly programmed the model to generate long chains of thought, verify its work step by step, or allocate more compute power to harder problems. The DeepSeek R1 model naturally converged on these things based on a clever set of reward functions for providing accurate answers in a format that encourages logical, step-by-step thinking. Said another way, the developers gave DeepSeek a ton of

problems with objective solutions and told it what a well-thought-out answer looks like, but DeepSeek taught itself to reason in a way that gets those answers, not DeepSeek's developers. So this is AI improving AI, and it matters because this breakthrough addresses the single biggest problem with transformer models today: hallucinations. Most large language models generate one token at a time, and they try to have each token or word make sense with the words that came before. That makes it very hard for LLMs to notice their mistakes, let alone backtrack and correct them.
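To make the reward idea described above a bit more concrete, here is a minimal Python sketch of a rule-based reward that scores an answer on two things: whether it is correct and whether it follows a step-by-step format. This is an illustration, not DeepSeek's actual code. The `<think>` and `<answer>` tag layout, the function names, the exact-match comparison, and the equal weighting of the two scores are all assumptions made for this example.

```python
import re

# Assumed output layout: reasoning inside <think>...</think>, followed by
# the final result inside <answer>...</answer>. Hypothetical, for illustration.
FORMAT_PATTERN = re.compile(
    r"^<think>(.+?)</think>\s*<answer>(.+?)</answer>\s*$", re.DOTALL
)
ANSWER_PATTERN = re.compile(r"<answer>(.+?)</answer>", re.DOTALL)


def format_reward(completion: str) -> float:
    """1.0 if the completion shows its reasoning in the expected layout."""
    return 1.0 if FORMAT_PATTERN.match(completion.strip()) else 0.0


def accuracy_reward(completion: str, reference: str) -> float:
    """1.0 if the extracted answer exactly matches the known solution."""
    found = ANSWER_PATTERN.search(completion)
    if found is None:
        return 0.0
    return 1.0 if found.group(1).strip() == reference.strip() else 0.0


def total_reward(completion: str, reference: str) -> float:
    """Sum of both signals; equal weighting is an assumption."""
    return accuracy_reward(completion, reference) + format_reward(completion)


good = "<think>2 + 2 is 4.</think> <answer>4</answer>"
wrong = "<think>2 + 2 is 5.</think> <answer>5</answer>"
bare = "4"

print(total_reward(good, "4"))   # correct and well formatted
print(total_reward(wrong, "4"))  # well formatted but wrong
print(total_reward(bare, "4"))   # no tags, so nothing can be scored
```

Notice that nothing in the reward says how to reason. It only checks the outcome and the layout, which is the sense in which the model has to find the reasoning strategy on its own.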

But everything changed when AI models like o1 switched to chain-of-thought reasoning, since breaking the inference process into multiple steps gives the AI a chance to check its answers, see what's working, change its approach, and even ask a more specialized AI model for help if it has to. And the results speak for themselves: both OpenAI o1 and DeepSeek R1 top the charts for a wide variety of benchmarks, except DeepSeek costs a tiny fraction of the price. And now that you understand how this happened, at least at a high level,

we can talk about what Wall Street is getting wrong right now. To give you a sense of all the carnage, Broadcom and Nvidia stock are both down 17% today. That means Nvidia just lost around $500 billion in market cap. And Vertiv Holdings, which is a company that focuses on power and thermal management for data centers, is down around 30%. I think this will end up being a huge investing opportunity, so let me explain why. Until recently, inference needed a lot less compute than training. Inference costs were basically a function of how many prompts the model received

and the average difficulty of those prompts. But now, things like chain-of-thought reasoning techniques and mixture-of-experts architectures generate a lot more tokens on the way to solving a problem. In fact, it's opened up a whole new scaling law for AI models altogether, because the more tokens a model can use for this internal thought process, the better its final output. And that makes sense: it's like giving a human more resources to do a job and more time to double-check their work. If you want the output to be better, you allocate more

resources. For humans, that means more time, more money, and more access to the best tools and experts. But for AI models, that means better algorithms and more GPU hours. So now there are three ways to scale AI models. The first kind of scaling is called pre-training scaling. That's where the quality of an AI model goes up as you give it more data, more parameters to work with, and more compute power to use. The second kind is called post-training scaling. This is reinforcement learning using more specialized data and prompts, human or constitutional feedback, having the model

practice on synthetic data, and so on. Think of this as the fine-tuning step, where the model learns from its mistakes over time, which again requires a significant amount of compute. And now DeepSeek has proven the power of a third way to scale AI performance, called test-time scaling. That's where the model gets better by spending more time, energy, and tokens on the inference step itself. Should the model provide an answer in one shot, or do some retrieval-augmented generation? Or should it do step-by-step chain-of-thought reasoning, or take a majority vote from

a panel of expert models? What DeepSeek actually just showed the world is the power of this third scaling law. And importantly, this third scaling law still benefits from having more compute, since letting the model generate more of these intermediate logic tokens while it's solving a problem leads to better solutions. That means DeepSeek won't be the end of Project Stargate or lower the demand for Nvidia's GPUs. What it does mean is that companies should expect way more for their AI investments because of this new scaling law, since they now know they can squeeze way

more performance out of the same amount of compute. Companies like Microsoft and Google are not stupid. They're going to add mixed-precision training, multi-token generation, multi-head latent attention, and the right reward functions to reproduce DeepSeek's step-by-step reasoning on their models. That's how DeepSeek really did just change the AI landscape forever, why this moment really is so important, and why I spent so much time covering these innovations in this video. But companies like Nvidia aren't stupid either. Nvidia is down 17% today, but Jensen Huang has been talking about test-time scaling well before

this DeepSeek breakthrough took the world by storm: "We now have a third scaling law, and the third scaling law has to do with what's called test-time scaling. Test-time scaling is basically when you're using the AI, the AI has the ability to now apply a different resource allocation. Instead of improving its parameters, now it's focused on deciding how much computation to use to produce the answers it wants to produce. Reasoning is a way of thinking about this. Long thinking is a way to think about this."
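One simple way to picture spending more computation at inference time is majority voting: ask for several answers and keep the most common one. The Python sketch below illustrates that general idea and is not a description of how o1 or DeepSeek R1 work internally. The `make_toy_sampler` helper and its canned answers are hypothetical stand-ins for a real model call.

```python
import itertools
from collections import Counter
from typing import Callable, Sequence


def majority_vote(sample_answer: Callable[[str], str],
                  prompt: str, n_samples: int) -> str:
    """Draw n_samples answers and return the most frequent one."""
    answers = [sample_answer(prompt) for _ in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]


def make_toy_sampler(canned: Sequence[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for a model: replays canned answers in order."""
    stream = itertools.cycle(canned)

    def sample(prompt: str) -> str:
        return next(stream)

    return sample


# A made-up model that is right ("42") most of the time but not on try one.
CANNED_ANSWERS = ["41", "42", "42", "40", "42"]

one_shot = majority_vote(make_toy_sampler(CANNED_ANSWERS), "question", 1)
five_votes = majority_vote(make_toy_sampler(CANNED_ANSWERS), "question", 5)

print(one_shot)    # a single sample: whatever came out first
print(five_votes)  # five samples: the most common answer wins
```

The trade-off is visible in the call itself: five samples cost five times the inference compute of one, which is why this kind of scaling still rewards having more GPUs.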

"Instead of a direct inference or one-shot answer, you might reason about it. You might break down the problem into multiple steps. You might generate multiple ideas, and your AI system would evaluate which one of the ideas that you generated was the best one. Maybe it solves the problem step by step, so on and so forth. And so now test-time scaling has proven to be incredibly effective." There's something called Jevons paradox, which says that as something gets cheaper and more efficient, the falling costs create much more widespread demand, causing total spend

to rise, not fall. When air conditioning got cheap enough, everybody got one, and overall electricity demand went up, not down. When computers got cheap enough, everybody got one, and overall hardware and software spend went up, even though the cost per unit of compute is drastically going down over time. And now the same is true with AI. Since DeepSeek's API costs 25 times less than OpenAI's for similar performance, that doesn't mean 25 times less spend on AI. It means 25 times more performance, which makes every dollar spent on AI that much more impactful going forward. And

that means we should expect overall AI spend to rise as smaller companies and even individuals start leveraging it more and more. So honestly, as a longtime Nvidia shareholder and AI investor, I'm not worried one bit. I think this is a huge overreaction by the market and a huge opportunity to invest in some of the best companies on Earth at a steep discount, the same companies that I've been covering for years now. And as for our daily lives, I think we're one step closer to the AI apocalypse. What's that? Our robot overlords want me to cut

that? And as for our daily lives, I think we haven't even scratched the surface of the benefits that DeepSeek's breakthroughs have unlocked, because they can be applied to much more than just chatbots. From robots and self-driving cars to genomics and medical diagnostics, and even physics simulations and weather prediction, many different areas of AI can benefit from the huge innovations that DeepSeek just brought to the table. And when they do, I'll be right here to break it all down for you. So if you feel I've earned it, consider hitting the like button and subscribing

to the channel. That really helps me out, and it lets me know to make more content like this. Either way, thanks for watching, and until next time, this is Ticker Symbol: You. My name is Alex, reminding you that the best investment you can make is in you.