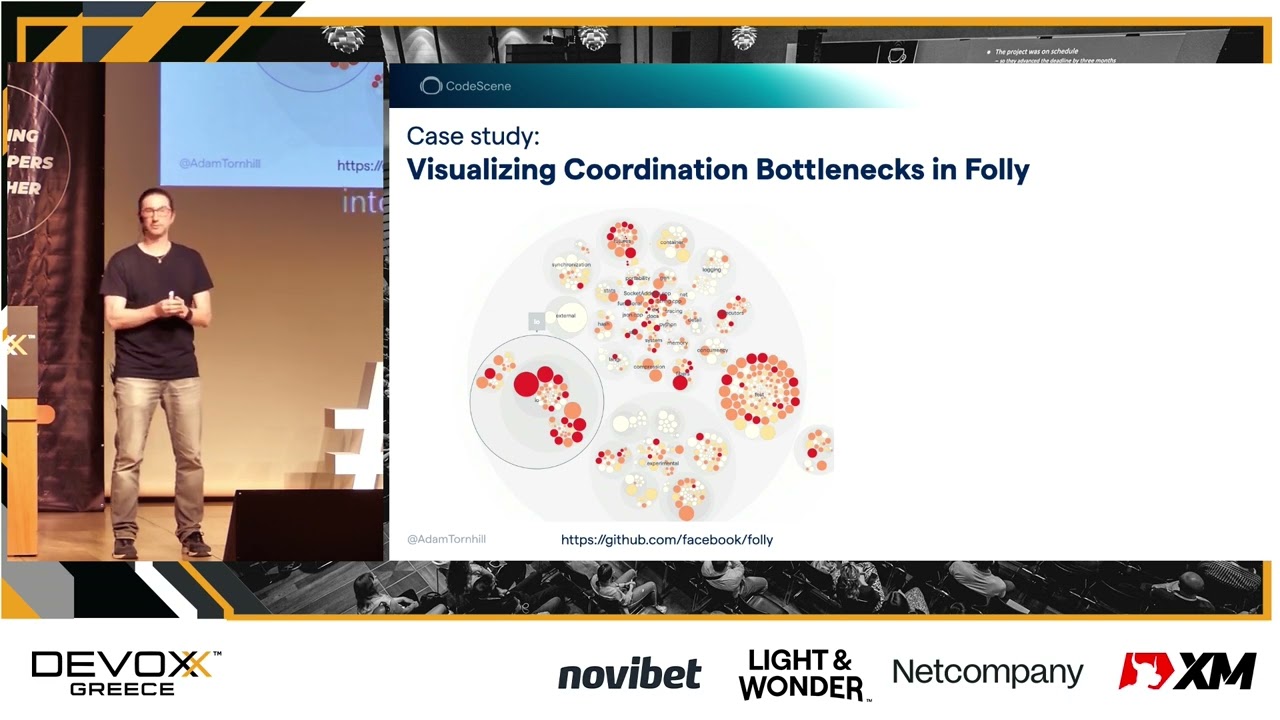

[Music] good afternoon everyone and welcome to this session on prioritizing technical depth as if time and money matters and this is a broad topic so let's Jump Right In and have a look at some of the challenges we need to balance when building software at scale and these challenges are well captured in something known as Lehman's losses of revolution and there are multiple laws and I just want to present two of them for you here and see if they resonate with your experience so the first loss of revolution is the law of continuing change and this is where Lehman claims that the system has to continuously adapt and evolve or it will become progressively less useful over time is this something you recognize yeah this is the very reason why we keep adding new stuff where we keep adding new features to our existing code bases because a successful product is never done what I want you to do now is to notice that there is some kind of tension some kind of conflict to the second law of software Evolution and that is the law of increasing complexity and this law claims that when our system evolves its complexity will increase unless we actively try to reduce it what's so challenging here is that when we fail to reduce complexity what's going to happen is that responding to change is becoming harder and harder and harder so this is a vicious circle and the problem is that if we end up in that Circle we might not even notice because what we tend to see are symptoms not the root cause and to make it worse we tend to see different symptoms depending on who we are what our role is in the organization so as product people or technical leaders what we tend to see is this we tend to see symptoms on the product roadmap execution right so if we could do a feature in one week a year ago it might take us one month now right and these cycle times get longer and longer they also tend to introduce unpredictability so software estimation was always a black art but with longer and longer lead terms increasing complexity it becomes virtually impossible what we also tend to see is an impact on the team so we tend to see a pretty high staff turnover because working in a extremely complex code base is no fun we also tend to see key personal dependencies so you know those parts of the code that only that one person knows and that one person happens to be on vacation for two weeks right finally what our end users tend to see is this they tend to experience bugs that there's an impact on the external quality of our code and the real root causes tend to remain hidden or back and obscure because code and Technical depth in particular lacks visibility now if we have a problem like technical depth that can really destroy your business shouldn't we try to bring visibility to it there have been multiple approaches over the year and I want to show you one fairly common approach the first time I came across this was a couple of years ago and the idea here was to use static code analysis technique basically scanning the code looking for potential violations of predefined rules and have a cost associated with each one of those violations and sum it up and you end up with a number on how high your technical depth is has anyone ever tried that few of you let me come up with 20 30 people cool so the first time I came across this was a client that I visited in London maybe 10 years ago and what they had done prior to my arrival was that they had used one of these tools capable of quantifying technical depth and they have taken this tool and thrown it at their 15 year old code base and this tool happily reported that on your 15 year old code base you have accumulated four thousand years of technical debt four thousand years of technical debt let me put that into perspective for you four thousand years ago that was here that's the start of recorded history for the invention of writing so you know when I tell that story I'm always super curious what kind of programming language did I use Fortran lisp on a more serious note I do understand that a lot of that depth probably grew in parallel by having hundreds of Engineers work on the code base right so it might actually be accurate we don't know there's no way of telling right but is it actionable what do we do with that information so what I wanted to show you by explaining this was that quantifying technical depth it might be an interesting exercise it's almost always depressing but it's simply not actionable more important technical depth cannot be prioritized from code alone what we see in the code the time to fix or code smell or fix or flaw that's not the cost of your technical debt that's the remediation time right the actual cost of technical depth is the additional time it takes to work on that code and that's something that's simply invisible in the code itself so we clearly need to look Beyond code here and this is important because we're always going to have this challenge that we saw in Lehman's law that there's always going to be a trade-off between improving existing code versus adding new features and it's a trade-off that's going to shift over time so we do need to prioritize but how can we do that I've spent the past decade trying to address this problem and I've written about it at length in my book software is an x-rays being their latest one and the techniques I use is something I call behavioral code analysis so what is a behavior code analysis well the core ID in behavioral code analysis is it that's it's always about code and people so the code is obviously important but it's even more important to understand how we as a development organization are interacting with the software we are building so if we want to try this out the first thing we need is obviously behavioral data of us as software Developers and the good news are that you all already have all the data you need we're just not used to thinking about it that way I'm talking about this I'm talking about a Version Control System so Version Control is something I've been fascinated with for a long long time and yes I know we all have our odd Hobbies this one happens to be mine and the reason I'm fascinated by Version Control is that for decades we've used Version Control a small lesson overly complicated backup system and then occasionally as a collaboration tool but in doing so we have built up a wonderful data source over how we as an organization have built our code base so Version Control Data is a virtual gold mine that tells the story of our system evolved and this is a data source that we can tap into to prioritize technical depth let's try to make this specific and I want to show you how I use these techniques in a real world code base and this is going to be a code base that some of you might actually carry around in your pocket as I speak because I'm talking about Android more specifically I'm talking about the part of Android known as the platform framework base and this is a huge code base what you see on screen here is roughly 3 million lines of code most of it developed in Java by more than 2 000 developers so this is really a massive code base the visualization that you see is something that I call a hotspot analysis and I'm going to walk you through the visualization so that you know what you see first of all if you look at those large circles the ones that blink on screen right now each one of those circles correspond to a top level folder in that git repo so if this was your code base you would recognize the names of those circles as important subsystems or high level components right it's a visualization that always always follows the structure of your code it's also interactive so I can zoom in to any level of detail that I'm interested in and I can inspect the subfolders and once I get to lowest level of detail I see that each file with source code is visualized as a circle and you see that the circles they have different size so size is used to reflect an interesting property size is used to reflect code complexity so what is code complexity it's basically how hard is that code for a human to understand I'm going to tell you in a minute what I use to measure code complexity but before I do that I really have to point out it's my responsibility to point out that when managing technical depth code complexity is actually the least and I repeat it's the least interesting dimension because complexity has this property that it's only really a problem when we have to deal with it so maybe have overly complicated code but I haven't touched it for years I should probably Focus my attention elsewhere and this is where we can pull out data from our Version Control history and get specific advice on how frequently do we work with that code so I'm going to verse control I'm pulling out things like the number of commits I sum them up and they're more commits to a piece of code the more red it becomes and from a technical deaf perspective the interesting thing is the intersection between these two dimensions because now we're capable of identifying complicated code that we're to work with often and those are our hotspots in code all right I promise to talk a little bit about code complexity and this is a fascinating topic because code complexity in itself is really complicated in fact there's a highly relevant research paper that I've taken a lot of inspiration from it's a pretty old paper but I think it hasn't lost any relevance over the years and what this paper tells us in the first paragraph is basically that we are doomed if we look for a single metric to capture a multifaceted concept like code complexity there is no such thing so what I did instead was that I took a lot of inspiration from the second paragraph here that says that a more promising approach is to identify separate and specific attributes of complexity measure these separately and then aggregate and we decided to call this concept code health because we can now ask questions like we have health face our code and we avoid the whole idea of using a term like code quality which I never really like because it kind of suggests an absolute and the way it works with codehealth is that we identify 20 to 25 different factors depending on program language and these are factors that are known from research to correlate with increased maintenance costs and increased risk for the facts so we sample this separately we stick a probe into any hotspot we look at what comes out we combine weight Aggregate and normalize these values and just to give you an idea on what type of things we look for so these are some very common code health issues that you tend to find in most code bases so we have things like low cohesion so what is that you know it's simply when you have a class and you have stuffed way too many responsibilities into that class so now as a developer when I try to understand that code I need to be familiar with multiple Concepts and there's also the risk for things like unexpected feature interactions which are some of the worst bugs that you can ever have then you have brain classes which are classes that are not only low on cohesion but they also include something known as a brain method brain methods are also known as gut functions and object function is simply this overly complicated functions they have a lot of logic in them they tend to be pretty large in terms of lines of code and they are very Central to the module so that each time you want to add or modify something in the module you end up in a brain method and over time they become even more complicated then we have Classics like copy pasted code nested logic and so on anyway once we have measured all of these things combine them then you can also start to visualize and you can do that by categorizing code into one of three different categories so I typically use colors for this because colors are known to kind of tap into one of the most powerful pattern detectors that we have in the known universe and that's our visual brain so looking at the code base like this I can quickly tell is my code green and healthy meaning it's easy to understand easy to maintain or is it red meaning that I've accumulated severe technical depth in that part of the code now what I find interesting is that those two perspectives that you've seen so far one quality perspective the code of perspective and the hotspot perspective the behavioral dimension none of these are enough in isolation to manage technical debt we need to combine them and the way it works is that using a complexity metric like code Health Wiki identify the part of our code with technical depth adding the human perspective the behavioral perspective with hotspots we know the relevance of each one of these findings and that is what let us prioritize technical depth and you're going to see a little bit more data on this soon but to me this combination is really really key because finding bad code is really the easy part right the hard part is to know which part to fix and this is where hotspots send a positive message and I want to explain this to you so please look carefully because this is probably the most important slide in this session so what you see here on screen is that on the x-axis you have each file in a particular system in this case it's Android and those files are sorted according to the exchange frequency that is the number of commits you have done to that specific file and the number of commits is what you see on the y-axis if you look at this graph you see that it's a very typical Pareto distribution right or power this power law distribution and this is really important because this is not something that's unique to Android this is something I found in every single code base that I've ever analyzed and I probably analyzed around three 400 code bases by now so this seems to be the way software evolves and this is good news to us because what it means is that most of our code is going to be in the long tail so it's code that's rarely if ever touched and that's the part of the code where we can actually live with a certain degree of technical depth we want to know about it because it might be a future risk but it's most likely not an urgent concern on the other hand most of our development activity is in a very small part of the code base the part identified by the hotspots and that's the part of the code where even a minor amount of technical depth can quickly become really really expensive due to the high change frequency all right let's dig a little bit deeper into our Android hotspot the number one hotspot in Android that you saw in the visualization the interactive resultation that I showed you earlier is called activity manager service isn't that a wonderful name right one of my favorites because first we have activity which could be anything then we have two words manager and service and each one of those words in isolation signals a unit with low cohesion now we combine them and we combine that with the fact that about 20 000 lines of code in a single file who wants to refactor this not so many volunteers and that's good because I would strongly advise against it because we're factoring code at this scale is going to be a huge risk right so how can we slay this Legacy code monster would I do when I come across a complex hotspot like this is that I use a technique called hotspots x-ray the way it works is that I take that code I parse it into separate functions and then I look at the git log and I see where do each commit hit over time right and I sum it up so that I get functions so I get hotspots at the function level and this is what I use to get a specific starting point for my refactorings let's have a look at our x-ray of activity manager service we see here that the number one hotspot at the function level is a method called handle message we see in the First Column that it has been modified 98 times right 98 times so that means like at least twice a week some developer is impacted but excess complexity of that function how complicated is it well we can get an ID by looking at the loss column the column that says cyclomatic complexity so for those of you who haven't heard about cyclomatic complexity before cyclomatic complexity is one of those old classic complexity metrics the way it works is simply that it's a function level metric you count the number of logical paths for your functions so if statements loops and so on and you sum them up and they lower that number the better now cyclometric complexity I have to admit it's a not a particularly good metric it has fairly low predictive value but it's very very good at one thing it's very good at estimating the minimum amount of unit tests you need to cover just that function and here in handle message we would need 101 unit tests to cover just that behavior and that shows us something about the complexity that we find there looking at the column in Middle we see that this method contains 500 lines of code that's a lot for a single method isn't it right you would never do something like that would you but still 500 lines of code is much less than 20 000 lines of code which was size of a total hotspot right and 500 lines of code is definitely less than three million lines of code which was size of a total code base so more important we are now at the level we can start and do a focused refactoring Based on data from how we as an organization have actually worked with the code no when talking about technical depth I also want to talk about the people side of it and the reason I want to do this is because getting the people side of software wrong I promise you it has killed more software projects than even Visual Basic as so it's important to uncover the relationship between technical left and people I like to start this part of the talk by talking about Legacy code because Legacy code is related to technical depth but the two are not the same so what is Legacy code there are many definitions out there the definition I prefer is that Legacy code is code that somehow lacks in quality and more importantly it's code that we didn't write ourselves right and that second part is really important so let me share a story with you this is something that happened to me five years ago and I've met it several times since then what happened here was that I was visiting our client I did a workshop for one of their teams we did a hotspot analysis of their code bases and they had two code bases so we analyzed them we talked about them discussed how we could address the findings and then after a while somewhere in the team mentioned that you know what we actually have a third code base as well so I start to think okay should we analyze that one too and everyone kind of looked at each other and Loft a little bit like uh no we don't really have to do that so I said why not because we know the third code base is a mess and I was like yeah we really have to look at it right so we did and we looked at the hot spot we looked at the code Health metrics cyclomatic complexity all that stuff and guess what objectively there was absolutely no difference in code quality between the first two code bases and the third one and you know when you bring up something like that everyone starts to question it that hey maybe the tool is measuring the wrong thing maybe you are measuring the wrong thing right so we had to spend a lot of time actually comparing code samples and after a while everyone was fairly convinced even though they didn't like it that code Base number three was indeed in no worse shape than the other two so why did everyone think that it was such a mess the reason of course was that code Base number three turned out to be developed in a different part of the organization in a team that has since been desponded and this team had simply inherited that code base meaning they were now responsible for piece of code that they didn't write themselves they did not understand the problem domain and they didn't understand the solution so it has much more to do with unfamiliarity than any properties of the code itself and the first time I experienced this I heard it's I mean I experienced the same situation with many teams since then but the first time I saw this I re it really made me think you know if it's that easy to create Legacy code can we build something like a legacy code making a machine what would it take to turn your code base into Legacy code base Let's do an experiment Let's do an experiment on a different code base now I have been torturing uh Android or Java code based so it's only fair I do the same thing to a. net code base this is asp. net MVC core it's a dynamic it's a web application right it's a framework for building Dynamic web applications and it's smaller than Android so this is just 350 000 lines of code and it had had 180 contributors now let me for a moment take on my manager hat let's say that I lose one or two Developers should I be worried of course not I still have 178 contributors right one or two people it really doesn't matter we can keep up the pace no problem unfortunately this is not what reality looks like so what you're going to see now is another Power law curve because software evolution is full of power law curves software evolution is really full with Pareto distributions but this one shows a different thing this is not hotspots this is offer contributions so on the x-axis you have each offer that has contributed code to.

net core and on the y-axis you have the number of lines of code that they have contributed so do you think it makes a difference if the two people are loose are part of the long tail or those two people happen to be at the head of the Curve it probably does right so how can we show that impact how can we visualize the impact of a potential off-boarding it's pretty easy because remember we are in Version Control Wonderland so git knows exactly which offer that wrote which piece of code so this is something that we can accumulate and we can use that to reason about things and acknowledge distribution and knowledge sharing so let me show you an example from asp. net core what happens if just one of the core developers lib so this is another type of visualization it's the same resultation style as we used for the hot spots and all that stuff but now the colors carry a different meaning everything that's blue that's code written by the current team and that means that if let's pretend that we are an organization right if our code is blue it means that somewhere in this room some developer knows each piece of code because they wrote it right then you see that there are different Shades of Gray and these Shades indicate that this is code written by a former contributor so person that has already left so these are like our black holes in system Mastery right and what I can do now using Version Control Data by aggregating it is that they can simulate the impact if that one core developer leaves and this is real data so please have a look to the right here this is the impact in red if just one contributor from 180 leaves so let me rephrase that question let me ask it again should I be worried yes I really should so what would I do if this was a real world situation because there's a lot of code and I simply cannot address all of it as once right so what I would do is that I would prioritize the parts of the code that are likely to need it the most I will look for a combination of three factors so first of all I would look for the hotspot criteria because if we lose knowledge in a hotspot I mean it's a hot spot for a reason it's a hot spot because that code is relevant right code doesn't just get changed because we want to have fun most of the time right it's most likely that if we worked a lot in a part of the code yesterday we're going to continue with that tomorrow right so hotspots relevant if that hotspot also has low code Health High complexity then I know that it's going to be extremely challenging to onboard a new developer in that part of the code and finally if the developer who wrote most of that code is going to leave then I know that I have a real issue so if I tick all these three boxes then I prioritize that part of the code and what I typically would do is that I would take that developer that's going to leave I would pair them up with a developer that's going to stay and I would have them refactor those complex pieces of code together so that we can mitigate an upcoming off-boarding risk and the reason I showed this scenario to you is because I really really want to drive home my point that there is so much more to code complexity than just the code itself in particular social factors like knowledge distribution influence how we perceive a code base and also want to point out that these techniques that I've been showing you today they don't replace anything say maybe speculations and wild guesses rather I view them as a compliment a compliment to your existing expertise your existing experience so that you can focus your attention on the parts of your system they're likely to need it the most and I want to make sure I leave time for questions before I do that if you want to try this out on your own code I have a whole book about these techniques called software design x-rays the tool I've been using to do all these visualizations and measurements is code scene you can try it out for free at codecin. com there's even a free Community Edition that you can use and I blog regularly about this stuff at codeine.

com and my privateblog and I'm thornhill.