design a machine Learning System to recommend artists on [Music] Spotify okay thank you so much s for being here we're really excited to have you uh can you introduce yourself quickly for our viewers yeah I'm excited to be here hi everyone my name is Sid I'm currently a senior machine learning engineer at FanDuel um and you know I spend a lot of my time building out complex systems in ml and excited to be here okay awesome it'll be really interesting to see some of the wisdom that you have about like the end the end system

of building and ml system so all right so let's get right into our question so our question today is design a machine Learning System to recommend artists on Spotify the first thing I want to know is um kind of understand is there a metric that we're using here to uh sort of measure the success or failure of this recommender generally when I think about metric I'm thinking about things like Impressions click rate engagement things like that is there something we're focusing on yeah let's start with with engagement for now awesome um okay so engagement um

how are we defining that um is it usually just you know users kind of clicking on things or what more are we doing here to to gauge the engagement yeah so let's start with just the user clicking on a recommendation of course like as you mentioned you can definitely go deeper than that uh but I think for now we can say that it's a positive recommendation if the user clicked on it and if not that it's not considered to be a good recommendation okay great um so first things first is U you know what kind

of raw data do we have access to um do we need to collect any extra data do we have do we need to think about that within our system yeah it's a really good question um I mean I feel like this is such a crucial part of building a machine Learning System right your algorithm is only as good as your data so you have two main sources of data the first one is going to be the click data so that's you know engagement as we mentioned earlier and the other is going to be metadata about

your users so that's going to be things like um gender age group location um and like previous history awesome um and then now that we kind of have a rough idea of the data um I'm really interested to also understand the raw conditions of the data that we're working with um kind of you know thinking along the lines of how much data massaging we need to do to clean it up and sort of make it edible for our model to consume and start working with it um just initially just kind of thinking about Transformations that

I have to do to to get it into that format um do we have any idea on that yeah so the click data comes in as like a raw stream of basically just Json objects um so every time there's an event uh which is a user clicking uh that Json um object is stored in an object store um and then for the user data uh that's a little bit simpler we just store that in a postr account table um it is uh pii data though so that's something to be careful about got it okay so

for my understanding U I'm just going to kind of summarize so far what what we're looking at um so we're looking at engagement as a metric um to gauge the success rate of our recommender our goal is to be able to successfully recommend um either artists or songs on Spotify um and then the data we're working with uh we essentially have two different sources one is the click data that we're getting in Json format so this is usually streams data that's coming in whenever someone clicks on things and then the second thing we're looking at

is user data so that's usually things like uh metadata about the user their ID um their uh I guess email address but you know I'm not expecting us to use that but age location things like that um so I'm trying to see in my head how we can kind of like join these together and get them in the right format uh to serve essentially as our features for our model um but we'll get to that bit um after we get into a little bit more deeper um so yeah awesome sounds great um so ideally what

we're trying to do here is figure out how we can take these two points um to create our initial data processing pipeline um and then you know fetch what we need to do uh to create our features um and the next question that I had um which uh I wanted to understand a little bit better is are we doing these recommendations in real time or batch what's the Cadence there um so so I think you might need to be a little bit more clear about that um but essentially you need to recommend artists as soon

as the user logs in because they're immediately presented with the recommendations got it um yeah so I can clarify a little bit um I guess what I meant was uh if a user logs in of course we're going to you know give them the recommendations that are more personalized to them um what I was trying to understand is from the model training standpoint model testing standpoint and even the inference generation standpoint uh are we looking at doing all of that in in batch or in real time and I'll explain a little bit behind my thought

process as to why I want to kind of analyze that a bit better um from an overhead standpoint from a compute storage and all the technical standpoint um a batch-based system is generally easier to manage um you're not necessarily doing a lot of Compu intensive stuff in real time within you know a less than a second or anything like that and sending it over um of course can't do it um but the uh the idea here is to make sure that we try to reduce that kind of uh technical complexity if we if we have

to um my understanding here is we could do everything in batch the training and the inferencing or we can do part in batch we can do the training in uh batch and then the actual inferencing in real time or we can do everything in real time as well so everything is possible um the best way I think I would attack this is kind of think along the lines of how is is our accuracy of the recommendations that we're generating how would that change in each case scenario um because if we're able to achieve the accuracy

metric that we set out uh by doing all in batch that's great right we don't have to do too much technical work um and we're getting really good you know accuracy and that can kind of on a very high level be our first generation that we look at and then once we sort of get accuracy metrics there then we can see can we make this a little bit better and we can talk a little bit more towards the end about um sort of doing further iterations on our base model right um where we can go

on to thinking about building models that might be part real time part batch and then eventually a full-time you know full real-time model similar to how like Tik tok's recommendation system works um but I'm thinking for now uh best solution might be to to look at a full batch based solution since we really don't have full idea of you know what our accuracy is going to look like how does that sound yeah yeah that sounds great um I like that you also mentioned that we can adjust later on and see just like what the trade-offs

are there with accuracy versus latency um okay so that being said uh can you tell me a little bit more about how you plan on designing your data pipelines and what features you're going to need um how are you planning on designing them yourself yeah so I'm going to assume that I have to design them myself I'll kind of give a high level um at least understanding of how I would go about doing this um I'm also going to assume that the uh system that's already firing off the events and sending the events to me

uh the Json click data that is uh that systems maintained by another team um all I have to do is essentially subscribe to those Events maybe it's a event based system of some sort um so I'll basically subscribe to that and then essentially fetch that data dump it into a raw Source maybe an object storage system and then I'll essentially set up a pipeline right that I can essentially connect to that raw Source pull that data and then apply certain Transformations on it to extract the fields that I need normalize anything that I need to

and I'll go into a little bit more detail about what I mean specifically there so on a high level I'm thinking that's kind of how my pipeline's going to be and then as this data is sort of flowing in I'm going to join that data once it's normalized and processed with the user data that we have in the postris table that you mentioned earlier so that way eventually we have a full feature Vector that says here's the user this is their age group um you know this is their um you know historical data on the

artists that they've liked in the past the songs that they've liked in the past um where they're located all of that good stuff um and once we have all of that then we can sort of uh normalize it and so have that R data sort of ready for our model to consume from and and kind of go from there um any questions so far does that kind of sound about the right right track there yeah I like the level of detail there I was curious though what you mean by normalizing MH yeah so generally I

guess in machine learning uh when we think about normalizing it's usually trying to get uh to do comparisons out from an Apple to Apple standpoint um so if we think about things like uh location or gender or even timestamps for example a very important field in almost all of feature engineering right we want to see how our data evolves over time so let's say if we get data that's coming in from you know Britain and they'll be at a different time stamp and data that might be coming from from America might be in a different

time zone and a different time stamp how do we sort of uh get on the same page there right when we're doing our evaluations we want to make sure we're looking at essentially the same standard of time um so you'll do normalization there to ensure that you know we're maybe in GMT time or maybe we're using the io um Source sequence numbers there's different ways of doing it but um a lot of that kind of processing will essentially take place uh in our data processing step gotcha okay um what about normalizing like um some users

might just in general click on things more often because they happen to be more active on Spotify um can you suggest like some way of normalizing for that yeah so we're thinking about here okay active users active clicks um so the idea here to look at the number of clicks per user is that what we're looking at here or something different uh yeah because our raw click data right is literally just you get one jsound record per click but um just because a user has clicked on a particular recommendation they may be a user that

they're like a power user so they're clicking things like they're they're like really active they log in every single day versus you might have somebody who only logs in like once a week um so how might you normalize the the number of clicks uh to account for like the general frequency of logins or activity yeah um I think the best way probably is to right off the top of my head the way I would probably approach this is to take maybe the number of clicks a user has per 100 or per one per 200 recommendations

right so that's one way of looking at it um if you have users that are kind of similar and were let's say recommending the same song to or same artist to a particular amount of users um we could kind of see if one user clicked on it versus the other and based on that kind of see what the interaction level there might be um I would think from a normalization standpoint those will probably be like the two best ways to like kind of look at it I think the former would probably be the best bet

here because you are kind of looking at um you're essentially providing the same recommendations to two different users and provided the same amount of recommendations the same kind of recommendations you're kind of looking at which user clicked on what um and how many times our user might have engaged with what item that were recommending if that sort of makes sense yeah that makes sense um I might also mention like um yeah if a have you could compare that user to themselves like if they particular if they click certain recommendations much more than others or you

can like divide by the total number of like clicks that they have and so on yeah makes a lot of sense Okay cool so uh let's move on so you mentioned normalizing the data so what do you want to do after that yeah so once the the data is kind of normalized and then I'm assuming the cleanup part is also completed um I did mention earlier about Pi um right I think you also mentioned that pii is a pretty critical piece of all of this so we're looking at the higher level architecture and higher level

system um you know Tech you want to try to ward off of using pii as much as possible because just dealing with it tends to have a lot more restrictions in our case um the two things that we're looking at that are kind of more pii related are you know a user's location and a user's age group age um so my idea is essentially to take a user's age and then categorize them into an age group um because um from a research standpoint generally age group gives a good understanding of uh what a user's Tendencies

tend to be or what they would be interested in especially in something like music right that's like a good assumption to sort of make and so if you put someone in an age group you don't necessarily have to worry about what I would do with that pii a worst case scenario you do have to deal with pii you can do masking algorithms and hashing and things like that to ensure that that pii remains uh uh not in plain text essentially um generally location data comes in the form of coordinates so trying to convert that to

a city and a state would be like a good good start there as well um and then the other two most important things right is thinking about in our case we want to recommend artists for users to like click into and follow and then we also want to recommend uh songs that we want users to click into and follow right um and so in those scenarios um we're essentially thinking about for each user an array of the most recent hundred songs that they've listened to um and an array of the uh most recent artists that

theyve followed um and array essentially contains map objects of all of these artists right and this could be things like artist's name um their most important songs that have come out the number of days that have been active on Spotify their trending rank amongst their competitors number of followers all sorts of stuff and then for songs similar things but one of the interesting two things that I can think about um is the average listen time for that song compared to how long a particular user looking at is listening to that same song so for example

let's say I'm listening to One song um for uh two minutes which is kind of the average for all users over that song and let's say you decide to listen to it through the whole duration for four minutes right um so there's a bit of a um like an not an outlier per se but there's a bit of a big Delta there that would entail and indicate maybe that you might like songs of that nature and you might like songs like that um so that's something I think we can definitely put into um as one

of our features as well um but yeah I think I'm I'll pause there I know it's threw a lot of things um is there anything else that I might have missed out on something that stood out to you um I don't think so I think that that's a really good uh diverse array of features to start out with yeah um now that you have an idea though of like the features that you want to create um I think it'd be good to talk a little bit about the model what kind of model would you want

to use um and why because I know there are many options out there yeah definitely I think this is the more fun part of doing machine learning right um traditionally at least uh recommendation systems um uh take advantage of user data that you already have um so the idea is imagine you're living on a house in a neighborhood and you know if you are similar to multiple different houses in that neighborhood um someone might take the uh you know lik things from the other houses and recommend that to you and that's similar to what we

call what a collaborative filtering model is um and so we're going to try to experiment with something here so if you have existing users and have existing user data you can kind of you know take that advantage of that data and then basically use that uh to recommend something to a new user um there's also uh the concept of content filtering where um you're not necessarily looking at user data but you're looking at your entire repository of items that you have and based on um just the user's history the user that you're recommending to you're

going to see a compatibility score of that user's history with all of the items that you have and see whichever one has the highest compatibility score and you can kind of recommend those and say Hey you haven't seen this one but I think you may like this um a lot of Netflix and Amazon and Airbnb like a lot of these companies generally use those uh traditional systems to try to um you know build build more personalized systems um and like you said there's you know many more uh but obviously for the sake of time um

I think the best thing to start off here is uh kind of looking at the collaborative filtering approach um my assumption here is that we have enough user data uh to avoid what we call the cold start problem which is basically the idea that you have no data at all what do I recommend to begin with right because both of these systems uh collaborative and content filtering algorithms they do require um some kind of uh initial data for them to kick off um so in those situations you can kind of think about what are the

top trending songs top trending artists that everybody likes and then sort of start recommending with those and and then start collecting click dat from the user and then start tweaking your algorithm a little bit because now your algorithm has a little bit of data based on what you provided to your user um does that kind of make sense I'll pause there a little bit um if you have any questions yeah I like that you were talking about the two different types of recommendation algorithms there when you were explaining content filtering you mentioned that there's a

comp compatibility score that's used uh are you saying that that score describes how similar the items are that you might recommend to the items that the user has uh View or listened to uh previously yes that's exactly what it is so a simple example might be if you think about Netflix right um let's say you're watching um Iron Man one and you know that these are the actors in Iron Man one you know the genre that it falls under um and let's say you watch the whole movie so they have play history time with it

so you'll use that data to say okay the best score here if you look at Iron Man 2 Iron Man 3 Captain America um and then let's say the fourth movie is Independence Day right the compatibility score with Iron Man 2 and three would be a lot higher um than with Independence Day uh because of similar features that are there um obviously mathematically there's a lot of work that's done to kind of create that compatibility score but that's essentially what I was going for um and so I would recommend Iron Man two and three as

like good matches gotcha okay um and one more thing I'm curious about is uh you decided on using um a collaborative approach uh why that one instead of content filtering yeah so my assumption um I guess with this was that we have enough cont or uh data on existing historical users um that we can take advantage of um existing historical user data tends to be more Dynamic and more fast changing than data that is item Pro uh more on the items in in in Spotify right so these items tend to move around a little bit

but not as frequently as user data because you're constantly getting that data um and because of the dynamic nature of it I believe that the accuracy will be a lot higher will be a lot more precise um so when in doubt and I have a choice if I have the data available I wanted to kind of lean towards using collaborative filtering over content because uh that's like a richer source of data if I had the user exactly yeah the other thing I wanted to also add in is that music is something personal a lot of

it is very much where you know people recommend one thing over the other right people generally your friends want to listen to what you recommend them right um over you know machine recommending it so if we're able to harness that uh by using collaborative filtering I think we'll be able to sort of replicate the same thing and have a better success rate okay perfect uh thanks so much okay yeah so keep on telling us about uh yeah please continue telling us about this model yeah so um so we talked a little bit about the choice

of the model and you know why we kind of chose it um the other thing I wanted to mention is sort of thinking a little bit about how collaborative filtering Works um you know I I mentioned a little bit around um you know the scoring aspect of it and that's kind of exactly what you're doing in this scenario you use something called a user item Matrix and then basically what you're doing is essentially finding kind of a cross join between um the item that you're recommending uh as a column and then each user is going

to be a row and you're going to essentially calculate a product at each point to see how close that number is to one or how close it is to negative one closer it is to one the better of a recommendation it is closer it is to negative one the worse of a recommendation it is and you do this over and over again as you get more data um and then as your feature Vector keeps growing you're going to do more of those um so there's some uh pitfalls that you'd want to kind of think about

here from a techn technological standpoint but we'll get to that in a little bit later okay sounds good um all right so now we have our features in our model um what else do you think you would need to worry about yeah I think um the next thing is really thinking along the lines of implementing this and understanding a little bit on when we put it into production what are some things we want to consider right um so uh this this can be things such as Compu and storage resources that we would need to to

sort of train the algorithm every single day or in our case it's a batch-based system um so do we want to train on an hourly basis uh a couple of hours every every 24 hours what's that Cadence going to look like um and then there's also the testing validation piece of it so we want to make sure that you know whatever we're training out and then we're actually running tests on it is the accuracy that we see during the training is that matching the test accuracy and then when we put it into production what is

that accuracy looking like right the end of the day the whole point is to ensure that whatever we're putting into production is hitting that metric we're looking at and we're essentially trying to push that so you want to think about things like that where how well is my model doing compared to maybe a randomly chosen set of items that we're showing a particular user and when you do those kind of Baseline comparisons you're going to try understand if your model's doing well it's doing worse what can we tweak Etc from a compute standpoint you know

um Cloud systems like AWS have made life a lot easier right um take advantage of tools like AWS Sage maker to house your model containerize it train test all of that think about um serverless systems like Lambda right how is this going to interface with your product so you have a product um and a user logs in or user opens the app um asynchronously it sends an API request to your service endpoint and the service endpoint might fetch the uh latest recommendations for that logged in user and serve that to the user um so there's

a lot of little moving pieces and parts that are kind of there so those are sort of the things that you think about and the last thing is thinking about scale right so um let's say uh you know you have for the sake of argument a 100 users today um at the sixth hour um at 6 a.m. for example um but let's say tomorrow at 6: a.m. the same time it's scales to 200,000 users um how is your service going to handle that traffic right um do we put in some sort of autoscaling system even

if we do how is it going to affect my model is my model going to be able to turn out those numbers um that quickly uh so those are kind of the things that you want to think about um after you know the whole model and feature engineering piece is completed okay gotcha um yeah so how about let's talk about a couple of those things um so one of them being that we've talked a little bit about metrics and how like engagement is ultimately the metric that we're trying to optimize for um is there anything

else that you would try to measure like either before or after deploying this model uh in terms of the success of the model itself yeah okay so engagement is one thing I think the other thing that I would probably look at is it's one thing to click the click a product that we're kind of giving them right the other thing is also kind of thinking along the lines of I mean are we are they listening to the song fully are they listening to it partially um based on that are there further interactions going on with

similar kind of data that they have um so this is more kind of thinking about the extent of the engagement that's happening or is the user turning right um so if I'm say let's say I'm giving 10 recommendations and the user clicks on eight of them that's a pretty good like engagement rate as per our metric that's 80% but if they're listening to it each song let's say each song is about four minutes for the sake of argument if they're listening to only a minute of it or they're barely listening to it before going on

to the next thing has our model really been successful because that could lead to the user just you know kind of churning eventually because they know eight out of 10 songs were okay but not really something that they like um so I would say maybe definitely look at long-term churn so maybe sday churn 14-day churn things like that um and that combined with our engagement metric will really give us like that strong indicator of yeah we're doing a good job and this is a good recommendation system yeah I like um the underlying concept of how

we're measuring these metrics over time right because the performance of our model may change um what is like another another way to uh segment out the engagement metrics to better understand where our model does well or doesn't yeah so is um is your question primarily focused on like I guess features for that matter so are we looking at like demographics age group like segmenting on that sort of metric and then figuring out where it would do better is that what you're going for yeah those are all possible things to segment on um and for example

like newer users versus uh longer time users right it's possible that the recommendations are more effective for the longer term users than for new users yeah yeah definitely or perhaps for yeah go ahead or perhaps for users from like one country over another yeah mhm yeah geographical setting age groups gender um all of that makes definitely makes a difference um even kind of looking at follower to- follower ratio between different artists and kind of taking that into account to try to see how that affects um you know our engagement and our turn also makes a

big difference I like what you mentioned a lot about um looking at long-term users versus new users because again that's the kind of goes back to that cold start problem that the traditional recommendation system algorithms have a difficult time solving but maybe looking at something different um like U like a decision tree or an XG boost or even like some kind of deep net might help kind of solve th and fill in those gaps right um and this could be further iterations as we look into uh uh kind of scaling this up and we see

some success with our first first result yeah exactly and like tracking all of these like more uh metric breakdowns rather than just the aggregate metrics can help us exactly have those insights right yeah so I'm curious then like um let's say okay you've deployed your model it works pretty well and you're tracking it over time and suddenly you're noticing like a little bit of a regression um how do you decide when that you should like update your model that's a good question um and I feel like a lot of this is very much kind of

a art more than a science right I think it is as your intuition sort of develops over working with this you start kind of getting more into knowing like this is sort of the right time and I think this is one of the the the more things about ml that's like different from other types of software is that there's always a lot more post- production work where you're constantly experimenting and constantly seeing like uh what's going on um generally my suggestion would would be to um have some sort of Benchmark before you push it into

production and see how the The Benchmark is doing in comparison to the model that's in production so let's say we have our collaborative filtering algorithm that's running and then let's say our second closest model that could have taken that spot is an XG boost right um and so everything's working fine with a collaborative filtering algorithm and all of a sudden the regression happens and you start seeing a decrease in model performance you can immediately sort of you can keep testing the XG boost model in parallel and if you do see that there's a you know

small spike in the increase of performance of the XG boost as new data is coming in then that's kind of a clear indication right there that the XG boost might be a better switch out so having the possibility to switch that out might be a good thing um this possible also it's good to also check in like things like overfitting to see that as this retraining keeps going on are We suddenly starting to overfit are we know our data too much in training that it starts to overfit and when that happens that could also be

a bit of an indicator that you know in production it's not doing as great but you know our training validation and training accuracy might be abnormally high right um you're getting a 99% accuracy in training but you're getting a 70% accuracy in testing there something's wrong so yeah exactly yeah and it seems like you mentioned two different axes um of like things that you might change if you need to like refresh the model that's in production right you mentioned like the type of the model and also the freshness of the data right so it's nice

that you have this Benchmark um way to compare both of those factors yeah and most importantly with the same data that's coming in so you're kind of like comparing Apples to Apples to see two different models and how they do with the same data yeah MH yeah okay perfect any last thoughts before we get into some interview analysis um yeah no I think we've covered um the at least the initial life cycle for the most part of it so I think I think I think that was everything yeah definitely okay thank you so much um

I'd love to hear from you what do you think went well about this interview and what do you think like you might change yeah I think things that went well um I think the assumptions part in the beginning and like also throughout the throughout the part where we were talking we were making you know certain Surefire assumptions that allowed us to kind of concentrate on how to build like the simplest version of the model I think there's a lot of focus on kind of figuring out how do we build like the most MVP basic iteration

which which went well um the second piece is I think we comprehensively sort of went through each of the ml life cycle process right we analyzed our problem we analyzed our metric um we thought about you know what are some of the features that we would need to produce and how do we get these features to happen what kind of data processing we would need to do to produce these features and we talked a little bit about analyzing models so we sort of went through uh a very high level quick way of kind of getting

from point A to until to build a model and and get that model into production so we have a game plan I think what I personally could have improved on is um maybe sort of shortening uh I guess my my feel and like sort of getting to the point a little bit quicker um I think that was definitely something that I could have improved on and I think we could have done a little I could have done a little bit better on um the the actual production part of it so we mentioned a little bit

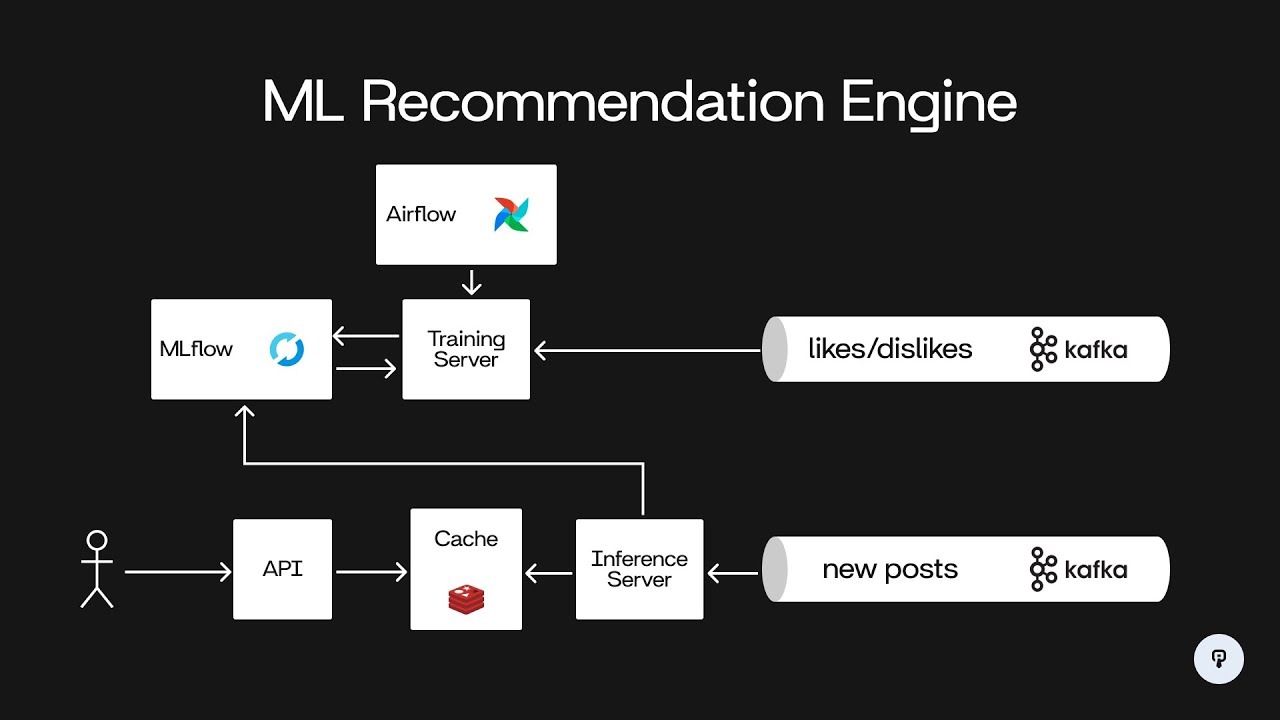

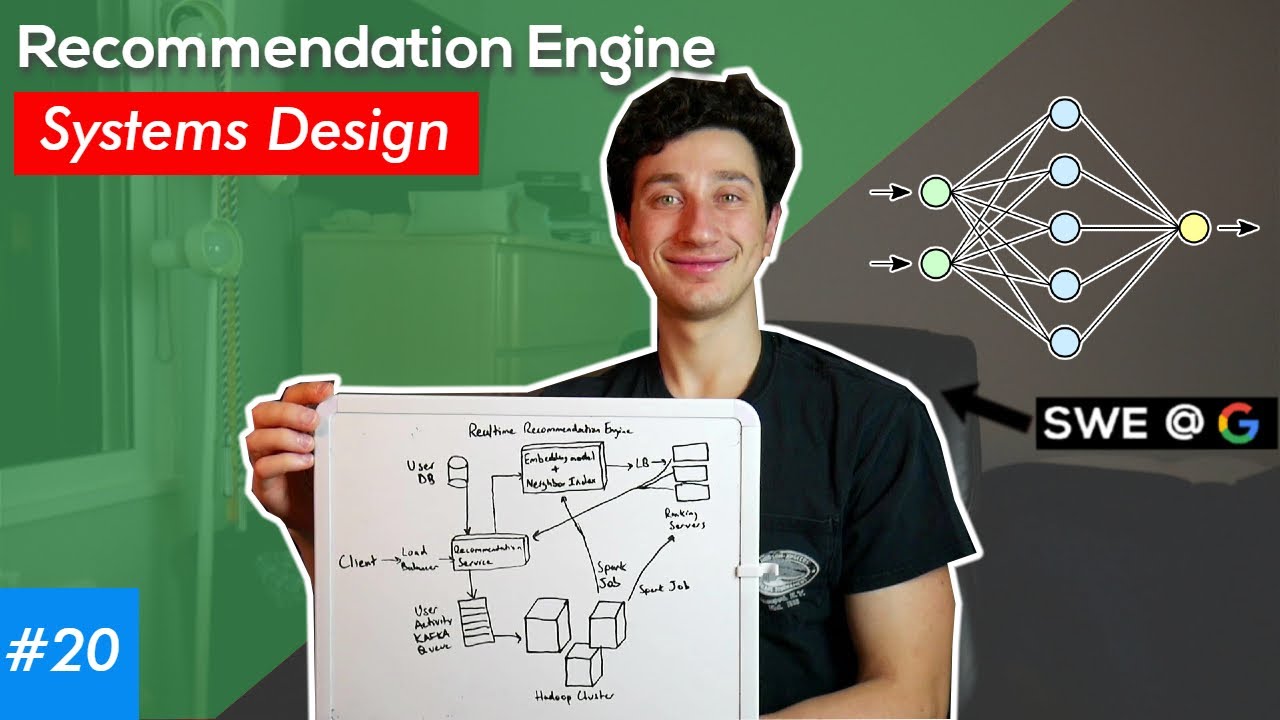

around different tools we can use to productionize our model um I think we could have done a little bit better on like sort of drawing it out and see how that flow would look like um and see like how the interactions would happen between the model the application um storage systems and things like that so yeah yeah it could be really helpful to have like that visual schematic of like exactly what inputs uh are going into each component and how did the components connect to each other and so on yeah definitely agree with that um

yeah I totally agree though you did so much so well um I really appreciated the level of detail that uh you use in asking um clarification questions in the beginning especially about the data you made sure you knew exactly what your data sources were and like what formats they were in and I think that made like the pre-processing part of your data pipeline like very clear and Polished um yeah and I also like that you talked a lot about tradeoffs for everything basically you talked about like trade-offs for a batching versus real time and you

talked about the trade-offs for like different types of recommendation algorithms and ultimately that is like one of the most important things to do in a machine Learning System interview right because there's not like a strictly correct answer it's mostly just about um how can you justify your answer so I really appreciated that about your answer um yeah I would have led to hear a little bit more about um I think you talked a little bit about uh the collaborative recommendation algorithm but um I didn't hear much about like how are we actually filling in this

user item Matrix right there's so many different ways that you could possibly do it um you could give like a highle uh summary of like some of those ways or you could just pick one and explain like how it works why you picked it and so on I think either of those are purchases okay uh yeah how does that all sound to you yeah sounds great I think it's really good feedback to take in for sure all right thank you so much today for sharing all of your knowledge about this I think we've learned a

lot from you and thanks everybody for watching and good luck on any upcoming interviews that you have all right bye [Music] everyone