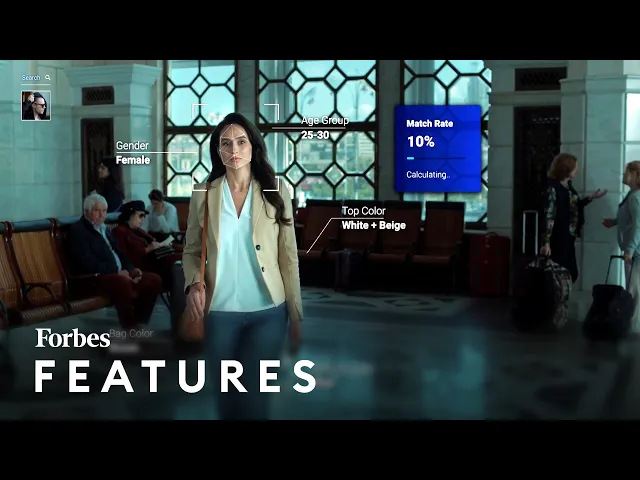

Oosto, which used to be called AnyVision, is a facial recognition company. They're an Israeli AI startup. Their big sell is that they can use AI to automatically watch over people, watch their faces, and flag them if they're on a watch list or of a security concern, and they claim to do this, you know, better, faster, and more accurately than anyone else. They also claim to have privacy protections, such as saying that they won't, you know, store data. How much we can trust companies' claims about how they store people's information is another matter entirely. Oosto is of interest

in large part because AnyVision made headlines back in 2019, when reports essentially said that they worked with the Israeli military to carry out surveillance in the West Bank. But following that, it emerged that Microsoft was an investor, and Microsoft eventually pulled out of being an investor. We also don't know whether they pulled out because of ethical concerns. It's hard to say at the minute. It was last year as well that I found out that they were potentially working on technology to apply the facial recognition to drones. So in theory, you know, you

could apply this super smart AI facial recognition and attach it to a drone, and you could fly past, say, a ship or a bus stop, and you could match faces there. So it's a company that's come to prominence in the government realm, but now, I think with the name change as well to Oosto from AnyVision, it's claiming to be much more focused on the private market. Casinos seem to be a big part of Oosto's future. Casinos see a huge number of people coming in and out, so we wanted to go to Oklahoma and

see what it looks like for a private company to have this powerful surveillance technology. We spoke with Travis Thompson, director of compliance at Muscogee Nation Gaming Enterprises, and here's what he had to say. Facial recognition is important to us from a lot of different perspectives, but the main one was really to kind of protect our players and enhance their experience while they're inside the casino. We saw a major need to kind of dive into the facial recognition world because we were having a hard time recognizing faces just in person. So that responsibility was on

our security guards, our floor personnel, to actually go out and remember who these banned patrons were, who these bad actors were, and try to see that in a sea of people every single day. We started working with Oosto, and at that time it was called AnyVision, and we saw the detection rate just go through the roof. And so even when we did a demo test with our local vendor, we saw a lot of great success, and we knew it had opportunities to expand on that within our resort. So when somebody comes into the property and

they are banned and they are in the facial recognition system, we actually get an alert that's in our surveillance room. We radio down to the floor, usually to a security officer or an MOD, and they'll usually do an ID check on that person to verify that's who we have in the system. A lot of times we don't, you know, our bad actors have learned a little bit not to bring their IDs. Well, if you don't have an ID in an Oklahoma casino, you have to go anyway, so they're going to be escorted off the property.

[Music] Well, the concerns about facial recognition, I guess, are pretty basic. A large number of people would not want an image of their face to be stored in some database somewhere so that it could be used at some point further down the line for identifying you, maybe even incriminating you in a crime that you weren't part of. You know, these things are risks, and we've seen that in particular with facial recognition technologies that have led to wrongful arrests, in particular of Black people. There's always been an issue around the ways in which these technologies

identify Black people compared to white people. In fact, with any ethnicity other than white people, these kinds of technologies have on occasion really struggled with identifying the right person. AnyVision claims to have data that indicates that it doesn't have this problem. Dean Nicolls, CMO of Oosto, gave us more information. I think there is a lot of misconception around demographic bias, and I think a lot of that misconception was valid 10 years ago, when a lot of these AI and facial recognition systems were getting rolled out. A lot of it had to do with how

the AI was built. So, for example, if you train your AI on a bunch of middle-aged white guys, it's going to be really good at picking up and detecting middle-aged white guys, but may not do so well with African-American women, right? Those biases have been largely eliminated, and so if you look at all the leading players in facial recognition, they have virtually zero bias from an age or from an ethnicity perspective. Another area of concern is: what are you doing with my data? So virtually everyone that walks in the casino, the thousands and

thousands of people that walk into this casino, we don't care about. We're not maintaining their information. It's only when they're on the watch list that we do that matching, and now we're preserving that footage. This is only used, at least in our opinion, for good. This is giving an opportunity for our customers to feel safe, because all we are detecting are the people that we want to keep out of here. Working with local law enforcement, our tribal law enforcement, we have had situations where that facial recognition system was critical to making those finds here on this

property and putting those people in jail. See the capture of the face when she turned around, and see the green box making the detection. And as you can see, she had a mask on, and so she's trying to hide her identity for sure. Because we got a really high score, that's got to be our person, so that's a positive. We don't use our system for our VIPs, and that's again part of the ethics. We had a small group that we tested with, but we have not rolled that out to our VIPs. Again, it's about that

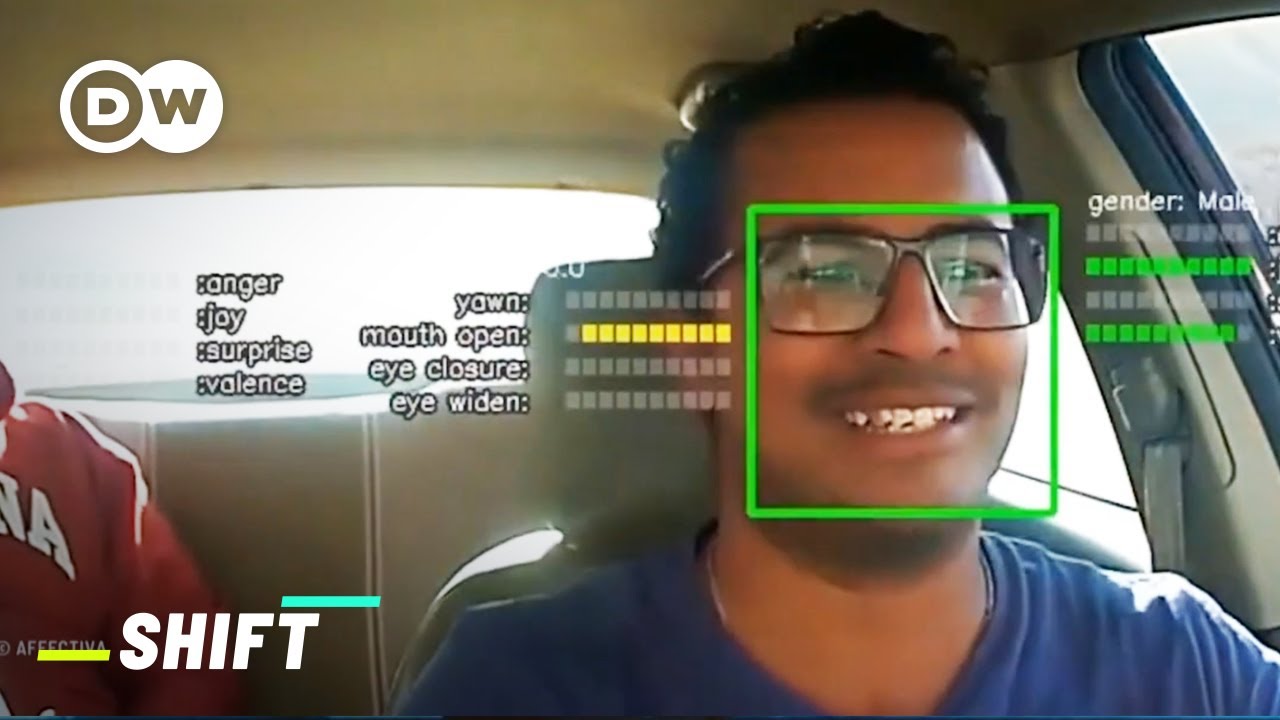

ethics and acknowledgement and them understanding that they're going to be used in a detection system. We want it to be a customer-friendly atmosphere, so we don't want them to feel like Big Brother's watching them all the time, and that's not what our system is here and used for. Facial recognition as a category has evolved tremendously over the last 15 to 20 years, and it's allowed us to do real-time recognition of faces and body recognition, and eventually involve behavior recognition as well. Whether they're also able to just simply detect patterns of behavior amongst people, say someone

is acting suspiciously, that's a really, you know, another controversial side of this kind of technology. That's emotion detection, almost. It has worrying overtones of, you know, pre-crime, things that have been a concern for, you know, sci-fi novelists before this technology actually now exists. We really don't know how good technology is at understanding human emotion, and for that to be the next way of detecting threats, or detecting just how happy people are in a public space, I think, you know, there's just a simple creep factor with that, right? I mean, do you want a machine deciding

whether or not you're happy, and then that translating into, you know, maybe a customer service rep coming up to you and offering you something? Maybe it's positive, but again, I think a lot of privacy advocates would have just serious, serious concerns around widespread implementation of this technology in really, really public spaces. [Music]