Hi, I'm Luca. Welcome to a new research paper summary by marktechpost.com. Today we are going to talk about an explainable AI method developed at Duke University. The purpose of explainable AI methods is to help humans understand and interpret the predictions of a machine learning model. The major challenge is explaining the predictions of black-box models, because of their opacity. For example, deep learning methods are considered black boxes, since they are highly recursive and too complicated to be understood by humans. Today we are going to focus on image classification tasks tackled using

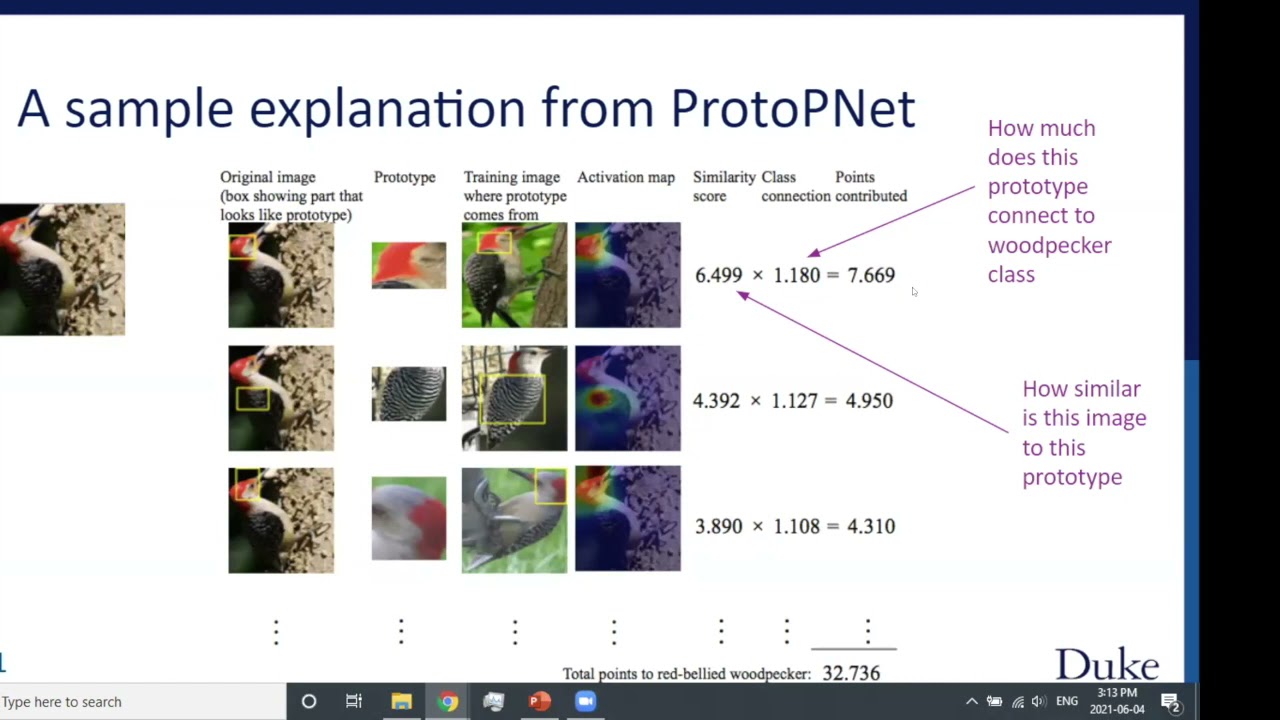

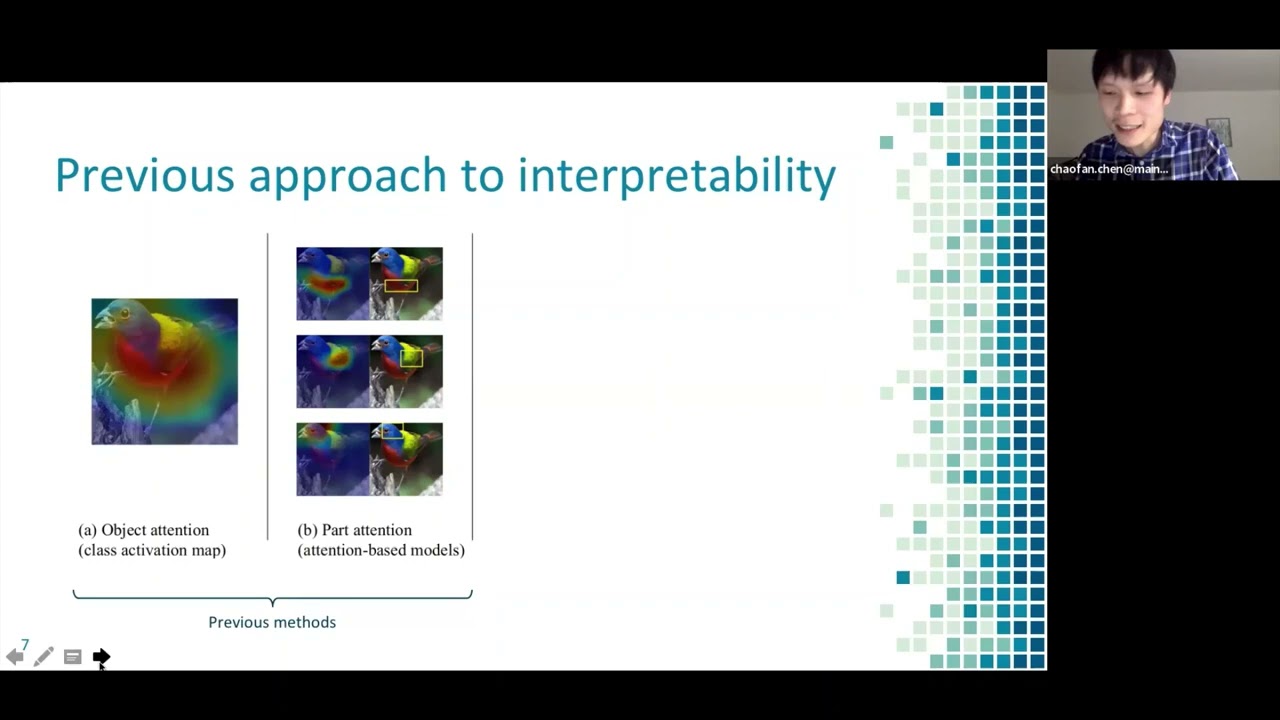

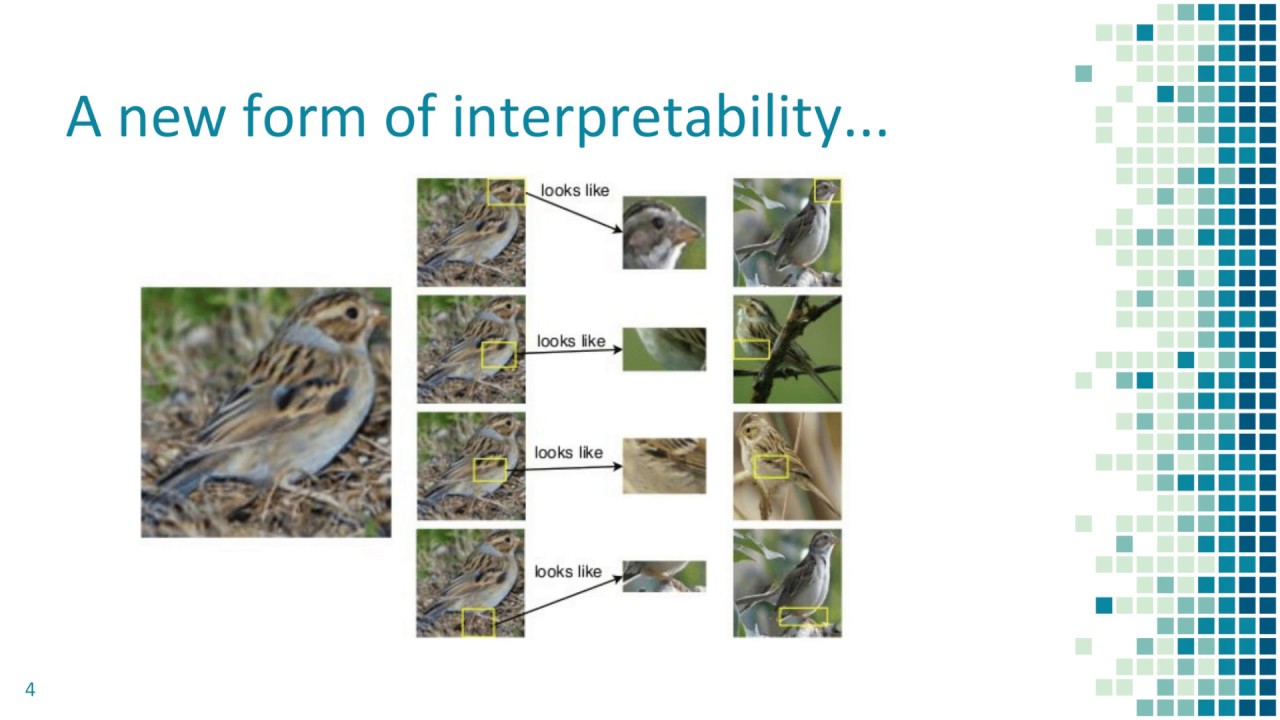

deep learning solutions. The solution proposed by this paper is based on the following intuition. As you can see in the figure, when we describe how we classify the image of an animal, we might focus on parts of the animal and compare them with prototypical parts of images of the same animal. The goal of this paper is to introduce a neural network called ProtoPNet, which is able to identify several parts of an input image that look like prototypical parts of the classes present in the training data. Hence, the predictions of this network will be based on

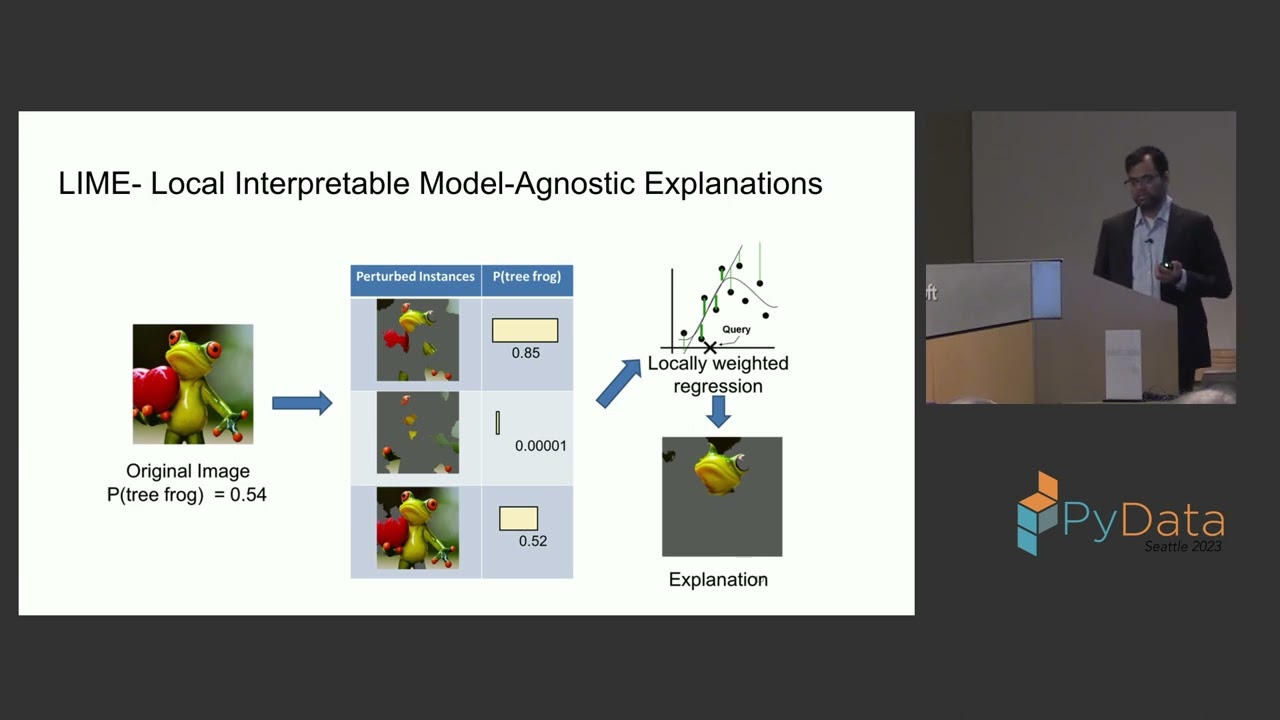

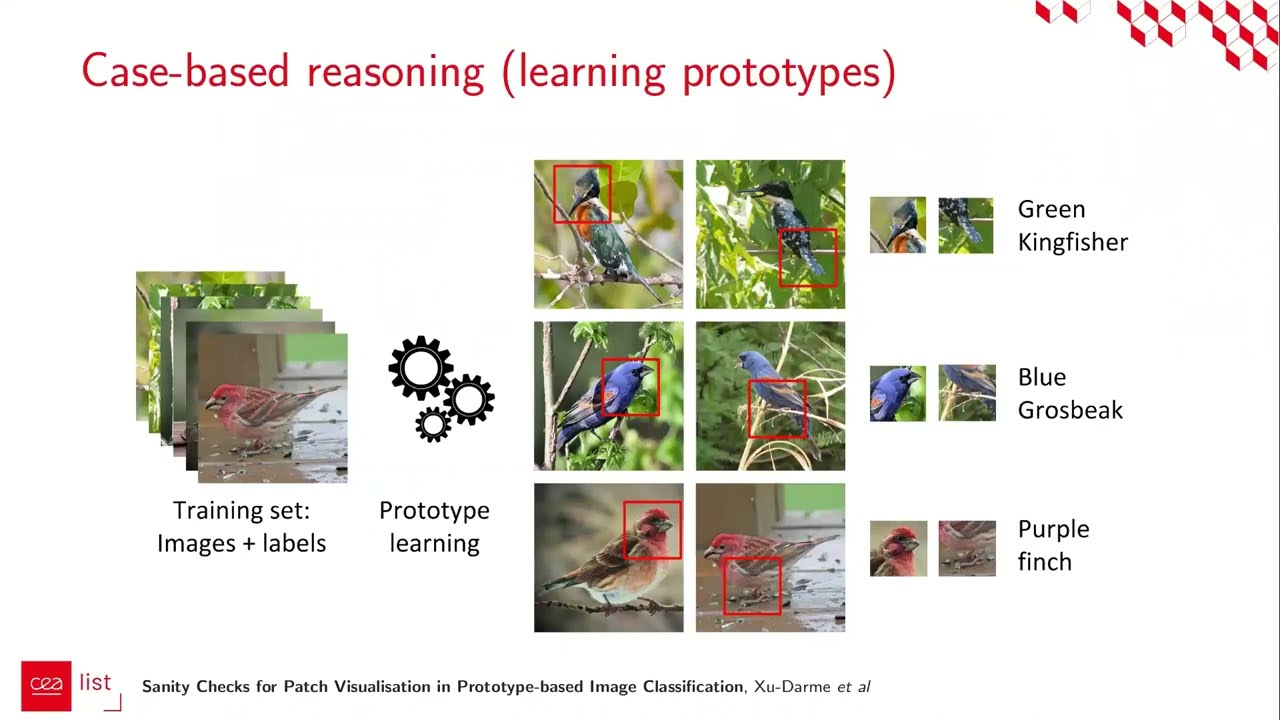

the similarity scores between parts of the input image and the learned prototypes. In this way, the developed model is more explainable and interpretable than a standard deep neural network, since the reasoning process behind each prediction is transparent. Now let us consider the application of ProtoPNet to the image classification of bird species. Given an input image, the convolutional layers of the network extract useful features from it. Then the network learns a certain number of prototypes, where each prototype can be considered the latent representation of a prototypical part of a bird image. The

researchers allocated 10 prototypes for each bird class of the dataset, so that every class is represented by several prototypes in the final model. Given a convolutional output, the i-th component of the prototype layer computes the distances between the i-th prototype and all the patches of the convolutional output that have the same shape as that prototype. The distances are then inverted to obtain similarity scores. The result is an activation map of similarity scores. This activation map can be upsampled to the size of the input image to produce a heat map that

identifies which part of the input image is most similar to the learned prototype. The activation map of similarity scores produced by each prototype component is then reduced, using global max pooling, to a single similarity score. This can be understood as how strongly a prototypical patch is present in a part of the input image. Finally, the similarity scores produced by the components of the prototype layer are multiplied by the weights of the neurons of the fully connected layer. The softmax activation function is used to obtain the predicted probabilities for the input image belonging to the dataset

classes. Let's focus on the figure. The similarity score between the first prototype, a clay-colored sparrow head, and the most activated patch of the input image for this prototype is equal to 3.9. On the other hand, the similarity score between the second prototype, a Brewer's sparrow head, and the corresponding most activated patch in the input image is 1.4. This means that ProtoPNet finds that the head of a clay-colored sparrow has a stronger presence than the head of a Brewer's sparrow in the input image. Indeed, this will increase the probability related to the class clay-colored

sparrow in the final prediction. Now we will focus on three aspects of the optimization process used to train ProtoPNet. First of all, during the training process, the cross-entropy loss is used to penalize misclassification on the training data. Then a cluster cost is minimized to encourage each training image to have a latent patch that is close to at least one prototype of its own class. Finally, a separation cost is minimized to force every latent patch of a training image to stay away from the prototypes of the other classes. Did you like this explainable

AI method? If yes, leave a like on this video. I hope to see you again in the next research paper summary by marktechpost.com.
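
As a companion to the summary above, here is a minimal plain-Python sketch of the prototype layer (distances, inversion to similarity scores, global max pooling, fully connected layer, softmax) and of the per-image cluster and separation costs. It is an illustration under stated assumptions, not the authors' implementation. The function names, the toy 2-dimensional features, the single prototype per class, and the weight values are all hypothetical. The summary only says the distances are "inverted", so the log-ratio similarity used here is an assumed choice of inversion. Each patch is assumed to be a single feature vector with the same shape as a prototype.

```python
import math

def sq_dist(a, b):
    # Squared Euclidean distance between two feature vectors.
    return sum((x - y) ** 2 for x, y in zip(a, b))

def similarity(d2, eps=1e-4):
    # Assumed inversion of a distance: large when d2 is small,
    # close to zero when d2 is large. eps avoids division by zero.
    return math.log((d2 + 1.0) / (d2 + eps))

def activation_map(patches, prototype):
    # One similarity score per patch of the convolutional output.
    return [similarity(sq_dist(z, prototype)) for z in patches]

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def forward(patches, prototypes, weights):
    # Global max pooling: keep the best-matching patch per prototype.
    scores = [max(activation_map(patches, p)) for p in prototypes]
    # Fully connected layer: one row of weights per class.
    logits = [sum(w * s for w, s in zip(row, scores)) for row in weights]
    return scores, softmax(logits)

def min_dist(patches, prototypes):
    # Smallest squared distance between any patch and any given prototype.
    return min(sq_dist(z, p) for p in prototypes for z in patches)

def cluster_and_separation(patches, prototypes, proto_class, label):
    own = [p for p, c in zip(prototypes, proto_class) if c == label]
    other = [p for p, c in zip(prototypes, proto_class) if c != label]
    clst = min_dist(patches, own)     # small when a patch is near an own-class prototype
    sep = -min_dist(patches, other)   # minimizing this pushes patches away from other classes
    return clst, sep

# Toy data (hypothetical): two patches, two classes, one prototype per class.
patches = [[1.0, 0.0], [0.0, 0.2]]
prototypes = [[1.0, 0.0], [0.0, 1.0]]
proto_class = [0, 1]
# Rows are classes, columns are prototypes; positive weight for own-class prototypes.
weights = [[1.0, -0.5], [-0.5, 1.0]]

scores, probs = forward(patches, prototypes, weights)
clst, sep = cluster_and_separation(patches, prototypes, proto_class, label=0)
print("similarity scores:", scores)
print("class probabilities:", probs)
print("cluster cost:", clst, "separation cost:", sep)
```

In this toy setup the first patch coincides with the class-0 prototype, so that prototype gets the higher similarity score and class 0 gets the higher probability, which mirrors the sparrow example in the summary. Because the similarity decreases as the distance grows, max pooling over similarities picks the same patch as taking the minimum distance, which is why the two costs can be computed directly from minimum distances.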