Okay. So over the last couple of months, CrewAI has had a number of key updates, and in this video I want to take a look at some of them.

I'll show you what you can actually do with them, how they change the way you set up a project, and how they improve a lot of the results you get out. So here on GitHub, looking at the CrewAI releases, we can see they had a release earlier this month, but they've also had quite a number of releases over the past couple of months, steadily adding new features, which is great to see.

Definitely a lot of kudos to João and the team at CrewAI. They're still plugging away and adding new things to the open-source version, and I think they're also working on a hosted version for running crews.

So what I want to do here is look at some of the new ways to create a new project or a new crew, look at how some of the structure has changed, and show how you can use YAML for configuration. This definitely opens it up even more to people who are perhaps not coders and not comfortable with the actual code.

I also want to look at testing and evaluating your crews, and at how you can use training to improve the results you get out of your crew. Finally, we'll look at some of the things they've added around planning steps, plus a few other things that are really cool as well. All right.

So the first thing I want to look at is how you create a crew. One of the big changes, which came not in the latest version but a couple of versions back, is that you now have a command line interface (CLI) for this. It lets you create a whole new crew by running `crewai create crew` followed by the name of the crew.

So you can see here I've created a blog post creator crew, and these are all the files it's made for us. This is really interesting because it sets up a lot of things we previously had to do manually. Obviously we've got the main file for running the crew, but there's also some interesting stuff in there for training and for testing, which we'll come back to.

We can also see that it pulls a lot of the information for setting up your agents and tasks from config files. So now we've got an agents config file (`agents.yaml`) where we can put all the info about creating each agent, and a tasks config file (`tasks.yaml`) where we can put our different tasks.

One of the things I think is really cool about this is that if you work with people on your team who are not coders but really want to be actively involved in writing the prompts and the other pieces that go into a CrewAI crew, this lets them do a lot of that work directly: writing the roles, goals, and backstories for the agents; the descriptions and expected outputs for the tasks; and which agent is used for each task. Having all of this laid out in config files makes that much easier.

It turns out the crew code simply imports these config files: CrewAI pulls in the YAML and processes it for you. I think they're expanding this beyond what's shown here, but for this video I'm going to focus on the options that are here, so you can get started and use this as quickly as possible.
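In the generated project these settings live in YAML files; to keep the sketch below self-contained (no YAML parser needed), it mirrors their shape as plain Python dicts. The keys (`role`, `goal`, `backstory` for agents; `description`, `expected_output`, `agent` for tasks) follow the YAML files CrewAI generates, while `AGENTS_CONFIG`, `TASKS_CONFIG`, `fill_placeholders`, and `render_task` are hypothetical names. It also shows how a `{url}` placeholder could be filled from run inputs, roughly what CrewAI does with the inputs passed to `kickoff`; treat it as an illustration, not CrewAI's loader.

```python
from string import Formatter

# Hypothetical stand-ins for config/agents.yaml and config/tasks.yaml,
# written as dicts so the example needs no YAML parser.
AGENTS_CONFIG = {
    "researcher": {
        "role": "Web Content Researcher",
        "goal": "Extract the key content from {url}",
        "backstory": "You turn web pages into clean research notes.",
    },
    "writer": {
        "role": "Blog Post Writer",
        "goal": "Write an engaging blog post from the research notes",
        "backstory": "You write clear, well-structured Markdown articles.",
    },
}

TASKS_CONFIG = {
    "research_task": {
        "description": "Use the scraping tool to fetch {url} and summarize it.",
        "expected_output": "Bullet-point notes covering the main announcements.",
        "agent": "researcher",
    },
    "writing_task": {
        "description": "Write a blog post based on the research notes.",
        "expected_output": "A complete blog post in Markdown.",
        "agent": "writer",
    },
}


def fill_placeholders(text: str, inputs: dict) -> str:
    """Replace {name} placeholders with run inputs; fail loudly if one is missing."""
    needed = {field for _, field, _, _ in Formatter().parse(text) if field}
    missing = needed - set(inputs)
    if missing:
        raise KeyError(f"missing inputs: {sorted(missing)}")
    return text.format(**inputs)


def render_task(name: str, inputs: dict) -> dict:
    """Return a task with placeholders filled and its agent's role and goal attached."""
    task = TASKS_CONFIG[name]
    agent = AGENTS_CONFIG[task["agent"]]
    return {
        "description": fill_placeholders(task["description"], inputs),
        "expected_output": task["expected_output"],
        "agent_role": agent["role"],
        "agent_goal": fill_placeholders(agent["goal"], inputs),
    }
```

Because non-coders only edit the config side, a missing input surfaces as a clear error at render time rather than as a silently unfilled `{url}` in a prompt.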

So here I've got the project I created earlier using the command line, and I've obviously made some changes to it. The idea is that this is going to be a blog post creator.

We're going to pass in a URL, and we're going to have a few different agents. We'll have a researcher, which is going to go and get the contents of that URL.

Then we'll have a planner, which is going to plan out the blog post, a writer that actually writes the blog post, and an editor that edits it and produces the final version.

For the tasks, you can see we've got our research task, our planning task, our writing task, and our editing task, so each one simply lines up with its corresponding agent in this case. You can also see that in the research task I'm telling the researcher to use the Firecrawl tool to do the scraping, so it can take the URL that we pass in.
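The four agents and four tasks form a simple sequential chain, where each task's output becomes the next task's context. The sketch below models that hand-off with stub functions standing in for LLM-backed agents; `Task`, `run_sequential`, `BLOG_PIPELINE`, and the stub agents are hypothetical, and this is not CrewAI's executor, just the shape of a sequential process.

```python
from dataclasses import dataclass
from typing import Callable, List

# An "agent" here is a hypothetical stand-in for an LLM call:
# it receives the task description plus the previous task's output.
AgentFn = Callable[[str, str], str]


@dataclass
class Task:
    name: str
    description: str
    agent: AgentFn


def run_sequential(tasks: List[Task]) -> List[str]:
    """Run tasks in order, handing each task's output to the next as context."""
    outputs: List[str] = []
    context = ""
    for task in tasks:
        result = task.agent(task.description, context)
        outputs.append(result)
        context = result  # the next agent sees this agent's output
    return outputs


# Stub agents so the pipeline runs without any API keys.
def researcher(desc: str, ctx: str) -> str:
    return "notes on the page"


def planner(desc: str, ctx: str) -> str:
    return f"outline from ({ctx})"


def writer(desc: str, ctx: str) -> str:
    return f"draft from ({ctx})"


def editor(desc: str, ctx: str) -> str:
    return f"final version of ({ctx})"


BLOG_PIPELINE = [
    Task("research_task", "Scrape the URL and take notes.", researcher),
    Task("planning_task", "Plan the blog post.", planner),
    Task("writing_task", "Write the blog post.", writer),
    Task("editing_task", "Edit the blog post.", editor),
]
```

Running `run_sequential(BLOG_PIPELINE)` makes the nesting visible: the editor's output wraps the writer's, which wraps the planner's, which wraps the researcher's notes. Swapping a stub for a real model call changes only the agent function, not the chain.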

It will then take that URL, scrape the site, and use that as the content for writing a blog post. Let me show you quickly: the page I passed in is OpenAI's GPT-4o mini launch post.

And you can see it's been able to write a nice blog post summarizing some of what they had in there, including some of the specific details. And then this part is all metadata.

This output actually came after doing the training, which I'll come back to. But we can see we've got a pretty good blog post, and it's in Markdown, which is what I asked for.

If we look at the logs for this, we can see we're logging a bunch of things: we're using the Firecrawl tool, and we've got our task there.

As we go through, we can also see that planning is on, so let me go through that a little bit as well. On top of the configs for the agents and tasks, the other big things to set up are your main file and your crew file.

So, one of the important things in main: I've loaded all the environment variables so they come in easily from the `.env` file. That's got my Firecrawl API key,

my OpenAI API key, et cetera. One of the first things you probably want to do while you're mucking around with things is set the model name to one of the cheapest models possible.

I've gone for GPT-4o mini as the model here, which means everything is going to use that model. So that's it for the main file.
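A minimal sketch of this `main` setup using only the standard library: `parse_env_text` and `load_env_file` are a stand-in for `python-dotenv`'s `load_dotenv`, and `configure_default_model` sets a cheap default model unless one is already configured. The variable name `OPENAI_MODEL_NAME` is an assumption (older CrewAI docs used it for the default OpenAI model); check what your CrewAI version actually reads.

```python
import os
from pathlib import Path

CHEAP_DEFAULT_MODEL = "gpt-4o-mini"


def parse_env_text(text: str) -> dict:
    """Parse KEY=VALUE lines, skipping blanks, comments, and malformed lines."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def load_env_file(path: str = ".env", override: bool = False) -> dict:
    """Load a .env file into os.environ; existing variables win unless override=True."""
    values = parse_env_text(Path(path).read_text(encoding="utf-8"))
    for key, value in values.items():
        if override or key not in os.environ:
            os.environ[key] = value
    return values


def configure_default_model(env_var: str = "OPENAI_MODEL_NAME") -> str:
    """Use a cheap model while experimenting, unless the environment already sets one."""
    os.environ.setdefault(env_var, CHEAP_DEFAULT_MODEL)
    return os.environ[env_var]
```

Using `setdefault` means switching to a stronger model later is a one-line change in `.env` rather than an edit to the code.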

The other changes are in the crew file. One of the key things you want to do here is set planning to true.

In a more production-ready system, we'd probably use the GPT-4o model rather than the mini model for the planning, but here I'm just passing in the mini model. I'm also passing in an output log file, because I want to store the logs. So these are the changes you want to add here. Now let me quickly go through the other parts of this file.

We've got our agents that we're bringing in. You can see we're using the Firecrawl scrape tool, which we're importing from CrewAI tools, and I'm giving it to both the researcher and the planner.

Honestly, I haven't spent a lot of time playing around with the agents here. I'm just trying to get the overall CrewAI updates working and work out what goes where. If I were going to start fine-tuning this a lot, I might not give the tool to both of them;

I'd probably just give it to the researcher to pull down the page. Anyway, you can see the agents' config is coming in from the config files we set up earlier. In the newer versions, looking at some of their source code, I think you'll be able to set things like the tools in the agents YAML file itself.

For this particular case I haven't done that. I've set tools and verbose in the crew file itself, mainly because I wanted to have control over the Firecrawl tool and bring it in there.

The other thing is that I need to bring in the `ChatOpenAI` model so that I can pass it in as the planning LLM. And if I wanted specific models for each agent, I could bring those in and define them here as well. If I decided that I wanted GPT-4o for the writer or the editor and a cheaper model for the others, we could certainly do that here.
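The per-agent choice boils down to a lookup with a cheap default. In the real crew file you'd pass an LLM object to each agent's `llm` argument; the sketch below only shows the selection logic, and `MODEL_OVERRIDES`, `AgentSpec`, and `assign_models` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Iterable, List, Mapping

DEFAULT_MODEL = "gpt-4o-mini"

# Hypothetical overrides: spend more only where the reader sees the output.
MODEL_OVERRIDES = {
    "writer": "gpt-4o",
    "editor": "gpt-4o",
}


@dataclass(frozen=True)
class AgentSpec:
    name: str
    model: str


def assign_models(
    agent_names: Iterable[str],
    overrides: Mapping[str, str] = MODEL_OVERRIDES,
    default: str = DEFAULT_MODEL,
) -> List[AgentSpec]:
    """Give each agent its override model, or the cheap default if none is set."""
    return [AgentSpec(name, overrides.get(name, default)) for name in agent_names]
```

Keeping the overrides in one mapping makes it easy to compare a cheap run against a mixed run without touching the agent definitions.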

I do feel that once you've got the basics working, you want to spend a bit more time tuning your agents to get them as good as possible, even before going on to the training, which I'll talk about in a second. We can see the planning step we've set up here in action when we run the crew from the command line with `crewai run`.

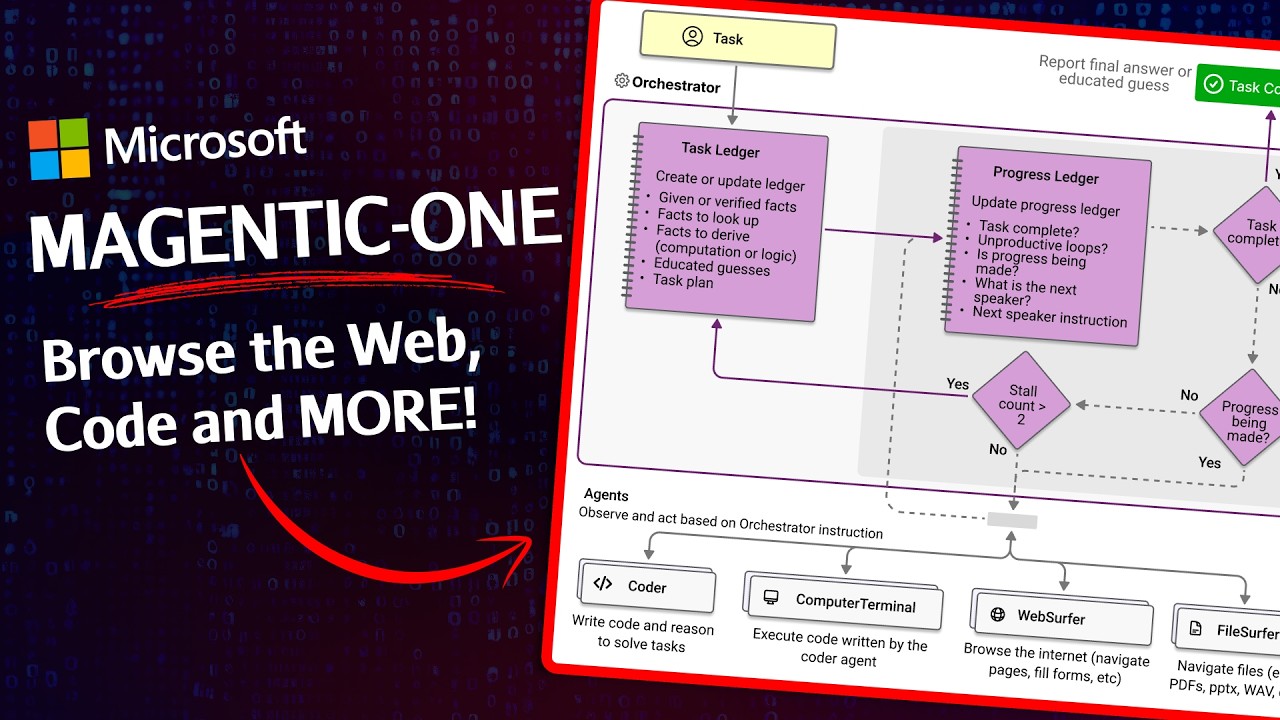

Now, this whole idea of planning steps is one of the new features that's been added to CrewAI, and it's pretty simple: you just pass in `planning=True`.

If you want to, you can also pass in the model you want to use for the planning. In their example here, you get a "planning the crew execution" step, and then a step-by-step plan of what the crew is going to do. So let's look at how this actually worked.
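As I understand CrewAI's docs, when planning is on, a planner produces a step-by-step plan for every task before the crew runs, and each plan is added to its task's description. The sketch below mimics that with a stub planner in place of the planning LLM; `attach_plans`, `stub_planner`, and the plan text format are assumptions, not CrewAI's internals.

```python
from typing import Callable, Dict, List

Plans = Dict[str, List[str]]


def attach_plans(
    tasks: List[Dict[str, str]],
    planner: Callable[[List[Dict[str, str]]], Plans],
) -> List[Dict[str, str]]:
    """Ask the planner for per-task steps and append each plan to its task description."""
    plans = planner(tasks)
    planned = []
    for task in tasks:
        steps = plans.get(task["name"], [])
        description = task["description"]
        if steps:
            numbered = "\n".join(f"{i}. {s}" for i, s in enumerate(steps, start=1))
            description += "\n\nStep-by-step plan:\n" + numbered
        planned.append({**task, "description": description})  # originals untouched
    return planned


def stub_planner(tasks: List[Dict[str, str]]) -> Plans:
    """Hypothetical planner; a real one would be a call to the planning LLM."""
    return {
        "research_task": ["Scrape the URL", "Extract the key announcements"],
        "writing_task": ["Draft an introduction", "Write one section per announcement"],
    }
```

Because the plan ends up in the task text, a stronger planning model can improve every task even when the agents themselves run on a cheaper model.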

I changed the model back to GPT-4o for this. When I look at the logs, you can see we're getting the step-by-step plan coming out of the planning step.

It's basically guiding the crew with a more fleshed-out picture of exactly what it should be doing. Reading the plan's output, it relates more to the tasks than to the agents. For each task it goes through and gives a step-by-step plan of what should happen at each point and what things it should be looking for.

You can see that as it pulls those things out, they're passed along as well. Going through this, the blog post writer has put together a draft and passed it along to the blog editor,

which has looked at it and reviewed it for clarity and so on. So it's done all of those tasks.

When we look at it, we've finally come out with an output that's actually quite nice. One of the reasons the output has come out so well is that I've trained this crew. So let's look at the whole concept of training.

You can tell I've trained it because I've got this pickle file, which holds the saved training data. It seems the training basically guides your prompts a bit more to get the kind of output you want.

So let me delete this, and we'll do another training run from scratch to see how it goes. If we look at the docs for the training section, we can see that you're not actually training an ML model or anything like that. You're training something that helps guide the prompts to get a better output. The key thing is that you do this from the command line.

You run `crewai train`, then `-n` followed by the number of iterations you want to train for, and then the file name to save the training data to. So let's jump in and do that now. I'm going to leave planning with GPT-4o on here.

I'm going to do two iterations here. If you were training something where you really cared about the output, you'd probably want to do around four or five, from my experience. And then `-f` is the file name.
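Conceptually, `crewai train -n 2 -f <file>` runs the crew `n` times and asks for human feedback after each run, so later runs see the earlier feedback. The sketch below models that loop, with a callable in place of the terminal's feedback prompt so it can run unattended; `train`, `run_crew`, and `get_feedback` are hypothetical, and CrewAI's actual training logic may differ.

```python
from typing import Callable, List


def train(
    run_crew: Callable[[List[str]], str],
    get_feedback: Callable[[int, str], str],
    n_iterations: int,
) -> List[str]:
    """Run the crew n times; after each run, collect feedback and feed it forward."""
    if n_iterations < 1:
        raise ValueError("n_iterations must be at least 1")
    feedback_log: List[str] = []
    for i in range(1, n_iterations + 1):
        output = run_crew(list(feedback_log))  # each run sees all feedback so far
        note = get_feedback(i, output).strip()  # stand-in for "Please provide feedback"
        if note:
            feedback_log.append(note)
    return feedback_log
```

Passing `get_feedback=lambda i, out: input(out + "\nFeedback: ")` would turn this into an interactive loop like the one in the terminal.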

Okay, if I run this, we can see it's going to train the crew for two iterations. Don't worry about the issues showing up there; they come from me having a different version of Pydantic installed. So it's kicked off, gone through, and downloaded the webpage.

If we scroll through, it's written out the agent's final answer and so on, and now it says "Please provide feedback." This is the human-in-the-loop part.

So now I can give it some feedback. Let's say I tell it: don't provide footnotes or acknowledgements. This isn't necessarily what I'd want, but if I tell it that, the next run through is going to use my feedback. Let's pause and have a look once it's gone through the next run.

Okay, you can see it's gone through, and it's removed the other stuff we had in there, including any mention of acknowledgements or footnotes. Now it's running through again from scratch.

And this time it's given us numbered subsections, like 1.1, 1.2, and so on.

Let's say we don't want that, so I'm going to give feedback on this. My feedback is: don't use subsections or numbered subsections, and make the paragraphs longer.

It'll take that in and produce a new version using this human feedback. It's also saving the feedback as it goes, in this training data pickle file,

and that feedback will be used if we run this for other URLs as well. So you start to train the crew to be more consistent in its output. And sure enough, the new version has longer paragraphs.

So this is an example of going through and using it. Now we can give more feedback: "Please no references at the end." Obviously, I probably wouldn't actually want that;

I might say to give just one reference at the end or something like that. But I wanted you to see that we're able to guide it. When they talk about training, we're really guiding the crew through prompting to get it to give the kind of output we want.

And sure enough, we're getting no subsections, we've got longer paragraphs now, and there are no acknowledgements, like we asked for before. I've now also asked it for no references.

And sure enough, at the end it's just giving the conclusion now, with no references. You can also see that in this case it's giving a summary of major improvements and suggestions, which I think is a side effect of the added prompts.

So you might want to filter this out. Let's say: "Don't have a section called Summary of Major Improvements and Suggestions." Now you can see it's running again from scratch and fetching the URL.

It's testing it out and writing a new article using the training and guidance it's learnt. And sure enough, it now finishes with a future vision section. We've got no references, no acknowledgements, none of that stuff,

and we've got longer paragraphs as well. Now I can say something like, "This is good, and how I want it." So the training is basically going through and giving feedback on how you want the outputs of the various agents, and the final output, to look.

In the end, all of this is saved to the training data pickle file, and it's going to be used each time you run the crew going forward. So any guidance you gave during training is fed into the prompts that guide how the crew acts from then on.
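A sketch of how saved guidance could persist between runs: feedback is appended per agent to a pickle file, and later runs add it to that agent's task description. The store layout (agent role mapped to a list of notes), `save_feedback`, and `build_prompt` are hypothetical; CrewAI's own pickle format isn't shown in the video. Only unpickle files you created yourself, since loading a pickle can run arbitrary code.

```python
import pickle
from pathlib import Path
from typing import Dict, List

Store = Dict[str, List[str]]


def _load_store(path: str) -> Store:
    """Read the feedback store, or start empty if the file doesn't exist yet."""
    p = Path(path)
    return pickle.loads(p.read_bytes()) if p.exists() else {}


def save_feedback(path: str, agent_role: str, feedback: str) -> Store:
    """Append one piece of feedback for an agent and write the store back."""
    store = _load_store(path)
    store.setdefault(agent_role, []).append(feedback)
    Path(path).write_bytes(pickle.dumps(store))
    return store


def build_prompt(path: str, agent_role: str, task_description: str) -> str:
    """Append any saved guidance for this agent to its task description."""
    notes = _load_store(path).get(agent_role, [])
    if not notes:
        return task_description
    guidance = "\n".join(f"- {note}" for note in notes)
    return f"{task_description}\n\nFollow this guidance from earlier reviews:\n{guidance}"
```

Because the guidance is keyed by agent rather than by URL, feedback given on one page carries over to runs on other pages, which matches the consistency effect described above.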

Okay, after going through the whole thing, it finally stops the training, and now I have a trained version I can use. If you want to see what was actually happening in the code, the train function was just running through here. There are also some other interesting features, like replay, which lets you go back through and replay what we saw.

But the next thing I want to try is testing. To run the tests, we use the command line again with `crewai test`, making sure I'm in the project directory.

We can see it's doing three iterations with the GPT-4o mini model. If we look at this, it's running the crew as it normally would. In this case, we get our step-by-step plan:

initialize the Firecrawl tool, configure the scraping options, execute. This is for each of the steps.

So for the web content researcher agent, then the blog post planner, we can see the step by step of what it's deciding to do,

and then for our blog post writer, the goal and the step-by-step plan. This is all coming from the planning we set up before. In a test, it's basically going through like a normal run, and then it gives us some stats at the end.

Okay, the test has gone through three full runs, so it took quite a while of me sitting here. What it's done is score the output of the crew, and the output of each task, on each run.

As you can see, we've got run 1, run 2, and run 3. I thought it was going to default to two runs, but it seems to default to three.

You can set the number of iterations with `-n`. Here we can see that for task 1, the first run scored just 8.0, run 2 scored 9, and run 3 scored 9.5.
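To find which task is letting the crew down, you can average each task's scores across runs and pick the lowest. `summarize_scores` and `weakest_task` are hypothetical helpers; the only real numbers used here are task 1's scores from this test (8.0, 9.0, 9.5).

```python
from statistics import mean
from typing import Dict, List


def summarize_scores(scores: Dict[str, List[float]]) -> Dict[str, float]:
    """Average each task's per-run scores, rounded to two decimals."""
    return {task: round(mean(runs), 2) for task, runs in scores.items() if runs}


def weakest_task(scores: Dict[str, List[float]]) -> str:
    """Return the task with the lowest average score."""
    averages = summarize_scores(scores)
    return min(averages, key=averages.get)
```

For task 1, `summarize_scores({"Task 1": [8.0, 9.0, 9.5]})` gives an average of 8.83; with only three runs, one low first run moves that average noticeably.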

Now, I have no idea whether run 3 did a lot better overall because it took a lot longer; I'm not sure about that. But we can see what each task scored. Honestly, at the moment, I'm not sure how useful this is.

You really need to see what it's using to do the scoring. It could also be that, because I'm using GPT-4o mini here, we'd want to use GPT-4o for the evaluations. But okay, it's gone through and done this,

and we can see what it scored. My guess is that if we had run the test before the training, we'd possibly get scores that aren't as good. That would be interesting to see as well.

It would be nice if you could set the prompt used for this test evaluation. My guess is that they're using some kind of LLM-based evaluation prompt; there are a number of them out there.

I think I've talked about things like RAGAS in the past. You could imagine that if you customized this yourself, you'd be able to test whether your crew is getting the results you want. Overall, it's getting pretty high scores, which kind of makes sense: looking at the output, it's reasonably on track for what we've been trying to do, which is to pull out the key parts as paragraphs, with different comparisons, et cetera.
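If the evaluation prompt were customizable, an LLM-as-judge setup could look like the sketch below: a template carrying your own criteria, plus strict parsing of the score from the judge's reply. `JUDGE_TEMPLATE`, `build_judge_prompt`, and `parse_score` are hypothetical, and as far as this video shows, `crewai test` doesn't expose its evaluation prompt.

```python
import re

# Hypothetical judge prompt; {criteria} is where your own requirements go.
JUDGE_TEMPLATE = (
    "You are grading the output of the task below on a 1-10 scale.\n"
    "Task: {task}\n"
    "Expected output: {expected}\n"
    "Actual output:\n{actual}\n\n"
    "Criteria: {criteria}\n"
    "Reply with 'Score: <number>' on the last line."
)


def build_judge_prompt(task: str, expected: str, actual: str, criteria: str) -> str:
    return JUDGE_TEMPLATE.format(task=task, expected=expected, actual=actual, criteria=criteria)


def parse_score(reply: str) -> float:
    """Take the last 'Score: N' in the judge's reply and check it is within 1-10."""
    matches = re.findall(r"Score:\s*(\d+(?:\.\d+)?)", reply)
    if not matches:
        raise ValueError("no score found in judge reply")
    score = float(matches[-1])
    if not 1.0 <= score <= 10.0:
        raise ValueError(f"score out of range: {score}")
    return score
```

Taking the last match tolerates judges that think out loud before settling on a number, and the range check catches replies that ignore the scale.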

Always save your logs. If you've got your logs, you can go through them and see how well the crew is actually doing. As far as I know, the testing at the moment is still limited to OpenAI models.
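In the crew file, the `output_log_file` option takes care of writing the crew's logs. As a generic, standard-library illustration of the same habit, the sketch below writes one line per agent event to a file and reads it back to count events per agent; `make_run_logger`, `log_step`, `count_steps_by_agent`, and the line format are hypothetical.

```python
import logging
from pathlib import Path
from typing import Dict


def make_run_logger(log_path: str) -> logging.Logger:
    """Create a logger that appends one line per crew event to log_path."""
    logger = logging.getLogger(f"crew_run:{log_path}")
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep run events out of the root logger
    if not logger.handlers:  # avoid duplicate handlers on repeated calls
        handler = logging.FileHandler(log_path, encoding="utf-8")
        handler.setFormatter(logging.Formatter("%(asctime)s | %(message)s"))
        logger.addHandler(handler)
    return logger


def log_step(logger: logging.Logger, agent: str, task: str, status: str) -> None:
    logger.info("agent=%s | task=%s | status=%s", agent, task, status)


def count_steps_by_agent(log_path: str) -> Dict[str, int]:
    """Read the log back and count how many events each agent produced."""
    counts: Dict[str, int] = {}
    for line in Path(log_path).read_text(encoding="utf-8").splitlines():
        for part in line.split(" | "):
            if part.startswith("agent="):
                agent = part[len("agent="):]
                counts[agent] = counts.get(agent, 0) + 1
    return counts
```

A consistent `key=value` line format keeps the log readable by eye and easy to scan with a few lines of code when a run goes wrong.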

It would be nice to do something like this with Llama 3 70B on Groq. We'd probably get some really nice results, and it would probably be a lot quicker as well. But we can see that the scores certainly varied for some of these.

Task 1 did noticeably worse the first time (8.0) and much better the last time (9.5). Coincidentally, the last run also took a lot longer than the first. I'm not sure if the higher scores have anything to do with it taking longer.

They shouldn't, really; the time should just come down to the actual calls being made. But overall, we can see how well the crew is doing on the various tasks.

My guess is that it's probably doing better than it would have before the training run, so that would have been interesting to see. Overall, this is something you can run to see whether certain tasks are letting everything down.

That's something you'll need to look into. It certainly took a while to run, though. So, overall, just to conclude: there are a bunch of new things coming to CrewAI, and it's clearly under active development. It's great to see some of these new features; I especially like the new training idea.

I think it's a really good idea. Adding things like planning certainly improves the quality of the results we're seeing from the crews, and overall this is definitely making CrewAI a better platform.

I still feel it perhaps has some reliability issues, but it's great to see it improving each time. If you need something that's really reliable, you'll probably still want to go for something a bit more low-level, like LangGraph. But I also understand that a lot of people watching these videos are not coders themselves.

So they like being able to just spin up a CrewAI idea and use the YAML, et cetera, to put it together. With these new features, the framework as a whole is certainly improving for that kind of thing. Anyway, I'll probably do some more with CrewAI, trying out some different agent ideas to get a sense of where we could improve this.

They've also added some interesting things for coding agents, conditional tasks, and a number of other things I didn't get a chance to look at in this video, so we'll probably make another video about some of these soon. Anyway, as always, if you've got any questions or comments, please put them in the comments below.

If you found the video useful, please click like and subscribe, and I will talk to you in the next video. Bye for now.
