Hello everyone, how are you doing? Good, nice. I hope you have enjoyed re:Invent so far, and thanks for being here in this session. In the next 20 minutes we are going to talk about single tenancy and multi-tenancy in a serverless application.

This is the high-level agenda for this talk. First we are going to look into some theory, then we will discuss a use case, go through some results of that use case, and wrap up the session.

A quick introduction about me: I am Pubudu Jayawardana, from Amsterdam, Netherlands. I work as a senior software engineer at PostNL, the Dutch national postal service. I'm also an AWS Community Builder, and you can find me on LinkedIn and Twitter talking all about AWS, and specifically serverless.

All right, let's talk about some theory. What are single tenancy and multi-tenancy? Let's assume that we are building a SaaS application. How many of you have designed a SaaS application? Cool. When you design a SaaS application, on top of the constraints that apply to designing any application, you need to think about some very specific things, because SaaS means you have more than one consumer using your system. You need to think about resource isolation for each and every consumer, or tenant. As an administrator, you need to think about how much flexibility and control you need to have over these consumers. And as you add more and more consumers to your application, you need to think about scalability, as well as how cost-effective you can remain. This is where the choice between single tenancy and multi-tenancy comes into play.

In single tenancy, consumers have their own dedicated resources in your application. This can be compute, data, configuration, etc. There are advantages as well as disadvantages to this approach. It is always nice to start with
a single-tenancy approach because it enhances security, gives high resilience, and lets you customize on a per-consumer basis. Also, if you have very strict compliance requirements, single tenancy is a good approach. However, with single tenancy you end up with a lot of resources, so it incurs higher cost and scaling challenges, maintenance can be hard, and deployment time can be longer as well.

An alternative is a multi-tenancy approach, where multiple consumers share the same resources in your application. The pros and cons are pretty much the opposite of what you have seen in the single-tenancy approach. The biggest disadvantage of going multi-tenant is the noisy neighbor issue: you now have shared components used by all the clients, and some consumers will use a particular resource so heavily that others cannot use it. So you need to make sure the load is balanced and all consumers get fair access to that resource.

Okay, let's talk about our use case. First let me give you some introduction to what we do at PostNL and to my application. PostNL is the largest logistics provider in the Benelux region. On a given day we deliver about 6.9 million mail items and 1.1 million parcels. To cater to this demand we have about 70-plus applications developed by in-house development teams, and EBE, or Event Broker E-commerce, is one of these mission-critical serverless applications. As the name suggests, this is an event broker: there are producer applications that produce messages and consumer applications that subscribe to those messages. We implement this as a self-service, so it is very easy to connect to the event broker, and it basically helps decouple the other applications; otherwise they would have to maintain their own connections between them. So this works as integration as a service.

If we talk about the underlying services, we use EventBridge as the main component that routes messages to the different parts of our application. We have two types of producers, SQS and HTTPS. On the consumer side we also have SQS and HTTPS targets, including private HTTPS connections. By design we push the messages to consumers. This is important because it gives us more control over retries, redrives, and so on; we control how we deliver messages to the target endpoints. We process about 15 million events per day within this application.

When a producer or consumer registers in our system, we run a CodeDeploy job which deploys an AWS CDK project into our AWS account, which results in a CloudFormation stack. That means each and every producer and consumer has its own stack in our AWS account. As you see, this is very much a single-tenant approach, right? They have their own resources in our application.

This works fine to some extent, but as we added more and more producers and consumers to our application, we faced some bottlenecks. The first one is higher deployment time. These stacks are very heavy because they include all the resources they need to do their job independently, so it takes about 8 minutes per stack to deploy, and when we need to upgrade
the software from let's say from version 1.

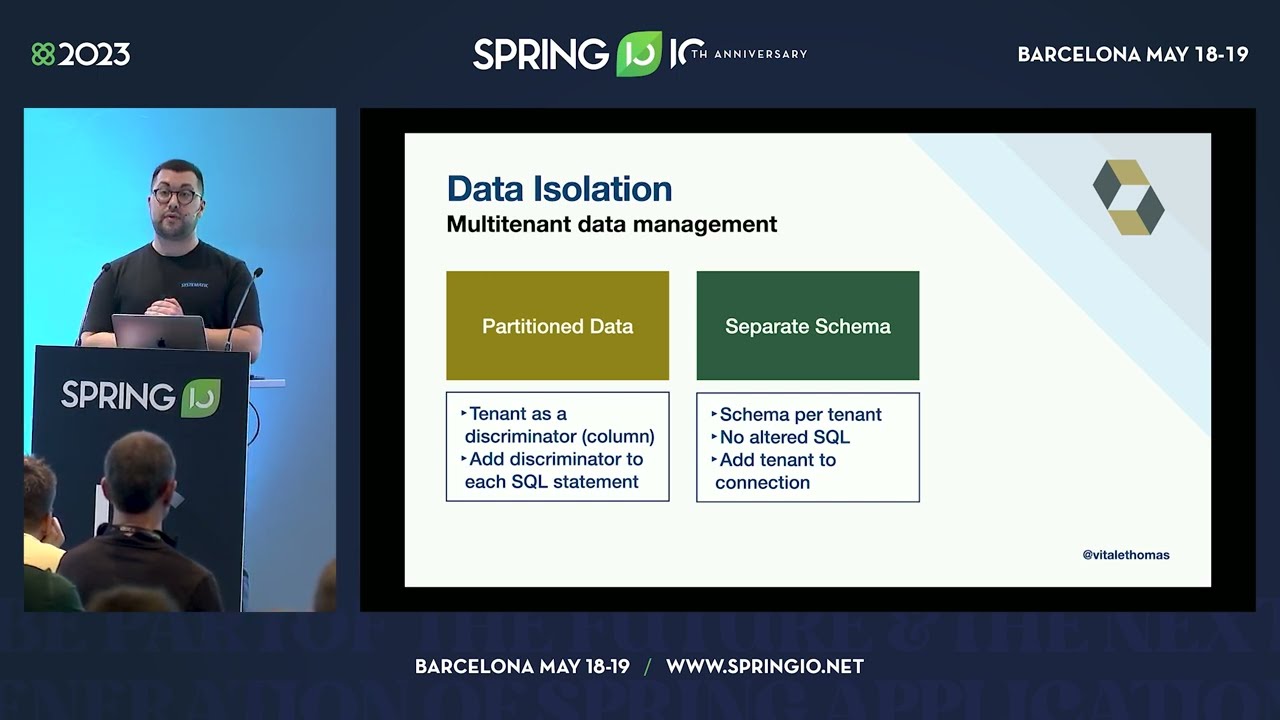

1 to 1. 2 then we need to upgrade all the stacks in our application which takes about 2 hours even with the paralyzation and with this process we uh experience some AWS resource limitations uh thring basically in cloud formation API Cod deploy and API Gateway deployment Etc of course we have some uh custom solutions to prevent this throttling but it's custom right and and there are some cost involved uh the fixed cost I would say because within those Stacks we have uh Cloud watch alarms km SK that's uh specific to that stack which means when we add more and more cons uh consumers or producers that cost goes up which is not nice for a serverless application I would say so we have a lot of identical resources to uh resolve these limitation to work on this limitation we decided to move some part of our application to a uh multi try approach however we are bound by two constraints because this is a mission critical Sol application so no downtime is expected also we are dealing with lot of consumers and producers so if we need their input on this migration it will take long time to finish the migration so uh we don't actually need their uh input in this migration as we plan also we plan the migration into two different uh oh not two four different stages I would say because we have two types of producers and two types of consumers we started with uh https producer migration so we have a API endpoint and when a new producer created there's a uh API endpoint created for that producer and in that stack there's a increase Lambda function specific to that producer that Lambda function is responsible to put that messages into event bus for routing into the uh consumer part what we Chang here is first we removed all these individual end points per producer and we uh replace it by a proxy end point so still everything is matches on the proxy end and then we replace the Lambda function that is specific to producer and uh make it the shared inase Lambda function and of course we 
need to validate the messages that come in, so we use the producer configuration that comes from a DynamoDB table.

The SQS producer migration is a bit similar to the previous one. We had producer-specific queues and producer-specific ingress Lambda functions. What we did here was basically create a shared ingress Lambda function that processes all the messages from all the producer queues, and of course we need the producer configuration picked up from the DynamoDB table. We cannot use one single SQS queue here, because once a message is in the SQS queue we cannot identify the source of that message. So we had to keep different queues for that reason.

The consumer part is a bit complex because it has a lot of resources. When a message comes into the consumer part, there is a buffer queue, and an egress Lambda function picks the message from the buffer queue and puts it into the target. That's the happy scenario. If that fails, all these other resources are required to redrive, retry, or maybe delete the message if we want. As you see, there are a lot of resources involved, and this is a very fat stack, I would say. What we did here was basically identify all the compute resources and move them out of the consumer stack, so only the infrastructure remained in the consumer stack. We then went ahead and moved some of the infrastructure out of this stack as well. We ended up with a very small stack with a couple of SQS queues and alarms, and everything else we made shared components. With this, we made sure that once this stack is created we don't need to update it. When we need to update the software, only the shared components are updated, which is quite fast; we don't need to update each and every stack in our application.

This is the migration of the SQS part. It is similar to the SQS case we discussed on the producer end: we have specific buffer queues processed
by specific egress Lambda functions, and we replaced the specific Lambda functions with a shared egress Lambda function. That Lambda function is responsible for delivering the message to all the required endpoints. We also have a consumer configuration, which provides the settings needed to send the data to any target, like the target endpoint, the authentication, etc.

When we moved the SQS consumers to the shared egress Lambda, we took a step-by-step approach. First we introduced the shared egress Lambda function. The existing event source mapping (ESM) was already in place, so we created a new event source mapping, but in an inactive state, so the messages were still processed by the specific egress Lambda function. Next we switched the ESMs: the old one was deactivated, and all the messages started being processed by the shared egress Lambda function. In the next step we basically removed the specific Lambda function, so all the consumer buffer queues are consumed by the shared egress Lambda function. This way we made sure that the business is not impacted and all the messages are handled; we haven't lost any messages in this process.

So let's talk about some results. The biggest advantage we got from this is faster deployments. Now our stacks are very thin, with just a couple of resources, so creating a producer or consumer is down to 1 minute from 8 minutes. We also don't need redeployments at all, because when we need to upgrade the software from version 1.1 to 1.