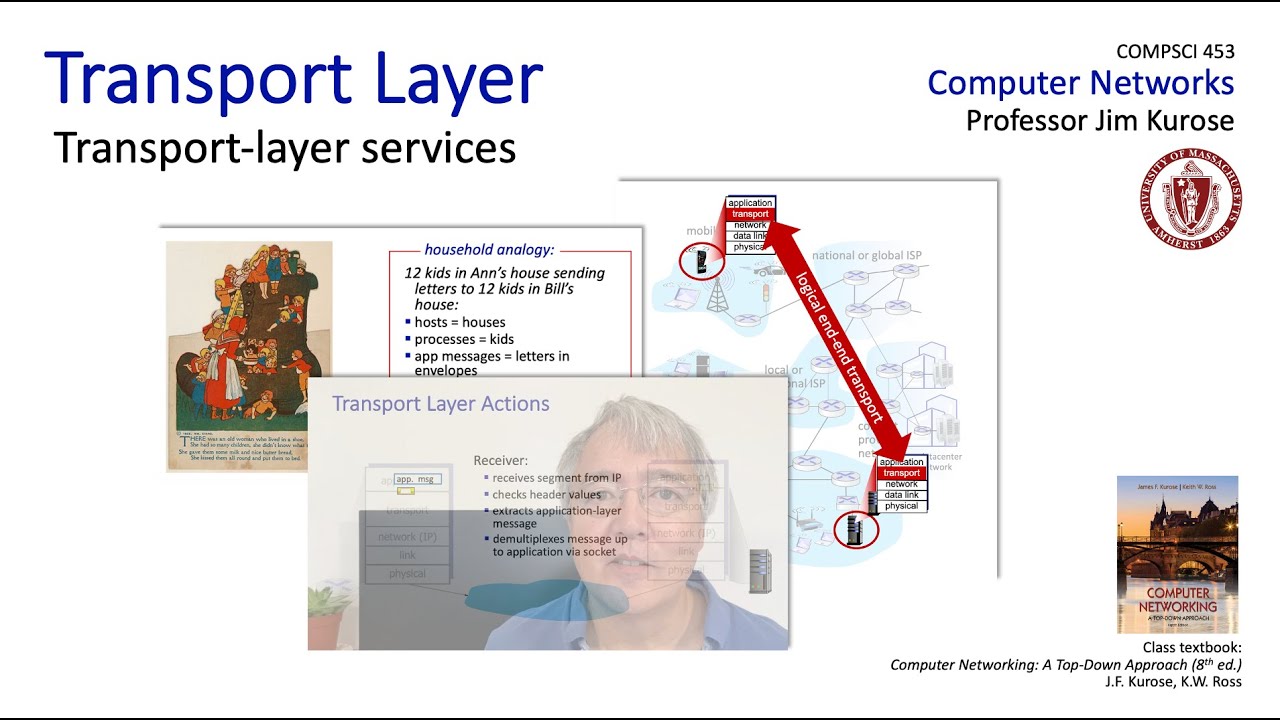

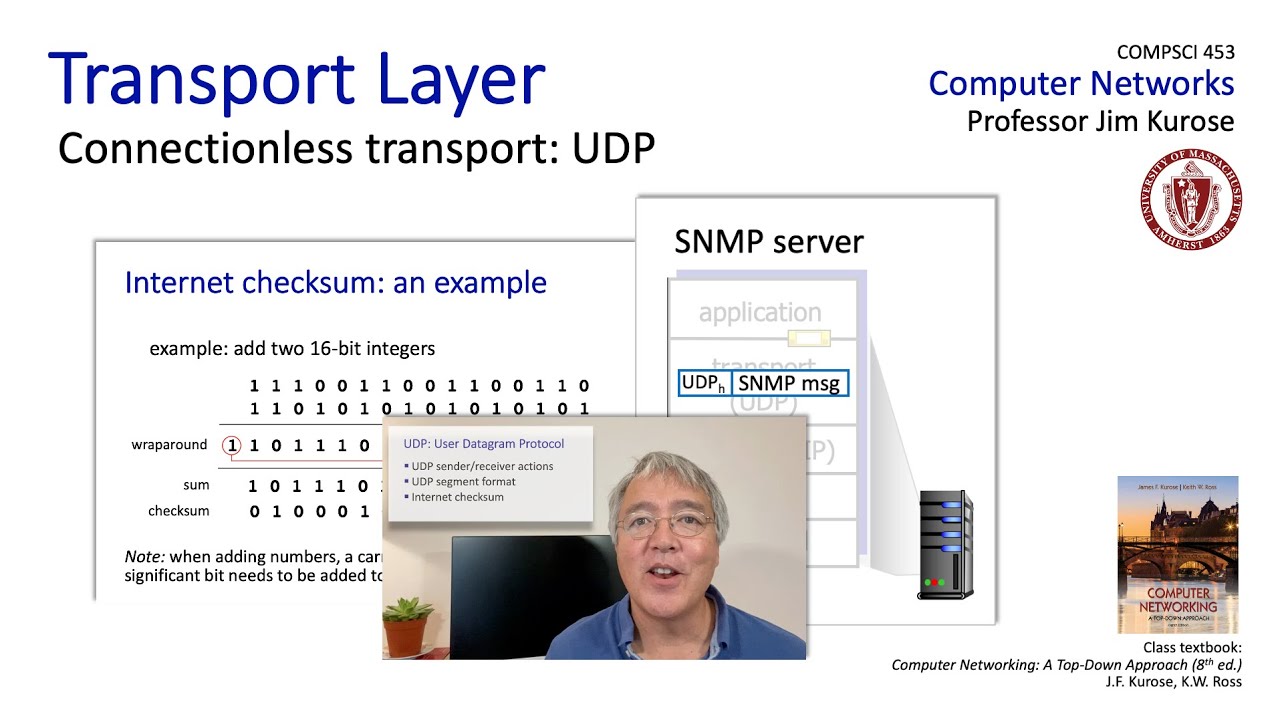

[Music] Well, we're all done with the technical topics of the transport layer, so let's now do a little bit of crystal-ball gazing and look into what the future evolution of transport-layer functionality might look like.

The TCP and UDP protocols have been in place, with a few changes for TCP, for more than 40 years now. And so they've proven by 40 years of use that they provide a pretty simple but, maybe more importantly, arguably sufficient set of services to accommodate an amazing range of applications, from the earliest Internet applications like

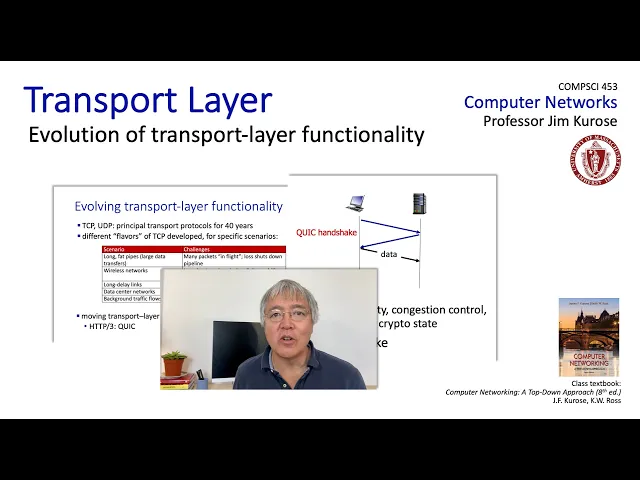

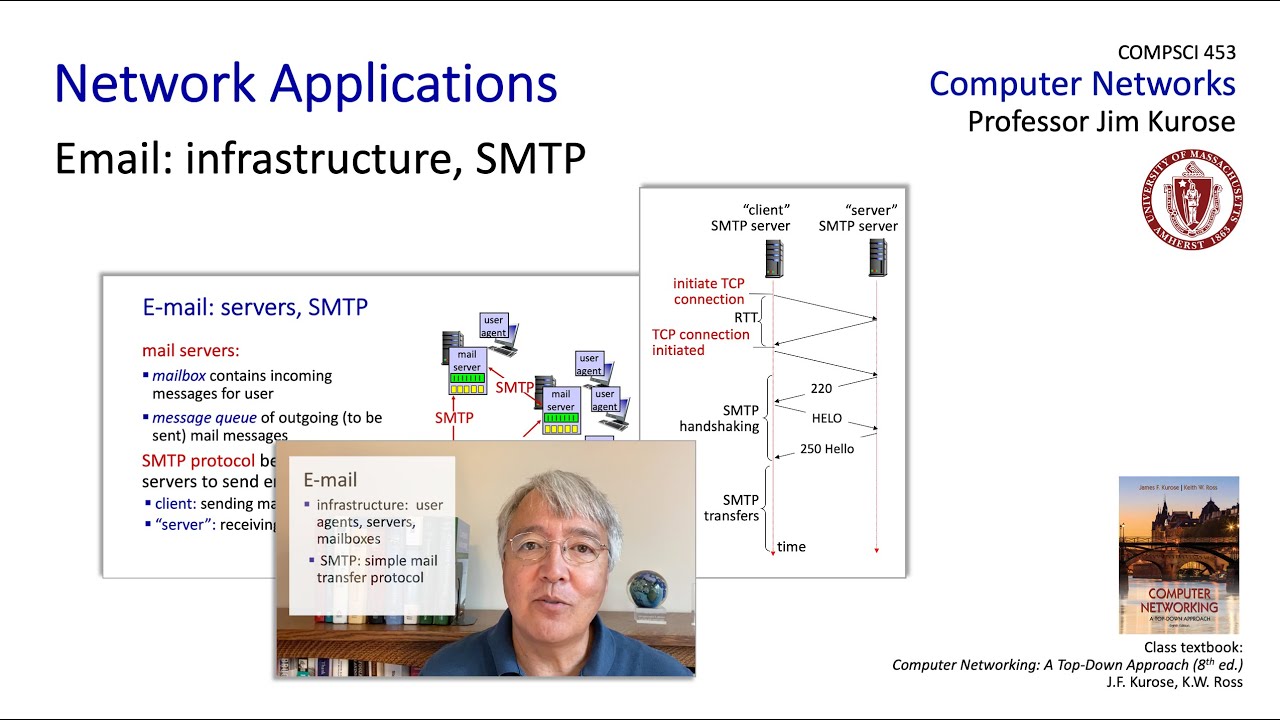

email, FTP, and Telnet to applications that didn't even exist when TCP and UDP were standardized: the web, streaming, voice over IP, gaming, and more.

Well, over the past 20 years there have been a number of flavors of TCP developed for specific scenarios, and some of them deployed in practice. But as you can see here in this table, these are sometimes fairly specialized environments, and it remains true that the TCP flavors that we studied earlier in this section really remain the dominant deployments.

Well, if you remember our discussions when we first introduced the transport layer, you'll

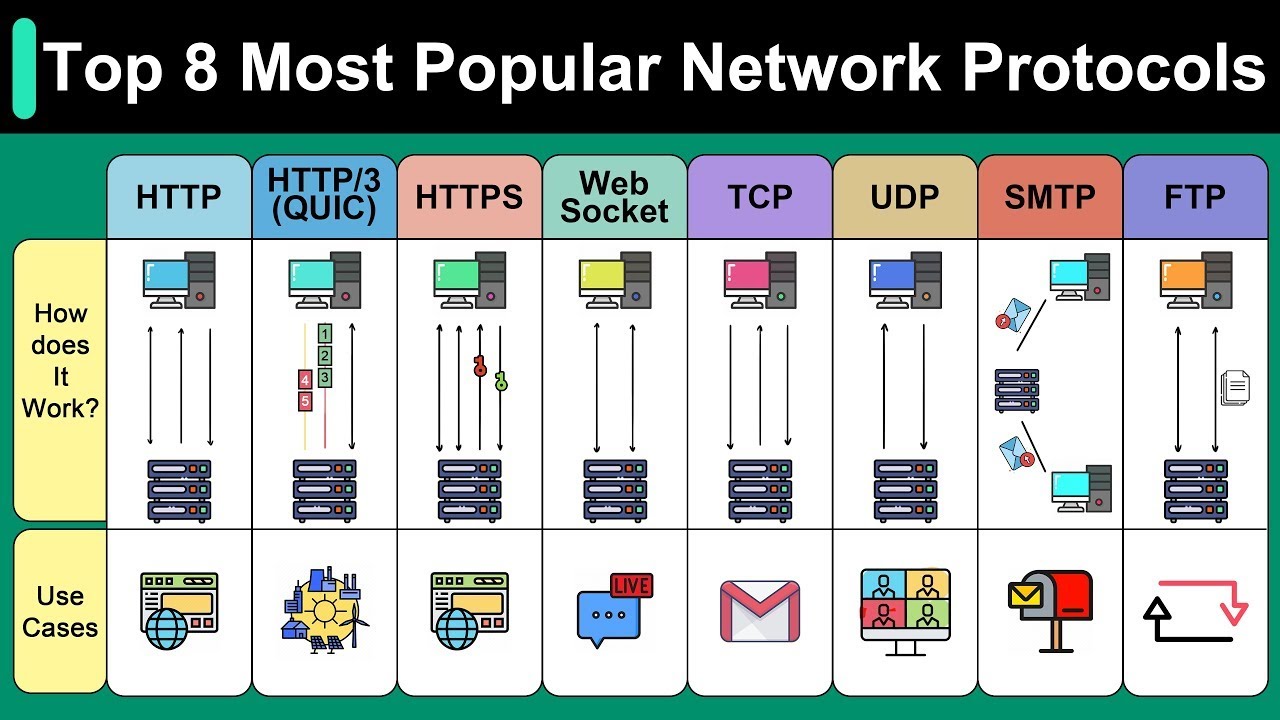

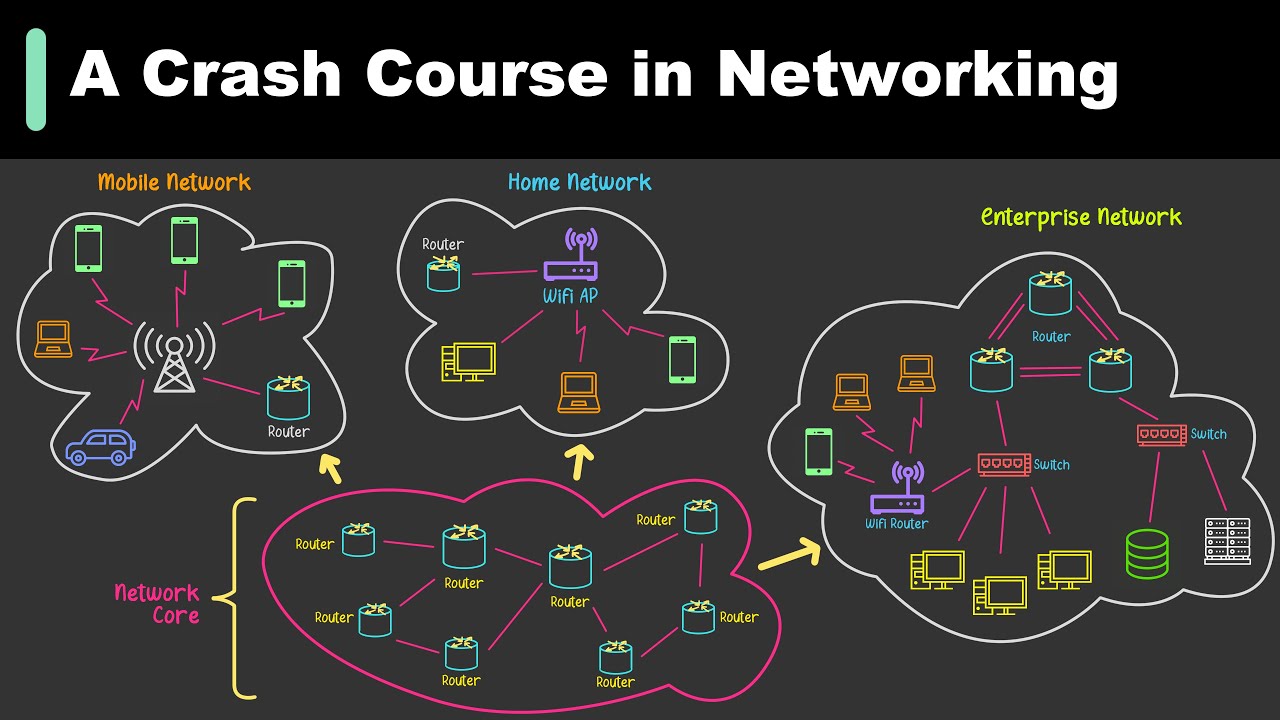

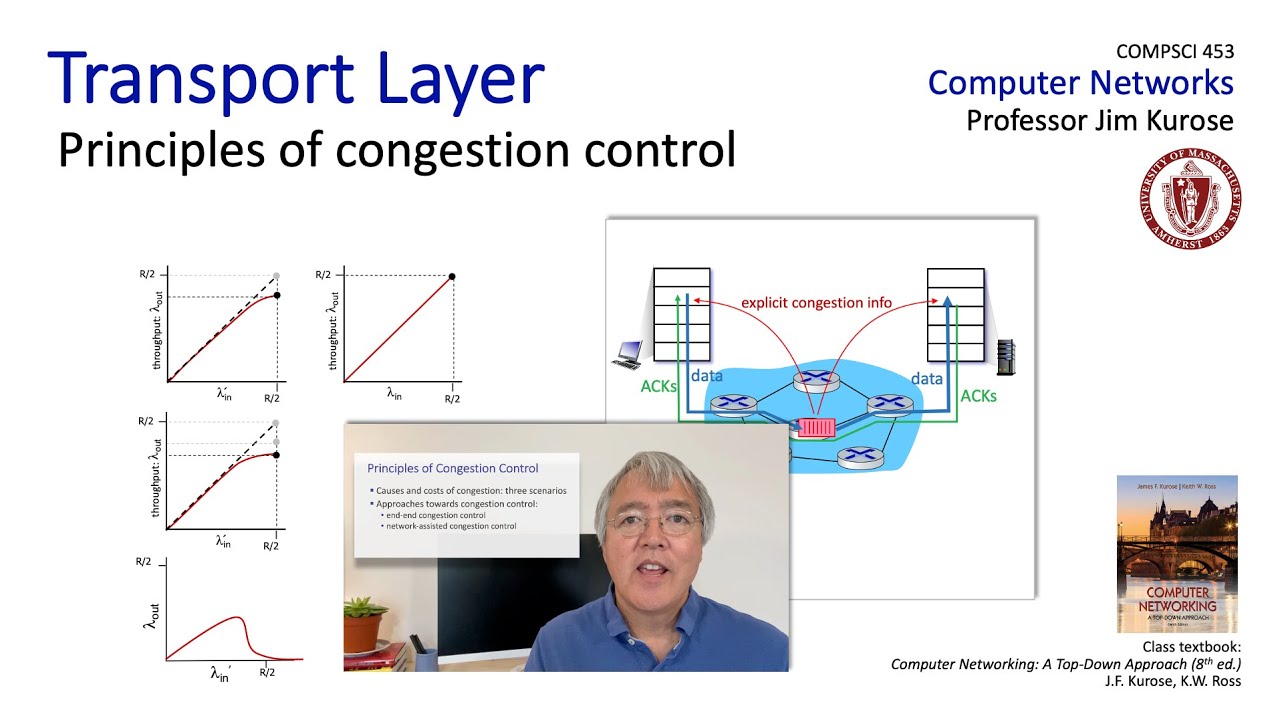

perhaps recall some of the services the transport layer doesn't provide: support for real-time services or security, in particular. These are incredibly important, but they've been primarily added at the application layer. That's been in the form of application-layer protocols and distributed application-layer infrastructure, such as content distribution networks and data centers, and with application-layer service providers connecting close to their customers' edge networks.

We learned in Chapter 2 that, pretty much since its inception, HTTP has been running over TCP. However, there's change afoot with a new version of HTTP, that's HTTP/3, building

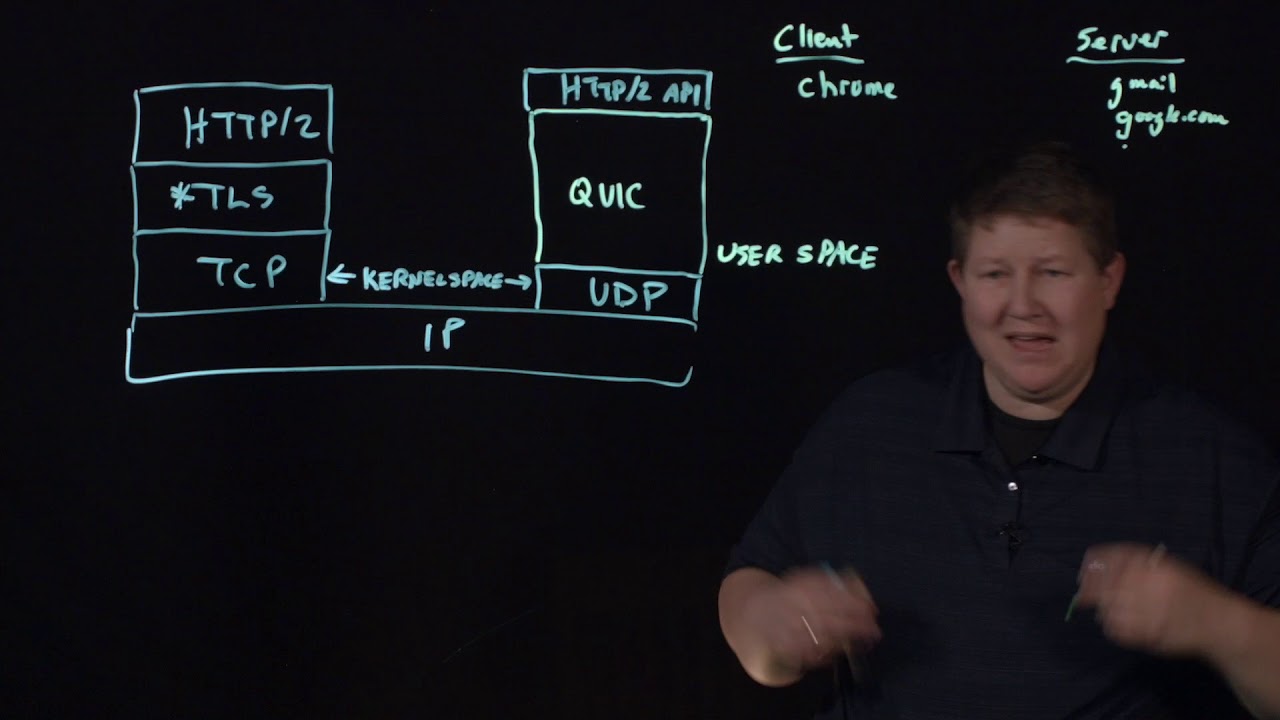

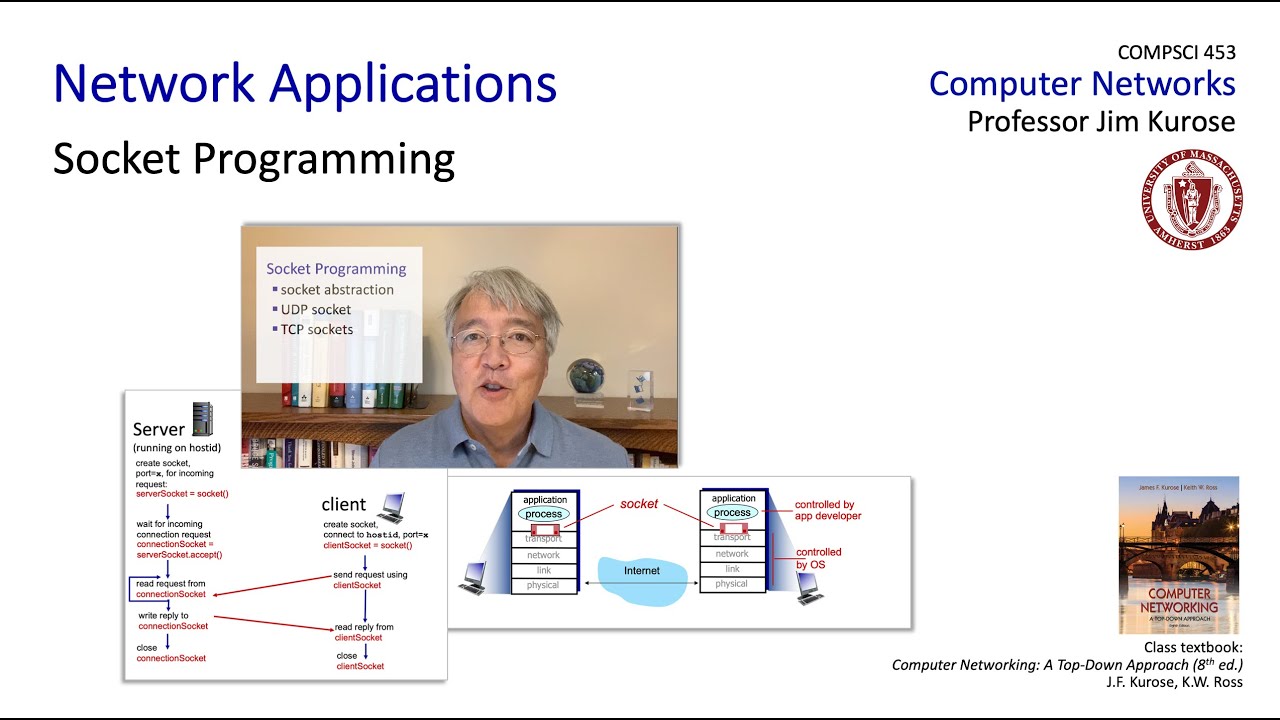

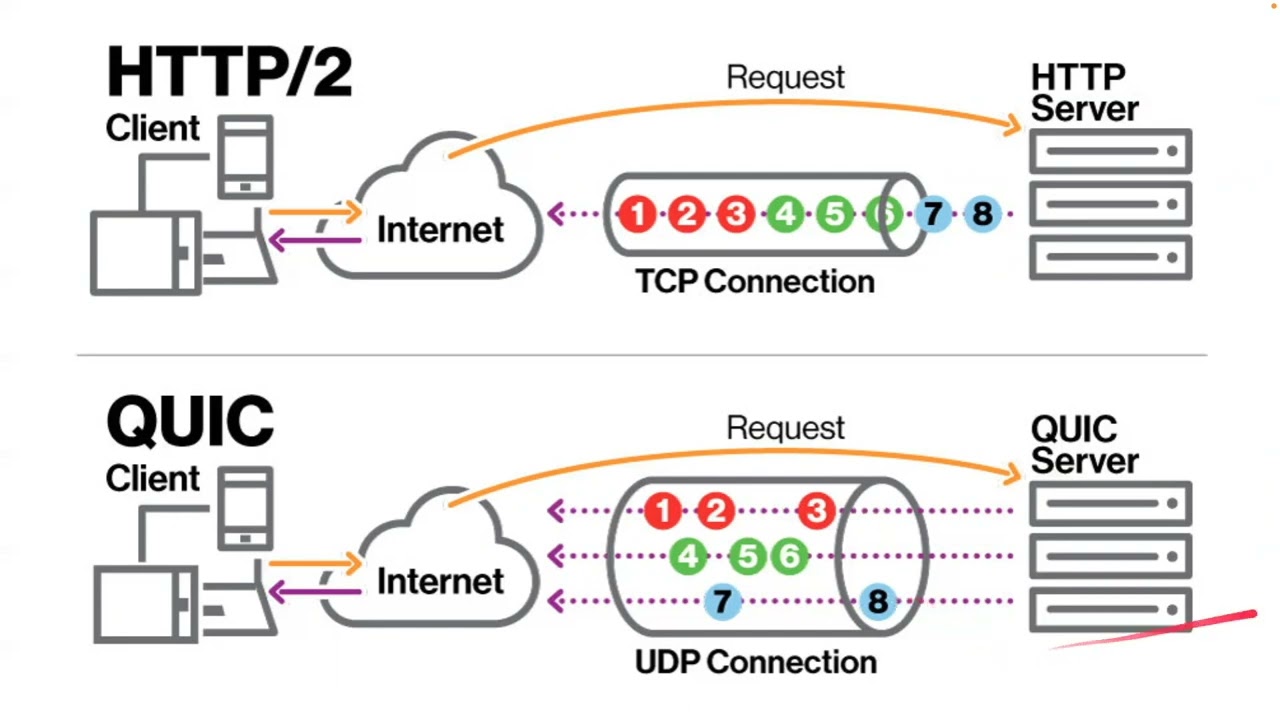

a lot of transport-layer capabilities at the application layer and then running over UDP. Let's wrap up here by taking a look at this.

QUIC, which stands for Quick UDP Internet Connections, is an application-layer protocol that's meant to sit underneath HTTP and run over UDP. As we see here, it's already seeing widespread deployment on many Google servers, in Chrome, and in the mobile YouTube app. It's also in the process of being standardized in the IETF. From a technical point of view, there's really not much for us to learn here, because QUIC adopts the approaches

that we've learned about already in TCP for reliability, congestion control, and connection management. And actually, if you look at the QUIC draft specification, it says, quote, "Readers familiar with TCP's loss detection and congestion control" (hey, that's us) "will find algorithms here that parallel well-known TCP ones." Well, since we've carefully studied TCP's reliable data transfer and congestion control protocols, as well as flow control, we'd be right at home with QUIC, so we don't really need to say anything more about these particular techniques. But we should say a word about QUIC's connection establishment and how

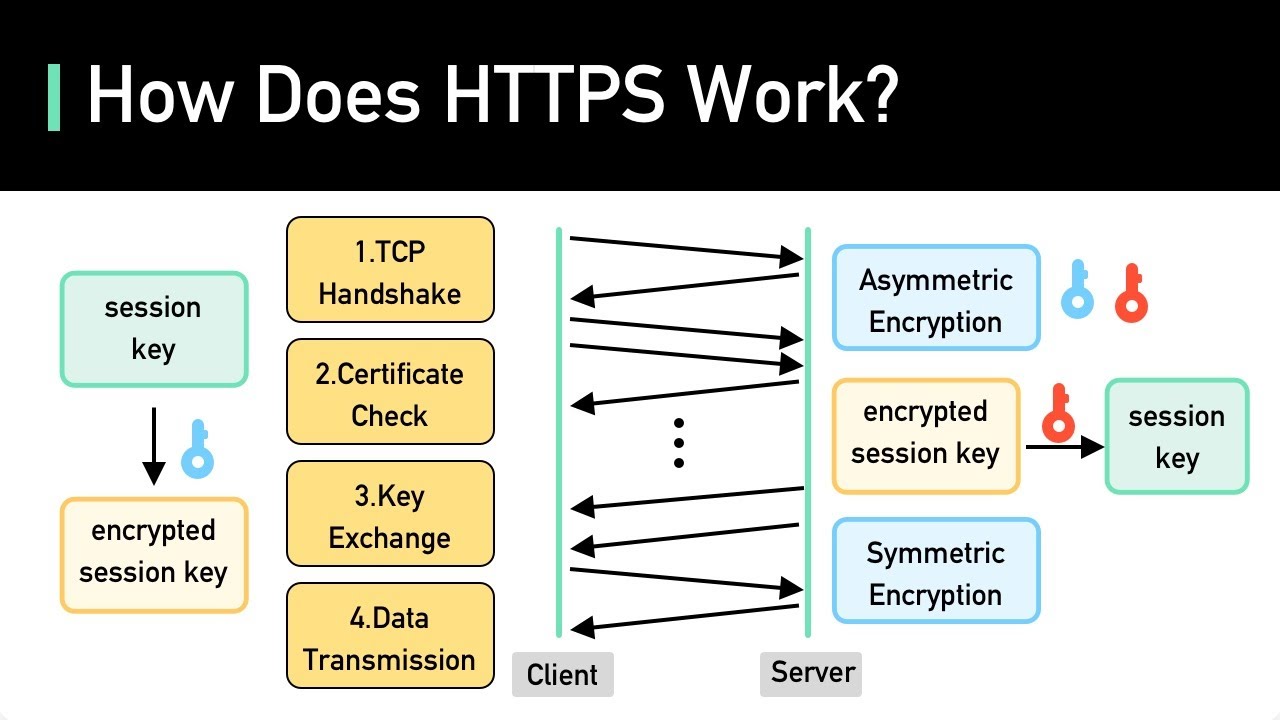

QUIC multiplexes multiple application streams over a single QUIC connection.

Like TCP, QUIC is a connection-oriented protocol between two endpoints, and so a connection setup, a handshaking protocol, is used to set up sender and receiver state for reliability, congestion control, and security. What's currently done in HTTP is that a client first sets up a TCP connection (we've seen that takes a full RTT) and then sets up a second connection on top of the TCP connection to do Transport Layer Security, and that takes another RTT. So overall, two RTTs are needed before data can begin

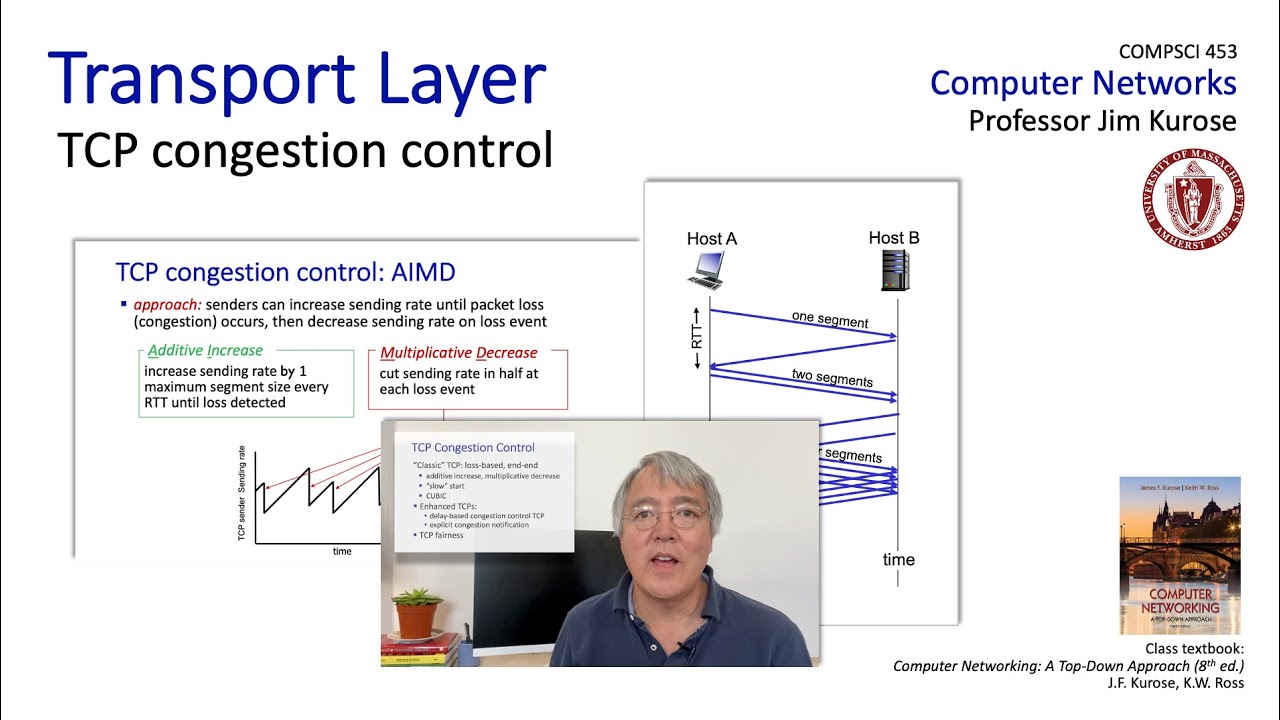

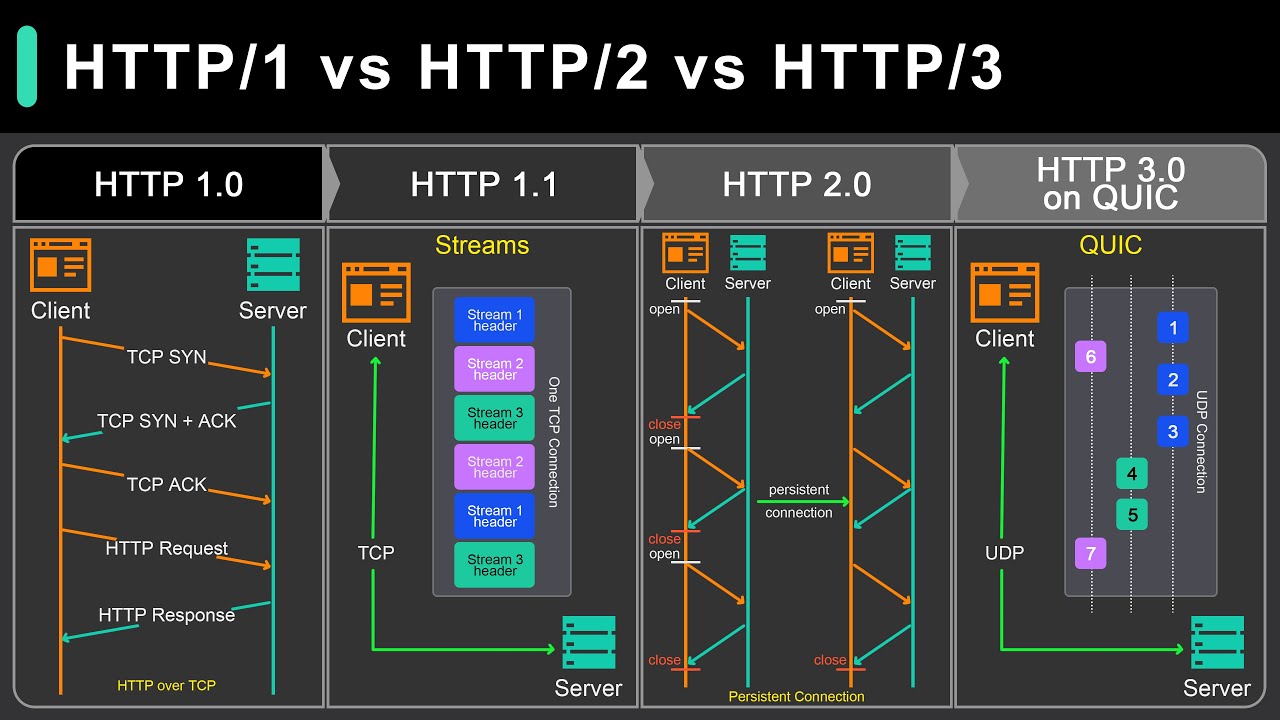

to flow at the application layer. Using UDP, QUIC performs a handshake in just one RTT and sets up reliability, congestion control, and security state all in one handshake, as shown here.

QUIC also introduces the notion of multiple application-level streams that are multiplexed over a single QUIC connection, and here's how we can think about streams. In the case of HTTP/3, you can think of N streams being set up, one for, say, each of the N objects that need to be retrieved from a web server. In traditional HTTP, shown on the left here, multiple

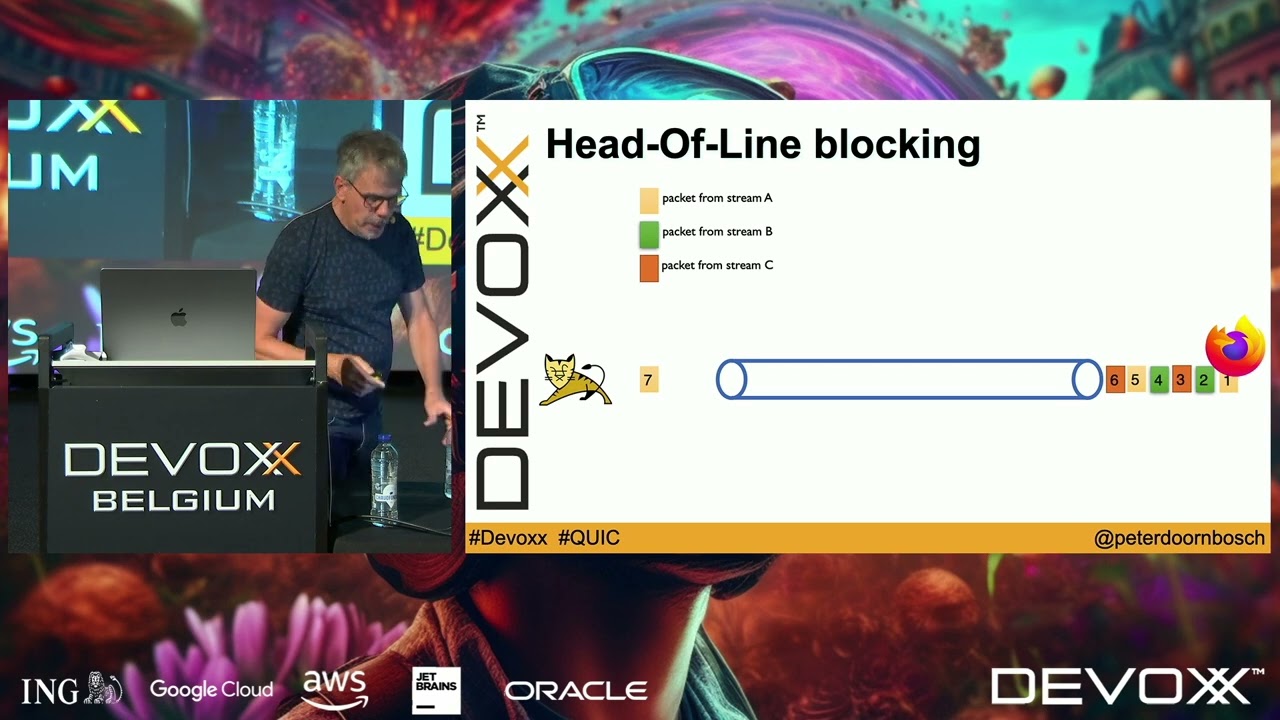

objects would be retrieved serially, one after another, from the web server. The advantage of having multiple streams is that multiple web objects can be retrieved concurrently, as shown on the right here. Each of these individual streams has its own reliability and security, but they're all congestion controlled by a common congestion control protocol, which looks like TCP.

As you can see here in this animation, an object is first retrieved, but there's an error on the retrieval of the second object. And so, in the case of the original HTTP protocol, the retrieval of the third object is going to

have to wait until the second object is received correctly. In the case of QUIC, the retrieval of the third object can happen concurrently while the second object's error recovery is happening, as shown here on the right. When a unit of work is blocked, for example the transfer of the second object here, and that blocking stalls all of the work backed up behind it, that's called head-of-the-line blocking.

Well, that wraps up our crystal-ball gazing into the future evolution of transport-layer functionality, and we're really all done now with the transport layer, so

let's wrap up by summarizing and then taking a look at where we're heading next. [Music]
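
To make the two QUIC ideas from this segment concrete, here is a minimal Python sketch. It is a toy model under stated assumptions, not an implementation of QUIC or TCP: every function name is hypothetical, each object is treated as a single unit of data that arrives one time unit after the previous one, a loss simply adds a fixed recovery delay to that one object, and the sharing of bandwidth under a common congestion controller is ignored. It compares the setup delay for two handshake round trips versus one, and it compares when each object can be handed to the application under in-order delivery versus independent per-stream delivery.

```python
from itertools import accumulate


def setup_delay_ms(rtt_ms, handshake_round_trips):
    """Delay before application data can flow, given the handshake round trips."""
    return rtt_ms * handshake_round_trips


def arrival_times(n_objects, lost_index, recovery_delay):
    """Time at which each object's data finally arrives at the receiver.

    Assumption: object i normally arrives at time i + 1; the object at
    lost_index suffers one loss that adds recovery_delay time units.
    """
    times = []
    for i in range(n_objects):
        t = i + 1
        if i == lost_index:
            t += recovery_delay
        times.append(t)
    return times


def in_order_delivery(arrivals):
    """Delivery times when objects must be handed up strictly in order.

    An object cannot be delivered before every earlier object, so its
    delivery time is the running maximum of the arrival times.
    """
    return list(accumulate(arrivals, max))


def per_stream_delivery(arrivals):
    """Delivery times when each object has its own independent stream."""
    return list(arrivals)


# Handshake cost with a 50 ms RTT (an arbitrary illustrative value).
print(setup_delay_ms(50, 2))  # separate TCP and TLS handshakes: 100
print(setup_delay_ms(50, 1))  # single combined handshake: 50

# Three objects; the second one (index 1) is lost and needs 4 extra time units.
arrivals = arrival_times(n_objects=3, lost_index=1, recovery_delay=4)
print(in_order_delivery(arrivals))    # [1, 6, 6]
print(per_stream_delivery(arrivals))  # [1, 6, 3]
```

In the in-order case the third object is ready at time 3 but is not delivered until time 6, because it is stuck behind the second object's recovery; that gap is the head-of-the-line blocking described above. With per-stream delivery only the object that actually suffered the loss is delayed.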