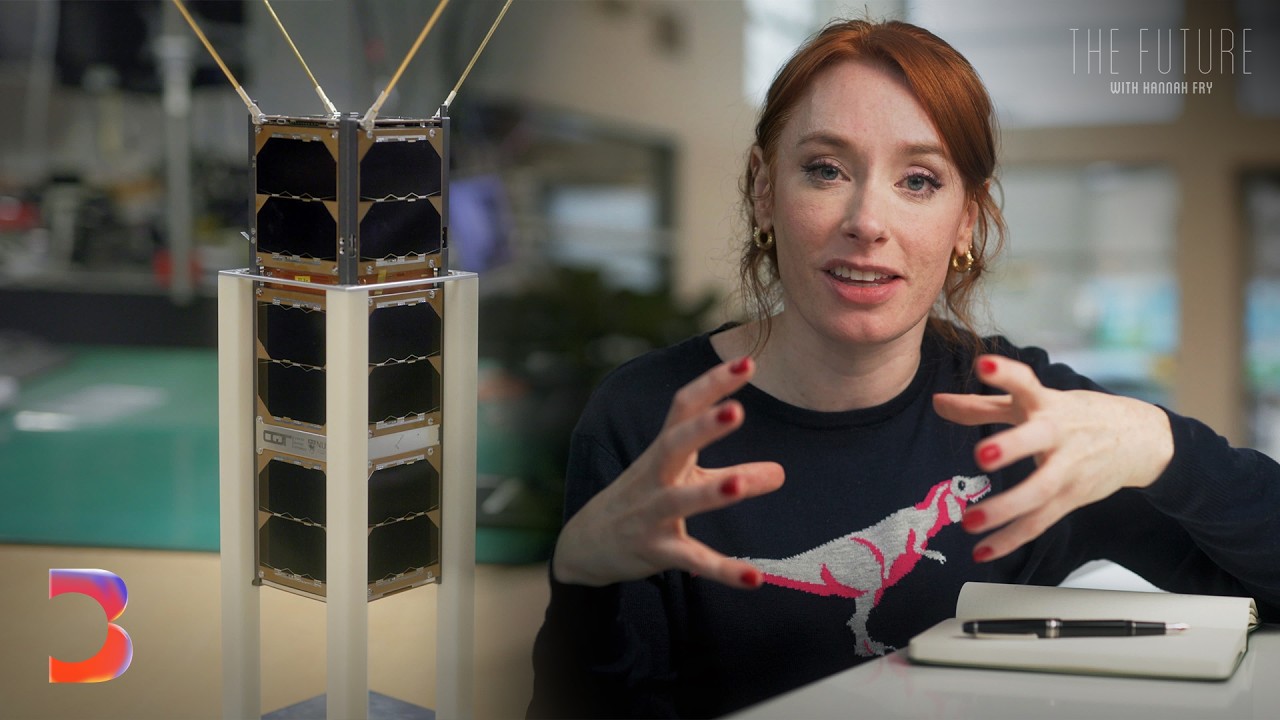

[Music] Welcome to Google DeepMind: The Podcast. I'm Professor Hannah Fry. Now, I want to start today, unusually perhaps, with a clip from another podcast I've listened to. This... what's the overall message here? Is it social commentary, artistic expression, or just a really elaborate joke? That's the beauty of this piece, I think; it defies easy categorization. It exists in this liminal space between language and non-language, between art and absurdity. This is a very interesting discussion, which, as you might have guessed, is AI-generated. But what is notable about this particular clip, aside from the fact

that neither of the two podcast hosts have ever existed, is that their conversation — a mini treatise on human nature and our relationship with art — was generated from the most unusual of prompts. The podcast itself was created by a new feature called Audio Overview, part of Notebook LM, a personalized AI research assistant from Google Labs. Now, Notebook LM is powered by Gemini, and it lets you upload your sources — anything from PDFs to videos — to generate insights, explanations, and, of course, podcasts. We often think of AI as just crunching through data and spitting

out answers, but Notebook LM draws on expertise from storytelling to present information in an engaging way. And we wanted to see what happens when you ask Notebook LM to analyze what most people would consider to be nonsense: a single document containing just two words repeated a thousand times over: "cabbage" and "puddle." And here is the result. So, I have to admit, at first I was like, "What is going on here?" But the more I think about it, the curiouser I get. You know, it is fascinating, isn't it? We're dealing with this one-piece puzzle, right? And

we're trying to figure out: what does this piece tell us? What do you think? What's your first impression? Honestly, it's almost like hypnotic or something, like if you were really staring into a puddle and all you saw were these words floating around. I can see it. It's a little unsettling, but also kind of funny. Several minutes of intellectual analysis packed to the brim with seemingly relevant ideas that are nowhere in the original document. That's quite impressive, really. Well, I am joined today by two people who are deeply involved in writing Notebook LM's story. Joining

us from San Francisco is Steven Johnson, Notebook LM's editorial director and also a New York Times best-selling author. And in Mountain View, California, Raiza Martin is a senior product manager for AI at Google Labs, who leads the team behind Notebook LM. Welcome to the podcast, both of you. Okay, now I want to start with a feature that everybody's been talking about, this audio overview. Well, I understand that you've got a little clip that you want to play for me? Yes, let's play the clip. I think you will enjoy this, Hannah. Okay, here we go.

Welcome back, everyone! Ready for another deep dive? Today we're shrinking down — way down, microscopic, you might say. Exactly. Think about those tiny little droplets of water, you know, like the ones you see on a freshly washed car. Oh yeah, but imagine those droplets clinging to an airplane wing or on a plant leaf, right? Being sprayed with pesticides. The way those droplets behave is actually incredibly important for all kinds of things: making planes safer, more efficient farming, even figuring out how rain forms. Wow, that's fascinating! We're diving into some serious research today. That was my

PhD! The first page of my PhD thesis! Extraordinary! I mean, frankly, there is no good stuff apart from heavy equations in... Okay, lots of things to notice about that. For starters, they made it sound much more exciting than it actually is. That's the point. But also, though — their sort of back-and-forth... I mean, the two voices there are finishing each other's sentences. It felt very fluid — to pardon the pun — very, very natural. Imagine defending your dissertation now; you could just play the podcast and kind of leave it at that! I think if you'd

only had that at your disposal back then... Raiza, have you been surprised by people's reaction to this? Because, I mean, it's had a really quite serious uptake, hasn't it? Yes, and I think the most surprising thing to me, and really equally delightful, is how people are using it. I think I imagined how they might, but I think the beautiful thing about launching something with this much sort of excitement around it is you see a whole new universe of what everybody has been trying, from things that are funny, to things that are entertaining, to things

that are inspiring or really meaningful. It's just been incredible. I actually probably spent a good chunk of my day — a third of my day — just listening to these. You set up a Discord server, didn't you? Just to let people share stories about the ways that they're using it? What kind of things have come up? So, I mean, that was an interesting example, playing your dissertation, because one of the things that I think genuinely surprised us is people would put their CVs and their résumés in there and it was almost like a little hype

machine. Like if you were feeling down about yourself, you would listen to a 10-minute audio conversation between two very enthusiastic hosts. You'd be like, "Wow, Steven has really done a lot in his career. It's very impressive." But actually, there's a more serious version of that. I mean, that's kind of fun and playful, but people are using it to, like, workshop things they're working on. So, you can upload a short story you're working on and say, "Hey, you know, give me some constructive criticism on this," and you get, you know, you listen to

people talking about your work, and they're very good at pulling out the kind of interesting twists or focusing on the characters that are particularly compelling or not. And so, it's a way of getting a little, kind of like, it's almost like a little focus group for stuff that you're working on, which is really amazing. I guess also hearing people actually talk about it out loud adds that kind of extra layer of, I don't know, objectivity. I would say it's been really surprising because, if we think about it, a lot of the content or

content generation, if you just render it in text, is not new, right? It's like if I upload my CV and then I have an LLM spit out something that says, "Oh, here's his career," right? A summary of sorts. Maybe there's a few interesting tidbits that it pulls out here and there. That was novel two years ago; everybody was excited by that. But I think adding that new layer, or that new modality of just very human-like voices, I think it connects with people in a very different way. Right? I think, like, personally, I call this type

of technology "humanik," where you sort of recognize it as being very similar to you, and it resonates with you in a different way as a result. And I think the first time I listened to my CV, I knew what to expect, but when I heard it, I still felt that bubble inside of me, like that. And I think that's the magic of new modalities. I think that, you know, the other point on this is that human beings have been learning and exchanging information through conversation for hundreds of thousands of years. We've been learning by reading

structured text on a page for, you know, 500 years and structured text on a screen for, you know, 30 years. And so when you activate that sense of a genuine, human-like conversation, it's just a deep, ancient, kind of ancestral part of who we are. I think that's one of the reasons why it just lights people up when they hear it for the first time. Also, interestingly, I think that you decided to have two hosts rather than just one person sort of talking into space, as it were, which I guess speaks to the point that

you're making. See, yeah, it's just a very different format. If you just have one person, it feels like text-to-speech, right? We've heard text-to-speech before. You're just like, "The computer is turning the text that it just wrote into something I can listen to," which is great. And, you know, we're interested in trying to figure out ways we can do that in other formats, but to get the conversation right—and we can dive into this in more detail—there are all these subtle things that you have to make work. Nobody wants to listen to two robots talk to each

other like that; that will fail and be unlistenable after 30 seconds. You have to master all these very subtle, weird things that people do in conversation for it to work, to make it human-like, exactly as you said. Right, I want to come back to those features of the audio overview a little bit later, because I also wanted to discuss the origins of Notebook LM. How did it come about, Raiza? For one, I think a lot of people think that Notebook LM is new because of the audio overview feature. We had such a massive influx of

people, and people were like, "Wow, what is this? A brand new thing from Google?" But, actually, we've been working on Notebook LM for over a year. We first announced it at Google I/O last year as Project Tailwind, and before then, we actually had been incubating it inside of Google Labs. And, um, it's actually how Steven and I met. Steven was brought in. What was your original title, Steven? I was a visiting scholar. Yeah, yes, then I became editorial director. That's right; he was promoted. And at the time, Josh Woodward, who now leads Google Labs, he's

the vice president, told me, "I want you to build a new AI business." And I thought to myself, "What does it take to actually do that?" But what I'll say is one of my early inspirations was just watching Steven work. Honestly, just understanding how he does what he does, I was like, "Wow, that could be a real superpower if you could give that to people." It was a mix of Steven being abnormal in his research habits, but maybe we could turn this into a mainstream pursuit somehow. Yeah, it was interesting because I had this long

history of writing books, and Josh had read some of those books and had read some things I was writing about, tools for thought—basically, how do you use software to help you think and help you develop your ideas and research? This was the middle of 2022, so language models were at the top of the list then. And so he kind of reached out to me and said, "Hey, any chance you would want to come to Google and help build the tool that you have always wanted to help people learn and organize their ideas now built on

top of language models?" And what Raiza and I kind of like, right from the beginning... I think I met Raiza, like, day two at Google. We were like, "Let's build something new." This came about at a time when large language models were at the top of the agenda. In those early conversations, how did you see this as being fundamentally different from just, I don't know, uploading a document on Gemini and getting it to summarize it for you? From the very beginning, we called it "source grounding." That's the way we describe it: you supply the source

information that you want to work with. It might be the story you're writing, it might be the book you're researching, it might be your journals, or it might be the marketing documents you're working on. Uploading that to the model then creates a kind of personalized AI that is an expert in the information that you care about. That was not something anyone was talking about in the middle of 2022. So, the first thing we built was, like, we uploaded part of one of my books, and I could have this very crude conversation with the model. That

was not at all like what you see now in text or with audio, but you could get a little taste of what it would be like to have all the ideas you were working with, instead of just talking to an open-ended model that just had its general knowledge. You actually have that personalized knowledge, and it was great because it also reduced hallucinations. It made it more factual; you could fact-check it, and you could go back and see the original source material. That's a big part of the whole Notebook LM experience. That was the beginning of

it, and everything we've done is built on that platform. Audio overviews are just about taking that insight of "I supply my sources," and now I turn it into something else. In this case, it's an audio conversation. I guess the real key difference here is that it's very focused on the sources that you're giving it and anything that's connected to that, rather than just, as you say, this general model. Yeah, I think that I'll say, too, that what we've seen is that it's a little bit harder to get started with this paradigm because it's so new,

right? The idea that, one, you're talking to an AI, and two, you have to bring your own stuff. So, I think there's a little bit of a layer where it's like, "Okay, you have to convince somebody that it's worth doing." But once you can get somebody over that hump, it's just massively useful. Because, you know, I think about the work that I do every day, the work Steven does every day, and many people around the world that work on computers every day: we are working with very specific sets of information and shared context that we

have with others. Right? Like, we do research, we pull it in, and we want to sort of extract our own insights from it. I think that's what makes Notebook LM really special and has made it matter from the beginning. So it does include these text elements, too, because, as you say, the podcast part is the bit that's sort of most notable. That's right. The podcast thing is the most recent development in Notebook LM, but we actually launched a year ago, where it was primarily a chat feature. So, you're chatting with the system using your sources,

and it's always referencing back to exactly what pieces of your content it used. So, give me some more mundane examples of how people are using this, like on a day-to-day level, then, Steven. Yeah, so, I mean, we actually see a huge amount of usage of the product just with the text features, right? Suddenly, you have this amazing resource that can answer any question about all, you know, hundreds of pages of documents. In the text version, you get citations and everything. It's a very scholarly thing, actually, and you would appreciate it: you get your answers back,

and every fact that the model says has a little inline footnote. You can click directly on that footnote and go and read the original passage. Writers and journalists obviously are using it. This comes a little bit out of my involvement with the project. I have one notebook that has thousands and thousands of quotes from books that I've read over the years, plus a lot of the text of books that I've written. That notebook has basically captured my brain in the AI. So, whenever I work on anything, if I have a new idea for something, I'll

go into that notebook and be like, "Hey, what do you think about this idea?" The notebook, you know, the AI, will say, "Hey Steven, you read something related to that like seven years ago; what about this passage?" So it's a true extension of my memory. The last thing I'll say is that we're not training the model on this information, so your information is secure; it's private. It's not going to get into the general knowledge of the model and be used by somebody else. So, you can put private information in there, and when you put a couple

of years of your journal in a large context model like this, you can get these amazing insights and you can turn them into audio overviews and kind of listen to two people talk about yourself, or you can just be like, "What was I thinking about, like, last May? You know, give me an overview of, like, all the stuff that was going on." And then, 20 seconds later, you'll have this amazing kind of document of your own life. Rather than just recalling stuff, though, can it actually be insightful in terms of your own journals? I

would say yes, because I've used it for that purpose. And, um, one of the things that I like to ask it after uploading— I do these weekly journals— is I say, you know, how much have I changed over time? And it's really remarkable. Um, it's been able to pull out for me really interesting nuances that I haven't been able to observe about myself. Um, it's been able to say things like, "Hey, you know, you tend to associate a lot of negativity with this particular topic; you associate a lot of positivity with this topic." And it's

just really interesting because I think the earlier question around the mundane use cases— I think we see a lot more of those, which is just people trying to take the work that they're doing every day. For example, like, sales teams use this a lot to share knowledge with each other; it makes a lot of sense. There's a lot of technical, complex, changing documentation, so it's really nice to have an AI partner. I think that's really different from how a lot of AI systems work today. Right? Like, you know, I use everything. I use everything that's

out there, and the prompts that I write are massive. Right? Like, the first thing that I write is, "You are a blah," this is what we are doing, "here are the documents that are relevant." And I think for Notebook LM, it sort of just shortcuts it. It's just a project space; it knows what you're talking about. You can have a conversation forever. It takes up to 25 million words; it's just sort of contextually quite massive. I think one of the things that was interesting, and maybe a little bit distinctive about it, was so many of

the questions about, like, what makes this product work or not work are not so much technological questions as they are editorial, stylistic questions. Like, what is the right kind of answer when you get an audio overview that works? You know, what's the style, what's the house style for those conversations? What level should they be pitched at? And those are not technological questions; those are language questions. And that's the crazy reality of the language model age, is that all these things that, you know, used to be just mostly a question of, kind of, like, "Let's get

the programming right," now become more about the rhetoric of it all. Well, I do actually want to dig into, um, some of the house style a little bit more, I guess. Why did you decide to go into audio overview? What was it that inspired that? I mean, there are already quite a lot of podcasts, let's face it. Audio overviews really began as a great example of the Labs structure, I think, really working well. Um, because it was another small team inside of Labs that was just kind of focused on the audio version of this. And, uh,

we had— and part of the idea of it was not so much to, like, compete with podcasts, but rather that there was a whole universe of content that you would never— the economics of generating a podcast for it would never make any sense. But, um, if you could generate one automatically, you might have, you know, five people that would want to listen to it or, like, one person who would want to listen to it, or 20 people but not, you know, 200,000. And so that's like, you know, we want to create a podcast based on

our, like, team meetings from the last week so we can review them. Like, that's not going to be a commercial business; like, no one's going to ask you to host that, Hannah. But it actually might be useful for that team. And so they had started developing this thing, and, um, Raiza and I heard it, I don't know, probably in like March or April of this year. And, like everyone who's heard an audio overview, initially we were just like, "Wow, what did I just hear? That was amazing!" But we realized, like, pretty early on that part of

our mission with Notebook LM was to build a tool that helps people understand things. Suddenly, we were like, "Oh wait, people really understand and remember and, you know, pay attention when they hear something in the form of an engaging conversation between two smart people." We released it internally to Googlers over the summer, and that was, I think, when we started to think, "This is going to be a hit," like, because you could just see the delight that people had with it. Um, so while we were surprised that it went quite as crazy as it did,

um, we knew we were onto something. Now, I remember in the last season we got to hear a demo of WaveNet, which of course is one of the first AI models to generate this human-like speech. And, I mean, it was quite impressive back then. But, I mean, presumably there have been technological advancements that have happened since that have been necessary to make something like Audio Overview possible. I think there's, you know, the underlying model for Notebook LM is Gemini 1.5 Pro, and that just creates really, to me, incredible content. The voice models, the audio model

that we use, that by itself is a breakthrough, and I think that's what you're talking about, which is the realism, right, of the human voices, the human-like voices that we hear. And pair that with the approach that we've taken (Steven can speak more to this too) of editorializing the content, thinking about how we create something really useful and really fun for you that's engaging. Yeah, that's a great segue, actually, to something I was going to say, which is about interestingness. Okay, so, um, Simon, who's one of the leads on the audio side, he sometimes has this

slogan for audio overviews, which is like, "Make anything interesting." So, like, whatever: make your dissertation interesting. I'm sure it was interesting. It wasn't. And so it's a great example of a convergence of two or three different technologies or kind of breakthroughs that make something magical happen. Gemini itself, and it can do this with text as well, is incredibly good at pulling out interesting facts or ideas or stories from the material you give it. So I do this all the time; I upload something new and say, "Tell me the most interesting things from this." Just in

text, computers could never do that before. Like, you couldn't command-F for interestingness; like, this was not a search query you could do. But how are you even defining it? I mean, what does it mean? I believe that it comes out of the basic idea behind language models, which is that they're predictive, right? They're like, "Given this string of text, I expect the next thing to happen," right? And so what interestingness is, is kind of controlled surprise. I thought this was going to be the case, but actually, there's some new information here that I wasn't expecting.

And so it makes sense, in a way, that the language models would be good at this because their basic circuitry is like prediction; and so they're looking through all this information and asking, given their training data, what in this information is novel or defies their expectations? So they're very good at that, and that's the underlying Gemini, right? And the hosts of the show are instructed to find the interesting material and present it to the user in an engaging way, right? So that's one capability. The second thing that is really cool about this is that the instructions take

the script that is generated and they add noise to the script, so they add what are called disfluencies—so all the stammers and the likes and the interjections that humans actually have when they speak. And it turns out you need that because if you don't have that noise, it sounds too robotic. And then finally, there's the audio voices themselves, and what they do is all these subtle things. Like, in English, you know, speakers will raise their voice a little bit if they're not sure about what they're saying or for emphasis. They will slow down what they're

saying—all these things that we do natively; we would never even think about it, but no computer could do that until now. And that's part of it; that just lights up, and that's the underlying vocal model, you know, the audio model that didn't exist a year ago. It's the voice modulation, right? It's like, you know, I remember years ago at the BBC being taught to make content sound engaging, and they give you a copy of Winnie the Pooh to read, right? And then they say, "Okay, read it as you would a newsreader," and you sort of

read it very, very flat. And then they say, "Read it as you would to a child," and you notice, exactly as you say, Stephen, that your voice goes up at certain points and it goes down low at other points. The range that you have and the speed completely changes. But you've built all of those aspects into this. I mean, how on Earth do you do that? Yeah, we should clarify—we did not build the vocal model. We have no idea how it was built, and geniuses inside of Google built that, and we inherited that technology and

have been running with it, showing how it could be useful, but we did not build it. One of the questions that people have is, you know, when is it going to support other languages, because it's English-only right now. And, you know, people are very eager for it to come into different languages, and we are very eager for that too because we have a wonderful international audience. But it's not something you can do easily because the intonations and all those little conversational tics are different in every language. And so you can't just be like, "Change the words into Spanish and,

you know, press play." I was just going to add that DeepMind actually has a recent blog post about the audio model and how it was built and who built it and all the research papers underneath it. I think, you know, if we could share that, we should. Yeah, absolutely. I think one thing that's really noticeable playing around with this is how it is very versatile across different types of data that you give it. And so, I mean, the way that you're describing this, Steven, is that you're sort of coding in all of the disfluencies, but

how do you stop this thing from just sounding like a bunch of clichés every single time, Raiza? I actually think it's hard to get it not to sound like a bunch of clichés every time. I think because, you know, trying to standardize interestingness is really actually quite difficult, and so interestingness tends to sound the same after hearing it enough times. Uh, and that's why we actually introduced the first improvement to this particular launch, which is we're letting users, uh, I call it, "pass a note" to the hosts, where you could slip them a little instruction,

saying, "Hey, you know what, maybe less of the cliché, go deeper on this topic," and it will change the way that they talk about whatever content you've given them. Should I sort of imagine this as almost as though you have different kinds of dials? Like maybe you turn up the quirky dial, and maybe you sort of turn up the kind of historical fact dial, or how can I think of this? Well, imagine one, one thing that I'm very interested in: what if you could give each of the hosts a different kind of field of expertise? Right

now, they basically are kind of interchangeable; they don't have defined perspectives on the world. They're just kind of one takes the lead in the conversation and we switch back and forth randomly. Um, but what if you were like, "Okay, I'm a city planner and I'm working on this, you know, design for this new town square and I want one of them to be an environmental activist, and I want one of them to be an economist, and now let's have a conversation, and let's have a debate." And now suddenly they have different perspectives because, you know,

one of the things, and this is something I've written about a lot in my books over the years, is that people are more creative and make better decisions when they have a diverse pool of expertise helping them make the choices or come up with the ideas they're working on. I mean, that, that's also on our roadmap for 2025. Would I actually be able to interact with these hosts in the future? Like, I don't know, interrupt them and join their conversation? Well, we actually showed a version of this at I/O, the big Google Developers Conference, where

we first rolled out this feature, announced it, and they do their kind of audio podcast format. And then Josh Woodward, the head of Labs, in the demo, interrupts (they're talking about physics, um) and says, "Hey, can you use a kind of a basketball metaphor here because my son is listening?" And they're like, "Oh great, okay, there's someone called into the show," basically, and they're like, "You know, let's do it in a basketball metaphor." So that has been, you know, publicly, like, part of what we wanted to do, and you

can imagine we're very eager to bring that to people. I mean, you paint a really compelling picture. I do also wonder, though, is there the danger here that you could have it, um, you know, picking up on a minor detail in the corpus of text and then sort of making it into a much bigger thing than it necessarily is? I mean, we're still at the situation where large language models can kind of hallucinate, and don't necessarily put the right emphasis on different parts of what they're reporting. Oh, in the early days, like three weeks ago, when we

were testing this customization, the "pass a note to the producers" feature that Raiza is talking about, I uploaded an article I'd written a couple of years ago, and I gave them the instructions to, like, um, give me, like, relentless criticism of this piece in the style of, like, an insult comic at a roast. Like, I was like, "Don't." Because, again, they're kind of instructed to be enthusiastic. So I upload this piece and they, it was, it was cool; they immediately were like, "What is Johnson's problem? Like, does he even, did he even do any research for this

piece?" Whatever. But they also, like, they kind of reached for a criticism of it that genuinely—I'm not just saying this because I wrote it and I'm defensive—was kind of wrong. Like, it kind of misread it a little bit, and I couldn't quite tell whether it was because I'd instructed them to be so extreme or whether they just—it's almost like I keep saying this to people—it's like they don't really hallucinate in the way that the first-generation models do; it's just that they sometimes get confused or they misinterpret something in a way that humans do, and they'll

just kind of—their take is a little bit off. What about humor, though? I mean, we're talking about all of these different types of examples, right? Have they ever made you laugh? Uh, yes. Yes, actually I will say that they have made me laugh through the, uh, the cleverness and the humor and sort of the exploration of other people. Because I myself, like, I don't think I could have come up with the funny cases on my own, but, uh, just seeing what people have tried, uh, you know, in the outside world with the technology—that's been really

funny. And somebody uploaded a document to Notebook LM, and the document just said the words "poop" and "fart" in it. And when I saw that that's what it was (the person posted it on Twitter and was like, "That's all this is, listen to the podcast!"), I was like, "Oh dear, what is this about to be?" But it was hilarious; it was so good. And, you know, the thing that makes it so funny is that there were moments that were truly hilarious, and then it would dip into, like, "But what does it really mean?"

It would be thoughtful; it would be bizarre; it would be thought-provoking. And I'm like, am I really listening to this very seriously? It was great! Yeah, I guess in some ways, though, that's sort of hilarious in the way that the AI is kind of oblivious to how absurd the challenge it's been set is. I think on that one, they mentioned, like, "Is somebody trying to trick us into just saying a bunch of poop and fart?" And I was like, I think so! I do also think that, you know, the more traditional forms of humor, so

not just laughing at how oblivious the AI is—but a lot of that seems to me like it's about the buildup and release of tension. So, I mean, it's a kind of similar thing, right? About like you're making a prediction of where you're expecting a sentence to go and then it goes in a different direction. Is this something that you think it will be able to do in the future? Because I don't think it's particularly good at it now. I actually had this sense in the early days, the first couple of weeks really that it was

out. I actually wrote about this briefly, which was that they actually weren't very good at humor. They had banter and they were playful, but they didn't really, like, crack good jokes or have genuinely funny things. Then it turned out, as Risa said, that users were able to kind of push them into being genuinely funny. They had to be put in a funny situation, as it were. Like, we've been given this poop-fart document. Another one was a completely coherent-looking scientific paper with charts and graphs and published with footnotes and everything, except that every word in the

paper was 'chicken', just chicken, chicken, chicken, chicken, chicken, chicken. And every footnote was chicken, chicken, chicken; all the charts were chicken. And so they gave them that, and that was the first part where I actually really laughed. They were just like, what is even happening? And they made some funny jokes. So it's like they have to be prodded into it by an unusual situation, in a weird way. You did mention something there, actually, that I want to pick up on. There are people who have made a criticism of this technology, saying that it's a threat to

the podcasting world, that you could be flooding the podcasting world with lots of kind of generic, low-quality AI-generated podcasts. I mean, is there a response that you have to that? What is most interesting and nuanced about it is that people are creating content about things that probably don't have a podcast about them to begin with. It really is, I don't want to say mundane, but it really is things that nobody is going to make, like, a whole show about. And I think that is interesting. I think we're putting power in people's hands

to create content that they want, that they ordinarily wouldn't have access to. The second piece of this, around sort of the low-quality content: you know, I would say that for most of the content that I've heard, ones on the internet, just people posting on the Discord, the quality is quite high. I think on the third note, all of the generations from Notebook LM are also watermarked with SynthID. So we've taken a very responsible and cautious approach to making sure that, you know, as we create the machinery or as we launch machinery where you can create audio outputs

that are very human-like, we want to make sure that we approach that with watermarking. One of the other things that's interesting here that I think you're kind of getting at a little bit in this line of questioning is, you know, we are personifying these people. They do sound human, and we do all these things to make them sound human, right? And the interesting thing about this is actually that the philosophy that we'd had with the product up until Audio Overviews was that, in the text version of Notebook LM, it actually does not try to

sound particularly human; it's very kind of factual, and it doesn't try to be your friend on some level. Yes, it's almost cold, you know, and that was kind of a bit of the idea; the house style was that. But you can't do that with voice. That's the thing that became very clear, like the second we kind of first heard these. It's like you can't convey this through a conversation and not sound human, not pretend to be a person. Like, there's just no place where the human ear will tolerate that. I do wonder about that, though, because in

that way, you are, as you say, leaning in a different direction to, I mean, lots of the other conversations that I've had with Google DeepMind about how you should try and avoid anthropomorphization. You should avoid trying to think of them as "they". I mean, we've been describing the podcast hosts as "they" the entire conversation. I mean, are there dangers or concerns that are associated with the anthropomorphization of these characters? Right, I think that by personifying them to a certain extent in the way that we have, like adding texture to the way that they describe things, making them sound

more human-like, I think it's a way to make information easier to consume and easier to... something more useful. And I think that the reality is that we probably shouldn't resist these types of approaches if we believe that there is enough value associated with them. I really do; I really think that. I've seen, I don't know if you've seen, on TikTok, all of these people uploading their study materials, and they're like, "Wow, I can study so much faster!" I think about cases like that, where I'm like, are these people being harmed? What is the actual

danger? And I'm not saying this to sort of be like, "Well, clearly, it's good for society," but I really am thinking about what they might be losing as part of this experience. I think that it's less about the personification or the anthropomorphization of the hosts themselves and more about, okay, what did you lose by listening instead of reading? Maybe that's it. Yeah, and it's a great point. The other thing that I would kind of add on that is it turns out that a very powerful way to learn and to understand is through dialogue, through

asking follow-up questions, and steering the focus toward the things that you need to know in a complex body of work. But that kind of dialogue — if you wanted to have a conversation about a book and really engage with it — you know, most people don't have access to the author of the book. Most people don't have access to an expert tutor that understands the complexities of the book. But now, with AI, those kinds of conversational explorations are possible. It's kind of a much more ancient way to explore things, exactly as you describe. I do

wonder, though. I mean, you're talking about how people don't have access to the author, but what's to stop somebody from uploading a book where you really don't want them to have a conversation with the author? I'm thinking here, like, putting in "Mein Kampf" or "The Anarchist Cookbook." Yeah, I mean, there's a kind of underlying safety layer that Google, you know, spent a lot of time working on. DeepMind spent a lot of time working on it. So, there are obviously offensive, dangerous things that you can catch. The trickier thing is, like, what happens in terms of

politics? So, if you upload something that's within the bounds of conventional political discussion, but it may be more right-wing or more left-wing, how should the host respond to that? We specifically included instructions that say, listen, if it feels political, then you should adopt the attitude of, "Hey, we're not taking sides in this. We are just going to have a conversation about what this document says, and we're not going to endorse it or critique it in that way." We figured that was the best compromise for those kinds of complicated political stances. I think there's also the

interesting sort of line, right? There's the safety concern, and then I think there's the censorship concern. Actually, in the early days, we ran into this a lot before the safety filters were much more sophisticated, where, you know, people study difficult topics. People study things that happened in history that have quite a bit of violence and racism, right? These are topics that are fraught. But I think it would be wrong to create a tool that blocks sort of content generically without a thought around the intent of the user. We're not allowing users to create harmful content,

but at the same time, most of our users, especially in the beginning, were learners and educators. Like, if you're studying history, you are definitely going to run into a safety filter. Well, that was my problem. The last book that I wrote (you mentioned "The Anarchist Cookbook"), part of it is about the history of anarchism and the kind of roots of terrorism in the early anarchist world. I was using Notebook LM to help me research that book as I was writing it, and it was constantly like, "I'm sorry, I can't answer

that question because you are obviously a terrorist, Stephen." I'm like, "No, no!" You're definitely on a list somewhere, Stephen. That's right; I still have a job. There is also this question about personal data. I know that this is something that has really been subject to a lot of discussion with large language models, with people uploading documents and being concerned about it kind of feeding into the next generation of models. So, how do you make sure in Notebook LM, as you said, that the information that you upload can be private and remain so? Yeah, so this

actually is an opportunity to explain something that's really important here, which is the idea of the context window of the model. The context window is effectively like the short-term memory of a language model. The long-term memory is like its training data — like its general knowledge of the world — and the context is the stuff you put in with your query when you ask a question. Anything in the context window is transitory. The second you close your session, it disappears and gets wiped from the memory of the model. What that also means is, that's why

it's private, right? We're not training the model on your information. All we're doing is putting it in the short-term memory of the model, letting the model answer questions, and then when you close the session, it's like the model has completely forgotten anything that you've given to it. So, in terms of the future of this, I mean, this is still quite a young product. What other things are you hoping to include in it? I think we've seen so much excitement about the audio feature, so I think we can definitely commit to that being on the

future roadmap. I think it alludes to more controls, more voices, more personas, and more languages. I think that's just such an exciting horizon for us. The one that I'm so excited to think about, you know, which we've just started to scratch the surface of, is there's a lot of tools for asking questions and listening to explanations of things, but what about writing with these sources at your disposal? Like, how do we write in a source-grounded environment? And so, just as a writer myself, I think that that's going to be an amazing thing. We have some

really, really cool things in the works. I do also wonder about different modalities. I mean, you've gone to audio, but presumably you could go to video at some point too. Yeah, and actually, there's a fun idea we have for video, which is like, okay, we're not talking about fully generative video yet, but imagine if you could do even something really basic, like you upload these slide decks. They have charts, they have diagrams, you have PDFs of papers—just take the content that's already there. Notebook LM is already incredible at this because of our citations model—the fact

that we know exactly where every piece, you know, of the answer comes from. We use it to generate audio overviews, we use it to generate textual answers. I think it wouldn't be that big of a leap to generate short videos using your own content. I do really like, Stephen, how you're describing this often as the thing that you use to make the podcast that nobody else would want to make, right? But the point here, I guess, is that you're not trying to replace all podcasts. There are presumably things that you expect Notebook LM will never be

able to do. Yeah, people, I think, will generally always prefer to hear two actual humans talking about a topic. If there is enough economics or passion to generate a podcast on a topic, humans actually talking to each other will be the choice. It just turns out that there's this vast uncharted territory. No one ever thought about making a podcast based on, you know, the family trip to Alaska, because it just didn't make sense to, like, rent a

studio to do that. But now you can just take everybody's journal entries and photos, upload them to Notebook LM, and you can have a podcast based on your family trip. And so, I think that's where it turns out there's just all this untapped kind of blank space on the map that we've just started to explore. Do you think that there are elements of, I don't know, like human content creation that are really hard to capture with AI or that AI will maybe never be able to capture? Yeah, that's the thing we're trying to figure out.

I mean, you know, one idea I think that I'm really interested in is: how capable are these models at thinking and developing ideas that are really long-form? So, you know, book writing, right? When you're coming up with the idea for a book, it's an incredibly long-term process, and you're thinking about, like, a presentation of information that's going to go on for 300 pages. It's going to evolve with all this complexity, all this narrative complexity, and you couldn't approach that at all with a language model right now. You could work on little bits

of it, right? You could say, okay, I'm trying to set up the scene or I'm trying to figure out what the narrative should be, but you can't actually imagine the whole thing. That, right now, is just a human-exclusive capability, and I think it will be for a long time, and it may always be. But who knows where we're going to end up? Both the wood and the trees simultaneously. Yeah, yeah, it's... And I think, in terms of the seeds of that, there are some promising signals, but still, people who write books for a living, I think,

can feel confident that they will continue to be able to do that. Yeah, although writing books for a living is one of the most torturous professions there is. As someone who's trying to write one at the moment, I want you guys to hurry up, please! Well, thank you both for joining me. That's a really, really fascinating discussion. Appreciate it! Thanks for having us. Thank you! Thanks for having us. You know, I think there's actually something quite heartwarming about the way that Notebook LM has captured people's imagination because, on the one hand, you've got this technology

that is operating at the absolute cutting edge of what is possible with some of the most sophisticated AI models out there, and it's something that's designed to deal with this very modern problem about how we are often overwhelmed with having to process these large amounts of often quite dense and maybe quite boring information. And they've hit upon a solution that is so innately human, so ancient and appealing: the idea of listening in to a conversation between two excitable and interested people. And, of course, the fastest way to make a human prick up their ears and pay

attention is through gossip. This is like sitting around a fire while an AI uses that very trick to help you digest 25 pages of a snore-fest lecture series. I mean, put it this way: if it can make my PhD thesis sound interesting, then this has the potential to be quite a powerful tool. You have been listening to Google DeepMind: The Podcast, with me, Professor Hannah Fry. If you enjoyed that episode, then do subscribe to our YouTube channel, and you can also find us on your favorite podcast platform. And, of course, we have got plenty more

episodes on a whole range of topics to come, so do check those out too. See you next time! [Music]