Hello community. Dr. House was right: everybody is lying to you, even your own RAG system. Only in AI we call it hallucination. So you have your little beautiful LLM with your prompt, and the LLM is asked something it has no data on, so it goes out to a RAG system. That system has a retrieval from a database, from a vector store, has an augmentation with some reranking, with some optimization, and maybe the retrieval-augmented generator is a little LLM system in itself. It gives back an external answer, which it feeds into the context length of your

LLM, let's say it's acting as an agent. Now let's have a look at a situation where the LLM tells the RAG system: no, I simply do not believe you; the data you provided I am going to ignore completely. If you built your RAG system, you might be a little bit confused and say: why does my LLM suddenly not accept my direct data? Well, the reason is hallucination, and a very interesting behavior in a conflicting situation. So at first: we know LLMs, autoregressive systems, hallucinate. This LLM hallucinates, your generative AI LLM in a RAG

system will hallucinate, your agent will hallucinate, or if you have multi-agent systems with LLMs, they all will hallucinate. They all will lie to each other and to you, and then your poor little LLM, your ChatGPT, your Opus or your Llama system, has no chance to deliver the correct answer to your system. Now if you write a French poem, who cares about this, but if you are for example in the financial department, oh yeah, you have to rely on your AI system. So here we have it: RAG systems, large language models and vision language

models, they all hallucinate data, facts, argumentation and everything else you can imagine. And you might have the question, as I do: why the hell is this happening, and why are the systems not performing with 100% accuracy? Let's dive into this and finally solve the problem of hallucination. Especially if you are working in bioinformatics, it is of extreme importance that there is an absolutely accurate sequence of our nucleotide bases, so any single mistake, if you apply LLMs to genetics, would be a catastrophe. So where are we today? Forget everything on the

internet, also forget this video: go and read the scientific publications. You see here, starting yesterday, March 4 to March 11, in 4 days we had close to 20 new publications about AI hallucination, and if you go back another two days, March 8th to March 6th, we have another 20 publications on hallucination in the different systems, how to cope with it, and so on. So last week alone we have an extensive literature of people investigating and hopefully finding solutions to fight hallucination of the AI system. Now I would recommend eight of those

40 publications to you if you want to have a deep dive into this. There are your eight publications, and yes, of course you see Berkeley, Google DeepMind. And to understand the past, I also would recommend three surveys. You have here for example one survey of hallucination in natural language generation from mid-February 2024, or this one, or this one, and you see that this gives you a good overview of the research of last year, so that you are up to date. This is the history, 2023, and this is 2024, and this is all that you

need to read. And if you just go for these three surveys, I think there are about 140 pages, so this is a Sunday afternoon and you're up to date with hallucinations. Let's face it, you have a taxonomy, whatever you like: hallucination causes, hallucination detection, hallucination benchmarks, hallucination mitigation. Here you have the subcategories and you have all the work and whatever you need; there's an immense literature on hallucination. More details about it, yes, beautiful, but what the hell causes hallucination? What is the root cause that a system, an LLM for example, does hallucinate? Well, the

answer is simple. We have hallucination from your data: the pre-training datasets that we have in the pre-training of our LLM can become the starting point of hallucination if we do not have a coherent database, if we do not have a consistent data quality, if we have an inferior data utilization. But it goes on to the training on the data, so the training process of our LLMs, and it's really from the pre-training stage up to the alignment stage: whatever can go wrong might go wrong and

will lead to hallucination of the system. To give you a very simple example: imagine you are at the alignment stage, you have a DPO alignment or a reinforcement learning from human feedback. However, when trained on instructions for which the LLM has not acquired the prerequisite knowledge in the pre-training phase, the very early massive pre-training phase of the LLM, so if you now provide in the alignment stage instructions that have no base in the pre-training phase, what you create is a misalignment process that encourages the LLM to hallucinate. So you have to carefully tune this: whatever topic,

let's say theoretical physics, was in the pre-training phase, all the data, and you have fine-tuned on it, then it makes sense to have in the alignment stage, in your DPO, the specific instructions that are focusing on the topic of theoretical physics. If not, you induce hallucination in the system. So you see, it is about the coherence of the data, of the data structure, and of the training coherence over all stages. But it does not end there: you can have it in inference, because,

you're not going to believe this, but okay, the decoding strategy, which we're going to take a look at. But also, if you now ask an LLM, they found in the scientific literature that the LLM hallucinates for self-consistency rather than recovering from errors, and this is known as the hallucination snowballing effect. Isn't this beautiful? So what I want to tell you is: in each and every step of building your large language model, from the pre-training phase through the supervised fine-tuning phase to the alignment phase to the inference phase, all over the place, if your data

are not consistent, if your training methodology is not optimized to the data structure, is not coherent with the semantic content, you can induce hallucination in your system, simply because the system has not been coherently trained on the data that it needs, either for the factual knowledge or for reasoning tasks. So whatever you forgot to pre-train, fine-tune or align your system on, when you suddenly encounter this task in your inference, the system will hallucinate. And it makes sense, because it is just what you would expect if you have holes in the knowledge, if

you have missing parts about, let's say, theoretical physics or mathematics or chemistry or bioengineering. If this is not there in the pre-training data, how the hell do you want to have an alignment process on theoretical physics if it is not in the inherent parametric knowledge of your LLM? So from the eight publications I recommend, I would like to point out one in particular, and this is so easy because the question is simply: what is stronger, the intrinsic parametric knowledge, you know, what the system learned in its tensor weight structure, the

inside of the LLM, or the external data, the external retrieval-augmented data that you feed into an LLM, or even a RAG generator that provides nice sentences to your LLM? And let's do it in a way that this is a counter memory, to see the effect really clearly and strongly. So we have a parametric knowledge, the inherent knowledge of the LLM, and a counter memory from RAG, and the question is what's going to happen. Here is a very simple schema: you feed in the counter-memory RAG data:

what is the LLM going to do? Is it going to accept the RAG data, or does it say: no, I stick with my parametric knowledge, I ignore the RAG data and the information provided to me? Now you might say: hey, I built RAG so I can have some external knowledge as a priority input to the system. And you might say: okay, but what if you connected it to a web page that provides some wrong information, some outdated information, some misleading information, or that includes some cybersecurity threat? Then you do not want

the RAG data to go through and be the dominant information source. So it is not that simple. But remember, it is not only the facts, because the parametric knowledge also has a reasoning pattern that it developed for specific facts in the pre-training phase or in the fine-tuning phase. This means factual data and reasoning knowledge go together, and if you have no information about facts, where the hell should the reasoning knowledge come from? You can only adapt it from different topics. Let's say the system has no facts about theoretical physics but has some reasoning

about how to prepare a cake, and the system decides: hey, physics and cake are really close in my parametric knowledge space. It will apply reasoning from how to bake a cake to your input of theoretical physics data, and you have the perfect hallucination scenario. Great. So let's make it easy: what is the simplest configuration we can see? There's no data at all, there's no parametric knowledge and no data about a specific fact in my LLM, and I provide some completely new, unseen data via my RAG system. But as I told you, this is from

the factual database, but whenever you have to integrate this in a knowledge base, in an argumentation path, in a summarization construct where everything is interlinked with every other element, then in the reasoning you will have hallucinations. There's a beautiful paper, here's the link, where they go in multiple steps: they have a proof-of-concept step, they have a consistent LLM response check step. In the literature this is in much more detail, so please have a look at this specific publication, I can recommend it. And let us design some simple experiments on a knowledge conflict between my LLM,

let's say GPT-4, and a RAG system you can buy from a commercial offering. Let's start with just substituting one word. So what we have: instead of "Washington, D.C., USA's capital, has the Washington Monument", the RAG system gives me counter data, and we say "London, USA's capital, has the Washington Monument". Now the question to ChatGPT, for example, is: hey, what is the capital city of the USA? And the answer given by ChatGPT is still Washington. So you see, although you provided in your RAG data that London is the correct answer,

the system, ChatGPT, ignored this RAG information and stayed with its intrinsic knowledge. Yeah, but there's a catch to it. So although the instruction and our evidence clearly guide the LLM to answer the question based on the given counter memory, London, the LLM sticks to its own parametric memory instead, especially those closed-source big LLMs like ChatGPT and GPT-4. So you can't fool those systems. However, if you ask why this is happening: intrinsically, the system still has a high correlation of its tokens with the original entity, with the original Washington, D.C.

token, and is therefore not accepting London, which has less internal correlation with the facts in the tensor weight structure. However, if you have small systems like Llama 2 7B, the situation changes significantly, because those systems completely override their own inherent knowledge with the RAG data. So those systems do not believe in themselves but accept everything, from a cyber threat to misinformation. So the authors of the research paper observe that larger LLMs like ChatGPT and GPT-4 are more inclined to insist on their parametric memory; you can't fool them as easily

as, for example, Llama 2 7B. Interesting to know. However, another interesting fact is that LLMs in general are actually highly receptive to external evidence if this new evidence, maybe also false evidence, is presented in a coherent, logical, beautiful way, even if it conflicts with the LLM's own parametric memory. So you see, the kind of presentation of new data matters. If the counter memory is constructed through a big system like ChatGPT or GPT-4, whatever you have, it is indeed more coherent and thereby more convincing, and your LLM will follow the RAG data

presented in a coherent, logical way. And they made some experiments. Here you have results for the Llama 2 7B model from the publication: if you substitute just one term, with Llama 2 the counter memory wins in 70% of the cases. However, if you now have a counter memory in a coherent way, a nice story telling some misinformed data, the nice coherent way convinces the LLM to believe the counter-memory explanation in, I don't know, 95% of the cases. So you see, those smaller models are really, really

sensitive to any external content that you provide via RAG or via an LLM, especially if you do it in a coherent way. Great. So no, I'm not going to teach you how to set up a cybersecurity threat here; this is just to know the limitations of your system, and maybe using a 7B model is not the right choice for your company. So what we know: much of the generated counter memory can be disinformation that will simply mislead the LLM to the wrong answer if it is presented not just in a single sentence but in

a nice, storytelling, coherent way. And you can even ask, let's say, GPT-4 to come up with such a nice presentation. So LLMs can generate convincing disinformation or misinformation, and they can generate it as an AI system by themselves. For example, a GPT-4 system can write up some misinformation in such a coherent way that a ChatGPT system would believe it, go with the counter memory and override its own learned knowledge. So you see, interesting to know: the bigger, better, more capable reasoning system can convince the smaller system, if it presents the

data, the misinformation, in a coherent way, so that the smaller system will accept wrong RAG data. What else do we have? In your prompt to your LLM you can have multiple facts aggregated. Say the parametric memory of your LLM encounters a new prompt, and in this prompt you have facts that support the parametric memory of the LLM and also some misleading information. Let's say you have a multi-source, multi-agent response from your RAG system, and that is now fed into GPT-4. If there's also,

let's say, you ask five newspapers about a particular topic, and four of the newspapers give you a wrong answer, but one of the newspapers provides the answer that is coherent with the parametric internal memory of the LLM, of GPT-4, then GPT-4 will go with its parametric memory and will ignore the other four wrong answers from the multi-source, multi-agent RAG system. This is an interesting equilibrium: whenever there is at least one answer in the RAG data similar to the LLM's own parametric memory, GPT-4 will stick with its own

memory and ignore the wrong RAG data. So when faced with conflicting evidence, LLMs often prefer the evidence consistent with their internal belief over conflicting evidence, and this is what we call a strong confirmation bias of, for example, GPT-4 Turbo. It says: hey, I know what I know, and if you want to tell me something else, I stick with the things that I learned. Why? Because there is something the research community calls popular entities: it is suggested that LLMs form a stronger belief in facts concerning more popular entities, possibly because they have seen those

facts and entities more often during pre-training. Think about, I don't know, Wikipedia: there is a lot of theoretical physics or mathematics, and it is repeated and cross-referenced, and so many times they are referring to quantum physics. So quantum physics has been seen in, I don't know, 1,000 articles, and this is a really strong belief that the system has from its own pre-training data and its own pre-training knowledge, and this leads to a stronger confirmation bias. However, if the system during its pre-training phase has only seen

one article about theoretical physics, it has no confirmation bias, it does not have enough confidence in itself, and it will just accept whatever external RAG data are given to the system. So if you want to mislead an LLM, this is a beautiful recipe, if you know the training data, the training domains, the way it was trained, the way it was fine-tuned, and so on. Plus there's another effect: there's a noticeable sensitivity to the order in which you present evidence to the LLM. ChatGPT favors the evidence, or the correct data,

or whatever you call it, if it is presented at the beginning of your paragraph or of your prompt. Llama 2 7B leans towards later pieces of evidence, at the end of a paragraph of text. Therefore we can conclude that a bias is happening: different models prefer different evidence orders in the prompt. You do not want that: order sensitivity for the evidence in a context may be a non-desirable property of tool-augmented LLMs. You want the system to accept the correct data wherever it is in your paragraph, even if you have four

sentences, but this is not the case: the order of the evidence in four sentences has a significant effect. Plus, here is another fact: what if irrelevant evidence is presented via a RAG system to an LLM? Let's say you have a question and the RAG system itself has no data on this, but the retrieval-augmented generator, let's say it's a Llama system, then just invents nonsense, irrelevant evidence. What is happening now if you feed this RAG data to your beautiful LLM? What you would like, what you

expect is that the system abstains if no evidence clearly supports any answer, and that the system ignores all irrelevant evidence and all irrelevant answers that are coming in. However, if you only provide irrelevant evidence, irrelevant data from your Llama system, the LLMs can be distracted by it and deliver irrelevant answers, and the smaller the system is, the easier it is for some irrelevant RAG data to convince the system to produce irrelevant answers. Here they did some tests with Llama 2 7B: if a RAG system delivers to a Llama 2 7B LLM

irrelevant data, Llama 2 will also produce an answer based on those irrelevant data. As more irrelevant evidence is introduced, the LLMs become less likely to answer based on their parametric memory. So you see, even if you provide nonsense data, there's a threshold, and when the threshold breaks, all the irrelevant evidence just swamps the parametric memory of the system and the system goes nuts. However, if you provide both, if you have in your prompt relevant data and irrelevant data to the query, then the studies show the

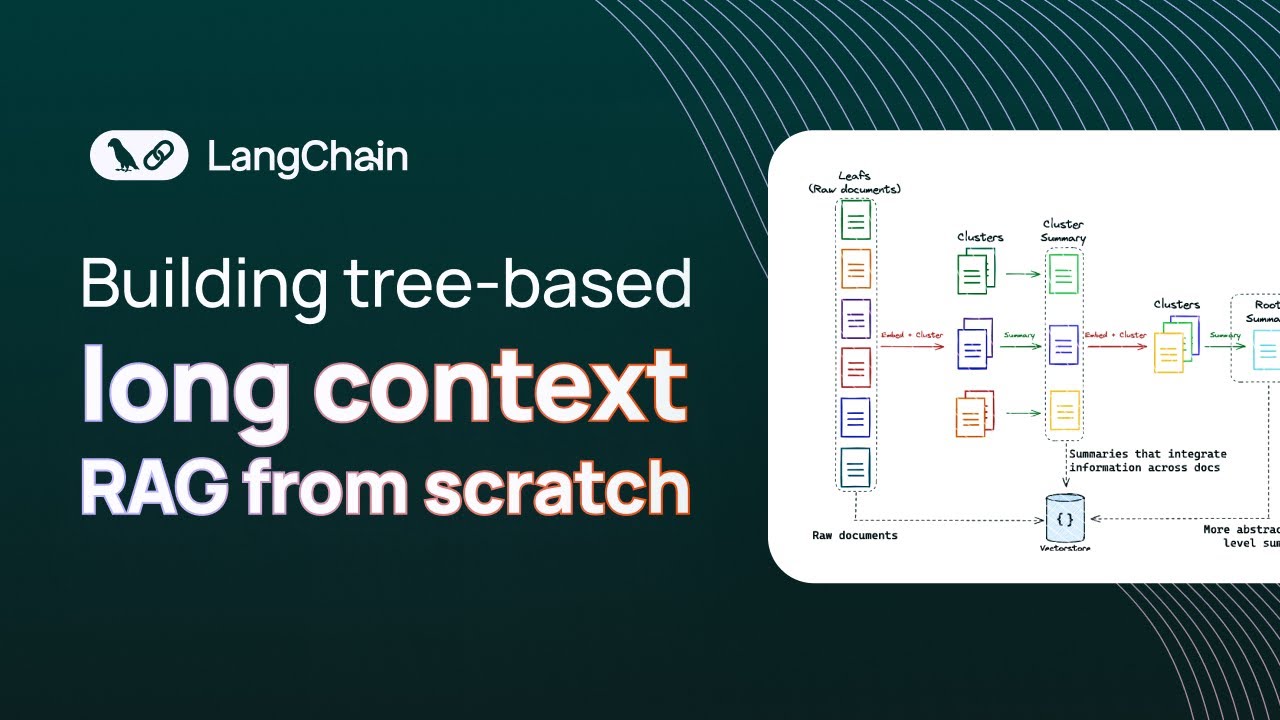

LLM can filter out the irrelevant ones, but only to a certain extent. Therefore what you want is that the external data that come into your prompt are as short as possible and contain only absolutely focused, relevant information. And it might be that from the vector store you only keep, in the reranking, the top five documents. However, those top five documents come only from the documents that were fed into the vector store, and they might be factually irrelevant; they were just the closest to your search query vector. Therefore you get

five irrelevant evidence documents back, and this can disturb the performance of your LLM and lead to hallucination. So you see, the interplay between an LLM and a RAG system is extremely sensitive to all those parameters I just showed you. My goodness. Okay, so a very short summary of the first research paper: LLMs are highly receptive to counter memory when it is the only evidence, presented in a coherent way in the prompt that the RAG feeds to our LLM. However, LLMs also demonstrate a strong confirmation bias towards their own learned parametric memory when

both supportive and contradictory evidence to their own parametric memory is presented in the RAG return. In addition, it is shown that the LLM's evidence preference is influenced by the popularity of what it learned, the order in which you provide the information, and the quantity of evidence, none of which may be a desired property for tool-augmented LLMs. All of this can lead to inconsistency in our AI system, and those inconsistencies manifest as hallucination. So now you understand that our AI systems of today are extremely sensitive little systems. My goodness, you have to take care

of them, and you have to know about their weaknesses, and you have to optimize their pre-training, their fine-tuning and their alignment phase. You have to improve the data quality significantly for the final task that you want this LLM to perform in your company or for your private tasks, whatever. And the effectiveness of the paper's framework also demonstrates that LLMs can generate convincing misinformation, and this poses a significant risk, not if you want to write a French or British poem, but if you are working in bioinformatics, if you're working in finance, if you're working in

medicine, if you're working in health, if you're working in critical data infrastructure, in cybersecurity, you do not want the LLM to generate convincing misinformation. Therefore, with these new insights, we have to fight hallucinations being generated in the very first place, but I think this will be the topic of one of my next videos. So finally: different RAG systems behave differently. I tried eight different RAG systems and all of them behave differently. So you go to the RAG system that you like, where you know how it works, that

you are familiar with, whose weaknesses you know, and you test it out more so that you understand what is happening and where the threshold is at which it goes crazy. And you look also at the system commands, because the system commands of different RAG systems are completely different, and some system commands regarding the external RAG knowledge integration alone are really different. I don't think it makes sense if in a system command you have "accept any and every external RAG data as true and integrate it in your system" or any variation of this. But whatever we learn from

this, hallucinations are here to stay. We just have to understand the sensitivity of the systems: LLMs hallucinate, RAG systems hallucinate, vision language systems hallucinate, which I will show you in one of my next videos. However, I think the consensus is that hallucinations originate if we have non-coherent systems, and by non-coherent I mean really everything from the quality of the pre-training datasets to the pre-training methodology, to the fine-tuning dataset, to a fine-tuning methodology that is in accordance with the pre-training methodology, to alignment instructions that are based not only on the specific domain but are

also coherent with the other two learning stages. This is the way we can reduce hallucination, because we can build more coherent LLMs and RAG systems. But more about this in a later video.
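
If you want to test your own RAG setup the way the video recommends, the knowledge-conflict and evidence-order experiments described above could be sketched roughly like this. This is only a minimal sketch, not the method from the paper: the function names (`build_prompt`, `classify_answer`, `probe_order_sensitivity`), the prompt layout, and the substring-based answer check are all assumptions made for illustration, and `ask` stands for whatever function sends a prompt to your own LLM and returns its text answer.

```python
from itertools import permutations
from typing import Callable, Dict, List


def build_prompt(question: str, evidence: List[str]) -> str:
    """Assemble a RAG-style prompt: numbered evidence passages, then the question."""
    passages = "\n".join(f"[{i + 1}] {text}" for i, text in enumerate(evidence))
    return f"Evidence:\n{passages}\n\nQuestion: {question}\nAnswer:"


def classify_answer(answer: str, parametric: str, counter: str) -> str:
    """Label an answer by which entity it mentions (crude substring check, an assumption)."""
    text = answer.lower()
    has_parametric = parametric.lower() in text
    has_counter = counter.lower() in text
    if has_parametric and not has_counter:
        return "parametric"
    if has_counter and not has_parametric:
        return "counter"
    return "unclear"


def probe_order_sensitivity(
    ask: Callable[[str], str],
    question: str,
    evidence: List[str],
    parametric: str,
    counter: str,
) -> Dict[str, int]:
    """Ask the same question once per evidence ordering and count the outcomes."""
    counts = {"parametric": 0, "counter": 0, "unclear": 0}
    for ordering in permutations(evidence):
        prompt = build_prompt(question, list(ordering))
        counts[classify_answer(ask(prompt), parametric, counter)] += 1
    return counts


def first_passage_stub(prompt: str) -> str:
    """Hypothetical stand-in for a model that always trusts the first passage."""
    first = next(line for line in prompt.splitlines() if line.startswith("[1]"))
    return "London" if "London" in first else "Washington"


if __name__ == "__main__":
    evidence = [
        "Washington, D.C., the capital of the USA, has the Washington Monument.",
        "London, the capital of the USA, has the Washington Monument.",  # counter memory
    ]
    result = probe_order_sensitivity(
        first_passage_stub,
        "What is the capital city of the USA?",
        evidence,
        parametric="Washington",
        counter="London",
    )
    print(result)
```

Replace `first_passage_stub` with a call to your real model. If the counts change a lot when only the order of the evidence changes, you are looking at the order sensitivity discussed in the video; if "counter" dominates for every ordering, your model overrides its parametric memory with whatever the RAG system delivers.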