now we're going to move on to information bias and information bias is a catch-all for a ton of different biases that go by a lot of different names but it all boils down to the same thing which is essentially mismeasurement so our goal here is to understand that information bias boils down to mismeasurement and especially differential mismeasurement but before we get started i'm going to give you a quick example of a really extreme bit of information bias that crept into a study looking at where people come from

i'm gonna ask you to hold this for a second and uh where are you from originally st petersburg florida bismarck north dakota great thank you very much thank you where are you from originally originally like born and raised um washington dc south bend great thanks where are you from uh tennessee all right kentucky great uh chico california home of aaron rodgers nevada got it where are you from originally i'm from baltimore maryland antarctica antarctica great thank you concord massachusetts illinois thank you very much i'm from stamford connecticut key biscayne great thank you very

much thank you where are you from originally i'm from milwaukee wisconsin vladivostok great thank you all right so what we saw there was an example of someone just getting it wrong right asking for a data point and recording it incorrectly that's mismeasurement that's going to bias your results if you just record random stuff on a piece of paper your results are not going to be very significant right that will bias you towards the null but there's a variety of other types of information bias too and so i want to give you just some examples an

important one is called detection or ascertainment bias this is when you follow one group or one type of person more closely than another so they have an opportunity to have exposures seen more often than the other group so this is a paper that looked at something called acute kidney injury this is full disclosure one of my papers that my lab group published and what we were interested in was this finding that people who have acute kidney injury when they're in the hospital which is a sudden decline in kidney function do really badly over time in the

hospital they're more likely to die in the hospital they're more likely to have all sorts of bad things happen to them and the question is is it the acute kidney injury that's causing all that bad stuff or is it not and one thing that a lot of people hadn't thought of was the impact of ascertainment bias to define acute kidney injury you have to measure something in this case it's a blood chemical called creatinine but you have to stick a needle in someone's arm and draw blood and send it to the lab in fact you

have to measure it a couple of times because acute kidney injury is defined by a change in the creatinine level you might see where i'm going with this the more often you measure creatinine the more you're able to see the people with acute kidney injury taking it to an extreme if i never measure creatinine i can't define people as having acute kidney injury at all and so what we found was that sicker people got more blood tests so they had their creatinine measured more often and so a lot of the relationship linking acute kidney injury

this concept to bad outcomes was actually due to the fact that we diagnosed acute kidney injury more frequently just because we were doing more frequent blood tests that is ascertainment bias the more you measure the more likely you are to be diagnosed with acute kidney injury but the sicker you are the more you measure so when you're thinking about information bias you always want to ask yourself what was measured wrong the exposure or the outcome well our exposure in this case was acute kidney injury our outcome was death and things like that the exposure was mismeasured to

an extent we weren't capturing all the acute kidney injury because in the less sick people we weren't checking as often and so you only check in the sick people that's information bias it makes it look like acute kidney injury is linked to death when in fact it might not be here's another example not one of mine maybe more important for the college students out there the relationship between cell phone use and academic performance in a sample of u.s college students so this was 536 college students and they mapped their self-reported cell phone use

to their gpa so when you see a study like this you have to think okay could information bias be at play well the exposure is cell phone use the outcome is gpa so the outcome gpa depends how they got it but if they just looked up their academic transcript it's probably pretty accurate probably not mismeasured that seems fine but the exposure cell phone use that was self-reported so is it accurate we don't really know and it's possible that people with low gpas might systematically overestimate their cell phone use whereas people with higher gpas might underestimate

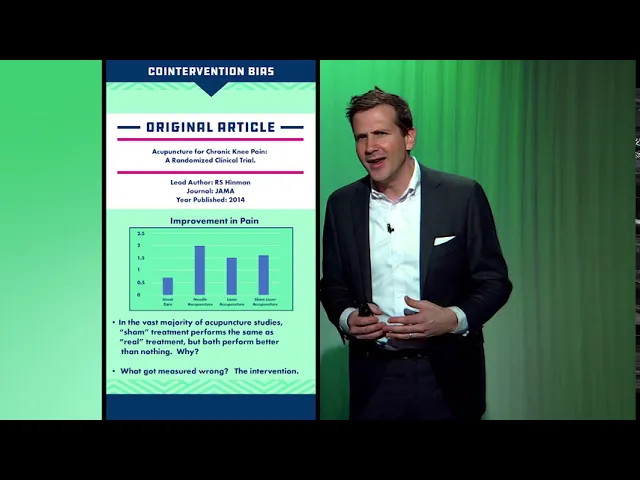

their cell phone use or so on the better way to capture cell phone use would be to you know install an app on the phone or something like that that actually really measured it so anytime you see the word self report think information bias all right co-intervention bias another form of information bias so here's a study appearing in the journal of the american medical association which was looking at the use of acupuncture for chronic knee pain and this was a randomized trial but like many randomized trials of acupuncture they didn't just have an

acupuncture versus no acupuncture arm because they realized that you know putting needles into someone's skin might actually do something not magical with qi and stuff but the patient obviously knows that it's happening there could be significant placebo effect there so what they did is they had usual care then needle acupuncture the sort of standard then laser acupuncture where they use kind of laser beams and then sham laser acupuncture where they use laser beams but not in the right place and what you can see is the overall effect in terms of improvement in pain and i will

tell you that in terms of statistical significance everything was better than usual care but none of the various types of acupuncture were better than any of the other types of acupuncture statistically speaking so when we look at studies like this of acupuncture oftentimes they're reported as like acupuncture even sham acupuncture is better than nothing for pain and that's a little disingenuous because you might as well be saying the placebo effect is better than nothing for pain right like we're really interested if acupuncture like placing the needles in a

specific place makes a specific difference so what is the bias here well the exposure is acupuncture and the outcome is pain in this case both of these might actually have some information bias but i'm going to argue that the exposure is biased by something called co-intervention bias when any of the people in these three groups got their treatment it wasn't just that they had needles put in their knee or whatever they went into a quiet room it was dark there was a provider there who spent some time with them they might have had conversation

all of these things are happening in that group along with the acupuncture that's co-intervention we don't know if it's the acupuncture or all the nice talking and hand-holding that actually makes the difference when it comes to pain so when you are thinking about studies like this where the patients are aware of which treatment they're getting be aware of those co-interventions that might be going on and realize that that's a form of information bias because they're labeling this group the acupuncture group not the acupuncture plus hand-holding plus soothing talking group when that in fact

is what's really going on another example here a trial called sprint a randomized trial of intensive versus standard blood pressure control this was a study that randomized a large number of people to get their blood pressure really low systolic blood pressure less than 120 versus a more moderate target of less than 140 and they saw a significant benefit in terms of all-cause mortality so the lower you got the blood pressure the fewer people died that was a big finding big bit of news important study but look at the methods and what you can see

is this statement quote dose adjustment was based on a mean of three blood pressure measurements at an office visit while the patient was seated and after five minutes of quiet rest okay so what they're talking about here is their assessment of the exposure the blood pressure right that's the exposure the outcome is death their assessment of the exposure their measurement of the exposure they measured that exposure after the patient was seated for five minutes in quiet repose had the blood pressure cuff cycled three times and they took the average has that ever happened to

you when you got your blood pressure checked i'm going to suspect that it has not so the measurement of blood pressure here is not quite accurate with respect to the real world i mean it may be more accurate than the real world in the sense that you know maybe that's your true blood pressure when you're nice and relaxed but if that's what no one does then the target of 120 is not very meaningful because really it's a target of 120 after you've been seated for five minutes and they measure it three times and they take

the average and you're in quiet repose right so there's a bit of measurement error in the exposure here potentially introducing some bias let's do some quick ones just to round out our types of information bias confirmation bias you look harder for an outcome in the group you think is more affected by the outcome okay so this is an example where maybe you're doing a study and you just suspect that women are more likely to be arrested for drunk and disorderly than men and so you get a bunch of police records and you

identify the gender of the person in the record and you search through the record for drunk and disorderly well if you have that bias in your head maybe you search a little more carefully among the women okay and you find a little bit more so that is a form of information bias you're mismeasuring in this case the outcome drunk and disorderly in one group compared to the other the solution there is blinding you have to not know whether it's a woman or a man's chart when you're looking for the outcome in that case social desirability bias

worry about this anytime someone is asking a survey question respondents answer a questionnaire so as to impress the interviewer so if i walk up to someone on the street and i say you know how many hours of television do you watch a night they might say oh none or just 15 minutes when in fact they're binging eight hours of netflix it depends on the person how honest they're going to be but this can certainly happen so anytime there's a questionnaire where there's some kind of social desirability component there's questions that are a little sensitive drug

use sexual history etc be a little bit worried about this form of mismeasurement this form of bias the solution there is offering anonymity giving surveys in a private way you can give them you know a tablet make sure they can answer it away from prying eyes and things like that so that's sort of a whirlwind tour through information bias it basically boils down to mismeasurement you just always want to ask was the exposure or outcome measured appropriately differential mismeasurement in different groups is particularly bad but careful study design can mitigate a lot of these problems

of course as with all biases you've got to design the study right from the beginning because there's no fixing it after the study is done
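
To make the acute kidney injury example concrete, here is a minimal simulation sketch in Python. Every number in it (the share of very sick patients, the AKI rate, the death risks, the detection probabilities) is a made-up assumption for illustration, not a value from the paper discussed in the lecture, and the function names `simulate` and `risk_ratio` are hypothetical. In this toy world true AKI has no effect on death at all; sicker patients simply get their creatinine checked more often, so their AKI is more likely to be caught.

```python
import random


def risk_ratio(deaths_exposed, n_exposed, deaths_unexposed, n_unexposed):
    """Risk of death in the exposed group divided by risk in the unexposed group."""
    return (deaths_exposed / n_exposed) / (deaths_unexposed / n_unexposed)


def simulate(n_patients=200_000, seed=0):
    """Return (risk ratio using true AKI, risk ratio using diagnosed AKI)."""
    rng = random.Random(seed)
    # Each entry is [deaths, patients], keyed by AKI status (True/False).
    by_true_aki = {True: [0, 0], False: [0, 0]}
    by_diagnosed_aki = {True: [0, 0], False: [0, 0]}

    for _ in range(n_patients):
        sick = rng.random() < 0.50                      # assumed: half are very sick
        true_aki = rng.random() < 0.20                  # assumed: unrelated to sickness
        died = rng.random() < (0.30 if sick else 0.05)  # assumed: death depends on sickness only
        # Assumed: frequent blood draws in sick patients catch 90% of AKI,
        # sparse draws in less sick patients catch only 30%.
        caught = rng.random() < (0.90 if sick else 0.30)
        diagnosed_aki = true_aki and caught

        for table, status in ((by_true_aki, true_aki), (by_diagnosed_aki, diagnosed_aki)):
            table[status][0] += int(died)
            table[status][1] += 1

    true_rr = risk_ratio(*by_true_aki[True], *by_true_aki[False])
    observed_rr = risk_ratio(*by_diagnosed_aki[True], *by_diagnosed_aki[False])
    return true_rr, observed_rr


true_rr, observed_rr = simulate()
print(f"risk ratio of death, true AKI vs none:      {true_rr:.2f}")
print(f"risk ratio of death, diagnosed AKI vs none: {observed_rr:.2f}")
```

With these assumed inputs the risk ratio for true AKI should hover around 1, while the ratio for diagnosed AKI lands well above 1 (about 1.4 in expectation), even though AKI does nothing in the simulation. That gap comes entirely from measuring the exposure more carefully in the sicker group, which is the differential mismeasurement the lecture warns about.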