Hello everyone, and welcome to machine learning and large language model tutorials. In this tutorial we explain how to install and run a quantized version of DeepSeek-V3 (version 3) on a local computer by using the llama.cpp approach. For those of you who are not familiar with DeepSeek: DeepSeek-V3 is a powerful mixture-of-experts language model. On this figure you can see some comparisons of DeepSeek with other popular large language models. These blue rectangles with a hatch represent the performance of DeepSeek-V3, and over here

that is, these other rectangles represent other popular models such as GPT, Claude, Llama 3.1 405B Instruct, etc. As you can see from this graph, it seems that DeepSeek outperforms the other models. However, you should always take these graphs with a grain of salt, since every software company that publishes large language models will claim that its model outperforms any other model, so you should test and compare the models by yourself. We will install and run a quantized version of DeepSeek-V3 on a local computer, and here are the prerequisites. First of all, if

you want to install and run the quote-unquote smaller model, you will need quote-unquote only 200 GB of disk space. However, if you want to play with a larger model, then you will need 400 GB of disk space. When I say a smaller or a larger model, I mean that if the model is smaller it is heavily quantized, and if the model is larger it is less quantized. In fact, behind the scenes you have the same model, compressed either significantly or less significantly. Then you will need a significant amount

of RAM. In our case we have 48 GB of RAM and the model inference is relatively slow. Probably the inference speed can be improved by adding more RAM. However, while running tests we also noticed that the GPU resources are not fully exploited, and here is the issue with the GPU. You will need a decent GPU. We performed tests on an NVIDIA 3090 GPU with 24 GB of VRAM. A better GPU will definitely increase the inference speed. After some tests we realized that the GPU resources are not fully used, and this might be because

in this tutorial we are simply downloading the binary files of llama.cpp. Namely, llama.cpp is a framework that is used to run quantized models, and this can be improved by building llama.cpp from source. This will be explored in future tutorials. Okay, let's start with explanations on how to install and run this powerful model. First of all, you need to download and install llama.cpp. In order to be able to run llama.cpp you need to have at least two things: first of all you need to have a C++ compiler, and then you

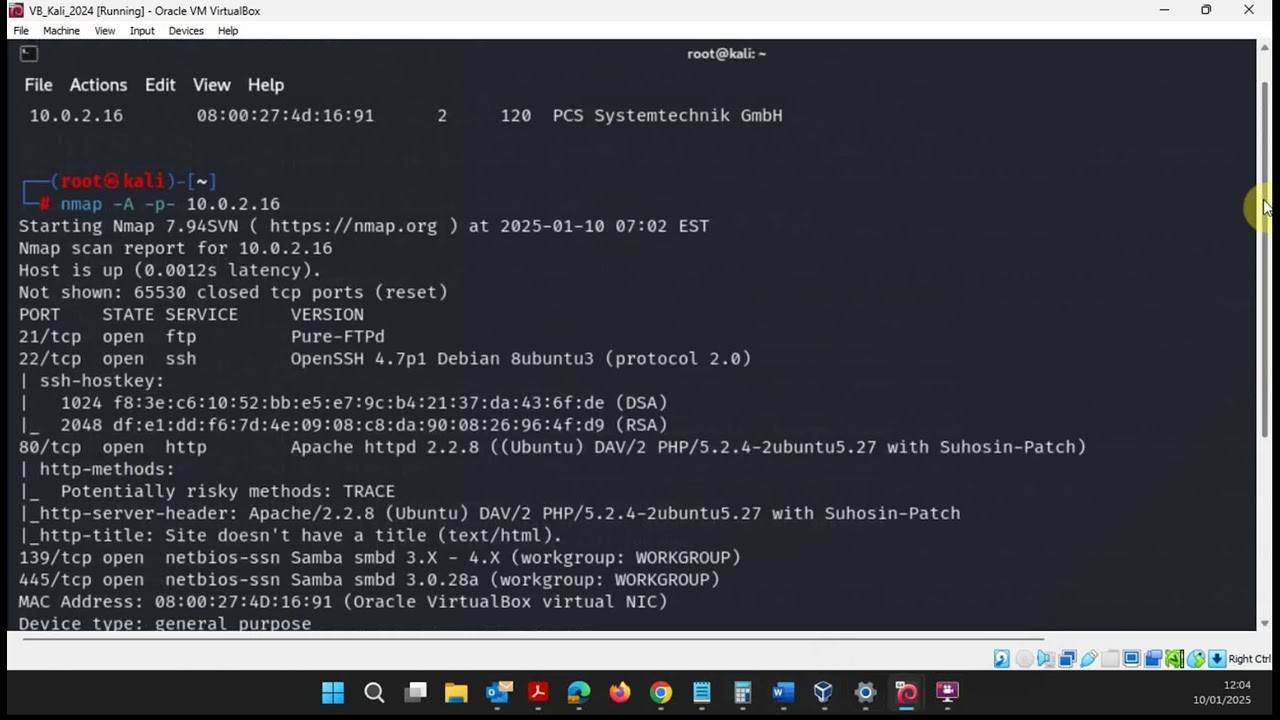

need to have the CUDA compiler. In order to install the C++ compiler you need to go to this official Microsoft website and download Microsoft Visual Studio. You can click on Community Edition over here, and after clicking, the download process starts. Then you need to run the downloaded file, and Microsoft Visual Studio with the C++ compilers will be installed automatically. So this is the first step. I will provide this link in the description below. The second thing that you need to install is the CUDA Toolkit, such that you can use C++ with your

GPU. To do that, go to this official NVIDIA web page or simply search for CUDA Toolkit. Click on Download Now. Now select your operating system and architecture, and over here you can click on this file and the download process will start. After the file is downloaded you can run it and the CUDA Toolkit will be installed. After the CUDA Toolkit is installed you need to verify the installation. To do that, you can simply go to the command prompt and type this command: nvcc --version. And if everything went

well, you should see the version of your CUDA compiler. Good. The next step is to download and install llama.cpp. To do that, search for llama.cpp, and immediately at the top you should see this official GitHub page. Open it, then scroll all the way down and find the section Building the project. Then click over here, on download pre-built binaries from releases, and over here scroll down and find the assets. Since I'm on a Windows machine, I'm going to download this ZIP file. So I will click here and I will save it in

the Downloads folder. Here it is. Then I will go to the Downloads folder and extract this file: over here click on Extract and the extraction process will start. It's going to take around 5 to 10 seconds to extract everything. Okay, next let's click on this extracted folder and copy all the files by selecting them with Ctrl+A and then pressing Ctrl+C. Then go to your C drive and create a new folder. I'm going to call this folder test one two, and I'm going to paste all the files over here

by pressing Ctrl+V, and here they are. Okay, the next step is to download the DeepSeek GGUF files. For that purpose you need to go to this Hugging Face repository, and I will provide the link in the description below this video. Over here you have several options, and you can see the size that you need on your disk. For illustration purposes I'm going to start with the smallest model, so I'm going to click over here, and here are all the files. Now, there are several ways to download all the files. You can write a

Python script for downloading all the files at the same time. However, for simplicity I will download the files manually, since we only have five files over here. It's going to take a while to download these files, so let's be patient. So first of all let's download this file, and let's make sure that we save it in the folder we just created, that is, in the folder into which we copied our llama.cpp files. Okay, so let's save this first file, and now here you

can see that it's going to take a while to download this file. I can at the same time start the download of the second file. Make sure that you save it over here. Let's do the third one, save it over here. Let's do the fourth one, let's save it in the same folder. And let's do the fifth one over here. Okay, now all the files are being downloaded at the same time and you can see the progress over here. It's going to take around 50 minutes or maybe an hour to download everything, depending on

how fast your internet connection is and how busy the Hugging Face website is, so let's be patient. After approximately 1 hour the model files are downloaded, and here they are, in the test folder. There are five files over here. The next step is to explain how to run the inference. To do that, search for Command Prompt and start it. Next let's navigate to the folder, and here we are. To run the inference we need to execute this command. Everything over here should be on a single line. So let's first of

all call llama-cli. Then let's specify the model file. Over here I'm specifying the name of the first file, namely the name of this file over here, and make sure that you type this name correctly: it should be 00001. Next we need to specify the cache type. Okay, let me expand this so you can see it better. Then we need to specify the number of threads, and finally we need to specify the prompt. Over here the prompt should be formatted like this: you need to define a user and an assistant, and over here you can

pose the question. I will pose a simple question: how to solve a quadratic equation. So let me copy this and paste it over here. Now, when I press Enter it's going to take some time for the model to be loaded, and in my case the system will freeze for about 5 to 10 seconds while the model is loading. Namely, if you analyze the consumption of your computer resources, you will see that initially this consumption will be 100%, as well as the CPU and memory. Later on, once the model is loaded, you will

see that the memory is being consumed, and the GPU as well as the CPU resources are being consumed. Now I will press Enter and then I will stop recording until the model is loaded and I can show you how the inference is happening in real time. And after some time you can see over here that the response is being generated, and you can see the speed of the response, which is not great. This speed can probably be improved by selecting different parameters or by optimizing llama.cpp. Okay, that's all for today, and

thanks for watching.
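A note on the prerequisites from the beginning of the video: the disk-space numbers and the nvcc --version check can also be done from a small Python script. This is only a rough sketch. The function names are our own, and the 200 GB and 400 GB thresholds are simply the numbers quoted in the video, not exact file sizes.

```python
import shutil
import subprocess
from typing import Optional

# Disk space quoted in the video for the two quantization levels, in GB.
REQUIRED_GB = {"smaller": 200, "larger": 400}


def has_enough_disk(free_bytes: int, variant: str = "smaller") -> bool:
    """Return True if free_bytes covers the space quoted for the chosen variant."""
    return free_bytes >= REQUIRED_GB[variant] * 10**9


def cuda_compiler_version() -> Optional[str]:
    """Return the text printed by `nvcc --version`, or None if nvcc is not on PATH."""
    if shutil.which("nvcc") is None:
        return None
    result = subprocess.run(["nvcc", "--version"], capture_output=True, text=True)
    return result.stdout.strip() or None


if __name__ == "__main__":
    # Check the drive that holds the current folder.
    free = shutil.disk_usage(".").free
    print(f"Free disk space: {free / 10**9:.0f} GB")
    print("Enough for the smaller model:", has_enough_disk(free, "smaller"))
    print("Enough for the larger model:", has_enough_disk(free, "larger"))

    version = cuda_compiler_version()
    print(version if version else "nvcc not found, is the CUDA Toolkit installed?")
```

Run it from the folder where you plan to store the model files, so that the disk check looks at the right drive.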
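A note on the download step: in the video the five files are downloaded manually, and a Python script is mentioned as an alternative. Such a script could look roughly like the sketch below, assuming the huggingface_hub package is installed. The repository name, the file pattern, and the target folder are placeholders, since the video does not spell them out; replace them with the values from the repository linked in the description.

```python
from fnmatch import fnmatch

# ASSUMPTION: placeholder values, not taken from the video.
REPO_ID = "some-org/some-deepseek-v3-gguf-repo"  # hypothetical repository name
QUANT_PATTERN = "*Q2*"                           # hypothetical pattern for one quantization
LOCAL_DIR = "models"                             # hypothetical target folder


def select_shards(filenames, pattern):
    """Keep only the .gguf files whose path matches the glob pattern, sorted by name."""
    return sorted(f for f in filenames if f.endswith(".gguf") and fnmatch(f, pattern))


def download_shards(repo_id, pattern, local_dir):
    """Download every file in the repository that matches the pattern into local_dir.

    Requires `pip install huggingface_hub`. The import is done here so that
    select_shards can be tried out without the package installed.
    """
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, allow_patterns=[pattern], local_dir=local_dir)


# Usage (starts a download of a few hundred GB, so it is not run automatically):
#   download_shards(REPO_ID, QUANT_PATTERN, LOCAL_DIR)
```

The select_shards helper is only there to preview which files a pattern would pick from a list of file names before starting a download of this size.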
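A note on the inference command: the video calls llama-cli with the model file, the cache type, the number of threads, and the prompt, all on a single line. The sketch below assembles the same kind of command in Python. The concrete values are assumptions, since they are not read out in the video: the model file name, the cache type q5_0, the thread count 16, and the exact user and assistant markers in the prompt are all placeholders that you should check against the model repository.

```python
import subprocess


def build_prompt(question: str) -> str:
    """Wrap the question in user/assistant markers.

    ASSUMPTION: DeepSeek-style markers with full-width bars; verify them
    against the model card before relying on this format.
    """
    return f"<｜User｜>{question}<｜Assistant｜>"


def build_command(model_path, question, cache_type_k="q5_0", threads=16, exe="llama-cli"):
    """Return the llama-cli call as an argument list (the values are placeholders)."""
    return [
        exe,
        "--model", model_path,            # first of the split GGUF files
        "--cache-type-k", cache_type_k,   # quantization type of the K cache
        "--threads", str(threads),        # number of CPU threads
        "--prompt", build_prompt(question),
    ]


def run_inference(model_path, question):
    """Start llama-cli and stream its output to the console."""
    subprocess.run(build_command(model_path, question), check=True)


# Usage (hypothetical file name; loading the model takes a while):
#   run_inference("model-00001-of-00005.gguf", "How to solve a quadratic equation?")
```

Passing the arguments as a list avoids the quoting problems that a long single-line command can cause in the command prompt.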