My name is Harrison. I'm the co-founder and CEO of LangChain, and I am lucky enough to be joined by Itamar Friedman. Do you want to do a quick intro? Yes. It's interesting that you introduce yourself; I don't think there's anyone who doesn't know about you. Sorry if I'm flattering too much here. There are people here for you. And so now I can teach him a little bit about LangChain. So, my name is Itamar Friedman. I'm the CEO and co-founder of CodiumAI, previously a two-time former

CTO of VC-backed startups. My last startup was actually targeting the Asian market and eventually was acquired by Alibaba Group, and I worked there for more than four years. I'll double-click on that very briefly and say that I think Alibaba is an amazing organization for AI. I don't know if you noticed some of their models; they even have an embedding model that I think is one of the leading ones. That's where I was mostly educated, although I have something like 18 years of machine learning. I think

in 2010 I had a poster of a three-layer neural network at the university, and it has been hanging there ever since. But my real learnings, I have to admit, were in those few years at Alibaba. So that's about me. My very early career actually started in verification, chip verification and system verification, at a company called Mellanox, which was acquired by Nvidia and is now a huge part of Nvidia's success. And the interesting thing is that when

you do verification for hardware, it's actually very systematic: bits in, bits out. You can even do a mathematical formulation to prove that it's working. But when moving to software, it's not bits in, bits out; it's human in, human out. I was always thinking about how we could one day take some practices from hardware to software, and eventually realized we need AI for that, because of the human-in, human-out property. And

eventually, in 2021, I was not ashamed anymore to say that what we're doing is AI; until then I always hated that and said I'm doing ML. Then I left Alibaba in 2022, BC, before ChatGPT, and started CodiumAI. What we're doing is basically helping developers reduce the amount of bugs and issues, and our vision is zero bugs. So that's about myself and CodiumAI. Amazing; zero bugs is a great vision. Yeah, we

all... Yeah, so if I have to choose two words, it would be that: zero bugs. And if I have four, by the way, I wouldn't add to the left but rather to the right: zero bugs and issues. I think that's part of the opportunity with AI. It's not only about bugs per se, where your software is not working; an issue could also be, for example, that you're using an API that is about to be deprecated, so you want to know about it, or

you're using a 0.x version of LangChain and you need to move to 1.x, and how do you do that. So that's part of what we do: help to reduce those issues. That's a very real issue, a very real issue. Two minor logistical things for the audience before we get started. One, this is being recorded, and it will be put on YouTube after the fact, so if you miss part of it for whatever reason or want to

share it with your friends because it's so amazing, you can find it there afterwards. And two, if you want to ask questions, there is a little Q&A box down at the bottom. Drop them in there; we'll take a look at them throughout, but especially at the end. In terms of how we'll do things: Itamar will talk a bunch about flow engineering and AlphaCodium. I'll ask some questions along the way to make sure I understand. I'll spend a few minutes after that relating some

of that to LangGraph. And then after that we'll jump right in and just take audience questions. They can be about AlphaCodium, they can be about CodiumAI, they can be about LangChain and LangGraph, they can be about Itamar's vision for the future of AI, anything you want. So that's the agenda, and with that I think we can dive right into it. Cool. First of all, thanks again for inviting me, and

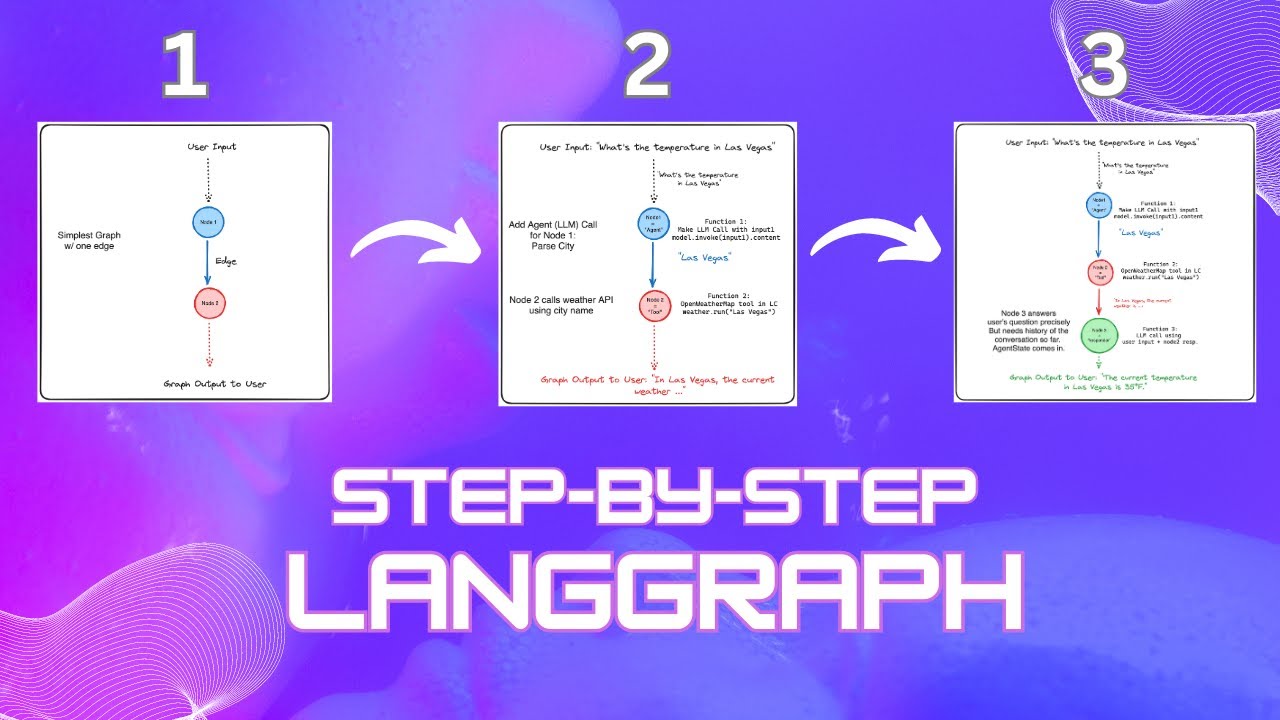

I love talking about flow engineering and the future of intelligent coding systems, etc., so I'm happy to be here. Actually, we chatted for the first time about a year ago, a year and a half or something. So we've had a lot of great conversations; I'm glad we're getting to do it where more people can listen in. Yeah, and it's interesting, because when I saw LangGraph coming out, I don't think we had talked about it before, and basically

it came about a week after AlphaCodium. It shows how many people are now realizing that working with LLMs actually requires a well-engineered algorithm, or a flow, where LLMs take part but are not just a prompt that you use as a single system and that's it. It requires more engineering around it. So it was interesting when we saw LangGraph: oh, we both agree on

that part without talking to each other. And some behind-the-scenes on that: Nuno, one of our founding engineers, had been working on LangGraph under a different name since July or so of 2023, but we didn't talk about it at all; we weren't really sure what it was. Around the end of December, early January, we started talking to more people, and these exact same ideas that you're talking about, everyone was doing some variant of that. And

so basically we got conviction in it and released LangGraph in a week or two, right around the time that you did AlphaCodium as well. So it very much does feel like sometime around January was when a lot of people started recognizing this and focusing in on it. Amazing. So I'm going to lay the ground and talk about a bit of context. I think it's hard these days, by the way, not to speak as if we're LLMs; right now

I'm always like, okay, what's my context, let's collect it and then move on from there. So we're going to talk about AlphaCodium, but before that: what was the inspiration, and how did it come to life? Around 2022, as you can see, DeepMind came out with AlphaCode. What they're really good at is advancing technology, advancing a concept, through a competition. DeepMind had their AlphaGo moment, right,

and then AlphaZero and AlphaStar, etc., until AlphaFold. So one of the things they did is AlphaCode, and the competition they developed is based on a website called Codeforces. You can go and compete against people in chess or in Go, etc., so you can also do that in programming competitions, and Codeforces is one of the leading platforms for that. Similar to Kaggle, the interesting

thing about Codeforces is that you don't get to see the test set, so this makes it a really good competition for AI coding systems to compete in. What they did with AlphaCode is basically choose a set of contests that happened on Codeforces and collect a benchmark. You can see here, for example, a type of problem from Codeforces:

it's a natural-language description with some explanation of the expected input and output, and maybe even one test, let's call it, one input-output example. Basically, what they did is build a solution similar to how they built AlphaZero, AlphaFold, etc., and then they're bragging here about how well their solution does. By the way, they have every reason to brag; I think they did amazing

work. What they did in AlphaCode 1 is the following. They first created a very good model. You can see this is the model that was trained on GitHub data and then on the code contests themselves. From Codeforces they created a benchmark called CodeContests, and they fine-tuned on CodeContests itself and created a model. Then, interestingly, now that they have a model, for each problem what they basically do is generate

between 10,000 and even one million different solutions, depending on the problem, with different prompting. Let's say there's a selected set of prompts that they decided on up front, like "please solve this with Dijkstra", "please solve this with another algorithm", etc., and they just do a lot of prompt engineering. Then they need to filter the results and eventually choose 10 from all the programs that were generated, so you can submit

10, and you get pass@10: the percentage of problems you solve, considering that you are basically submitting 10 times. So there is a really good result compared to, let's say, OpenAI. These are the two companies; it's not presented here, I'll show it a bit later. And the interesting thing we noticed is that basically there are two steps here, if you like, or even two and a half. What you see is that first they

create a variety of solutions, and then they filter these solutions and make a final selection. So you can say that there is kind of a flow here. It's very shallow, just very extensive computing, but there is a flow. So that was kind of an inspiration for us. We thought, what if you extend it to a flow that is actually more similar to how we do development as developers? We do not generate 10,000 solutions

before we select. We do more of a thinking process, which I'm going to present to you, that goes in steps. Usually, in our mind or in pseudocode, we think about three different solutions before we actually write one or two. So we thought that would be a good idea, and that's one of the inspirations for us from AlphaCode, and why we called it AlphaCodium. We wanted, by the way, to give credit to DeepMind, as some people

told me that calling it AlphaCodium eventually pokes them in the eye, but that wasn't the idea. Okay, so this is the setup: there is a benchmark, hundreds of problems, natural-language descriptions. Yeah, sorry, at some point I would love to see that last graph at the bottom that showed how much all the different components contributed to the success. I don't know if you were going to get to that or not. I think this is for their solution, AlphaCode 1.

By the way, since then they released AlphaCode 2; that was December '23, and this is from December '22. Basically, what they did here is apply different techniques to train the model, and they put a lot of emphasis on training the model: how do you train the model, how do you rate solutions, and things like that. I actually prefer not to go into this and

show one more thing, and then later we can come back here. Sounds good. Cool, great. I'm not looking at the Q&A, so let me know if there are questions there. So the thing is that eventually AlphaCode did reasonably well, but in order to generate a reasonable solution (let me show you one graph that we're going to talk about a bit later), to generate one solution they needed to run the LLM something like one million times, and they needed

to fine-tune the LLM on the problem, and that's a serious problem. The reason is that models are actually quite shitty at generating proper code. It's interesting: on one hand we're talking about the idea that we're going to use LLMs to replace developers and generate solutions end to end, just give me the problem definition, like we just saw from Codeforces, and I'll give you the solution. But at the same time people are complaining that if you

take code generated by AI as is, then it doesn't work, and there's even some research about it. By the way, I don't necessarily agree with this specific research, which was published in Visual Studio Magazine two or three months ago, but there is a general notion out there that with simple inference, even with the best prompting, you're not necessarily going to get code that works. So there is a conflict here. How come we can

eventually get an AI solving problems in an efficient way, not with one million LLM calls, when at the same time simple prompting doesn't give you high-quality code? So let me tell you how I think about it, and that will lead to why flow engineering. So here's the thing: this is the highest-level architecture of gen AI. By the way, you can accuse me that this is the highest-level architecture for anything in the world, right? But

notice that, specifically, there is a space in the input and a space in the output, not a point, and I think that's a unique thing for gen AI: your prompt is not very specific, it's not a spec definition, and the output is not very specific either. It's really good, for example, when you want to generate an image: a porcupine riding a horse with a moon behind it. It's not well defined, and for the output you'd probably

accept a lot of options and not just one. So this is the high-level architecture, and usually the systems behind gen AI are what I call system 1 thinking. System 1 thinking and system 2 thinking were coined by Daniel Kahneman; he passed away about a month ago, unfortunately. In his book Thinking, Fast and Slow, he talked about how we as humans have an LLM-like inference mechanism, and we basically just use our

intuition in most cases. Even what I'm doing right now: I'm almost completing the next sentence, I don't know if you feel that as well, according to what I said in the previous sentence, and that's system 1. System 2 is when I'm thinking about, you know, software architecture, or career decisions, or startup strategy, or whatever. But I think that most systems, including AlphaCode, are system 1. Basically,

what they do is take the input, gather some context, do a prompt, and give you the results. In the case of AlphaCode 1, they just did it one million times. It's like telling me as a developer "solve it, solve it, solve it" and every time giving me 10 seconds or so to do it. Do it one million times and maybe, randomly, one of those will be fine. That's not the way to do it. So if the LLMs do not work well

in this case, don't blame the LLM. It's just one way to do it, one way to poke a developer, but there are other ways to poke a developer or an LLM, which is what we call system 2 thinking. We could consider an agent to be system 2 thinking, but I'm not going to call it an agent, because I think people commonly consider an agent to be very free, AutoGPT-like, where what to do next is

a decision of an LLM, for example, very free-flowing. When we talk soon about flow engineering, we're actually going to design a well-designed flow where the LLM makes decisions within a step, but not necessarily on which step to move to next, and it doesn't redefine the steps from scratch each time. It's a well-designed flow where LLMs are involved. And when you do system 2 rather than system 1, you have the opportunity to refine the input,

make sure that it's well defined, and then provide an exact output. So that was our thinking on how to solve CodeContests: let's not blame the LLM for not being accurate; let's design a flow that will let it take small steps, like we do as developers, and get to the results. Okay. One thing that I'd just add here, because you mentioned agents and how this

differs from agents. One way that I've been thinking about it a little bit is that one of the issues with AutoGPT or autonomous agents is their longer-term planning; they kind of break down when planning over multiple steps. So things like this are almost a way of removing the need for the LLM to do that planning and saying: you're not going to do the planning; me, as the engineer, I'm going to do the planning. I'm going to tell you

that you should first write tests, then you should execute them, and then you should loop around. There are still some decisions it can take, but you're basically doing a lot of the planning for the LLM and giving it a blueprint to follow. Does that resonate? Is that how you think about it as well? Curious for any reaction to that. Yes, and I don't think it's one or zero; there is a spectrum. Let me give you an example of that

that I think goes really nicely with what you said. Let's think about it as a state machine. Let's say that you designed the state machine, so you're not going to let the LLM generate new states, but maybe the decision of which state to move to next is left open. By the way, usually from state i you can't go to every state; just as an example, you can go to

i+1, i+2, or i+3, or something like that. So there's a spectrum. At one extreme, the LLMs are just doing items within a step, and the state machine is totally an engineering decision, with no statistics involved, supposedly. The other extreme is the AutoGPT style, where everything can be configured by the LLM. And there's a middle, which I mentioned: for example, the state machine and the

possible edges were engineered by a human, but then maybe the decision is made by the LLM. Just an example. So there isn't just one option, and that's how I suggest thinking about it: what fits your use case. And I think LangGraph supports these options, right? Yeah, I think LangGraph is very much aimed at that intermediate between the fully autonomous agent and the chain, where you do have these different states but the LLM is

deciding. Again, it may not have the ability to go to all states from a given state, but it may have a few different ones it can choose from. So very restricted and very controlled flows: that's exactly what LangGraph is and where it falls in the spectrum. I'm actually going to dig up some slides that I used for a TED talk back in November or so, and I think I actually used the word "state machine";

I need to figure out whether I did or not, but I'll go look at that. Great. By the way, I saw a question about taking models and training them on specific codebases. Let me table that, and I'll come back to it. But as a spoiler, I think it's a reasonable option to try to train a model per codebase; I'm not saying no. But it's maybe not the first

thing I would do. The first thing I would do is actually train on a task: collect data and train a model for a specific flow, not for a specific codebase. I think it will give you a better generic model, unless you're really trying to have a model that works for only one codebase; then maybe that's a good idea. That's the 80/20 answer to the question of whether we should train models on specific codebases, because that's what AlphaCode

did. So the answer is: could be, but that's the last thing I would do, actually, and we're going to talk about it. Okay, cool. I'm going to skip a few slides about agents; if we need them we can go back. But I want to take a step back, because I'm claiming that everything we're talking about with these LLMs, etc., we actually already talked about in 2016. Goodfellow talked about it, and I will explain. So you remember the GAN, the generative adversarial architecture. So

basically, I would claim that this is also kind of a flow. Maybe it's more of a flow on the training side, but sometimes you would actually use it at inference. What you're trying to do here is basically say: I have one model that is good at the generative part and another at the adversarial part, and they can help train each other, or even at inference you can use them both and try to get to a conclusion. And I think

we can learn a lot from that. But at some point we kind of gave up on that idea of having multiple models, two or more, working with each other in a kind of flow. The reason, I think, is that the generative part became so good, at least for certain types of tasks. For example, like I mentioned, creating images: usually our requirements are not

so strict. Even for text, when we generate text for sending an email, let alone marketing material, we're not so strict about what we want to write, so it generally works. But when we come to problems where we're not satisfied with something like space-to-space, we need to go from an accurate specification to working software. Usually software either works or it doesn't, right? Either software works with no bugs, or

it doesn't work, or it works with bugs, so it's a bit more defined. And I claim that when we're talking about code gen, system 1, code-completion style, using just one model, will generate nice suggestions but not necessarily code that works. In order to do that, we need to go back to the idea that there is a flow, that there are a few models involved that are talking to each other. So this is another inspiration for why we should do flow engineering.
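The generator-plus-adversary idea described here can be sketched as a small loop. This is only an illustrative sketch, not AlphaCodium's implementation: `generate_with_integrity`, `toy_generate`, and `toy_check` are hypothetical names, and the toy functions stand in for an LLM call and for a real test or review step.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical interfaces (assumptions for illustration only):
# a generator takes the problem plus feedback from the last check,
# a checker returns a list of failure messages (empty means "passed").
Generator = Callable[[str, List[str]], str]
Checker = Callable[[str], List[str]]


def generate_with_integrity(
    problem: str, generate: Generator, check: Checker, max_rounds: int = 3
) -> Tuple[Optional[str], int]:
    """Alternate generation and checking until the checker is satisfied."""
    feedback: List[str] = []
    for round_no in range(1, max_rounds + 1):
        candidate = generate(problem, feedback)
        feedback = check(candidate)
        if not feedback:
            return candidate, round_no
    return None, max_rounds


def toy_generate(problem: str, feedback: List[str]) -> str:
    # Stand-in for an LLM: the first attempt is buggy, and it is
    # "repaired" once the checker has reported a failure.
    if feedback:
        return "def add(a, b):\n    return a + b"
    return "def add(a, b):\n    return a - b"


def toy_check(candidate: str) -> List[str]:
    # Stand-in for the integrity side: plain code, no LLM involved.
    namespace: dict = {}
    exec(candidate, namespace)
    failures = []
    if namespace["add"](2, 3) != 5:
        failures.append("add(2, 3) should be 5")
    return failures


solution, rounds = generate_with_integrity("add two numbers", toy_generate, toy_check)
```

The checker here is ordinary code rather than a second model, which matches the point made later in the talk that the integrity side does not have to be purely LLM calls.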

Great. So what could a flow contain for generating code? Again, our context (I see more people joining) is that we're talking about engineering specifically. I'm going to give examples about coding competitions, although I think the concepts we're going to talk about here apply to most fields where you want to apply AI. And the thing is that in a coding competition you need software that works; it's not okay that it works only sometimes. And I

think this is also true for legal or health: you want an AI system for health or an AI system for legal that gives you a good, accurate, no-bugs outcome, like a diagnosis or some document, etc. And then what we talked about is: don't blame the LLM for hallucinating, etc. Let's try to think like a lawyer, a doctor, a developer. When we want things to

work, we do not just say "hey, give me working code in 10 seconds"; you tell the developer to think and work in a flow. Then we started talking about inspirations. We saw AlphaCode, which had a two-step flow, which was much better than a single GPT-4 prompt, by the way. And then we talked about the GAN architecture, which also had a kind of two-step flow, or more, depending on how sophisticated the GAN is. Remember, we had hundreds of them at some point, and some of them were

sophisticated. And now, at this point, I'm thinking: okay, maybe that GAN architecture could work for code generation, and what would I include in the adversarial part? For us at CodiumAI, we would consider the adversarial part to be the integrity part: testing, spec matching, reflection, review. That's what we put there, and it can include methods that are not necessarily pure LLM calls; you can apply mutation testing techniques, etc. We're going to talk about it. So all of that was

inspiration for AlphaCodium. I'm stopping here for a second before I jump into AlphaCodium, just in case there are any questions so far, or in the Q&A. That all makes sense. There are a bunch of questions in the Q&A, but I think we'll take those towards the end. I think it all makes sense. Maybe just double-clicking on one thing: the idea of the checking being not just LLMs but also code or other things like that.

We see that all the time: there are these verification steps that you don't need an LLM for, and that's why we've been very focused at LangChain on developer tools, as opposed to low-code or no-code flows. I think there is a lot of code that can be used in this verification step, or other things depending on the application. And I

think that comes into designing the system as well. A lot of what you're talking about, by the way, is really interesting because you're a domain expert in coding, and so you're imparting your domain expertise onto this system and thinking: what is the generation, what is the integrity? Whether it's for coding or other domains like customer support, bringing in that domain expertise is super important. And obviously, because you are a coder as well, you can

then code this up. But in other domains, domain experts need to convey these things to the engineering team in some form, because it's that kind of synergy that we see unlock a lot of interesting things. So that's all, but I'm excited to see what's next. I very much agree. And I think that's why, before I broke it down into examples, I kept it simple with code

integrity, and you can then replace it. For legal, it could be that you want to ground it with RAG in examples that show exactly, say, each paragraph in the legal contract, just as an example. Or if you're in health, you want to find the relevant, I forgot what they call it, their spec of decisions; let's find the relevant spec and

and and um like paper Etc so just an example there are other ways I'm sure uh so that's that that's exactly the idea that you want to bring your specialty uh and your understanding and in uh in a specific space specific domain into the Flow that's exactly the idea and that's it's like a good segue to to the alpha codium Flow Design um by the way uh just before I start I want want to say that I think there's so many benefits of doing a flow engineering instead of prompt engineering because one one of some

one thing that happens is that the variance of the output shrinks, because at every step, as you're going to see, the question or request we put to the LLM is much more defined. So it's not only lower variance overall in the results, but even lower variance between models; that's something I'm going to talk about in five minutes or so. Okay, finally. Let's say that you are a developer and you got a problem in the coding competition

that we're talking about, CodeContests. The first thing you would do is reflect on the problem: what did I just see? Usually you would reflect in an engineering way; you're trying to think, okay, this is what I got. And by the way, sometimes the problem, like I presented before, could even be a silly definition, Alice and Bob, blah blah blah. Who cares about Alice and Bob? Now you're trying to think about it engineering-wise, and that's the first part.

And then the next thing you would ask is: okay, let's say I'm going to bring a solution and I want to check that it works. How would I think about it? How would I test my outcome, my solution? And then, now that I as a developer have digested what I should do and how I'm going to test it, let's think about different solutions, like three, right?

Like, hey, I can do that with a database, or with this kind of memory, or whatever. And now let's think about the different solutions, pros and cons, etc. Now that I've thought further about my solution, let's think about how I will test it even further. It could be different types of tests, by the way: unit tests, because maybe I broke my solution into parts, or integration tests, etc. And then finally I'm going to

start writing code: write an initial solution, iterate on it a bit, and then try my tests on it, the initial tests first, sanity checks if you like, and then deeper tests, the whole test suite, etc., until I finally reach the final result. So basically what we did here is engineer a flow that reflects how we perceive a developer would solve this problem.
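The sequence of steps just described can be sketched as a fixed, engineered pipeline in which the model only fills in each step. This is a minimal sketch under stated assumptions, not the AlphaCodium code: the step names, the instruction strings, `FlowState`, `make_step`, `run_flow`, and `fake_llm` are all hypothetical, and the iterate-on-tests loops are left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

LLM = Callable[[str], str]  # hypothetical: prompt in, text out


@dataclass
class FlowState:
    problem: str
    notes: Dict[str, str] = field(default_factory=dict)  # output of each step
    trace: List[str] = field(default_factory=list)       # order steps ran in


def make_step(name: str, instruction: str) -> Callable[[FlowState, LLM], None]:
    """Build a step that asks the model one narrow, well-defined question."""
    def step(state: FlowState, llm: LLM) -> None:
        prompt = (
            f"{instruction}\n\n"
            f"Problem: {state.problem}\n"
            f"Notes so far: {state.notes}"
        )
        state.notes[name] = llm(prompt)
        state.trace.append(name)
    return step


# The order is decided by the engineer, not by the model.
FLOW = [
    make_step("reflect", "Restate the problem as bullet points, including edge cases."),
    make_step("reason_about_tests", "Explain why each example input gives its output."),
    make_step("propose_solutions", "Describe up to three possible solution approaches."),
    make_step("rank_solutions", "Rank the approaches; prefer simple ones that cover the edge cases."),
    make_step("extra_tests", "Propose additional input/output tests."),
    make_step("initial_code", "Write code for the top-ranked approach."),
]


def run_flow(problem: str, llm: LLM) -> FlowState:
    state = FlowState(problem)
    for step in FLOW:
        step(state, llm)
    return state


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call so the sketch runs offline.
    return f"(model output for: {prompt.splitlines()[0]})"


state = run_flow("Sum two integers read from stdin.", fake_llm)
```

Because every step sees the notes from the steps before it, each prompt stays small and specific, which is the property the talk credits for the lower variance of the overall result.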

And then the LLMs are used within the flow. Notice that here it's usually just one pass; there are no options. In our case, the only thing the LLM might actually decide is whether to stay in a certain state of the state machine or move to the next; that's it. The main usage here is how to generate code, or ranking solutions, or thinking about different possible issues, etc. Now the

interesting thing: although eventually I think we came up with a very simple flow, I want to tell you that most of our work was designing the flow. 95% was designing the flow; only 5% was prompt engineering. What we saw is that once this flow was working well, it didn't matter much how much we tweaked the prompts. This is very different from a solution that is pure prompting, where a different prompt could give you such a different

output. We're going to talk about that a bit later. I'll double-click on the prompting, sorry, on the usage of LLMs, but not on all blocks, so we can move forward to the interesting stuff. So, for example, when you reflect on a problem, the prompt will be something like: hey, please break this into bullet points, in YAML format, etc., where you try to understand each of the edge cases, and so on. And for the ranking, for example,

it could be a prompt like: consider simplicity versus complexity, and make sure that a solution can actually solve each of the edge cases, etc. By the way, AlphaCodium is open source and the only work out there that is reproducible, so you can check all our prompting, etc. One of the most interesting things, in my opinion, is the test generation, the adversarial part, the integrity part in AlphaCodium, and how we decided to generate tests. I have a dedicated slide for that; maybe that

will be a good place to stop afterwards. I'm going to explain the test generation using this figure. Okay, so let's consider the left. Let's say we have a problem, and conceptually let's say that an oracle solution would be the blue cloud, okay? Now, if we take GPT-4 and use the best prompt that we can think of, it might actually generate a reasonable result, but it probably won't work. And the thing is that, sorry, most CodeContests problems,

most of the problems in this competition, actually did come with some tests, and basically what you can do is use AlphaCodium style, or chain of thought, etc., with these tests to try to generate a solution. This is the middle figure. The thing is that if you have tests that do not cover edge cases, you will probably overfit, with a solution that only fits these tests well. So one of the

key points here, the core principle for actually having a solution that works, is being able to generate edge cases for the problem. I won't double-click on that, but this is one thing that's important: how to use LLMs and other techniques to try to generate successful edge cases. By the way, because we're using LLMs, it could be that these edge cases would be wrong, okay? And then there's the need to have a kind of mechanism to decide

when to consider the test as the problem or the code as the problem. By the way, Harrison, I think in your previous life you did curriculum learning and things like that, right? At Robust Intelligence. Yeah, it was a lot about that. Right, so it's not a new field: curriculum learning, and how to use bad samples and good samples when you're not sure which is which. So basically you can apply different techniques, and we applied one technique, but others can be researched here. And

basically what we did is a relatively simple technique with anchoring. Let me explain. Let's say that we start with a test. Basically you can call it the opposite of, sorry, I forgot the word, I'll come back to that in a second, the professional word for that in curriculum learning techniques. But let me explain it first. Yeah, it will bother me now for two minutes. So it goes like that:

you have a few tests that you know are correct, okay? And, hard mining, sorry, that was the word. So what we're doing here is like easy mining, okay? I'll explain. So basically you start with the easy tests, sorry, you start with tests that you know are correct, and then you generate a few tests. The tests that pass, you can consider them as anchors, and if they fail, you can try to change the code to try to fix the

test, but you're not allowed to change the code in a way that breaks tests that are already anchors, okay? Again, the tests that we got in the beginning as ground truth, supposedly they're green, and you're not allowed not to pass them. Now you generate a few tests, and if the tests pass, great, you add them as anchors. But if they don't pass, then you try to change the code such that the tests will pass, but you have to keep the anchor tests passing, and

if they don't, then you actually say the test is incorrect, and you try to choose a different test or regenerate it. Is it a mathematical proof of the technique? No. That's why AlphaCodium is not perfect, but it does better than the majority of programmers, by the way; we'll talk about it in a minute. But this is basically the technique, and it's critical. It's actually tripling the accuracy. This specific technique is the important part

of AlphaCodium, okay? One of the most important parts is being able to generate tests and how you would consider them. Okay, cool. By the way, this is a technique that's supposedly specific to code, and testing is important there, but again, I think it would apply to other fields; you just need to think about what your anchors could be and how you accumulate them. Okay, cool. I have two,

three more minutes, and then maybe I'll stop for Q&A. So what happened is that we used this technique, and basically for each problem we see that there are around 100 LLM calls. Again, I want to say that I think as a human, if I get a problem, I would run 100 inferences in my head: okay, what did I read, let's try to understand it, how do I think about it, how would I test it. You know, think about the process

that you would go through. We claim: do the same, do System 2, do the same thing with LLMs. And that led to about 100 LLM calls per problem. And basically, with that, with GPT-4 we got a better result than AlphaCode 1, but four orders of magnitude better in the number of LLM calls, okay? By the way, I didn't put vanilla GPT-4 here; it's really around 5%. If you really work hard and do almost

overfitting, you can reach like 19% with prompt engineering, okay? And then AlphaCode 2 came out, and they actually reached a much better result than AlphaCode 1. They didn't change too much in their system compared to AlphaCode 1. Again, AlphaCode is by DeepMind; AlphaCodium is by CodiumAI, the company that I co-founded. And actually they did reach really close to our results, but there is a very big difference: they fine-tuned their model on the codebase, okay? And that goes back to the

original question in the Q&A. My claim is that if you need to fine-tune your model on the codebase, and I think they do that extensively, then I would consider that as another problem of computation, because you might find that you need to fine-tune on every repo among your company's 1,000 repos, or something like that. That's a problem. Our AlphaCodium is generic and can be used with a DeepSeek model, GPT-3.5, GPT-4, whatever. We're now running with Claude 3 and the new GPT-4 to

show new results, and it looks good, with no fine-tuning. So it shows how designing a flow could really help to reduce variance. And the interesting thing is that on the test set we actually saw that the gaps between GPT-4 and other models shrink. So it says that probably GPT-4 was trained on some of the training data, on blog posts that wrote about Codeforces, but it doesn't have access to the test set. That's one explanation, but the

other explanation is that the flow actually reduces the variance. Yeah, I'll stop here. I want to tell you that we published a lot of the techniques that we learned in our blog, and I recommend you go and read about them. I'd love to take questions, from you, Harrison, or anyone else. I have one question, and then I'll jump right into the audience questions. Can you go back to the slide where you show the flow of everything? Yeah, of course.
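
The flow on that slide, as it is described in this conversation, can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the AlphaCodium implementation: a "solution" is modelled as a Python callable instead of generated source code, `repair` is a hypothetical stand-in for the LLM call that revises code after failures, and the pre-processing stages (problem reflection, reasoning about tests, generating and ranking candidate solutions) are assumed to have already produced `ranked_candidates`. The only control decisions are the ones mentioned in the talk: stay in a stage or move to the next one, and, when stuck, start over with another generated option.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# Toy stand-ins (assumptions): a test is an (input, expected_output) pair and
# a solution is a callable; real generated code would be run in a sandbox.
Test = Tuple[int, int]
Solution = Callable[[int], int]
Repair = Callable[[Solution, List[Test]], Solution]  # hypothetical LLM repair step


def failing(solution: Solution, tests: Sequence[Test]) -> List[Test]:
    """Return the tests that the solution does not pass."""
    return [(x, want) for x, want in tests if solution(x) != want]


def iterate_stage(solution: Solution, tests: Sequence[Test],
                  repair: Repair, max_iters: int) -> Optional[Solution]:
    """Stay in one stage until its tests pass or the retry budget runs out."""
    for _ in range(max_iters):
        bad = failing(solution, tests)
        if not bad:
            return solution               # all green: move to the next stage
        solution = repair(solution, bad)  # stay in this stage and try again
    return None if failing(solution, tests) else solution


def run_flow(ranked_candidates: Sequence[Solution], public_tests: Sequence[Test],
             ai_tests: Sequence[Test], repair: Repair,
             max_iters: int = 3) -> Optional[Solution]:
    """Iterate on public tests, then on AI-generated tests, per candidate."""
    for candidate in ranked_candidates:
        on_public = iterate_stage(candidate, public_tests, repair, max_iters)
        if on_public is None:
            continue  # stuck on public tests: start over with the next option
        # Public tests stay in the set so later fixes cannot silently break them.
        all_tests = list(public_tests) + list(ai_tests)
        on_ai = iterate_stage(on_public, all_tests, repair, max_iters)
        if on_ai is not None:
            return on_ai
    return None


if __name__ == "__main__":
    no_op_repair: Repair = lambda sol, bad: sol  # a "repair" that never helps
    result = run_flow([lambda x: x + 1, lambda x: x * 2],
                      public_tests=[(1, 2), (3, 6)], ai_tests=[(5, 10)],
                      repair=no_op_repair)
    print(result(4) if result else "no solution found")
```

In the usage at the bottom, the first candidate gets stuck on the public tests, so the flow falls back to the second one, which corresponds to the "go back and choose another option" arrow discussed in the answer that follows.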

Yeah, right here. So basically you've got the pre-processing, which is all kind of directed, then you go into the code iterations, and you first iterate on the initial code solution, and then you iterate on public tests, and then you iterate on AI tests, right? Correct. Why don't you allow for, like, why can't you, after you iterate on AI tests, go back to the initial code solution or something like that? Why can't you jump more freely around the states?

What's kind of the logic there? Did you experiment with that? Yeah, it's definitely an option. I'll repeat what I understood from you, because I agree it's an option. Basically you say: hey, if I failed here, even in the public tests... By the way, it could be zero; some problems come with zero public tests, some come with one, some come with n public tests, and you need to manage with this system for each one of the

options. Let's say that we had five public tests. It could be that your solution can't solve even those five public tests, and then you're stuck here forever, supposedly. So then go back? Actually, we do do that. We just kind of start from scratch; we found that it's more useful. I'll explain: for example, go all the way back to choose another option. Once I got stuck

with the public tests, then you jump and choose one of the options that were generated. It's true, that's one of the things that's happening; we just didn't draw that arrow. Very cool. All right, I'm going to jump into some of these questions now, because we've got some good ones. Looking at the first one, which I think we already chatted about, specifically: is the flow engineering framework kind of a way of avoiding fine-tuning and using prompts instead? Maybe I'll take this and frame this a little bit

differently, which is: in the future, do you think most of the time will be spent on prompt engineering, flow engineering, or fine-tuning? And how do you think about those three different aspects intermingling or relating to each other? Yeah, of course I'm going to give a disclaimer that it depends on the problem, etc., but I will actually try to give a statement here. I think it's going to be around 60% flow engineering, 35% fine-tuning your model,

and 5% on the prompt engineering. And some use cases would be the other way around for the first two, like fine-tuning a model, and cleansing the data before that, and then designing the flow. The reason I chose the first is because that's what we do at Codium, but I think it applies to many other domains: the first thing you do is bring your expertise in the domain and say what would

be the best flow, and then you check that with generic, general models. And after that you start seeing where these generic models fail, and you start collecting and cleansing data, especially for the failure cases, and then it's an opportunity to fine-tune a model for a specific task. You can even go LoRA-style, having a different attachment for different prompts or for different flows. It could be at that resolution; it depends, it could

be the resolution of a question or the resolution of a flow, etc. And that's, I think, the main work that you need to do well. If you did invest time in the flow engineering and in collecting data, prompt engineering is still required, of course, but the variance really shrinks. The reason we've needed to do so much prompt engineering until today is because we do System 1: the prompt is everything; you give the context and you get the results. So,

as humans, think about it: if I ask you something like "give me an answer right now", even the selection of a few words would change your mind. But if I let you think about it, the selection of words will actually mean less. So that's the same thing that's happening here. Yeah, okay. So I was going to ask a follow-up, which is basically: if you're doing flow engineering, you still need to do prompt engineering at all these nodes, right? And you're not saying that

flow engineering replaces prompt engineering; it's just that you don't need to spend as much time on it because of other things? Right. You can claim that there are more prompts, because otherwise maybe you would have had just: here's the problem, give me the final solution, and by the way, here I brought you the tests, everything in one prompt. But eventually it actually takes less time because of the reduction in variance. And by the way, I think that also relates, kind of, to...

That relates a little bit to, I think, what a lot of people are talking about when they talk about multi-agent systems. I think oftentimes when people talk about multi-agent systems, it's not five AutoGPTs hooked up to each other; it's five specific prompts hooked up to each other in some flow, basically. And that flow, I think, in a multi-agent system is a little bit more loosey-goosey than flow engineering, but it's still the same idea, where

you have different prompts and different things. And so I know multi-agent systems have kind of become more popular, and I think that's one of the reasons why. The next question, which I like: isn't it a thing that agents like GodMode or AutoGPT design a step-by-step plan which has to be performed? And so maybe I'll build on this, which is: you know, there have been a bunch of papers around planning generally and around reflection generally.

How do you think about those? Are those examples of flow engineering, just more generally? So I guess, one, how do you think about planning and refinement, are those flow engineering? And then two, how do you think about flow engineering systems that are general? This is a specific system, specifically for coding. Can you still get the same benefits if you're doing a general kind of system? I think it's hard for me to imagine. You know the no free lunch theorem?

Sorry, my English is not so good, so maybe I'm not saying it correctly, but basically it says that if you have a random problem, then the best algorithm would be the random algorithm. If people in the audience do not know about it, let it sink in, think about it for a second: if you have a random problem, the random algorithm would be the best one. It's proven mathematically, right? Harrison, it's 10 years

since I learned that, but I think it still holds; I don't think it was proven otherwise. And for me that's kind of my answer to you: if you're trying a generic one, the concept of flow almost breaks. The idea of flow engineering is that you're saying: hey, I want to solve a specific pain, or even if it's not so specific, specific enough. Then you can integrate best practices and know-how and what tools to use when, and then you'll get

a much better result than the general, you know, reflect, etc. But for generic ones, I'm not sure; I think the more buzzy things would actually work better, because of what I said. By the way, that leads me to... I'll do it quickly. I have a notion of how agents are going to look in the future. So, one, we have the question of one versus many, right? Are

we going to have a single agent that does everything, like OpenAI coming out with GPT-5 that can reason, and that's it, no more anything, right? Or is it going to be an agent swarm, like GPTs, etc., or like mini flow engineering? And by the way, even if we take the option of a super agent that controls other agents, is it the same agent that operates similar agents, or one agent that controls specialized agents? So I'll tell you how I see

it. It's either the agent swarm, where the human is the super agent, the controller, or a super agent that is the interface for the user, like the developer, and it's going to be specialized agents. And I think almost any application I can think of would be one of these options. And why I'm mentioning it here, because of your question, is that I think you would have specific flows, or if you like, agents, but I prefer "flow". You have

specific flows for specific use cases; otherwise the flow won't matter. But if you do have a specific one, applying a flow would actually make a useful product, and then together they can provide a more holistic solution. That's how I see it. Yeah, okay, great. I know we have two minutes left. I want to touch on one last thing, which is the human interaction. So there was a part of the previous question, which is, you know, I think GodMode maybe has

a specific UI where you can interact and change the plan, basically, so there's that human interaction. I'm scrolling through some more of the questions, just to see the ones... Can a flow also take in human help if the flow is a bit confused? There's another one that I liked, which is: since the feedback only comes at the end, how do you optimize or tweak intermediate steps and corresponding prompts? So basically, maybe I'll generalize all this as: how do you view the

role of human in the loop, or human on the loop, for these types of flow interactions? Right. I think I'll try to answer all of them in two concepts. First, I want to say that we cheated here twice in the simplicity of the graph. One, you already realized: there is an arrow that is missing, that if you get stuck somewhere you go back. But there is another one: there are also some skip connections, or deep supervision. Sorry, I'm going back to 2016,

you remember those models. So basically some of the outcomes of specific blocks actually keep propagating to the next ones, and that's very, very important. It's kind of like deep supervision, if you remember from the past. Otherwise, if you have an error, you will just never solve it. But you have reflection steps at almost every block, and then if you get the data from

those, you could call it flow-oriented supervision, etc. And about the human supervision, that's another cheat that we did here. The cheat is this: the results we published are real, and you can reproduce them, but I think it's a bit overclaiming when we say, and DeepMind said, "look, we did better than professional programmers". We did better on CodeContests. If you try to take AlphaCodium as is to real-world enterprise software, forget about it, it won't work. It really

doesn't handle all these cases. Applying AlphaCodium concepts to a real-world enterprise AI assistant will require the LLMs or the engineered flow to raise questions to the developer when the LLM or the flow is not sure. I'm not sure if I have a ready example, but basically, in Codium, our tool, let's see if I have something ready for us, one second. Yeah, so you can see here I asked

Codium to generate something really unclear. Take this toy example, a bank account. What you're seeing here is an IDE with the Codium plugin. I took this silly bank account, and I did a demo for a crypto company, so I made a joke there: I told it to change this bank account to a blockchain account. Obviously everything is a toy example. And the first thing that this flow did is say: look, dude, you gave me a definition like a product manager; it's

missing a lot of parts, give more details. So this is an example where, in the coding competition, it won't do that; it will just try to do its best and continue computing until it gives a solution. But in real-world software, when things are not clear, the flow needs to stop and ask the developer for more information or clarification, and things like that. And that's how we productize AlphaCodium for the real world. Awesome. And I know we're over. I

think we could talk for another 30 minutes if we had the time, but we should probably end and let people get back. Really appreciate the time, Itamar, this is great. We'll do a follow-up in a few months, for AlphaCodium 2, right? We'll do a follow-up for that with LangGraph. All right, and thanks, everyone, for tuning in. Check out CodiumAI, check out AlphaCodium, check out LangGraph, and have a great rest of your day. Thank you. Thank you, Harrison. Thank you, everyone.
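
The test-anchoring procedure described in the talk (start from tests known to be correct, promote passing AI-generated tests to anchors, and accept a code fix only if every anchor still passes, otherwise treat the new test as suspect) can be sketched as below. This is an illustrative sketch under assumptions rather than the AlphaCodium code: solutions are modelled as Python callables, tests as `(input, expected_output)` pairs, `try_fix` is a hypothetical stand-in for the LLM call that attempts to repair the code for a failing test, and the starting solution is assumed to already pass the trusted tests.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Test = Tuple[int, int]                      # (input, expected_output), toy type
Solution = Callable[[int], int]             # stands in for generated code
Fixer = Callable[[Solution, Test], Optional[Solution]]  # hypothetical LLM fix


def passes(solution: Solution, test: Test) -> bool:
    """True if the solution returns the expected output for this test."""
    x, want = test
    return solution(x) == want


def anchor_tests(solution: Solution, trusted_tests: Sequence[Test],
                 generated_tests: Sequence[Test], try_fix: Fixer
                 ) -> Tuple[Solution, List[Test], List[Test]]:
    """Return (final solution, anchor tests, rejected generated tests)."""
    anchors = list(trusted_tests)           # ground-truth tests start as anchors
    rejected: List[Test] = []
    for test in generated_tests:
        if passes(solution, test):
            anchors.append(test)            # passing test becomes an anchor
            continue
        fixed = try_fix(solution, test)     # try to change the code
        if (fixed is not None and passes(fixed, test)
                and all(passes(fixed, a) for a in anchors)):
            solution = fixed                # fix keeps every anchor green
            anchors.append(test)
        else:
            rejected.append(test)           # assume the test itself is wrong
    return solution, anchors, rejected


if __name__ == "__main__":
    # Toy target: absolute value. The last generated test is deliberately wrong.
    def toy_fix(sol: Solution, test: Test) -> Optional[Solution]:
        return abs if test[1] == abs(test[0]) else (lambda x: -x)

    final, anchors, rejected = anchor_tests(
        lambda x: x, trusted_tests=[(1, 1), (2, 2)],
        generated_tests=[(3, 3), (-4, 4), (5, -5)], try_fix=toy_fix)
    print(len(anchors), rejected)
```

In the toy run, the fix proposed for the incorrect test `(5, -5)` would break existing anchors, so the test is rejected instead of the code being changed. As noted in the talk, this is a heuristic and not a proof of correctness.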

![[Webinar] LLMs for Evaluating LLMs](https://img.youtube.com/vi/jW290vZThgw/maxresdefault.jpg)