[Music] so last time I left you with a definition of uh The Joint probability Mass function of two discrete random variables we could previously make sense of two discrete random variables on the same probability space so this um really just is is notation um but we will have an example today to show you that it's a little bit more interesting than uh than a way to um to Define um the independence of of discrete random variables that's the other definition that we had last time and which we used on one occasion to um to um

represent a binomially distributed random variable as the sum of N independent Bly random variables now the other concept we had last time was the concept of expect ation and we're going to explore some more of that as well today and particularly in the context of the law of total probability which allowed us to calculate probabilities of events from conditional probabilities of that events given a partition of other events and today we're going to see that expectations can be similarly calculated as expectations conditional expectations um of uh of the same random variable given a partition of

events in a formula very similar to the one that we have here okay but going back to to that definition of uh of expectation it's a little subtle because of that uh um condition that we had to put that this um series um is finite and I'd just like to introduce some terminology here um that makes us uh write somewhat fewer series terminology so I want to say that the series that we want to Define which is of the form that we are summing some function G of X um over all X in the um

image of X so in a context of a random variable and I want to say that this or such a series converges absolutely if the associated series that you see there with the absolute value signs around the little X or in this higher generality where the series of absolute value of G of X is finite okay so it's all about um the um absolute value signs that we don't want in the object that we want to Define but that we need in the condition um in order to make sense of that object in sufficiently high

generality so that means that it's really about these absolute value signs in here um that uh that we don't have in there but we can replace um this condition um by saying um if this series or sum converges absolutely okay it's just a way to express in words what the serus expresses and its terminology that is consistent with what you're going to use in the analysis course um and so um so that is is another incentive for us to to be using this terminology now back to the first point that I recalled The Joint probability

Mass function so in the context of two random variables on a single probability space you now have several um Mass functions you've got the probability Mass function jointly for x and y and you've got them separately um for each X and respectively y so in that context um if we have XY with joint probability Mass function P XY in the sense defined up there um we're defining the so-called marginal probability Mass functions as well the probability Mass functions of those random variables so that uh we can write in two ways um but let me first

uh write marginal distribution of X and this is given by the P subx of little X which we've already introduced as the probability of x equal to Little X and uh which we can now write as a sum overall y values in the image of Y so the possible values for the random variable Y where we have summing over the joint probability Mass function evaluated in X and Y so this is a way to relate the previous notion um to the more recent notion of a joint um probability Mass function and similarly similarly the marginal

probability Mass function of the random variable y is the probability Mass function of the random variable y but in this context um it we can represent it as the sum over all X possible values of capital X over The probabilities jointly for X and Y to take the values Little X and little y okay so that's um terminology that uh that we will use um and it's just uh pointing out how joint and marginal um probability Mass functions are related in general now here's an example in this example I take the simplest possible case of

uh two random variable X and Y which both only take two possible values namely zero and one and so that means they are both Boni random variables as we defined uh last week so um we can now um specify a probability Mass function a joint probability Mass function so suppose that is given by um something that I um write in a in a little table so we write um maybe start start here with p XY of 0 0 being 1/3 um then P XY of 1 Z being 1 12 then P XY of 01 and

being 11 12th and P XY of 1 one being 112th then what we've defined is that uh we can take take the column sums and the row sums um and find uh the marginal probability Mass functions so um here if I sum over the rows that means I'm summing over all X's um for a fixed Y and so I'm obtaining py of 0 is equal to 1/3 + 12 is 56 and if I do the same in the second row then I get the py of 1 as being equal to 16 and if I sum

The Columns instead then uh I'm fixing the little x value to be zero and I'm summing overall possible y values and that gives me PX of 0 as 512 and PX of 1 as 712 okay of course you can also um sum across um the uh the final column or the um the final row and you will um have summed all members and remember this uh will always give us one as we sum over all possibilities for the random variables X and Y to take values now let's look at this uh definition of independence of

two discrete random variables what we've said is we require the probability jointly for X = X and yal Y to factorize into the probabilities of xal to X and and yal to Y so we can now investigate this we can now say well is the joint probability of x equal to 0 and y equal to 0 the product of the probabilities respectively of x equal to0 and um y equal to zero and in this particular instance the answer is no because 56 6 * 512th is definitely not 1/3 okay so here P XY of 0

0 is equal to um 1/3 which is not equal to um 512th time uh 56 which is the product of PX of x * p y of Y and well I've written it in terms of the probability Mass functions here but the meaning of course is precisely um that of what appears in the definition of independence of these random variables so we have um um these um factorizations well I write X but X is0 and Y is zero so probability x equals 0 um times the probability y equal to Z here conclusion is that um

we only need one counter example um to say X Y are not independent indeed thank you okay so this is of course a simple example um in fact dependent random variables um well either they have a particular construction from independent random variables or they are um somewhat simple in our examples um but of course in general if you write down arbitrary functions on a probability space um you will find that Independence is the exception of what of what you will find but uh yeah I mean for for our purposes Independence is the is the nice

concept that we are usually um happy about and dependence as here is um is what we can handle as well okay so what more I said the law of total probability was going to feature again today in the context of expectations so let's look at um conditional distributions so here's a definition in the context where we have a discrete random variable we and an event we can look at um the conditional distribution of the random variable given the event so the the random variable is a function of a sample space and of course we suppose

it is a discrete random variable in the sense that we have defined and B an element of f if you want to condition on it um then we suppose that it is such that the probability of B is positive so what are we going to condition while we are conditioning events associated with random varable capital X on the event B so we then Define the conditional probability Mass function of x given the event B by what you might expect but here's some notation as well P subx given B in an index evaluated at Little X

it's a function of little X still is the probability um let me first write the conditional probability of x equal to Little X conditionally given B and that remember is the ratio of the intersection probability of the intersection that is the pro intersection of the event x equal to Little X with the event B divided by the probability of the event we are conditioning on which is B okay that's something that is naturally called a conditional probability Mass function what you can now check is it satisfies our usual constraints for probability Mass functions as you

sum overall um values Little X this adds to one and as you um notice these are also non- negative so we do have a probability Mass function here in the original sense of that word and because it is a probability Mass function we can look at our definition of expectation and say well the expectation is um something that is a a sum over all possible values weighted by their probabilities well that applies if we plug in a conditional probability Mass function and so the concept obtain is what we refer to as a conditional expectation of

x given the event B and so we denote that by expectation of capital x given B and we do what I've just said with some overall possible values in the image of X and uh what we are summing is x * the probability that X is equal to x given b or if we want to use the notation um that we've just introduced is with a conditional probability Mass function that's of course what you see here it's p x given B of little X and as with all of these um we have to say I'm

provided that this sum converges absolutely that is if we put absolute value signs around the X we get something that is finite okay so now we have ingredients that allow us to make sense of a law of total probability where we are expressing now an expectation of a random variable by summing over um all uh members of a partition um and now expectations of the random variable conditionally given each of the bis multiplied by probabilities of bi so here is the law of total probability for expectations so if bi I in f i n i

is a partition of Omega with probability of bi positive for all I and I then we can write the expectation of X as a sum over I in I of the uh conditional expectations of x given bi multiplied into the probability of bi okay whenever the expect of X exists right that was another way of saying whenever these uh the sum behind the definition of expectation of X converges absolutely well that's a theorem let's prove this theorem it's the first of seven several theorems today where we have to handle Sigma notation um for sums in

a way that well we will see several sums appearing here um so let me go into this and then get back to that comment so the expectation of X by definition is the sum over all possible values Little X of X into probability xal to X now that that is an event that we can call a and to which we can apply the law of total probability so this then gives us a sum X in the image of X of X multiplied into a sum over all I indexing our partition of conditional probabilities of this

event xal to X x given bi times probability of bi so let's just acknowledge by the law of total probability so what can we do here well this is where um where we um need some skills of handling uh the sigma notation where we can say let's just put the sums up front is that what I want to write well I have now so let me continue just X into probability of xal x given bi I probability of bi where where do we want to go we want to see the expectations of x given bi

on the right hand side so what is that that's the sum overall X of little x times the probability of xals x given b i so what's in the way is this sum and this probability so what we're going to do is we are going to Interchange the order of these sums so that the I sum comes first and then we notice that uh this probability of bi we can actually move that up front as well and write probability of bi as something that does not depend on the little X that's being summed over in

the second sum and what we are left with is the second sum summing over what remains is x times the probability x = x given b i okay and this is by definition the conditional expectation of x given bi I and we are seeing exactly the expression that I claim is the representation of the expectation of X okay so like with the law of total probability the question arises why is this useful aren't we making a mess of things by uh writing a simple probability as a sum over many more complicated objects in this case

conditional expectations that maybe you feel uh will be harder to calculate than the expectation itself so here's an example where um actually there are some symmetries that can be exploited um that help us remember I defined expectation and gave you several examples of expectations of random variables with given distributions what I did not include is um the geometric distribution the geometric distribution remember has probability Mass function PX of k equal to 1 - P to the K - 1 um * P for K = to 1 1 2 3 Etc and what we want to

also remember is that we can find a geometric random variable um as the first um success in a sequence or let's say among events A1 A2 A3 three Etc um of berulia Trials right these are independent identically uh independent events with identical um probability um p and uh so with that representation um we can think about how we can relate the the expectation of x to some interesting events so one event if you are thinking of doing this one step at a time you have a first trial does A1 happen or does A1 not happen

well that's the same question as asking is x equal to 1 or is X not equal to 1 and that's our event B if we condition on that then things are not so complicated because the probability so the the conditional expectation of our random variable X x given B is a rather degenerate object because given B this random variable is always equal to one so what you are um what we've defined in our conditional expectation is just naturally one uh on the other hand if we want to apply the law of total probability um we

need a partition and the partition I have in mind is the partition into two events B and B complement so we will also need need the expectation of x given B complement now what is that well think of these trials you will have a first trial on this event the first trial has been unsuccessful so we have one um for the first trial which did not lead to a success and the um total number of trials that we have to wait is this one plus we're taking expectations as well some X Tilda which um is

looking not at the um full sequence of events but looking at the events A2 A3 A4 Etc and is asking what is the first success in that sequence and that X Tilda which is the first success among A2 A3 A4 Etc this is again geometrically distributed with the same parameter p as what we started with okay so I wrote Tilda because the random variable is different as it's related to a different sequence of events a subsequence in fact um but uh the distribution is the same so I get um that this is also related to

the expectation of x if we put this together then we find that we have a an equation from which we can work out the expectation of X let me just uh write one more detail for this uh um formula to apply we also need the probability of B but B is xal 1 xal 1 with probability p and so um so that's our final ingredient so we can say by the law of total probability for expectations the expectation of X that we want to work out is equal to the probability well I wrote Expectations first

let me keep that order the expectation of x given B times the probability of B plus the expectation of x given B complement times the probability of B complement all of these we've worked out um the expectation conditional expectation is one the probability is p the conditional expectation here is 1 plus the expectation of X and the probability is 1 minus P okay so that is something where we have a expectation of X on the left hand side expectation of X on the right hand side with a different Factor we can solve for the expectation

of X so um we rearrange to find the expectation of X time P because 1 minus P are on both sides so we are left with p on the left hand side um is equal to the only term that remains here while it's a p + 1 minus P so that is 1 okay and we want to conclude the expectation of X is 1 / P okay there's only one problem with this if the expectation exists do we know if this expectation exists do we know whether the sum defining this expect ation of X is

finite at this point we don't so what I have to write here is if the expectation of X exists okay and there are um there's still important progress here because it's a calculation that gives us an exact formula provided only we show that the sum defining the expectation is finite and certainly in the analysis course um you will learn that it is much easier to check that a series is finite than to work out the precise value of a serious and in that sense it's progress there are other ways also of calculating this expectation and

of in particular showing that it is finite and we will get back to that um at a later stage in this course um when we have some more techniques um associated with uh with expectations of of random variable but for now I'd really like to have that warning on the page and something that you may just want to um to think about when you find similar um situations where you um want to solve for something that you may actually not assume is finite okay so what more um expectations of random variables sometimes it's useful to

not just have the random variable itself and its expectation but some other expectations associated with the same random variable so here is a theorem which says that if you have any function H from the real line into the real line and a random variable X then we can form a new random variable by applying H to that random variable X so how does that work formally well random variables are functions on a sample space so I'm defining Z of Omega to be h of X of Omega for Omega in Omega well we will write z

is equal to H of X and in fact the way of thinking of X as just a real number a random real number um you naturally apply H to that random real number so that is the the meaning that this composition of function captures well um the statement I'm making here is if x is a discrete random variable then this Zed so defined is also a discrete random variable and I said this was about expectations so what can we say about the expectation of that new random variable h of X so that can be written

as a sum over X in the image of X of H of Little X time the probability x = x okay something very natural if you think about what we are trying to do here we're trying to work out the average value of H of x's as each little X is weighted by the probabilities of xal X and that's what this is trying to do but we have a formalism and the formalism says the expectation of a a random variable is defined up there and if this is a random variable in its own right then

this expectation is defined accordingly we have to sum overall possible values of that new random variable Zed and we have to work out the probabilities of that random variable Z taking a particular that particular value Zed that we're summing over right so there is something to do here um that uh is uh is looking into how we set up random variables um but in order not to um to be drawn too deep into this at this point um I say the proof I maybe do it later but the result itself I'd like you to appreciate

as a result that is available to you and uh that you hopefully find sufficiently natural um to apply so here is an example or some examples for or what sorts of expectations associated with X you may want to calculate other than the expectation so if you take um h of x equal to x to the k for K greater than or equal to one then um the expectation that we're looking at here is the expectation of x to the K and um let's just give a name to that is called the k moment of X

okay and um well there are some other um or related things um that uh that I'll get back to um if not today then uh then uh um some other time if we take x equal to um x minus the expectation of x squared then um we will find the variance of X so it is something that relates back to to things that we certainly care a lot about um in probability as well as in statistics as in describing um not just what is our random variable on average but also what is the spread around

that average value that the random variable takes but as I say this is something that uh that I'll do some other time um because for now I want to make use of the fact that I've got this general formula there and uh that I can I can uh derive some other important properties of expectations from formulas just like that so here is another theorem um is that where I want to leave it I wonder I've been sloppy in a way that I didn't want to be sloppy I've written more sums here those sums may or

may not be finite be absolutely convergent so um I have to say if this sum converges absolutely okay so our context has been more than one random variable so here I started with a function H that takes one random variable up there now let me take two random variables and have a function from R squ into R then the same formula holds if I work out want to work out the expectation of H of X and Y then I can work that out by um taking a double sum over all possible values for x and

all possible values for y um where I'm just averaging the H of X Y over the probabilities that both X is equal to Little X and Y is equal to little y okay and this time let me um write it straight away provided um the sum converges absolutely okay well another theorem called for another proof but I'm definitely going to Omit this proof it's it's going to be similar to the other one right the results are effectively the same you have more sums to handle but uh in fact this other proof once you see it

um um addresses difficulties of of multiple sums um where um stepping up from that proof to this one will be a small step as a warmup I'd like to do the next one as a result that I will prove um because that um handles double sums as well in a way that is a little easier um to to follow so here's a consequence consequence is linearity of expectation so if I have two random variables X and Y discrete random variables and if I have a b real numbers then what I can well let me say

um random variables whose expectations exist well in that context um I can relate the expectation of the linear combination of these random variables to the expectations of the random variabl it's just the linear combination of the expectations of the random variables okay and the same is true what I what I said earlier this is a random variable in its own right because it's a function on a sample space and the definition of its expectation is what it is going over all possible values for that um linear combination but that's not the way that we we

need to approach it now because we have a more powerful theorem here um that allows us to um to bypass being explicit about what the possible values of the linear combination are so here um how do we apply that result h of XY if it is to give this um has to be h of x yal to ax + b y then the theorem above or to the left left actually um yields that the expectation of ax plus b b y that we care about is equal to in the notation of the theorem the expectation

of H of X and Y and that um we can write um as a double sum ah no more space as a double sum over X in the image of x and Y in the image of Y over um h of X Y into yeah let me write p x y of X and Y here in my concise notation with the joint probability Mass function so that h of X think of it as ax + b y here so what we then can do is we can say we've got these sums and these sums can

be applied separately onto uh the first term and onto the second term and splitting this apart in this way um is giving us um two sums where we can furthermore move some constants outside okay so that gives us a times a sum X in the image of X Y in the image of Y of um the um x * pxy of XY plus b * the double sum X in the image of X Y in the image of y y * the PX y of XY okay so what do we see here well we see

here that this x does not depend it's constant in the second sum so we can move it um out of that sum and what we then see is that what remains namely this sum and this probability here um that's why we related the marginal probability Mass function to the Joint probability Mass function so this sum is equal to P what are we summing we're summing y so we are retaining PX of X and the other sum gives us the expectation so we find a times what you recognize as the expectation of X right so that

is the sum here with the X and that term that's our definition of the expectation of X and we have a similar term here which you can handle similar IL L um after interchanging your your sums um you will find that is B * the expectation of Y by exactly the same argument and that's what we wanted to show okay so what have we done here we've we've written out our expectation as a double sum and then we've used rules for sums um if if a sum is over a product where one term does not

depend on what we're summing over we can move it outside and that's uh otherwise leading just to the um relationships between our joint Mass function and the marginal probability Mass function and then to the expectation of X right so everything then breaks down into into quantities that we are familiar with or at least that have appeared previously okay so here are some consequences remark so first if a is equal to 1 B is equal to 1 then we get that the expectation of of the sum of two random variables is the sum of the expectations

of the two random variables B if one variable is constant then what we find is that the expectation of ax + B * constant 1 um is equal to a * expectation of x + b or inductively you can establish that the expectation of a linear combination A1 X1 plus Etc plus a n xn will similarly be a times the expectation of X1 plus Etc plus a n time the expectation of xn and finally note that we made no assumptions about independence okay so that's uh a couple of of consequences just uh picking up uh

on on what we've we've discussed I hope you're happy with those but what's all this good for so one way in which this is useful is what you'll see on the problem sheet at some point um which is there are different ways of working out the expectation of a binomial random variable and one way would be to use our representation as a sum of independent beri variables while independent doesn't seem to matter but certainly the representation um gives you an easy way of working out that expectation which you can compare with a definition which involves

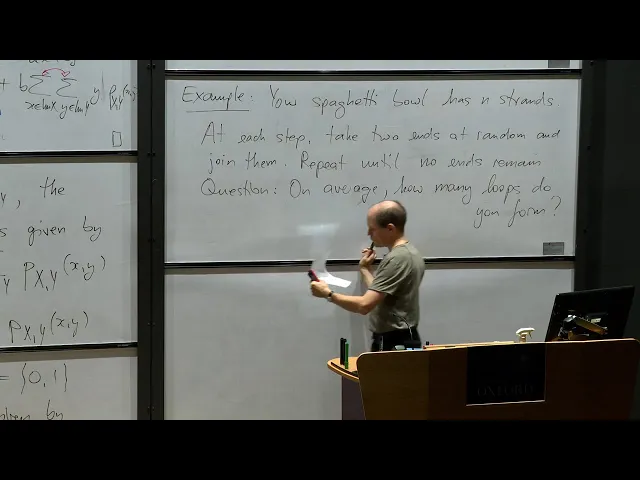

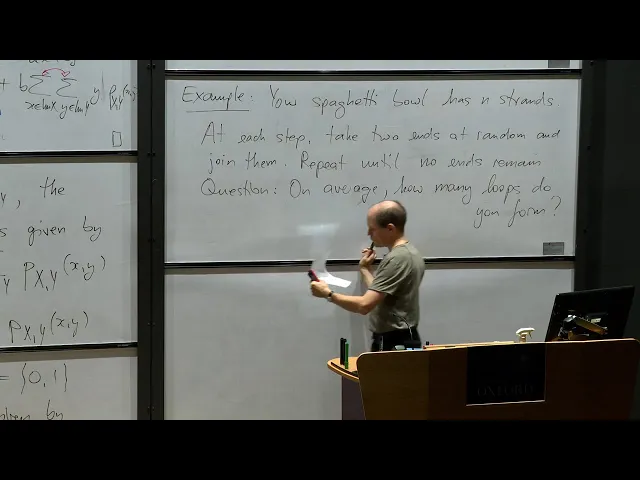

binomial sums and some rearrangement necessary right it's best explored by yourselves as part of the problem sheet question um but what I'd like to point out here is really something to do with that um Independence now here's an example which is maybe not the most useful example but uh but that's uh that's not not the the point here um so imagine you have a spaghetti bowl and lots of strands of spaghetti with two ends each and you want to tie them together at their ends right the way you do that is you pick two ends

out of your bowl suppose the bowl has n strands pick two ends and tie them together there's two possibilities either they are the ends of the same strand in which case you form a loop or they are the ends of different strands in which case you just get a longer strand of of spaghetti Okay now what's the question here I'm asking on average how many Loops are you going to form if you keep doing this until you have no more ends in the bow right so that's um something let me just write that down and

then see if I can uh get at least partway into a solution today so here's the example your spaghetti Bowl has n strands of spaghetti with two ends each well I think that's clear so at each step you take two ends at random and join them and you repeat until no ends remain question on average that's code for what's the expectation of the following random quantity so on average how many Loops do you form okay well let's make some observations there's nothing identically distributed here so we definitely need to be um somewh uh more inventive

here um when we want to analyze this so let's suppose that we have K 's remaining what is the chance that you form a loop the next time round right you pick two ends at random but the first one doesn't matter which it is it's the second one that's interesting because there's K minus one among which you choose and only one of them is the other end of the The Strand you've already picked for the other end of so um that means that 1 / k minus1 um is the chance of a loop at the

next step and the other observation is what happens well the number of ends changes right if you tie two ends to together no matter which case you're in whether you form a loop or not the number of n's drops by two okay so what does this give us in terms of random variables um let us uh look at the steps n steps for n strands um to losing two NS each time I denote by xal 1 the event that we have a loop at the I step and I denote x i equal to zero otherwise

right from what I've said then I have XI Bui distributed because it's 01 valued and we know what the chance of forming a loop is once we work out how many um ends we have for the I step and putting that together um you will realize that the K is equal to 2 into nus I on the I step and so we add one uh well maybe maybe it's one out uh but I think yeah um because I start summing from one um we we get this uh this denominator here and uh well we can

now work out the number of of Loops because we all we need to do is count the ones in our sequence so that is just summing the random variables X1 up to xn and we are interested in the expected number of Loops by linearity of expectation this is the expectation of X1 Plus Etc plus the expectation of xn and now you can write that more or less nicely um with a bit of change of indexation you will find that you are adding all the reciprocals of the first n odd integers um as the as the

answer here and I'm sorry for running over time but I think this is a way where uh where we can usefully stop because it's an example where we haven't thought about independent and it's good we haven't because asking whether those XIs are independent is maybe a question that's harder than uh than answering the question about the expectation of this number of Loops anyway thank you for today