You know what you're watching: you're watching AWS On Air. You're live with us here in Las Vegas at re:Invent 2024, and we're so excited that you're here with us. My name is Fiona McCan, I'm a Solutions Architect at AWS. I'm in the public sector, I work with nonprofits, and I have the pleasure of co-hosting with Jasmine. Jasmine, how are you doing? I'm great. Hi everyone, I'm KY, I'm a manager of product management here at AWS and I work on all things partner. And we're joined here by some fun guests who have

some exciting announcements to share with us. So who do we have here on the stage? Hi, my name is Rohit ml. I'm a principal product manager in the Amazon AI team, and lucky to have the opportunity to announce our latest models. Thanks, Rohit. Hey everyone, I'm DH Rajput. I'm also a senior product manager in the Amazon AGI team, and we are here to talk about Amazon Nova. Absolutely. So if anyone was watching our Tuesday keynote, Andy Jassy made an appearance, and he was there to announce a service that

you're now here to talk to us about. Would you mind telling our audience what it is that we're learning about today? Absolutely. As most of you must have heard, Andy himself came on stage to announce our Amazon Nova family of foundation models. These are state-of-the-art foundation models with frontier intelligence and industry-leading price performance. They come in two families of models. One is the understanding models, which are available

in four different price-performance variants and can take text, image, and video as input and generate text as output. Then there's a second category of models, called creative content generation models, which is text-to-image and text-to-video. We are very excited to talk about the creative content generation models today, which are Amazon Nova Canvas and Amazon Nova Reel. So it sounds like on Tuesday we announced the whole Nova family of models, and there are kind of two different buckets that they

fall into, one of them being multimodal-to-text and one of them being text to creative output. We're talking about text to creative output. What is so special about these text-to-creative-output models? So, we had learnings from last year. Last year I was actually on air, introducing... You were here with us! What were you talking about last year? Last year I was here talking about our Titan Image Generator model. You're talking about Titan, which is also a text-to-image model, and a lot of customers love it,

but what we did was take the learnings from our customers and a state-of-the-art architecture, because technology moves super fast in this space. A lot has happened in the world of gen AI this year, so we have taken all of that and created this new generation of text-to-image model, with state-of-the-art performance and the most comprehensive set of features available as an API. Enterprises in advertising, marketing, e-commerce, and social media find a lot of value in this model. So we

are announcing that as well as text-to-video, which is a brand new model that is not widely available from a lot of competitors, and that also comes with state-of-the-art performance. That covers text-to-video as well as image-to-video, so there are a lot of features coming in, and we'll be excited to show you that. I have to say it's pretty fun. After this was announced, my team at AWS, we were already excited. My team has been sending some videos that they've generated. I got one of

a dinosaur riding a skateboard through a jungle. That's what one of my team members sent me. I never thought I'd get that video, and it's kind of a new and exciting thing that we get to have now. Absolutely. So you mentioned that there's a set of API-applicable features that make this stand out. What kind of features are we looking at there? In terms of image generation, we have text-to-image generation. You can generate variations of the images that you like. You can guide the

generation of images using a color palette, because a lot of brand aesthetics need that. You can also provide a reference image and generate images that are similar in style. And then we have a lot of editing features too: inpainting and outpainting, where you want to replace some object or replace a background, especially in advertising, where customers want to showcase their products in more of a lifestyle setting. They can very easily do that with a single API, or remove backgrounds, et cetera. So it's a whole set of comprehensive features

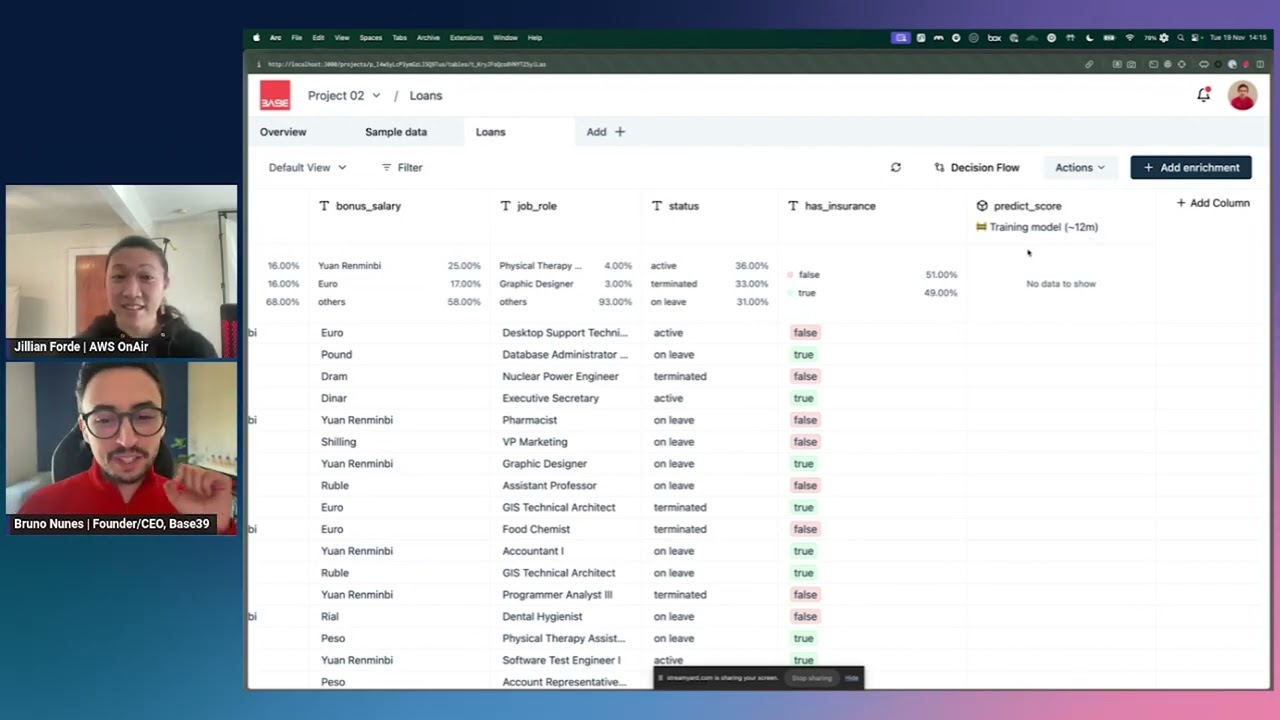

that we provide with this. Put my object in a kitchen versus put my object against a sunset? Yep. Okay, very cool. And all these models are available on Amazon Bedrock, which is our gen AI platform, with a lot of enterprise features around safety, customization, et cetera, so people are finding value in this. Yeah, that's awesome. So can we see it? I'm always like, I want to see it, I want to see how it works, to see how this has evolved. So it looks like you have Amazon Bedrock pulled up

in the AWS console here. Yeah, absolutely. I'm going to demo a few features. As Rohit talked about, we'll start with basic image generation, which is text-to-image, and then we'll move on to some other features as well. As you see, this is the AWS console, within AWS Bedrock, and I've selected Nova Canvas, which is our text-to-image generation model. And since you talked about a dinosaur, let me generate an image of a dinosaur in a bathtub, and let's see what it

outputs. Perfect. I've got one question in the chat here from Inder Mohan Li, who's asking: can we create a video as well with Nova? Yes, absolutely you can. We have Nova Reel, which is our text-to-video model, and we'll also show you a quick demo of that as well. Not only can you do it, but you'll get to see it today. Absolutely. So this takes a bit of time to generate, since we are generating three images here.
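For readers who want to try the same text-to-image step outside the console, here is a minimal sketch of what the request body might look like. The model ID, the field names (`taskType`, `textToImageParams`, `imageGenerationConfig`, `numberOfImages`, `seed`, `cfgScale`) and the default values are assumptions based on the public Bedrock documentation, not something shown in this segment, and should be checked against the current docs. The helper name `build_text_to_image_request` is hypothetical.

```python
import json

# Assumed model ID for Nova Canvas; verify in the Amazon Bedrock documentation.
NOVA_CANVAS_MODEL_ID = "amazon.nova-canvas-v1:0"


def build_text_to_image_request(prompt, number_of_images=3, seed=0, cfg_scale=6.5):
    """Build a JSON-serializable request body for a text-to-image task.

    The field names are assumptions, not taken from the transcript.
    """
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {
        "taskType": "TEXT_IMAGE",
        "textToImageParams": {"text": prompt},
        "imageGenerationConfig": {
            "numberOfImages": number_of_images,  # the demo generates three images
            "seed": seed,                        # assumed: changes which images you get
            "cfgScale": cfg_scale,               # assumed: how strictly the prompt is followed
        },
    }


body = build_text_to_image_request("a cute dinosaur in a bathtub")
print(json.dumps(body, indent=2))
```

With boto3, a body like this would typically be sent as `body=json.dumps(body)` through the `bedrock-runtime` client's `invoke_model` call together with the model ID. That step is left out here so the sketch runs without AWS credentials.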

So let me talk about some other features as well. This is our basic text-to-image. Oh, we are here: these are the three variations of cute dinosaurs in a bathtub. And we have a lot of parameters here. For example, you can change the seed: if you don't like this image, you can change the seed and get a different kind of image. We also have prompt strength here, so if you want the model to follow your

prompt closely, you put it at max; if you want the model to be more creative, you set it lower. So we have different kinds of controls here as well. Can we play around with inpainting and outpainting on here? Absolutely, I have a great demo for that as well. Oh, gorgeous. So let's say we have an image and we want to replace the background of that particular image. I'm going to use replace background here. Perfect. And let

me upload an image of an Amazon truck driving. So now the dinosaur is going to be in the Amazon truck? No, no, this is a different demo. We could possibly get a dinosaur truck as well, but this is more of an advertising kind of use case, where you have an Amazon truck driving on a highway and now you want to see this Amazon truck in a different kind of environment. So we

offer two kinds of prompts here. In terms of deciding what the object is, I'm just going to use a text prompt saying I want to place an Amazon delivery car. This tells the model that this is the particular object you want to maintain, and to then replace everything else in the background. Oh okay, so that's what we're preserving. And then I want to say that I want to replace the background with an urban background. Someone

in the chat is asking: is there any way to make it look more realistic? These look like kind of animation styles; how do you get it to look more real? We support different kinds of styles, like fantastical, realistic, photorealistic, and photo studio, completely through natural language text, so you can include any of those words. If you want a cinematic image, you can include that word and you'll get something similar. As you can see, now the Amazon truck is in an urban city kind of setting here.
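The replace-background demo above uses two text inputs: one naming the object to preserve and one describing the new background. A rough sketch of how that could map onto a request body follows. It assumes the outpainting task and the field names `outPaintingParams`, `image`, `maskPrompt` and `text` from the public Bedrock documentation; the console may build the request differently, and the helper name and placeholder image bytes are hypothetical.

```python
import base64


def build_replace_background_request(image_bytes, keep_description, background_prompt):
    """Build a request body that keeps one object and regenerates the rest.

    Assumed field names: "maskPrompt" describes what to preserve and
    "text" describes the new background. Verify against the Bedrock docs.
    """
    return {
        "taskType": "OUTPAINTING",
        "outPaintingParams": {
            # Images are assumed to travel as base64-encoded strings.
            "image": base64.b64encode(image_bytes).decode("ascii"),
            "maskPrompt": keep_description,
            "text": background_prompt,
        },
    }


# Placeholder bytes stand in for a real PNG or JPEG file read from disk.
request = build_replace_background_request(
    b"fake-image-bytes",
    keep_description="Amazon delivery car",
    background_prompt="an urban background",
)
print(request["outPaintingParams"]["maskPrompt"])
```

Style words such as "photorealistic" or "cinematic" would simply go into the background prompt string, in line with the natural-language styling described in the demo.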

Okay, I like that. It's driving over the line a little bit; can you put the car in the right lane? Well, that's something you could describe, and iterate on until you get it. Absolutely. Looks like we have someone in the chat here who says there are a few features that our customers find particularly attractive about Nova Canvas and Nova Reel, and that's a comprehensive set of editing controls, like unique options for natural language and direct control of color and composition. They're also messaging something here about watermarking. Is that something that's available?

Yes, we support a very broad range of transparency as well as responsibility features. All the images that we produce are watermarked by default, so you can trace back that they were created on AWS Bedrock as well as from the Amazon Nova Canvas model. This increases the transparency and credibility that these are AI-generated images, and we take this responsibility very seriously. Yeah, absolutely, that's great to see. So you've mentioned our text-to-image. Are we going to get to see that text

to video? Absolutely. So again I'm on the AWS console here. We support two kinds of features in Nova Reel. One is text-to-video, where you can just input text and get a 6-second video out. But we also support another feature, which is image-to-video, where you can input an image, which becomes the starting key. Exactly, exactly. The video generation typically takes 3 minutes, so I'm just going to show you a few pre-generated videos, as you can see. Done for us! It's

almost like you're prepared. One key feature that I wanted to talk about in our text-to-video model is that it not only understands what you are trying to create, but it also understands different kinds of camera motions, which are very important for creative use cases. As you can see here, my prompt is "closeup of a large seashell in sand", and then I want to make sure that the camera is zooming in as well. So you can give these different kinds of camera motions.
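As a rough illustration of the two video modes just described (text only, or text plus a starting image), here is a sketch of a request builder. The model ID, the field names (`textToVideoParams`, `images`, `videoGenerationConfig`) and the `fps` and `dimension` values are assumptions taken from the public Bedrock documentation rather than from this demo; only the 6-second duration comes from the segment. The helper name `build_video_request` is hypothetical.

```python
import base64

# Assumed model ID for Nova Reel; verify in the Amazon Bedrock documentation.
NOVA_REEL_MODEL_ID = "amazon.nova-reel-v1:0"


def build_video_request(prompt, start_image_png=None, seed=0):
    """Build a request body for text-to-video, optionally with a starting image."""
    params = {"text": prompt}
    if start_image_png is not None:
        # Assumed shape for the optional starting image (image-to-video).
        encoded = base64.b64encode(start_image_png).decode("ascii")
        params["images"] = [{"format": "png", "source": {"bytes": encoded}}]
    return {
        "taskType": "TEXT_VIDEO",
        "textToVideoParams": params,
        "videoGenerationConfig": {
            "durationSeconds": 6,     # the segment mentions 6-second videos
            "fps": 24,                # assumption
            "dimension": "1280x720",  # assumption
            "seed": seed,
        },
    }


# The camera motion is expressed in plain language inside the prompt.
text_only = build_video_request("Closeup of a large seashell in sand. Camera zooms in.")
with_image = build_video_request("dolly forward", start_image_png=b"abc")
print(text_only["textToVideoParams"]["text"])
```

Because generation takes minutes, a body like this would normally be submitted as an asynchronous job (in boto3, the `bedrock-runtime` client's `start_async_invoke`, with an S3 output location) rather than a blocking call. That part is omitted so the sketch runs offline.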

Our model understands 20-plus different camera motions which are very widely used, and you can guide the model not only on what you want to see in the video but how you want to see it. Ah okay, so you have that kind of cinematic control. Absolutely. This is a quick generation that I had done before. As you can see here, you have a seashell, you have a scenic background, and then the camera is zooming in. This is relaxing. I can almost smell the

salt air. Yeah. We have a question in the chat, more about watermarks: how do they work? Is it saved in the metadata of the generated object? No, it's... sorry, go ahead. It's in the pixels of the image, and that's why we also provide a feature on Bedrock, which is watermark detection, which basically uses the model that we have created to inject and detect the watermark that is created by us. So it is our own technology, and it's embedded in the pixels while the image is generated. Yeah.

But we provide both features. Yeah, absolutely. We also have a shout-out here: someone is zooming in from Germany, someone's on Twitch here from Germany. Thank you so much. Everyone else, if you're zooming in, if you're twitching in from somewhere else in the world, let us know where you're watching from. Send us some comments, send us some questions; we love getting to interact with you. All right, what's next? I wanted to talk about the image-to-video feature as well. I was going to start with an image

here, which is essentially a background of... let me actually just switch to the image and then you'll be able to see it better. So this is the image that I wanted to animate, and I also want to say "forward", as in zooming into this particular image, and then I want to animate this. What I can do is use the image-to-video feature, where you input a particular reference image, and I can just say "dolly forward" as a text prompt, and that way the model

understands that the input image needs to be the starting point, and then uses the text prompt to do what you want with the video. I have pre-generated this as well. Perfect. So you'll be able to see this very quickly. Lovely. Oh, look at that, and the water's moving too. Wow. Yeah, this should also be relaxing, I hope. So you're mentioning studio quality. What are the benchmarks or standards that you use to measure that? Most video gen benchmarks today use human evaluation, so what we do is generate

videos from our model as well as different models, and then we have a set of human evaluators who do blind testing of these models on different kinds of criteria: video quality, how consistent the subjects in the video are, whether the video matches what you said in the text, the quality of the features, and whether the video is consistent throughout all the scenes. We have AP testers do this across a very large set of prompts, and this is how we

have compared our models to others, and I'm very happy to say that we are state-of-the-art in video quality as well. Well, congratulations on that. You mentioned already that you could see this potentially helping people who are working in the marketing field. Could you tell us a little bit more about how you imagine your user base, our customers, interacting with this, and how this might change their working landscape? Sure, I can give you a great example. Amazon Ads is already using Nova Reel to generate

self-service ads for sellers or advertisers on amazon.com. Creating a video today typically comes at a very high cost. It takes a lot of time, weeks and months, to create a video, and there's also a very steep learning curve with any kind of legacy tools you want to use. But using Nova Reel, Amazon Ads has been able to launch this video generator, where any seller or 3P advertiser, small or large, can come in and generate a video in a few minutes and then, you

know, push it to production. They've already launched it at Amazon Accelerate, and I'm very happy to say they're using the Nova Reel model. Nice. Are there any connectors, or any ways that you can set this up with connections? That's what they're kind of asking in the chat: is it compatible with other programs or anything like that? The way this works is we have an API service on AWS Bedrock, and you can use Amazon Nova Reel or Nova Canvas

through any kind of integrations with other AWS products, so it should work very seamlessly. But if there's a very specific use case, please reach out to us and we'll make sure we make it work for you. I also want to add that with Nova Reel we have a very interesting lineup of features upcoming in Q1, and one of the coolest is the ability to generate long videos with storyboarding. Currently the model generates 6 seconds of video

at 720p resolution. We'll increase that to 1080p, and customers will be able to generate multiple scenes that the model will stitch together, generating up to 2 minutes, which nobody else currently provides in the industry. Wow, it's a long video. My attention span struggles to think about one thing for a few minutes these days. Well, that'll be good. What types of customers are using this? You mentioned advertising, and we've seen a little bit of that in some of the other demos where folks are talking about Nova, but what are

you seeing customers adopting and driving towards? We are actually very surprised whenever we talk to customers: okay, this is a use case which we had never thought of. Publishing is one use case. Publishers have these books and they want to do visual storytelling. They started with image generation, with Canvas, for example for a kids' book, where they want more illustrations. But now they want to take it one step

further, generating an internet version of that, like an ebook or something, and you can also generate these small videos with it. So it opens up new opportunities in markets that we had never thought of, and it's very exciting to see that. Beyond just advertising, marketing, social media, and entertainment, where we know that videos carry a lot of worth, all of these use cases are also upcoming. You've mentioned that it can be applied with a variety of

cinematography terms. I think one that you used there was dolly. I didn't know what dolly was beforehand. Do I need to have cinematography experience to use this? No, absolutely not. We provide a very comprehensive set of prompting guidelines, which show examples of what our model can do and the typical terms that you need to use, and all of that is freely available in the documentation. The learning curve is extremely small; you don't need to know a lot. Just go to our prompting guidelines.

It's a one-pager. Read them and you should be good to go. I also didn't know about dolly, so I'm not alone there. We're all learning together. All right, that's about all the time that we have here, but where do you want customers to go to start using these two new features? If customers have an AWS account ID and are already using Bedrock, then they can very easily try out the models. If they're not on Bedrock, then they should be on Bedrock. What

are you doing if you're not on Bedrock? The other thing is we've recently launched this image editor app on PartyRock, so if you don't have an AWS account ID, you can simply log in with your existing Google ID or whatever and do some experimentation. Just check it out. That's so awesome, I did not know it was already on PartyRock. I love some PartyRock. So go make an AWS account, go learn about some PartyRock. This is all the time we have for this segment,

but we will be back with more live from re:Invent, and you're going to hear a little bit about training and certification coming up next, so stay tuned.