in the previous video we gave the definition of optimization and even formulated a few problems as optimization problems what we have not done however is discuss how you go about solving such problems what if i told you that each one of these three optimization problems can be solved easily with a few lines of code and note that the code is almost identical for these three problems even though the problems themselves look very different we won't go into the details of the code or the algorithms that's for a later video but what we will do today

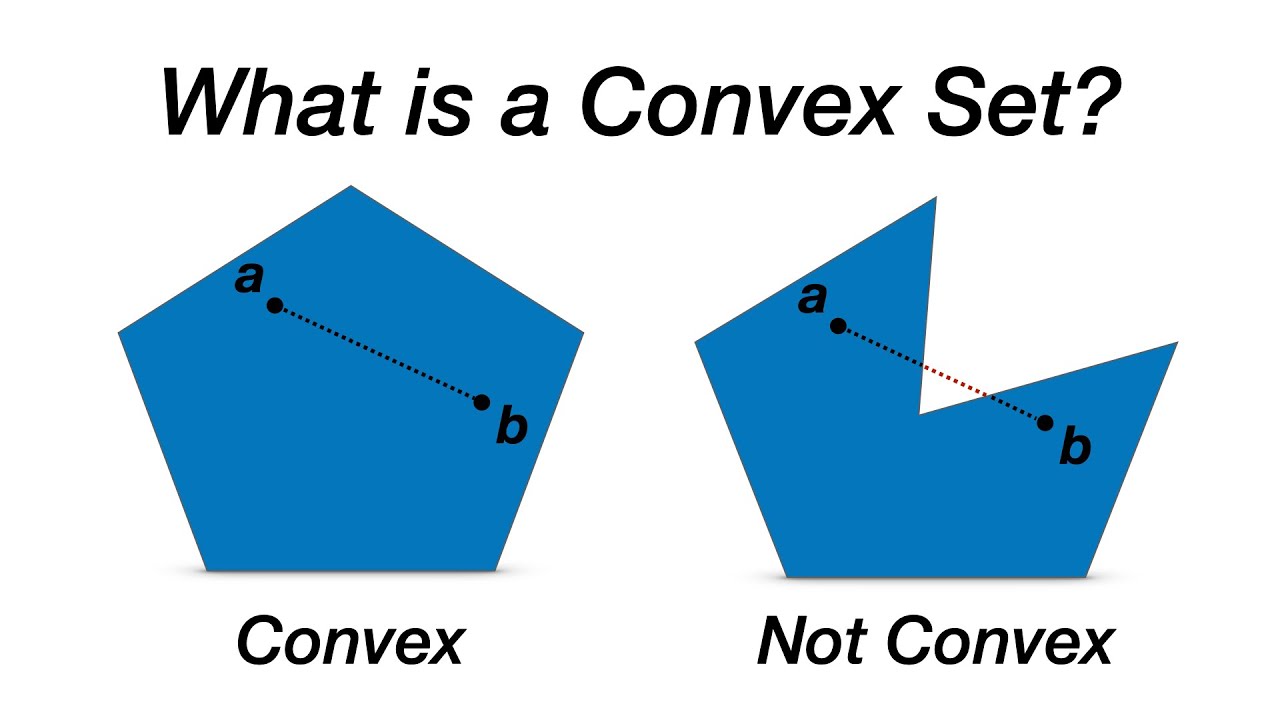

is present the common property that allows us to solve all of these problems in a unified fashion and this property is called as you might have guessed convexit so what is convicted if you open in a textbook you will find the definition of complexity in three different contexts the context of sets functions and optimization problems let's go through them one by one the first definition relates to sets a set is convex if for any pair of points inside the set the segment between the two points falls entirely inside the set it's easy to visualize how

a set can fail to be convex so for example this set with a hole in it fails to become vex because the middle point falls outside of the stand for similar reasons this set here also fails to be convex examples of convex sets include planes polyhedra aka intersection of half spaces bowls potato shapes etc you get the idea the second definition relates to functions a function f is convex if it's epigraph that is the region above the graph of the function is a convex set intuitively this means that the function is ball shaped or curved

upward if you will convex functions include the function x or any linear function for that matter the function x squared the exponential function etc non-convex functions include the function x cubed the sine function etc and i should mention here that a nice way to construct new complex functions from existing ones is to take a function that is known to be convex and scale it with a positive constant or take two convex functions and add them together the third context where one encounters convexity is that of optimization problems an optimization problem is said to be convex

if the objective function f is convex the functions gi that give the inequality constraints are convex and the functions h i are linear you might wonder why we require the functions h i to be linear and not just convex the reason for that is simple we can replace an equality h equals zero with two inequalities h smaller than zero and minus h smaller than zero and the only functions for which h and minus h are both convex are the linear ones now that we get the formalities out of the way what is convexity really and

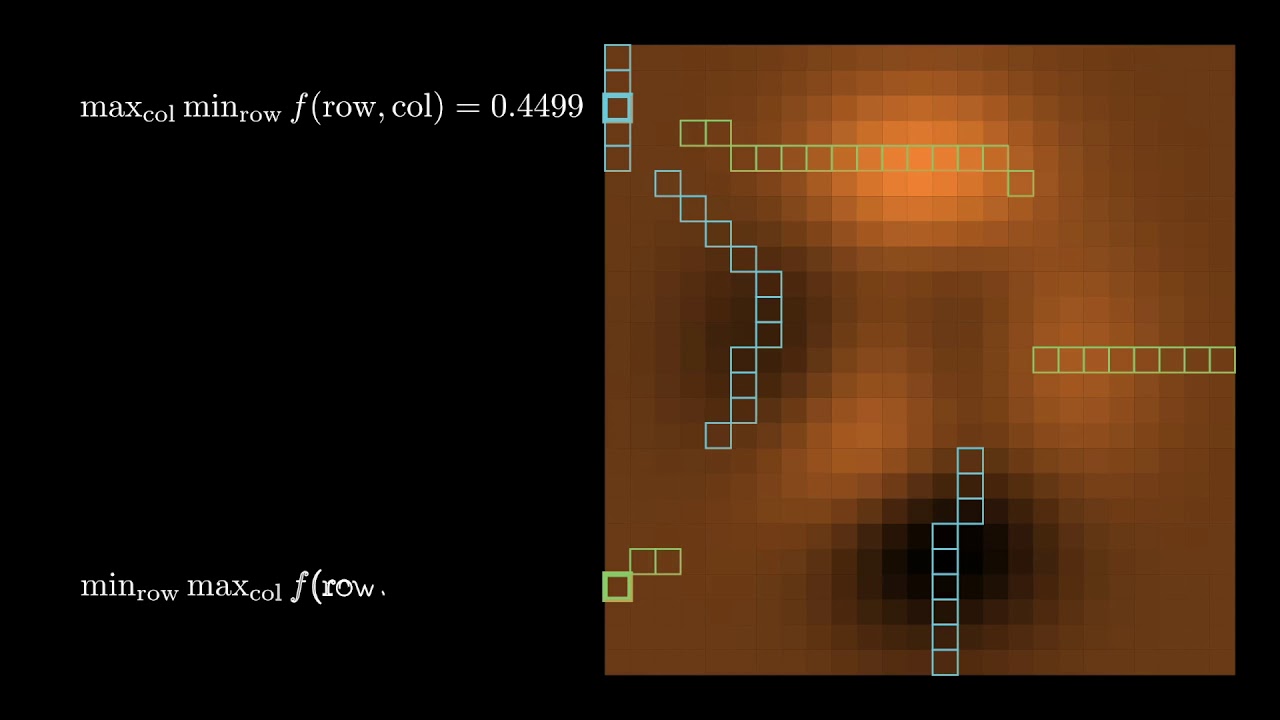

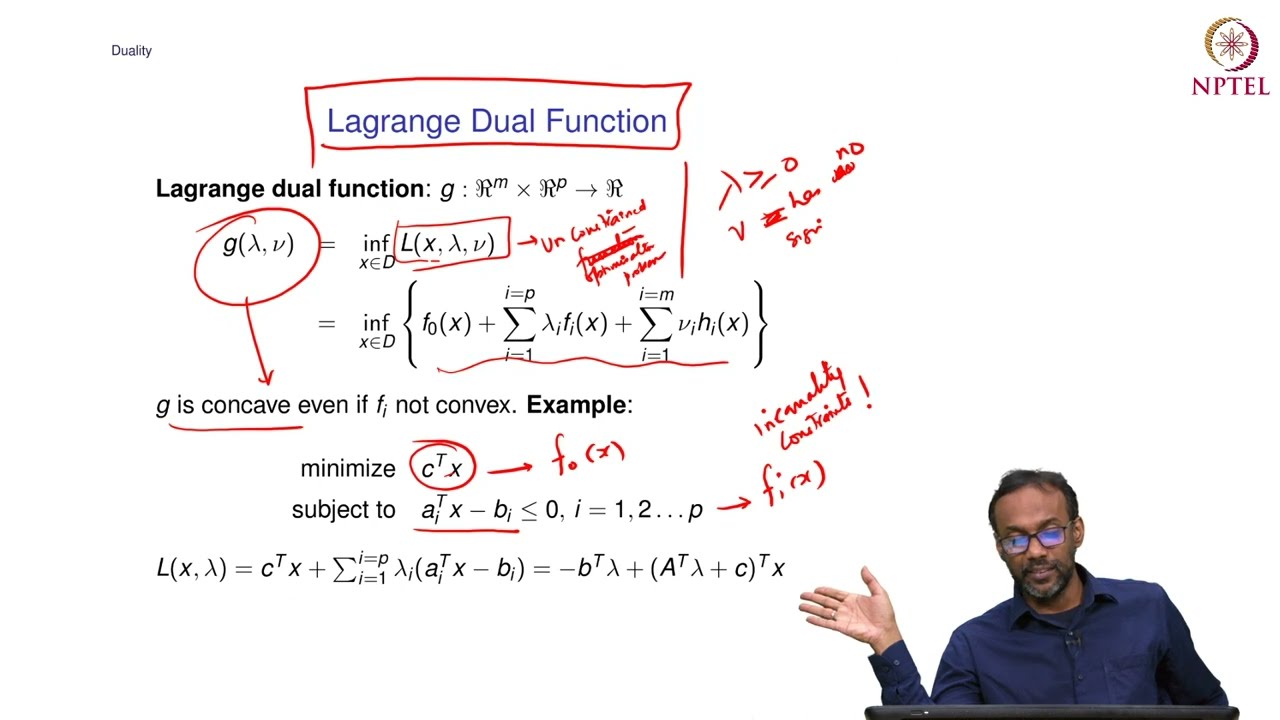

why do we care about it i would argue that the crucial thing that convexity allows us to do is apply the principle of duality the principle of duality is so fundamental and so beautiful that if there was only one thing you should remember from this video it should be this one duality is the ability to view a mathematical concept from two different perspectives a primal one and a dual one let's be more concrete and see what duality means in the three context where convexity appears sets functions and optimization problems let's start with convex sets the

definition i gave before was internal i took points inside the set and i required that the segment between them be inside the set too there is a dual external definition too now instead of taking a point inside my set i take a hyper plane that supports my set this simply means that i take a hyperplane in such a way that my set falls entirely in the positive side of this hyperplane with the definition of supporting hyperplanes in mind the dual definition of convex sets is as follows a set is convex if when you consider the

intersection of the positive regions of all of its supporting hyperplanes you recover the set itself let that sink in for a moment and let's just take some time to appreciate the ramifications of this definition to optimization according to this dual definition convex sets are the ones defined with possibly infinitely many linear inequalities so somehow convex sets through the lens of the duality principle are polyhedra with possibly infinitely many faces and most of the things that we love about the linear world that makes things simpler extend to the convex world with little effort this actually reminds

me of the quote of rockefeller one of the leading figures in optimization theory who said in fact the great watershed in optimization isn't between linearity and non-linearity but between convexity and non-convexity what he means by that is that for a long time we thought that linear problems are easy and nonlinear problems are hard but as it turns out and this is a more and more commonly held belief what actually makes optimization problems easy is convexity which generalizes the notion of just linearity back to duality the duality principle can be more clearly seen in the context

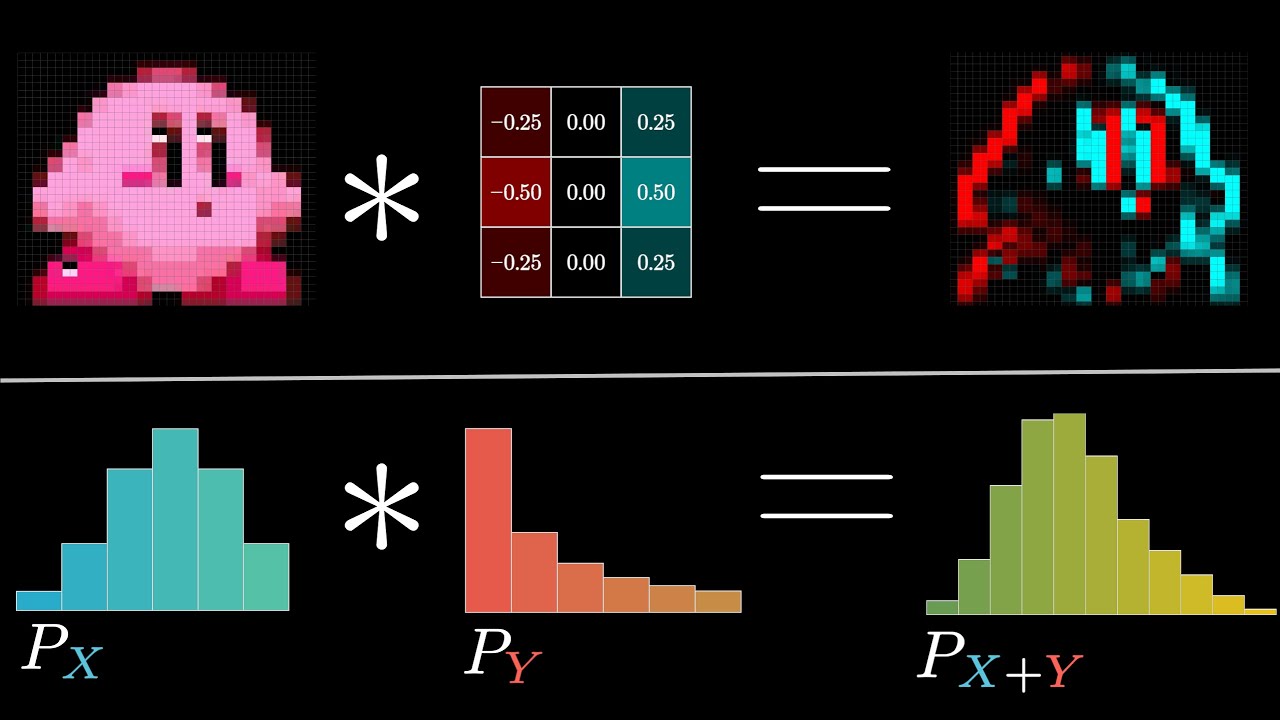

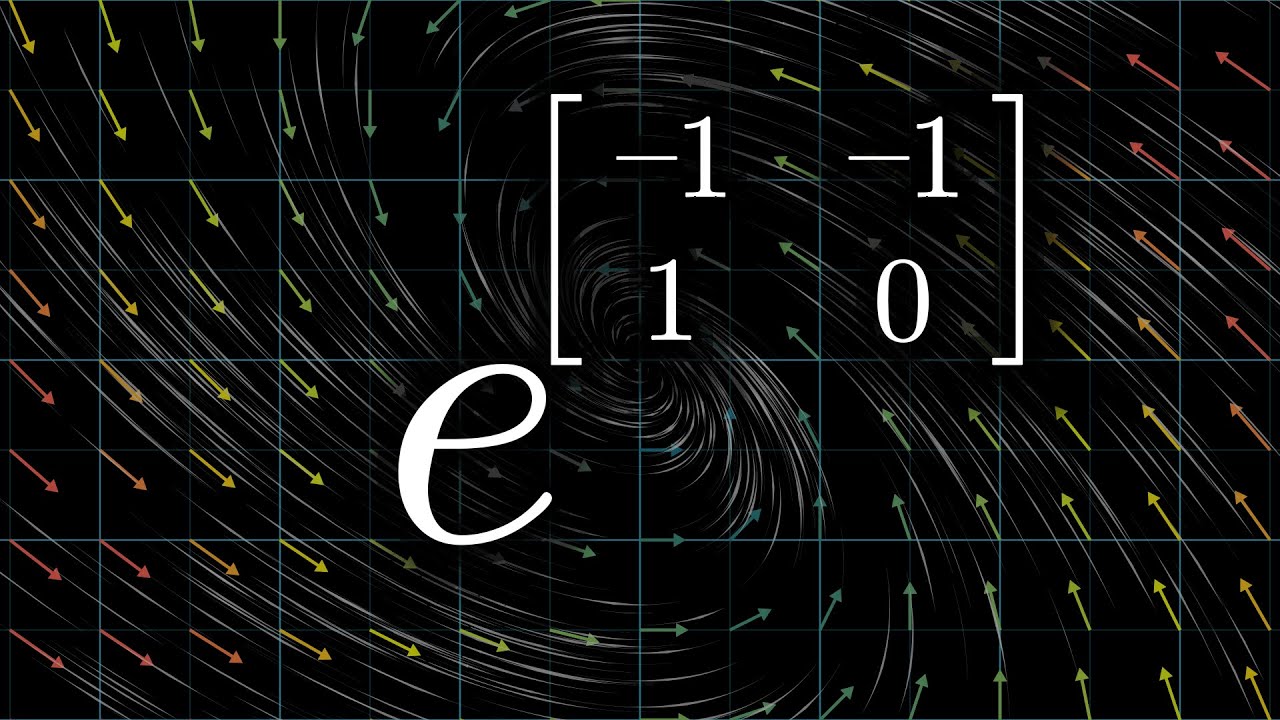

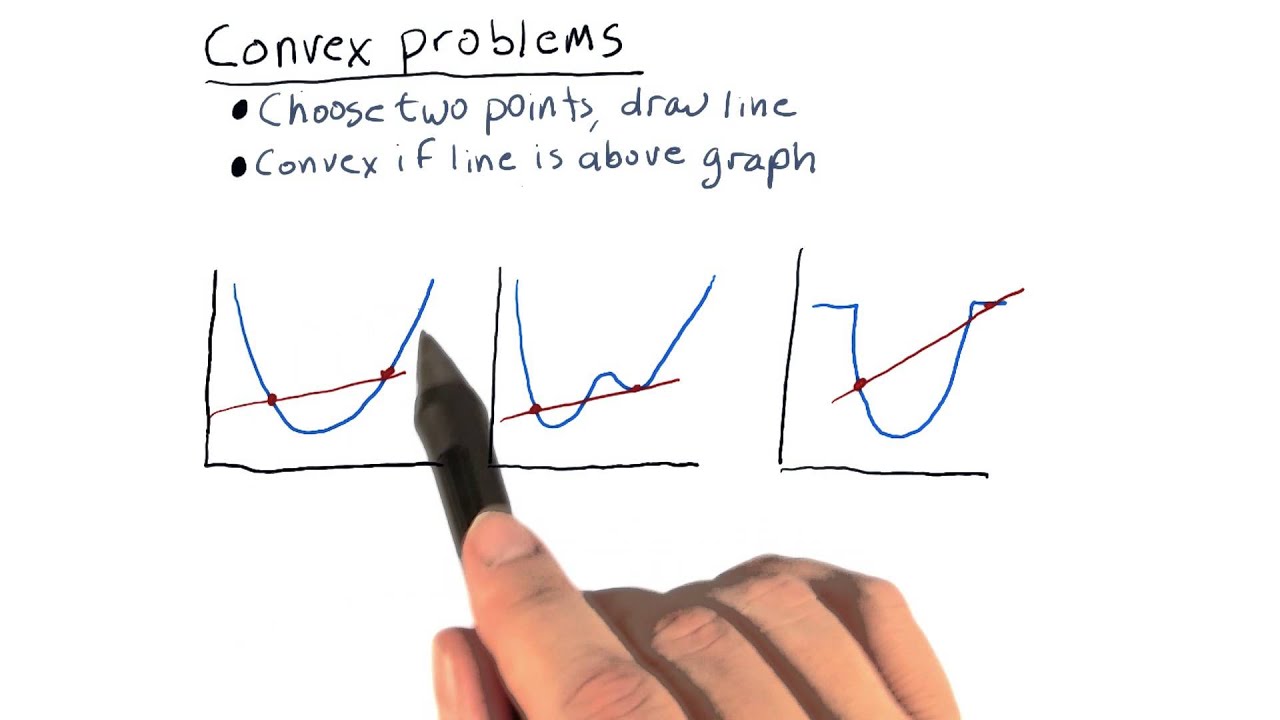

of complex functions as it lets you extrapolate the local behavior of a function to some global property of this function and this statement will make more sense in a second if we want to talk about local behavior of a function we have to mention taylor's approximation theorem in its simplest form this theorem states that if you zoom in on a function around the point x then it would look a lot like a linear function or in other words the line or in higher dimension the hyperplane given by this equation is a good approximation of the

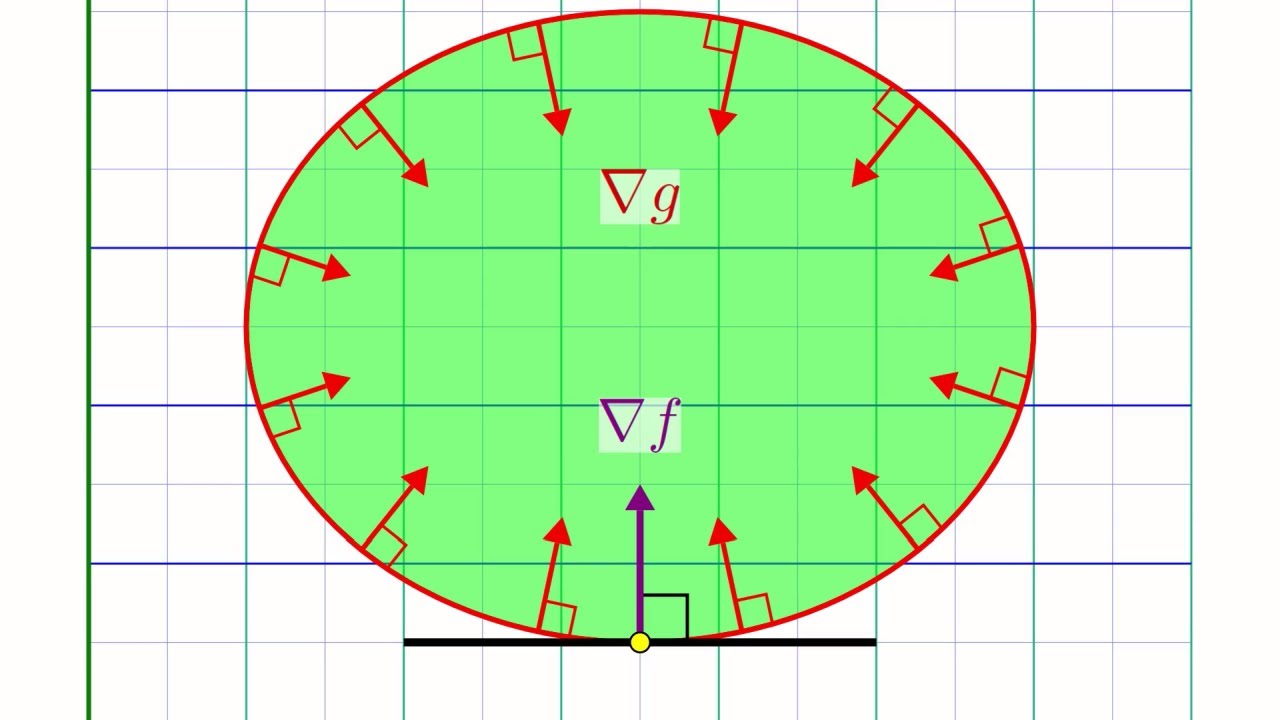

graph of f around the point x and this hyperplane is usually called a tangent hyperplane the dual definition of convex functions is as follows f is convex if and only if its graph is always above its tangent hyperplanes the ramifications of this new definition are huge let's say you find the point x where the gradient of f is equal to zero then the tangent hyperplane at that point is horizontal and since convex functions will always be above their tangent planes this mean that the point x is actually a global minimizer of f and this already

gives us a way to solve and constrain minimization problems take the function x squared plus one as an example this function is convex and its gradient or derivative is two times x so when x equals zero the derivative is equal to zero so x equals zero is where this function attains its minimum another example of an constraint optimization problem is the least squares problem we saw in the last video to find its minimum we simply set the gradient to 0 and then we solve for x the third and last example i wanted to show you

is that of a non-constant linear function like this one the gradient of this function is a constant given by a non-zero constant vector and since this gradient can never vanish we can conclude that this linear function doesn't have a minimizer and indeed it's not hard to see that non-constant linear functions are unbounded [Music] notice how in all of the examples we went from an information about the gradient which is local to a global property of the function that is that x is a global minimizer of the function and all of that thanks to convexity a

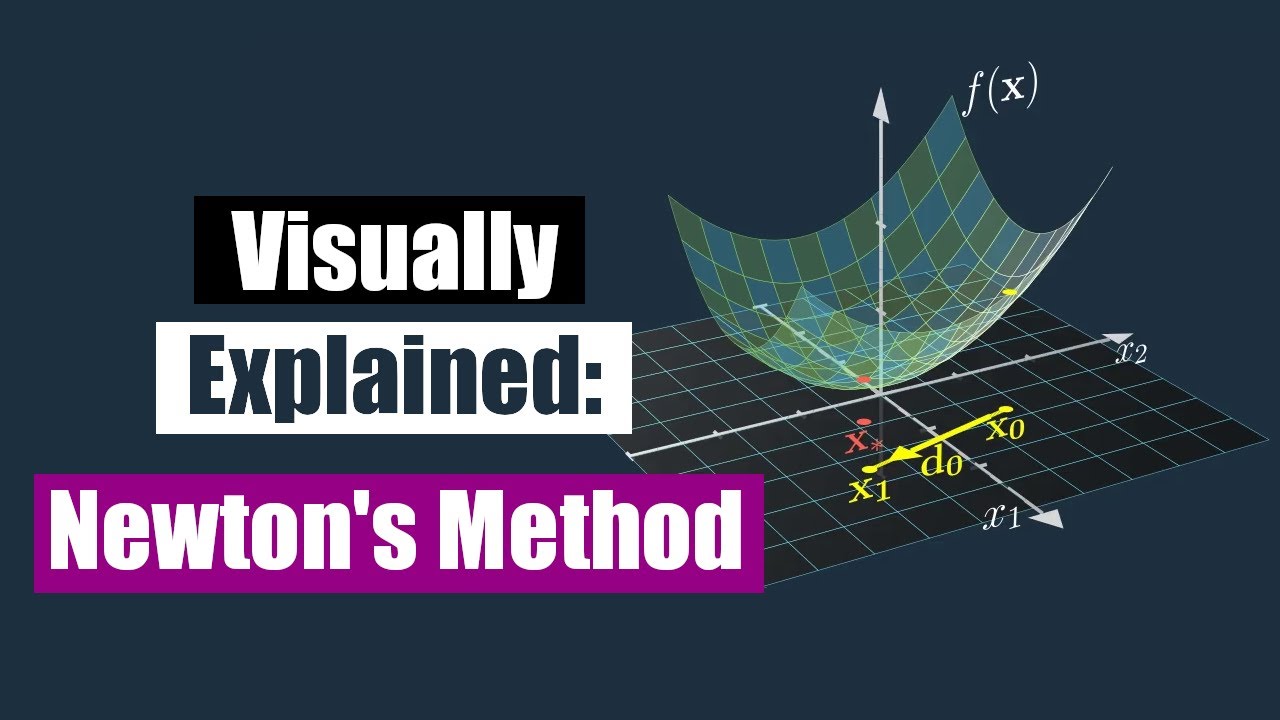

word of warning here in general not all convex optimization problems are as easy to solve are these three for one the objective function can be more complicated so much so that it would not be possible to solve the gradient equation directly and in that case several methods have been developed to solve the gradient equation chief amongst them is newton's method and i have already made a video about that if you're interested a more serious problem is that optimization problems often will have constraints in the next video we will explore the topic of duality in convex

optimizations further and derive the kkd conditions that generalize the condition that grad of f equals zero to optimization problems with cancer friends and we will see a very nice solution technique to solve for these conditions that is known as the interior appointment and that will be the end of this second video thank you very much for watching and see you next time [Music] so