Jensen Huang, the CEO of Nvidia, said that in the future every single pixel in a video game is going to be generated, not rendered. Imagine you're playing a video game, and it's unlike any other game that anyone else has ever played: it is unique and custom to you. And here's the thing: it's actually being generated in real time. You might think this is some futuristic idea about how video games are going to be, but it's actually a lot closer than you think, and it might already be here. Today we have a new paper from Google

research that shows they were able to create the game Doom in a generated way using artificial intelligence. So I'm going to break this all down for you. I'm going to explain what it means and why it really will change everything about how video games are made. First, let's start with a little bit about the game Doom. Doom is a classic video game that came out in the '90s and was absolutely pivotal for its time in terms of graphics and gameplay, and it's kind of a hacker tradition to put Doom on basically any device.

I've seen it run on so many devices, and in fact there is an entire subreddit dedicated to showing off all the different devices that people have gotten Doom to run on. So check this out: here's one on a phone, here's a price checker, here's a flower pot. I've even seen Doom running on a pregnancy test. And of course, because of that, Doom was the perfect game for Google to show off their new game engine project. Today, the way that video games are made is that everything is predefined. A developer or team of developers goes

in and writes all the code, all the rules, every single pixel and how it should operate. They define everything beforehand, and then you render the game and play it, and you're all reading from this game engine. Then we had an evolution in video games in which we had procedural generation, in everything from Diablo way back in the day to No Man's Sky more recently. That basically means that these levels or worlds were not necessarily predefined, but there was some formula to create them randomly. And now we have finally come to the next step in what

video games will be in the future: games that are generated on the fly for you using artificial intelligence. That means that no programmer has written code to define what the game looks like, how it works, or any of the rules. None of it. It is being generated in real time just for you, and that also means you can customize the game as much or as little as you want, in real time as well. Before I break down the paper, I want to show you what has led to this point. We've had text-to-image models

for a few years now: you type in something you want to see, and the text-to-image model gives you a picture of it. Then we started having text-to-video models: you type out what you want to see, and a video gets output, and nothing in the video is real. It is all generated by artificial intelligence. Then OpenAI released Sora. Sora has been an incredible achievement in the ability to create consistent videos that last up to a minute, simply based on a prompt, and the physics

look completely real. A lot of people noticed that some of the videos Sora was showing off looked a lot like video games, so the logical next step was actually being able to play these video games. But again, they are not pre-rendered: they are completely generated. Every single pixel you see is generated, and now that's what we have. So this is the paper, Diffusion Models Are Real-Time Game Engines. This is from Google Research, Tel Aviv University, and Google DeepMind. Take a look at this video and you will see what I mean. Here we have the

game Doom. This is a recreation of the 1993 version, and it is not pre-rendered or rendered whatsoever: it is all generated in real time. All of the monsters coming at you, the movement, walking through the different halls, all of the interface elements: these are all generated by a neural net. So if you thought completely generated content (video games, TV shows, movies) was some far-fetched idea that might happen at some point in the future, think again. This is happening right now. This isn't the first example of this, but it is the most sophisticated that

I've seen so far. I've seen Call of Duty-like video games that were completely generated using AI, but those games you couldn't actually play. This game you can play. So let's read the abstract quickly: "We present GameNGen, the first game engine powered entirely by a neural model that enables real-time interaction with a complex environment over long trajectories" (so, long time periods) "at high quality. GameNGen can interactively simulate the classic game Doom at over 20 frames per second on a single TPU." A TPU is Google's custom AI accelerator chip, basically like a custom GPU. So how did

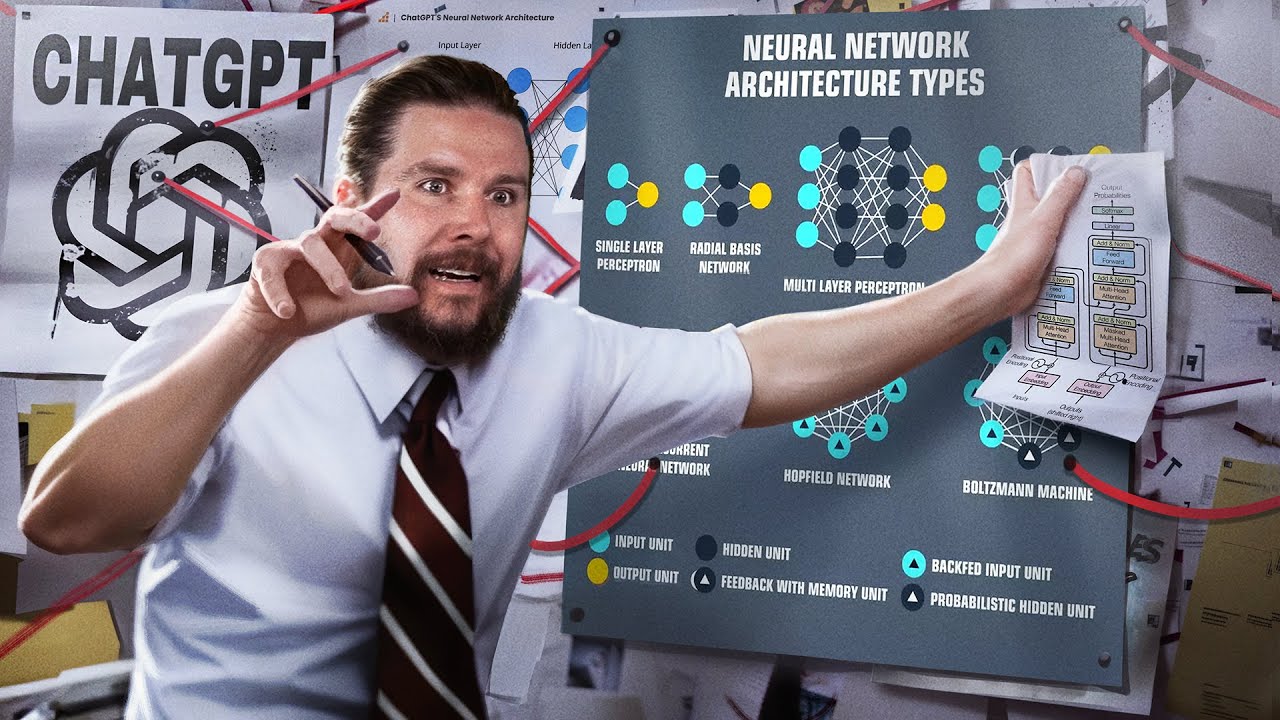

they actually do this? Well, the neural net basically predicts what the next frame is going to be at all times. If you're moving down the hallway, if you're shooting the gun, if you're killing a monster, it is constantly predicting what the next frame is going to be, exactly like a large language model predicts the next word in a sentence. GameNGen is trained in two phases: an RL (reinforcement learning) agent learns to play the game, and

the training sessions are recorded, so they're going to have a bunch of data about what the world looks like, how the player can move throughout the world, what the menu system looks like, what the game's logic and rules are, everything. Then a diffusion model is trained to produce the next frame, conditioned on the sequence of past frames and actions. So why is this such a big deal? This fundamentally changes the way that all content is going to be created and consumed. When you can generate content in real time, you can generate it for

an audience of one. You can describe the exact type of TV show that you want to see, the exact type of video game, how it plays, the style, any cheat codes that you want. You just describe it to AI and it will create it for you. This opens up the opportunity to have infinite amounts of content that are extremely tailored to an individual. And if you extrapolate this even further, it actually speaks to the future of programming as well. If neural nets and artificial intelligence in general are able to create video games, if they're

able to create different types of content, and we've already seen that they're able to create applications, there's really not going to be a need for developers in the future, whether it's video game developers or application developers, and even possibly content creators themselves. And if we really think futuristically, a lot of people have been saying that Sora, the OpenAI text-to-video model that I told you about earlier, is truly modeling the real world. So if we're able to model a video game with a neural net, why can't we model the actual world, the real world?

In my mind, we're only limited by the compute that we can throw at it. So this really ends up in a situation like The Matrix, if we really just extend out what this vision looks like, and it speaks to simulation theory. Now let me just read a couple of the most interesting bits from this research paper: we show that a complex video game, the iconic game Doom, can be run on a neural network, an augmented version of the open Stable Diffusion 1.4 model (which is great to know), in real time, while achieving a visual

quality comparable to that of the original game. While not an exact simulation, the neural model is able to perform complex game state updates, such as tallying health and ammo, attacking enemies, damaging objects, and opening doors, and to persist the game state over long trajectories. Now here's the thing: it doesn't actually store the state of the game in a database. If you were to close down that model and then reopen it, it would have no way to know what the state of the game is. It doesn't actually write it anywhere. It's all done in this

neural network. In the paper, they describe what they believe is a new paradigm for interactive video games: today, video games are programmed by humans. GameNGen is a proof of concept for one part of a new paradigm where games are weights of a neural model, not lines of code. GameNGen shows that an architecture and model weights exist such that a neural model can effectively run a complex game interactively on existing hardware. A small part of this vision, namely creating modifications or novel behaviors for existing games, might be achievable in the shorter term.
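To make the "predict the next frame from past frames and actions" idea a bit more concrete, here is a minimal sketch of that loop in Python. It is only an illustration under loud assumptions: `predict_next_frame` is a hypothetical stand-in (a real system would run a trained diffusion model there), frames are tiny tuples of numbers rather than images, and `CONTEXT_FRAMES` is an arbitrary window size, not a value from the paper. What it does show is the shape of the loop: every generated frame is fed back in as input, and the only "game state" is whatever still sits inside that sliding window.

```python
from collections import deque
from typing import Deque, List, Sequence, Tuple

Frame = Tuple[int, ...]  # toy stand-in for an image: a few pixel values
Action = int             # toy stand-in for a key press

CONTEXT_FRAMES = 4       # illustrative window size, NOT the paper's value


def predict_next_frame(frames: Sequence[Frame], actions: Sequence[Action]) -> Frame:
    """Hypothetical stand-in for the trained model.

    A real system would run a diffusion model conditioned on all of the
    context; this toy only shifts the latest frame by the latest action
    so that the loop below is runnable.
    """
    last_frame, last_action = frames[-1], actions[-1]
    return tuple((pixel + last_action) % 256 for pixel in last_frame)


def play(first_frame: Frame, player_actions: Sequence[Action]) -> List[Frame]:
    """Autoregressive rollout: each output frame becomes part of the next input."""
    frames: Deque[Frame] = deque([first_frame], maxlen=CONTEXT_FRAMES)
    actions: Deque[Action] = deque(maxlen=CONTEXT_FRAMES)
    shown: List[Frame] = []
    for action in player_actions:
        actions.append(action)
        next_frame = predict_next_frame(list(frames), list(actions))
        frames.append(next_frame)  # the oldest frame drops out of the window
        shown.append(next_frame)
    return shown


if __name__ == "__main__":
    for frame in play((0, 10, 20), [1, 1, 2, 0, 3]):
        print(frame)
```

Notice there is no save file and no database anywhere in this sketch. If you throw away the two deques, the "game" has nothing left to resume from, which is the same point made above about the state living only inside the model's inputs.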

For example, we might be able to convert a set of frames into a new playable level, or create a new character just based on example images, without having to author code. So imagine you have a Mario game and you want a brand new world to go explore. You could basically take the existing game and simply prompt a model to give you a new world based on that game, and you could even put yourself in these video games. The possibilities are truly unlimited. Now let me talk about some of the drawbacks and potential limitations, because there

definitely are some, and of course keep in mind this technology is extremely early. We're just at the beginning. First, it says "GameNGen suffers from a limited amount of memory. The model only has access to a little over 3 seconds of history, so it's remarkable that much of the game's logic is persisted for drastically longer time horizons." Another limitation is the fact that it is not a perfect simulation of the original game. Now, that only matters if you're trying to recreate an existing game. If you're simply generating a brand new game, that actually

doesn't matter. And it's not perfect: just like large language models, this game will hallucinate. The hallucinations come out in different ways. As we can see from this video, there are little twitches in the eyebrow of the avatar, some of the numbers that are being counted are off, and we can see little awkward movements in the graphics, but overall it does look quite good. So how do we fix all of these issues? Well, first of all, we have to get better at fixing and preventing hallucinations in general, which is a broad problem in the world of

artificial intelligence. We need to allow these models to persist memory for longer than just a few seconds, and again, this is another problem in the world of AI. AI doesn't currently really have any memory. We use certain memory techniques like retrieval-augmented generation to give large language models memory, but they don't actually have memory. They are kind of just frozen in time. And then, of course, we need a lot more training data and a lot more compute. When we mix all of these things together, I believe the future of video games

is truly going to be generated, just like Jensen Huang said early last year. So imagine a future where, rather than waiting for GTA 6, you can simply tell an AI to create it for you. This has huge implications for so many different industries, including movie production, television production, video games, YouTube of course, music, and even computing in general. I truly believe we're not really going to need an operating system or an application layer in the future. We're simply going to ask artificial intelligence for exactly what we need. It's going to generate an interface and

all the data we need in real time and then just serve it to us. There really is no purpose to the application layer or even the operating system at that point. The new operating system is artificial intelligence. If you liked this video, please consider giving it a like and subscribing, and I'll see you in the next one.
