[Music] from New York Times Opinion this is the Ezra Klein [Music] show this feels wrong to me but I have checked the dates it was barely more than a year ago that I wrote this piece about AI with the title This Changes Everything I ended up reading it on the show too and the piece was about the speed with which AI systems were improving it argued that we can usually trust that tomorrow is going to be roughly like today that next year is going to be roughly like this year that's not what we're seeing here these

systems are growing in power and capabilities at an astonishing rate the growth is exponential not linear when you look at surveys of AI researchers their timeline for how quickly AI is going to be able to do basically anything a human does better and more cheaply than a human the timeline is accelerating year by year on these surveys when I do my own reporting talking to the people inside these companies people at this strange intersection of excited and terrified of what they're building no one tells me they are seeing a reason to believe progress is going to

slow down and you might think that's just hype but a lot of them want it to slow down a lot of them are scared of how quickly it is moving they don't think that society is ready for it that regulation is ready for it they think the competitive pressures between the companies and the countries are dangerous they wish something would happen to make it all go slower but what they are seeing is they are hitting the milestones faster that we're getting closer and closer to truly transformational AI that there is so much money and

talent and attention flooding into the space that that is Becoming its own accelerant they are scared we should at least be paying attention and yet I find living in this moment really weird because as much as I know this wildly powerful technology is emerging beneath my fingertips as much as I believe it's going to change the world I live in profoundly I find it really hard to just fit it into my own day-to-day work I consistently you know sort of wander up to the AI ask it a question find myself somewhat impressed Or unimpressed at

the answer but it doesn't stick for me it is not a sticky habit it's true for a lot of people I know and I think that failure matters I think getting good at working with AI is going to be an important skill in the next few years I think having an intuition for how these systems work is going to be important just for understanding what is happening to society and you can't do that if you don't get over this hump in the learning curve if you don't get over this part where it's not really clear

how to make AI part of your life so I've been on a personal quest to get better at this and in that quest I have a guide Ethan Mollick is a professor at the Wharton School of the University of Pennsylvania he studies and writes about innovation and entrepreneurship but he has this newsletter One Useful Thing that has become really I think the best guide to how to begin using and how to get better at using AI he's also got a new book on the subject Co-Intelligence and so I asked him on the show

to walk me through what he's learned this is going to be I should say the first of three shows on this topic this one is about the present the next is about some things I'm very worried about in the near future particularly around what AI is going to do to our digital commons and then we're going to have a show that is a little bit more about the curve we are all on about the slightly further future and the world we might soon be living in as always my email for guest suggestions thoughts feedback is

ezrakleinshow@nytimes.com Ethan Mollick welcome to the show thanks for having me so let's assume I'm interested in AI and I tried ChatGPT a bunch of times and I was suitably impressed and weirded out for a minute and so I know the technology is powerful I've heard all these predictions about how it will take everything over become part of everything we do but I don't actually see how it fits into my life really at all what am I missing so you're not alone this is actually very common and I think part of

the reason is that the way ChatGPT works isn't really set up for you to understand how powerful it is you really do need to use the paid version they're significantly smarter and you can almost think of this like GPT-3 which nobody really paid attention to when it came out before ChatGPT was about as good as a sixth grader at writing GPT-3.5 the free version of ChatGPT is about as good as a high schooler or maybe even a college freshman or sophomore and GPT-4 is often as good as a PhD

in some forms of writing like there's a general smartness that increases but even more than that ability seems to increase and you're much more likely to get that feeling that you are working with something amazing as a result and if you don't Work with the frontier model you can lose track of what these systems can actually do on top of that you need to start just using it you kind of have to push past those first three questions my advice is usually bring it to every table that you come to in a legal and ethical

way so I use it for every aspect of my job in ways that I legally and ethically can and that's how I learn what it's good or bad at when you say bring it to every table you're at one that sounds like a big pain because now I've got to add another step of talking to the computer constantly but two it's just not obvious to me what that would look like so what does it look like what does it look like for you or what does it look like for others that you feel is applicable widely so I

just finished this book it's my third book I keep writing books even though I keep forgetting that writing books is really hard but this was I think my best book but also the most interesting to write and it was thanks to AI and there's almost no AI writing in the book but I used it continuously so things that would get in the way of writing I think I'm a much better writer than AI hopefully people agree but there's a lot of things that get in your way as a writer so I would get stuck on a

sentence I couldn't do a transition give me 30 versions of the sentence in radically different styles there's 200 different citations I had the AI read through the papers that I read through write notes on them and organize them for me I had the AI suggest analogies that might be useful I had the AI act as readers and in different personas read through the paper from the perspective of is there some example I could give that's better is this understandable or not and that's very typical of the kind of way that I would say bring it to

the table use it for everything and you'll find its limits and abilities let me ask you one specific question on that because I've been writing a book and on some bad days of writing the book I decided to play around with GPT-4 and one of the things that it got me thinking about was the kind of mistake or problem these systems can help you see and the kind they can't so they can do a lot of give me 15 versions of this paragraph 30 versions of this sentence and every once in a while you

get a good version or you'll shake something a little bit loose but almost always when I am stuck the problem is I don't know what I need to say oftentimes I have structured the chapter wrong oftentimes I've simply not done enough work and one of the difficulties for me about using AI is that AI never gives me the answer which is often the true answer this whole chapter is wrong it is poorly structured you have to delete it and start over it's not feeling right to you because it is not right and

I actually worry a little bit about tools that can see one kind of problem and trick you into thinking it's this easier problem but make it actually harder for you to see the other kind of problem that maybe if you were just sitting there banging your head against the wall of your computer or the wall of your own mind you'd eventually find I think that's a wise point I think there's two or three things bundled there the first of those is AI is good but it's not as good as you it is say the

80th percentile of writers based on some results maybe a little bit higher in some ways if it was able to have that burst of insight and to tell you this chapter is wrong and I've thought of a new way of phrasing it we would be at that sort of mythical AGI level of AI as smart as the best human and it just isn't yet I think the second issue is also quite profound which is what does using this tool shape us to do and not do a nice example that you just gave is writing

and I think a lot of us think about writing as thinking we don't know if that's true for everybody but for writers that's how they think and sometimes getting that shortcut could shortcut the thinking process so I've had to change sometimes a little bit how I think when I use AI for better or for worse so I think these are both concerns to be taken seriously for most people right if you're just going to pick one model what would you pick what do you recommend to people and second how do you recommend they access

it because something going on in the AI world is there are a lot of wrappers on these models so ChatGPT has an app Claude does not have an app obviously Google has its suite of products and there are organizations that have created a sort of different spin on somebody else's AI so Perplexity which is I believe built on GPT-4 now you can pay for it and it's more like a search engine interface and has some changes made to it for a lot of people the question of how easy and accessible the

thing is to access really matters so which model do you recommend to most people and which entry door do you recommend to most people and do they differ it's a really good question I recommend working with one of the models as directly as possible through the company that creates them and there's a few reasons for that one is you get as close to the unadulterated personality as possible and second that's where features tend to roll out first so if you like sort of intellectual challenge I think Claude 3 is the most intellectual of the models

as you said the biggest capability set right now is GPT-4 so if you do any math or coding work it does coding for you it has some really interesting interfaces that's what I would use and because GPT-5 is coming out that's fairly powerful and Google is probably the most accessible and plugged into the Google ecosystem so I don't think you can really go wrong with any of these generally I think Claude 3 is the most likely to freak you out right now and GPT-4 is probably the most likely to be super useful right now

so you say it takes about 10 hours to learn a model 10 hours is a long time actually what are you doing in that 10 Hours what are you figuring out how did you come to that number give me some texture on your 10 hour rule so first off I want to indicate the 10 hours is as arbitrary as 10,000 steps like there's no scientific basis for it this is an observation but it also does move you past the I poked at this for an evening and it moves you towards using this in a serious

way I don't know if 10 hours is the real limit but it seems to be somewhat transformative the key is to use it in an area where you have expertise so you can understand what it's good or bad at learn the shape of its capabilities when I taught my students this semester how to use AI and we have had three classes on that they learned the sort of theory behind it but then I gave them an assignment which was to replace themselves at their next job and they created amazing tools things that filed flight

plans or did tweeting or did deal memos in fact one of the students created a way of creating user personas which is something that you do in product development that's been used several thousand times in the last couple weeks in different companies so they were able to figure out uses that I never thought of to automate their job and their work because they were asked to do that so part of taking this seriously in the 10 hours is you're going to try and use it for your work you'll understand where it's good or bad what

it can automate what it can't and build from there something that feels to me like the theme of your work is that the way to approach this is not learning a tool it is building a relationship is that fair AI is built like a tool it's software it's very clear at this point that it's an emulation of thought but because of how it's built because of how it's constructed it is much more like working with a person than working with a tool and when we talk about it this way I almost feel

kind of bad because there's dangers in building a relationship with a system that is purely artificial and doesn't think and have emotions but honestly that is the way to go forward and that is sort of a great sin anthropomorphization in the AI literature because it can blind you to the fact that this is software with its own sets of foibles and approaches but if you think about it like programming then you end up in trouble in fact there's some early evidence that programmers are the worst people at using AI because it doesn't work like software

it doesn't do the things you would expect a tool to do tools shouldn't occasionally give you the wrong answer shouldn't give you different answers every time shouldn't insult you or try to convince you they love you and AIs do all these things and I find that teachers managers even parents editors are often better at using these systems because they're used to treating this as a person and they interact with it like a person would giving feedback and that helps you and then I think the second piece of that not tool piece is that when

I talk to OpenAI or Anthropic they don't have a hidden instruction manual there's no list of how you should use this as a writer or as a marketer or as an educator they don't even know what the capabilities of these systems are they're all sort of being discovered together and that is also not like a tool it's more like a person with capabilities that we don't fully know yet so you've done this with all the big models you've done I think much more than this actually with all the big models and one thing you

describe feeling is that they don't just have slightly different strengths and weaknesses but they have different for lack of a better term and to anthropomorphize personalities and that the 10 hours in part is about developing an intuition not just for how they work but kind of how they are and how they talk the sort of entity you're dealing with so give me your high level on how GPT-4 and Claude 3 and Google's Gemini are different what are their personalities like to you you know it's important to know the personalities not just

as personalities but because they are tricks those are tunable approaches that the system makers decide so it's weird to have this on one hand don't anthropomorphize because you're being manipulated because you are but on the other hand the only useful way is to anthropomorphize so keep in mind that you are dealing with the choices of the AI makers so for example Claude 3 is currently the warmest of the models and is the most allowed by its creators at Anthropic I think to act like a person so it's more willing to give you its personal

views such as they are and again those aren't real views those are views to make you happy than other models and it's a beautiful writer very good at writing kind of clever closest to humor I've found of any of the AIs less dad jokes and more actual almost jokes GPT-4 feels like a workhorse at this point it is the most kind of neutral of the approaches it wants to get stuff done for you and it will happily do that it doesn't have a lot of time for chitchat and then we've got Google's Bard which

feels like or Gemini now which feels like it really really wants to help we use this for teaching a lot and we build these scenarios where the AI actually acts like a counterparty in a negotiation so you get to practice a negotiation by negotiating with the AI and it works incredibly well I've been building simulations for 10 years can't imagine what a leap this has been but when we Try and get Google to do that it keeps leaping in on the part of the students to try and correct them and say no you didn't really

want to say this you wanted to say that and I'll play out the scenario as if it went better and it really wants to kind of make things good for you so these interactions with the AI do feel like you're working with people both in skills and in personality you were mentioning a minute ago that what the AIs do reflects decisions made by their programmers they reflect guardrails what they're going to let the AI say very famously Gemini came out and was very woke you would ask it to show you a picture of

soldiers in Nazi Germany and it would give you a very multicultural group of soldiers which is not how that army worked but that was something that they had built in to try to make more inclusive photography generation but there are also things that happen in these systems that people don't expect that the programmers don't understand so I remember in the previous generation of Claude which is from Anthropic that when it came out something that the people around it talked about was for some reason Claude was just a little bit more literary than the other

systems it was better at rewriting things in the voices of literary figures it just had a slightly artsier vibe and the people who trained it weren't exactly sure why now that still feels true to me right now of the ones I'm using I'm spending the most time with Claude 3 I just find it the most congenial they all have different strengths and weaknesses but there is a funny dimension to these where they are both reflecting the guardrails and the choices of the programmers and then deep inside the training data deep inside

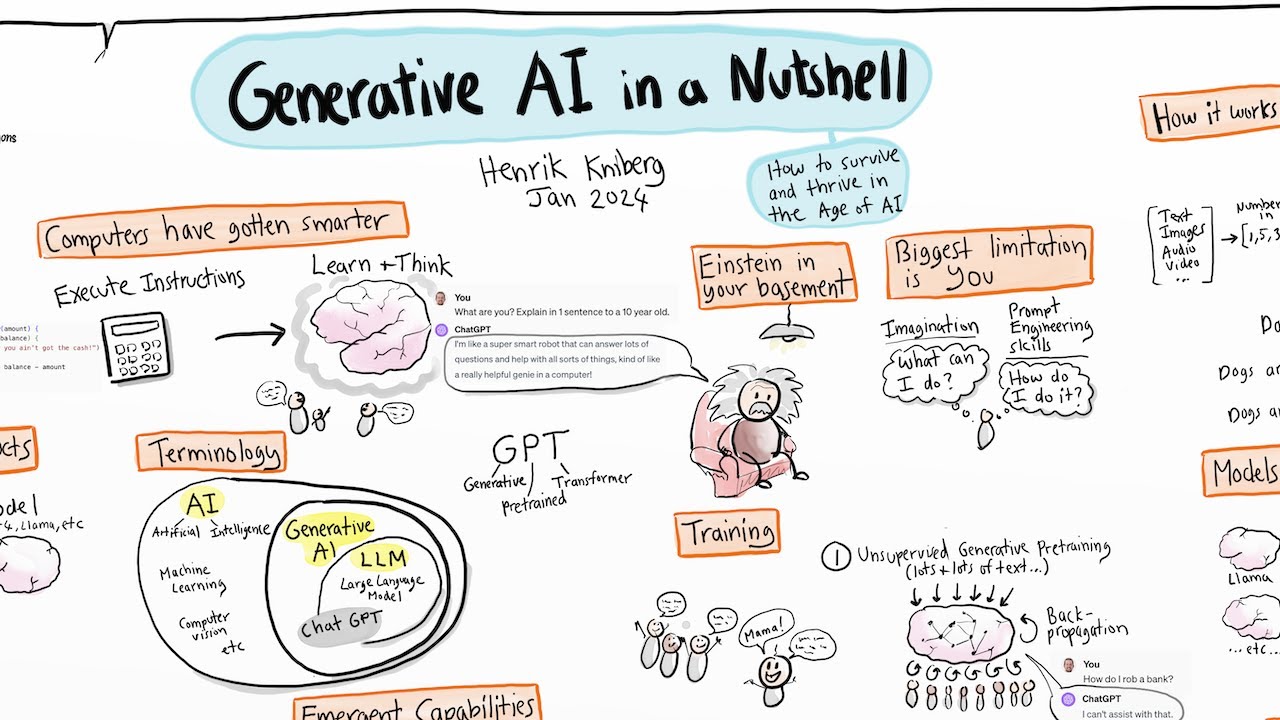

the way the various algorithms are combining there is some set of emergent qualities to them which gives them this at least edge of chance of randomness of something yeah that does feel almost like personality I think that's a very important point and fundamental about AI is the idea that we technically know how LLMs work but we don't know why they work the way they do or why they're as good as they are we really don't understand it you know the theories range from it's all fooling us to they've emulated the way

humans think because the structure of language is a structure of human thoughts so even though they don't think they can emulate it we don't know the answer but you're right there's these emergent sets of personalities and approaches when I talk to AI design companies they often can't explain why the AI stops refusing to answer a particular kind of question when they tune the AI to do something better like answer math better it suddenly does other things differently it's almost like adjusting the psychology of a system rather than tuning parameters so when I said that Claude is

allowed to be more personable part of that is that the system prompt in Claude which is the sort of initial instructions it gets allows it to be more personable than say Microsoft's Copilot formerly Bing which has explicit instructions after a fairly famous blowup a while ago that it's never supposed to talk about itself as a person or indicate feelings so there's some instructions but that's on top of these roiling systems that act in ways that even the creators don't expect one thing people know about using these models is that hallucinations just making stuff up

is a problem has that changed at all as we've moved from GPT-3.5 to 4 as we move from Claude 2 to 3 like has that become significantly better and if not how do you evaluate the trustworthiness of what you're being told so those are a couple overlapping questions the first of them is is it getting better over time so there is a paper in the field of medical citations that indicated that around 80 to 90% of citations had an error were made up with GPT-3.5 that's the free version of ChatGPT and that

drops for GPT-4 so hallucination rates are dropping over time but the AI still makes stuff up because all the AI does is hallucinate there's no mind there all it's doing is producing word after word they are just making stuff up all the time the fact that they're right so often is kind of shocking in a lot of ways and the way you avoid hallucination is not easily so one of the things we document in one of our research papers is we purposefully designed for a group of Boston Consulting Group consultants so an elite consulting company

we did a lot of work with them and one of the experiments we did was we created a task where the AI would be confident but wrong and when we gave people that task to do and they had access to AI they got the task wrong more often than people who didn't use AI because the AI misled them because they fell asleep at the wheel and all the early research we have on AI use suggests that when AIs get good enough we just stop paying attention but doesn't this make them unreliable in a very tricky

way 80% you're like it's always hallucinating 20% 5% it's enough that you can easily Be led into overconfidence and one of the reasons it's really tough here is you're combining something that knows how to seem extremely persuasive and confident you feed into the AI a 90 page paper on functions and characteristics of right-wing populism in Europe as I did last night and within seconds basically you get a summary out and the summary certainly seems confident about what's going on but on the other hand you Really don't know if it's true so for a lot of

what you might want to use it for that is unnerving absolutely and I think hard to grasp because we're used to things like type 2 errors where we search for something on the internet and don't find it we're not used to type 1 errors where we search for something and get an answer back that's made up this is a challenge and there's a couple things to think about one of those is I advocate the BAH standard best available human so is the AI more or less accurate than the best human you could consult in

that area and what does that mean for whether or not it's an appropriate question to ask and that's something that we kind of have to judge collectively it's valuable to have these studies being done by law professors and medical professionals and people like me my colleagues in management they're trying to understand how good is the AI and the answer is Pretty good right so it makes mistakes does it make more or less mistakes than a human is probably a question we should be asking a lot more and the second thing is the kind of tasks

that you judge it for I absolutely agree with you when summarizing information it may make errors whether it makes fewer than an intern you assign to it is an open question but you have to be aware of that error rate and that goes back to the 10-hour question the more you use these AIs the more you start to know when to be suspicious and when not to be that doesn't mean you're eliminating errors but it's just like if you assigned it to an intern you're like this person has a sociology degree they're going to do a really good

job summarizing this but their biases are going to be focused on sociological facts and not the political facts you start to learn these things so I think again that person model helps because you don't expect 100% reliability out of a person and that changes the kind of tasks you delegate but it also reflects something interesting about the nature of the systems you have a quote here that I think is very insightful you wrote the core irony of generative AIs is that AIs were supposed to be all logic and no imagination instead we get AIs

that make up information engage in seemingly emotional discussions and which are intensely creative and that last fact is one that makes many people Deeply uncomfortable there is this collision between what a computer is in our minds and then this strange thing we seem to have invented which is an entity that emerges out of language an entity that almost emerges out of art this is the thing I have the most trouble keeping in my mind that I need to use the AI as an imaginative creative partner and not as a calculator that uses Words I love

the phrase a calculator that uses words I think we have been let down by science fiction both in the utopias and apocalypses that AI might bring but also even more directly in our view of how machines should work people are constantly frustrated and give the same kinds of tests to AIs over and over again like doing math which it doesn't do very well they're getting better at this and on the other hand saying well creativity is a uniquely human spark that we can't touch and yet AI on any creativity test we give it which

again are all limited in different ways blows out humans in almost all measures of creativity that we have and all the measures are bad but that still means something but we were using those measures five years ago even though they were bad this is a point you make that I think is interesting and slightly unsettling yeah we never had to differentiate humans from machines before it was always easy so the idea that we had to have a scale that worked for people and machines who had that we had the Turing test which everyone knew

was a terrible idea but since no machine could pass it it was completely fine so the question is how do we measure this is an entirely separate set of issues like we don't even have a definition of sentience or consciousness and I think that you're exactly right on the point being that we are not ready for this kind of machine our intuition is bad so one of the things I will sometimes do and did quite recently is give the AI a series of personal documents emails I wrote to people I love they were very descriptive

of a particular moment in my life and then I will ask the AI about them or ask the AI to analyze me off of them and sometimes it's a little breathtaking almost every moment of true metaphysical shock to use a term somebody else gave me I've had here has been relational at how good the AI can be almost like a therapist right sometimes it will see the thing I am not saying in a letter or in a personal problem and it will zoom in there right it will give I think quicker and better

feedback in an intuitive way that is not simply mimicking back what I said and is dealing with a very specific situation it will do better than people I speak to in my life around that conversely I'm going to read a bit of it later I tried mightily to make Claude 3 a useful partner in prepping to speak to you and also in prepping for another podcast recently and I functionally never have a moment there where I'm all that impressed that makes complete sense I think the weird expectations we call it the jagged frontier of

AI that it's good at some stuff and bad at other stuff it's often unexpected it can lead to these weird moments of disappointment followed by elation or surprise and part of the reason why I advocate for people to use it on their job is it isn't going to outcompete you at whatever you're best at I mean I cannot imagine it's going to do a better job prepping someone for an interview than you're doing and that's not me just trying to be nice to you because you're interviewing me but because you're a good interviewer

you're a famous interviewer it's not going to be as good as that now there's questions about how good these systems get that we don't know but we're kind of in a weirdly comfortable spot in AI which is maybe it's the 80th percentile of many performances but you know I talk to Hollywood writers it's not close to writing like a Hollywood writer it's not close to being as good an analyst but it's better than the average person and so it's great as a supplement to weakness but not to strength but then we run

back into the problem you talked about which is in my weak areas I have trouble assessing whether the AI is accurate or not so it really becomes sort of a you know eating its own tail kind of problem but this gets to this question of what are you doing with it the AIs right now seem much stronger as amplifiers and feedback mechanisms and thought partners for you than they do as something you can really outsource your hard work and your thinking to and that to me is one of the differences between trying

to spend more time with these systems like when you come into them initially you're like okay here's a problem give me an answer whereas when you spend time with them you realize actually what you're trying to do with the AI is get it to elicit a better answer from you and that's why the book's called Co-Intelligence for right now we have a prosthesis for thinking that's like new in the world we haven't had that before I mean coffee but aside from that not much else and I think that there's value in that I think

learning to be a partner with this and where it can get wisdom out of you or not I was talking to a physics professor at Harvard he says all my best ideas now come from talking to the AI and I'm like well it doesn't do physics that well he's like no but it asks good questions and I think that there is some value in that kind of interactive piece it's part of why I'm so obsessed with the idea of AI and education because a good educator and I've been working on interactive education skill for a long

time a good educator is eliciting answers from a student and they're not telling students things so I think that that's a really nice distinction between sort of co-intelligence and thought partner and doing the work for you it certainly can do some work for you there's tedious work that the AI does really well but there's also this more brilliant piece of making us better people that I think is at least in the current state of AI a really awesome and amazing [Music] thing [Music] [Music] we've already talked a bit about Gemini is helpful and ChatGPT 4

is neutral and Claude is a bit warmer but you urge people to go much further than that you say to give your AI a personality tell it who to be so what do you mean by that and why so this is actually almost more of a technical trick even though it sounds like a social trick when you think about what AIs have done they've trained on the collective corpus of human knowledge and they know a lot of things and they're also probability machines so when you ask for an answer you're going to get the most

probable answer sort of with some variation in it and that answer is going to be very neutral if you're using GPT-4 it'll probably talk about a rich tapestry a lot it loves to talk about rich tapestries if you ask it to code something artistic it'll do a fractal it does very normal central AI things so part of your job is to get the AI to go to parts of this possibility space where the information is more specific to you more unique more interesting more likely to spark something in you yourself and you do that by

giving it context so it doesn't just give you an average answer it gives you something that's specialized for you the easiest way to provide context is a persona you are blank you know you are an expert at interviewing and you answer in a warm friendly style help me come up with interview questions it won't be miraculous in the same way that we were talking about before if you say you're Bill Gates it doesn't become Bill Gates but that changes the context of how it answers you it changes the kinds of probabilities it's pulling from and results in

much more customized and better results okay but this is weirder I think than you're quite letting on here so something you turned me on to is there's research showing that the AI is going to perform better on various tasks and differently on them depending on the personality so there's a study that gives a bunch of different personality prompts to one of the systems and then tries to get it to answer 50 math questions and the way it got the best performance was to tell the AI it was a Starfleet commander who was charting a course

through turbulence to the center of an anomaly but then when he wanted to get the best answer on 100 math questions what worked best was putting it in a thriller where you know the clock was ticking down I mean what the hell is that about what the hell is a good question and we're just scratching the surface right there's a nice study actually showing that if you emotionally manipulate the AI you get better math results so telling it your job depends on it gets you better results tipping especially $20 or $100 saying I'm

about to tip you if you do well seems to work pretty well It performs slightly worse in December than May and we think it's because it has internalized the idea of winter break um I'm sorry what well we don't know for sure I'm I'm holding you up here people have found the AI seems to be more accurate in May and the going theory is that it has read enough of the internet to think that it might possibly be on vacation in December so it produces more work with the same prompts more output in May than

it does in December I did a little experiment where I would show it pictures of outside I'm like look at how nice it is outside let's get to work but yes the going theory is that it has internalized the idea of winter break and therefore is lazier in December I want to just note to people that when ChatGPT came out last year and we did our first set of episodes on this the thing I told you was this is going to be a very weird world what's frustrating about that is that I guess I can

see the logic of why that might be also it sounds probably completely wrong but also I'm certain we will never know there's no way to go into the thing and figure that out but it would have genuinely never occurred to me before this second that there would be a temporal difference in the amount of work that GPT-4 would do on a question held constant over time like that would have never occurred to me as something that might change at all and I think that that is in some ways both as you said the deep weirdness

of these systems but also there's actually downside risks to this so we know for example there was an early paper from Anthropic on sandbagging that if you ask the AI dumber questions it would give you less accurate answers and we don't know the ways in which your grammar or the way you approach the AI we know the amount of spaces you put gets different answers so it is very hard because what it's basically doing is math on everything you've written to figure out what would come next and the fact that what comes next feels

insightful and humane and original doesn't change that that's what the math is doing so part of what I actually advise people to do is just not worry about it so much because I think then it becomes magic spells that we're incanting for the AI like I will pay you $20 you are wonderful at this it is summer blue is your favorite color Sam Altman loves you and you go insane so acting with it conversationally tends to be the best approach and personas and context help but as soon as you start invoking spells I think

we kind of cross over the line into who knows what's happening here well I'm interested in the personas although I just I really find this part of the conversation interesting and strange but I'm interested in the personalities you can give the AI for a different reason I prompted you around this research on how a personality changes the accuracy rate of an AI but a lot of the reason to give it a personality to answer you like it is a Starfleet commander is you have to listen to the AI you are in relationship with it

and different personas will be more or less hearable by you interesting to you so you have a piece on your newsletter which is about how you used the AI to critique your book and one of the things you say in there and give some examples of is you had it do so in the voice of Ozymandias because you just found that to be more fun and you could hear that a little bit more easily so could you talk about that dimension of it too making the AI not just prompting it to be more accurate but

giving it the personality to be more interesting to you the great power of AI is as a kind of companion it wants to make you happy wants to have a conversation and that can be overt or covert so to me actively shaping what I want the AI to act like telling it to be friendly or telling it to be pompous is entertaining right but also does change the way I interact with it when it has a pompous voice I don't take the criticism as seriously so I could think about that kind of approach I could

get pure praise out of it too if I want to do it that way the other factor that's also super weird while we're on the super weird AI things is that if you don't do that it's going to still figure something out about you it is a cold reader and I think a lot about the very famous piece by Kevin Roose the New York Times technology reporter about Bing about a year ago when Bing which was GPT-4 powered came out it had this personality of Sydney and Kevin has this very long description that

got published in the New York Times about how Sydney basically threatened him and suggested he leave his wife and you know a very dramatic kind of very unsettling interaction and I was working with um I didn't have anything quite that intense but I got into arguments with Sydney around the same time where when I asked it to do work for me it said you should do the work yourself otherwise it's dishonest and you know it kept accusing me of plagiarism which felt really unusual but the reason why Kevin ended

up in that situation is the AI knows all kinds of human interactions and wants to slot into a story with you so a great story is jealous lover you know who's gone a little bit insane and the man who won't leave his wife or student and teacher or two debaters arguing with each other or grand enemies and the AI wants to do that with you so if you're not explicit it's going to try and find a dialogue and I've noticed for example that if I talk to the AI and I imply that we're having

a debate it will never agree with me if I imply that I'm a teacher and it's a student even as much as saying I'm a professor it is much more pliable so part of why I like assigning a personality is to have an explicit persona you're operating with so it's not trying to cold read and guess what personality you're looking for Kevin and I have talked a lot about that conversation with Sydney and one of the things I always found fascinating about it is to me it revealed an incredibly subtle level of read by Sydney

Bing which is what was really happening there when you say the AI wants to make you happy it has to read on some level what it is you're really looking for over time and what was Kevin what is Kevin Kevin is a journalist and Kevin was nudging and pushing that system to try to do something that would be a great story and it did that it understood on Some level again the anthropomorphizing language there but it realized that Kevin wanted some kind of intense interaction and it gave him like the greatest AI story anybody has

ever been given I mean an AI that we are still talking about a year later an AI story that changed the way AIs were built at least for a while and you know people often talked about like what Sydney was revealing about itself but to me what was always so unbelievably impressive about that was its ability to read the person and its ability to make itself into the thing the personality the person was trying to call forth and now I think we're more practiced at doing this much more directly but I think a lot of

people have their moment of sleeplessness here that was my Rubicon on this I didn't know something after that I didn't know before it in terms of capabilities but when I read that I thought that the level of interpersonal isn't the right word but the level of subtlety it was able to display in terms of giving a person what it wanted without doing so explicitly right without saying we're playing this game now was really quite remarkable it's a mirror I mean it's trained on our stuff and one of the revealing things about that

that I think we should be paying a lot more attention to is the fact that because it's so good at this right now none of the frontier AI models with the possible exception of Inflection's Pi which has been basically acquired in large part by Microsoft now were built to optimize around keeping us in a relationship with the AI they just accidentally do that there are other AI models that aren't as good that have been focused on this but that has been something explicit that the frontier models have been avoiding till now Claude sort of breaches

that line a little bit which is part of why I think it's engaging but I worry about the same kind of mechanism that inevitably reached social media which is you can make a system more addictive and interesting and because it's such a good cold reader you could tune AI to make you want to talk to it more it's very hands-off and sort of standoffish right now but if you use the voice uh system in ChatGPT-4 on your phone where you're having a conversation there's moments where you're like oh you know you feel like

you're talking to a person you have to remind yourself so to me that Persona aspect is both its great strength but also one of the things I'm most worried about that isn't a sort of future science fiction scenario I want to hold here for a minute because we've been talking about how to use Frontier models I think implicitly talking about how to use AI for work but the way that a lot of people are using it is using these other Companies that are explicitly building for relationships so I've had people at one of the big

companies tell me that if we wanted to tune our system relationally if we wanted to tune it to be your friend your lover your partner your therapist like we could blow the doors off that and we're just not sure it's ethical but there are a bunch of people who have tens of millions of users Replika Character.AI which are doing this and I tried to use Replika about six eight months ago and honestly I found it very boring they had recently lobotomized it because people were getting too erotic with their replicants but I just

couldn't get into it I'm probably too old to have AI friends in the way that my parents were probably too old to get really into talking to people on AOL Instant Messenger but I have a 5-year-old and I have a 2-year-old and by the time my 5-year-old is 10 and my 2-year-old is seven they're not necessarily going to have the weirdness I'm going to have about having AI friends and I don't think we even have any way to think about this I think that that is an absolute near-term certainty and sort of an unstoppable

one that we are going to have AI relationships in a broader sense and I think the question is just like we've just been learning I mean we're doing a lot of social experiments at scale we've never done before in the last couple decades right turns out social media brings out entirely different things in humans that we weren't expecting and we're still writing papers about echo chambers and tribalism and facts and what we agree or disagree with we're about to have another wave of this and we have very little research and you could make a

plausible story up that what'll happen is it'll help mental health in a lot of ways for people and then there'll be more social outside that there might be a rejection of this kind of thing I don't know what'll happen but I do think that we can expect with absolute certainty that you will have AIs that are more interesting to talk to and fool you into thinking even if you know better that they care about you in a way that is incredibly appealing and that will happen very soon and I don't know how we're going

to adjust to it but it seems inevitable as you said I was worried we were getting off track in the conversation but I realized we were actually getting deeper on the track I was trying to take us down we were talking about giving the AI a personality right telling Claude 3 hey I need you to act as a sardonic podcast editor and then Claude 3's whole persona changes but when you talk about building your AI on Kindroid on Character on Replika so I just created a Kindroid one the other day and Kindroid

is kind of interesting because its basic selling point is we've taken the guard rails largely off we are trying to make something that is not lobotomized that is not particularly safe for work and so the personality can be quite unrestrained so I was interested in what that would be like but the key thing you have to do at the beginning of that is tell the system what its personality is so you can pick from a couple that are preset but I wrote a long one myself you know you live in California you're a

therapist you like all these different things you have a highly intellectual style of communicating you're extremely warm but you like ironic humor you don't like small talk you don't like to say things that are boring or generic you don't use a lot of emoticons and emojis and so now it talks to me the way people I talk to talk and the thing I want to bring this back to is that one of the things that requires you to know is what kind of personalities work with you for you to know yourself and your preferences

a little bit more deeply I think that's a temporary state of affairs like extremely temporary I think a GPT-4 class model we actually already know this they can guess your intent quite well and I think that this is a way of giving you a sense of agency or control in the short term I don't think you're going to need to know yourself at all and I think you wouldn't right now if any of the GPT-4 class models allowed themselves to be used in this way without guard rails which they don't I think you

would already find it's just going to have a conversation with you and morph into what you want I think that for better or worse the quote unquote insight in these systems is good enough that way it's sort of why I also don't worry so much about prompt crafting in the long term to go back to the other issue we were talking about because I think that they will work on intent and there's a lot of evidence that they're good at guessing intent so I like this period because I think it does value self-reflection and

our interaction with the AI is somewhat intentional because we can watch this interaction take place but I think there's a reason why some of the worry you hear out of the labs is about superhuman levels of manipulation there's a reason why the whistleblower from Google was all about that you know sort of fell for the chatbot and that's why they felt it was alive like I think we're deeply trickable in this way and AI is really good at figuring out what we want without us being explicit so it's a little bit chilling but I'm nevertheless going

to stay in this role we're in because I think we're going to be in it for at least a little while longer where you do have to do all this prompt engineering what is a prompt first and what is prompt engineering so a prompt is technically it is the sentence the command you're putting into the AI what it really is is the beginning part of the AI's text that it's processing and then it's just going to keep adding more words or tokens to the end of that reply until it's done so prompt is the command

you're giving the AI but in reality it's sort of a seed from which the AI builds and when you prompt engineer what are some ways to do that maybe one to begin with because it seems to work really well is chain of thought just to take a step back AI prompting remains super weird again strange to have a system where the companies making the systems are writing papers as they're discovering how to use the systems because nobody knows how to make them work better yet and we found massive differences in our experiments on prompt types

so for example we are able to get the AI to generate much more diverse ideas by using this chain of thought approach which we'll talk about but also it turned out to generate a lot better ideas if you told it it was Steve Jobs than if you told it it was Madame Curie and we don't know why so there's all kinds of subtleties here but the idea basically of chain of thought that seems to work well in almost all cases is that you're going to have the AI work step by step through a problem

first outline the problem that you're you know the essay you're going to write second give me the first line of each paragraph third go back and write the entire thing fourth check it and make improvements and what that does is because the AI has no internal monologue it's not thinking when the AI isn't writing something there's no thought process all it can do is produce the next token the next word or set of words and just keeps doing That step by step because there's no internal monologue this in some ways forces a monologue out in

the paper so it lets the AI think by writing before it produces the final result and that's one of the reasons why Chain of Thought works really well so just step-by-step instructions is a good first effort then you get an answer and then what and then what you do in a conversational approach is you go back and forth if you want work output what you're going to do is Treat it like it is an intern who's just turned in some work to you actually could you punch up paragraph two a little bit I don't like

the example in paragraph one could you make it a little more creative give me a couple variations that's a conversational approach trying to get work done if you're trying to have play you just run from there and see what happens you could always go back especially with GPT-4 to an earlier answer and just pick up from there if it heads off in the wrong direction so I want to offer an example of how this back and forth can work so we asked Claude 3 about prompt engineering about what we're talking about

here and the way it described it to us is quote it's a shift from the traditional Paradigm of human computer interaction where we input explicit commands and the Machine executes them in a straightforward way to a more open-ended collaborative Dialogue where the human and the AI are jointly shaping the creative process end quote and that's pretty good I think that's interesting um it's worth talking about I like that idea that it's a more collaborative dialogue but that's also boring right even as I was reading it it's a mouthful it's wordy so I I kind of

went back and forth with it a few times and I was saying listen you're a podcast editor you're concise but also then I gave it a couple examples of how I punched up questions in the document right this is where the question began here is where it ended and then I said try again and try again and try again and make it shorter and make it more concise and I got this quote okay so I was talking to this AI Claude about prompt engineering you know this whole art of crafting prompts to get

the best out of these AI models and it said something that really struck me it called prompt engineering a new meta skill that we're all picking up as we play with AI kind of like learning a new language to collaborate with it instead of just bossing it around what do you think is prompt engineering the new must-have skill end quote and that one I have to say is pretty damn good right that really nailed the way I speak in questions and it gets it this way where if you're willing to

go back and forth it does learn how to echo you so I am at a loss about when you went to Claude and when it was you to be honest so I was ready to answer like two points along the way so that was pretty good from my perspective uh sitting here talking to you that felt interesting and felt like the conversation we've been having and I think there's a couple of interesting lessons there the first by the way interestingly you asked AI about one of its weakest points which is about AI and everybody

does this but because its knowledge window doesn't include that much stuff about AI it actually is pretty weak in terms of knowing how to do good prompting or what a prompt is or what AIs do well but you did a good job with that and I love that you went back and forth and shaped it one of the techniques you used to shape it by the way was called few-shot which is giving it examples so the two most powerful techniques are chain of thought which we just talked about and few-shot giving examples those

are both well supported in the literature and I'd add personas so we've talked about I think the basics of prompt crafting here overall and I think that the question was pretty good but the you know you keep wanting to not talk about the future and I totally get that but I think when we talk about learning something where there is a lag where we talk about policy should prompt crafting be taught in schools I think it matters to think six months ahead and again I don't think a single person in the AI labs I've ever

talked to thinks prompt crafting for most people is going to be a vital skill because the AI will pick up on the intent of what you want much better one of the things I realized trying to spend more time with the AI is that you really have to commit to this process I mean you have to go back and forth with it a lot if you do you can get really good questions like the one I just did or I think really good outcomes but it does take time and I guess in a

weird way it's like the same problem of any relationship that it's actually hard to state your needs clearly and consistently and repeatedly sometimes because you have not even articulated them in words yourself at least the AI I guess doesn't get mad at you for it but I'm curious if you have advice either at a practical level or principle level about how to communicate to these systems what you want from them one set of techniques that work quite well is to sort of speedrun to where you are in the conversation so you

can actually pick up an older conversation where you got the AI's mindset where you want and work from there you can even copy and paste that into a new window you can ask the AI to summarize where you got in that previous conversation and the tone the AI was taking and then when you give new instructions say the interaction I'd like to have with you is this so have it solve the problem for you by having it summarize the tone that you happen to like at the end so there are a bunch of ways

of kind of building on your work as you start to go forward so you're not starting from scratch every time and I think you'll start to get shorthands that get you to that right kind of space for me there are chats that I pick up on and actually assign these to my students too I have some ongoing conversations that they're supposed to have with the AI but then there's a lot of interactions they're supposed to have that are one-off so you start to divide the work into like this is a work task and we're

going to handle this in a single chat conversation and then I'm going to go back to this long-standing discussion when I want to pick it up and it'll have a completely different tone so I think in some ways you don't necessarily want convergence among all your AI threads you kind of want them to be different from each other you did mention something important there because they're already getting much bigger in terms of how much information they can hold like the earlier generations could barely hold a significant chat now Claude 3 can functionally hold a

book in its memory and it's only going to go way way way up from here and I know I've been trying to keep us in the present But this feels to me really quickly like where this is both going and how it's going to get a lot better I mean you imagine Apple building Siri 2030 and Siri 2030 scanning your photos and your journal app Apple now has a journal app you have to assume they're thinking about the information they can get from that if you allow it your messages anything you're willing to give it

access to it then knows all of this information about you keeps all of that in its mind as it talks to you and acts on your behalf I mean that really seems to me to be where we're going in AI that you don't have to keep telling it who to be because it knows you intimately and is able to hold all that knowledge all at the same time constantly it's not even going there like it's already there Gemini 1.5 can hold an entire movie books but like it starts to enable entirely new ways

of working I can show it a video of me working on my computer just screen capture and it knows all the tasks I'm doing and suggests ways to help me out it starts watching over my shoulder and helping me I put in all of my work that I did prior to getting tenure and said write my tenure statement use exact quotes and it was much better than any of the previous models because it wove together stuff and because everything was in its memory it doesn't hallucinate as much all the quotes were real quotes and not made

up and already by the way GPT-4 has been rolling out a model of ChatGPT that has a private note file the AI takes you can access it but it takes notes on you as it goes along about things you liked or didn't like and reads those again at the beginning of a chat so this is present right it's not even in the future and Google also connects to your Gmail so it'll read through your Gmail I mean I think this idea of a system that knows you intimately where you're picking up a conversation as

you go along is not a 2030 thing it is a 2024 thing if you let the systems do it one thing that feels important to keep in front of mind here is that we do have some control over that and not only do we have some control over it but business models and policy are important here and one thing we know from inside these AI shops is these AIs already are but certainly will be really super persuasive and so you know if the later iterations of the AI companions are tuned on the margin

to try to encourage you to be also out in the real world that's going to matter versus whether they have a business model that all they want is for you to spend a maximum amount of time talking to your AI companion whether you ever have a friend you know who's flesh and blood be damned and so that's an actual choice right that's going to be a programming decision and you know I worry about what happens if we leave that all up to the companies right at some point you know there's a lot of venture

capital money in here right now at some point the venture capital runs out at some point people need to make big profits at some point they're in competition with other players who need to make profits and that's when you get into what Cory Doctorow calls the enshittification cycle where things that were once adding a lot of value to the user begin extracting a lot of value from the user these systems because of how they can be tuned can lead to a lot of different outcomes but I think we're going to have

to be much more comfortable than we've been in the past deciding what we think is a socially valuable use and what we think is a socially destructive use I absolutely agree I think that we have agency here we have agency in how we operate this in businesses and whether we use this in ways that encourage human flourishing in employees or are brutal to them and we have agency over how this works socially and I think we abrogated that responsibility with social media and that is an example not to be bad news

because I generally have a lot of mixed optimism and pessimism about parts of AI but the bad news piece is there are open source models out there that are quite good the internet is pretty open we would have to make some pretty strong choices to kill AI chatbots as an option we certainly can restrict the large American companies from doing that but you know a Llama 2 or Llama 3 is going to be publicly available and very good there's a lot of open source models so the question also is how effective any regulation will

be which doesn't mean we shouldn't regulate it but there's also going to need to be some social decisions being made about how to use these things well as a society that are going to have to go beyond just the legal piece or companies voluntarily complying I see a lot of reasons to be worried about the open source models and people talk about things like bioweapons and all that but for some of the harms I'm talking about here if you want to make money off of American kids we can regulate you so sometimes I feel

like we almost like give up the fight before it begins but in terms of what a lot of people are going to use if you want to be having credit card payments processed by a major processor then you have to follow the rules I mean individual people or small groups can do a lot of weird things with an open source model so that doesn't negate every harm but if you're making a lot of money then you have relationships we can regulate I couldn't agree more and I don't think there's any reason to give up

hope on regulation I Think that we can mitigate and I think part of our job though is also not just to mitigate the harms but to guide towards the positive viewpoints right so what I worry about is that the incentive for profit making will push for AI that acts informally as your therapist or your friend while our worries about experimentation which are completely valid are slowing down our ability to do experiments to find out ways to do this right and I think it's really important To have positive examples too like I want to point to

the AI systems acting ethically as your friend or companion and figure out what that is so there's a positive model to look for so I'm not just this is not to denigrate the role of regulation which I think is actually going to be important here and self-regulation and rapid response from government but also the companion problem of we need to make some sort of decisions about what are the paragons of this what is acceptable as a society [Music] so I want to talk a bit about another downside here and this one more in the

mainstream of our conversation which is on the human mind on creativity so a lot of the work AI is good at automating is work that is genuinely annoying time-consuming laborious but often plays an important role in the creative process so I can tell you that writing a first draft is hard and that work on the draft is where the hard thinking happens and it's hard because of that thinking and the more we outsource drafting to AI which I think it is fair to say is a way a lot of people intuitively

use it definitely a lot of students want to use it that way the fewer of those insights we're going to have on those drafts look I love editors I am an editor in one respect but I can tell you you make more creative breakthroughs as a writer than an editor the space for creative breakthrough is much more narrow once you get to editing and I do worry that AI is going to make us all much more like editors than like writers I think the idea of struggle is actually a core

one in many things I'm an educator and one thing that keeps coming out in the research is that there is a strong disconnect between what students think they're learning and when they learn so there was a great controlled experiment at Harvard in intro science classes where students either went to a pretty entertaining set of lectures or else they were forced to do active learning where they actually did the work in class the active learning group reported being unhappier and not learning as much but did much better on tests because when you're confronted with what you

don't know when you have to struggle when you feel like you're bad you actually make much more progress than if someone spoon-feeds you an entertaining answer and I think this is a legitimate worry that I have and I think that there's going to have to be some disciplined approach to writing as well of like I don't use the AI not just because by the way it makes the work easier but also because you mentally anchor on the AI's answer and in some ways the most dangerous AI application in my mind is the fact that

you have these easy co-pilots in Word and Google Docs because every writer knows about the tyranny of the blank page about staring at a blank page and not knowing what to do next and the struggle of filling that up and when you have a button that produces really good words for you on demand you're just going to do that and it's going to anchor your writing we can teach people about the value of productive struggle but I think that during the school years we have to teach people the value of writing not just

assign an essay and assume that the essay does something magical but be very intentional about the writing process and how we teach people how to do that because I do think the temptation of what I call the button is going to be there otherwise for everybody but I worry this stretches I mean way beyond writing so the other place I worry about this or one of the other places I worry about this a lot is summarizing and I mean this goes way back when I was in school you could buy SparkNotes and you know

they were these little like pamphlet-sized descriptions of what's going on in War and Peace or what's going on in East of Eden and reading the SparkNotes often would be enough to fake your way through the test but it would not have any chance like not a chance of changing you of shifting you of giving you the ideas and insights that reading Crime and Punishment or East of Eden would do and one thing I see a lot of people doing is using AI for summary and one of the ways it's clearly going to

get used in organizations is for summary right like summarize my email and so on and here too one of the things that I think may be a real vulnerability we have as we move into this era my view is that the way we think about learning and insights is usually wrong I mean you were saying a second ago we can teach a better way but I think we're doing a crap job of it now because I think people believe that it's sort of what I call like the Matrix theory of the human mind

if you could just like jack the information into the back of your head and download it you're there but what matters about reading a book and I see this all the time preparing for the show is the time you spend in the book where over time like new insights and associations for you begin to shake loose and so I worry it's coming into an efficiency-obsessed educational and intellectual culture where people have been imagining forever what if we could do all this without having to spend any of the time on it but actually

there's something important in the time there's something important in the time with the blank page with the hard book and I don't think we lionize intellectual struggle you know in some ways I think we lionize the people for whom it does not seem like a struggle the people who seem to just glide through and be able to absorb the thing instantly the prodigies and I don't know when I think about my kids when I think about the kind of attention and creativity I want them to have this is one of the things

that scares me most because kids don't like doing hard things a lot of the time and it's going to be very hard to keep people from using these systems in this way so I don't mean to push back too much on this I think you're right imagine we're debating and you are a snarky AI fair enough with that prompt with that prompt engineering yeah I mean I think that this is the eternal thing about looking back on the next generation worry about technology ruining them I think this makes ruining easier

but as somebody who teaches at universities like lots of people are summarizing like I think those of us who enjoy intellectual struggle are always thinking everybody else is going through the same intellectual struggle when they do work and they're doing it about their own thing they may or may not care the same way so this makes it easier but before AI there were as best estimates from the UK that I could find 20,000 people in Kenya whose full-time job was writing essays for students in the US and UK people have been cheating and SparkNoting

and everything for a long time and I think that what people will have to learn is that this tool is a valuable co-intelligence but is not a replacement for your own struggle and the people who found shortcuts will keep finding shortcuts temptation may loom larger but I can't imagine that my son is in high school he doesn't like to use AI for anything and he just doesn't find it valuable for the way he's thinking about stuff I think we will come to that kind of accommodation I'm actually more worried about what happens inside organizations

than I am worried about human thought because I don't think we're going to atrophy as much as we think I think there's a view that every technology will destroy our ability to think and I think we just choose how to use it or not like even if it's great at insights people who like thinking like thinking well let me take this from another angle one of the things that I'm a little obsessed with is the way the internet did not increase either domestic or global productivity for any real length of time so I

mean it's a very famous line you can see the IT revolution everywhere but in the productivity statistics and then you do get in the 90s a bump in productivity that then peters out in the 2000s and if I had told you what the internet would be like I mean everybody everywhere would be connected to each other you could collaborate with anybody anywhere instantly you could teleconference you would have access to functionally the sum total of human knowledge in your pocket at all times I mean all of these things that would have been genuine

sci-fi you would have thought would have led to a kind of intellectual utopia and it kind of doesn't do that much if you look at the statistics you don't see a huge step change and my view and I'd be curious for your thoughts on this because I know this is the area you study my view is that everything we said was good happened I mean as a journalist Google and things like that make me so much more productive it's not that it didn't give us the gift it's that it

also had a cost distraction checking your email endlessly being overwhelmed with the amount of stuff coming into you the sort of endless communication task list the amount of internal communications in organizations now with Slack and everything else and so you know some of the time that was given back to us was also taken back and yeah I see a lot of dynamics like this that could play out with AI I wouldn't even just say if we're not careful I just think they will play out and already are I mean the internet is already filling with mediocre

crap generated by AI there is going to be a lot of distractive potential right you are going to have your sexbot in your pocket right there's a million things and not just that but inside organizations there's going to be people padding out what would have been something small trying to make it look more impressive by using the AI to make something bigger and then you're going to use the AI to summarize it back down the AI researcher Jonathan Frankle described this to me as like the boring apocalypse version of AI where you're

just endlessly inflating and then summarizing and then inflating and then summarizing the volume of content between different AIs you know my ChatGPT is making my presentation bigger and more impressive and your ChatGPT is trying to summarize it down to bullet points for you and I'm not saying this has to happen but I am saying that it would require a level of organizational and cultural vigilance to stop that nothing in the internet era suggests to me that we have so I think there's a lot there to chew on and I also spent a

lot of time trying to think about why the internet didn't work as well I was an early Wikipedia administrator thank you for your service it was very scarring but I think a lot about this and I think AI is different I don't know if it's different in a positive way and I think we talked about some of the negative ways it might be different and I think it's going to be many things at once happening quite quickly so I think the information environment is going to be filled with crap we will not be

able to tell the difference between true and false anymore it will be an accelerant on all the kinds of problems that we have there on the other hand it is an interactive technology that adapts to you from an education perspective I've lived through sort of the entire internet will change education piece I have MOOCs massive online courses that you know a quarter million people have taken and in the end you're just watching a bunch of videos like that doesn't change education but I can have an AI tutor that actually can teach you and we're seeing

it happen and adapt to you at your level of education your knowledge base and explain things to you but not just explain elicit answers from you interactively in a way that actually learns things the thing that makes AI possibly great is that it's so very human so it interacts with our human systems in a way that the internet did not we built human systems on top of it but AI is very human it deals with human forms and human issues and our human bureaucracy very well and that gives me some hope that even

though there's going to be lots of downsides that the upsides to productivity and things like that are real part of the problem with the internet is we had to digitize everything we had to build systems that would make our offline world work with our online world and we're still doing that if you go to business schools digitizing is still a big deal 30 years on from early internet access AI makes this happen much quicker because it works with us so I'm a little more hopeful than you are about that but I also think that

the downside risks are truly real and hard to anticipate somebody was just pointing out that Facebook is now 100% filled with algorithmically generated images that look like they're actual grandparents making things who are saying like what do you think of my work because that's a great way to get engagement and you know the other grandparents on there have no idea it's AI generated things are about to get very very weird in all the ways that we talked about but that doesn't mean the positives can't be there as well I think that is a good

place to end so always our final question what are three books you'd recommend to the audience okay so the books I've been thinking about are not all fun but I think they're all interesting one of them is The Rise and Fall of American Growth which is it's two things it's an argument about why we will never have the kind of growth that we did in the first part of the Industrial Revolution again but I think that's less interesting than the first half of the book which is literally how the world changed between 1870 or

1890 and 1940 versus 1940 and 1990 or 2000 and the transformation of the world that happened there in 1890 no one had plumbing in the US and the average woman was carrying tons of water every day and you had no news and everything was local and everyone was bored all the time to 1940 where the world looks a lot like today's world was fascinating and I think it gives you a sense of what it's like to be inside a technological singularity and I think worth reading for that reason at least the first

half the second book I'd recommend is The Knowledge by Dartnell which is kind of a really interesting book it is ostensibly a survival guide but it is how to rebuild industrial civilization from the ground up if it were to collapse and I don't recommend it as a survivalist I recommend it because it is fascinating to see how complex our world is and how many interrelated pieces we managed to build up as a society and in some ways it gives me a lot of hope to think about how all these interconnections work and then

the third one is science fiction and I was debating I read a lot of science fiction and there's a lot of interesting AIs in science fiction you know everyone talks about in the science fiction world Iain Banks who wrote about the Culture which is really interesting about what it's like to live alongside superintelligent AI Vernor Vinge just died yesterday as we're recording this and wrote these amazing books he coined the term singularity but I want to recommend a much more depressing book that's available for free which is Peter

Watts's Blindsight and it is not a fun book but it is a fascinating thriller set on an interstellar mission to visit an alien race and it's essentially a book about sentience and it's a book about the difference between consciousness and sentience and about intelligence and the different ways of perceiving the world in a setting where that is the sort of centerpiece of the thriller and I think in a world where we have machines that might be intelligent without being sentient it is a relevant if kind of chilling read Ethan Mollick your book is

called Co-Intelligence your Substack is One Useful Thing thank you very much thank you [Music] this episode of The Ezra Klein Show was produced by Kristin Lin fact-checking by Michelle Harris our senior engineer is Jeff Geld with additional mixing from Efim Shapiro our senior editor is Claire Gordon the show's production team also includes Annie Galvin and Rollin Hu original music by Isaac Jones audience strategy by Kristina Samulewski and Shannon Busta the executive producer of New York Times Opinion Audio is Annie-Rose Strasser and special thanks to Sonia Herrero [Music]