All right, welcome everybody, and thank you for joining us today for the session on how Mindbody migrated 60,000 SQL Server databases to AWS. My name is Reghardt van Rooyen, and I'm very excited and also very proud to be here today, since I was a senior specialist solutions architect working with Mindbody through this migration. With a quick show of hands, how many of you are currently running production databases in AWS? OK, nice, about two-thirds. How many of you are thinking about or considering migrating SQL Server, or really any database, to AWS in,

let's say, the next 24 months or so? All right, awesome, also about half. Well, you came to the right place. Today we're going to go through the entire journey of Mindbody, starting at the beginning: why did they choose AWS, and what were some of the benefits? We're going to look at their on-premises SQL Server architecture and how that changed coming into AWS. We're going to look at how different business decisions drove the migration planning and the tools that they were able to use to

do so. We're also going to review how the migration went. There were lots of challenges along the way, so get ready for those. Then we're going to cover what's next when we start talking about modernization, and of course lessons learned along the way. So with that, let's get started. Hey everybody, nice to meet you. I'm Lior Sadan, solutions architect with AWS. I work with our customers, helping them design and deploy workloads on the cloud, and Mindbody is one of my customers. We have a special guest. Hi, I'm

the customer, Tim Ford, manager of database administration at Mindbody. In addition to that, I've had a very long career as a data professional and have been involved in the community for decades at this point. One of the fun things: I started this thing called SQL Cruise back in 2010, where I would take people such as yourselves out on cruise ships, and we would do training for a week and have a whole bunch of fun. Speaking of fun, let's talk about the

fun that we had during that migration. Let's go. Real quick, Lior, let me talk about Mindbody. I like to describe it as a business in a box: a business solution for yoga studios, fitness centers, spas, and salons. We are the leader in the industry, we've got about 3 million active users globally, and you might know us by some of our other brands, ClassPass and Booker. Yeah, I'm actually a user of your application. I book my gym classes and mostly cancel them. You too.

So it's really needed; it's very highly available. Maybe we can start from the beginning: why move to the cloud? What were your goals? Well, initially the big thing is what it typically is for most companies: we wanted to save money. We wanted to convert a lot of the sunk costs that we had on the data platform specifically (you can imagine, with 60,000 databases, what kind of hardware we had sunk cost in) into a more operationalized model where we could dial in and

dial back compute, and therefore cost, as we needed to. Additionally, you know, building SQL Server databases is boring. I don't know if you all realize that or not. So we wanted to do fun stuff. We wanted to be able to innovate, and to do that we were looking to leverage infrastructure as code and other modern methods of getting away from the repetitive tasks. We were also mindful that with repetitive tasks the human element is involved too, and the last thing

we wanted to do was have inconsistency in our builds. So that, mixed with the fact that we definitely needed to look at leveraging the breadth and depth of AWS in general, set us on our journey. Awesome. And you mentioned scalability, which ties into when all this started. Can you...? Yeah, yeah. So who here was having fun in February 2020, going out, socializing, all that stuff? Yeah, that wasn't happening. I'm going to back up for a second there, because that's when we

kicked things off. But on my first day on the job I was pulled into a conference room with the CEO and all these other people I had never met before, and I was informed that we were moving to the cloud. Which was great, because the manager at the time, who was courting me to come to the company, had been telling me about all of these interesting issues that you would have to deal with given the way we had things structured on-prem. That all got

thrown out the window. But 18 months later we're looking to engage with AWS. We're getting our act together as far as determining exactly what our plan is going to be, and part of that was also going to be engaging with the partner network at AWS and some of those other services that allow us to quickly accelerate our movement into the cloud. So this is a huge undertaking, right? You have a big footprint in a data center and want to move it. Where did

you even start? Well, moving your stuff out of a data center is kind of like moving out of an apartment, right? You need to know what you've got, what you want to move, and what doesn't spark joy anymore that you don't want to move. So the first thing we did was look at what we had and what we were going to do with it. The one thing I also didn't happen to mention on reasons to move

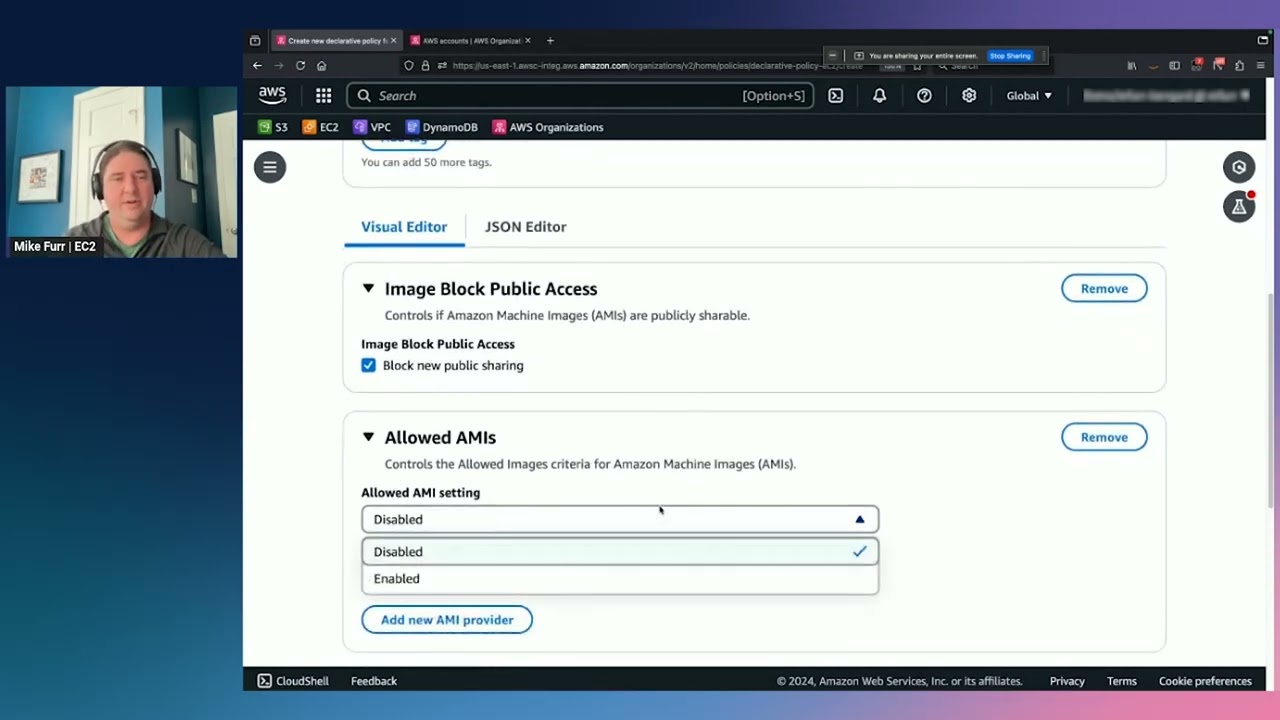

to AWS is that we were kind of up against a clock to get out of our data center too, so that was another incentive on top of everything else. But the first thing that we did with AWS was this OLA, an Optimization and Licensing Assessment. Yeah, Reghardt, do you mind touching on the OLA? Totally. So the AWS cloud migration framework is broken into three different steps: the assess phase, the migrate phase, and then the operate and modernize phase. Throughout each one of those phases, AWS provides a

wide variety of tools and programs to support customers like Mindbody in migrating to AWS, and the OLA is exactly that. It's an Optimization and Licensing Assessment, a program that AWS offers for customers to go through and say: what are you currently running, and what would be the best way to deploy and operate that in AWS? It's really built on two pillars: one is right-sizing, and the other is optimizing your licensing stance. So as far as

a right-sizing perspective goes, we'll come out and look at your servers and what you're running today on-prem. A lot of customers buy infrastructure saying, hey, this needs to operate for the next 3 to 5 years, so you're projecting what your needs are going to be. When you come to AWS, that's a great opportunity to start right-sizing, since it's a pay-as-you-go model and you can leverage the elasticity of the cloud. You can run on a smaller footprint

of infrastructure, save cost, and as needed move up to a larger instance type. So what we do is look at your current utilization for CPU, memory, and network, and, for us DBAs, I/O and throughput on disk as well. And don't worry, we don't just look at averages; we look at the peaks as well. We will then come back and say, based off what we see, you're running at, let's say, 60% CPU and this many IOPS;

we'll make a recommendation and actually map each of your servers to a suggested EC2 instance type that you can deploy to. So that's the first part. The second part is looking at your Microsoft licensing stance. In AWS you can either bring your own licenses when it comes to SQL Server and Windows, or you can leverage the license-included model, where you're deploying EC2 instances and paying AWS, and we pay Microsoft on your behalf for those licenses. So as part of that, we want to make

sure that that huge SQL Server and Microsoft Windows licensing cost (just a little bit of licensing cost, you know) is optimized. In Mindbody's scenario they were able to leverage the BYOL model because of their License Mobility rights with active Software Assurance, so they were able to bring those licenses in and deploy with BYOL. So again, through that assessment phase, that was really what we were trying to accomplish. And a quick call to action to everybody here today: reach out to your account manager, reach out to your technical account manager, especially if you're

thinking about doing a migration, and ask them: hey, I want to do an OLA, I want to know what's in my environment and what this would look like. Because here at AWS we're all about making data-driven decisions and giving that data to our customers, right? So this will give you a beautiful presentation and a spreadsheet mapping all of your infrastructure and making those suggestions, like the EC2 mapping I mentioned, as well as giving you the ultimate cost of what this is going to cost you, so you can have a good TCO for

your SQL Server workloads on AWS. Mm-hmm. And is this only for people who are migrating from data centers, or also...? No, good callout on that, thank you. This is for customers that are looking to migrate from their data centers, or maybe they're in a colo, or even coming from a different cloud vendor. They can also run this once they're in AWS. A lot of times we see customers migrate in and over-provision a little bit, because they're very nervous

about the migration or they want to go through that stability phase. But after that they say, OK, we now feel comfortable here and we want to cost-optimize, and then we can run the OLA again for you in AWS as well. Yeah, good call. Thank you, Reghardt. So let's switch gears and talk about SQL Server a little bit. Maybe you can describe your footprint in the data center. Sure, sure. First time talking about SQL Server. Kidding. So the way we had structured our environment on-prem is we broke

it into two tiers. We have what we call a customer subscriber tier that has those 60,000 to 70,000 databases. Those were all on standalone SQL Server 2016 on Windows Server 2016, Standard Edition, and, as mentioned, no HA. That might be one of those things that we're looking to fix when we move to the cloud. And then we also have a central tier that was composed of about four clusters that housed six different availability groups, spread over three nodes each. OK, thank you for that. And what's the plan to deploy

in the cloud? Well, we were looking to modernize, first of all, and get rid of some of that licensing that Reghardt spoke about, because we have a little bit of cost there with 60,000 databases. So we looked at options for RDS, and we looked at Aurora. We're still looking to do some of those things, and we'll talk about that later. But we also wanted to make sure that we solved that HA issue that we had on-prem. We also were looking to continue using availability groups for our

central work. And when we were determining what we were going to use for sizing our EC2 instances, we were looking at the Standard Edition limits of SQL Server. Because most of our workloads are memory-intensive, we decided to land on a 4xlarge size, because that maximized the 128 GB of memory that we were able to leverage for SQL Server. Got it. OK, so we understand what we have on-prem, we know where we want to go, and we're testing this. How does this

go? So with that move up to Oregon that we were looking at (our data centers are down in LA), we had talked about taking care of those sunk costs first by moving the databases first, so we wanted to see what that was going to look like. We took some of our clusters in our dev environment and moved those up into the Oregon Region, leveraging that pipeline that I had mentioned that we built, and then we started doing testing. And then we started to see problems, in

that every time there was a conversation up or down between Oregon and LA, we were seeing about a 20-millisecond latency hit. We knew our application was chatty; we just didn't realize it was chatty like a toddler. The speed of light is not fast enough for you? No, no, no. You know, I've done a lot of things in tech, but I've never been able to figure out how to get around the speed of light. So how do you solve this problem? Oh, God. We hit a brick wall. I mean, our

plan was to not do a big bang. We had no appetite for it. It was going to be a huge impact to our customers, and even though we were in the middle of a pandemic, we still had to respect the fact that we were the source of income and livelihood for so many, not just chains but also individual shop owners, and we were also the conduit to health for their clients. So we wanted to make sure that we were able to minimize that impact. But big bang started to

come back into the picture, and we got really, really concerned. But that's when AWS came up and started telling us about these things called Local Zones. Right, so when you're saying big bang, you mean moving everything at once? Yep. You know, close the door on a Friday, open it up in Oregon on Sunday night. Yeah, that was impossible. No, no. So I think we proposed Local Zones. Reghardt, do you want to give us a little bit on Local Zones? Sure, absolutely. So a quick show of hands: who here

knows about Local Zones? No? All right, about a third or a quarter. I'm sure everybody knows about Regions and Availability Zones, right? An Availability Zone is made up of one or more data centers, and within a Region we usually have three or more Availability Zones, for redundancy. And as Tim mentioned, the closest Region to LA for them, where they were trying to land, was going to be Oregon, right? Well, Local Zones are infrastructure that we deploy in cities. So think about more

of like us running a data center closer to you, doing that compute at the edge, right, edge computing. The idea here is that it's for exactly these types of workloads that are latency-sensitive. We see a lot of financial and gaming companies using our Local Zones for exactly that reason, because they're trying to put the data as close to the user as possible. And what's great about Local Zones is that we have one right in LA, so our LAX Local Zone

was available for Mindbody to use. Now, in Local Zones we provide customers with our core services: we have networking, we have compute, we have storage, and so on. One of the things about Local Zones is that even though our services are available there, some of them anchor into a parent Region as well. We're going to get into why that's important here in a little bit.
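As a rough illustration of why a chatty application suffers across sites, the sketch below multiplies the roughly 20 ms LA-to-Oregon hit quoted earlier in the talk by a number of sequential database calls. The call counts are hypothetical and chosen only to show the scaling; real requests may overlap or batch their calls.

```python
# Rough model: every sequential database call pays the cross-site latency once.
CROSS_SITE_LATENCY_MS = 20  # approximate figure quoted in the talk


def added_latency_ms(round_trips: int, latency_ms: float = CROSS_SITE_LATENCY_MS) -> float:
    """Extra wall-clock time when each sequential call crosses between sites."""
    return round_trips * latency_ms


if __name__ == "__main__":
    # Hypothetical call counts per user request, for illustration only.
    for calls in (1, 10, 50):
        print(f"{calls:>3} sequential calls -> +{added_latency_ms(calls):.0f} ms per request")
```

The point of the model is only that the penalty grows linearly with the number of sequential calls, which is why placing the databases close to the application mattered so much here.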

So yeah, how did Local Zones help you with that latency problem? Well, let's talk first about how easy it was to test that out. We took snapshots of what we had deployed up in Oregon and restored those down into the LA Local Zone. We didn't even have to extend the VPC, because we were already there. We ran our tests and, surprisingly (we all know about LA traffic), it definitely improved things, to the point where it freed us up to go back

to that hybrid model and step away from the big bang, because, as we said, we were never going to do a big bang. Never. No way. Awesome, so this solves your problem, but there were a few challenges as well? Well, there were a few challenges. As mentioned, there are some limitations on what services are available in the Local Zones, as well as, in some cases, where those services reside. For instance, we had planned to leverage gp3 storage, but gp3 storage at the time wasn't available in the Local

Zone. Additionally, our backups: we back up directly to S3 with a third-party tool, so our backups would now take longer, because our databases, sitting together with our applications, weren't in close physical proximity to our backups. So we ended up in a situation where we had to give some consideration to what we could do. For instance, we were looking at leveraging FSx (mm-hmm) for the file share witnesses for our

clusters, but with that located up in Oregon we did have some concerns about what that latency might mean for ensuring that we had a stable environment. Yeah, understood. Now, Reghardt, I think you helped with another challenge on the storage side, right? Yeah, that's right. So what ended up happening was, like Tim mentioned, when we originally did the design, went through the OLA, did the cost analysis and all the good stuff, that was based off of Oregon, right? So with the migration to Oregon,

they came out and said, OK, great, we're in Region, we're going to use gp3. So they had a $1 million annual budget set for their EBS for SQL Server, and that was going to run entirely on gp3. Now, in the Local Zone... oh, sorry, let me just walk through that. That was going to be 146 different volumes at two and a half terabytes a pop, the kicker being that each volume was going to require 16,000 IOPS and 500 megabytes per second. Now, this was going to be

for SQL Server data and tempdb files. What we saw, however, is that in the Local Zone gp3 was not available, so gp2 and io1 were the two options. Of course we said, not a problem: gp2 cannot handle the 500 megabytes per second of throughput, so we've got to switch over to io1. So we went through and re-architected everything to run on io1, and that estimate came out to a cool $2.86 million. So just about... here's a little bit, go ahead... just a cool little

(I'm going to put that in my pocket) just a cool little $1.86 million over budget. So that was obviously not going to fly. We presented that back to them and they were like, absolutely not. So we said, OK, let's take that back and think about it again. And that's when we said, let's work with what we've got, with gp2 being the solution there. So we said, let's go ahead and do a gp2 RAID 0 configuration. Now, RAID 0: this is not RAID 5, this is not RAID

10. We're not trying to solve for availability or resilience; we're just trying to solve for performance, right? With RAID 0 we're striping the volumes together, so you can add together the performance of both volumes, and that's going to be the performance you get. So we did 8,000 IOPS and 250 megabytes per second of throughput on each of the two volumes; RAID them together and that gets us to that 16,000 IOPS and 500

megabytes per second. Now, with gp2 there's a trade-off, because performance is based on capacity: for each 1 GB of capacity that you provision, you get three IOPS on those volumes. What ended up happening is that we had to vastly over-provision capacity to get the storage performance that we wanted. That's one of the benefits of gp3, and that's why we recommend all of our customers go to gp3, because we disconnect those

two things: capacity and performance are not interlinked anymore with gp3. So after that re-architecting we were better off than with io1, but we were still over budget. So what else can we do? That's when we started looking at what's running on these volumes, and again it's the SQL Server data files and tempdb. So we started looking at the different EC2 options that we had available to us in Local Zones, and that's when we started looking at EC2 instances with locally attached NVMe SSDs that are super

high-speed with low latency, and we landed on the r5d instances. That's the memory-optimized EC2 instance family that gives you 1 vCPU to 8 GB of memory, and the one we selected has 128 GB of RAM, so the r5d.4xlarge instances were what they ended up running with. And what happened there in our story is I said, OK, now that we've got this NVMe, we're going to take tempdb and put the tempdb data files on there.
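The gp2 striping arithmetic described above can be sketched as follows. The constants are the published gp2 characteristics (3 IOPS per provisioned GiB, up to 16,000 IOPS and 250 MiB/s per volume); the function name and return shape are illustrative assumptions, not anything Mindbody or AWS actually ran, and the model ignores burst credits and small-volume minimums.

```python
import math

# Published gp2 characteristics; burst behaviour and small-volume limits are ignored.
GP2_IOPS_PER_GIB = 3
GP2_MAX_IOPS_PER_VOLUME = 16_000
GP2_MAX_MBPS_PER_VOLUME = 250


def gp2_stripe_plan(target_iops: int, target_mbps: int, volumes: int) -> dict:
    """Size equal gp2 volumes so a RAID 0 stripe across them meets the targets."""
    # RAID 0 adds up the performance of its members, so split the targets evenly.
    iops_each = math.ceil(target_iops / volumes)
    mbps_each = math.ceil(target_mbps / volumes)
    if iops_each > GP2_MAX_IOPS_PER_VOLUME or mbps_each > GP2_MAX_MBPS_PER_VOLUME:
        raise ValueError("targets exceed what this many gp2 volumes can deliver")
    # On gp2, capacity is dictated by IOPS: 3 IOPS per provisioned GiB.
    size_gib_each = math.ceil(iops_each / GP2_IOPS_PER_GIB)
    return {
        "iops_each": iops_each,
        "mbps_each": mbps_each,
        "size_gib_each": size_gib_each,
        "total_gib": size_gib_each * volumes,
    }


if __name__ == "__main__":
    # Targets quoted in the talk: 16,000 IOPS and 500 MB/s across two volumes.
    print(gp2_stripe_plan(target_iops=16_000, target_mbps=500, volumes=2))
```

With the talk's targets, each of the two volumes has to be provisioned at roughly 2.6 TiB just to earn its 8,000 IOPS, which is the capacity over-provisioning described in the talk. Asking for the same targets from a single gp2 volume fails the throughput check, which is why striping was needed in the first place.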

So by moving tempdb onto that NVMe we were able to, one, reduce the capacity and, two, reduce the performance needs on those EBS volumes. And by doing so we were able to shrink the amount of capacity (as already mentioned, we were way over-provisioned on capacity) and saw a 32% capacity consolidation, bringing us down to $768,000. But here at AWS we're all about listening to the customer and innovating on behalf of customers, and that's exactly what we did. After working with Mindbody for so long, I said,

hey, we have an internal process called a product feature request, a PFR. So I went ahead and submitted a PFR in April 2021 on behalf of Mindbody and said, hey team, we really need to deploy gp3 to Local Zones, because my customer needs it. And I'm very proud and happy to say that by July 2021 they released it. And as part of that, I think you had an interesting little sub-story as well, right? Yeah, well, on the timing of that too: definitely

I appreciate the fact that you put that in for us. I'll take that dollar back a little bit later. But we finished our migration a month after that grant was given, so we went live with the gp2 storage. But we've got some smart people who work at Mindbody, and one of our platform architects created a Python script that allowed us, behind the scenes, to convert every one of those stripes from gp2 to gp3 without any noticeable impact to the customer. Yeah, that's a really cool story. And so just remember

that on AWS you can run CLI commands against your infrastructure on the back end. It's just an amazing story about how you can convert from gp2 to gp3 in an online state and, from what Mindbody experienced, with limited performance impact. And so to wrap up that piece of it: after the conversion from gp2 to gp3 we ultimately ended up at just a little bit over $700K in annual cost. So just

kind of a neat little example of how we collaborated and deployed new features to Local Zones as well. Yeah, yeah, PFRs really drive the products that we release on AWS. Now, staying on storage: you wanted to achieve high availability for SQL Server. Can you talk a little bit about that? Oh, definitely. So, with no shared storage of any sort available in the Local Zone... About 10 years ago, a whole bunch of SQL Server MVPs were flown in to this company called

SIOS, and I didn't really pay attention at the time, because I didn't think it was going to impact me, but I remembered the product. What SIOS does is block-level replication, and we were able to configure it in a way that, on the surface, looked very much like the structure you would see for availability groups: we had identical storage underneath each node. But the way SIOS works is that it does block-level replication from the primary to the locked drive on the secondary, and it orchestrates perfectly

with SQL Server failovers and Windows cluster failovers as well. So that's how we were able to take advantage of that and still maintain a failover cluster scenario even though we didn't have shared storage. Got it. Let's backtrack a little bit. Do you want to touch on FCI, maybe? Sure. Just one thing there: with FCI, again, we touched on licensing previously, right? When you're on SQL Server Standard Edition, to get to an FCI you require

that shared storage tier, right? On AWS that's going to be Amazon FSx for Windows File Server, and like Tim mentioned earlier, that was not available in LAX at the time. It was only available in the Oregon Region, and with that latency constraint on every write, right, that was not going to work out. We also have Amazon FSx for NetApp ONTAP, as well as the more recently introduced Amazon EBS Multi-Attach on io2, for that shared storage tier. But it's just awesome to hear about

how one of our AWS Partners stepped in and helped out with a need there in the Local Zone. Yeah, yeah, we love our partners. They give you socks, did you know that? It's amazing. They do have cool socks. Awesome. So you have a plan, you had a lot of problems, and we kind of solved them. How was the migration laid out? Yeah, so there are options. AWS has plenty of different services that are available for migrating to the cloud. Unfortunately, because I was making a conscious decision

to improve both our OS and our Windows editions, that kind of put us back into doing our own thing. So what we ended up doing was using our homegrown solution for the migration: we planned to move the databases behind the scenes with this DB mover tool that we created, and from there we would treat the AGs in a slightly different fashion, which we'll get into as well. Now, this is a lot of infrastructure to stand up, right? Hundreds of servers.

How do you go about that? Junior DBAs. I wish. Well, I talked about how the partner network really helped us. Very early on we were engaged with a partner that helped upskill us in the technologies that we didn't really know and realized that we had to know in order to do this work. So we used a tool called Pulumi to do a lot of the terraforming and infrastructure builds, and built a pipeline, working with your partner to help build that out,

and then, you know, this guy right here, the team lead and junior DBA, ended up shooting all those pipelines through. We had a little bit of an issue there, I admit. Come on, I'd been familiar with this tool for all of maybe months, and we kind of over-engineered things a bit, so it took a little bit longer for us to deploy. But that was on us. The work there was seamless; we got all of that pumped out eventually. I'm from Michigan, so

I looked at it as an assembly line. I was taking care of the deployments, we had somebody else who was responsible for the database moves, and so on and so forth. That's fantastic. I see most of my customers just using infrastructure as code; nobody's touching the UI anymore. So how was the cutover, the migration? Yeah, it wasn't too bad. 18 months... I mean, 18 months of doing anything is rough. But after we had those initial hiccups, everything went really smoothly. We were doing

those database moves, scheduling them in the off hours for our customers. We partition our subscriber tier by APAC, EMEA, and North, Central, and South America, so in the overnight hours we would move those databases slowly, one at a time. And then, as we were getting the application prepared, along with the services that matched up with our central databases in our AGs, once all of the services for one AG were ready to cut over, we would leverage the maintenance windows that we have for our overnight, or

rather our monthly maintenance windows, I should say, in order to do those moves. So really we had no need to close the front door. We leveraged the existing maintenance windows we had, and we did this with very little impact to our customers. That's beautiful. So let's just recap what we went through so far. You kicked off the migration during COVID, you had your plan, you did a POC, and it didn't work: latency was too high. Then Local Zones to the rescue. You're testing that, lots of problems with storage,

you resolve that, you migrate. Now let's go back and look at the goals that you had for migrating to the cloud: you wanted to increase availability, reduce cost, and lower the operational burden. How did it go? Oh, you were listening to me talk, great. Yeah, so we were able to solve that HA issue, definitely. That was a big one for us, because imagine having a server with 1,500 databases go down and not having HA for it. So we were able to solve that. Additionally, we were able to do some

right-sizing on our compute, as we talked about, with how we were building out our failover clusters, and we did see that noticeable improvement in our spend through the creativity of Reghardt and me in those conversations. So we were fairly happy with everything. But in order to get to where we needed to be as far as our total goals, we knew that we were going to have to look at other options going forward. So

you knew that the Local Zone was basically a stepping stone, and in order to really reach your goals you had to move to the Region? We knew that we had to do another move, but we really weren't looking forward to it. To some extent we felt like we were getting prompted along by our AWS team, who knew better and were able to help us overcome our anxiety, I would say, about that move. Yeah. So once you were all done, you were running

there for a little bit and went through the stabilization phase of the project, and then we came back (I was there) and, guess what, we ran another OLA. So here's the in-cloud OLA that we ran. We said, hey, today you're spending this in Local Zones, and we think there's an opportunity to optimize if you migrate out and into Oregon, and we helped you make the business case to actually kick off your next migration, into Region. Absolutely. And let's talk about that, because now we're back to square one again, in that assess phase. What

was the planning for the next migration? Well, like you mentioned, the inventory work was fresh in our heads; we knew where everything was. Really what it came down to, in a lot of ways, was what kind of time we were going to be granted by leadership to structure what we wanted to do for the move. We did this in, what, 18 months, I think? So what's another 18 months? We were given two hours. Wait, wait, wait. Two hours? Two hours.

Can you believe that? OK, so two hours for the database. I mean, I think maybe we can figure that out, right? Well, we negotiated. Originally we were looking at six hours for the database, but no, that two hours is everything. Oh, everything? Everything. Database cutover, DNS changes, testing. We get 30 minutes. Oh, OK. So now we're going from 18 months for the migration down to 30 minutes. So let's break it apart. As you mentioned earlier, you have

your central tier, and then you have your client tier, so let's break it out into those two pieces. Were there any considerations for re-architecting or using any other technologies to try to accomplish that? Well, when it came right down to it, we were kind of locked into what we were doing. We did know that we were going to take advantage of this migration at least to correct for that striping that we did, because it's very hard to be able to

extend the storage if you're working with a striped volume, and even though we had over-provisioned for performance, we did hit at least one or two occasions where we needed to extend to a new logical volume in order to meet our needs as we had things constructed. So we were going to take care of getting that out first, and at that point we were still fumbling for a solution there. OK. And so for the Always

On availability groups did you just extend that out or yeah yeah so the availability groups were the easy part we were able to extend those same availability groups up into Oregon added a couple nodes up there well three nodes and hey three Availability Zones we could get rid of the hanky janky you know HA and DR sure so we provisioned those extra nodes for the availability group up into Oregon and then we leveraged log shipping of all things there you go to log ship from the primary AG node in

LA up to what's going to be the primary node in Oregon and you know when time came to cut those over then the solution would be to you know stop log shipping bring that instance up and then leverage automatic seeding to be able to eventually get back into an HA situation yeah really great we were able to once again you know be prepared on that move the AGs were the easy part once again all right so what about the failover cluster instances so those are a little bit more

tightly coupled what did that planned migration look like I was hoping we could avoid talking about that it's our favorite well we looked at a whole bunch of different options and threw you know quite a few of them out the window at one point we were looking at converting all of these Standard Edition clusters into Enterprise Edition so we could leverage Enterprise Edition to extend those clusters that was going to be expensive and it was going to require us to rebuild everything when we got into Oregon because you can't downsize from

Enterprise Edition to Standard so we scrapped that one right away and what we ended up deciding on though was what we termed the Standard Oprah and what that was is you know you get a Standard Edition node you get a Standard Edition node you get one too and what we did is we created a duplicate infrastructure for those clusters all new clusters up in Oregon and then we leveraged SIOS once again to do the mirroring for us so we ended up mirroring from the primary in

LA still maintaining that synchronization job to the secondary in LA but by the nature of how SIOS works you can only create mirroring jobs off of that primary because all of your secondary disks are locked so at one point as we were doing our synchronization and building out those clusters we would start to add in additional replication jobs up to both nodes up into Oregon we saw some impact I mean we're saturating you know that Highway four or Highway

five up into Oregon from LA and once we got in sync we were fine but you imagine you know 100 clusters doing all that at once it was a little rough yeah absolutely and so now you're all synced up you're ready to go we got the AGs we got the FCIs we got our data everything's built out over there how did the actual night go were you guys able to hit that two-hour or I mean 30-minute mark or did you have a problem oh God no you're not

going to bring this up I swear I was going to talk longer so that we wouldn't have time for this um one of the things that you have to do when you build out clusters availability groups whatnot is you need to make sure that you've got the ownership permissions set right on those nodes because the last thing you want to do is maybe cut over somewhere before you're expected to so one week before our migration I'm in a camper van in the middle of Eastern Washington and my phone

starts going crazy and what I found out was that there were some communication issues in the Local Zone in LA and a few of our environments I won't say just how many but we had at least a few clusters that tried to move over early and we had you know an availability group move over early so we had a little bit of latency happening when that was going on um a lot of conversations too after the fact but we were able to fail back fairly

fast on that and get back into a situation where we're ready to go the problem on top of that too going back even earlier we were in sync about two weeks before our cutover and you know this was an 18-month project I've mentioned that quite a few times about three months before our cutover the company decided that we were going to do an offsite and it ended up being that you know two weeks before the event and so on top of everything else we had to make sure we were now

ready two weeks before when we were doing that and we ran into some issues because we thought we were impacting some of our customers because we were getting alerts from some of their TAMs and SAMs that they were experiencing latency so we started turning off sync jobs and reducing things back you know looking at the clock to see how long it would take to get back in sync down the road and come to find out it was actually they were doing something called

their hell week with this customer who was a fairly large client and they were doing this to themselves so we were able to recover we recovered and then we encountered that little issue that you had to bring up thank you so much but when we got to the night of piece of cake yeah yeah you want to know how long how many minutes want to guess uh 31 12 12 minutes did it in 12 wow congratulations yeah yeah it was great we had one

cluster that wasn't serving traffic that was problematic and we were able to take care of that the next day just on our own and other than that everything went nice and smooth that's awesome yeah I remember that weekend just sitting there and praying yeah for you but it went well now let's go back and look at the original goals right cost high availability operational efficiency oh yeah well let's talk about cost first in my first career I was a production estimator so when we're starting to talk about cost I'm

you know boring DBA and accountant what the heck but we were able to recognize almost a 13% cost savings just with moving up into the parent region and additionally we expect about an eight-month ROI so we did this in June so Q1 Q2 coming right up we should be our banks covered there so that went well additionally I mentioned we were able to correct for that high availability type situation because we could now use three Availability Zones to do the work for us so um and on top of

that the big one is we were able to improve our backup and recovery by 30% so one thing I wanted to just pin on that a little bit too is you know there's certain things that no matter how much virtualization you have it doesn't solve everything and if you don't have that physical proximity or at least a fast ability to recover quickly then you're impairing your availability or your ability to improve and reduce that RTO that you've got we were

able to do that we were able to once again improve on our transaction log backup cadence by doing so because of the nature of the hardware we're now running on and ultimately you know nice and smooth we hit our mark that is beautiful so I know we're working actually we have a meeting right after this session yeah and I'm not kidding there either about what we're going to go do next yeah yeah actually you know feel free to read the slide but since we've

been practicing and rehearsing this for months things have changed and accelerated quickly as happens in the cloud we are currently going through an application modernization lab AML yep and it's not just application the meeting I've got coming up next is about the database to see if we're able to finally look at converting over from SQL Server to PostgreSQL as an option because that licensing is still a hit and we definitely want to get away from the Windows licensing and the SQL Server licensing if we can

yeah that is a wonderful program and we are trying to kind of modernize and allow you to innovate faster yep so this was an incredible story I'm sure you have a lot of scars and you learned a lot maybe you can share a little bit of those learnings yeah yeah definitely learned a lot I had a mentor early on that said if you've got 25 years of experience doing the same thing then you have you know one year of experience and this definitely stretched all of our

team into new areas that we hadn't expected so you know looking at some of these lessons learned I'd like to focus on the fact of train train train I've always been a huge advocate for learning growing and sharing and I stand by that so if you're going to encounter something like this or you're looking at it jump in jump in and start reading through everything digest blogs and all the other options that AWS provides to learn about the AWS tooling and services enlist those partners you can't do it

alone there's so many things that we didn't know about what we didn't know and having a good solid partner base that knows the ins and outs of the breadth and depth of AWS that was priceless for us that's fantastic I think we have maybe two minutes three minutes for questions if anybody wants to ask a question yes yes so when we I'm sorry the question was did we move the applications into the Local Zone when we moved database files into the region oh into the region yes yes so uh

in that two hours I should say of stress and anxiety that's where they got their turn also so for this move we did actually shut the front door for two hours that was the trade-off and that was kind of the negotiation is you know how much time would we need to be able to do this and that's what we were able to negotiate so once we moved the data platform up then it was the application and infrastructure turn to do their things yes for

the AWS gentleman why Direct Connect [inaudible] yeah absolutely so I believe you guys had Direct Connect yeah I was going to say the big thing with the data center was the fact that we were trying to get out of it yeah and so they had Direct Connect but the latency was still just too great and it's really based off your architecture right so as DBAs we all know certain applications are very chatty so if you have many smaller queries that are like singleton you know like

give me this one piece versus like batch-oriented queries where it's like hey bring me back 10 or 100 records stuff like that and that's kind of what we saw in Mindbody's application where it was making many small calls versus fewer larger calls to get the data sets back that were required and so because of that every single transaction is incurring that latency cumulatively to load that page or to return that back to the end user then you have to kind of add up every single 20 milliseconds on top of

that versus if you're only making let's say one or two database calls you can absorb that into your timeout period for the query so that was what ended up happening there but they did have a Direct Connect but it still didn't solve that problem for them yep so any challenges or issues you faced going from data centers to Local Zones with encryption of the data no so encryption was still managed as it is underneath the scenes with SQL Server using encryption and then our backups are encrypted at rest as

well so our encryption scheme just followed our data moves yeah encryption yes yeah so TDE encryption is going to provide you that you know encryption on disk and then you can use TLS to encrypt on the wire as well and that's still completely available yeah go ahead when you did the OLA did you guys find like were there any surprises like oh my gosh we found this whole group of SQL Server licenses sitting over here at this business unit we didn't know about was it when you

got that assessment was it pretty much what you thought it would be wasn't quite a transformative like so the question was did anything pop out that we didn't know about during the OLA on the data side no because we're DBAs we got our stuff together but you know with wanting to go back to a job here on Monday I'll just say that I'm not sure if that was the same on the application side yeah absolutely and kind of to continue on that you know with the

OLA you know the discovery can be as wide or as narrow as you want it to be and in our scenario you know we did multiple OLAs just on the data tier itself since that was the SQL Server licensing that we were trying to focus on the OLA definitely can extend out to cover your application stack as well where we're going to be focusing on those Windows licenses as well and to see hey how can we maybe move that application you know that .NET code to cross-platform .NET get that running

on Linux you know get that running on Graviton to try and see even additional cost savings on top of that but the OLA yes we see a wide variety and I don't have the numbers in front of me so I don't want to get misquoted but it's something in the range of like 30% that we see on the right sizing and somewhere in the range of close to 50% on licensing cost savings opportunities that we see around our customers and you know just the optimization part right reduces the CPUs and then you

don't need as much that's right good point so both Windows and SQL Server are core-based licenses so just by default by reducing your core count from right sizing you can save cost on your licensing as well and again like we mentioned before you know you have that BYOL bring your own license because we want to try and protect the investment that you already made as well as augmenting that with the license-included cost model as well for anything on AWS you know you don't need to pay for your lower environments either
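The core-based licensing point above can be made concrete with a little arithmetic. The following is only a rough sketch in Python, not anything shown in the session: it assumes one vCPU is counted as one licensable core, that core licenses come in packs of two with a four-core minimum per instance, and it uses a made-up price per core. The function names are hypothetical and your own license terms should be checked before relying on any of it.

```python
import math

# Assumptions for illustration only (verify against your license terms):
# core licenses are sold in two-core packs, with a four-core minimum
# per instance, and one vCPU is treated as one licensable core.
MIN_CORES_PER_INSTANCE = 4
CORES_PER_PACK = 2


def licensed_cores(vcpus: int) -> int:
    """Return how many core licenses an instance of this size would need."""
    billable = max(vcpus, MIN_CORES_PER_INSTANCE)
    packs = math.ceil(billable / CORES_PER_PACK)
    return packs * CORES_PER_PACK


def license_savings(before_vcpus: int, after_vcpus: int, price_per_core: float) -> float:
    """Return the license cost difference after right-sizing one instance."""
    before = licensed_cores(before_vcpus) * price_per_core
    after = licensed_cores(after_vcpus) * price_per_core
    return before - after


# Hypothetical example: right-size one instance from 16 vCPUs to 8 vCPUs
# at a made-up price of 100.0 per core.
print(license_savings(16, 8, 100.0))
```

Under these assumptions, shrinking below four vCPUs saves nothing further on licensing because of the minimum, which is one reason right-sizing and licensing are worth assessing together.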

so any non-production workloads can run on something called SQL Server Developer Edition that's completely free and does not require any licensing as well so absolutely the OLA is a great tool again to get those insights to make those data-driven decisions and with that we actually have on screen over here a QR code that calls that out there's that AWS Cloud Adoption Framework that I talked about earlier as well as that Optimization and Licensing Assessment oh shoot that just triggered something that's going to make me have to go back

and do some work I realized we haven't converted from io1 to io2 on our central tier oh yeah that's a good point wow another call to action oh my gosh another call to action there you know if you're still running on gp2 convert to gp3 if you're running on io1 convert to io2 both of those will save you costs give you more flexibility and I'd like to mention you know that conversion can be done online as well just keep on finding new ways to change things and save money love it um with that we're

just going to go ahead and wrap it up we'll take that question afterwards we just want to wrap it up and say thank you so much for being here today we really do appreciate your time please go ahead and fill out that survey when you're done and just like Uber we like five stars so uh exactly I heard that joke earlier today so you back but thank you so much for your time today I really appreciate it thanks thank you
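Going back to the Direct Connect answer in the Q&A, the chatty-application point is easy to see with a small calculation. This is a minimal sketch in Python under stated assumptions: database calls are made one after another, each call pays the full network round trip, and the call counts and per-query execution times are invented for illustration. Only the 20 milliseconds figure comes from the talk, and the function name is hypothetical.

```python
def page_load_ms(db_calls: int, round_trip_ms: float, query_ms: float = 1.0) -> float:
    """Estimate page time when calls run sequentially.

    Each call pays one network round trip plus its own execution time,
    so network latency is multiplied by the number of calls.
    """
    return db_calls * (round_trip_ms + query_ms)


# Hypothetical chatty page: 200 singleton queries, 20 ms round trip each.
chatty = page_load_ms(200, 20.0)

# Hypothetical batched page: 2 larger queries that each take longer to run.
batched = page_load_ms(2, 20.0, query_ms=15.0)

print(f"chatty:  {chatty:.0f} ms")
print(f"batched: {batched:.0f} ms")
```

With these made-up numbers the chatty page spends seconds waiting on the network while the batched page absorbs the same round-trip latency almost unnoticed, which matches the speakers' point that a faster link alone does not fix a call pattern like this.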