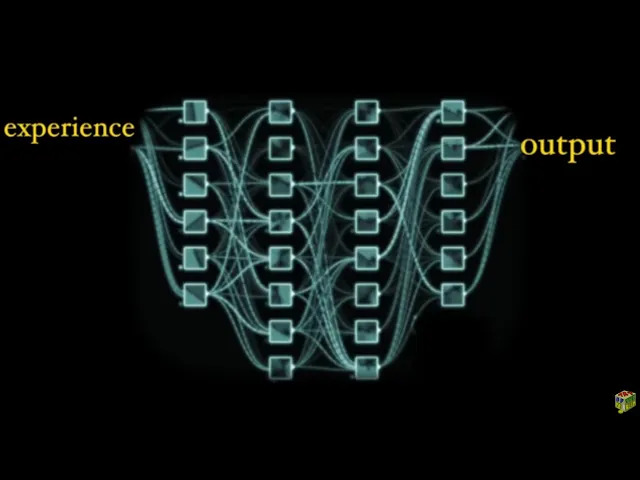

Since the mid-20th century, people have been dreaming of making computers that mimic the human mind by letting them learn on their own from experience, using a new type of computer inspired by biological brains, known as neural networks. Neural networks remained a more or less obscure corner of computer science since they were conceived in the 1950s, but since around 2009, due to a rapid surge in computer power, neural networks are suddenly performing functions we never thought possible, leading to a second computing revolution we call deep learning.

Traditionally, in the 20th century, the approach to computer design was to give computers a list of explicit, human-written instructions to define their actions: a program. Regardless of what these programs did, they were essentially sequences of logical decisions. However, with neural networks, we throw away this human-centric view of programs. Instead of having humans input explicit instructions to define the behavior of a computer, the neural network learns everything by itself using direct experience. Starting with a random connection pattern, it gradually self-wires into parallel patterns of computation in order to perform complex functions automatically, often achieving human or superhuman performance on a growing set of tasks which recently were thought far out of reach of machines.

Let's look at a few practical problems first to get a sense of this new power. Take tic-tac-toe, for example. The board is small and the length of the game is short, so it's easy to make every decision by checking all future moves until the game is over. Chess, on the other hand, was much harder, because the board is bigger and the games are much longer, so the number of possible sequences of moves quickly explodes, easily greater than the number of atoms in the known universe. The only way to play chess is to make shortcuts, which take the form of best guesses. The chess masters described these moves as feelings, not calculations.

In the 1990s, the Deep Blue computer developed by IBM was programmed by asking chess champions for the logic behind their strategies. This led to sets of rules which were connected together in a huge, step-by-step decision-making algorithm, which famously beat the chess master Garry Kasparov. But to people in the computer science community, this felt more like a publicity stunt than a real revolution. It wasn't satisfying that a machine following many thousands of human rules could do something about as well as a single human can.

But compare that to the recent deep learning approach applied to chess. Instead of using human-designed rules, researchers threw away all hard-coded knowledge about chess, gave the computer only the rules of the game, and let it learn chess entirely through self-play. Starting with a random strategy and feedback on wins and losses, it absorbed winning chess strategies which were far beyond human capability. It did this self-learning in a matter of hours and beat the human-designed computer chess programs, which represented 30-plus years of expert work, and it keeps getting better. Kasparov called it alien chess, because it would think deeper and wider than a human can. And today, right now, chess players and masters all over the world are working with these systems to learn new strategies of chess never before seen.

"You know, it's always fun to see someone move something with a really iconoclastic style, so some of the things that AlphaZero is doing are just incredible."

Another example: consider translating between languages. Up until recently, computer translation was built on huge lookup tables of existing translated text to reference, along with a huge web of rules for each language. At Google, for example, it was developed with the involvement of hundreds of human experts and linguists. This resulted in a complex translation machine which worked for a word or two but quickly broke down into gibberish, especially beyond a sentence. However, compare this to the recent deep learning approaches to translation, where researchers threw away all human-designed knowledge. Instead, they started from scratch, exposed a neural network to millions of examples of human-translated text, and let it learn the translation process on its own by absorbing the patterns involved in translation. Rapidly, the quality of the translation system improved: the word error rate on simple translations dropped from 20% to near 0% over the short term.

The power of deep learning is even more apparent when we look at intuitive problems. By intuition, we mean actions we perform but can't perfectly describe in words, such as the problem of recognizing a face. Seeing a face is more of a feeling we have, difficult to explain

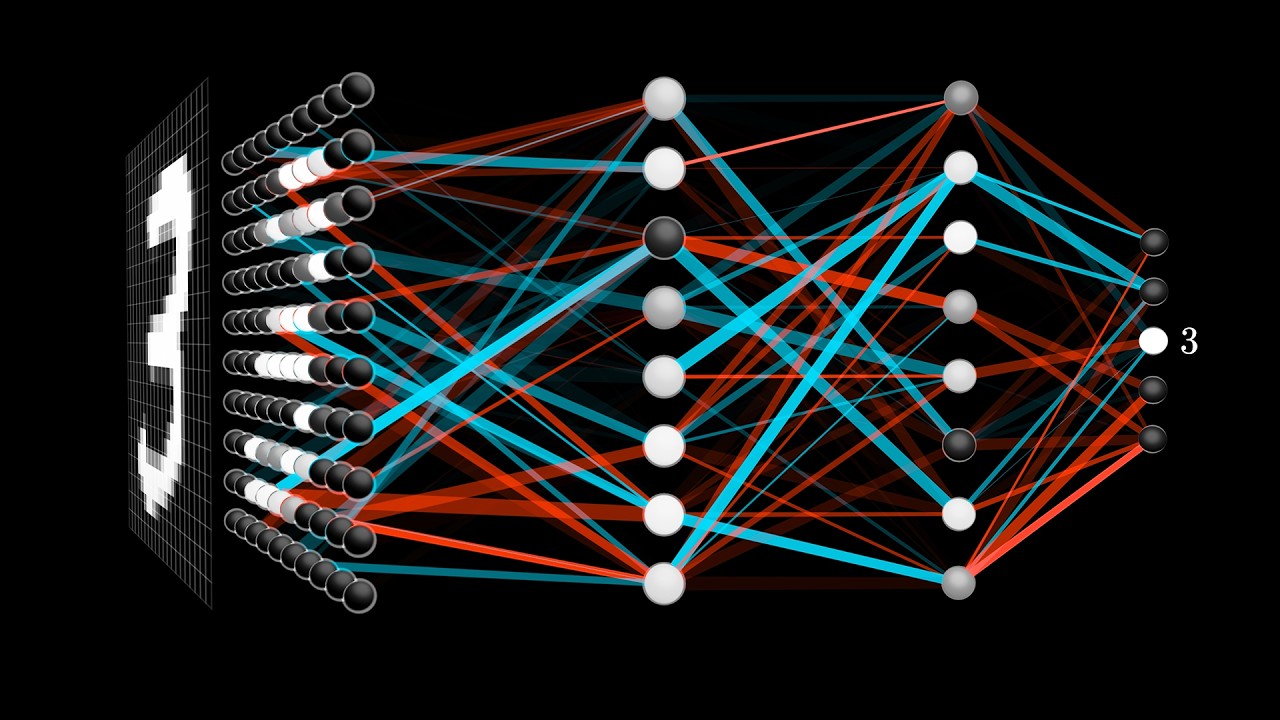

in a sequence of instructions. This is why the idea of a human writing a huge series of instructions to recognize things in images seemed near impossible. The best minds, working together on this problem for over 50 years, ended up with algorithms which failed about a quarter of the time: useless for anything practical, especially critical applications. That was until people flipped to the deep learning approach and instead collected millions of examples of images which already had a human-made label and fed them into the neural network at the level of individual pixels to learn from. Using the

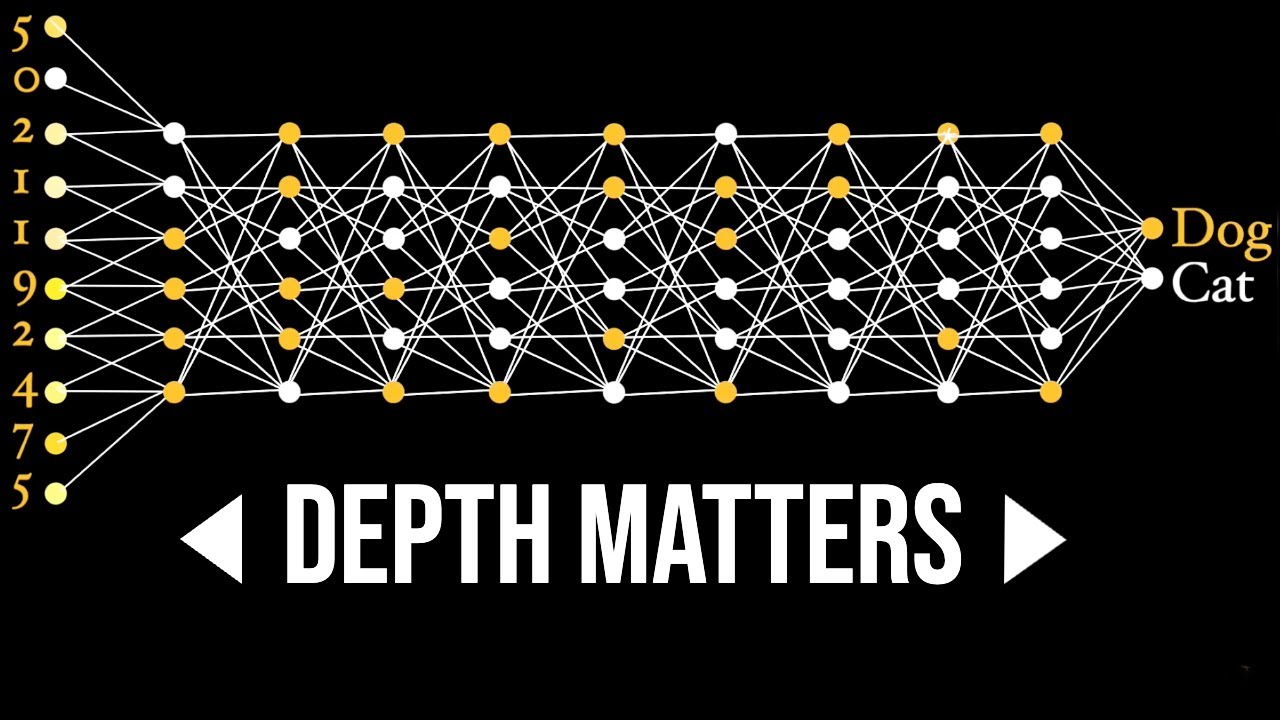

human-provided labels as initial feedback, the network then absorbed the statistical information in the images from the pixels up and separated it into conceptual, or word, buckets, where the idea of a dog versus a cat comes down to a unique pattern of activations inside the network. In 2012, this was famously demonstrated with a huge breakthrough in a computer vision competition called ImageNet, where systems based on neural networks suddenly cut the error rate down by over 50 percent in one year. Within just a few years, the machines were superhuman at recognizing many kinds of images,

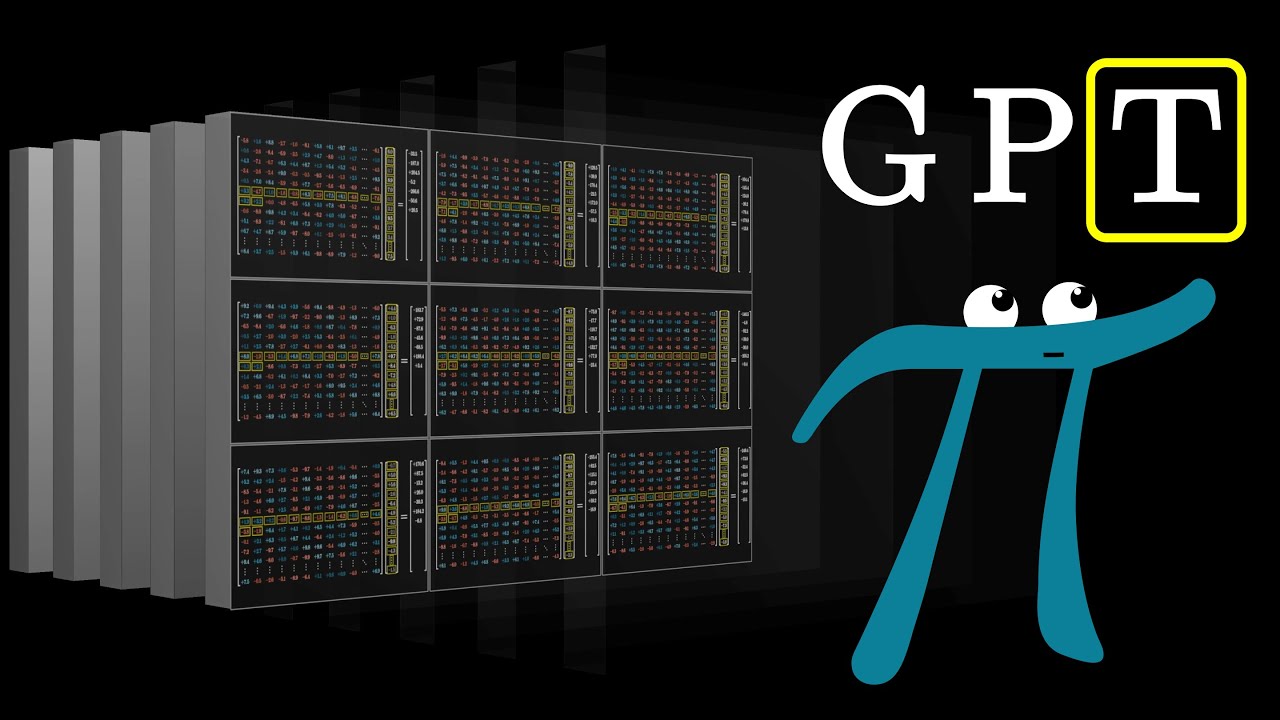

so the field of image diagnosis, where we are looking for an illness in an image, had to adapt to this new tool, which can often identify patterns that humans miss. By now the trend should be getting clear: the old human-engineered, rule-based systems would hit a wall in performance, while the deep learning systems just keep improving, often beyond a human level.

So far we've only been looking at this from the perspective of using neural networks to analyze information, but we can also run them in reverse to generate information. For example, a network trained on lots of human text can spit out random essays on any topic which are difficult, if not impossible, to distinguish from human writing. They were so good that the researchers who first saw the results decided it was best to hold the system back from the public, for fear it could be used to generate fake but realistic information of all kinds, perhaps in ways they haven't yet imagined. The same thing happened with images. For example, a neural network which learned to recognize faces can endlessly generate new images of human faces that don't exist. As of today, you can no longer trust a photo or a video in the same way.

So at first, we will experience this new computer revolution through computers which can both trick us like never before and fill in our blanks in ways people have been dreaming about since the dawn of computers. It's the computer science equivalent of the realization that the Earth isn't the center of the universe: humans aren't the center or upper limit of intelligence. This advance really breaks down into two key ideas we will explore in this series. The first was to let machines

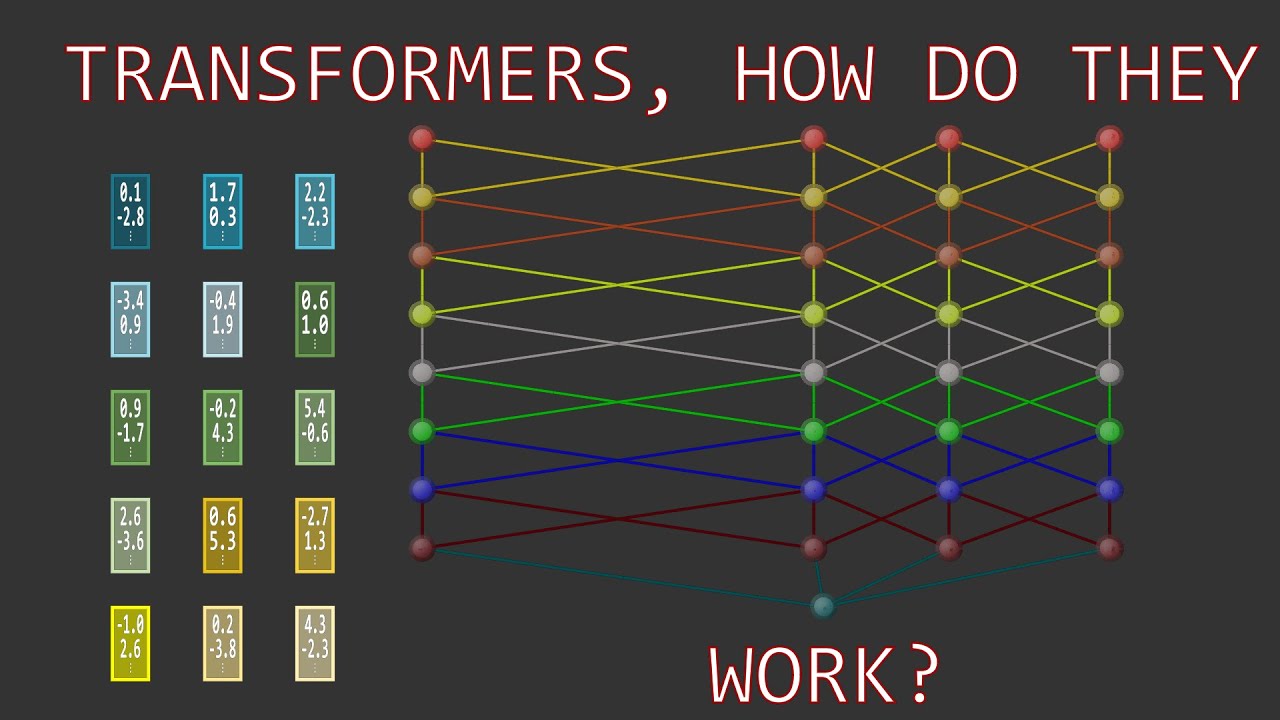

learn knowledge instead of hard-coding it into their instructions, known as the machine learning paradigm.

"Every time he does something a little closer to what we want him to do, we reinforce him. Twenty minutes, and the pigeon has learned [unclear]."

The second idea was to move away from following a sequence of instructions based on words and move towards using parallel patterns of activity as the unit of computation, known as a distributed representation. Having a symbol-only view of the mind was described as the classic mistake by Geoffrey Hinton, who is known

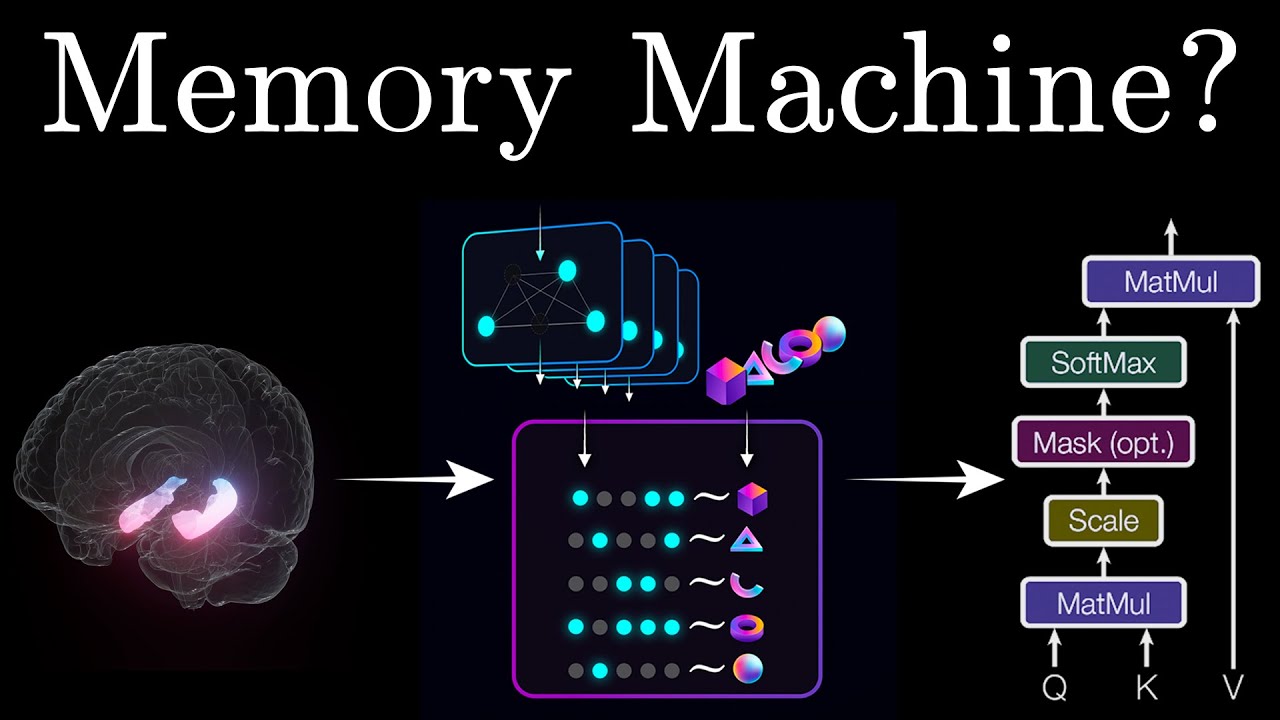

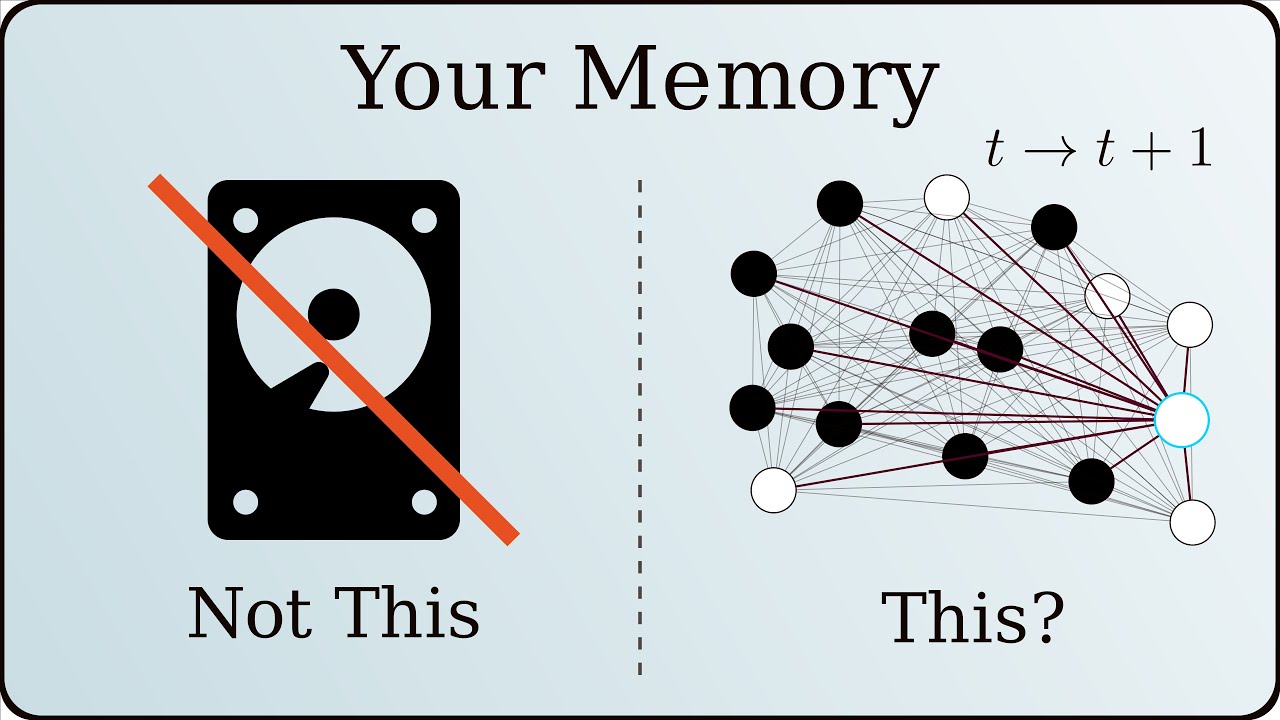

as the father of deep learning:

"In fact, still, most people in AI, they made a very naive mistake, which is they thought that strings of symbols come in as words when you say something, strings of symbols come out, so they think what's in between must be something like a string of symbols. Even though you know that what's in the brain is just big vectors of neural activity. There's no symbols in there."

Instead, what goes on inside the brain looks like it happens at the level of patterns, not symbols. These patterns take the form of a cascade of electrical pulses, which we can see are unique to different thoughts.

"And that's not meant to be a joke. That's what I believe a thought is. A thought is an activity pattern in a big bunch of neurons."

Only later in life do humans start using symbols to express these thoughts using language, working at the level of patterns of activations, or feelings, first, not symbols first. This is a biologically inspired view of thought that neural networks exhibit. It gives machines what we call a sense of their inputs, or a form of intuition.

But of course, there are many limitations, concerns, and open questions about learning with neural networks, and parallel questions about how the brain functions, of which we have a very limited understanding, especially when it comes to explaining what exactly makes the human mind unique. And so, to begin, we need to go way back in time and think about what we mean when we say intelligence.
