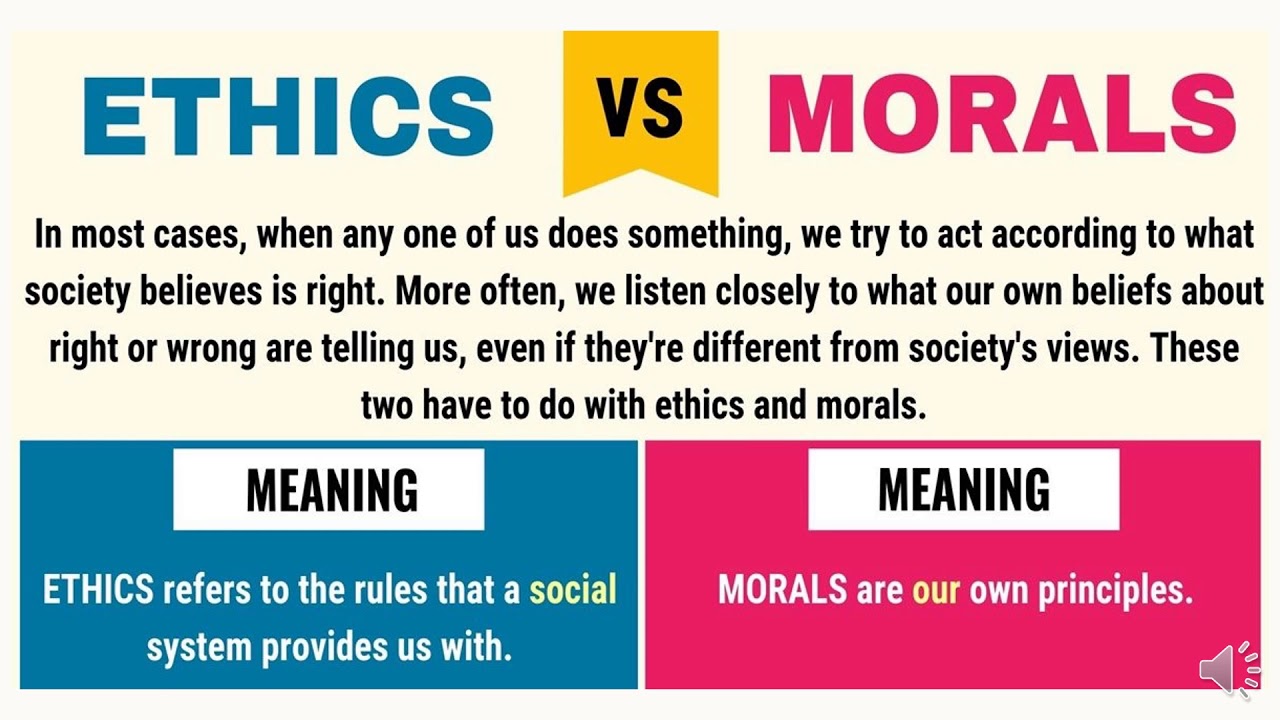

[Professor Robert Prentice] Being aware that an issue presents a moral dimension is step one in being your best self. Step two is moral decision making. Moral decision making is having the ability to decide which is the right course of action once we have spotted the ethical issue.

Sometimes this can be very difficult, as multiple options may seem morally defensible, or, perhaps, no options seem morally acceptable. Sometimes people face difficult ethical choices, and it is hard to fault them too much for making a good faith choice that they think is right but turns out to be wrong. However, most white collar crimes -- over-billing, insider trading, paying bribes, fudging earnings numbers, hiding income from the IRS, and most other activities that lead people to end up doing the perp walk on the front page of the business section -- do not present intractable ethical conundrums.

They are obviously wrong. The problem is not that we haven't read enough Kant or John Stuart Mill. More commonly, the problem is that we are unaware of psychological, organizational, and social influences that can cause us to make less than optimal ethical choices.

Our ethical decision making is often automatic and instinctive. It involves emotions, not reasoning. When we think that we are reasoning to an ethical conclusion, the evidence shows that we typically are simply rationalizing a decision already made by the emotional parts of our brain.

Our brains' intuitive system often gets it right, but not always. So we should never ignore our gut feelings when they tell us that we are about to do something wrong. But our intuition does not always choose the ethical path.

An important reason that the intuitive/emotional part of our brain errs is the self-serving bias, which often leads us to unconsciously make choices that seem unjustifiable to objective third party observers. As a simple example, a U.S. News & World Report survey asked some people: "If someone sues you and you win the case, should they pay your legal expenses?" Eighty-five percent of the respondents thought this would be fair. The magazine asked others: "If you sue someone and lose the case, should you pay their costs?" Now, only 44% of respondents agreed, illustrating how our sense of fairness is easily influenced by self-interest.

[James] Probably every day there is a moment when you think like, "Should I do this, should I not?" It's gonna be easier for me to do it, but it doesn't mean that it's gonna be the right thing to do. Sometimes I'm really tired of grading, for example. Well, my job is part of me doing a PhD and doing a PhD is my first priority so I have to take care of my PhD instead of my teaching and I think that's part of rationalizing.

[Professor Robert Prentice] If we are not careful, we will not even notice how the self-serving bias influences our ethical decisions.

Authors Bronson and Merryman report that "if you're a Red Sox fan, watching a Sox game, you're using a different region of the brain to judge if a runner is safe than you would if you were watching a game between two teams you didn't care about."

So, how can we combat the self-serving bias? There is some experimental evidence that if we know about the self-serving bias, we can arm ourselves against it and minimize its effects.

[Claire] I worked in India for 2 and a half years and I worked very closely with private enterprises but also with state and local governments. As some people may be aware, there's a culture of bribery in India that's quite prevalent. That was a very difficult situation for me to be in because I could see the end benefit of the program but I knew in order to get there I would have to do something that I felt was unethical, which was pay a bribe.

I did find myself rationalizing it at first, like is it really that big of a deal? I mean, I was working through that whole ethical and moral decision making process where you're sort of fighting between your self-interest and your gut. Ultimately, you know, I, again I relied on that gut instinct that I spoke about earlier and I didn't pay the bribe.

[Professor Robert Prentice] We must focus not just on being objective, but on doing what it takes to ensure that others see us as objective. We will naturally judge our own decisions with a sympathetic eye, but we know that others will not necessarily do so. So if we do what it takes to cause objective third parties to trust our judgments, we should go a long way toward overcoming the impact of the self-serving bias.

[Claire] At the end of the day when I would step back from my work, I didn't want to participate in a system that I didn't believe in and I felt like justifying the outcomes could be a very slippery slope.

[Professor Robert Prentice] We should also pay especially close attention to our profession's code of conduct and our employer's code of ethics, because such standards are normally aimed primarily at minimizing conflicts of interest and their unconscious impact on our decision making. The self-serving bias is far from the only psychological or organizational factor that can cause us to make the wrong ethical choice, but it's certainly a big one!

I think if you surround yourself with good people who, you know, they have good values and you know, you're able to kinda reveal your vulnerability, like I struggle with ethical decisions because they're not always straightforward, they're not clean-cut.

Yes, we are gonna try... always try to do the best for ourselves before we do it for the rest. And when you, like, accept that, you start knowing, like, you start understanding that there is a process of rationalizing.

[Howard] When your gut instinct is different from your head, from what your brain is telling you... it's not a good feeling. The best feeling is something that you know is right in your head and that you feel is right and you make a decision and you go forward with that.