My goodness, AlphaGeometry, an amazing Google DeepMind paper fresh out of the oven. And I think this paper might be history in the making. So, how is an AI that learned from 100,000,000 mathematical problems able to compete in the International Mathematical Olympiad?

That is perhaps the most prestigious math competition in the world. So, did they achieve a breakthrough? Well, let’s see together.

Dear Fellow Scholars, this is Two Minute Papers with Dr. Károly Zsolnai-Fehér. Now, competing on such problems is trouble. This requires not just a huge glorified calculator for adding numbers, but it requires planning your solution, logic, reasoning, and more.

But, of course, GPT-4 can do all of that, so just give the problems to it and off you go. End of the video, right?

Just as a demonstration, GPT-4 can ace the bar exam, would be hired at Amazon for its coding skills, and it is better than almost all humans at the Biology Olympiad. So, it can sweep all of these, easy, right? Well, let’s see, if you try to use GPT-4 and give it 30 of these tasks, it can solve exactly…wow.

It can solve zero of them. I hope this gives you a feel of how difficult these problems are. Devilishly difficult.

And scientists at DeepMind just proposed a system for these problems that is about 100 times smaller than GPT-4? I can’t believe it. But to start, how do you even solve such a problem?

Let’s see an easy example. For instance, if we ask a human mathematician to prove that there are infinitely many primes, we could try to prove it by listing them. But no one can list infinitely many numbers, that is impossible.

No. So what do we do instead? How do we do the impossible?

Well, instead we start with the assumption that there is a finite number of primes, and then find a contradiction that says this assumption cannot possibly be true. This part of the proof requires thinking outside the box. It requires a moment of brilliance.

And without it, the problem is intractable. It is akin to pulling a rabbit out of your hat. And when you have the rabbit, mechanically finishing the problem is relatively easy.
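To make the rabbit concrete, here is the classic argument usually attributed to Euclid, written out as a short sketch. The symbols $p_1, \dots, p_k$, $N$, and $q$ are just notation chosen for this illustration. The rabbit is the number $N$; everything after it is the mechanical part.

```latex
\textbf{Claim.} There are infinitely many primes.

\textbf{Proof.} Suppose, for contradiction, that $p_1, p_2, \dots, p_k$
are all the primes. Now comes the rabbit: consider
\[
  N = p_1 p_2 \cdots p_k + 1 .
\]
Since $N > 1$, some prime $q$ divides $N$. By assumption, $q = p_i$ for
some $i$, so $q$ also divides $p_1 p_2 \cdots p_k$. Hence $q$ divides
the difference
\[
  N - p_1 p_2 \cdots p_k = 1 ,
\]
which is impossible because $q \ge 2$. So the list of primes cannot be
finite.
```

Note that $N$ itself does not have to be prime. The argument only needs $N$ to have some prime divisor that is missing from the list.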

And the strategy could be that if the rabbit didn’t work, try to pick a new one. We can do that. But, can an AI, can a machine pull a rabbit out of a hat?

And the initial answer is no. Mostly not. But let’s try anyway.

This is how a human would do it, and here is their proposed AI that could hopefully try to do it. So, does it work? Well, let’s have a look.

When given a problem, with blue, it first creates the key ideas, the rabbit, and then, the green part is the remainder of the calculation that leads to the solution. Okay, but this was easy peasy. Now give me some proper problems.

Oh my, now we’re talking! So, little AI, can you solve this? Whoa, it pulls the blue rabbit out of the hat, and then, runs the green calculations until it solves it.
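The blue-then-green pattern can be sketched as a tiny loop. This is a toy illustration under loud assumptions, not the paper's code: the names `deduce` and `solve`, the string facts, and the two rules are all hypothetical, and a fixed candidate list stands in for the part of the system that proposes the rabbit.

```python
from typing import FrozenSet, List, Optional, Set, Tuple

# A rule says: if all premises are known, the conclusion is known too.
Rule = Tuple[FrozenSet[str], str]


def deduce(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """The 'green' part: apply rules mechanically until nothing new appears."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def solve(facts: Set[str], rules: List[Rule], goal: str,
          candidates: List[str]) -> Optional[List[str]]:
    """Try plain deduction first; if stuck, try one 'blue' rabbit at a time."""
    if goal in deduce(facts, rules):
        return []  # no rabbit needed
    for rabbit in candidates:
        if goal in deduce(facts | {rabbit}, rules):
            return [rabbit]  # this rabbit unlocked the proof
    return None  # none of the candidates worked


# Hypothetical toy problem: show that the base angles of an isosceles
# triangle are equal.
FACTS = {"AB = AC"}
RULES: List[Rule] = [
    (frozenset({"AB = AC", "D is midpoint of BC"}),
     "triangles ABD and ACD are congruent"),
    (frozenset({"triangles ABD and ACD are congruent"}),
     "angle ABC = angle ACB"),
]
GOAL = "angle ABC = angle ACB"
CANDIDATES = ["E is midpoint of AB", "D is midpoint of BC"]

result = solve(FACTS, RULES, GOAL, CANDIDATES)
print(result)
```

In this toy, the first candidate leads nowhere, so the loop discards it and picks a new one, which mirrors the "if the rabbit didn’t work, try to pick a new one" strategy from earlier.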

And make no mistake, this is just an excerpt of the solution. Now hold on to your papers, Fellow Scholars, because the full solution looks more like this. My goodness, look at that.

More than a hundred steps have been concealed here. And it had done all of this correctly. And that is not even the longest proof it is capable of writing.

Not even close. Wow. So, how good is it?

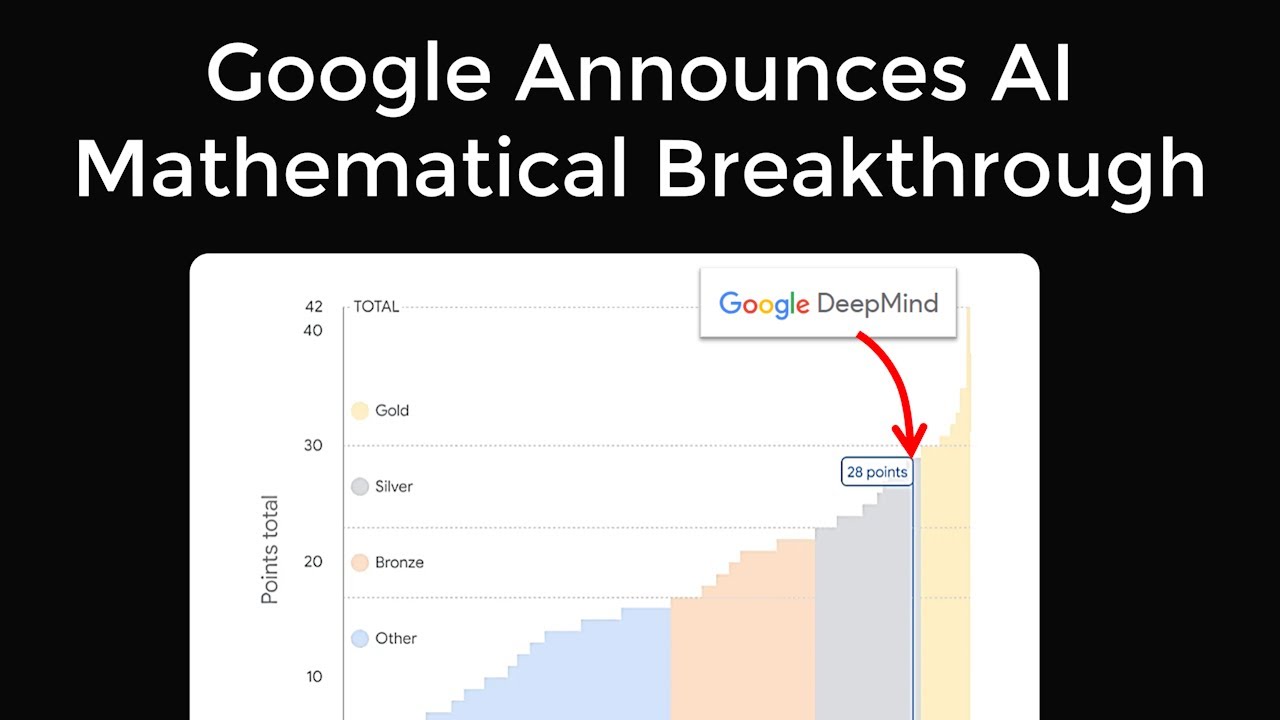

Well, a previous technique was this good, and the new technique without the rabbit is this good. So it is almost as good as the average mathematical olympiad contestant. Note that these are really smart people, so the average of those is also really smart.

And it can compete with that. But, wait a second. This can run the mechanical calculations, but it only takes you so far.

You also need the brilliance to pull the rabbit out of the hat. And as soon as we add the rabbit part of the solution, what happens? What?

Are you seeing what I am seeing? It is nearly as good as the smartest of these super smart people. And, Fellow Scholars, if you think that is impressive, hold on to your papers because we are just getting started.

Here are 2 mind-blowing facts about the paper: One, it learned from scratch by itself, without any human demonstrations. Yes, the proposed system does all this without human intervention. This is essentially an AI implementation of the two modes of human thinking, and that is thinking fast and slow.

Thinking fast is about quick, instinctive responses, like reading something, while thinking slow involves deliberate, logical, and calculated decision-making. This can do both as well as some of the smartest humans can do. So when we are worried that this cannot possibly get any better, because there are no humans good enough to teach it anymore, now we know that it only needs synthetic training data, so it can learn by itself.

And it has already found more general, more elegant solutions for some of the tasks than humans did. Two, this project is open source from day 1. Every piece of the solution is out there for you, for free.

Yes, you Fellow Scholars can run your own experiments with it. And all this with a model that is about 100 times smaller than GPT-4. An absolute slam dunk of a paper.

We are still early, but I think it might be fair to say that this is a breakthrough. Now, as incredible as this AI is, note that it is still relatively narrow. It can do geometry, but it cannot play StarCraft or do anything else.

However, the ideas and concepts described in the paper are general enough that they can be applied to other problem domains as well. And that, Fellow Scholars, is going to be a series of incredible breakthroughs.

By the way, it is possible that I will visit San Francisco around mid-April, for the first time ever. If this is the case, and you are at a local lab like DeepMind or OpenAI and would like me to visit, or if there is someone I should really meet, please let me know on Twitter/X.
