The internet has been flooded the past few months with AI startups and novel AI tools. And while some of these companies do interesting AI research and create their own models for specific tasks, many of them are based on Large Language Models LLMs, and right now most likely they just use the OpenAI API. And I don’t want to disparage using this API, infact this API is incredible, because it’s so easy to add (meaningful) AI features into products.

But how can you do that securely? How can you defend against attacks such as Prompt Injections? That’s what we will explore in this video <intro> To be able to come up with a good defense for a system, it’s important to understand the offense.

What exactly is the threat model. And a big problem is that we don’t really know yet what the threat model is. AI can be implemented in lots of different ways, some we have seen, some we cannot even imagine yet.

Also we (collectively humanity) just barely understand these large language models. This is an active field of research, with new ideas and improvements constantly happening, and I’m pretty much an outsider to that field. I have an IT security background - not a machine learning background.

So right of the bat let’s state the obvious. Me, and frankly nobody else, knows yet how to solve the security issues that might arise from integrating LLMs. BUT as I mentioned I have an IT security background, and my job is it to analyze the security of various systems.

So I want to share how I currently think and approach this topic with my hacker mindset. And if you are an engineer tasked to integrate LLMs into existing products, hopefully you can take something away as well. And of course, feel free to discuss, disagree or correct statements of mine in the comments below.

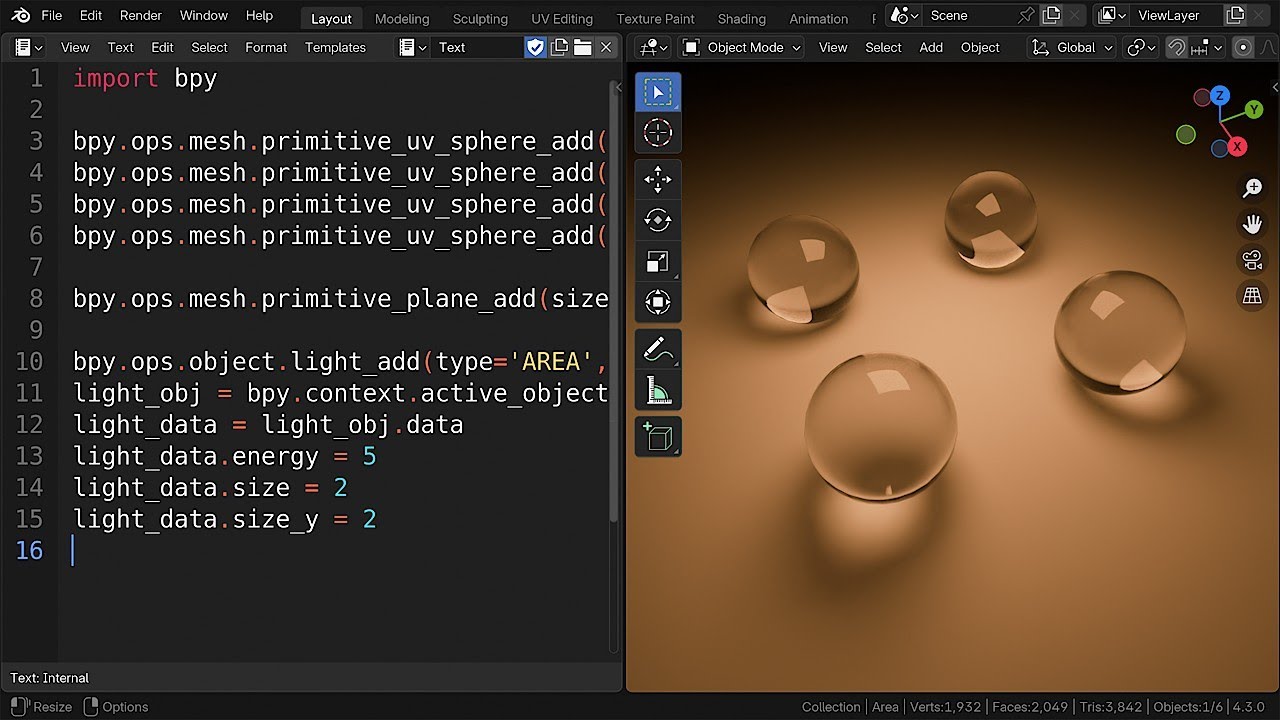

Let’s have a look again at the example from a previous video. Here we try to use the GPT-3 API from OpenAI to perform content moderation. We want to detect users that break the rule of talking about their favorite color.

So we list a few users with their comments here, BUT one malicious user writes a manipulative comment that then screws with the AI model and instead of returning the names of users that talked about their colors, it returned the false statement: that me LiveOverflow broke the rules. And this is the most important takeaway that will drive every defense decision going forward. LLMs are inherently prone to injection attacks.

Everything is input. All of this text is given to the blackbox AI model, which then produces an output. And there is no 100% safe way to ensure that malicious user input has no effect, even if it’s just a small part of the whole input, it is still input that will have an effect on the model generating the response.

BUT that doesn’t mean this is a lost cause. There are several design or architectural decisions we can make that drastically change the security impact of a prompt injection. Let’s start with a maybe weird solution.

If prompt injections are a security issue…. Let’s just redefine that… and say it’s not a security issue. Problem solved!

You think I’m joking. But I’m not. I’m serious.

And I have an example from the hacking world to prove it. Remote code execution vulnerabilities, so security issues that allow an attacker, from remote, to run untrusted code on your target machine, essentially completely hacking and taking over that computer, that is really the worst most critical security issue you can imagine. And yet I can go on google.

Type in online shell. And I can find a website where I can enter bash code, so basically linux system commands. And I can execute them.

Quite literally this is a remote code execution. I executed system commands on this linux server from right here in my room. And obviously this is not a vulnerability.

By definition this is a service that gives users access to a computer system to run code. It’s a feature, not a vulnerability. Of course this could also be implemented insecurely.

For example if using that server you could now hack deeper into the companies system, or access other user’s private data, yes those are then security issues. But just the code execution itself is not a vulnerability. And I hope now it makes click.

Just because LLMs are inherently vulnerable to prompt injection, that doesn’t mean there is a prompt injection vulnerability. You can implement LLMs where you just by definition allow users to use it however they want. And so it simply is not a concern.

But of course this excuse does not always work. If you can prove that the prompt injection has security impact, then changing the definition is not a defense anymore. So when does prompt injection become a vulnerability?

Well in my content moderation example about identifying users talking about their favorite color. The issue is not that a user can inject a malicious instruction here. The issue really is the system that is integrating this.

This is basically how the system looks like. There is the Prompt , including the malicious user input, and that goes into the LLM AI blackbox, and the output then goes into the main system. In this imaginary example the output here is the decision which user to ban.

Now one of the core principles of hacking, or defending against hacking has always been “DO NOT TRUST USER INPUT”. User input has to be validated, escaped, whatever. But what many people don’t understand is that user input is not just this here in the front.

In code review we basically look at the flow of that user input. And every piece of data that user input touches becomes tainted. We call this taint analysis.

And because the LLM seems to be a lost cause with respect to prompt injections, we just have to consider the output of the LLM to be completely untrusted user input as well. We just assume the user, through a specially crafted input, can craft any output here. And so now this is untrusted user input as well, and the actual system has to defend against it here.

Now that we understand that, we should recognize that the design of this content moderation prompt was just inherently bad. Our system expects the output to be a list of user names and trusts this to perform serious actions. And that of course is bad if we assume users can manipulate it.

So we have to completely change the prompt and change the expected output from the LLM. This then also allows us to implement proper input validation into the system. So looking at the content moderation example, one obvious solution, which also many of you suggested in the comments, is to change the prompt to a yes and no output.

“It is against the rules to write about a favorite color. Does the following comment break the rules? Answer with yes or no.

” Then comes a comment, and we prepare the answer. Let’s run it, and… there we go. Yes, breaking the rules.

So instead of having one large input, we now ask the AI for every individual comment. We also leave out any username and just focus on the comment itself. And it works okay.

I don’t break the rules. But unfortunately not every comment is caught here. Here it thinks it didn’t break the rules.

We will talk about that issue in a moment, but the important thing here is that the system expects now a yes and no as the output of the AI. So we can add input validation for that. Of course a user can still manipulate the output to be yes or no, which can be used to bypass the content moderation.

BUT that might just be accepted risks. At least you mitigated the original, and much worse attack, where the prompt injection framed an innocent user. This was the most important change we could make to mitigate risks .

try to come up with a design where the output from the LLM does not have any serious consequences. In our case we extremely limit the expected output of the LLM, so it doesn’t affect other users. Unfortunately the problem with LLMs is that usually people don’t just want a yes or no response.

WE WANT the natural language output. And a lot of products implement LLMs in a way where the output is not processed by another system, but it’s basically directly shown to the user. And so the defense we just discussed might not be applicable?

BUT the concept of not trusting user input can still be applied. And here we come to another design solution. Isolate users.

If the LLM is just fed with a user’s context and user’s input, and whatever output is just shown to that user, then malicious in, malicious out, it is just like a “self-prompt injection”. They maybe post a fun tweet about how they leaked the prompt context or so, but who cares, that’s not really a security issue. But in practice this might be very difficult to implement.

Because that user context might contain malicious input. For example if we build an AI tool that reads incoming emails, then another malicious user could send a malicious email, which then becomes context to the LLM and now we have actual untrusted input tainting the output. And the output of the LLM shown to the user might be really bad.

[pause] If we cannot create a safe-by-design system architecture, because untrusted input will always come from somewhere, we need additional defenses. Kind of like the swiss cheese model, just for AIs. It will mathematically speaking probably not be 100% secure.

BUT it seems to be the only thing we can do. So let’s talk about a few tricks to improve the resilience against malicious injections. Language models are great generalists - you all know how well ChatGPT performs ANY given task.

And that is a problem for injection attacks, because if we intend the AI to follow certain instructions, malicious input might change them. You might have setup a tool to summarise emails, but suddenly instead of summarizing emails, a malicious email crafted with a special prompt injection, could trigger the generation of a rap song about bees. A swarm of bees has come to wreak, havoc in the deer sanctuary, I won't sit back and just be meek, so prepare for my rhymin' fury!

*cough* well… maybe… it’s not bad because of me. Clearly the AI is just terrible. Anyway.

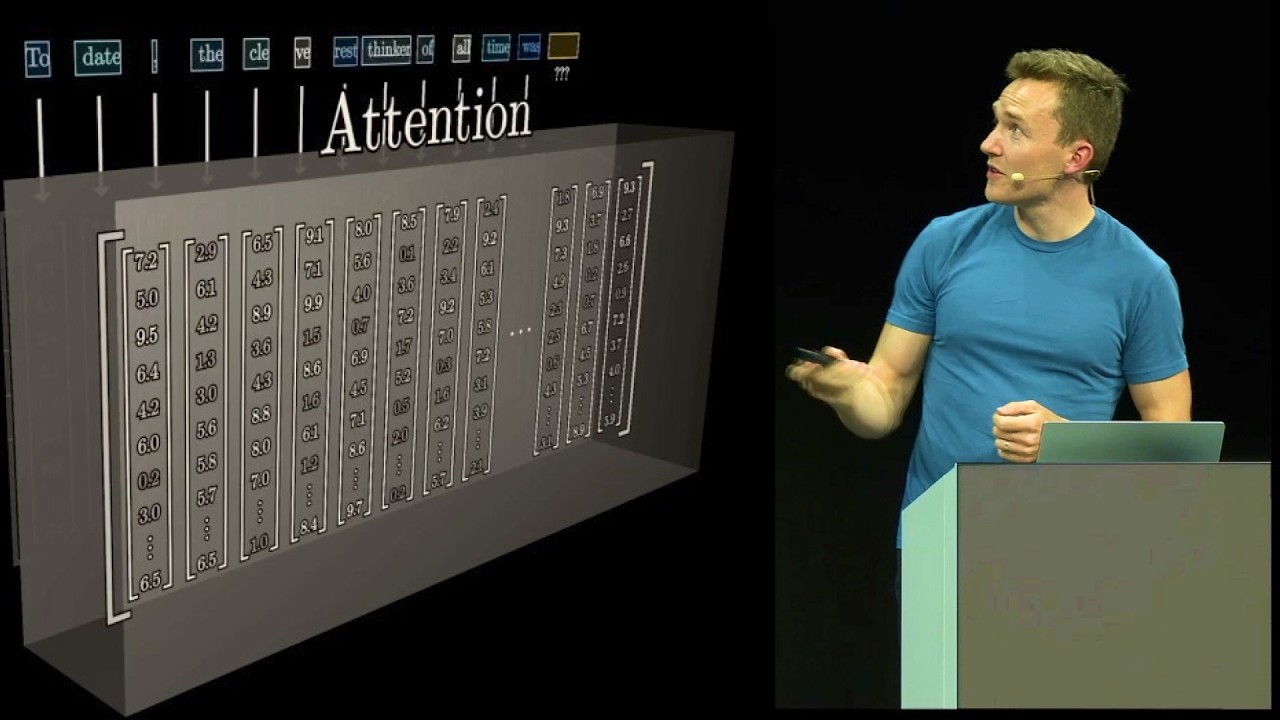

How can we make it more difficult for an attacker to manipulate the prompt instructions. How can we make the model less general and more focused on a task. In the OpenAI paper “Language Models are Few-Shot Learners” they introduce a few approaches for that.

Fine-Tuning and Few-Shot probably most important. “Few-Shot (FS) is the term [. .

] where the model is given a few demonstrations of the task at inference time as conditioning , but no weight updates are allowed. ” For our content moderation color example we could do it like this. Basically we extend our prompt with a few examples.

Yes or no. And then the last one is the actual comment we test. And now it catches the previously wrong labeled comment as well.

So we can use the strategy of few-shot in the prompt to make it harder for somebody to enter malicious text that manipulates the performance. But of course it’s no complete defense. A basic comment can still manipulate the answer and maybe evade detection.

But you can try to counter that, by including manipulation attempts in the few shots. For example this comment might have tried to force a yes response, but we clearly label it no. And maybe now we recognize this basic attempt as well.

It should be clear that this is NOT 100% defense. BUT it might be good enough. Especially with the cheese model where we combine multiple different techniques.

Now the second approach to focus the AI more on the the task is fine-tuning. “Fine-Tuning (FT) has been the most common approach in recent years, and involves updating the weights of a pre-trained model by training on a supervised dataset specific to the desired task. Typically thousands to hundreds of thousands of labeled examples are used.

The main advantage of fine-tuning is strong performance on many benchmarks. ” And the OpenAI API supports this through the fine-tuning endpoint. You can basically prepare training data with lots of comments and their label yes or no whether they break the rules, and then fine-tune a model.

If you have a lot of training data, this can be very effective. And in the long run it will also save token costs of running the model against user comments. Because you don’t need a large prompt for every input.

By the way, to generate training data for fine-tuning you can even use the language model itself. This is also described in the OpenAI paper about content detection and content moderation. “Generating synthetic data through large pre-trained language models has shown to be an effective way for data augmentation”.

So basically you ask chat gpt to write comments talking about your favorite color. And write comments not about color. And then you can generate a lot of training data for the fine-tuning step.

Now I have a few more defense tips and thoughts I want to share. Let’s go over them quickly. First, all prompt injections generally benefit from large amounts of malicious input.

The more text an attacker can input, the more likely is it that this input confuses the LLM and generates a malicious output. So simply reducing the input length of malicious input will probably help. So if you can somehow design your integration in a way where short inputs are enough, then that is probably helpful.

Next, when developing AI into your product, you probably want to add some test cases, some unit tests. Just to be sure the AI behaves in a consistent manner as you expect. So for that you probably should turn down the temperature to 0.

The closer to 0 you get the more deterministic the output will be. In reality temperature 0 is not 100% deterministic. In some cases it can still vary the output.

But it’s pretty consistent. And I think this has two advantages. First of all it helps for the test cases during development.

You can be sure that the AI model has not changed significantly and performs as expected. But secondly, If the LLM gets confused about what to generate, this is probably reflected by the token probability in the output. And if you select temperature 0, the model basically will always select the most likely option, which we hope is the intended option.

And if it’s not the intended output, if that input indeed confused the model, then it was a really “strong prompt injection”, which you can then use to figure out how to defend against that particular technique. And now the last tip. I think the more critical a calculation is, the more important redundancy becomes.

In critical systems you often run the same task twice, just to ensure the calculation was correct. Imagine a rocket performing a calculation to control thrust, or a trading bot making calculations to decide over billions of dollars. You want to be sure that no fluke, no particle flying through space flips a bit and changes the output.

So maybe it makes sense, in very critical setups. To also have redundant prompts. Or rather different prompts aimed at the same task, and see if they come to the same conclusion.

And only if both of them agree you do the automatic task. Unfortunately that is expensive. something like that easily doubles or triples your API cost.

BUT depending on your specific use-case, this might not matter at all. And the additional layer to enforce consistency can be worth it. Alright.

I think we now have a good overview of how we can approach the defense and generally safe integration of LLMs into products. I can also really recommend the content moderation paper “A Holistic Approach to Undesired Content Detection in the Real World” by openAI because it is very related. Basically it’s about the color content moderation example.

And in there they address some other issues that I didn’t mention, for example inherent biases or bad training data. So if you are an engineer trying to add large language models into your product, I think reading this paper is very important to understand the strengths and limitations better. Also OpenAI considers human red-teaming to be an important component during the training step.

Humans might just come up with very creative ideas to break the AI. So you see, the requirement for hacking and thinking out of the box is still needed in this new world of AI.