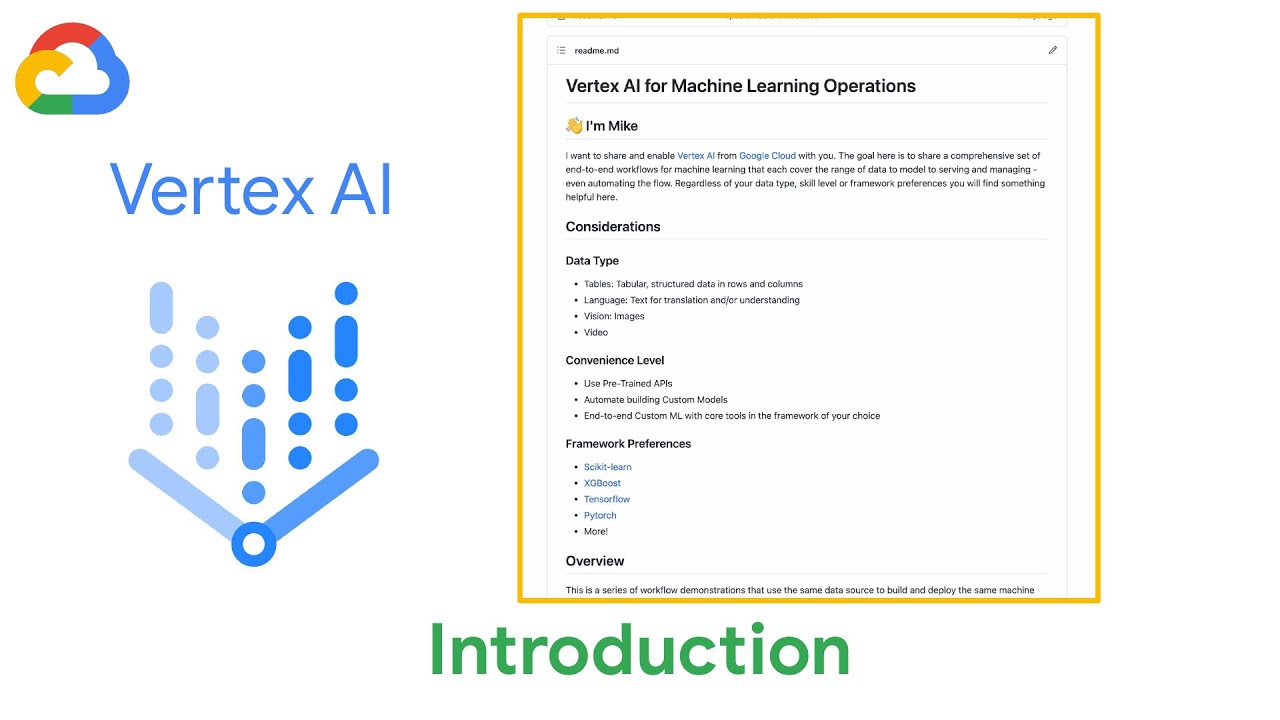

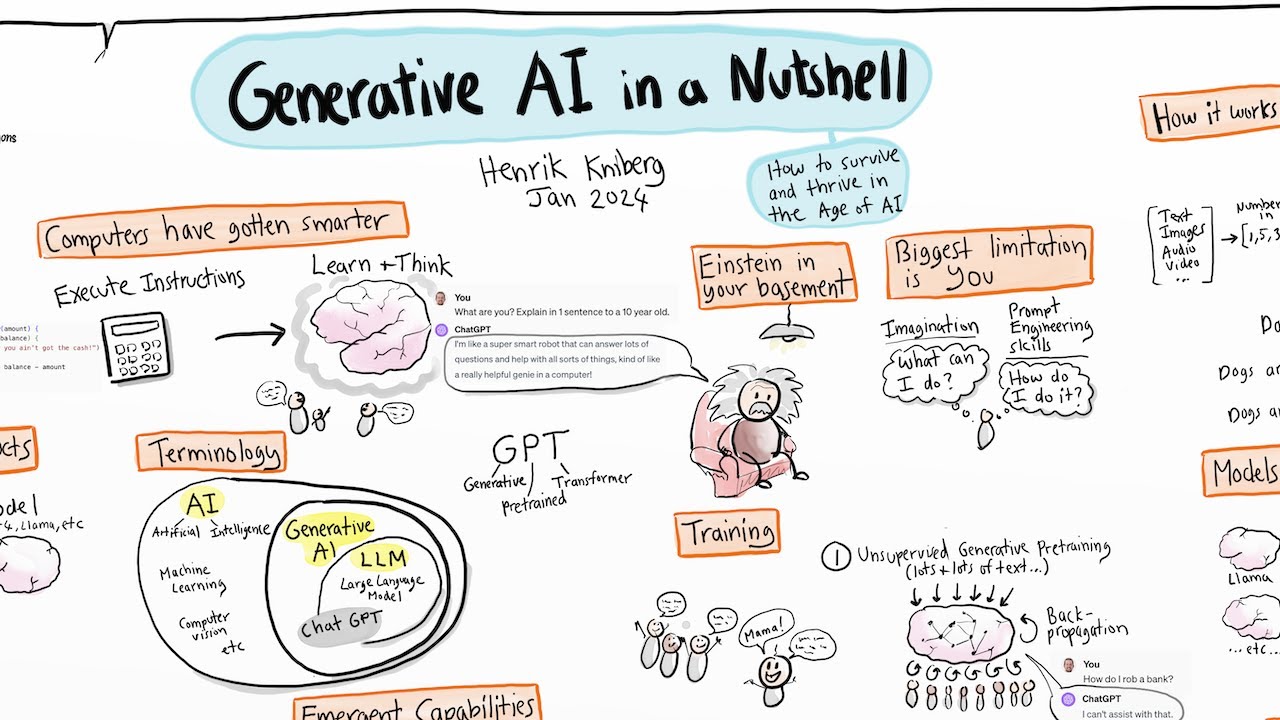

Hi, I'm Priyanka Vergadia, and this is AI Simplified, where we learn to make our data useful. In this series we've been learning about ingesting data, training models, and getting predictions. It would be handy to learn how to automate all of these tasks by implementing them programmatically. In this video we will learn how to do exactly that with the Vertex AI SDK. Now, before we dive into the SDK, let's take a quick look at the options to interact with Vertex AI. There is the Google Cloud Console. It is the UI to work with our model assets, the

datasets, the endpoints, and the predictions in the cloud. So far we've been using the console in this series, so you must be familiar, but if not, I've included the links to the previous episode below. Now, anything that you can do through the UI you can also do programmatically, and for that we have the Google Cloud Vertex AI SDK. And then there are the Vertex AI client libraries, which help us make calls to the Vertex AI API. The benefit of using the client libraries is that they are optimized for the languages that you choose to use, for

example Java, Python, or Node.js. Now the question becomes when to use the console and when to use the SDK. The UI helps us get familiar with the features and functions available in Vertex AI, so using the UI is my go-to recommendation if you are new to machine learning and AI or just prefer to do things visually. The SDK is best if you're looking to programmatically implement the steps in your ML workflow. If you are an experienced machine learning engineer or data scientist, then you would benefit from the ease of automating your

workflow. If the SDK isn't available in the language of your choice, then you can use the client libraries or the REST API. Now that we know the different ways to interact with Vertex AI, we need a fun example to play with and create a model using the SDK. I've picked out the perfect dataset for this: beans. Well, who does not like beans? Being a math nerd, I found out that Pythagoras, yeah, the one who came up with a squared plus b squared equals c squared, that Pythagoras, had an aversion to beans. Who would have thought,

right? Okay, so here's the game plan. Overall, we will get the data, explore it a little bit, then create a model and deploy it to an endpoint from which we can make predictions, and we will do all of this using the SDK. We will need tools to explore the data and prototype this ML model. For this we will use Notebooks within Vertex AI, which are hosted JupyterLab notebooks. They come with your favorite machine learning frameworks, such as TensorFlow or PyTorch, already installed, and you can also customize the hardware, so

you can use CPUs and GPUs if you need them. It also integrates with Git, so we can initialize a Git repo within the notebook, and it integrates with other Google Cloud services. So we'll put our data into BigQuery, which is Google Cloud's enterprise data warehouse, and inside the notebook we will download that data from BigQuery and explore it using a pandas DataFrame. The next steps would be creating our training job, creating the Vertex AI model, deploying it, and then

making predictions, and then finally monitoring that job in the console. Ready to go? Let's see it. In the Vertex AI console we can create a hosted Jupyter notebook, and if we click on New Instance we see that there are different types of notebooks that we can choose from. They come pre-installed with our favorite machine learning frameworks, and we can add GPUs if needed and can also install additional libraries after the notebook is created. I've already created a notebook here, so let's get into it. The first step is to download our data. I'm

using the Dry Bean dataset from UCI, which I have linked below. This is a tabular dataset that was derived from images of different types of beans, and different data points were collected on each type of bean. In manufacturing and other industries, it's common to extract tabular metadata from images and use that to train a model. I've already uploaded the data to BigQuery, and we can see the different data points in the schema. The label that we are predicting is called Class, and we can run this quick query on the dataset to see roughly

what the distribution of bean types is across our dataset. So here I'm using the BigQuery client library to download that data inside my notebook as a pandas DataFrame, and using this pandas method we can see a plot of how the data is distributed by parameters. Next we use the seaborn library to create a pair plot, which can help us identify relationships between the features in our data and the label column, Class. Now that we have a good idea of what our data looks like and how it is balanced, we are ready

to kick off the training job in Vertex AI. We initialize the project that we want to use. Then, to train this model using AutoML, we first need a dataset. We can pass the URL of the data in BigQuery directly to Vertex AI. There's no need to manually move our data; Vertex AI will handle that for us. Now if we go to our dataset in the Vertex AI console, we can see our created dataset here, and we can see more details such as the schema and the data source in BigQuery. We could kick off

the training job here, but I trust that you can click a button, so let's stick to the SDK for this one. Back in our notebook, here is the code to create the training job. This is a classification model where we are classifying the beans into seven classes, so we define that and pass all the different features that we want to use in training this model. You could add more options here, such as how you want AutoML to optimize the training, which is especially useful for an imbalanced dataset. I'm not doing that here. Finally, job.run

will run the training job. We specify the dataset we want to use, the target column or label that we are predicting, and how long we want to run the training for, and we enable early stopping so Vertex AI stops the training if it does not see that the model can be further improved. Specifying this is useful to keep the cost low. Once the model has trained, which could take some time, we can see in the console that the training job has finished, and we can see the evaluation metrics to see how our model

actually performed. The confusion matrix shows how good a job our model did in distinguishing the different bean types. Since this is a tabular model, Vertex AI also gives us feature importance out of the box with AutoML. We can see the features in our dataset that signaled our model's predictions the most. Here it looks like shape and eccentricity were the most important features. Now that we have our model created, let's deploy it to an endpoint so we can use it to serve predictions. You can do that right here in the UI, but let me show you

how to do that in the SDK. All you have to do to deploy the model is call model.deploy and specify the machine type you want to use. There are more things you can specify here, but I'm just going with the minimum. Once the endpoint is created, we can see it in the console and test predictions from our endpoint. Let's head back over into our notebook and see how to make predictions using the API. We provide a test set, then we need to create the endpoint object: grab the resource name from the deployed model and use it

here. Then we use that to make a prediction request to our endpoint, and there's our prediction. We just trained an AutoML model and made a prediction using the SDK. We could use the same dataset to train a custom model too, all using the SDK. Here are the code samples that can help you do that. Try it out yourself, and if you need help, refer to the links below. All right, so today we created a bean classification model using the Vertex AI Python SDK. Give this a try yourself and let me know how it goes in

the comments below.
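
The workflow walked through in this video (download the data from BigQuery, create a dataset, run an AutoML training job, deploy the model, and request a prediction) can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the code shown on screen: the function names, display names, machine type, and training budget are placeholders chosen for illustration, and the project, region, and BigQuery table are whatever you pass in. The Google Cloud libraries are imported lazily inside the functions so that the two small helpers can be tried without them installed. Sending feature values as strings is also an assumption about what the deployed AutoML tabular model expects.

```python
"""Sketch of the AutoML tabular workflow from the video (placeholder names throughout)."""


def bq_uri(project: str, dataset: str, table: str) -> str:
    """Build the bq:// URI that Vertex AI accepts as a BigQuery data source."""
    return f"bq://{project}.{dataset}.{table}"


def to_instance(features: dict) -> dict:
    """Convert one row of feature values to strings for a prediction request.

    Assumption: the deployed AutoML tabular model accepts string-valued features.
    """
    return {name: str(value) for name, value in features.items()}


def load_dataframe(project: str, dataset: str, table: str):
    """Download the table from BigQuery into a pandas DataFrame for exploration."""
    from google.cloud import bigquery  # lazy import: needs google-cloud-bigquery

    client = bigquery.Client(project=project)
    sql = f"SELECT * FROM `{project}.{dataset}.{table}`"
    return client.query(sql).to_dataframe()


def train_deploy_predict(project, location, dataset, table, features):
    """Create the dataset, train with AutoML, deploy, and request one prediction."""
    from google.cloud import aiplatform  # lazy import: needs google-cloud-aiplatform

    # Initialize the SDK with the project and region to use.
    aiplatform.init(project=project, location=location)

    # Point Vertex AI at the BigQuery table; the data is not moved manually.
    ds = aiplatform.TabularDataset.create(
        display_name="beans",  # placeholder name
        bq_source=bq_uri(project, dataset, table),
    )

    # Classification job. The video also passes the feature columns explicitly;
    # that is omitted here, so the job uses its defaults for the columns.
    job = aiplatform.AutoMLTabularTrainingJob(
        display_name="beans-automl",  # placeholder name
        optimization_prediction_type="classification",
    )

    # Train: target column, training budget, and early stopping left enabled.
    model = job.run(
        dataset=ds,
        target_column="Class",
        budget_milli_node_hours=1000,  # placeholder budget (1 node hour)
        disable_early_stopping=False,
        model_display_name="beans-model",  # placeholder name
    )

    # Deploy with only the machine type specified (placeholder machine type).
    deployed = model.deploy(machine_type="n1-standard-4")

    # Re-create the endpoint object from its resource name, as in the video.
    endpoint = aiplatform.Endpoint(deployed.resource_name)
    return endpoint.predict(instances=[to_instance(features)])
```

Calling `train_deploy_predict` creates billable resources, and training can take a while, so it is worth trying the helpers first: `bq_uri("my-project", "beans", "dry_beans")` gives `bq://my-project.beans.dry_beans`. For the exploration step, `load_dataframe(...)["Class"].value_counts()` would show roughly how the bean types are distributed. A real prediction request needs a value for every feature column the model was trained on.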