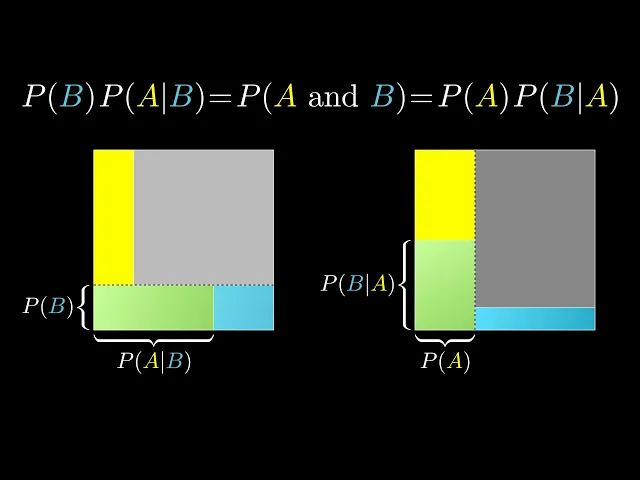

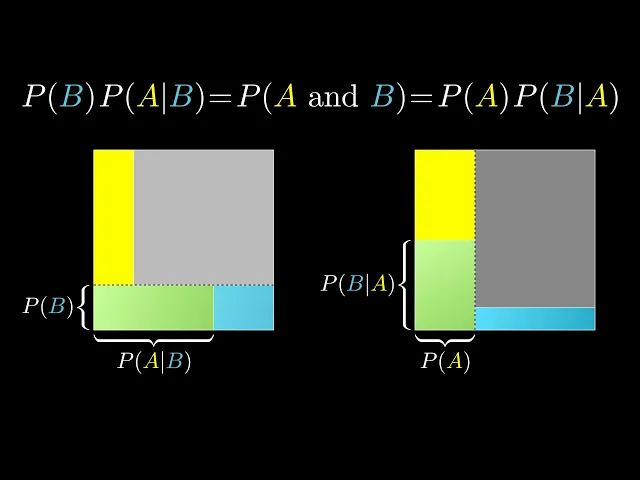

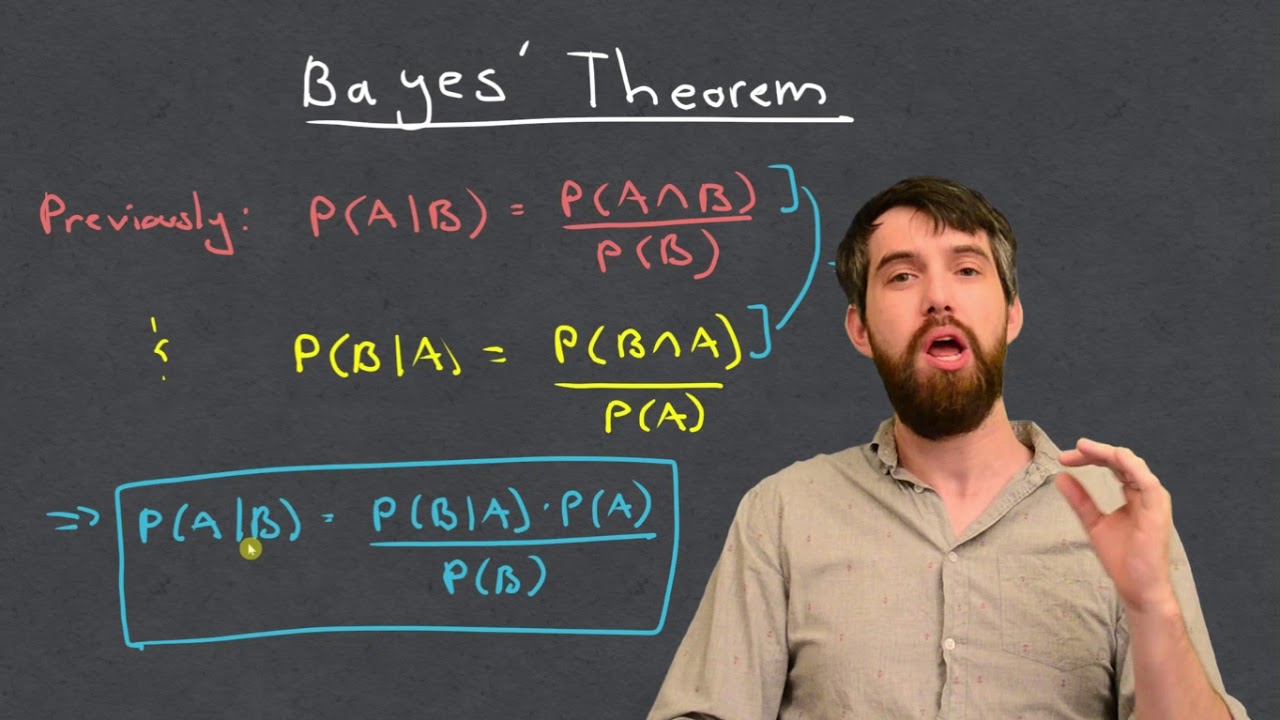

This is a footnote to the main video on Bayes' Theorem. If your goal is simply to understand why it's true from a mathematical standpoint, there's actually a very quick way to see it based on breaking down how the word AND works in probability. Let's say there are two events, A and B.

What's the probability that both of them happen? On the one hand, you could start by thinking of the probability of A, the proportion of all possibilities where A is true, then multiply it by the proportion of those events where B is also true, which is known as the probability of B given A. But it's strange for the formula to look asymmetric in A and B.

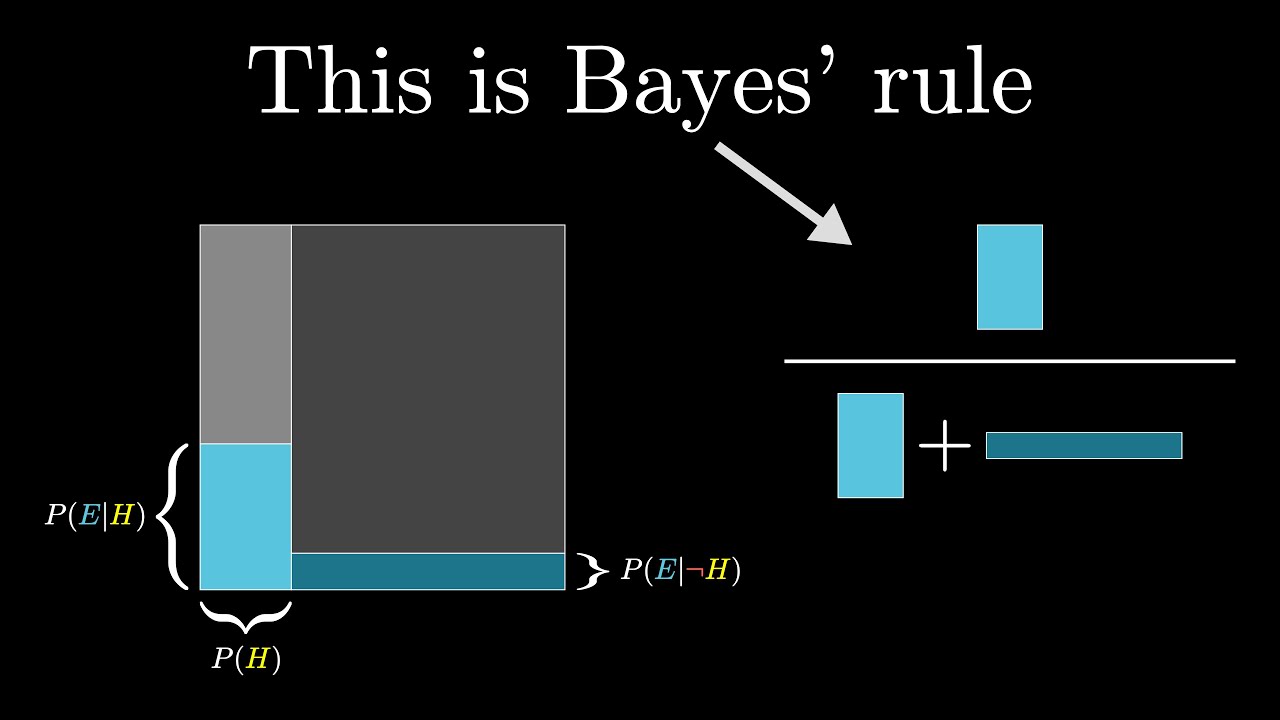

Presumably, we should also be able to think of it as the proportion of cases where B is true, among all possibilities, times the proportion of those where A is also true, the probability of A given B. These are both the same, and the fact that they're both the same gives us a way to express P of A given B in terms of P of B given A, or the other way around. So when one of these conditional probabilities is easier to put numbers to than the other, say when it's easier to think about the probability of seeing some evidence given a hypothesis rather than the other way around, this simple identity becomes a useful tool.
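Written out in symbols, the two ways of counting "A and B" described above look like this. The notation $P(B \mid A)$ for "the probability of B given A" is the standard one, and the division step assumes $P(B) > 0$:

$$
P(A \text{ and } B) = P(A)\,P(B \mid A) = P(B)\,P(A \mid B)
$$

Dividing the last equality by $P(B)$ gives the usual statement of Bayes' theorem:

$$
P(A \mid B) = \frac{P(A)\,P(B \mid A)}{P(B)}
$$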

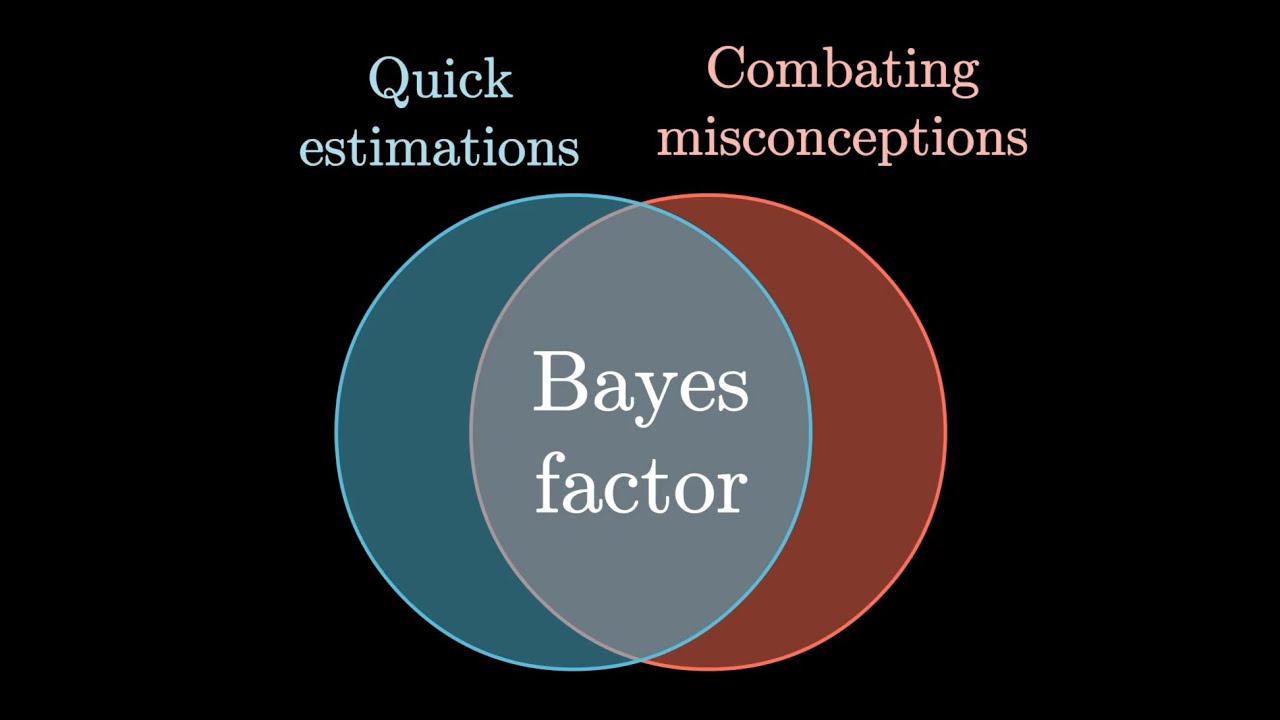

Nevertheless, even if this is somehow a more pure or quick way to understand the formula, the reason I chose to frame everything in terms of updating beliefs with evidence in the main video is to help with that third level of understanding, being able to recognize when this formula, among the wide landscape of available tools in math, happens to be the right one to use. Otherwise, it's kind of easy to just look at it, nod along, and promptly forget. And you know, while we're here, it's worth highlighting a common misconception that the probability of A and B is P of A times P of B.

For example, if you hear that 1 in 4 people die of heart disease, it's really tempting to think that that means the probability that both you and your brother die of heart disease is 1 in 4 times 1 in 4, or 1 in 16. After all, the probability of two successive coin flips yielding tails is 1/2 times 1/2, and the probability of rolling two 1s on a pair of dice is 1/6 times 1/6, right? The issue is correlation.

If your brother dies of heart disease, then given the genetic and lifestyle links at play here, your chances of dying from a similar condition are higher. A formula like P of A and B equals P of A times P of B, as tempting and clean as it looks, is just flat out wrong as a general rule. What's going on with cases like flipping coins or rolling two dice is that each event is independent of the last.
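To put rough numbers on the heart disease example, here is a minimal sketch in Python. The 1 in 4 figure comes from the example itself, but the conditional probability `p_you_given_brother` is a made-up value chosen only for illustration, not a real statistic:

```python
from fractions import Fraction

# Marginal probability from the example: 1 in 4 people die of heart disease.
p_you = Fraction(1, 4)
p_brother = Fraction(1, 4)

# HYPOTHETICAL value, for illustration only: the chance that you die of heart
# disease GIVEN that your brother does. Correlation means it exceeds 1/4.
p_you_given_brother = Fraction(2, 5)

# The tempting but wrong calculation: multiply the two marginals.
naive = p_you * p_brother

# The general rule: P(A and B) = P(B) * P(A given B).
correct = p_brother * p_you_given_brother

print("naive:", naive)      # 1/16
print("correct:", correct)  # 1/10
```

Under that assumed conditional probability, the chance that both of you die of heart disease is 1 in 10 rather than 1 in 16. The exact number depends entirely on the assumed value. The point is only that the two calculations disagree whenever the conditional probability differs from the marginal one.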

So the probability of B given A is the same as the probability of B. What happens to A does not affect B. This is the definition of independence.
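One way to check this definition concretely is to enumerate every outcome of two fair dice and compare P of B given A with P of B directly. The sketch below does that, and then repeats the check for a pair of events that are not independent. The helper `prob` and the event names are illustrative choices, not anything from the video:

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely outcomes of rolling two fair dice.
outcomes = list(product(range(1, 7), repeat=2))

def prob(event):
    """Proportion of outcomes for which event(outcome) is true."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def first_is_one(o):
    return o[0] == 1

def second_is_one(o):
    return o[1] == 1

def total_at_most_three(o):
    return o[0] + o[1] <= 3

# Independent pair: A = first die shows 1, B = second die shows 1.
p_a = prob(first_is_one)
p_b = prob(second_is_one)
p_ab = prob(lambda o: first_is_one(o) and second_is_one(o))
p_b_given_a = p_ab / p_a
print(p_b_given_a == p_b)   # True: knowing A tells you nothing about B
print(p_ab == p_a * p_b)    # True: the product formula works here

# Dependent pair: A = first die shows 1, C = the two dice total at most 3.
p_c = prob(total_at_most_three)
p_ac = prob(lambda o: first_is_one(o) and total_at_most_three(o))
p_c_given_a = p_ac / p_a
print(p_c, p_c_given_a)     # 1/12 1/3: knowing A changes the odds of C
print(p_ac == p_a * p_c)    # False: the product formula fails here
```

Exact fractions are used instead of floats so the equality checks are not affected by rounding.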

Keep in mind, many introductory probability examples are given in very gamified contexts, things with dice and coins, where genuine independence holds, but all those examples can skew your intuitions. The irony is that some of the most interesting applications of probability, presumably the whole motivation for the kind of courses using these gamified examples, are only substantive when events aren't independent. Bayes' theorem, which measures exactly how much one variable depends on another, is a perfect example of this.