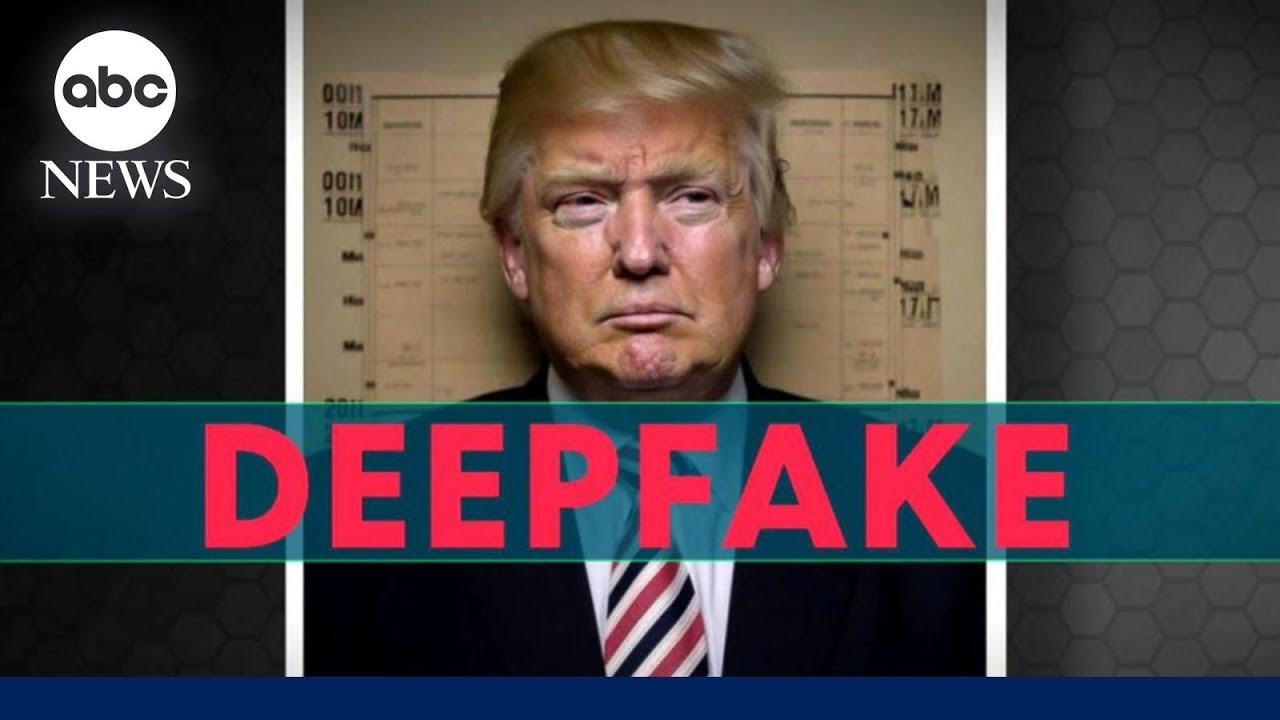

...to help families refinance their homes, to invest in things like high-tech manufacturing, clean energy, and the infrastructure that creates good new jobs.

You see, I would never say these things, at least not in a public address. But someone else would. Someone like Jordan Peele.

[Music]

It's sort of learning to recreate that person's face by looking at the thousands of images over and over and over. A lot more research than you would think would go into making a goofy video or something like that. Truly surprising for me. Yeah, I was just really surprised. I didn't do any after-touching on that video; that was just using the technology that was available from the machine learning side.

You don't even have to know what GitHub is. You don't have to know how to program in Python. None of that matters. You pay somebody 20 bucks and they'll create the fake for you. That's a bit of a game changer. You know, the everyday person, where the deepfake sex video emerges in a Google search of your name: for that person, it becomes almost impossible to debunk.

[Music]

While I don't think that's likely, I also don't think it's out of the question, and that is enough to keep me up at night. The margins are very, very thin. The last national election here in the U.S. was 80,000 votes in three states. You don't have to fool tens of millions of people.

I don't think a deepfake will become a big political issue during the course of the campaign.

I think about a well-placed rumor before an IPO. I think a lot of these things were problems already, and so it's unclear to me how much machine learning really adds to those problems. I find it very difficult to believe that there is something so uniquely devastating about video.

[Music]

Right now, what we are focusing on is developing forensic techniques to protect world leaders from deepfakes. We are particularly worried about the Donald Trumps, Theresa Mays, and Angela Merkels of the world, and how their likeness will be used to disrupt elections and incite potential violence in countries by creating fake content. There are some fun, entertaining, and interesting applications, but there is a weaponization of the technology, and I simply advocate that we as technologists acknowledge it and start thinking, not after the fact but before the fact, about how we can start putting some safeguards in place.