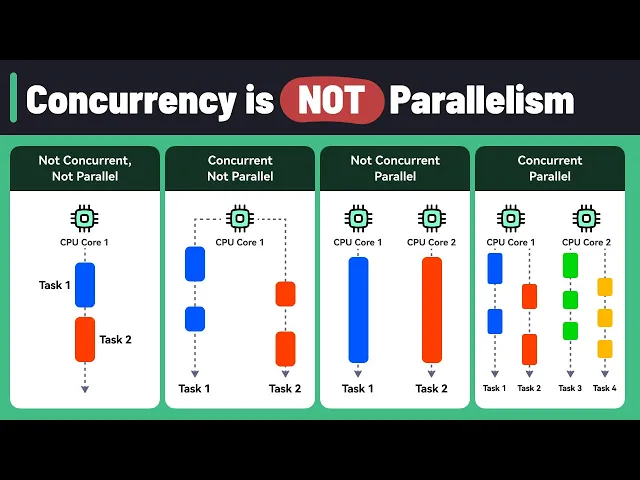

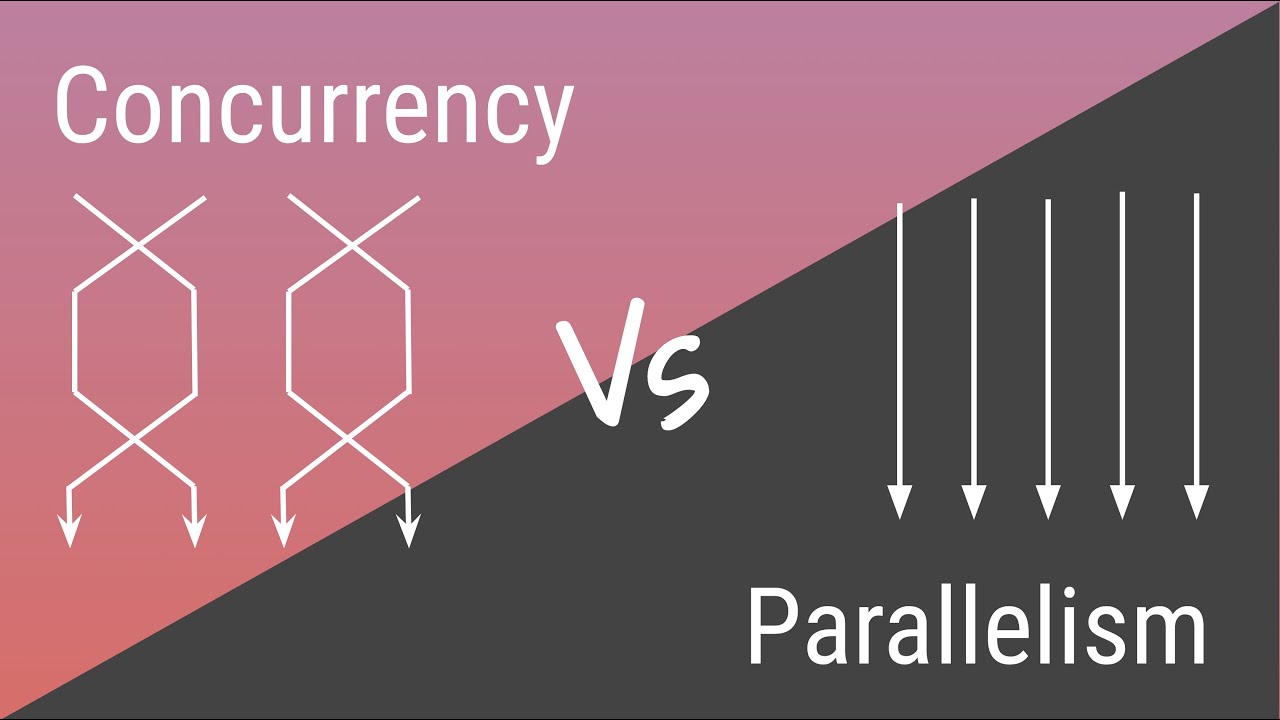

Today, we're exploring an important topic in system design: Concurrency vs. Parallelism. Understanding the difference between these concepts is essential for building efficient and responsive applications.

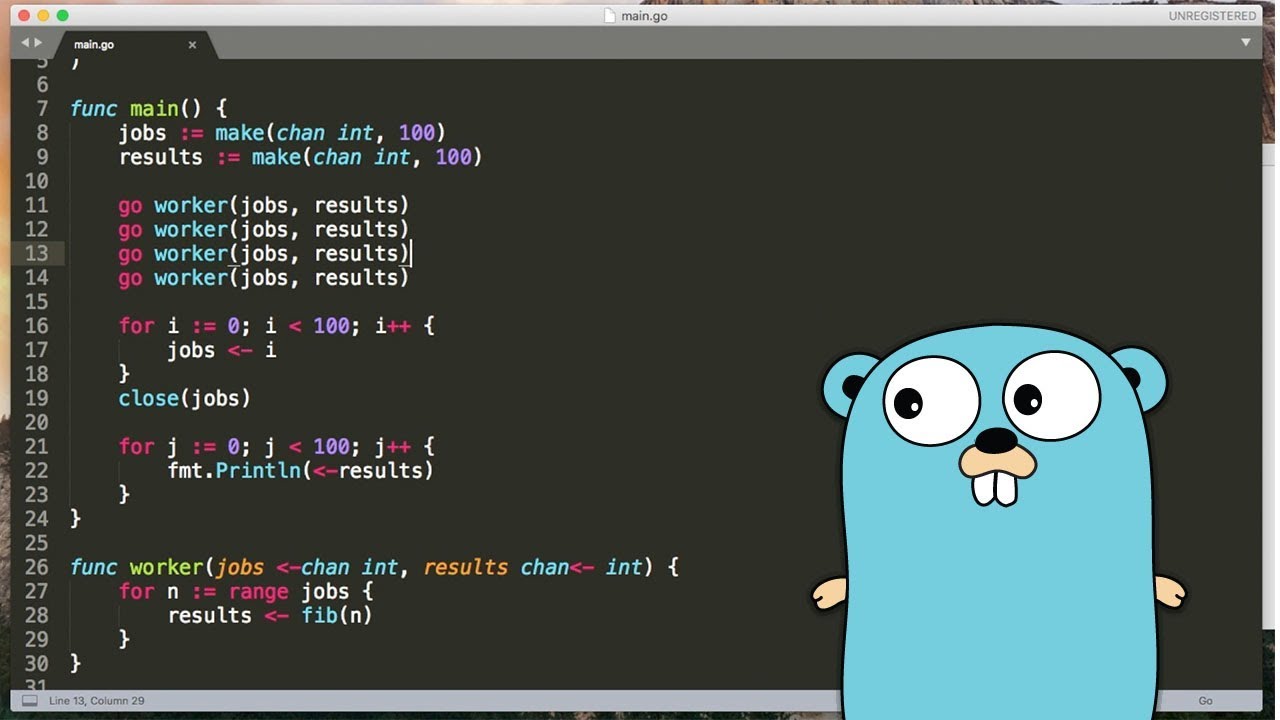

Let's start with concurrency. Imagine a program that handles multiple tasks, like processing user inputs, reading files, and making network requests. Concurrency allows your program to juggle these tasks efficiently, even on a single CPU core.

Here's how it works: the CPU rapidly switches between tasks, working on each one for a short time slice before moving on to the next. Each switch is called a context switch. Switching quickly enough creates the illusion that the tasks are progressing simultaneously, even though a single core is executing only one of them at any given instant. Think of it like a chef working on multiple dishes. The chef prepares one dish for a bit, then switches to another, and keeps alternating. No two dishes are worked on at the same instant, yet all of them make progress. However, context switching comes with overhead.
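Both the alternating and its cost show up in a minimal Python sketch of one core interleaving tasks. The sketch assumes cooperative switching: each task (a generator, standing in for one of the chef's dishes) does one slice of work and then yields control. An operating system instead preempts threads on a timer. The names `cook` and `run_round_robin` are hypothetical. The scheduler also counts how many times it resumes a task, because each resume is a hand-off that cooks nothing by itself, a toy version of switching overhead.

```python
from collections import deque


def cook(dish, steps, log):
    """Hypothetical task: one step of work per time slice, then yield control."""
    for step in range(1, steps + 1):
        log.append(f"{dish} {step}")  # one time slice of work on this dish
        yield  # pause here; `dish` and `step` survive until the next resume


def run_round_robin(tasks):
    """Resume tasks in turn until all finish; return how many resumes it took."""
    ready = deque(tasks)
    resumes = 0
    while ready:
        task = ready.popleft()
        resumes += 1
        try:
            next(task)  # run one slice of this task
        except StopIteration:
            continue  # the task has finished; do not requeue it
        ready.append(task)  # unfinished; go to the back of the queue
    return resumes


log = []
resumes = run_round_robin([cook("soup", 3, log), cook("salad", 2, log)])
print(log)      # ['soup 1', 'salad 1', 'soup 2', 'salad 2', 'soup 3']
print(resumes)  # 7: five slices of real work plus two resumes that only find a task is done
```

Each suspended generator keeps its local variables, loosely mirroring the task state that a real CPU has to save and restore on every switch.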

Each switch requires the CPU to save the state of the current task (for example, its register contents and program counter) and restore the state of the next one, and that bookkeeping takes time. Excessive context switching can therefore hurt performance.

Now, let's talk about parallelism. This is where multiple tasks are executed simultaneously on multiple CPU cores, with each core handling a different task independently at the same time. Imagine a kitchen with two chefs: one chops vegetables while the other cooks meat. Both tasks happen in parallel, and the meal is ready faster.

In system design, concurrency is great for tasks that involve waiting, such as I/O operations. While one task waits, others can make progress, which improves overall efficiency. For example, a web server can handle multiple requests concurrently, even on a single core.

In contrast, parallelism excels at heavy computations such as data analysis or graphics rendering. These workloads can be divided into smaller, independent subtasks and executed simultaneously on different cores, which can significantly shorten the total run time.

Let's look at some practical examples. Web applications use concurrency to handle user inputs, database queries, and background tasks smoothly, providing a responsive user experience.
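Here is a minimal sketch of that kind of I/O concurrency using Python's `asyncio`, assuming the waits are simulated: `asyncio.sleep` stands in for a database query or network call, and `handle_request` is a hypothetical handler. All three requests run on one thread and one event loop. While one request awaits, the loop runs the others, so the total time is close to the longest wait rather than the sum of all waits.

```python
import asyncio
import time


async def handle_request(name, io_delay):
    # Hypothetical handler: the sleep simulates waiting on I/O.
    # While this coroutine is suspended, the event loop runs the others.
    await asyncio.sleep(io_delay)
    return f"{name} done"


async def main():
    start = time.perf_counter()
    # gather() runs the coroutines concurrently and returns results in argument order.
    results = await asyncio.gather(
        handle_request("user input", 0.2),
        handle_request("db query", 0.3),
        handle_request("background job", 0.1),
    )
    elapsed = time.perf_counter() - start
    return results, elapsed


if __name__ == "__main__":
    results, elapsed = asyncio.run(main())
    print(results)
    print(f"elapsed: {elapsed:.2f}s")  # roughly 0.3 s (the longest wait), not the 0.6 s sum
```

If the handlers did heavy computation instead of waiting, they would block the event loop and gain nothing from this structure; that kind of work is what parallelism addresses.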

Machine learning leverages parallelism for training large models. By distributing the training across multiple cores or machines, you can significantly reduce computation time. Video rendering benefits from parallelism by processing multiple frames simultaneously across different cores, speeding up the rendering process.
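Frame-level parallelism of this kind can be sketched with Python's `concurrent.futures.ProcessPoolExecutor`. This is an illustration under assumptions, not a real renderer: `render_frame` is a hypothetical CPU-bound stand-in whose output depends only on the frame number, which is what lets the frames be processed independently. Worker processes, rather than threads, are used so that different frames can run on separate cores at the same time.

```python
import os
from concurrent.futures import ProcessPoolExecutor


def render_frame(frame_number):
    """Hypothetical stand-in for rendering one frame: pure CPU-bound arithmetic.

    The result depends only on frame_number, so frames are independent
    and can be computed in any order, on any core.
    """
    checksum = 0
    for i in range(200_000):
        checksum = (checksum + i * frame_number) % 1_000_003
    return frame_number, checksum


def render_all(frame_numbers, workers=None):
    """Render frames in a pool of worker processes (defaults to the available CPUs)."""
    with ProcessPoolExecutor(max_workers=workers) as pool:
        # map() hands frames to idle workers and yields results in input order.
        return list(pool.map(render_frame, frame_numbers))


if __name__ == "__main__":
    frames = render_all(range(8))
    print(f"rendered {len(frames)} frames; this machine reports {os.cpu_count()} CPUs")
```

Because `pool.map` returns results in input order, the frames come back in sequence even if workers finish out of order. The `if __name__ == "__main__":` guard matters: with the spawn start method (the default on Windows and macOS), worker processes re-import the main module.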

Scientific simulations use parallelism to model complex phenomena, such as weather patterns or molecular interactions, across multiple processors. Big data processing frameworks such as Hadoop and Spark use parallelism to process large datasets quickly by spreading the work across a cluster of machines.

It's important to note that while concurrency and parallelism are different concepts, they are closely related. Concurrency is about managing multiple tasks at once, while parallelism is about executing multiple tasks at once.

Concurrency can enable parallelism by structuring a program so that it can be executed in parallel efficiently. Using concurrency, we can break a program down into smaller, independent tasks, and these tasks can then be distributed across multiple CPU cores and executed simultaneously. So, while concurrency doesn't automatically lead to parallelism, it provides a foundation that makes parallelism easier to achieve. Programming languages with strong concurrency primitives, such as Go's goroutines and channels, make it simpler to write concurrent programs that can be parallelized efficiently.
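A minimal Python sketch of concurrency as a foundation for parallelism: the problem (counting primes below a limit, a hypothetical CPU-bound workload) is split once into independent chunks, and the same `total_primes` function runs those chunks on whichever executor it is given. The function names are illustrative. In the default CPython build, the global interpreter lock (GIL) lets only one thread execute Python bytecode at a time, so the thread pool runs the chunks concurrently but not in parallel, while the process pool can spread them across cores.

```python
from concurrent.futures import Executor, ProcessPoolExecutor, ThreadPoolExecutor


def count_primes(bounds):
    """Hypothetical CPU-bound subtask: count primes in [lo, hi) by trial division."""
    lo, hi = bounds
    count = 0
    for n in range(max(lo, 2), hi):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def split(limit, parts):
    """Break [0, limit) into independent chunks of roughly equal size."""
    step = max(1, -(-limit // parts))  # ceiling division, at least 1
    return [(i, min(i + step, limit)) for i in range(0, limit, step)]


def total_primes(executor: Executor, limit, parts=8):
    # The concurrent structure (independent chunks plus a final sum) is the
    # same no matter which executor runs it.
    return sum(executor.map(count_primes, split(limit, parts)))


if __name__ == "__main__":
    # Threads: the chunks are managed concurrently, but in default CPython
    # they do not execute Python code in parallel.
    with ThreadPoolExecutor() as pool:
        print(total_primes(pool, 50_000))
    # Processes: the same chunks can run in parallel on multiple cores.
    with ProcessPoolExecutor() as pool:
        print(total_primes(pool, 50_000))
```

Both runs print the same count. Only the process pool is expected to finish faster on a multi-core machine, and even then the speedup depends on the number of cores and on process start-up and data-transfer overhead.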

To sum up: concurrency is about efficiently managing multiple tasks to keep a program responsive, especially with I/O-bound operations, while parallelism boosts performance by running computation-heavy tasks simultaneously. By understanding how the two differ and interact, and by using concurrency to structure programs so that they can run in parallel, we can design more efficient systems and build better-performing applications.

If you like our videos, you might like our system design newsletter as well. It covers topics and trends in large-scale system design and is trusted by 500,000 readers.

Subscribe at blog.bytebytego.com.