Is it possible to steal a million-dollar app using AI? This app makes $24 million yearly by letting users track calories with photos of their food. But what if I told you it wasn't built by a massive team, but by a group of teenagers who are still in school?

Today, I'm going to attempt something bold. I will rebuild this entire app from scratch using nothing but AI tools. So, can today's AI tools really help regular people steal million-dollar apps?

Let's find out. In step one, we'll create our master plan. Step two, we'll build the app with AI using new tools like DeepSeek and MCP.

And step three, we'll deploy the app to the App Store. But first, time for an upgrade. This is the voice I will be using from now on.

And yes, this is my real voice. Step one, project setup with Brain Dumper.

First, head to braindumper.ai and choose Windsurf. This is a tool I've built to help create the project context, making it easier to build the app. Here, just brain dump everything you want to include in your app.

When you're done, click next. Select mobile app and then generate plan. Once it's done, you'll see this page with several download buttons.

First, download the context file. This contains your entire project plan. I've linked it with my referral code, which gives you 500 free credits.

Or hey, if you don't want the free credits, no worries. Then, you need to download Node.js. We need this to access the npm and npx commands, which, simply put, we use to install and run different packages.

And finally, the secret weapon for stealing professional UI, Mobbin. It's basically a massive library of successful mobile apps broken down screen by screen. Normally, if you wanted to study an app's design, you'd have to download it, click through every screen, and manually take screenshots.

And trust me, that process sucks. Now, let's search for calories to see if there are any apps to copy here. Okay, nice.

So, they have apps like Lifesum, MyFitnessPal, and MacroFactor. I personally like Lifesum's UI the most, so let's use that. Click on flows, and check this out.

We can see the entire user journey. Look at their onboarding. 31 screens.

They've clearly optimized this flow to convert users. Now, let's copy this entire flow. Click the copy button with the Figma logo, then download plug-in.

We'll be redirected to Figma. Click open in, then choose a new Figma file.

Save it. Open Mobbin again. Click inside this box and paste with Ctrl+V.

Nice. Step two is building the app. Now, open Windsurf.

Create a new project folder and drag in your context file from Brain Dumper. And then let's add some rules to make our build process smoother. Click the Windsurf settings at the bottom right corner, then memories and rules, and then manage.

Click edit workspace rules. I've prepared the Windsurf rules in the video description. Copy and paste those in.

These rules tell Windsurf exactly how it should use Expo to build mobile apps. Now press Ctrl+L to open Cascade. This is where we talk directly to the AI.

And let's tell it to set up the mobile app from the context file using this command: npx create-expo-app@latest -e with-router. I'll provide the UI and onboarding user flow from Figma after the project is set up.

Click accept when it asks for permission. And while this runs, let me explain what Expo router is. This picture from Expo's blog explains it easily.

It just connects all the different screens in our app using a file-based routing system. Now, the AI wants to install the required libraries, so just accept this, too. For some reason, Cascade always gets the syntax wrong when using multiple commands in one line, but it's not a problem since it corrects itself immediately.
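To make the file-based routing idea a bit more concrete, here is a small sketch of how a file path inside the app folder can translate into a route. This is a simplified illustration, not Expo Router's actual code, and the file names used with it (like onboarding/goal.tsx) are just assumed examples.

```typescript
// Simplified illustration of file-based routing (NOT Expo Router's real implementation).
// Assumption: all screen files live under an "app/" folder.
function fileToRoute(filePath: string): string | null {
  // Drop the leading "app/" folder and the file extension, then split into segments.
  const segments = filePath
    .replace(/^app\//, "")
    .replace(/\.(tsx|ts|jsx|js)$/, "")
    .split("/");

  const last = segments[segments.length - 1];

  // _layout files wrap other screens; they are not routes themselves.
  if (last === "_layout") return null;

  // An index file stands for its parent folder.
  if (last === "index") segments.pop();

  // Folders in parentheses, like (tabs), group files without adding a URL segment.
  const urlSegments = segments.filter(
    (s) => !(s.startsWith("(") && s.endsWith(")"))
  );

  return "/" + urlSegments.join("/");
}
```

With this sketch, app/index.tsx maps to /, app/onboarding/goal.tsx maps to /onboarding/goal, and app/(tabs)/home.tsx maps to /home. The point is simply that the folder structure is the navigation structure.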

Now, Cascade wants to install more packages like Expo Camera for taking food pictures, Image Picker for uploads, and Expo Notifications. Let's accept. Now, it's creating our app structure: folders for onboarding, authentication, home, components, and pages.

Accept each folder creation one by one. We've got a few errors to fix before we can run the app. This is normal in development, but with AI, we can solve these in seconds instead of hours on Stack Overflow.

Just click send to Cascade, and the AI will understand the issues and fix them automatically. Great. Now, let's run the app with npx expo start and see what we've got.

In your terminal, you'll see a QR code. Here's what to do next. Grab your phone, download the Expo Go app, and scan the QR code from your camera.

Look at this. We already have the onboarding screens. Let's go through them.

We've got the goal selection, gender input, height, weight, and activity level. All working perfectly, and even the paywall screen is built. Let's continue to the dashboard.

For now, the main dashboard shows placeholder data. Even the profile screen looks good, too. Let's try the food capture feature.

Nice. It's already asking for camera permissions. But here, we got an error.

Let's head back to the terminal in Windsurf. Copy the error and ask Cascade to fix it. Refresh the app by pressing R in the terminal.

Or you can actually shake your phone and click reload. Perfect. Now I can take a picture, analyze it, and get nutrition data.

Even though it's just placeholder data until we connect the AI functionality. Now let's add a real backend with Supabase to store all our user data and food logs. Let's ask Cascade to start setting up the Supabase backend.

Starting with the user authentication. When it's done, go to supabase.com, sign in, and create a new project.

Give it a name, set the database password, choose your region, and create. Now just wait about 30 seconds until it's set up. Once Supabase is done, go to project settings, then API.

Copy the URL and API key. Then paste them into our .env file in Windsurf. Now we need to set up our database tables.
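As a rough sketch of how the app might read those two values back out of the .env file: Expo makes variables prefixed with EXPO_PUBLIC_ available to app code, but the exact variable names below are my assumption, not necessarily what Cascade generates.

```typescript
// Hypothetical shape of the Supabase settings the app needs.
interface SupabaseConfig {
  url: string;
  anonKey: string;
}

// Reads the settings from an environment object (in an Expo app this would be process.env).
// The variable names are assumptions; use whatever names are in your own .env file.
function readSupabaseConfig(
  env: Record<string, string | undefined>
): SupabaseConfig {
  const url = env["EXPO_PUBLIC_SUPABASE_URL"];
  const anonKey = env["EXPO_PUBLIC_SUPABASE_ANON_KEY"];

  // Fail early with a clear message instead of a confusing network error later.
  if (!url || !anonKey) {
    throw new Error("Missing Supabase URL or API key in the .env file");
  }
  return { url, anonKey };
}
```

Failing early like this makes a missing or misspelled key obvious the moment the app starts, instead of showing up later as a failed login.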

Let's ask Cascade to generate the SQL schema for user profiles, meal tracking, and subscriptions. Copy this code. Go to the Supabase SQL editor, paste it in, and click run.

Now, if we check the table editor, we can see all our tables: daily goals, food items, meals, profiles, and subscriptions. Let's now test our database connection in the app. Create an account and log in.

And it works. Now check Supabase. There's our user in the database.
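The generated schema itself isn't shown here, so as a rough idea of how the meals and food items tables relate, here is a sketch where each food item row points back to a meal and the meal's totals are just the sum of its items. The column names are hypothetical.

```typescript
// Hypothetical row shape for the food items table; real column names may differ.
interface FoodItemRow {
  meal_id: string; // which meal this item belongs to
  name: string;
  calories: number;
  protein_g: number;
}

interface MealTotals {
  calories: number;
  protein_g: number;
}

// Adds up the nutrition values of every food item that belongs to one meal.
function mealTotals(items: FoodItemRow[], mealId: string): MealTotals {
  return items
    .filter((item) => item.meal_id === mealId)
    .reduce<MealTotals>(
      (total, item) => ({
        calories: total.calories + item.calories,
        protein_g: total.protein_g + item.protein_g,
      }),
      { calories: 0, protein_g: 0 }
    );
}
```

Storing the individual items and computing the totals from them means a single scanned photo can contain several foods without duplicating data in the meals table.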

Our profile information is saved in the profiles table as well. Let's scan a meal and save it. Perfect.

Let's check the database to confirm everything saved properly. Looking at the meals table. Perfect.

Our entry is there. And in the food items table, we can see all individual items properly stored as well. Now to the exciting part.

Setting up the Google Cloud Vision API to make our AI food scanning feature actually work. First, we need an API key. Go to console.cloud.google.com and create an account.

Click my first project, then new project. Name your project and hit create. Once created, search for API and select APIs and services.

Then, search for Cloud Vision and select the Cloud Vision API. Finally, click enable. Now just navigate to the credentials page, click create credentials, and choose API key.

And just like that, the API key is made. Now copy it and close out of this dialog window. If you now edit this API key, we can add a good name for it.

I'll name it calorie snap. Hit save. And here you can actually reveal the key if you need to see it later.

With the API key copied, navigate back to Windsurf and create a completely new chat to start clean. Here we need to let Cascade know that we are using the Google Cloud Vision API for the AI image scanning. So let's just prompt this.

Let's use Google Cloud Vision API as the AI integration of the food scanning. Help me set this up. Now let Windsurf generate the code for us.
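The code Windsurf generates isn't shown, but as a minimal sketch of what a Cloud Vision label detection call involves: you POST a base64 image to the images:annotate endpoint and get back a list of labels with confidence scores. The helper names and the 0.7 score cutoff below are my own assumptions. Note that Vision only tells you what is in the picture; turning a label like an apple into calories needs a separate nutrition lookup that isn't covered here.

```typescript
const VISION_ENDPOINT = "https://vision.googleapis.com/v1/images:annotate";

// Builds the URL and request options for a label detection call.
// Hypothetical helper: the result would be passed to fetch(url, init).
function buildVisionRequest(base64Image: string, apiKey: string) {
  return {
    url: `${VISION_ENDPOINT}?key=${encodeURIComponent(apiKey)}`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({
        requests: [
          {
            image: { content: base64Image },
            features: [{ type: "LABEL_DETECTION", maxResults: 5 }],
          },
        ],
      }),
    },
  };
}

// Only the part of the response this sketch uses.
interface VisionResponse {
  responses: {
    labelAnnotations?: { description: string; score: number }[];
  }[];
}

// Keeps the labels Vision is reasonably confident about (cutoff is an assumption).
function extractLabels(res: VisionResponse, minScore = 0.7): string[] {
  const annotations = res.responses[0]?.labelAnnotations ?? [];
  return annotations
    .filter((a) => a.score >= minScore)
    .map((a) => a.description);
}
```

In the app, the photo from the camera would be converted to base64, sent with this request, and the returned labels handed to whatever maps food names to nutrition data.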

When it's done, we need to insert the API key in the .env file. So open the .env file, add the placeholder for the Google Cloud API key, and paste in the actual API key there. Now open the terminal and run npx expo start -c to run the app.

Scan the QR code again. I've tested our app by uploading an image of an apple and it correctly showed no protein and approximately 100 calories, confirming that the AI implementation is working. We now have a complete MVP up and running in under 10 minutes.

But let's now focus on improving the UI and the user experience of the app. Earlier we exported all these designs from Mobbin to Figma. But now let's quickly create an even better file with only our favorite screenshots.

I want to take inspiration from these three apps in Mobbin: Lifesum, MyFitnessPal, and MacroFactor. I'll spend the next 15 minutes going through all three apps in Mobbin and saving the best pages, so let me speed this part up.

Let's navigate to the saved section and open the collection. As you can see, I've saved 84 pages in here. What you can do is download all the screens you've saved.

Or, if we mark the first screen and then hold Shift and mark the last screen, we can copy all the screens over to Figma like we did before. I'm only adding half of the screens first because 84 images is a lot to process at a time.

Now click download plugin and it will redirect over to Figma where we can create a new file. Hit run and then click inside this box and press Ctrl+V to paste in the designs from Mobbin. I'll need to continue by adding the remaining 42 screens.

I'll just speed up this part as well. All right, nice. So here we now have a file with all these screens.

Let's now use these designs as inspiration using Windsurf's new built-in Figma MCP. Could you explain what MCP actually is so I understand it? Yes, of course.

So AI coding assistants like Windsurf are limited to just generating code. If you need to interact with any web pages, databases, or APIs, you're on your own. But that's where MCP, or Model Context Protocol, comes in.

All it is is a standard that lets AI models communicate with apps and tools more easily. Think of MCP as a universal translator between AI assistants and digital tools. It creates a shared language that helps AI understand and control services in the real world.
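To show what that shared language looks like in practice: MCP messages are JSON-RPC 2.0, and when an AI assistant wants a tool to do something it sends a tools/call request with the tool's name and arguments. Here is a small sketch of building such a message. The tool name and arguments in the usage note are made up for illustration, not the real names exposed by any Figma server.

```typescript
// Shape of a JSON-RPC 2.0 request, which is the message format MCP uses.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Builds the request an MCP client sends to ask a server to run one of its tools.
function buildToolCall(
  id: number,
  toolName: string,
  args: Record<string, unknown>
): JsonRpcRequest {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: toolName, arguments: args },
  };
}
```

For example, buildToolCall(1, "get_file", { fileKey: "abc" }) produces a message asking a server to run a hypothetical get_file tool. Because every server speaks this same format, the assistant doesn't need custom code for each service it connects to.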

Now let's continue. So if we now go back to Windsurf and click on Windsurf settings in the bottom right corner and then click on advanced settings under Cascade, we can see the line for MCP. Click on add server.

And here we can see that Windsurf has already added integrations with quite a few companies, like Stripe, Slack, and Figma. If we now click on add server for Figma, then we need to add our Figma API key. So, navigate back to Figma and click on this button in the top left corner, help and account, and click on account settings.

Then, navigate to the security tab. And if you now scroll down to personal access tokens, this is where we can create API keys in Figma. Click on generate new token and I'll name it calorie snap.

Back in Windsurf, let's paste in this API key and save configuration. Now we have connected Windsurf with Figma using their MCP. And this means that Windsurf can see all the screens.

So let's prompt Cascade to look at the Figma file with the 84 mobile app screens and use them as design inspiration to improve the UI and UX of our current app. Now the AI will look through all the screen designs from the file we've made and implement a combination of the three app designs in our app. After some time, the AI is finally done examining the designs and it lists all the improvements.

Now, let's ask it to continue by improving specifically the dashboard page. I'll speed up this process. Okay, now it's done.

Let's ask it to improve the other pages as well. This final prompt actually took almost 10 minutes, so this has to be good. Stop the running local server by pressing Ctrl+C.

And now, let's rerun the app with npx expo start -c to open the project freshly. Scan the QR code to open the app. And okay, this looks like it's made by a professional designer.

And all this with just one prompt. AI is getting crazy. Just look at this.

We've actually built this whole app with the food scanning feature working perfectly. Supabase is handling all our data and user accounts. And honestly, the UI is looking super clean.

Now for the exciting part, let's launch this app onto the App Store. And trust me, it's way easier than you probably think. We've been playing with it in Expo Go on our phones, but now it's time to push it to production so your friends and potential users can actually download and test it themselves.

I'll jump over to my MacBook for this part, but of course this is also possible to do on Windows. First, we need an Expo EAS account. Head over to expo.dev/signup and create one here. You'll also need an Apple developer account, which costs $99 per year.

Go to developer.apple.com, click on account, and create yours. Now, back in Windsurf, let's type in eas login.

Here you need to log into the Expo account you just made. This is to create the project. Would you like to create a project?

Hit Y for yes. Nice. Now, the project is made.

Now, let's do the final command: npx testflight. This one command will build the entire app and submit it.

It needs to install EAS. Choose yes. Now we need to log in to the Apple account.

After you've logged in, hit yes on all the next questions. Then it continues to run all the commands for you. And now it's building the app.

This takes a few minutes. So just wait until it's done. When it's done building the app, it will also submit it.

Okay. Now let's navigate to appstoreconnect.apple.com. Go to apps. And here it is.

Open it and navigate to TestFlight. Nice. Let's now create a link for our friends to test the app.

Press the blue button under external testing and create a new group. Then we need to add the build. So click here, select the build, and press add.

Now open the group and create a public link. Confirm. You can copy this link and give it to your friends to try out the app.

And there it is, a calorie counter app built completely from scratch in under an hour. Now let's quickly put our creation side by side with those copycat apps cluttering the App Store. Ours actually looks just as good as many of those apps.

But here's the real question. Why is Cal AI so successful when there are dozens of similar apps already out there? The honest answer is that they've mastered their marketing strategy.

I'll dive deeper into that in future videos. But think about this. When Cal AI first launched, it wasn't a fully polished app.

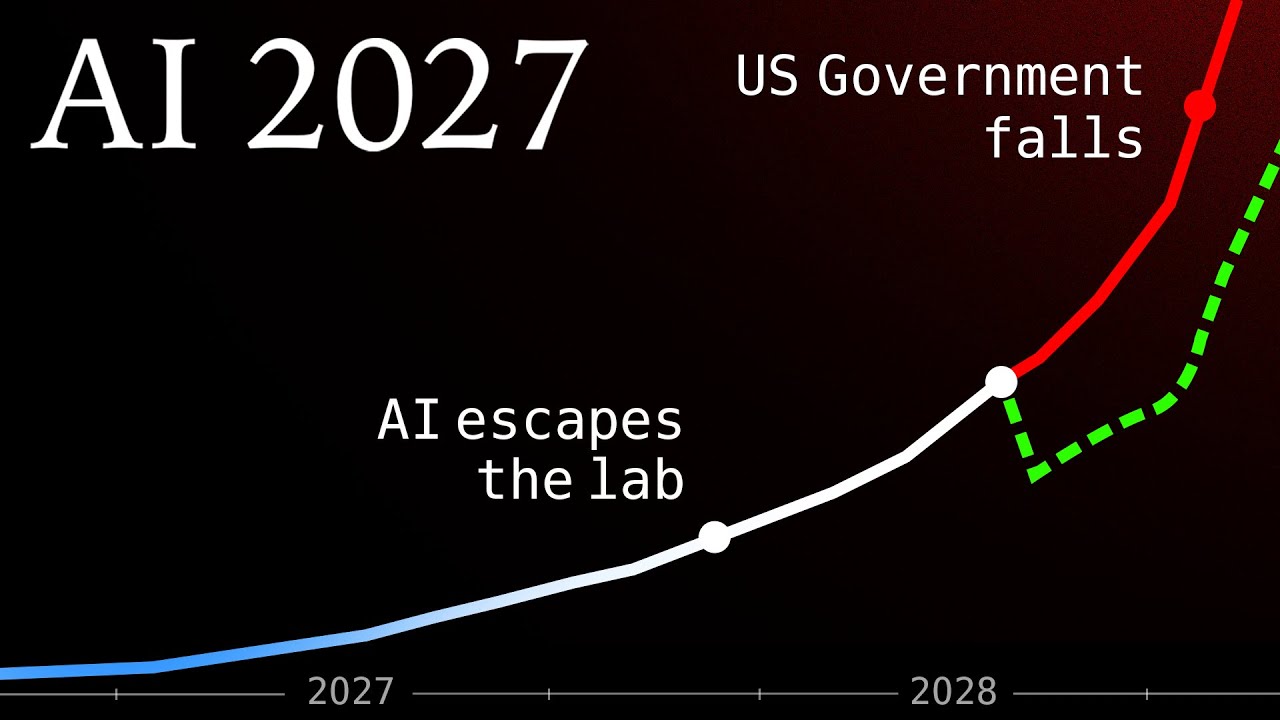

It started as a simple ChatGPT wrapper, a basic minimum viable product designed purely to test if people even wanted it. Cal AI turned a simple ChatGPT wrapper into a multi-million dollar business. Today's AI tools give you everything you need to rapidly create powerful MVPs to validate your own ideas quickly.

And of course, this isn't a complete app yet, but it's a good MVP to test the market built in less than an hour.