thank you alex and uh yeah great to be part of this and i hope you had an exciting course uh until now and got a good foundation right on different aspects of deep learning as well as the applications um so as you can see i kept a general title because you know the aspect of question is you know is deep learning mostly about data is it about compute or is it about also the algorithms right so and how and ultimately how do these three come together and so what i'll show you is what is the

role of some principled design of ai algorithms and when i say challenging domains i'll be focusing on ai for science which as alex just said at caltech kind of we have the ai for science initiative to enable collaborations across the campus and have domain experts work closely with the ai experts and to do that right how do we build that common language and foundation right and why isn't it just an application a straightforward application of the current ai algorithms what is the need to develop new ones right and how much of the domain specificity

should we have versus having uh domain independent frameworks and all this of course right the answer is it depends but the main aspect that makes it challenging that i'll keep emphasizing i think throughout this talk is the need for extrapolation or zero shot generalization so you need to be able to make predictions on samples that look very different from your training data right and many times you may not even have the supervision for instance if you are asking about the activity of the earth deep underground you haven't observed this so having the ability to do

unsupervised learning is important having the ability to extrapolate and go beyond the training domain is important and so that means it cannot be purely data driven right you have to take into account the domain priors the domain constraints and laws the physical laws and the question is how do we then bring that together in an algorithm design and so you'll see some of that in this talk here yeah and uh the question is this is all great as an intellectual pursuit but is there a need right and the to me the need is huge because

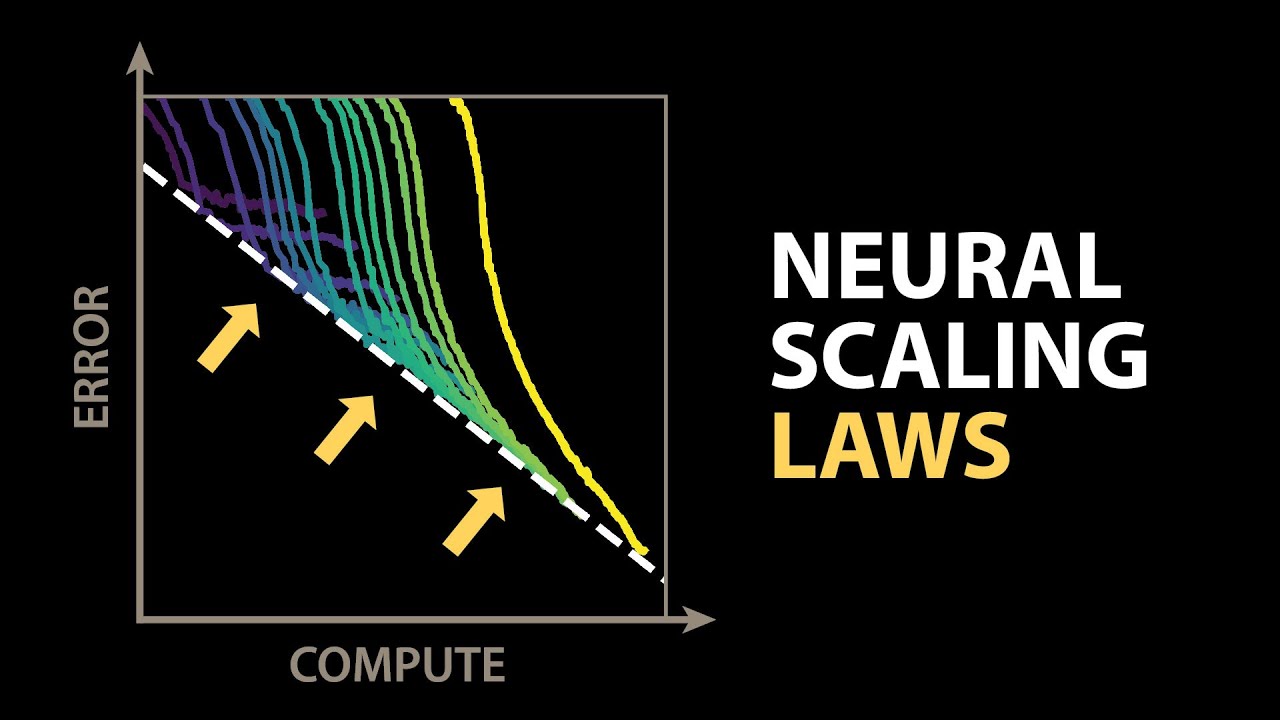

if you look at uh scientific computing and so many applications in the sciences right the requirement for computing is growing exponentially you know now with the pandemic you know the need to understand uh right the ability to develop new drugs vaccines and the evolution of new viruses is so important and this is a highly multi-scale problem right we can go all the way to the quantum level and ask you know how precisely can i do the quantum calculations but this would not be possible at the scale of millions or billions of atoms right so you

cannot like do these fine scale calculations right especially if you're doing them through numerical methods and you cannot then scale up to uh millions or billions of atoms which is necessary to model the real world and similarly like if you want to tackle climate change and you know precisely predict climate change for the next uh century uh we need to also be able to do that at fine scale right so saying that the planet is going to warm up by one and a half or two degrees centigrade is of course it's disturbing but what is

even more disturbing is asking what would happen to specific regions in the world right we talk about the global south or you know the middle east like india like places that uh you know may be severely affected by climate change and so you could go even further to a very fine spatial resolution and you want to ask what is the climate risk here and then how do we mitigate that and so this starts to then require lots of computing uh capabilities uh so you know there's a if you like look at the current numerical methods

and you ask i want to do this let's say at one kilometer scale right so i want the resolution to be at the one kilometer level uh and then i wanna look at the predictions for just the next decade that alone would take ten to the eleven more computing than what we have today and similarly you know we talked about understanding molecular properties if we try to compute the schrodinger equation which is right the fundamental equation uh so we know right that characterizes everything about the molecule but even to do that for

a 100 atom molecule it would take more than the age of the universe right the time that's needed in the current supercomputers so that's the aspect that no matter how much computing we have we will be needing more and so like the hypothesis right that at nvidia we're thinking about is yes gpus will give you right some amount of scaling and then you can build supercomputers and we can have the scale up and out but you need machine learning to have a 1000x or even a million x speed up right on top of that and then

you could go all the way up to 10 to the 9 or further to you know close that gap so machine learning and ai becomes really critical to be able to speed up scientific simulations and also to be data driven right so we have you know lots of measurements of the planet uh in terms of the weather over the last few decades but then we have to extrapolate further but we do have the data and we are also you know collecting data through satellites right as we go along so how do we take the data

along with the physical laws let's say fluid dynamics right of how clouds move how clouds form so you need to like take all that into account together and same with you know discovering new drugs we have data on the current drugs and we have a lot of the information available on those properties right so how do we use that data along with the um physical properties right whether it's at the level of like classical mechanics or quantum mechanics right so and how how how do we make the decision of at which level at which precision

do we need to make ultimately discoveries right either discovering new drugs or coming up with the precise characterization of climate change and to do that with the right uncertainty quantification because we need to understand right like kind of it's not we're not going to be able to precisely predict what the climate is going to be over the next decade let alone the next century but can we predict also the error bars right so we need that precise error bars and all of this is a deep challenge for the current deep learning methods right because we

know deep learning tends to result in models that are overconfident when they're wrong and we've seen that you know the famous cases are like the gender shades study where it was shown on darker colored skin and especially on women right those models are wrong but they're also very overconfident when they're wrong right so that you cannot just directly apply to the climate case because you know trillions of dollars are on the line in terms of designing the policies based on those uncertainties that we get in the model and so we cannot

abandon just the current uh numerical methods and say let's do purely deep learning um and in the case of uh of course right drug discovery right the aspect is the space is so vast we can't possibly search through the whole space so how do we then make right like the relevant uh you know design choices on where to explore and as well as there are so many other aspects right is this drug synthesizable you know is that going to be cheap to synthesize so there's so many other aspects beyond just the search space uh so

yeah so i think i've convinced you enough that these are really hard problems to solve the question is where do we get started and how do we make headway in solving them and so what we'll see is uh in you know what i want to cover in this lecture is right if you think about predicting climate change and i emphasize the fine scale phenomena right so this is something that's well known in fluid dynamics that you can't just take measurements at the coarse scale and try to predict with that because the fine scale features are

the ones that are driving uh the phenomena right so you will be wrong in your predictions if you do it only at the coarse scale and so then the question is how do we design machine learning methods that can capture these fine scale phenomena and that don't overfit to one resolution right because we have this underlying continuous space on which the fluid moves if we discretize and take only a few measurements and we fit that to a deep learning model it uh may be doing the you know it may be overfitting to the wrong

just those discrete points and not the underlying continuous phenomena so we'll develop methods that can capture that um underlying phenomenon in a resolution invariant manner and the other aspect we'll see for molecular uh modeling uh we'll look at the symmetry aspect because if you rotate the molecule in 3d right the result should be equivariant so also how to capture that into our deep learning models uh we'll see that uh in the later part of the talk so that's hopefully an overview in terms of the challenges and also some of the ways

we can overcome that and so this is just saying that you know there is right lots of also data available so that's a good opportunity and we can now have large scale models and we've seen that in the language realm right like uh including now what's not shown here the nvidia 530 billion model uh with 530 billion parameters and with that we've seen language understanding have a huge quantum leap and so that also shows that if you try to capture complex phenomena like the uh earth's um weather or you know ultimately the climate or molecular

modeling we would also need big models and we have now better data and more data available so all this will help us contribute to you know getting good impact in the end and the other aspect right is like also what we're seeing is bigger and bigger supercomputers and with ai and machine learning the benefit is we don't have to worry about high precision computing right so with traditional high performance computing you needed to do that in very high precision right 64-bit floating point uh computations whereas now with ai computing we could do it in

32 or even 16 or even eight right so we are kind of getting to lower and lower bits and also mixed precision so we have more flexibility on how we choose that precision and so that's another aspect that is deeply beneficial so okay so let me now get into some aspect of algorithmic design uh i mentioned uh briefly just a few minutes ago that if you look at standard neural networks right they are fixed to a given resolution so they expect image in a certain resolution right a certain size image and also the output whatever

task you're doing is also a fixed size now if you're doing segmentation it would be the same size as the image so why is this not enough because you know if you are looking to solve fluid flow for instance this is like kind of airfoils right so you can uh you know with standard numerical methods what you do is you decide what the mesh should be right and depending on what task you want you may want a different mesh right and you may want a different resolution and so we want methods that can remain

invariant across these different resolutions and that's because what we have is an underlying continuous phenomenon right and we are discretizing and only sampling in some points but we don't want to over fit to only predicting on those points right we want to be predicting on other points other than what we've seen during training and also different um initial conditions boundary conditions right so that's what uh when we're solving partial differential equations we need all this flexibility so if you're saying we want to replace current partial differential equation solvers we cannot just straightforward use our standard

neural networks and so that's what we want to then formulate what does it mean to be learning such a pde solver because if you're only solving one instance of a pde right as a standard solver what it does is it looks at what is the solution at different query points and numerical methods will do that by discretizing in space and time right at an appropriate resolution like it has to be fine enough resolution and then you numerically compute the solution on the other hand we want to learn a solver for a family of partial differential

equations let's say like fluid flow right so i want to be learning to predict like uh say the velocity or the vorticity like all these properties as the fluid is flowing and to do that i need to be able to learn what happens under different initial conditions so different initial let's say velocities or different initial right and boundary conditions right like what is the boundary of this space so so i need to be given this right so if i tell you what the initial and boundary conditions are i need to be able to find what

the solution is and so if we have multiple training points we can then train to solve for a new point right so if i now give you a new set of initial and boundary conditions i want to ask what the solution is and i could potentially be asking it at different query points right at different resolutions so that's the problem set up any questions here right so hope that's clear so the main difference from standard um supervised learning right that you're familiar with say images is here right it's not just fixed to one resolution right

so you could have like different query points at different resolutions in different samples and different during training versus test samples and so now the question is how do we design a framework that does not fit to one resolution that can work across different resolutions and we can think of that by thinking of it as learning in infinite dimensions because if you learn from this function space of initial and boundary conditions to the solution function space then you can resolve at any resolution yeah so how do we go about building that in a principled way so

to do that just look at a standard neural network right let's say um an mlp and so what that has is right a linear function which is matrix multiplication and then um right so on top of that some non-linearity so you're taking linear processing and on top of that adding a non-linear function and so with this you have good expressivity right because if you only did linear processing that would be limiting right that's not a very expressive model that can't fit to say complex data like images but if you now add non-linearity right you're getting

this expressive network and same with convolutional neural networks right you have a linear function you combine it with non-linearity so this is the basic setup and we can ask can we mimic the same but now the difference is instead of assuming the input to be in fixed finite dimensions in general we can have like an input that's infinite dimensional right so that can be now uh a continuous set on which we define the initial or boundary conditions so how do we extend this to that scenario so in that case right we can still have the

same notion that we will have a linear you know operator so here it's an operator because it's now in infinite dimensions potentially right so that's the only detail but we can ask it's still linear right and compose it with non-linearity so we can still keep the same principle but the question is what would be now some practical design of what these linear operators are and so for this we'll take inspiration from solving linear partial differential equations i don't know how many of you have taken a pde class right if not not to worry i'll give

you some quick insights here so if you want to solve a linear partial differential equation uh you know the most popular example is heat diffusion so you have like a heat source and then you want to see how it diffuses in space right so that can be described as a linear partial differential equation system and the way you solve that is you know there is what is known as the green's function so this says how it's going to propagate in space right like so at each point uh what is this kernel function and then you

integrate over it and that's how you get the temperature at any point so intuitively what this is doing is uh convolving with this green's function and doing that at every point to get the solution which is the temperature at every point so it's saying how the heat will diffuse right you can write it as the propagation of that heat as this integration or convolution operation and so this is linear and this is also now not dependent on the resolution because this is continuous so you can do that now at any point and query a new

point and get the answer right so it's not fixed to finite dimensions and so this is conceptually right like a way to incorporate now a linear operator uh but then if you only did this right if you only did this operation that would only solve linear partial differential equations but on the other hand we are adding non-linearity and we are going to compose that over several layers so that now allows us to even solve non-linear pdes or any general system right so the idea is we'll be now learning how to do this integration right so
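
A minimal sketch of the Green's function idea described above, in Python with NumPy. As an assumption for illustration it uses the 1D steady-state heat (Poisson) problem -u'' = f on [0, 1] with zero boundary values, whose Green's function is known in closed form; the function names and the quadrature size are hypothetical choices, not from the talk. The point it illustrates is that the integral can be evaluated at any set of query points, coarse or fine.

```python
import numpy as np

def greens_poisson(x, y):
    # Green's function of -u'' = f on [0, 1] with u(0) = u(1) = 0
    return np.where(x <= y, x * (1.0 - y), y * (1.0 - x))

def solve_by_kernel_integral(f, query_x, n_quad):
    # u(x) = integral over [0, 1] of G(x, y) f(y) dy, via the midpoint rule
    y = (np.arange(n_quad) + 0.5) / n_quad
    kernel = greens_poisson(query_x[:, None], y[None, :])
    return kernel @ f(y) / n_quad

def source(y):
    # constant heat source f(y) = 1
    return np.ones_like(y)

def exact(x):
    # closed-form solution of -u'' = 1 with zero boundary values
    return 0.5 * x * (1.0 - x)

# The same integral operator is queried on a coarse and a fine grid.
coarse_x = np.linspace(0.0, 1.0, 9)
fine_x = np.linspace(0.0, 1.0, 257)
u_coarse = solve_by_kernel_integral(source, coarse_x, n_quad=2000)
u_fine = solve_by_kernel_integral(source, fine_x, n_quad=2000)
err_coarse = np.max(np.abs(u_coarse - exact(coarse_x)))
err_fine = np.max(np.abs(u_fine - exact(fine_x)))
```

Nothing in the operator is tied to the query grid, which is the property the neural operator keeps when the fixed kernel is replaced by a learned one.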

these we will now uh learn over several layers and uh get uh a now what we call a neural operator that can learn in infinite dimensions so of course then the question is how do we come up with the practical architecture that would learn this right so that would learn to do this kind of global convolution and continuous convolution so here we'll do some signal processing 101. again uh you know if you haven't done the course or don't remember here's again a quick primer right so uh you know the idea is if you try to

do convolution in the spatial domain it's much more efficient to do it by transforming it to the fourier domain right or the frequency space and so convolution in the right spatial domain you can change it to multiplication in the frequency domain so now you can multiply in the frequency domain and take the inverse fourier transform and then you solve this convolution operation and the other benefit of using fourier transform is it's global right so if you did a standard convolutional neural network the filters are small so the receptive field is small right even if you

did over a few layers and so you're only capturing local phenomenon which is fine for natural images because you're only looking at edges that's all local right but uh for um especially fluid flow and all these partial differential equations there's lots of global correlations and doing that through the fourier transform can capture that so in the frequency domain you can capture all these global correlations effectively and so with this insight right sorry here that i wanna emphasize is we're gonna ultimately come up with an architecture that's you know very simple to implement because in each

layer what we'll do is we'll take fourier transform so we'll transform our signal to the fourier space right or the frequency domain and learn weights on how to pick like a across different frequencies which one should i update which one should i down weight and so i'm going to learn these weights and also only limit to the low frequencies when i'm doing the inverse fourier transform so this is more of a regularization um of course if i did only one layer of this this highly limits expressivity right because i'm taking away all the high frequency

content which is not good but i'm like now adding non-linearity and i'm doing several layers of it and so that's why it's okay and so this is now a hyperparameter of how much should i filter out right which is like a regularization and it makes it stable to train so that's an additional detail but at a high level what we are doing is we're doing the training by learning weights in the frequency domain right and then we're adding non-linear transforms in between that to give it expressivity so
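
The layer just described can be sketched in a few lines of NumPy. This is an illustrative sketch under stated assumptions, not the speaker's implementation: the input is assumed to be sampled on a uniform periodic 1D grid, the weights are random here where a real model would learn them, ReLU stands in for the unspecified non-linearity, and the names `fourier_layer`, `weights` and `modes` are hypothetical.

```python
import numpy as np

def fourier_layer(v, weights, modes):
    # v: real array of shape (n_points, c_in) sampled on a uniform periodic grid
    # weights: complex array of shape (modes, c_in, c_out), one matrix per kept frequency
    n = v.shape[0]
    v_hat = np.fft.rfft(v, axis=0)  # to the frequency domain
    out_hat = np.zeros((v_hat.shape[0], weights.shape[2]), dtype=complex)
    # keep only the lowest `modes` frequencies and mix channels with the weights
    out_hat[:modes] = np.einsum("kc,kcd->kd", v_hat[:modes], weights)
    out = np.fft.irfft(out_hat, n=n, axis=0)  # back to the spatial domain
    return np.maximum(out, 0.0)  # pointwise non-linearity (ReLU, an assumption)

rng = np.random.default_rng(0)
n, c_in, c_out, modes = 64, 2, 3, 4
weights = rng.normal(size=(modes, c_in, c_out)) + 1j * rng.normal(size=(modes, c_in, c_out))

x = np.arange(n) / n
v = np.stack([np.sin(2 * np.pi * x), np.cos(4 * np.pi * x)], axis=1)
out = fourier_layer(v, weights, modes)

# A signal living only at frequency 10 is filtered out when modes = 4.
v_high = np.stack([np.sin(2 * np.pi * 10 * x)] * c_in, axis=1)
out_high = fourier_layer(v_high, weights, modes)
```

Here `modes` plays the role of the truncation hyperparameter: a single layer discards everything above it, and stacking layers with non-linearities in between is what restores expressivity.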

this is a very simple formulation but with the previous slides what i tried to also give you is an insight to why this is principled right and in fact we can theoretically show that this can universally approximate any operator right including solutions of non-linear pdes and it can also like for specific families like fluid flows we can also argue that it can do that very efficiently with not a lot of parameters so which is like an approximation bound so yeah so that's the idea that uh this is uh right all you know

in many cases we'll also see that incorporates the inductive bias you know of the domain that expressing signals in the fourier domain or the frequency domain is much more efficient and even traditional numerical methods for fluid flows use spectral decomposition right so they do fourier transform and solve it in the fourier domain so we are mimicking some of that properties even when we are designing neural networks now um so that's been a nice benefit and the other thing is ultimately what i want to emphasize is now you can process this at any resolution right so

if you now have input at a different resolution you can still take fourier transform and you can still go through this and the idea is um this would be a lot more generalizing across different resolutions compared to say convolutional filters which learn at only one resolution and don't easily generalize to another resolution any questions here i hope this concept was uh you know clear at least right you got some insights into why first of all fourier transform is a good idea it's a good inductive bias you can also process signals at different resolutions using that
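
A small sketch of why weights stored per frequency carry across resolutions, again in NumPy. It assumes a uniform periodic grid and a band-limited test function; `spectral_multiply` and the random `weights` are hypothetical stand-ins for one linear spectral step of a trained model (no non-linearity). The same weights are applied to 32 and to 128 samples of the same function and the outputs are compared at the shared grid points.

```python
import numpy as np

def spectral_multiply(samples, weights):
    # multiply the lowest len(weights) Fourier modes by the given complex weights
    n = samples.shape[0]
    modes = weights.shape[0]
    spec = np.fft.rfft(samples)
    out = np.zeros_like(spec)
    out[:modes] = weights * spec[:modes]
    return np.fft.irfft(out, n=n)

def u(x):
    # band-limited test function on the unit interval
    return np.sin(2 * np.pi * x) + 0.5 * np.cos(4 * np.pi * x)

rng = np.random.default_rng(0)
weights = rng.normal(size=8) + 1j * rng.normal(size=8)

x_coarse = np.arange(32) / 32
x_fine = np.arange(128) / 128
out_coarse = spectral_multiply(u(x_coarse), weights)
out_fine = spectral_multiply(u(x_fine), weights)

# every 4th fine grid point coincides with a coarse grid point
gap = np.max(np.abs(out_fine[::4] - out_coarse))
```

For this band-limited input the two outputs agree to rounding error, because the weights index frequencies and not grid positions. With content above the coarse grid's Nyquist frequency, aliasing would introduce a difference.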

and you can also have this principled approach that you're solving convolution right a global convolution a continuous convolution in a layer and with nonlinear transforms together you have an expressive model we have a few questions coming into the chat are you able to see them or would you like me to read them uh would be helpful if you can yeah i can also see them okay now i can see them here okay great um yeah so how generalizable is the implementation of so you know so that really depends right you can do fourier transform on

different domains you could also do non-linear fourier transform right so i and then the question is of course right if you want to keep it to just fft are there other transforms before that to like do that end to end and so these are all aspects we are now further right uh looking into uh for domains where it may not be uniform but the idea is if it's uniform right you can do fft and that's very fast and so the kernel r is um so so the kernel r is going to be yes it's going

to like but it's not the resolution right so remember this r uh the weight matrix is in the frequency domain right so you can always transfer to the frequency space no matter what the spatial resolution is and so that's why this is okay i hope that answers your question great and the next question is essentially integrating over different resolutions and take the single integrated one to train your uh neural network model uh so you could right so depending on you know if your data is under different resolutions you can still feed all of them to

this network and train one model um and also the idea is at test time if you now have different query points at a different resolution you can still use the model and so that's the benefit because we don't want to be training different models for different resolutions because that's first of all clunky right it's expensive and the other is it may not you know if the model doesn't easily generalize from one resolution to another it may not be correctly learning the underlying phenomenon right because your goal is not just to fit to this training data or

one resolution your goal is to be accurately let's say predicting fluid flow and so how do we ensure we're correctly doing that so better generalizability if you do this in a resolution invariant manner i hope those answer your questions great so i'll show you some quick results uh you know here right this is navier stokes in two dimensions and so here we're training on only low resolution data and directly testing on high resolution right so this is zero shot so and you know you can visually see that right this is the predicted one

and this is the ground truth that's able to do that but we can also see that in being able to capture the energy spectrum right so if you did the standard like u-net right you know it starts you know i mean it's well known that the uh convolutional neural networks don't capture high frequencies that well right so that's first of all already a limitation even with the resolution with which it's trained but the thing is if you further try to extrapolate to higher resolution than the training data it you know deviates a lot more and

our model is much closer and and right now we are also doing further uh versions of this to see how to capture this high frequency data well um so that's the idea that we can now you know think about handling different resolutions beyond the training data so let's see what the other um yeah so the phase information so remember we're keeping both the phase and amplitude right so the frequency domain we're doing it as complex numbers so we're not throwing away the phase information so we're not just keeping the amplitude it's amplitude and phase together
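
A short illustration of the point about phase and amplitude, assuming a single cosine on a uniform periodic grid. The specific weight (amplitude 2, phase shift of 90 degrees) is a made-up value chosen so the result can be checked by hand: multiplying one Fourier coefficient by a complex number rescales that wave and shifts it, which a real-valued (amplitude only) weight could not do.

```python
import numpy as np

n = 64
x = np.arange(n) / n
signal = np.cos(2 * np.pi * 3 * x)  # a single wave at frequency 3

# complex weight: magnitude 2 scales the amplitude, angle pi/2 shifts the phase
weight = 2.0 * np.exp(1j * np.pi / 2)

spec = np.fft.rfft(signal)
spec[3] *= weight
shifted = np.fft.irfft(spec, n=n)

expected = 2.0 * np.cos(2 * np.pi * 3 * x + np.pi / 2)
max_diff = np.max(np.abs(shifted - expected))
```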

that's a good point good good now yeah yeah so i know we intuitively think we're only processing in real numbers because that's what standard neural networks do uh and by the way if you're using these models uh just be careful pytorch had a bug in complex numbers uh for gradient updates i think for the adam algorithm and we had to redo that so they forgot a conjugate somewhere so yeah so but yeah this is complex numbers great um so i know there are you know a lot of different uh examples of applications but

uh i have towards the end but that you know i'm happy to share the slides and you can look so the remaining few minutes we have i want to add just another detail right in terms of how to develop the methods better which is to add also the physics laws right so here i'm given the partial differential equation it makes complete sense that i should also check how close is it to satisfying the equations so i can add like now this additional loss function that says am i satisfying the equation or not right and and
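
A hedged sketch of such an equation loss, in NumPy. The equation -u'' = f on a periodic interval is an assumed toy example (the talk does not fix a specific PDE here), derivatives are taken spectrally as one convenient choice on a uniform periodic grid, and the names `equation_loss`, `total_loss` and the `weight` parameter are hypothetical.

```python
import numpy as np

def spectral_second_derivative(u, length):
    # d^2 u / dx^2 on a uniform periodic grid, computed in the frequency domain
    n = u.shape[0]
    k = 2 * np.pi * np.fft.rfftfreq(n, d=length / n)  # angular wavenumbers
    return np.fft.irfft(-(k ** 2) * np.fft.rfft(u), n=n)

def equation_loss(u, f, length):
    # mean squared residual of the assumed equation -u'' = f
    residual = -spectral_second_derivative(u, length) - f
    return np.mean(residual ** 2)

def total_loss(u_pred, u_data, f, length, weight=1.0):
    # supervised data term plus the equation (physics) term
    data_loss = np.mean((u_pred - u_data) ** 2)
    return data_loss + weight * equation_loss(u_pred, f, length)

length = 2 * np.pi
x = np.linspace(0.0, length, 64, endpoint=False)
f = 9.0 * np.sin(3 * x)   # source term
u_true = np.sin(3 * x)    # satisfies -u'' = f exactly
u_wrong = np.sin(2 * x)   # does not satisfy the equation

loss_true = equation_loss(u_true, f, length)
loss_wrong = equation_loss(u_wrong, f, length)
combined = total_loss(u_wrong, u_true, f, length)
```

The equation term needs no reference solution, only the candidate `u` and the source `f`, so it can be evaluated on instances for which no solver data exists.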

one little detail here is if you want to do this at scale and if we want to like you know auto differentiation is expensive but we do it in the frequency domain and we do it also very fast so that's something we developed but the other i think useful detail here is right so you can train first on lots of different problem instances right so different initial boundary conditions you can train and you can learn a good model this is what i described before this is supervised learning but now you can ask i want to

solve one specific instance of the problem now i tell you at test time this is the initial and boundary condition give me the solution now i can further fine tune right and get a more accurate solution because i'm not just relying on the generalization of my pre-trained model i can further look at the equation loss and fine-tune on that and so by doing this we show that we can further you know we can get good errors and we can also ask you know what is the trade-off between having training data because training data requires right having

a a numerical solver and getting enough right training points or just looking at equations right if i need to just impose the loss of the equations i don't need any solver any data from existing solver and so what we see is uh the balance is maybe right to get like uh really good error rates right to be able to quickly get to good solutions over a range of different conditions is something like maybe small amount of training data right so if you can query your existing solver get small amounts of training data but then be

able to augment with um just you know this part is unsupervised right so you just add the equation loss over a lot more instances then you can get to good generalization capabilities and so this is like the balance between right being data informed or physics informed right so the hybrid where you can do physics informed over a lot more samples because it's free right it's just data augmentation but you add a small amount of supervised learning right and that can pay off very well by you know having a good trade-off is the model assumed

to be at a given time um so yes in these cases we looked at the overall error i think like l2 error both in space and time so it depends some of the examples of pdes we used were time independent others are time dependent right so it depends on the setup i think this one is time dependent so this is like the average error great so um i don't know how much longer uh i have um you know so alex are we stopping at 10:45 is that the yeah we can go until 10:45

and maybe wrap up some of the questions after that yeah so like quickly another i think conceptual aspect that's very useful in practice is solving inverse problems right so you know the way partial differential equation solvers are used in practice is you typically already have the solution you want to look at what the initial condition is for instance right like if you want to like you know ask what about the activity deep underground right so that's like the initial condition because that propagated and then you see on the surface what the activity is and same

with the famous example of black hole imaging right so you don't know directly you don't observe what the black hole is and so all these indirect measurements mean we are solving an inverse problem and so what we can do with this method is you know we could first like kind of do the way we did right now right we can learn a partial differential equation solver in the forward way right from the initial condition to the solution and then try to invert it and find the best uh fit or we can directly try to

learn the inverse problem right so we can see like given solution learn to find the initial condition and do it over right lots of training data and so doing that is also fast and effective so you can avoid the loop of having to do mcmc which is expensive in practice and so you get both the speed up of you know replacing the partial differential equation solver as well as mcmc and you get good speed ups um so chaos is another aspect i won't get into it because here you know this is right more challenging because

we are asking if it's a chaotic system can you like predict its ultimate right statistics and how do we do that effectively and we also have frameworks there i won't get into it and there's also a nice connection with transformers so it turns out that you can think of transformers as finite dimensional right systems and you can now look at continuous generalization where this attention mechanism becomes integration and so you can replace it with these right fourier neural operator kind of models in the spatial mixing and even potentially channel mixing frameworks and so that can

lead to good efficiency like you know you have the same um performance as a full self-attention model but you can be much faster because of the fourier transform operations yeah so we have many applications of these different frameworks right so just a few that i've listed here but i'll stop here and take questions instead so lots of like i think application areas and that's what has been exciting collaborating across many different disciplines thank you so much anima are there any remaining questions from the group and the students yeah we got one

question in the chat thanks again for the very nice presentation will the neural operators be used for various application domains in any form similar to pre-trained networks oh yeah yeah so pre-trained networks right so whether neural operators will be useful for many different so i guess like the question is similar to language models you have one big language model and then you apply it in different uh contexts right so there the aspect is there's one common language right so you wouldn't be able to do english and directly to spanish right but you could

use that model to train again uh so it depends on the question is what is the nature of partial differential equations right for instance if i'm like you know having this model for fluid dynamics that's a starting point to do weather prediction or climate right so i can then use that and build other aspects because i need to also right like you know there's fluid dynamics in the cloud uh but there's also right like kind of precipitation there's other microphysics so you could like either like kind of plug in models as modules in

a bigger one right because uh you know there's parts of it that it knows how to solve well or you could like uh you know ask that uh or in a multi-scale way in fact like this example of stress prediction in materials right you can't just do all in one scale there's a coarse scale solver and a fine scale and so you can have solvers at different scales that you train maybe separately right as neural operators and then you can also jointly fine tune them together so in this case it's not as straightforward as just language

because you know yes we have the universal physical laws right but can we train a model to understand physics chemistry biology that seems uh too difficult maybe one day but the question is also what all kind of data do we feed in and what kind of constraints do we you know add right so i think i think one day it's probably gonna happen but it's gonna be very hard that's a good question yeah i have uh actually one follow-up question so i think the ability for extrapolation specifically is very fascinating and sorry alex i can't

i think you're completely breaking up i can't oh sorry is this better no no let's see maybe you can type so the next question is from the physics perspective can we interpret this as learning the best renormalization scheme so indeed you know like uh even convolutional neural networks there's been like connections to renormalization right so um i mean here like you know the uh yeah so we haven't looked into it there's potentially an interpretation but the current interpretation we have is uh much more straightforward in the sense we are saying each layer is like an integral operator

right so which would be solving a linear partial differential equation and we can compose them together and then that way we can have a universal approximator but yeah that's to be seen it's a good point another question is whether we can augment uh to learn symbolic equations um yeah so this is right it's certainly possible but it's harder right to discover new physics or to discover some new equations new laws uh so this is always a question of like right we've seen all that here but what is the unseen uh but yeah it's definitely possible
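As a rough illustration of the point above that each layer is like an integral operator, and of the earlier remark that the Fourier transform makes this kind of spatial mixing fast, here is a minimal NumPy sketch of one Fourier-style layer in 1D. It is not the speaker's implementation. The function names, the array shapes, the uniform periodic grid, the ReLU nonlinearity, and the random weights are all assumptions made for the example.

```python
import numpy as np

def spectral_conv_1d(v, weights):
    """Convolution-type integral operator applied through the FFT (illustrative).

    v:       (n_points, channels) real samples of a function on a uniform
             periodic grid (an assumption of this sketch)
    weights: (modes, channels, channels) complex, one matrix per kept mode
    """
    n = v.shape[0]
    modes = weights.shape[0]
    v_hat = np.fft.rfft(v, axis=0)                # to Fourier space
    assert modes <= v_hat.shape[0], "cannot keep more modes than the grid has"
    out_hat = np.zeros((v_hat.shape[0], weights.shape[2]), dtype=complex)
    # Mix channels independently for each retained low-frequency mode;
    # higher modes are dropped (left at zero).
    out_hat[:modes] = np.einsum("kc,kcd->kd", v_hat[:modes], weights)
    return np.fft.irfft(out_hat, n=n, axis=0)     # back to physical space

def fourier_layer(v, weights, w_local):
    """One layer: global spectral mixing plus a pointwise linear map, then ReLU."""
    return np.maximum(spectral_conv_1d(v, weights) + v @ w_local, 0.0)

# Hypothetical usage: compose two layers on a toy 3-channel input.
rng = np.random.default_rng(0)
n, channels, modes = 64, 3, 8
x = np.arange(n) / n
v = np.stack([np.sin(2 * np.pi * x),
              np.cos(4 * np.pi * x),
              np.sin(6 * np.pi * x)], axis=1)
weights = (rng.normal(size=(modes, channels, channels))
           + 1j * rng.normal(size=(modes, channels, channels)))
w_local = rng.normal(size=(channels, channels))
out = fourier_layer(fourier_layer(v, weights, w_local), weights, w_local)
print(out.shape)
```

The spectral step costs O(n log n) in the number of grid points, whereas all-pairs mixing costs O(n^2), which is the kind of saving the talk attributes to the Fourier transform operations. Because the weights are indexed by Fourier mode rather than by grid point, the same `weights` array can be applied to an input sampled on a different grid size, as long as the grid has at least `modes` frequencies.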

um so and alex i guess you're saying the ability for extrapolation is fascinating um so potentials for integration of uncertainty quantification and robustness yes i think these are really important uh you know on other threads we've been looking into uncertainty quantification on how to get conservative uncertainty for deep learning models right and so that's like the foundation is adversarial risk minimization or distributional robustness and we can scale them up uh so i think that's uh an important aspect uh in terms of robustness as well i think there are several you know uh other threads we

are looking into like whether it is right designing say transformer models what is the role of self-attention to get good robustness or in terms of uh right the role of generative models to get robustness right so can you combine them to purify like kind of right the noise in certain way or denoise uh so we've seen all really good promising results there and i think there is ways to combine that here and we will need that in so many applications excellent yeah thank you maybe time for just one more question now um sorry i still

couldn't hear you but i think you were saying thank you um yeah so the other question is the speed up versus of the um yeah so it is on the wall clock time with the traditional solvers and the speed increase with parallelism or um yeah so i mean right so we can always certainly further make this efficient right and now we are scaling this up on in fact uh more than a thousand gpus uh in some of the applications and so there's also the aspect of the engineering side of it uh which is very important at

nvidia like you know that's what we're looking into this combination of data and model parallelism how to do that at scale um so yeah so those aspects become very important as well when we're looking at scaling this up awesome thank you so much anima for an excellent talk and for fielding sorry i can't hear anyone for some reason it's can others in the zoom hear me yeah somehow yes i i don't know what maybe it's on my end but uh um it's fully muffled for me so uh but you know anyway i i think

it has been a lot of fun so yeah and uh yeah i hope uh you got now a good foundation of deep learning and you can go ahead and do a lot of cool projects so yeah reach out to me if you have further questions or anything you want to discuss further thanks a lot thanks everyone
