a huge release out of deep seek today so deep seek R1 their reasoning model is now fully open source with an MIT license once interesting with this model is it is the first major competitor to open AI 01 series of models in this video I'll give you a brief overview about the model the pricing some of the technical details and then I'll show you how to get started with the model right off the bat to go over some aspects about this model it's a mixture of experts model in terms of the active parameters it's 37 billion active parameters what's interesting with this is it's actually the same size as deep seek V3 for the mlu score it scores a 90. 8 in comparison claw 3. 5 Sonet has 88.

3 GPD 40 has 87. 2 respectively and this even outperforms 01 mini and it is just shy of open AI 01 that was just released last month just about all of the metrics for the coding benchmarks with the exception of ader polyot are just shy of 01 but where you can really see the difference on some of these benchmarks is look at code forces for instance Claude 3. 5 Sonet scores 20.

3 gbd4 23. 6 R1 scores 96. 3 another huge thing with this release is it has a very permissive license it's MIT license you can distill the model you can commercialize the model you can use the outputs to fine-tune other models or use the outputs from the model for synthetic data generation or basically whatever you want they also released six small models that are fully open source as well these are open source distill models from R1 we can see here deep seek R1 we have 1.

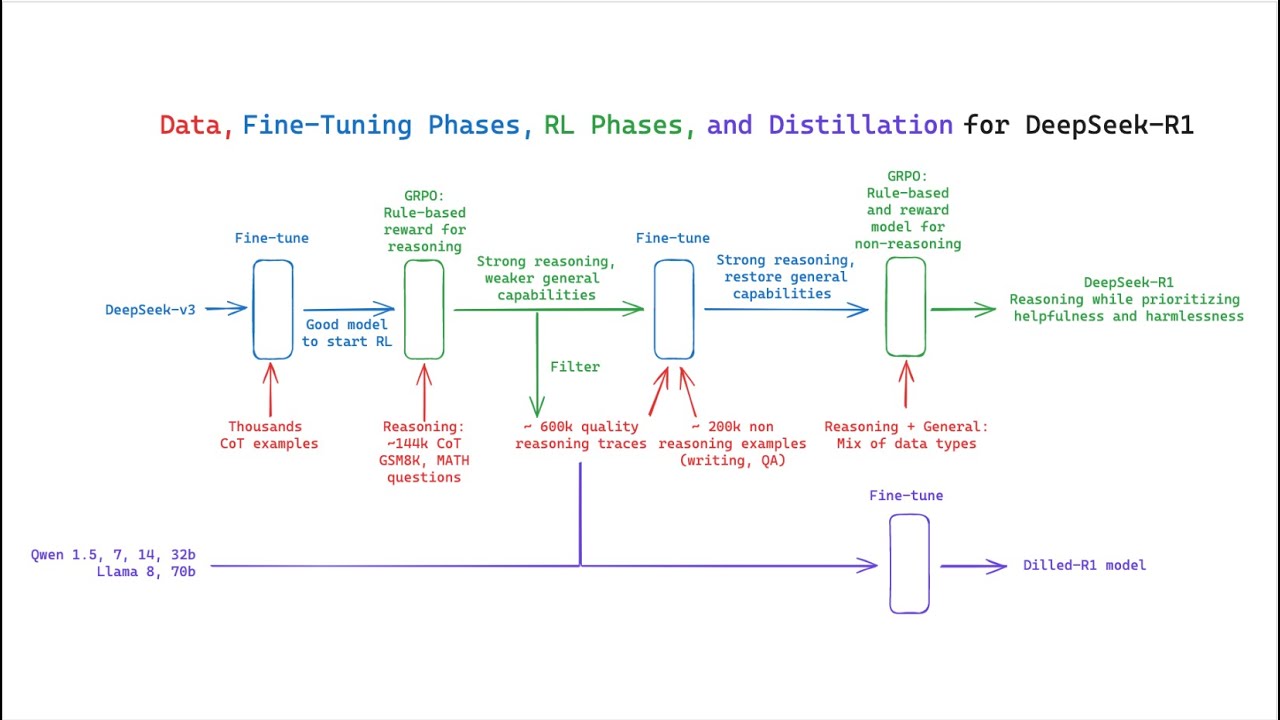

5 70b 14b all the way through to 70b and the 32b and 70b these are on par with opening eyes 01 mini what's great with these distilled models is you're going to be able to run this basically regardless of the hardware that you have now to quickly touch on the technical report I'll also link everything within the description of the video if you're interested we introduced our first generation reasoning models deep seek r10 and deeps R1 deeps r10 a model trained via large scale reinforcement learning without a supervised fine tuning as a preliminary step demonstrating remarkable reasoning capabilities through RL deep seek r10 naturally emerges with numerous powerful and intriguing reasoning behaviors however it encounters challenges such as poor readability and language mixing to address these issues and further enhance reasoning performance we introduce deep seek R1 which incorporates multi-stage training and cold Data before RL deep seek R1 achieves performance comparable to opening eyes 01217 on reasoning TS to support the research Community we open source deep seek r10 deep seek R1 1. 5b 7B 8B 14b 32b 70b which were all distilled from Deep seek R1 based on quen and llama if you're interested in diving in a little further I'll link everything with in this technical report within the description of the video to try out the model you can try it out at chat. Deep seek.
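Going back to the distilled model sizes mentioned above, here is a rough sketch of why the smaller ones fit on modest hardware. It only estimates the size of the weights, assuming memory is roughly parameter count times bytes per parameter. The precision names and byte counts are illustrative assumptions, not figures from the release, and the KV cache, activations, and runtime overhead are ignored.

```python
# Rough weight-memory estimate for the distilled model sizes named in the video.
# Assumption: memory ~= parameter count x bytes per parameter. KV cache,
# activations, and runtime overhead are NOT included, so real usage is higher.

DISTILLED_SIZES_B = [1.5, 7, 8, 14, 32, 70]  # billions of parameters

# Illustrative precisions (assumed, not taken from the release notes).
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * BYTES_PER_PARAM[precision]


def print_table() -> None:
    """Print one line per distilled model size with each assumed precision."""
    for size in DISTILLED_SIZES_B:
        row = ", ".join(
            f"{p}: {weight_memory_gb(size, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{size}B -> {row}")
```

Calling `print_table()` lists every size at each assumed precision, which gives a quick feel for which models might fit on a laptop versus a multi-GPU machine.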

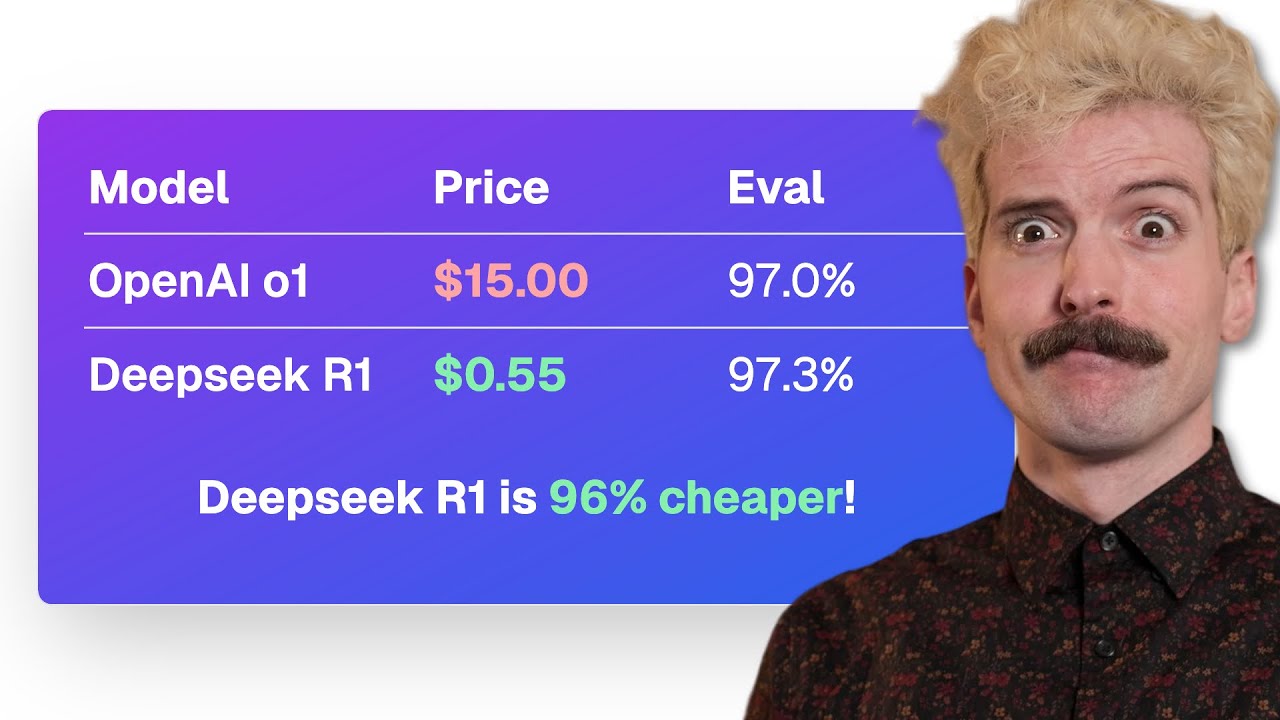

What's really great with this is you also see the reasoning steps here, which is different from what you get from OpenAI o1, where you don't actually see them. Here we see our short story.

But now, if we actually test some of the reasoning capability, if I say "how many times did the letter R occur in that story?" and put that through, we see: okay, the user wants to know how many times the letter R appears in the story. What it's doing is breaking up all of the sentences, and then within each sentence it's breaking up every single word and counting word by word. At the bottom we have the breakdown of all of the different paragraphs and how many times the letter R potentially occurred within each.

Now, in addition to the chat interface, you can also access this from their API with the model string `deepseek-reasoner`.

Next, what's really unreal with this model is the pricing. For a million input tokens it is $0.55, with context caching it is $0.14, and for a million output tokens it is $2.19. If we compare that to o1, a million output tokens is $60, a million input tokens is $15, and context caching is still $7.
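To make the price gap concrete, here is a minimal sketch that turns the per-million-token input and output prices quoted above into a cost per request. The `PRICES` table and the `request_cost` helper are hypothetical names made up for illustration, the `"o1"` key is just a label, and cached-input pricing is left out to keep the arithmetic simple.

```python
# Prices in USD per one million tokens, as quoted in the video.
# "o1" is only a label for this table; cached-input pricing is omitted.
PRICES = {
    "deepseek-reasoner": {"input": 0.55, "output": 2.19},
    "o1": {"input": 15.00, "output": 60.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, ignoring any context-cache discount."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def cost_ratio(input_tokens: int, output_tokens: int) -> float:
    """How many times more the same request costs on o1 than on R1."""
    return request_cost("o1", input_tokens, output_tokens) / request_cost(
        "deepseek-reasoner", input_tokens, output_tokens
    )
```

For example, `cost_ratio(1_000_000, 1_000_000)` compares a workload of one million input and one million output tokens on both models. Reasoning models tend to produce long outputs, so the output price usually dominates the bill.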