um hi everyone um Chris and Danel thanks uh Chris and Danel thanks for organizing this thing um so I'm going to talk about the homomorphic encryption as the the slide indicated but let me just give like a very short exposition to this whole morning uh so um Danielle and venod and hotk and myself uh were sort of trying to to figure out a way to tell you this this story about all these all these new developments and I'm going to start by talking about fully homomorphic encryption um but Daniel and and V hotk are actually

going to show you is that this hom fly homomorphic encryption scheme which is quite Wonderful by itself can actually be seen as just one face of a really beautiful uh mathematical structure that can be used to achieve uh um other uh exciting cryptographic goals um so for the next 40 and some minutes you should you can just forget about this whole thing and just think about whole morph encryption uh and then we'll see how it fits into this uh uh larger FR framework so I imagine that most of this audience have uh at least sort

of uh seen what this at least the talk about homework encryption or heard about it uh but if you do need a motivating ex example you can think about this uh problem of Outsourcing computation uh where we have this uh weak device that wants to use um a strong server in order to compute some function and there are many real life uh examples as to why we want to do it uh and also some fake life some you know just for cryptographic purposes uh reasons why why this this would be sought after um more formally

um we we have a client that has an input and we have a server that knows how to compute some function and we want the client wants to use a server uh to compute this uh this f ofx um so the way it's normally done we're just going to send the input to the server the server is going to compute and send us the output back um and this works great uh so long as we sort of don't care about the server knowing our input and you can think about some cases uh where uh our

input the x is actually Prim it uh and you don't want the server uh to to learn anything about to learn anything about X so that's the problem of privately Outsourcing computation um and we we would like to allow the server to perform uh processing uh on on the input in a blindfolded manner uh and sort of the most natural way as cryptographers to think about sort of sending information in a way that does not reveal its content is using encryption so we would like to take our input encrypt it and send it to the

server and now the server well just by the security of the encryption scheme uh should learn nothing about the contents about X itself um but we still want to allow the server to compute on this information uh so obviously the server does not know X so it cannot produce F ofx itself but hopefully it will be able to sort of work on this encryption of X and produce some value y that we can decrypt um locally uh to get to get the value F ofx that we want so really sort of this this magic that

we're looking for uh is what we call a homomorphic evaluation function and this is a function that takes uh um a description of this procedure F that we want that the server wants to carry out and an encryption of the input and produces an encryption of the output so we want want to want to get a way to go from encryption of the input to encryption of the output using only public information so without the server learning anything about the input X itself and uh this leads us to the actual uh definition of fully homomorphic

encryption so it's an encryption scheme and we're thinking about say the public key setting so we're going to start by a key generation process so we're generating uh a secret key uh and a public key for our scheme and um uh the public key can be known to anyone and in particular to the server and like any uh uh public key encryption scheme um we're going to use the public key to encrypt our inputs so in this case uh the party that does both the encryption and the encryption is the same person and this is

actually quite an interesting Case by by itself we can also allow like the Normal public e setting to have a third party that does the encryption so that the encryptor and the decryptor do not have to be the same person um and then the server he knows the function that he wants to compute and he knows the um he know he has the encrypted input so um he will uh be able to execute um a homomorphic evaluation procedure uh and obtain this value uh this value Y and our correctness requirement is that if you know

someone encrypted an input properly and the server um um executed this homomorphic evaluation properly then uh when we decrypt we really should get uh the value F ofx that we wanted so uh this is uh uh what homomorphic encryption is supposed to is supposed to provide um and of course in addition to correctness we also want privacy uh this is sort of why we're here um so but the input privacy turns out to be defined in sort of the most obvious way this is just the same as semantic security for any other public encryption scheme

so we don't need to uh worry too much about the definition of security so we just want to say that for all for all X an encryption of X is going to be computationally distinguishable for from an encryption of zero and this should of course uh hold true even for a distinguisher that knows the public hey so this is just standard semantic security the sort of gimmick of of homomorphic encryption is more in terms of functionality being able to perform these uh homomorphic evaluations so um if you think about it for a bit you can

you can see that if you know this this is all that that we want then this is very uh uh easy to achieve just by sort of cheating um I can just Define this homomorphic evaluation as a function that sort of does nothing it just outputs its input so I can take my y to just be F and the encryption of X themselves and sort of put push all the work down to the decryption and Define a decryption process that sort of takes a toule of function and Cipher text and uh first of all decrypts

the the the cipher text and then uh evaluates the function therefore sort of uh um uh going back to the to the um solution of doing everything locally um and sort of just to prevent this uh the generate solution we're going to require a condition that is called compactness and there are many ways to define it but for now just think about it or not for now for this talk just think about it as requiring that this value y the output of the homomorphic evaluation um should should not depend its length should not depend uh

on the function f so it doesn't necessarily have to look exactly the same as a fresh encryption of f ofx but uh it should not it should not grow as we uh uh as we change the the function that we want that we want to evaluate um so this is this is this is homomorphic encryption and when we talk about fully homomorphic encryption what we mean by fully is that we want this we want to to allow the server to evaluate any function that it wants so uh we want this correctness condition uh to hold

for any uh efficiently computable F and actually you can even extend it to inefficiently computable so long as the server so long as the server has the uh sufficient computational power to to execute the function um and sort of this seems like a uh pretty for aable task to to to allow correctness for any uh possible for any possible function but you know we already know how to uh take these functions and represent them as circuits and then break those circuits into Gates and and uh getting homomorphism with respect to these uh tiny building blocks

is going to give us uh is going to give us correctness for the whole thing um and indeed the task that our task when constructing uh homomorphic encription schemes will be to get homomorphism for a universal set of gates um so in particular one uh um easy to handle Universal set of gates is just a nand gate we know that uh any pomal size circuit can be U made to to um um be composed of Nan gates at all n Gates uh uh and nothing else uh but another perhaps more popular choice um is to

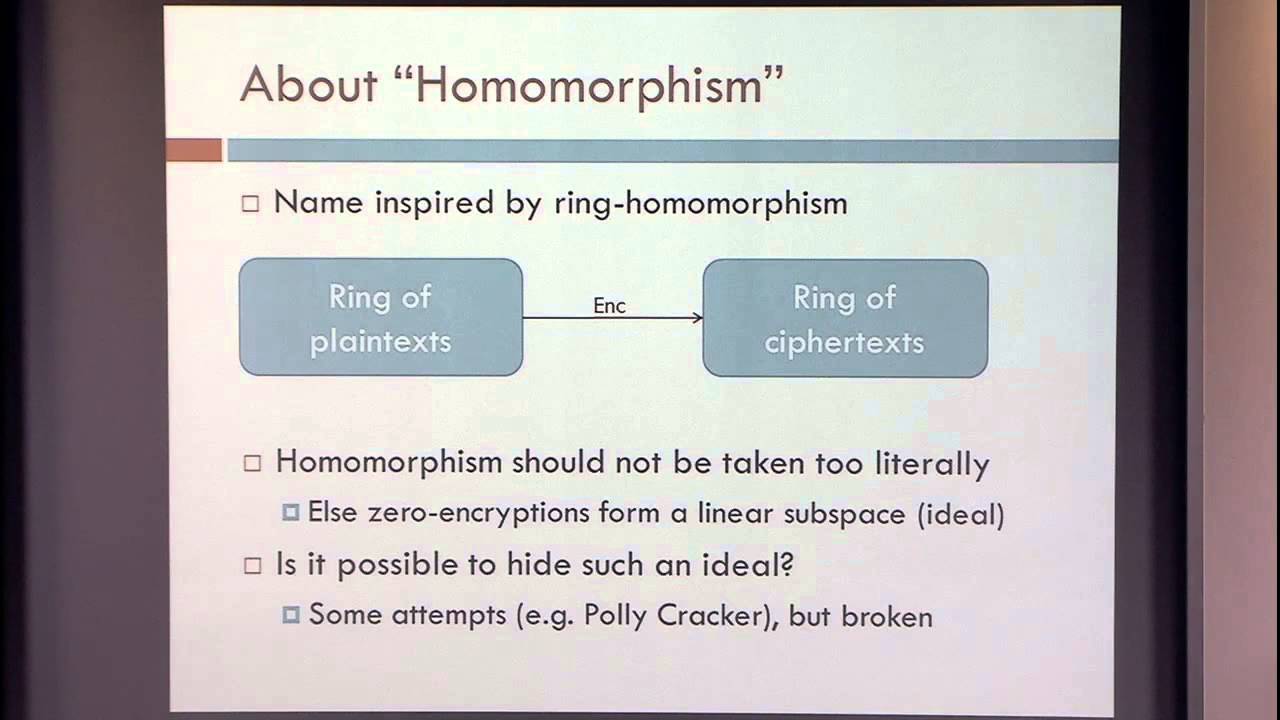

think about sort of a more arithmetic case uh and think about addition and multiplication Gates so uh actually addition if we get addition and multiplication over really any field or even any ring uh this should be good enough uh because well we can think about just having let's say we have addition and multiplication and we can just represent uh the wires of our circuits uh just as the value zero and one uh so not even use all the values that this uh that this field allows us and then multiplication is really going to correspond to

the binary end operation and we can always represent binary KN by the 1us X function um and therefore if we get additional multiplication over uh any uh um over any ring this is going to give us this is going to give us what we want um so this is uh this is going to be our goal uh when we want to construct homomorphic encryption and just to say that while fully homomorphic is the uh fully homomorphic encryption is our ultimate goal um we're actually going to be pretty happy with what's called a leveled fully homomorphic

encryption scheme so in a leveled fully homomorphic encryption uh uh scheme um we actually don't sort of commit to uh being able to evaluate all functions or rather only bound the depth functions and uh what we want is actually uh um to be able to produce uh a scheme for any depth bound that we can get so uh um we sort of know in advance what's the most complicated or the deepest circuit that we will want to that we want to evaluate and then we can produce a scheme that supports this functionality so this is

what fully homomorphic encryption is all about this is what we're going to talk about uh um in in this talk and of course I cannot mention uh a fully homomorphic encryption without sort of mentioning it sort of this this great story uh reest Edelman andas came up with the idea of fully homeomorphic encryption back in 1978 so this is really just the dawn of of publicy encryption uh we didn't even have uh I guess semantic security at the at the time I still uh sort of this wouldn't it be great idea uh came up um

but it was was really far from uh uh from being achievable and that that remained the case for for many years despite uh many attempts until this uh break Breakthrough by uh by Craig Gentry um which was a a grad student at the time uh in I guess late 2008 and he showed the first uh candidate the first the first scheme uh uh uh that actually uh sort of supported this uh this uh functionality and security and this sort of made the whole field really sort of bubble steam uh and there were a lot of

developments and a lot of uh uh great ideas um but in this talk I'm actually only going to present I guess the most modern approach to to fully homomorphic encryption uh and this is this uh approximate igen Vector method um presented by gentries high in waters um and uh this was only from two years ago as as Chris mentioned um and the basic idea is the following so um we want to get uh we want to get this uh homomorphic evaluation to work for say addition and multiplication and we notice that uh if we have

two matrices C1 and C2 uh that have the same igon Vector s um and in C1 this igen Vector corresponds to igen Value M1 and C2 it corresponds to I value M2 then if we add these two matrices then this new the the sum of the two matrices is well it's going to have the same igen Vector s and the corresponding igen value is going to be the sum of these two values and M1 and M2 and if we multiply these two matrices and actually even if we multiply them in the in the opposite order

this is a non-commutative operation then uh the the igen value that corresponds to S is going to be M1 * M2 and we're actually going to sort of see it uh uh in in a little more detail but this sort of basic idea sort of gives rise to the following uh um I guess meta uh meta scheme so why don't we have our Cipher text uh matrices and the the message that is encoded that is encrypted in The Matrix is going to be an igen value that is that corresponds to an igen Vector which is

secret so you know at least uh hopefully if this uh igen Vector is secret then perhaps the message is hidden but if I know what this uh what this Vector s is then I can recover the message so uh if we had an encryption scheme with this uh uh with this high level structure uh then we would be able to do these these homomorphic operations so uh just to pick some uh uh some uh U field to work with let's say that we work module Q for some integer Q um and uh uh so we

we'll get homomorphic for addition multiplication and this will give us full homomorphism um and this seems to be uh again so this this is a a great high level uh outline but it's it's really uh easy to see that it's insecure uh because uh it's it's pretty easy to compute the igen vectors uh of The Matrix so uh the secret key is not going to be hidden in the strong way that we want it to be hidden uh for for for secure encryption schemes so at least if you want to sort of get the sort

of atmost uh level of security that that we want semantic security this seems to not be uh uh the best uh the best idea um so it's tempting to just sort of toss it and and try to try something else but actually um you can think about just maybe slightly tweaking this idea so what if um my secret key is not really an igen Vector but it's approximately an igen vector and by that I mean the following so first of all uh let me sort of warn you that I'm going to use instead of uh

the secret key being an igen Vector of the cyppher Tex it's going to be an igen Vector of the transposed and this just means that I'm multiplying on the left instead of on the right I hope this uh notation is not too confusing it will be helpful uh for the rest of the morning um so uh instead of uh so what we say when we say approximate igen vector is that uh when I take uh the the secret key vector and multiply it by the cipher text I'm going to get message times secret plus some

small noise or in other words it's going to be approximately equal uh to the message times secret so if there was an equality here then this means that really s is an igen Vector with igen value M but we're sort of relaxing this this condition um and this is sort of the approximate uh uh the approximate I Vector uh idea and for now uh take it on faith that um if we that when we are able to achieve this uh uh uh this schema um then uh uh such Cipher texts are going to be decryptable

so long as this uh noise Vector um has a very low norm and the snorm needs to be much smaller than q q is the modulus that we work over so um um we we we would like to we would like to see if uh you know just relaxing this uh uh um perfect condition to relaxed one is still going to give us the homomorphism that we want so let's see how this works so let's say that we have two of these Cipher Tex C1 and C2 uh such that C1 equals to m1s plus E1

and C2 well just uh the the equivalent and let's say that we start with noises that really are uh really small much smaller than q and our goal remember we have this this server that has an encryption of the input so encryption of all these bits of the input and it wants to execute a circuit so in particular we want to get two cyer we want to go from two Cipher teex C1 and C2 to an encryption of their sum to be able to go to encryption of their sum and to an encryption of their

product um so uh in order to get the sum um well let's just try to add these two matrices it seemed to work before when we didn't have noise so let's see what happens so um um I I let's say I generated C add as C1 plus C2 let's see what happens when I try to decrypt uh C1 plus C2 with the same secret key that worked for C1 and C2 so uh this just gives me S * C1 plus s * C2 okay and I'm just going to open these two expressions um based on

these uh uh sort of what we know on on C1 and C2 and of course we only do it in our head the the the uh homomorphic evaluator the server itself does not know does not know s but we should check that really this homomorphic evaluation means something um and what we get is really M1 Plus 2 * S Plus E1 plus E2 so we really get uh something of the same form form as what we start with which is great which means that we really we really get this uh um homomorphic homomorphic correctness for

addition but our new noise is the sum of the old noises so the noise grows a little um this is perhaps a little a little worrisome but if we start with noises that are really really small then at least for a few for a few operations we're going to be okay so at least it's going to give us uh some uh uh some level of of homomorphism okay so that's good uh now let's see what happens when we try to multiply so uh again before we said well maybe for multiplication we're going to take C1

* C2 let's see what this gives us when we try to decrypt so um s * C1 * C2 I'm I'm going to uh try to see what what happens um so s * C1 is just M1 * S Plus E1 this comes from here here and this this multiply C2 open the parenthesis uh you get um uh M1 c2s so remember that the M's are scalar so I'm I'm pretty freely I'm moving them from side to side of these vectors and matrices and so forth so the M's are scalers but everything else uh uh

is uh non-commutative C2 right uh that's what where2 instead of c2s right right and again this should be S time C2 thanks uh Chris and um so s * C2 just from here is going to be M2 * S Plus E2 um and we have this additional this additional term which is E1 * E2 which we just sort of keep carrying around because well we don't know how to break it any further um and what we get in the end is uh M1 so M1 * M2 * S Plus M1 * E2 plus E1 *

uh C2 so so we got something that kind of looks like what we had before so we have M1 * M2 * s which is sort of our new message time uh times s but for the noise well we got something more complicated than before uh uh we get M1 * E2 plus E1 * C2 it's uh sort of asymmetric in a sense you'd get something else if you did it the other way around um and it's a good question whether this thing is going to remain small or not it really depends on the specific

implementation of this uh of this scheme so um it seems like our noise is going to grow uh and in order to control the growth in order to make uh uh to make this thing manageable we really want uh C2 to be small and uh there's sort of many Notions of how you can sort Define it in the tightest way but for us we're just going to think that we want all of the entries of C2 uh to be really small and if that if that was the case then really we could argue that C2

does not make this part of the noise uh too large so so um it seems like it could work uh if we had an if we had an approximate igen value uh igen Vector uh uh encryption scheme then uh we should be able to get at least some some sort of homomorphism and now let's see how to actually construct a scheme like that and um we're going to use uh um oh and yeah I should say that you can also do the the opposite operation I guess I did um so we're going to use the

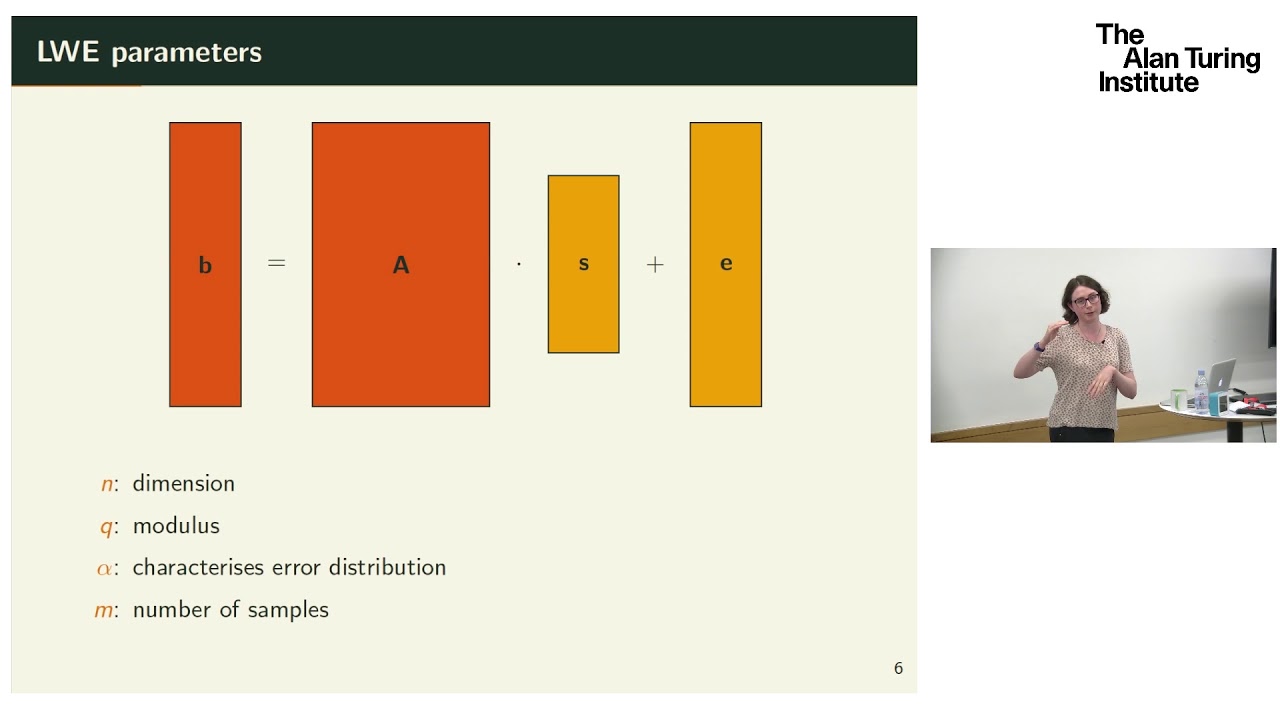

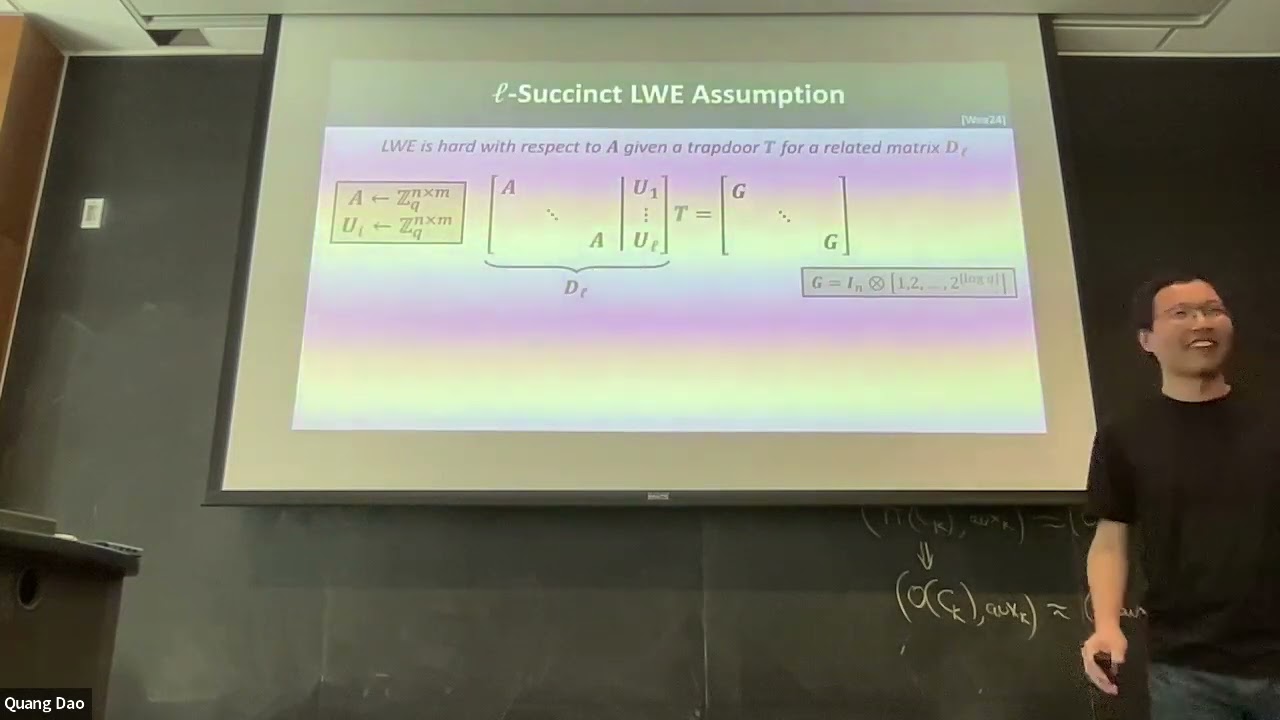

learning with errors assumption which had been very very useful in cryptography since regive introduced it in 2005 and actually also a little before without knowing um and this assumption uh says the following um I guess the the uh intuitive statement is to say that if you have um random noisy linear equations uh module and integer uh then they're going to be uh um computationally indistinguishable from uniform and to be a little more concrete um what we have is the setting is the following so uh we think about the uniform Matrix a and a is uh

uh um I guess wide and uh and short it's n by m so n is this Dimension and M is and capital M is this Dimension so this is just going to be a a uniform Matrix and um we're thinking about uh um we're thinking about this uh this Matrix as a sequence of coefficients for linear equations what does that mean we have a secret n dimensional Vector s and uh um we we take the product of the vector s and the Matrix a so this actually uh gives us sort of a sequence of linear

equations where the coefficients of the equations are The Columns of a and the variables are the uh um uh the components of the vector s um so we take these uh these these linear equations and actually we add some noise to them and this noise is just going to be a vector of small entries in order to get the tightest reduction you may have heard that you want to take this Vector to be drawn from a gaussian distribution or a discrete gaussian distribution but for the purpose of this talk we're not going to care about

it we're just going to think about this as a vector of very short elements in particular each element in this Vector is going to be smaller in absolute value then some Alpha time q and Alpha is going to be really really tiny uh and we're going to see how tiny it needs to be uh um later on so we take these uh linear uh um these random linear equations we add noise to them and we get uh we get some Vector B um what does it mean to be uh indistinguishable from uniform well uh so

we have this B which equals to S * a plus noise and now let's think about the joint distribution of this uniform Matrix a and the vector B it's not hard to see that this distribution is going to be statistically far from uniform at least if this uh if this Alpha is small enough and uh to see this just think about so s * a um is going to be a random element in the row span of a and this row span of a a is going to be is since it's wide and short this

is just um um a hyper plane in zq to the m so we have this fairly low dimensional hyper plane and we take a random point in this hyper plane obviously if I give you these two things it's not it's going to be statistically far from uniform and computationally distinguishable from uniform but turns out that if you just add some noise so if you think about this like as a uh a fuzzy hyper plane uh um in in this uh in this High dimensional space well it's still going to be statistically far from uniform but

uh the learning with eror assumption is that this is actually going to be computationally uh hard to distinguish um from just the uniform distribution so from the case where we sampled both A and B just uniformly and independently so um this is this is pretty great uh and uh we even think that it's true um in particular we we actually have like strong reasons to believe that it's true uh it had been shown in a sequence of Works starting with this work of regiv um that if you can distinguish this from uh from uniform then

well really at uh very I'm I'm saying I'm saying the statement very roughly um then uh um this is at least as hard as approximating uh short Vector problems uh in in uh the n-dimensional lades and this is true in the worst case so this is an average case problem it talks about distributions everything here is uh drawn from from some distributions and yet if you able to solve this uh uh this average case problem so if you're able to distinguish these two distributions you are actually able to approximate uh approximate short vectors in really

any lattice that I give you any end dimensional lattice that I give you um and the approximation Factor as you would expect sort of deteriorates uh as Alpha goes down so obviously if Alpha was like uh uh I guess one over infinity or zero then uh uh then uh um uh this would easily be distinguishable this problem becomes hard and uh as Alpha time Q becomes uh becomes bigger this problem becomes uh becomes harder um but uh um from this point in on we're not going to be concerned about whether this is uh whether this

is hard or not and we'll feel pretty confident to use this as our uh as our hardest assumption um and I actually want to sort of rearrange the notation it will be more convenient for me um to just think about uh the Matrix that that consists of minus A and B and I hope this is not going to be too confusing but from now on I'm just going to call this a so my new a is just going to be what used to be minus A and B this is sort of the traditional notation but

this uh new one is going to be more more convenient for me and um what we have for this uh for this new Matrix is that if I multiply it by uh s concatenated with one then really what we get here is b - s * a b - s * a uh is going to be this short Vector so um what we uh so what we actually have so this new form of of lwe says that we have a matrix a we can generate a matrix a and a vector s such that s *

a uh equals to this very short Vector so obviously a is is very far from being uh very far from being uniform but on the other hand if I just show you a itself without telling you that I have the secret key this is going to be computationally indistinguishable from uniform um so um from now on we're just going to think about having being able to generate matrices like this and I showed you that this follows from the learning with errors assumption um all right so how do we uh how do we get an encryption

scheme uh from uh from uh uh such uh from from this from this lwe structure um so uh well this is uh I guess based on regev's uh um um reg's first uh first paper and also on this paper by Apple bound cash P andah High uh that gave an extension that will be very useful for us um so as you would guess the public key is just going to be my Matrix a um and my secret key is just going to be this secret Vector s how do I encrypt um I take my Matrix

and I multiply it by uh a uniform uh binary Vector so this is just now going to be uh a01 Vector even though we think about it module Q it's still only going to contain zeros and ones um and it's going to be uh it's going to be M dimensional so uh I multiply uh on the right here um and I add some uh other value Y and this value is actually going to be the encoding of the message so here is where the sort of message that I'm encrypting going to come into the play

not going to tell you how exactly at this point um so I'm taking this a r plus Y and I'm get my I get my Tex so my my cyhex is this uh uh is this C suby and to see why this is a secure encryption scheme well in the eyes of of an attacker who doesn't see the secret key only the public key this Matrix a is just a uniform uh is just a uniform matrix it's computationally distinguishable from uniform and had it really been a uniform Matrix we get from the from the leftover

hash Lemma that uh a * R um even given a is going to be statistically close uh uh to uh uniform and independent of of a and therefore um um we we can conclude that uh the The Joint distribution of the cipher text and the the secret the public key and the cipher text regardless of what this Vector Y is uh is going to be computationally indistinguishable uh from from being jointly uniform so long as m is large enough um and this is uh the and this is going to be uh this is going to

give us security uh for for this scheme all right good so we have a secure scheme but sort of we kind of want to also be able to decrypt uh so what happens when we try to decrypt these Cipher text so um hopefully this uh sort of Lego uh uh um presentation uh uh sort of hints that the way to the Crypt will be to just take the secret key and multiply it with uh with the cipher text if nothing else just because the dimensions match um so what happens what happens when we do it

we take the secret key times the cipher text well what is the cipher text it's a * r + y so I'm taking the secret key and multiplying it by a * R so we know that s * a is this small noise Vector so when we multiply by R we're going to get really the inner product of uh uh the vector Ada and the vector R both of these are small uh are are small vectors AA remember we said that it's uh uh the absolute values are at most Alpha time Q and R we

just chose it to be 01 so it's really uh it really has short entries so we're going to get something that is pretty small it's going to be a noise value but the second part perhaps um more interesting is uh we we uh again we take s times the the cipher Tex so this part we took care of um but now we have the vector Y and what we get is the inner product of our secret key and this uh encoding of the of the message Y and uh what you get is sort of an

approximate way uh to encrypt this inner product of secret key * y for any y that I want and I can do that without without knowing s itself so I can actually encrypt some uh function of the secret key without knowing uh without knowing what uh uh what the secret key is um and U okay so uh this see perhaps seems weird and um for one thing you'd want to perhaps see uh how you can just encrypt messages zero and one um so let me show you like a very rudimentary way to do it so

let's just think that when I want to encrypt a zero I'm just going to take this Vector y to be zero and when I want to encrypt a one I'm going to take this to be a completely random Vector in that case when I try to decrypt um if y equals to zero then I'm really only going to get this small noise uh but if Y is uniform then with very high probability I'm not going to get something that is small of course there are much better ways to uh to encrypt with the scheme but

I don't want to get into that we'll see uh a cooler way to encrypt with uh with additional properties um but this is sort of the basic uh uh encryption scheme that uh that that we're going to use and actually we're going to generalize it a bit because if we can encrypt with respect to vectors uh why not do it for matrices so um what we're going to do is actually take this these y's instead of being uh just the vectors we're going to sort of expand them to matrices um so to encrypt um our

encryption is just going to look like a bunch of the previous encryptions so we're going to get a cipher text which is a matrix but each of the columns of the Matrix is just like a column uh Cipher text like we saw in the previous slide so instead of multiplying by one row Vector that is 01 we're going to multiply by capital N such uh such vectors so we're going to get a * R plus some Matrix Y and now um our message is really needs to be our message needs to be encoded uh in

uh in this uh uh small n + 1 * capital N uh size Matrix so if you were wasteful before just sort of encrypting one bit in a vector now we're going to be even more wasteful and just uh uh encrypt uh uh in order to improve one bit we're actually going to have this entire uh Cipher text of uh uh um uh Cipher text this Matrix of a cipher text but so this this uh uh I claim is going to be a step in the right Direction because in the end we want to uh

implement this approximate igen Vector method and for that we do need our Cipher text to be uh to be matrices all right so uh the decryption is going to work in exactly the same way uh we're just going to get instead of just getting one entry we're going to get a vector of entries Each of which is a decryption of the respective column so I'm going to take s * Cy and what I'm going to get is this noise Vector Ada time R and again this Ada is is short and this r a matrix of

01 entries and therefore this is just going to be uh a vector of small entries this is our noise Vector plus uh Vector s times uh times The Matrix Y which which encodes the message all right good um how do we use this this to get the approximate approximate igen Vector well we need two things so first of all we need to choose y as a function of our message M um in a way that will allow us to do all these uh I igen Vector tricks um so I claim that this is going to

be the easier task or I guess um this is going to be the task that we're going to see second uh but first I want to sort of handle this uh trying to get the entries to be small so remember that we needed our our scheme to have small entries uh in order for the for this noise when we do multiplication to not grow by too much so um what in in in this uh in this uh particular scheme that uh that that we're thinking about this uh Cipher Tex Matrix actually looks like a uniform

Matrix in zq so obviously it's not going to have uh it's not going to have small entries so we need to come up with a way to take matrices that have large entries and make them into matrices that have small entries um and the way I usually describe it is uh the second stupidest thing that you can think of um so the first stupidest thing uh um is is to well if I want to have take a big number and making it to a small number well the first stupidest thing would be to just scale

it down uh but this doesn't work in this case because in a sense scaling down is equivalent to doing nothing um but perhaps the second stupidest thing would be to just take this number and break it into uh um I guess bin its binary uh it's binary digits uh each binary digit is small so everything is small so uh this is exactly what we're going to do uh we're going to uh take this uh Matrix uh this this Cipher Tex Matrix that we started with and break each of its entries uh into uh its uh

its binary representation so let's take an example think about the Matrix 3514 mod 8 um and the binary uh the binary decomposition of this Matrix well uh uh three is just 011 1 is 0 01 uh five is 1 0 1 and four is 1 0 0 um done I got a matrix well it's kind of bigger than the uh or taller than the original Matrix uh but maybe that's okay um and it only has small entries uh so uh this is uh this is what we wanted um but on the other hand this is

the second stupidest thing that you can you can come up with you lose all the structure that you had here I mean all these all the all the U linear algebraic structure that you had seems to have been gone um what what does it mean to take uh you know this secret VOR s times this bit Matrix that's kind of weird so um the the cool thing about this is that even though this uh operation is uh um in particular nonlinear the inverse of the the inverse of this uh I claim is is going to

be a linear operation and this going to be extremely useful so uh I claim that giving this uh bit DEC composition of C I can actually multiply it by some uh some red Matrix and get uh and get C back um and uh just maybe take a minute to think about how uh how you do it how do you sort of take the binary representation and convert it back into the number this is really a linear operation so this Matrix is just going to look like you know the powers of two for 4 to1 uh

4 to1 on the diagonal and zero uh uh every everywhere else um so uh this is uh um often referred to as the gadget Matrix and sometimes you change the orders of the the orders of the columns and this this still works it just corresponds to different binary representation um so uh um when you when you take this binary decomposition and multiply it by the gadget Matrix you really get your original Matrix back so this may not be as stupid as as as we thought um um because the the structure the linear structure doesn't really

go away uh and just to sort of emphasize uh that uh this this operation is really sort of the inverse of a linear operation this is usually denoted uh by G inverse of C so G is a matrix uh but G inverse is is a function so uh g g inverse is like an operator a nonlinear operator that works on matrices but uh its left inverse is just uh just a matrix and this notation is going to be extremely convenient uh uh as we will see in the in the next talks so um what we

have here is that well if we if we thought about uh uh trying to decrypt a cipher text a normal Cipher text so taking s * C then this is exactly equivalent to taking s * G so s s * the gadget Matrix times G inverse of C so in a sense we have a linear way to sort of treat these binary decompositions of uh of Cipher ttic um good so this uh uh so this problem is is solved we are able to represent matrices in in uh uh in a short way um and just

to sort of uh uh Point your attention I can generate this gadget Matrix for any uh um uh um for any number of of rows that I want and the property that it's going to have is that if it has uh K rows then it's going to have K * log Q columns um so the proportions are sort of uh um kept um so uh now uh um let's let's actually see this uh the final encryption scheme so remember our public key is this Matrix a which is wide and short our secret key is is

a vector uh and we have this property that s * a is is short is a is a short Vector Ada now to encrypt to encrypt a message M with Randomness R so this R is just going to be this big 01 Matrix that we saw before what we do is take a * R Plus M * G so my y Matrix The Matrix that I that I was alluding to before is really just taking the the uh my uh message M and multiplying it with uh the gadget the gadget Matrix uh G um and

uh this is going to be uh so the dimensions are going to be really the same as the dimensions of of the gadget matrix it's going to be n + one by capital N which is n+ one uh log Q all right so um um why why does this work why is this why is M * G the right choice uh for uh for the for the Matrix Y for the encryption well what we have for this types for this type of encryptions is that um when I take when I sort of take my Cipher

text and uh um multiply it by the secret key which is going to be an essential part of our decryption process what we get is a small Vector e like we saw before this is what you always get and then you get uh um s times The Matrix Y which in this this case is the same as the message M which I can move to the side Time s * G and perhaps at first glance it's not clear what's the igen vector here and it's not clear what's the where's the short Matrix but I can

actually sort of present it in a slightly different way um so s * C is really just s * G * G inverse of C uh which is uh um we just saw that and we get that SG * G inverse of C is small noise plus M * SG so if you want to sort of go back to this uh to the igen vector uh intuition then you can think that s you can think about s * G as being an approximate IG Vector for this G inverse of C Matrix which is both going

to be uh Square now because well the proportion magically just uh just match when you take G in it's not magic that's it has to work uh the the the G inverse just sort of makes this makes the cipher text Matrix uh Square so uh it's going to be square and it's going to have an approximate uh uh igen Vector which is s * G but actually this intuition that was uh pretty useful in getting us to this point uh we're really we can abandon it uh from this point and on and all that I'm

going to care about is that I'm getting this uh encryption scheme uh for which um this invariant holds so um my Cipher texts are these matrices such that s * C equals m * SG plus small noise and now let's see how to do uh um well first of all decryption so uh I didn't I didn't show you how to decrypt these uh uh how to decrypt these Cipher text um it doesn't matter that much but uh let's let's see it anyway so in order to to cryp the cipher Tex C I'm going to compute

s x c it's going to give me this thing but in order to extract M I'm going to do this uh this little trick um I'm going to multiply by G inverse of this Vector what is this vector well u n plus one is just a unit Vector it's 0 0 0 0 and one in the end um and I'm going to and I'm just multiplying it by the scalar Q over2 um so a nice property why this why do why did I choose this Vector so perhaps you remember ages ago when we uh sort

of defined this this Vector s we had a slightly shorter s and we added one in the end this means that the inner product of this U with our Vector s is going to be one so this is a vector that is uh uh sort of the right inverse of our secret key um so what happens when we do this multiplication well um we get e times that the noise e times this G inverse of something we know that this is kind of small so we take a small noise multiply by G inverse that's fine

um and this is going to be plus M * m * SG time this G inverse we know that G and G inverse go away um so at this point this this this becomes sort of this is where the fun happens whenever we see G and G inverse we can just sort of remove them uh remove both of them and what we get is something small plus Q over2 * m times inner product of s and U which is just one so we can just remove it so what you get is well if m equals

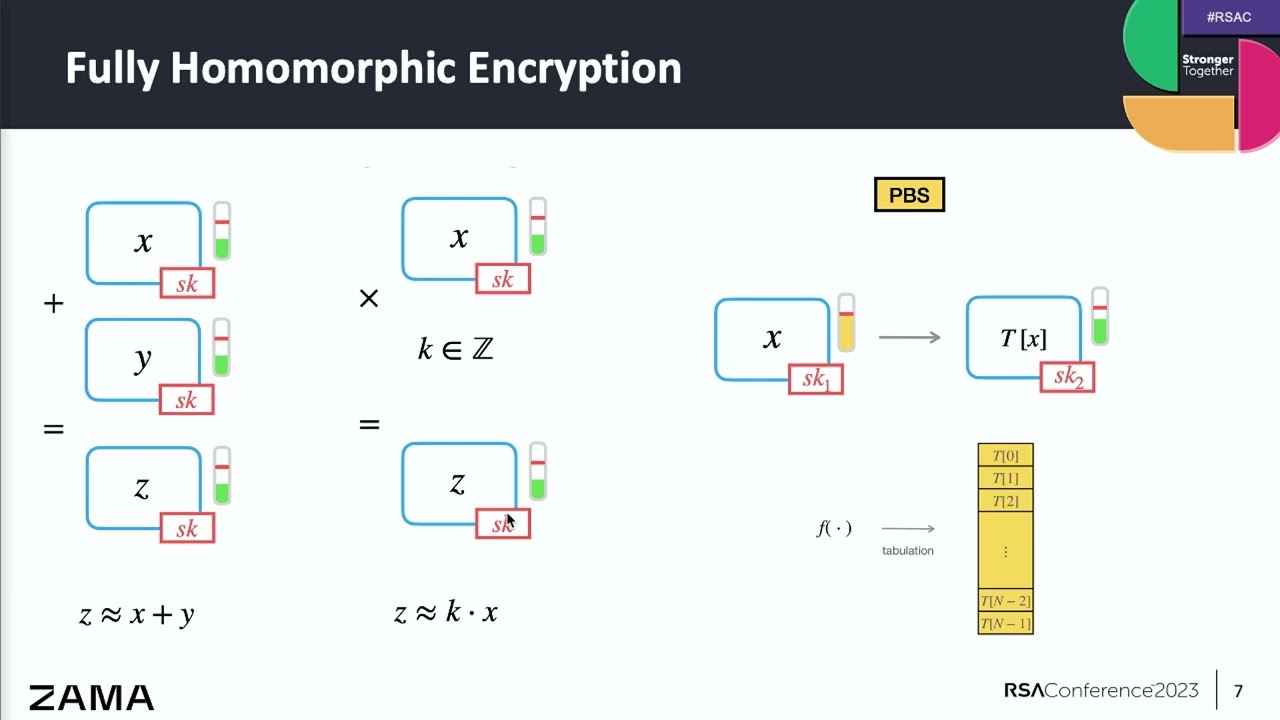

z then you get something really small and if m equals 1 then you get Q over two which is really sort of the largest element mod Q Plus something small so it's very easy to distinguish these two apart and this is this is how the scheme is is decrypted um um and now let's see how to do uh how to do uh homomorphic operations so again the sort of the invariant that we want to keep throughout the homomorphic evaluation is that we want to have Cipher Tex such that s * C equals e plus MSG

uh so so uh so long as this holds we can decrypt that's that's what we saw as long as this holds and the noise is is small enough so um in order to do homomorphic multiplication what we're going to do uh is we're going to take uh uh we have C1 and C2 and we take C1 now we don't multiply it by C2 just the dimensions don't work uh so uh we take C1 which kind of looks like this and multiply it by G inverse of C2 which luckily uh both has the right dimensions so

it's a square Matrix and it has short entries which is going to be very useful and let's see uh that uh that we get this invariant that we started with so we have S * C C1 * G inverse C2 s * C1 is just E1 + M1 SG get gets multiplied by G inverse of C2 um so we get E1 * C2 so G inverse E2 sorry so E1 is uh uh is going to be fairly small hopefully uh and G inverse is just going to be a 01 Matrix so we remain in the

pretty small domain um times M1 SG * G inverse of C2 so uh um now the way the way that the scheme the scheme had been constructed sort of G sort of gives us that this uh uh s * g s * G which just seemed destructive now just cancels out with this G inverse and what we get is uh E1 G inverse E2 plus M1 s C2 so G and G inverse goes away um s * C2 we know how to we know how to expand it further um we just have uh M1 *

E2 plus M2 SG that's again coming from up here um and uh uh so just opening the parentheses and putting everything together well we see that we have M1 from here M2 from here SG so we have M1 M2 SG so this is good this is the the output of the multiplication operation now what do we have is the noise well we have uh uh M1 * E2 that comes from here so this is is going to be E2 is kind of small and M1 is 01 so this remains pretty small uh but this was

that was the part that we uh sort of were were pretty relaxed about also from before the the what used to be the bad part we used to have E1 * C2 here in the sort of naive uh naive approach but now we have E1 * G inverse of C2 um and this G inverse really has just small entries um and indeed this uh this new noise uh is going to be uh um is going to be pretty small we can bound it relative to the noises that we started with um so this is how

you do uh uh this is how you do multiplication so if you didn't sort of follow all the uh specific derivation uh the multiplication is defined like this and when you sort of open everything everything up you really get uh the same invariant that we started with except the noise is going to be this E1 G inverse E2 plus M1 uh M1 E2 and I'm almost done um so I just want to the first thing I want to point out is that um I did multiplication but actually doing an end is is just as easy

uh we just take uh the the this gadget Matrix Matrix G minus the multiplication so it's it's not hard to verify that taking G minus cyppher Tex is equivalent to uh uh making the message into 1 minus M so uh one minus multiplication gives us uh gives us nand and this whole thing works exactly the same way in fact the noise really is is uh the noise expression is the same in in both cases this G inverse is just going to affect what what happens here um so um what we get is that when we

do multiplication when we do an end the basic operation for for our circuit um our noise is going to grow as follows so I'm I'm giving a very loose analysis here you can be you can be much uh much tighter and get uh somewhat better uh uh get some some a somewhat better expression um but uh um and I see that I also swapped E1 and E2 here I'm sorry so we have E1 that gets multiplied by this uh by this G inverse let's just say that uh uh this uh um this grows the norm

by a factor of n the dimension of the Matrix uh on average is going to be much better um and we have this uh should be E2 times uh times M1 and M1 is just 01 so in the end if we started with uh some E1 E2 with Norm Norm E1 and Norm E2 what you get in the end is at most an increase of an N plus one factor in the uh uh in the amplitude of the noise um and you know it's is it good or bad well depends on your perspective uh it's

not as good as what we had for addition which was just a constant uh constant increase but the on the other hand it's much better than what you'd get trivially and in fact it's good enough to get uh uh to get homomorphic uh uh to get um non-trivial homomorphic capabilities um and I just want to point out that um this this noise expression it's uh perhaps very strange but one property that that we'll see that uh is going to become very useful is that um these e this this is actually a linear operation on the

original noises on E1 and E2 and linear in the sense that if I know uh the cipher text or the cipher text C2 and the message M1 then I sort of know how the how my old noise is going to progress into into the new noise and um well if you know the message then there's not this doesn't make much sense in terms of homomorphic encryption but we'll see that in other cases it is going to uh sort of make sense to know both the cipher text and the message but actually not the noise uh

so this is sort of just a preview um but uh just to wrap up um we showed how to do one gate uh if we want to evaluate an entire circuit then with each level of the homomorphic evaluation our noise Grows by a factor of this n plus one say and when we evaluate the depth d circuit um we get a noise growth of something like uh n to the power d and n is like a polinomial in our security parameter or size of uh size of the keys of our scheme and so forth um

and uh so in order to make this decryptable we need the amplitude of the noise to be much much smaller than uh much much smaller than Q or at least uh somewhat smaller than Q so we need this Alpha to be really small to be something smaller than 1 over n the D so it grows exponentially with uh the depth of the circuit that we want to evaluate um and again whether this is good or bad is is uh a matter of perspective it's the almost the best that we can get um and it's good

enough to get uh uh to get uh um practically any notion of homeomorphism that we want um so just to to wrap up I'm a little behind I see uh we showed the encryption scheme uh the way you encrypt is you take the public key Matrix a multiply it by 01 Matrix R plus mg this invariant is that uh when you take when you take your secret key time C you get noise plus message time SG um uh to do homomorphic homomorphic evaluation I just uh um take two cyhex C1 and C2 do the binary

decomposition and uh and multiply if I want n i take G minus this whole thing and this just gives us homomorphism uh we only we only saw how to do bound and depth because at some point your noise is going to grow too much and you're not going to be able to decrypt uh this is solvable but not in this talk so one way to do it is to sort of uh choose the parameters appropriately so if I know D ahead of time then I can choose the parameters for this lwe instance so that the

scheme is secure under uh subexponential uh approximation to Lotus problems so fairly strong assumption uh Gentry presented like a great idea uh called bootstrapping so the bootstrapping procedure actually allows you uh to get uh to get a scheme for for any depth without compromising on the um uh on the parameters of the L of the lwe instance uh so these parameters are not going to grow but something else will grow the size of the of the public key uh but in a way that does not affect the hardness um and actually using this approach uh

you can actually get homomorphic encryption based on a much weaker assumption namely uh the hardness of approximating lest problems to within a polinomial um a polinomial factor in the dimension um and actually but still there's this annoying order of quantifiers that I need to know the depth and only then I can give you a scheme uh and again this is shown by Gentry that if you combine this bootstrapping with an additional assumption which we don't know if it's true or not it's the circular security uh of uh of this of this encryption scheme so this

is an additional security requirement of the scheme then you can really get just one scheme uh that works for any for any depth circuit and this will really give you the full-fledged uh fully homomorphic encryption um so really I'm I'm already behind uh let's get Danielle to to tell us about signatures [Applause]