hello I'm aliser donalson I'm a lectorate at the department of computing at Imperial College London and this is the second of three lectures I'm recording about GPU verify a technique and tool for analyzing GPU kernels which we've been developing as part of the carp project in this lecture I'm going to start digging into the technical details of how GPU verify Works showing you how a GPU conel is translated into a sequential program to be analyzed so this is really the verification method before I start talking about the details I just want to say there's actually

a lot of exciting work about GPU verification and Analysis going on at the moment so the University of Utah the group led by Ganesh kapalak chrishan and have some really exciting work going on they were really the Pioneers in this field with their pug tool which was published in 2010 and pug and GPU verify share many similarities including the reduction to a pair of threads that I'm going to show you during this lecture so I want to be clear that some of the things I'm showing you here we use them but they're not necessarily our

original ideas they're things that have um been pioneered by others and extended by us there's some exciting work from the University of tenta also part of the cart project on permission-based separation logic um as a means for verifying GPU kernels and then there are a couple of techniques one from Utah and one from colleagues here at Imperial on analyzing GPU kernels to find bugs using Dynamic symbolic execution and finally there was a paper at last year's PDI conference about combining Dynamic and static analysis to prove race freedom of GPU kernels so if you would like

to find out more about these papers you could go to the GPU verify web page where there will be links to all the work that's going on in the community about GPU verification and please let us know if you have any more work of your own that you would like to share so what GP verify does is it actually focuses database analysis on barrier regions based on the observation that all threads executing a kernel and by the way during this lecture I'm going to be thinking about threads that are in the same group rather than

threads in different groups the tool and technique do handle the Intergroup case but for the purposes of Simplicity here I'm not going to go into the details of that so threads in the same group are always going to be executing between some pair of barriers so say we have a first barrier and a second barrier and a barrier-free region of code then at any point of execution threads are either before the first barrier or after the second barrier or they're in this barrier free code region and in particular it's impossible for there to be a

data race because of one thread executing before the first barrier and another thread executing inside this region of code because the barrier actually means that this is impossible the threads have to get to the barrier and then all proceed past the barrier of course this is a little bit more complicated if barriers are nested inside loop and our Tech does handle that in full but I'm not going to go into the details of that right now so a race can only occur due to two statements in the same barrier region and this greatly simplifies race

analysis we don't need to consider arbitary pairs of statements in the whole kernel we can actually consider pairs of statements in a single barrier region so this is immediately something that gives us a handle on the problem we're trying to solve all right so the key thing here is that data Ras analysis is restricted to barrier regions this doesn't help too much though because if we've got n threads and we have K statements in a barrier region so here we've got statements S1 S2 up to SK Then There are a large number of possible thread

schedules for this barrier region if we assume that these statements are Atomic statements so actually the total execution length of any interleaving is going to be n * K there are n threads that each have to do K things so in total there are going to be n * K things that happen how many way can we um inter leave these things well thread one is going to execute K statements and there are n * K choose K choices for the way thread one could execute these statements and then thread two is going to execute

n statements and there are going to be n minus 1 * K choose K choices for these because K of the positions in this n * K sequence have now been taken by thread one so they're n minus 1 * K positions left thread two you could take K of these so there are a large number of choices for for these k statements etc etc okay I'm not terribly good with combinatorics but the number of possible thread schedules is in the order of n to the power K which is going to be enormous if the

barrier region is got many statements in it so although we've restricted attention to this barrier region which is better than focusing on the whole kernel there could still be many thread schedules to consider within this barrier region do we really need to consider all of the these thread schedules to detect data races well actually the answer is no it suffices to consider just a single thread schedule and in fact it can be any thread schedule we like so GPU verify relies on this observation which I'm going to justify to you in a minute and this

is an observation that has been made independently by several researchers it's used in a variety of the techniques for um analyzing GPU kernels and also in various methods of doing partial order reduction for concurrent software there are similar observations to do with if you guarantee detecting data races you can relax the number of schedules you need to consider so let me explain this in our context suppose we have a barrier a and a barrier B and some barrier free region of code between A and B what we could do is we could just let thread

zero run all the way from A to B we could log all of the accesses thread zero makes so we know exactly where thread zero read from exactly where it wrote to then we could run thread one all the way from A to B and we could log all the accesses thread one makes and furthermore we could check these accesses against those of thread zero and if we find that thread one reads or writes uh from for or two a memory location that thread one wrote to then we can aboard okay and similarly if thread

one is done a read we can check the thread uh if thread zero has done a read we can check that thread one doesn't right there then we can run thread two from A to B we can log all thread 2's axises and we can check those against both thread zero and thread one and we can continue this process and finally we can run the last thread thread n minus one from A to B we can log all the aises it makes and we can check that they don't interfere with any of the axises made

by preceding threads if you think about this because we're going to abort if we detect a data race then either there is a data race that can occur between two threads in which case the first thread will log its accesses and when we check the second thread's accesses we will find this database or there can be no databases okay in which case there's actually no way the threads can influence each other between the these uh these two barriers they can only influence each other by the actions they make between the barriers if there is a

data R so for instance if thread five was going to write to some shared variable and thread three was then going to read that and make a decision based on its value that would constitute a data race between these threads and we would abort so if there are no data races actually this schedule will we run thread zero all the way then thread one all the way then thread two the way that schedule is just as good as any other schedule the state of the kernel will be the same once we get to barrier B

no matter which schedule we choose so GPU verify exploits this trick it in fact doesn't take this schedule of running thread zero all the way then thr one it uses a different schedule which I will come to in a minute so this is really good because it completely avoids reasoning about interleavings and in fact it lets us think about the GPU kernel as if it were a very long sequential program where we serialize the execution of the threads so we have a long program because there are many threads but we don't have to consider all

of these interleavings so because we can choose a single thread schedule in fact if there are K statements in our barrier region we can reduce analysis to a sequential program representing this barrier region that has n * K statements one statement per thread okay I mean one for every K statements we have a copy of those statements for every thread this is good because we're back in the the realm of sequential program analysis which has been studied for many years in which we've made great progress as a community but in practice as I showed in

the previous lecture we might have a GPU Cel that runs thousands of threads so we're going to get a very long sequential program which is going to be intractably large to analyze so what can we do about this can we do better actually yes we can what we could do is we can choose a pair of threads I and J in the range of thread ads and then we could consider this barrier interval barrier a to barrier B and we could run thread I where I is just some thread all the way from A to

B we can log all of the axises I makes and then we can run thread J all the way from A to B and we can log well we can we don't need to log thre J's axises we can we can simply check that the aises thread J makes don't interfere with the axises that thread I makes and we abort if we find that there is a data Ras if there's an interference between the um the rights and reads of thread J with respect to the rights and reads of thread I if you think about

it a data Ras is a pair wise property it always occurs because of some pair of threads it doesn't involve three threads or four threads so there might be a race between Say thread five and thread 17 and if we're going to prove that this barrier region is okay for all pairs of threads that involves considering the pair 57 and if there could be a race between them we will detect it when we consider that pair using this trick here so if we can show that the barrier region is race free for every pair of

threads then we've actually shown the barrier region is Race Free overall the key thing here is that if a race exists it must be because of some pair of threads we're going to consider all pairs I think this is quite a subtle point and this is again a trick that's been exploited by various researchers and by the Pug tool takes a little while to wrap your head around um we do have a formal proof in one of our papers is it sound though well let's think so suppose we've got a barrier a and a barrier

B and we pull this trick we run thread I all the way from A to B logging what it does and then we run thread J and we check its accesses in a Borton race this is okay we can now establish there are no data races between A and B but let's suppose we now have another barrier region B to C and we now want to run this pair of THS from B to C is that okay well actually this is kind of problematic because it's as if we only have these two threads they've updated

the shared State then they synchronize it to barrier and they should now be able to see what all of the threads executed Theo did but we don't have those threads so if we now let I and j keep running they're going to be reading from elements of arrays that would have been written to by other threads but they're not going to see the changes that those other threads would have made so in fact this is complete nonsense so this is not sounded it's as if thread I and thread J were the only threads run in

the kernel we're not going to see the effects of other threads so a way to get around this is to make the shared State abstract so we say that when you read from the shared State you get some arbitary value back and this arbitrary value reflects the fact that other threads could have manipulated the shared state in ways that are not visible to to the two threads that are executing and a very simple here uh a very simple idea here is to Havoc the shared State at every barrier so we make the shared State arbitrary

at every barrier and this is what CP verified does or something even simpler which I'm going to show you later in the lecture is just to remove the shared State completely and when you read into a variable you Havoc that variable you make it non-deterministic and when you write into the shared state that could just be a no up because there is no shared state so yeah like I said an even simpler idea is to remove the shared State completely I'm going to talk quite a bit about this operation haviing so if x is a

variable Havoc X means set X to an arbitrary value if you like you can think about it as randomizing X although Havoc is operation that is performed in a verification context it's not something you would do in a running program so I prefer to think of bit as making X arbitary considering all possible choices for X so the GPU verified Techni and Tool exploits the any shedule will do trick the two threads will do trick and also this shared State abstraction to make the two threads trick sound to compile a massively parallel kernel K into

a sequential program P such that roughly speaking and I will explain later why this is only roughly speaking if we can prove that the program p is correct and by correct I mean that no assertions can fail in P then we know that the program K the the massively parallel Kern or k is free from databases and because we have a lot of techniques for analyzing sequential programs in principle it should be quite easy to verify P of course these sequential program verification techniques so far from fully automatic so it is a challenge to analyze

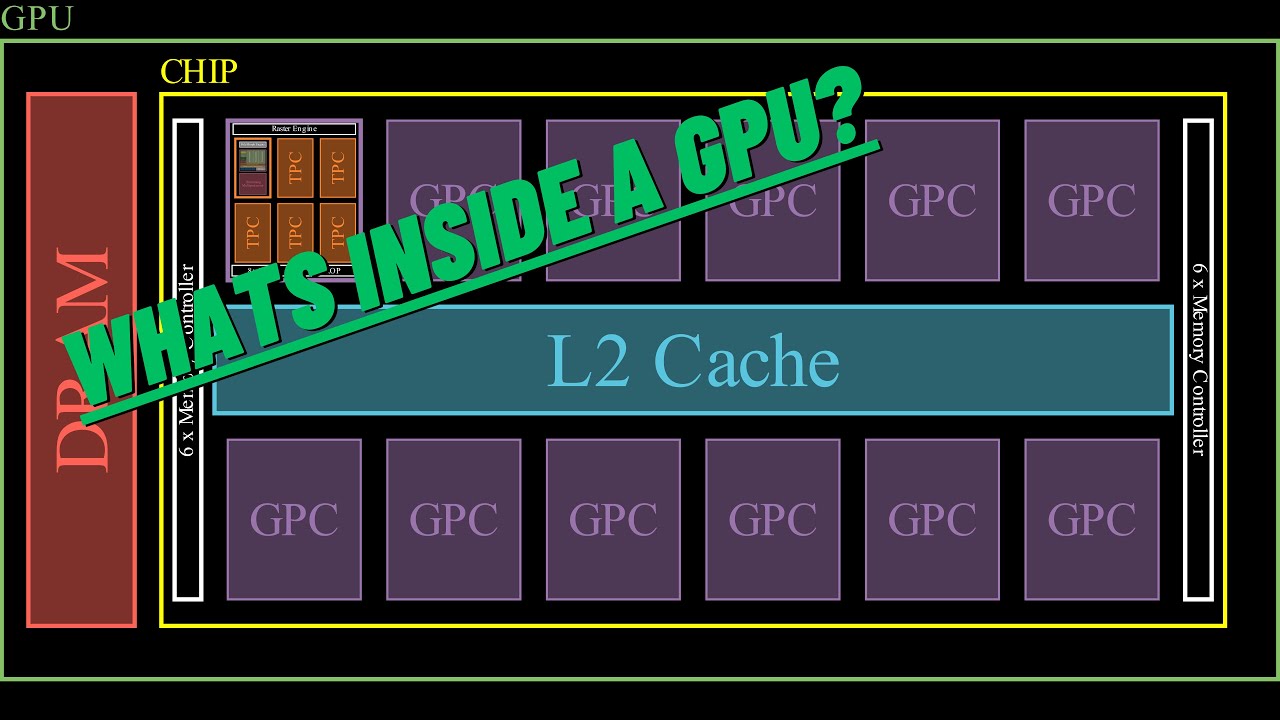

this program but it's much less of a challenge than it would be to directly analyze a massively parallel program the architecture of the tool is as follows we take a kernel either in opencl or Cuda and in future work we would like to consider Microsoft's C++ amp programming model which has various similarities with opencl and Cuda we take one of these kernels and we run it through a front end built on top of the clang and llvm compiler framework so clang and llvm know how to par as openc in Cuda thanks to great open source

efforts by other people and they turn this into an lvm control flow graph and we have a tool that then translates that control flow graph into a boogie program so Boogie is an intermediate language for verification so we have this kernel transformation engine component which we've developed at Imperial which takes the LM control flow graph and produces a boogie program which applies this rewriting of the parallel program to a sequential program executed just by two threads I mean it's a a parallel program executed by two threads with a fixed schedule thus it is equivalent to

a sequential program so we have this sequential Boogie program and we can reuse the existing Boogie verification engine to analyze that program and Boogie calls out to an smt solver by default Microsoft's Z3 smt solver which is what the tool uses at the moment and we're also looking into support um for the cvc4 smt solver as an alternative or a complement to Z3 verifying a sequential program involves reasoning about loops so we have a technique for generating candidate Loop inv variants which I'm going to talk briefly about in the final lecture in this series and

a nice thing about this setup is that the king lovm compiler framework is very widely used extremely widely used I would say Z3 is very widely used in verification and other areas as a constraint solver for many projects and Boogie is widely used as an intermediate language for various verification tools so if there bugs in Kang and lvm it's very likely other people will fix them for us and they're constantly improving similar with E3 and Boogie and the soundness of Boogie as a verifier has been well established for a long time now so the only

magic in our approach is in our kernel transformation engine this is where we have to think very carefully do soundness proofs to make sure that we the argument for why verifying our sequential program allows us to conclude properties of our kernel we have to make sure that this argument is sound and there are not problems in our kernel transformation engine but we don't have to worry about all the many intricacies of building a front end and building a verifier for a sequential programming language what I'm going to do now is go into the details of

how um GPU verifier Works reusing this infrastructure I'm going to show you how this transformation into a two threaded program is actually performed and in the rest of this lecture I'm going to focus on a kernel that doesn't have any conditionals or Loops this is just to make the explanation simple and then in the final lecture I will show how conditionals and Loops are handled so suppose we have a kernel over the following form it's got some number of parameters which include some local arrays some number of local variable declarations and then a sequence of

K statements and let's assume that the statements have quite restricted forms what we can do is we can have a statement X becomes equal to e where X is a local variable and E is an expression only over local variables then we can have X becomes equal to a of e where a is a shared array so an array with a local keyword it's a bit annoying that when you talk about a local variable in the usual sense and then we talk about these local arrays which are really shared among different threads but this is

the open seal terminology which I'm trying to stick to so a is a local array that that is it's shared among many threads and E is an expression purely over a threads private variables or we can write into a at an expression e purely over private variables and we can write the private variable X and we can have a barrier statement so let's suppose we have only these forms of statements so like just to reiterate we have the Restriction here that X is a local variable e is an expression only over local variables and a

is one of the local array parameters where local means shared among threads so this restriction means that a statement involves at most one access to an array a shared array and this is easy to enforce by pre-processing the code so we don't need to force the programmer to actually write their code in this way we can easily um pre-process complex Expressions by pulling out the reads from these expressions and it means that there's no conditional or Loop code which is a a serious restriction this would make the technique not very usable but we're going to

drop this restriction later once I've explained how things work so what we want to do is to translate our kernel into a sequential program that models two arbitrary distinct threads executing detects data races and treats the shared state in an abstract way to make this sound so we'll call the original GPU kernel K and the program that we're generating we'll call P so first we want to introduce these two arbitrary threads so let's say that the kernel okay has an implicit variable tid that gives the ID of thread so in in reality this would be

one of these built-in functions like get local ID or thread idx dox in Cuda and let's suppose that n is the total number of threads in our sequential program we introduced two Global variables that are ins we introduced the variable tid dollar one and tid dollar two so I use dollar one and dollar two as a suffix to a variable name to say whether the variable belongs to the first thread or the second thread now we're going to consider two arbitrary threads they don't have IDs one and two necessarily they could have IDs Z and

47 but I'm always going to call them the first thread and the second thread so just to be clear by tid dollar one I don't mean the idea of thread one I mean the idea of the first thread that we are considering in our analysis so we introduce these two variables to record thread ads and preconditions on the Kernel so first of all a precondition saying that tid one is in the appropriate range of threads so it's non- negative and it's less than n and the same for for tid two and also precondition saying that

tid one and tid 2 are different from one another so that they model distinct threads okay otherwise the threads arbitary so we're not imposing any ordering on tid one and tid two we're not saying tid one is less than tid two the threads are arbitrary distinct threads within the thread range then we do some instrumentation of our arrays in order to perform race checking so for every local array parameter a in the kernel we introduce four Global variables we introduce a Boolean called read has occurred a this Boolean is going to be set to true

if we are logging a read from the array a and similarly a Boolean right has a cur a will be set to true if we're logging a right to the array a and then an INT read offset a such that if read has occurred a is true this variable read offset a this contains the offset that was read from and an in right offset a such that if right has a code a is true right offset a has the offset that was written to and I will explain later how these variables are manipulated to perform

race checking and then we also introduce four procedures log read a which takes an INT offset and it logs the fact that a read from a at offset was made log right a which is similar and then check read a which takes an offset and it checks whether at the time this procedure is called it would be okay to read from a that this would not cause a data race similarly check right a and I will show later on how these procedures are implemented to perform race checking and then we actually get rid of the

parameter a in P because what I'm going to show you here is how we can completely abstract the shared state so this array a is actually removed totally so to illustrate what I've shown you so far suppose we've got this form of a kernel kernel void Fu it has a local in point of parameter a so this is an array a local in point of parameter B another array and an INT ID DX so what we would do we introduce tid one and tid 2 then we would introduce a whole bunch of instrumentation variables read

has occurred a write has occurred a read has occurred B and write has occurred B so these are booleans to track whether we are logging a read from or right to either A or B and then read offset a write offset a read offset B write offset B which are ins tracking the offsets that were read from or written to we would also introduce a bunch of these log and check procedures which I'm not showing you on this slide and then the the kernal function fu is replaced with a regular function Fu where we have

the idx parameter that was a parameter to the original coronal function but these array parameters A and B these are gone we're not representing them in the sequential program and then we have some preconditions so we have preconditions saying that tid one and tid 2 are distinct and are in the appropriate range the next step is to duplicate local variable definitions in the kernel so if we have an a declaration of a local variable like int X then what we generate in the two threaded program are two declarations int X1 and int X2 so here

we're saying that both of the threads under consideration have their own copy of the local variable X so X1 is the first thread's copy X2 is the second thread's copy a non array parameter like idx in our example also gets duplicated because every thread has its own copy of the parameter and although those will initially have the same value it's possible for a thread to update its parameter if it wants to and this update is a purely local update and but we need to record the fact that these parameters initially had the same value so

if x was a parameter then we have two parameters x one and x do2 and a precondition saying that these parameters are equal so requires X1 equals X2 and now I'm going to show you some notation if we've got an expression over local variables and thread ID e then if we write e do one what we mean is e where every local thing like a variable or tid is replaced with the same thing dollar one so for example a loable x would become X doll one if it occurs inside e and we make e do

one and the same thing for E do2 this is actually a very simple idea it's best explained with an example suppose we had the expression e which is a + tid minus X then e do2 would be a do2 plus tid dollar2 minus X do 2 so we're just propagating dollar two into this expression so this dollar two dollar two arising of an expression allows us to actually transform the statements of a kernel into a round robin schedule where the first thre exive statement then the second thread execut the statement then the first thread execut

the statement then the second thread executes the statement and so on so so this is a little translation scheme if we've got an update local variable X becomes equal to local expression e this is Trivial to translate we say that the first thread does this update to its x with respect to its take on E so we have X dollar one becomes equal to e do one and then the second thread goes we do X do2 becomes equal to e do2 so this is a very straightforward transformation then if we have a read from the

array a at expression e into local variable x what we do is we first call this procedure log read a and in our race checking instrumentation which I'll come to in a bit we're going to consider the first thread to be the logging thread and the second thread to be the checking thread so because the first thread is the logging thread we call log read a and we pass in e do1 as the offset that was read from then we call check Reay and because thread two is the checking thread we pass in e do2

as the the offset that is read from For Thread two to be checked and then because we don't actually have a anymore in our sequential program we need to reflect the fact that X has changed but because we've disgarded any information about a we need to consider anything that could possibly have been in a so what we can do is we can simply Havoc X for both threads so we can Havoc X for the first thread and we can Havoc X for the second thread to say that X becomes arbitrary just as in aide it

would now be legitimate um to assume that X1 and X2 have the same value okay if you think about it because we're going to detect data races there's either a data race or they read from the from the array actually no that's complete nonsense because e doll one and e do2 may lead to totally different offsets okay so they're not reading from the same index in a so we have to Havoc X separately for each thread to make it arbitary for each thread so forget what I said about assuming these things are equal afterwards so

this over approximates the effect of reading from a to reflect the fact that a could have changed arbitrarily because there are actually many more than two thre execut in this kernel just to reiterate this because it's an important point we remove the array a so we need to over approximate the effect of reading from a by haviing the receiving variables now let's consider the case where we would write to an array a at offset e and we would write the local value X so what we do here is the first thread is the logger so

we call log WR a with the parameter E1 then because thread 2 is the checking thread we call check right a with the parameter E2 now what do we have to do to reflect the fact that we've written to this array a we don't have the array a anymore we've disgarded it so actually we don't have to do anything when we read from an array we Havoc the receiving variables because anything could be an a and when we write to an array a we just do nothing we don't write because there is no array to

write to if you think about it this is a sound over approximation of the array and then finally we have this barrier statement and we translate this into another called a barrier but later in the presentation I'll show you that barrier is interpreted with a special meaning if we have a sequence of statements we translate the first statement and then the second statement so that's the translation rules let me illustrate them with an example an example illustrating the concepts so far so what we do is we have this kernel void Fu function with a local

int pointer parameter a so this is an array and we generate a regular function Fu with a precondition about tid one and tid 2 and we would also generate these instrumentation variables for a which I'm not showing on this slide and then we take the non-array parameter idx and we duplicate it so we have idx For Thread one and idx For Thread 2 and we don't put this array parameter a into the sequential program version of Fu because we remove this array and we had a precondition saying that initially these parameters idx1 and ID X2

are equal then the local declaration X turns into two declarations X1 and X2 simil similarly for the Declaration of Y now the read from a tid plus idx into x what we do is we call log read a and we take this expression tid plus idx and we dollar one it so we dollar one it to get tid dollar one plus idx dollar one then we call check Reay with respect to the second thread the checking thread and we pass in tid dollar 2 plus idx dollar 2 and now to reflect the fact that we've

read from a into X we Havoc X we Havoc both copies of X we Havoc thread one's copy and thread 2's copy so X is now arbitrary for both threads the right uh then we have another read we're reading from a tid into Y and this is similar so we log read a for for tid one we check read a for tid two and then we Havoc y for both of the threads and finally we have a right at a tid we're writing the expression X Plus y so what we do here is we call

log right thread one is the logger so we are writing to the expression tid so we loger right to tid one thread two is the Checker so we Checker right at tid two and then we don't have to do anything to reflect the fact that a is changed because we've removed a so this example shows how we instrument the program to perform race checking and now what I need to show you is how we actually Implement these procedures log read a check read a etc so that race checking is performed so we have these Global

variables read has occurred a and read offset a and we use these variables to collectively log either nothing if read does Aur a is false we're saying we're not logging a read from a or to log the offset of a single read from a by the first thread so what does this look like okay well if read is occurred is false then we're saying that there has been no read from a in which case it doesn't matter what read offset a is we're saying there is no read and that offset variable it does its value

doesn't matter if read is occurred a is true then we're saying we are logging a read from a and read offset a says the offset of the read that we're logging so this maybe doesn't seem very satisfactory because there could be many reads from an array and we're actually only providing the facility to log a read from the array a right has occurred a and right offset a are used similarly by the way but let me show you how this works the log read a procedure takes an in offset and what it does is it

uses well what it could do is it could just say that we're logging a read so read as a cur a becomes true and read offset a becomes offset but the problem here would be suppose we have had a bad read that's going to race with something later on and then three good reads what we would do is we would call log read a we would log the bad read then we would call log read a and we would overwrite the bad read with a good read and do the same thing again and again and

then we would miss the race between the bad read and a statement later on in the Kel because we've overwritten that read so it's no good to implement log read as shown here what we need to do is something a bit more sophisticated which is we wrap these assignments in a non-deterministic conditional so this star is a non-deterministic expression that evaluates non-deterministically to either true or false so we're saying if star so if we choose to log this read if we don't choose to keep logging whatever we were already logging if anything so now we

are going to if we have a sequence of reads like bad read good read good read good read there's one resolution of an undet termism where we for instance choose to log the bad read and then choose to overwrite this by logging one of the good reads reads and then don't choose to log the other ones and then we won't detect a problem with the bad read because we overwrote the details of that bad read however there is a resolution of nondeterminism where we choose to log the bad read we don't choose to overwrite it

with any of the good reads and then we will detect a problem of this bad read with respect to a future read so this non-deterministically choosing to log something means that at any point we will be logging some read that has happened in the past and if we consider all program executions we will consider all possible reads we could have logged so this race instrumentation this idea of using nondeterminism in this way I think is quite well known in the verification Community but it first was suggested to me by a former colleague of mine Philip

rumor who's now at Upsala University we published some papers together about doing race analysis for the cell processor and we used a similar instrumentation to log data races so thanks Philip for this idea like I said star is an expression that evaluates nondeterministically so we nondeterministically choose to either log this read from a in which case the existing values of readers occurred and read offset are overwritten or we ignore this read from a and keep logging whatever we were already logging if anything log right a is completely similar to log read a and then check

read a is quite simple so we're checking a read from a by thread two because remember thread two is the Checker and the read is from offset so what we do is we assert that if the right has occurred a variable is true I.E if thread one has decided to log a to log a right then the offset we are reading from is not the same as the offset that thread one wrote to so thread one has logged some right in the past to a thread 2 is now doing a read from a and we're

checking that the offset being read from now is different from the offset that was written to in the past if it's different that's okay if it's the same then there is a rewrite data race okay so a read from a at offset by the second R is okay unless the first thread logged a right to a at this offset and this right has occurred a Boolean tells us whether or not thread one did log a right and then right offset tells us the offset of the right that was logged check right is similar but slightly

more complicated because if thread two is writing to offset then it could potentially race with a former right by thread one or a read by thread one so we have to assert that if thread one logged right then the written offset is different from the offset being written to by thread 2 and we also have to check that if thread one logged a read then the offset read by thread one is different from the offset now being written to by thread two so this is similar to check read except there's a little more to check

we have to check for both reads and wres by the first thread and initially we need to say that no reads or writes are logged so for every array we need to insert preconditions saying that read has occurred a is false if a is the array name and write has occurred a is false so we do this for every array so here's an example um including in the precondition we've got the threads tid one and ti2 the thread ads being in range and different we've got the parameter idx being different for the two threads and

furthermore we've got no read having occurred from a and no right having occurred to a by thread one and then this is the the code you before what we can do now is we can restrict this just to consider the log and check calls to see how these calls are interacting because this example is very simple and actually the the look variables X and Y are not relevant because they just get haved okay so let's look at these log and check calls and let's think about what the Conner looks like if we inline these calls

so we've got a log read a with the parameter tid doll1 plus idx doll one so what this becomes is non-deterministic decide whether or not to log this read and if we do decide to set read has a cur a to true and red offset a to tid one plus idx1 then there's a check read a and we're checking thread 2's read so we're saying is it okay for thread 2 to read from tid 2 plus ID X2 so this becomes an assertion that if a right has occurred to a by thread one then the

right offset is not equal to the sum of tid 2 and idx 2 and then we can similarly inline the remaining calls so I'm just taking the implementations of these procedures I showed you a few slides ago and inlining them with the given parameters now the key thing here is that because we are using nondeterminism we ensure that there will be some program execution that will expose a database if it could exist so let's consider for instance the read from a at tid one and the right to a at tid 2 this pair of statements

are clearly race free because ti1 and ti2 are different will a race between these statements be considered yes it will because for example there's going to be a trace that will go through and choose to go in to this non-deterministic branch and log the read from a and then the execution will continue it will not go in here and then it will assert that if a read has occurred from a which it has because we set this to true then the red offset is different from t two and because the red offset was tid one

and tid 1 and tid two are different which comes from the precondition of the kernel then this assertion succeeds so this is an example of a data race being checked and the race is uh well there is no race a potential race being checked and found to not be a race but now let's consider the case where there's a read from a at tid 1 plus idx1 and then a write to a at tid 2 so there is a program path that will go in here set read as a code a to true and set

read offset a to tid 1 plus idx1 then these assertions will pass and there will be a path that doesn't choose to go into these nondeterministic conditionals and then finally we get to this assertion and we say has there been a read from a yes because we set this variable to True here okay well in that case read offset a must not be the same as tid 2 read off say a is tid 1 plus idx1 and we have no constraint on X1 so in fact there will be an assignment to idx1 such that tid

1 plus idx1 is equal to tid 2 for example if tid 1 was Zero tid 2 is one then setting idx1 to be one would cause a data race to occur okay but the key thing is that because the verifier has to prove that the program is correct for all possible executions it will consider all resolutions of non-determinism and the scheme has been designed so that there is a case that will consider every pair of statements so the final thing to show you um for the instrumentation of Loop and conditional free programs is how to

implement barrier and this is actually very simple what we want to do with the barrier is just say that there are no reads or wres live anymore we want to clear them what we could do is we could assign all of the WR has occurred and read has occurred variables to false to clear any assignments where they were made True another thing we can do which is actually very good for technical reasons is we can simply assume that they are false so an assume statement causes execution to block unless the condition is true so any

execution that has gone into one of these non-deterministic branches and set one of these read his accur or right dis accured variables to True will be blocked by an assume that assumes that variable is false however we always guarantee there is one path that doesn't log anything so there's always one path that never sets these variables to true the path that doesn't go into any of the star guided conditionals so by assuming that a read has not occurred for a and a write has not occurred for a by doing this for every thread uh by

doing this for every array we eliminate any path that loged a read or right and essentially clear these sets so for those of you who are really into verification the technical reason why this is nice is that it stops these instrumentation variables becoming part of a Loops modify set simply because there's a barrier inside a loop if we assign these variables to false they would become part of the loop mod set and then when we have a loop invariant um we would need an invariant to recover the values of these variables that's the uh end

of this uh part of the lecture and in the next lecture I'm going to show how barriers are handled and how loops and conditionals are handled