Hi everyone, I'm James Maynard. I'm a professor here at the Mathematical Institute in Oxford, and I work in analytic number theory. Hey, my name is Lasse Grimmelt, and I have the pleasure of being a postdoc here, working in the number theory group with James. So today I want to talk about the progress that we had with Jared on the BFI case, and on considering two different congruences. That was about two weeks ago now. So this is the one with the two different congruences, with the uniformity

in the second congruence? Yes. And then we quickly thought about how our method plays into your amplification setup with the additional congruence, and then last, if there's still time, what I'm doing with Yuri now. Okay, so let's start with the BFI setup. In the BFI case, let's remember that we wanted to parameterize a double coset of matrices, let's say the one with n1, n2, d, where we looked at the g of the form (q1, s2; q2, s1), with, let's say, it's a

matrix with determinant d, and we had some congruence conditions: n1 divides s2 and n2 divides s1. So the main thing I want to talk about today is that we now feel we have a definitely nicer way of thinking about it, which should also make clear that, from the setup, these gcds and such things don't really matter, and how this nicely fits into our new black box. So this is before the Cauchy-Schwarz. Now you do an additional Cauchy-Schwarz, so the set that you're really interested

in is somehow pairs (g, g'), where, let's say, the first coordinate is in the set for n1, n2, d and the second coordinate is in the set for n1', n2', d', and where the left columns are the same, so we have q1 = q1' and q2 = q2'. Yes, so we Cauchy-Schwarz so that the q variables are kept on the outside, so we get the determinant condition twice, we have the left column the same, and we have the divisibility conditions. In the application, d is something like a

(n1 - n2), but morally we just look at the case where all the n's are fixed on the outside. Yes. Yeah, okay, that's just the thing I was checking: d depends in some way on n1 and n2, because obviously it's a multiple of the gcd. Yes. And after this Cauchy-Schwarz, as long as we assume [unclear], it doesn't matter, because they are all fixed on the outside, and it's just a fixed quadruple of all the n's and all the d's which belong to that. And then, in

our black box, the way we write it at the moment, the best thing is to forget the divisibility condition as a set condition and write it as an indicator function instead. Our black box at the moment says that we should not think of it as looking at certain sets; rather we look at the ambient space and have an indicator function which we're interested in, and our method works as long as the indicator function is invariant under the right group action. So what does this mean in

this case? In this case it means we are looking at a weight α on (g, g') which encodes the property that this pair satisfies these congruence conditions. In other words, it's something like 1[n1 | s2] 1[n2 | s1], and then 1[n1' | s2'] and 1[n2' | s1']. I think so. So we talked about this already before; my main point is now that we finally

made all the parameterization explicit, and after we realized that the next step of the parameterization is not really a property of the congruence condition but a property of the ambient space, it becomes clear that no coprimality conditions can destroy our parameterization. So the setup of the method surely works also in what makes the BFI case break. That's the nice thing that I wanted to mention here: after we understood this correctly, we can at least be sure that the application of the method can't run into the

same problem as the BFI approach does, because it doesn't really care about the indicator function. Let me be a little bit more precise with that. So, just to paraphrase what you said, to check that I'm understanding correctly: you're saying that in your specific situation you have this α that has these different divisibility conditions, but any α that satisfies a few simple group transformation properties can be counted? Yeah. If we call this complicated set, let's say, the one with a vector n and the

vector d, where n is all four n's and d is the two d's, then if we want to sum over it, we can look at those pairs (g, g') which are in the support of this function, and then we can ignore the divisibility condition here. So the ambient space, the space that we parameterize, doesn't really care about the divisibility conditions. What we care about is that this indicator function is invariant under the right group action. So we need to find the group action which does that, and

in this case the group action is just this: we take γ in Γ_2 of lcm(n1, n1') and lcm(n2, n2'), where Γ_2, let's remember this notation we introduced, is the group where the top-right entry is divisible by the first and the bottom-left entry is divisible by the second. Then we take integers ν, let's say, which are in n1' n2' Z, and then we have a group action of pairs: (γ, ν) acts on (g, g'). I think maybe red is

good. Oh yeah. (γ, ν) acts on (g, g') diagonally from the left, so γg and, yes, γg', and then via an upper triangular matrix from the right in the second coordinate. And if γ and ν are in those sets, they leave α invariant, because they preserve the divisibility conditions. And our set of g's has the vector d of determinants, d and d', fixed? Yes, that fixes the determinants, and here we act with determinant one, so that doesn't change the determinants in all

of these matrices. Then from the right, if we act by an upper triangular matrix, just multiplying it out you see that, if ν is a multiple, it doesn't change the divisibility of s1', s2', the primed variables. And from the left, on the top right the n1-type variables are preserved, and on the bottom left the n2-type variables are preserved, so it fixes the divisibility conditions. And the gcds between the n1-type and n2-type variables are handled in the ν

action? No, the bad gcds in BFI were cross gcds of n1 and n2', and they are in here. That is exactly why their approach didn't work, because these cross gcds appear in both places, but the way they try to do it you don't see this effect. Here this group acts on this, and our black box doesn't care: the way we have written the main result, it doesn't care about any common divisors between these. And after we have translated

it to the group, the structure of α and the divisibility has completely disappeared, because now the black box just says: given a matrix space and a subgroup which acts on the space and leaves your α function invariant, this is the counting result we get. And at no point in our proof do we need that those two arguments are coprime. So this is just how it works, and this is just what it gives. And when we did it, when

Jared was still here, when we tried it, we always carried around the divisibility conditions everywhere, and it's just unnecessary information. If you realize this in the first step, then it comes down to getting a system of representatives for this space acted on by the group, the double coset space. So the answer will depend very slightly on these gcds, but the key point is that this is all encoded by the group? Yeah, the answer will depend on them slightly. In our black box, and I will

come to that later, in our black box, you remember, there's the R part and the K part, and in the K part you still need to supply some information about what your set does, and there it's not trivial to see. This we have realized. So the gcds are not really the problem. In what we have worked on previously, let me tell you: together with Zar we wrote all of this up, and at least now we have

finally made rigorous the application of the whole method. We have said: okay, this is the counting problem, and then this gives a main term, and we get an error term which is like R^(1/2) K^(1/2). This part we can handle clearly, and we have written it down in explicit terms; but this part we still need to work on a little bit, so there's still something to do, and I will explain this in the next step. Good.
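The invariance claim in the BFI discussion above can be sanity-checked numerically. The following is a minimal sketch, not the speakers' code: it assumes g = (q1, s2; q2, s1) stored as nested tuples, takes γ as a product of the two obvious unipotent generators (so only part of Γ_2 is sampled), and uses hypothetical helper names (`alpha`, `random_gamma`, `act`, `check_invariance`). It checks that α, the determinants, and the shared left column survive the action even when cross gcds such as gcd(n1, n2') are nontrivial.

```python
import random
from math import gcd

def mul(A, B):
    """Product of two 2x2 integer matrices given as ((a, b), (c, d))."""
    (a, b), (c, d) = A
    (e, f), (g, h) = B
    return ((a * e + b * g, a * f + b * h), (c * e + d * g, c * f + d * h))

def det(A):
    (a, b), (c, d) = A
    return a * d - b * c

def alpha(g, gp, n):
    """Indicator of n1 | s2, n2 | s1, n1' | s2', n2' | s1' for g = ((q1, s2), (q2, s1))."""
    n1, n2, n1p, n2p = n
    (_, s2), (_, s1) = g
    (_, s2p), (_, s1p) = gp
    return int(s2 % n1 == 0 and s1 % n2 == 0 and s2p % n1p == 0 and s1p % n2p == 0)

def random_gamma(N1, N2, rng, steps=6):
    """Determinant-one matrix with top-right entry in N1*Z and bottom-left entry in N2*Z."""
    gamma = ((1, 0), (0, 1))
    for _ in range(steps):
        k = rng.randint(-2, 2)
        step = ((1, k * N1), (0, 1)) if rng.random() < 0.5 else ((1, 0), (k * N2, 1))
        gamma = mul(gamma, step)
    return gamma

def act(gamma, nu, g, gp):
    """(gamma, nu) sends (g, g') to (gamma g, gamma g' u) with u = ((1, nu), (0, 1))."""
    return mul(gamma, g), mul(mul(gamma, gp), ((1, nu), (0, 1)))

def check_invariance(n, trials=500, seed=0):
    """Assert invariance on random pairs; return how many sampled pairs had alpha = 1."""
    n1, n2, n1p, n2p = n
    N1 = n1 * n1p // gcd(n1, n1p)  # lcm(n1, n1')
    N2 = n2 * n2p // gcd(n2, n2p)  # lcm(n2, n2')
    rng = random.Random(seed)
    in_support = 0
    for _ in range(trials):
        q1, q2 = rng.randint(-9, 9), rng.randint(-9, 9)
        if rng.random() < 0.5:
            # force all four divisibility conditions, so alpha = 1
            s2, s1, s2p, s1p = (m * rng.randint(-9, 9) for m in n)
        else:
            s2, s1, s2p, s1p = (rng.randint(-9, 9) for _ in range(4))
        g, gp = ((q1, s2), (q2, s1)), ((q1, s2p), (q2, s1p))
        nu = n1p * n2p * rng.randint(-3, 3)
        h, hp = act(random_gamma(N1, N2, rng), nu, g, gp)
        assert alpha(h, hp, n) == alpha(g, gp, n)            # alpha is invariant
        assert det(h) == det(g) and det(hp) == det(gp)       # determinants are fixed
        assert (h[0][0], h[1][0]) == (hp[0][0], hp[1][0])    # left columns still agree
        in_support += alpha(g, gp, n)
    return in_support

# cross gcds are nontrivial here: gcd(6, 15) = 3 and gcd(10, 4) = 2
print(check_invariance((6, 10, 4, 15)))
```

The check runs on pairs inside and outside the support of α; both directions hold because the inverse of (γ, ν) lies in the same group.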

that's the first thing we did um so that yeah here I I think I didn't have any questions for you that was just um like that we worked on that then the next thing is um we WR down um at first teristics what we expect with two different congruence classes um Let me let me write down precisely what I mean by that and that I think Becomes quite interesting if it compiles yes so what we looked at is what they call let me remember special case two was the [Music] case in which the Q variables

are already one, right? I always need to remember the BFI cases. Oh yeah, okay. So we look at the type I sum case, just as a prototypical case in which we don't need to do another Cauchy-Schwarz, and we want to build in an extra variable. So in the dispersion setup, let's say we have a new congruence variable v of size V, and then we sum over q of size Q and r of size R. Let me first write it as a general sum: m of size M, n of

size N, with α_m β_n, and then we have mn congruent to a mod qr, so that's the standard one, and then we put in mn congruent to b mod v, where b is allowed to depend on v and even on r (of course we keep it on the outside; they should be symmetrical). Yes. And we subtract one over φ(qvr), and there are some coprimality conditions which I am not writing. This is just because it's the easiest to write, but I mean from the

method it's somewhat clear that it doesn't really matter if we do an extra Cauchy-Schwarz. So if we can do it nicely in what they call special case 2, where α_m is just one, then it's clear that after Cauchy-Schwarzing there's still something possible. We did the heuristics, and then we got... yeah, let me show how we do this. So we write it as mn... I think last time we talked about it, it was unclear whether you believe that this can

be put in dependent on v and r. Do you still remember what we last talked about? I think last time you claimed that, provided you had some suitably small V, you can let b depend on v at least and make it totally uniform. Yes. And I think you said that this was because in your setup all the uniformity-type issues got pushed onto starting points, and then you just needed to deal with some separation properties of the starting points. Yes.

But I think you never exp... Yes, exactly. And let me swap what I want to do: I want to do γ_q equal to one; I think that's the easier case. Give me one second. So we want to do γ_q equal to one. Okay, so we start with this system of congruences; I just need to remember the right thing we had here. Yes. So hang on, your overall claim is for γ_q being one, right? Or are you trying... No, no, no.

So my overall claim is that in every BFI case we can put in such a variable, with different strengths, and morally it works the same way in every case. In the case of a type II sum I do one Cauchy-Schwarz, exactly how they do it, and then if γ_q is one I don't need to do another Cauchy-Schwarz and it's easier to see; but if I need to do more Cauchy-Schwarzes, it still works morally the same way. Okay. So my claim is it works

always; in this case it's just the easiest to put it in and to see what the dependence is. Yes. So let me think about this. If we do the normal dispersion method setup, and let me do this not too fast, I'm proposing we get the following system of equations. Let's assume we put the n's on the outside and that they have (this is definitely not a necessary condition, but I think it just makes it a little bit easier) no common

divisors. Then I'm saying we have the following system which captures this. Let me see. So we have q2 n1 s2 - q1 n2 s1 = a (n1 - n2)/r. Then we have n1 t2 - n2 t1 = b (n1 - n2)/v, where, let's keep it just as a variable b, and we know it may depend on some other things. Yeah, let me think about this. Yeah, and

then we have r q1 s1 - v t1 = b_v - a. Yeah. And we have that n1 is congruent to n2 mod rv. Let me think about this for a second. So I'm claiming that if I start with these equations and then do the normal dispersion Cauchy-Schwarz, where I keep the m variable on the inside of the Cauchy-Schwarz (I don't double it) and I keep the v variable on the outside, so v behaves similarly to r, that's

why we get a congruence on both of them. Then I'm claiming this is the system of equations, and we wrote down the proof here. Does it seem realistic to you? If v is not there, then... yeah. Is that believable? Yeah, something like that looks right. Certainly if b just depends on v, then I guess you could just put all variables in congruence classes mod v from the outset and then go through the entire BFI work, and you should get essentially exactly what you had before, but you're losing powers

of v, and you lose a lot of powers of v. Yeah. But that at least as a proof of concept should say that, provided you're an epsilon away from the limiting cases, you can handle v being some small power. Exactly. So I totally believe that you should be able to do something in principle fairly uniform. I guess the question then is just that that's a very inefficient way of doing it, because you're losing a factor of v to the 10 or something like that. Yes, and I'm saying we lose a v

to the three or so. Sure, okay. Let me write this one more time here. We combine those, and if we call this one here d1, then what we end up with is q2 n1 s2 - q1 n2 s1 = d1 (just to see the justification of why we lose what we lose), and we have n1 congruent to n2 mod rv, and then we have the condition on r q1 s1. I mean, it's of course all technical, but let me just bring it to this point.
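The system above can be checked by brute force on a toy example. This is only a sketch under an assumed sign convention, m n_i = a + q_i r s_i = b + v t_i, which is not stated in the conversation; with it, the right-hand sides come out as a (n2 - n1) and b (n2 - n1), so the signs may differ from the spoken version. The function names and the small parameters are hypothetical.

```python
def solutions(q1, q2, r, v, a, b, m_max, n_max):
    """All (m, n1, n2, s1, s2, t1, t2) with m*n_i = a + q_i*r*s_i = b + v*t_i and n1 != n2."""
    out = []
    for m in range(1, m_max + 1):
        for n1 in range(1, n_max + 1):
            if (m * n1 - a) % (q1 * r) or (m * n1 - b) % v:
                continue
            for n2 in range(1, n_max + 1):
                if n2 == n1 or (m * n2 - a) % (q2 * r) or (m * n2 - b) % v:
                    continue
                s1 = (m * n1 - a) // (q1 * r)
                s2 = (m * n2 - a) // (q2 * r)
                t1 = (m * n1 - b) // v
                t2 = (m * n2 - b) // v
                out.append((m, n1, n2, s1, s2, t1, t2))
    return out

def identities_hold(sol, q1, q2, r, v, a, b):
    """The three equations and the congruence n1 = n2 (mod r*v), in the assumed convention.

    The last condition relies on gcd(a, r) = gcd(b, v) = gcd(r, v) = 1.
    """
    m, n1, n2, s1, s2, t1, t2 = sol
    return (
        r * (q2 * n1 * s2 - q1 * n2 * s1) == a * (n2 - n1)
        and v * (n1 * t2 - n2 * t1) == b * (n2 - n1)
        and r * q1 * s1 - v * t1 == b - a
        and (n1 - n2) % (r * v) == 0
    )

params = dict(q1=2, q2=3, r=5, v=7, a=1, b=2)
sols = solutions(m_max=3, n_max=130, **params)
print(len(sols), all(identities_hold(s, **params) for s in sols))
```

Eliminating m between the two congruences mod q1 r and q2 r gives the first equation, eliminating it between the two congruences mod v gives the second, and comparing the two expressions for m n1 gives the third.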

And then we make the t variable implicit, and this is what we end up with. Importantly, if we now look at the system of congruences here, then in the special case γ_q equal to one, the q's and the s's run here exactly over an SL2 system where we have two congruences. If the n's are on the outside, then this doesn't matter and we can sum efficiently over it. Of course the N larger than R condition

in BFI now becomes slightly more restrictive, but theoretically one can also keep the v on the inside, so this should not really be the limiting factor. So this is the normal BFI case, and we now have this additional congruence condition, which in the matrix we are looking at is exactly on the q1 s1 term. So we have q1 s1, and in this matrix it says exactly that the product of the diagonal entries is in a certain fixed

congruence class mod v. So we know that if the group before was Γ_2(n1, n2), then now, to fix this congruence condition, we need to increase the level of the group by a factor of v, and since this now fixes v^2 many points, we get v starting points to get the right congruence again. So we get this plus v many starting points, and the moral is that these starting points should not interact in a bad way with the other group structure, and so we get a square

root cancellation in the starting points. So the loss comes from the fact that here we only get square root cancellation in the starting points, and that the v increases the level size; those are the losses we get. And let me write what we get, for example, if everything done here is correct. Yes. So then we say that we get something like RV < N < (x/R)^(1/3) / V. So if V is one, this is exactly

the BFI range for this case, and now we make it bigger by a V here and smaller by a V there, so it slightly reduces the N range. Morally it seems that V is three times as bad as R. And if you remember, there's the BFI result where they do this with r on the outside and q on the inside, and then they count the primes, and there the range is R less than

N^(1/10). So if we kick out the r variable and just have a v variable, then the worst we should get is that V can be up to N^(1/30). That's roughly the order of magnitude we need to expect, I think, for the v variable. So in general it's definitely worse than any of the variables here, because we lose something in the starting points and we lose it twice in the level, but overall,

the order of magnitude is a factor of two or three worse than the losses in the normal BFI setup. So then, as you mentioned, and we looked at it briefly, the question becomes whether this is useful for any applications, and there we were not exactly sure. But we can of course look into bounded gaps between primes, where, I think you already checked this, in the current paper there are 49 variables or so; you choose a 49-dimensional sieve. So

we have, and we do a Selberg sieve, so we have q1 up to q49 and the primed ones, the corresponding other ones, I guess. And now, if one assumes they are well-factorable, which of course they are not, then surely we can probably put a full range in. And of course we would need to check, if there's no additional factorization property, the case where, let's say, one of them is very long. That's definitely what we always need, because

we need to have that the inner congruence is fixed mod the long one. So if one pair here is very long, then maybe we can slightly break the one-half barrier for the product for the other ones. Do you think that is worth following and has a chance? Is it a reasonable thing to try, considering what roughly the sizes are? Yeah, I think this requirement that you have one super long variable is going to completely kill everything that's going on. Morally, I think

the main term contribution in the bounded gaps between primes thing (and this is a bit approximate, because this is sort of when 49 tends to infinity, but I think it's still morally true even when it is 49) is when you don't have these exceptionally long variables and you have all of them remaining. So I should think of all of them... is the main contribution roughly balanced, or how should I think about it? Or is it just that not one is exceptionally long? Yeah, I think there's

multiple scales that are interacting, so it's a slightly subtle thing to do with the precise choice of weights. But morally, yeah. So it's not perfectly balanced. If it were perfectly balanced, you have 98 variables, and so they would all be of size x^((1/2)/98), so roughly x^(1/200). And it's important that some of them, the biggest one, can be a bit bigger than that, but not massively bigger than that. And so it should certainly be

the case that the product of any 97 of them has exponent at least 99% or so of what the maximum is, because it concentrates highly on that sort of situation. So then I would expect that our current automorphic forms don't help much in... Yeah, I would be skeptical of any of that sort of thing helping in this sort of situation. Let me think about this. Okay. It's of course nice that we can do this at all in a highly non-trivial way.
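The exponent bookkeeping quoted in this part of the conversation can be reproduced with exact fractions. This is only an arithmetic sketch of the heuristics as spoken (a V-loss three times the R-loss, and 98 perfectly balanced sieve variables with product x^(1/2)); the variable names are illustrative and nothing here is a proved range.

```python
from fractions import Fraction

# "V is three times as bad as R": an R-range of N^(1/10) becomes a V-range of N^(1/30)
r_exponent = Fraction(1, 10)
v_exponent = r_exponent / 3

# 98 perfectly balanced variables whose product has size x^(1/2)
balanced = Fraction(1, 2) / 98

# share of the total exponent carried by any 97 of the 98 balanced variables
share_of_97 = Fraction(97, 98)

print(v_exponent)            # 1/30
print(balanced)              # 1/196, i.e. roughly x^(1/200)
print(float(share_of_97))    # about 0.99 of the maximal exponent
```

In the balanced model, dropping a single variable changes the exponent by only about one percent, which is the sense in which one "super long" variable is not where the main term lives.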

But okay, then this application is probably not... Oh yeah, okay. It is more information, but I guess that's... okay, if you already said the main contribution is from other ranges, then it's like zero information there, I guess. Yeah, so I would have thought that the numerical benefit that this would give would be so tiny that you wouldn't be able to see it. Okay, good. Is there anything else? Of course we have only done this in this one case, but

we are relatively sure that this propagates through the other cases. It always has the same influence: whatever you do there, you just carry another congruence with you. Of course you need to be a bit careful sometimes, because you sometimes glue variables and so on, so it's not completely clear. Yeah, so when we talked about it before, I was a little bit concerned when you were introducing some of these variables where you have no control over the

size of it. When you write it in this form, where you keep all your unknown congruences just as congruences, essentially, and so you don't have variables that could be exponentially large in terms of x, then this completely makes sense. But I guess the thing that I would have in mind is that in principle you could have done what I mentioned before, the really dumb thing of putting everything in congruence classes. That's how we do it with Voltin and D; there we also need to have one

extra congruence on the outside, and since it only needs to be a delta and there's some power saving on the inside, it doesn't matter how many powers you lose. Yeah, so probably it would have been quite easy to get that range at least with V to the 10 or something. And so if there's going to be a situation that benefits from your work that wouldn't have benefited from that previous approach, it has to be one that cares about the numerics and the

quantification. But the problem is that the quantification is still slightly on the weak side, because you have something like V being less than N^(1/30), and okay, 1/30 is a lot better than 1/1000, but it would mean that you have to care quite a lot about the size of this small uniform component. So either the gap before was exactly at zero, and then the old method worked already, or the gap was not zero, and then it's improbable that the 1/30

is enough, or so. That's what I would have guessed, yeah. Okay, so maybe it's still worth writing up, because it's nice to have a non-trivial handling of that, but it's not opening new applications immediately. Yeah, at least not that I'm aware of off the top of my head. From my point of view, the main thing of interest is the way that you can build a certain level of uniformity into some of the spectral methods, because if you're not

careful, you know, the spectral methods lose at least a factor depending on the size of the congruence modulus, a [unclear]-type factor, whereas at least here you're able to slightly sidestep that by putting all the uniformity into your starting points. Yeah, we don't do [unclear], and so you don't need to be careful about that. Yes. Okay, good. Do we have anything else? Because otherwise I wanted to go to a point where having a very good improvement

here... okay, let me say what I want to say. So the next thing we looked at (unfortunately I forgot some of the details, but I can still morally tell you what it's about) is how this plays together with the amplification method that you use, and in particular how this plays together with the case which is missing to break the one-half barrier for d4. Okay, I will write things which are surely wrong, because I forgot exactly the right setup, but

at some point, if you write down the amplification method setup (let's say here's an s variable, and everything is complicated), you essentially have somewhere an additional congruence condition which looks like n3 congruent to n2 mod c. Okay, this is not exactly it, but somewhere there's an additional congruence, which in your paper you handle essentially trivially, losing a c to the 10 or so by putting everything into congruence classes. Now this congruence condition

is actually much better than the additional congruence condition we looked at before, because this one is now fixed by Γ of n c, I guess, because this fixes n3, assuming coprimality, because Γ of n3 fixes n3 over n2. That means that we don't need to sum over all the starting points; all the starting points are already in the problem, and we have a group which fixes the congruence condition. So essentially the amplification setup translates in this method to the best possible thing that can happen:

you have all the starting points, so you hope to get square root cancellation, and you don't need to have more starting points than necessary. In the language we had there, that means the loss is maybe no longer three times as bad as the other losses, but maybe two times, or maybe even one and a half; it's getting close. And if the loss here became extremely good, one could do the quadruple divisor function by just amplifying

very much. We think this is not possible with this, but it's much, much closer: we are something like three halves of the way along the range of c which you would need to choose to amplify yourself around the quadruple divisor function. Probably there's a very good reason why this can't do it in any case, but we still found it interesting that it definitely reaches closer to that. Unfortunately we did this two weeks ago and I don't have my notes, so I can't give you exactly the

details, but I think from this it should be clear that in such matrix setups we can handle the c quite well. My guess is that any loss in c makes the application impossible, and we can't hope to have no loss, but it's still interesting that you can do better than before. Do you think there are other cases where one needs the amplification method, not with c to the delta, but where c of size N to the one over

ten is interesting? Because I think something like c of size N^(1/4) would suddenly open up something like the quadruple divisor function, and I think maybe we can get to one over, I don't know, six, if we are very optimistic. Are there possibly cases where this still opens more applications than N to the delta, or is it again a case where between these two there's not much? Okay, so a few things. One thing is it would be worthwhile chatting to Alex a

little bit, because he had some work where one consequence of some of the things that he did was to treat this sort of c-dependence in a less trivial way than I did, and that correspondingly gave the triple convolution in slightly wider ranges. And did he write it up, has this appeared in something that's written, or is it something he worked on? I can't remember. I think it was a sort of side application, and he didn't have any kind of concrete applications, so I think this was

connected to work that he's already done, but maybe he just had it as a sort of side comment in one of his papers. I think it's related to some of the stuff that's already appeared on the arXiv, but it's best for you to double check with him, because he certainly didn't write it all up, almost precisely because he was improving a fairly trivial polynomial loss in c to a better polynomial loss but didn't have a concrete application. Yeah, off the top of my

head I correspondingly don't know of any concrete applications where this would have a major effect. I mean, there are lots of variants of these primes in arithmetic progressions type questions that you can ask, where you can have different assumptions in different ranges, and with the sort of deamplification-style argument, suddenly when you're in a small neighborhood of what was previously the critical case you now have a much better estimate, and this means that that better estimate will have a wider range. And so when it takes

over from previous estimates... if you have, say, a quintuple convolution, then there are lots of ways of pairing up variables, and there are a few different methods depending on the sizes of the different variables, and there are all these different messy overlapping estimates which are all very numerically quantitative, and in some ranges one will do better than the other. And the better your c dependence, the bigger the range in which to use this different setup. So there are therefore lots of possibilities that, if you come up

with the right question, or look for the right version of the question in the literature, you might be able to quantitatively improve something. However, I'm not really aware of many situations where this is the absolutely critical range, and therefore I'm not totally sure if you can do anything qualitatively new. I guess one thing to check is six prime factors of size x^(1/6). Uh-huh. All right. So can I again think about this as a sixfold divisor function? Yeah.

Okay, so we have n1 up to n6, each of size, let's say, x^(1/6), and we want to do this. So this is not known with q larger than x^(1/2)? Yeah, I believe that this should be much easier than the four factors of size x^(1/4), but certainly in the literature there's nothing that handles this case. Okay, that's interesting. I see a non-zero chance that we can, because

that seems to me like something where the amplification limit is maybe somewhere in it. Of course I have not thought about six factors; you need to think about all possible arrangements. So six is more difficult than five here? Yeah, because it's even, I guess, and likes to combine to one half and one half, which is bad, for example. Yeah, okay, that's a good point. And I guess related to this is also the ternary divisor function, with three factors of size

x^(1/3), because in that situation we can handle this beyond x^(1/2), but basically this is always using estimates coming from algebraic geometry and no spectral methods. And so, at least for the totally balanced case with three factors of size x^(1/3), I think no spectral methods know how to handle it. We are allowed to average, we average over q, right? Yeah, so the algebraic estimates are able to handle it without the average over q, but even averaging

over q, I don't know of any automorphic spectral method that can get non-trivial results for d_3 in general for any q larger than x^(1/2). Oh well, that's interesting. Somehow my gut feeling is that for those cases, if you assume some reasonable conjectures, it may be possible. And for the quadruple, I guess, my feeling is that it would be very surprising if you could amplify or de-amplify your way around that problem, unless, like, as

long as you don't put in unreasonable things like cancellation between different cusp forms, I guess it just can't work, whereas here this seems more reasonable. So, for what it's worth, I'm skeptical that it will work in any of these situations, but I would be very happy to be proven wrong. Okay, that's a good point that I was not aware of. I feel we have a lot of possibilities here, so let's look at that. Okay, then.
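For reference, the balanced question raised in this part of the discussion can be written down schematically as follows. This is only a sketch: the notation $\Delta_k$, the dyadic ranges $n_i \sim x^{1/k}$, the fixed residue class $a$, and the exact form of the average over $q$ are assumptions made for illustration, not something fixed in the conversation.

```latex
% Hypothetical notation: \Delta_k is the discrepancy of a balanced k-fold
% product in a residue class a modulo q, with (a,q) = 1.
\[
  \Delta_k(x;q,a) \;=\;
  \sum_{\substack{n_1,\dots,n_k \sim x^{1/k} \\ n_1\cdots n_k \equiv a \ (\mathrm{mod}\ q)}} 1
  \;-\; \frac{1}{\varphi(q)}
  \sum_{\substack{n_1,\dots,n_k \sim x^{1/k} \\ (n_1\cdots n_k,\,q)=1}} 1 .
\]
% Question discussed (k = 6, and similarly k = 3 and k = 4):
% is there some \eta > 0 such that
\[
  \sum_{\substack{q \sim Q \\ (q,a)=1}} \bigl|\Delta_k(x;q,a)\bigr| \;\ll\; x^{1-\delta}
  \qquad \text{for } Q = x^{1/2+\eta} \text{ and some } \delta = \delta(\eta) > 0 \,?
\]
```

The trivial bound for the left-hand side is of size about $x$, so any power saving $x^{1-\delta}$ with $Q$ beyond $x^{1/2}$ would count as a non-trivial result in the sense used above.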

So those were the good news so far. Then there's also some slightly bad news. Namely, let's look again at the whole setup of the method. I think we are getting there, but, well, let me just start with the method. We always need to do things again because we realize that applications require more generality than we had written up before. Surely a lot of the applications I talked to you about before,

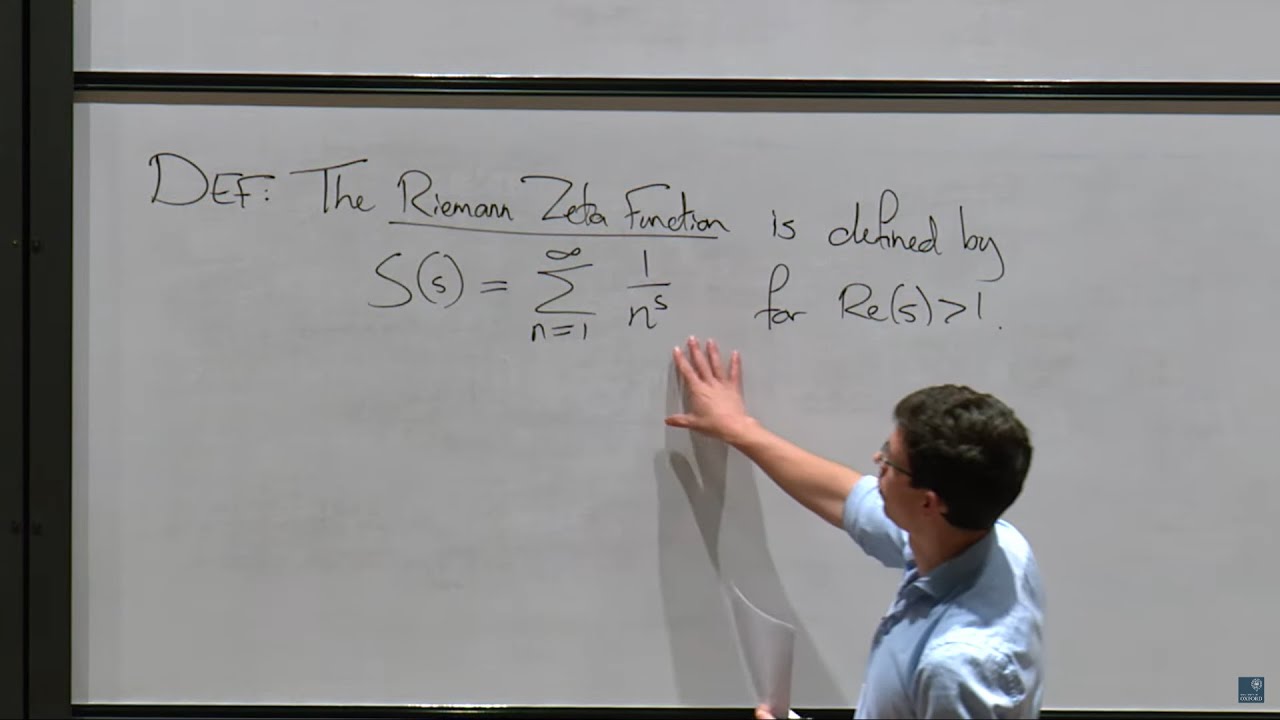

like the divisor function, those are all set; they surely work with what we do. But our goal is to write the method so that it can handle two applications which are in some sense extremal cases. One is that we look at an L-function estimate, maybe with two of them complex conjugated, where we have four different characters, and we are interested in getting an asymptotic and in saying what range of the

moduli is permitted to get an asymptotic formula. Berke Topacogullari has a paper where he considers two pairs of characters and gets a result, but he uses plumage and already loses factors which he can't prevent, because he doesn't use the right spectral approach. And then Koo has a paper in which he obtains a full Motohashi-type formula for all characters being the same, but I don't understand that paper, so

in that paper he says he can't improve Topacogullari's result, and I don't understand this statement, because I would have thought he should be able to. But he probably understands his work better, so I will leave that out and just look at Topacogullari's result. Hang on, sorry, the characters are to different moduli? Yes. And we're expecting the main term, which is what you'd expect, and then you're going to get some error term which would clearly be power saving when all the moduli are small, but you're going to have some loss depending on

theta. Yes, and our estimate is that, let's say if all the q_i are one q, if all of them are the same, then we think we save a full power of q in the error term over what Topacogullari had. That is our hope, and our hope is also that we can give it for four different characters, which I think is again possible with Kloosterman sums, but would be so messy that it's essentially hopeless, whereas for us it's messy but

it's possible. So that is one of the things we want to do, and the other thing we want to do is of course the whole BFI setup that we talked about all the time. Why do I mention those cases? Because if we think about the general setup of our method, let me take a look, right, we have now generalized a bit. I'm not sure whether at some point we just need to stop trying to generalize, but at the moment I think it's still the case that,

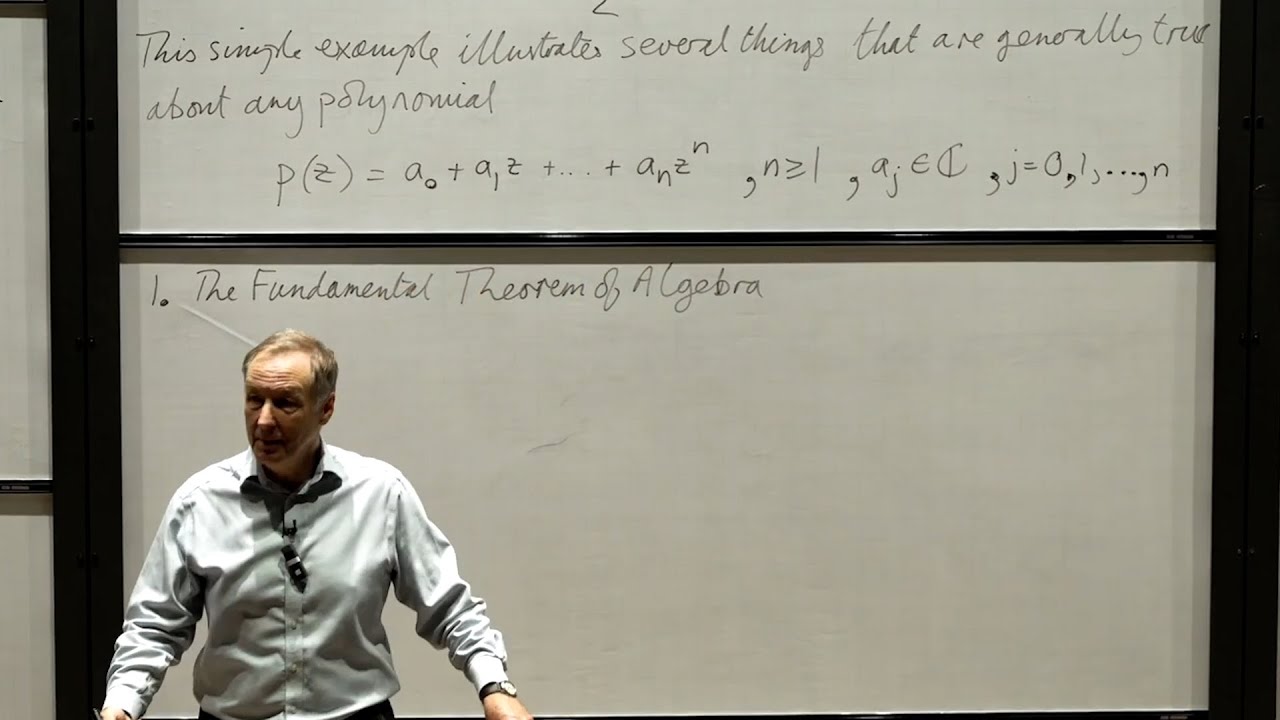

if we want to handle all those cases, we still need to do something a little bit more. Namely, we need to look at matrices, and I will ignore some gcd conditions, so let's say these are integer matrices with determinant hk. Let's call such a matrix g. I have some weight alpha on g and a weight f on g, where this one is fixed by a certain congruence subgroup and this one is a smooth weight. Then we want to say that this is a main term plus an error

term which is R^(1/2) K^(1/2). So what's different from what we talked about last time? Different is that now we want to assume that the determinant has two parts. Why do we want to assume this? Because the beta_h variables should go into the R part and the gamma_k variables should go into the K part. Why do they need to do this? In all D applications, beta_h is one and there is nothing put into the R part.
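Written out, the shape of the statement being described is roughly the following. The symbols $\beta_h$, $\gamma_k$, $\alpha$, $f$, $R$ and $K$ are the ones named in the discussion; the set $\mathrm{M}_2(\mathbb{Z})$, the abbreviation $\mathrm{MT}$ for the main term, the placement of the sums over $h$ and $k$ on the outside, and the suppression of gcd conditions and of the precise definitions of $R$ and $K$ are simplifying assumptions of this sketch.

```latex
\[
  \sum_{h,k} \beta_h \gamma_k
  \sum_{\substack{g \in \mathrm{M}_2(\mathbb{Z}) \\ \det g = hk}} \alpha(g)\, f(g)
  \;=\; \mathrm{MT} \;+\; O\!\bigl(R^{1/2} K^{1/2}\bigr).
\]
% The two extreme cases mentioned in the discussion:
%   \beta_h  = \mathbf{1}_{h=1}: nothing is put into the R part;
%   \gamma_k = \mathbf{1}_{k=1}: everything is put into the R part.
```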

In all these styles of applications, let's say zeta fourth moment applications, you usually want to have gamma_k equal to one and put everything into the R part. Sorry, by "equals one" you mean the indicator function of k equals one? Yes, sorry, of course it's not a constant, it just disappears. Yeah, sure. And so if our black box should handle those two extreme cases, we want to write it in a way that you can put it in, and

the reason why we think we can handle this thing here, for example, more easily than other people is that we can now allow some of the determinants to still go into the K part if they are ugly for the R part. For example, if the determinant here has a common divisor with the q's, then we don't want to open the machinery of newforms and oldforms to see how it interacts here. Instead we say: okay, those divisors are boundedly many, we push them into the K part, because there it does less harm than

in the spectral part. That should be conceptually very nice, because we should require less input from the spectral side: as I said, we only need to consider them as Hecke operators when they are coprime to all levels, and then they just behave nicely and factor out and everything is just beautiful. But we gain the pain of having them in the K part, and that we have now looked at for one week, because they definitely make the K part uglier, and we probably can't prove the

best possible result, let's say the optimal result, but what we can prove is probably good enough for all the applications. The additional complications we can't handle optimally, but we still hope that how we handle them is in all applications dominated by diagonal contributions or such things. So what do I mean by that? Just to check: you have the choice of putting things in the K part or the R part? Yes. So the advantage of putting them in the K part is that you

avoid having to go into newforms and oldforms, and we need to do it if we want to do BFI in the same way. Okay, but let's imagine that we're in this kind of context. I see how that sidesteps a technical obstacle, but of course you could go into newforms and things. But for this we need to do it anyway. Yeah, okay, I'm not objecting to the principle, I'm just trying to understand: for this context,

the main advantage of putting it into the K part is to sidestep technical difficulties that you would encounter in the R part? Yeah, for which we are not completely sure whether they are only technical, because no one has done it for four different non-primitive characters. So we don't know; I don't know what to believe. We decided not to look into that. Because you feel it's so technical it's going to be hard? Yes, and we don't even know; it could be that there is

something happening which loses a factor of something; we don't know. So yes, that's the main advantage, I think you summarized that correctly. Okay. The problem is now, oh, by the way, I think we are soon done, maybe 5 minutes, give me one second. The problem is now the following. Let's remember how the K part looked. The K part looked previously like this: we sum over matrices g = (a b; c d), then we have our

K weight on g, which is something like |a| + |b| L + |c|/L + |d| less than x^epsilon. Then on the inside here we were summing over tau_1, tau_2 in essentially the set we are looking at modulo the group, so a system of representatives, and then we have alpha(tau_1) times alpha(tau_2) bar, where these are exactly the alphas here. So we have a nice weight with these properties, and then we sum over these double cosets. Oh yeah,
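As a reading aid, the K part just described can be sketched as below. The set of representatives $\mathcal{R}$, the indicator notation, and the way the coupling between $\tau_1$, $\tau_2$ and $g$ is left as an unspecified side condition are assumptions of this sketch; only the weight on $g$ and the product $\alpha(\tau_1)\overline{\alpha(\tau_2)}$ are taken from the discussion.

```latex
\[
  \sum_{g = \left(\begin{smallmatrix} a & b \\ c & d \end{smallmatrix}\right)}
  \mathbf{1}\!\left[\, |a| + |b|\,L + \frac{|c|}{L} + |d| \;\le\; x^{\varepsilon} \right]
  \sum_{\substack{\tau_1,\tau_2 \in \mathcal{R} \\ \tau_1,\ \tau_2 \text{ linked through } g}}
  \alpha(\tau_1)\,\overline{\alpha(\tau_2)} .
\]
```

The inner sum is a function of $g$ alone, which is why it makes sense to treat it as a single weight attached to each matrix.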

of course there's a dependence: tau_1 g tau_2 is in the group. So this is a function which we called W(g) previously, which somehow measures how these alphas interact with each other. If you remember, in one part, where we wanted to get the strongest possible result, we needed to be able to capture sparseness in these alphas uniformly in g, but that we have all worked out, everything is fine. However, what I wrote down here is only correct if k is equal to 1, and this

was nice, because if you look at this equation here, these matrices are in SL2(Z), and this equation plus the property that the matrices are in SL2(Z) means essentially that either this matrix is upper triangular or lower triangular, or there are only boundedly many, and it doesn't really depend on L. This was essentially why our method was nice: we started with a complicated counting problem, and then the input you need to give reduces to triangular cases here. If you insert a k, it doesn't immediately reduce to triangular cases here,

and you still have, I don't want to write it down, but you still have a more complicated counting problem in some sense, because these are no longer in SL2(Z) but in SL2(Z) over k_1 k_2, so fractions can be involved, and then you can't exclude that there are matrices with bc not equal to zero, where something happens. What are we doing now? Evaluating this object here on the inside for g not upper or lower triangular is a gigantic mess that you definitely don't

want to do; especially if you want to capture cancellation in characters, you definitely don't want to do it. So what we do is give a trivial bound for the pairs where bc is not equal to zero, just by doing Cauchy-Schwarz: if you Cauchy-Schwarz the tau_1 and tau_2 variables apart from each other, then it becomes a little bit easier. If you do it this way, then the contribution of terms where bc is not zero loses a factor k over the L^2 bound, over the other

bound, and we are not yet sure whether this factor of k is a permissible loss. Theoretically one can do much better than this by keeping everything intact and capturing cancellation, but if we write our main black box in that way, it becomes a heavy burden in applications to get the right bound here, because I think this is a very difficult object to study, and I'm not convinced that for g not upper or lower triangular this object is even much easier than your original counting problem. Somehow the whole point of

the method is to reduce a difficult problem to an easier problem; if this is still super messy here, then you have not won much. So we say: okay, if this k is one, then we can always make it much easier; if this k is not one, then you lose a factor of k, but you don't lose L's. In most applications the L's are what matter, and for those you can still give efficient bounds. This is a little bit the struggle we have had for the last four or five days,

because we thought really long and hard about whether we can avoid this. We went two ways; the question is: is there an easier description for this if g is not upper or lower triangular, or can we avoid the loss in the Cauchy-Schwarz? And the answer seems to be no for both questions after 5 days, so that's a little bit bad. Okay, how does this interact with the L? If L is much larger than k, then this loss is harmless? Yeah, this,

but also it looks like you don't get matrices that are not upper or lower triangular. Okay, I have to interpret that. Yeah, so if L is larger than k or L is less than 1/k, you don't get matrices that are neither upper nor lower triangular. Somewhere here you probably still also need to scale with k. So the answer is: if L is very large or very small, you automatically don't get matrices that are not upper or lower triangular; in the middle range you get them, and trivially they give you this.
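The effect of L on which matrices survive the weight can be checked numerically in the simplest case k = 1, where the matrices are in SL2(Z). The following is only a toy sketch: the function name `count_by_shape`, the parameter `X` standing in for x^epsilon, and the brute-force enumeration are all assumptions made for illustration, and it does not model the fractional determinants that appear when k is larger than 1.

```python
from fractions import Fraction
from math import floor


def count_by_shape(L, X):
    """Count integer matrices (a b; c d) with a*d - b*c == 1 and
    |a| + |b|*L + |c|/L + |d| <= X.

    Returns (triangular, non_triangular), where "triangular" means b*c == 0.
    Exact rational arithmetic is used so that boundary cases are not lost.
    """
    L = Fraction(L)
    X = Fraction(X)
    A = floor(X)      # largest possible |a| and |d|
    B = floor(X / L)  # largest possible |b|
    C = floor(X * L)  # largest possible |c|
    triangular = 0
    non_triangular = 0
    for a in range(-A, A + 1):
        for b in range(-B, B + 1):
            for c in range(-C, C + 1):
                for d in range(-A, A + 1):
                    if a * d - b * c != 1:
                        continue
                    if abs(a) + abs(b) * L + abs(c) / L + abs(d) > X:
                        continue
                    if b * c == 0:
                        triangular += 1
                    else:
                        non_triangular += 1
    return triangular, non_triangular


if __name__ == "__main__":
    # Very small, middle, and very large L with the same hypothetical bound X = 5.
    for L in (Fraction(1, 100), Fraction(1), Fraction(100)):
        print(L, count_by_shape(L, 5))
```

With X = 5 and L = 100 the weight forces b = 0, so only triangular matrices are counted, and by the symmetry swapping b with c the same happens for L = 1/100 with c = 0. For L = 1 a matrix such as (1 1; 1 2) passes the test, so non-triangular matrices only show up in the middle range.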

So when you were working on this problem before, you didn't put your L's into your starting points; you had your L's in the whole spectral method? Yes. And that sort of allowed you to push difficulty from the spectral method to the starting points in this setup, and you had a rather messier argument that handles non-trivially large L's? Oh, that argument never worked. That never worked, no. We needed to invert the kernel bound there, and

we know what the answer is, but we were (a) not able to prove it, and (b) even assuming the answer, still not able to make the argument work, because, I don't know, we were not smart enough. So we never had a method that made it work. I believe it's something that can be made to work. Okay, because I was only just wondering, as a sort of maybe silly thought experiment: can you make the L parameter here larger than k

to ignore all the k things, and then you have to pay a cost over on the spectral side that gets you back into all the difficulties? That loss, I think, will be larger than this k. I mean, we can do this. Even if you could do all of that? Oh no, if we could do that correctly, then probably not. Actually, I don't want to speculate, because if what we thought first were true, then yes, what you just said. But after a long

time of thinking we actually came to the conclusion that what we thought is probably not true, and maybe there exists no such kernel with these nice properties, so we are not sure about this. Okay, fine. But we believe the loss is harmless and probably will not lead to weaker results in any of the applications. Somehow it's still a blemish on this whole setup, because one would expect that the right way of doing it should again only require simpler information; if we have not simplified the input information here,

we have done something wrong in the steps before, we feel. But yeah, this is quite a lot of terms you're treating trivially now. And finally, in the FY case, our optimal bound was exactly a factor n better than what we needed to get, and k is n_1 minus n_2, so we had exactly the wiggle room for this. We still need to check it, but it may actually be that DI also has this, so we're not even

sure whether this is worse than DI; it probably isn't even. We just hoped to get something better in some sense. Okay, so there are two possibilities here. One is that there's a kind of conservation of difficulty: you can maybe push the k pain around, you have lots of choices, but you're going to have to pay a price somewhere. So you could try to push it onto the R factors and then have to deal with it there; you could push it onto

the K factors, but then either you're incurring this loss or you're going to have to do something non-trivial for the many terms that are not upper or lower triangular. Modulo speculations about your old method, you could maybe push the difficulty onto the spectral side of things. But with all of these it sort of feels like you're having to pay a price and take some pain somewhere, and often when that's the case, it arises for a fairly good reason, and quite often it means

that you can't just come up with some neat trick that sidesteps the difficulties. Yes, this was roughly the conclusion we reached, so for the moment our working assumption is that we accept it here and check what it does in applications. For sure, the first thing to do is just to see how much this hurts you or doesn't hurt you in your applications, because it's also the case that you don't want to add another 40 pages to your paper going through some very complicated argument

that's maybe handling this in a non-trivial way; that's probably something that's better left to a follow-up paper. Now, the point that I'm getting from this is that at least with our method you see where the pain is going: you still have full control, and you know that theoretically something should be possible here, whereas doing it with the Kuznetsov formula it becomes super opaque where it has gone. So the general gist which I take away from what we are doing is that we try to give you

a black box, but the nice thing is that there is probably a lot of room for improvement, like doing the inversion formula better or playing around more with the K parts here. But I think at some point we just need to say, okay, we stop here, and then we see what we can do with this later. Yeah, I agree. I think the main advantage of your approach, and I know we've talked about this several times, is that your approach is just fundamentally more transparent, at least from the point of view of certain

applications. At the end of the day, you're applying a spectral decomposition and you're applying Cauchy-Schwarz, and those are the two things you're doing. The flexibility that you're talking about here is slightly different ways of applying the Cauchy-Schwarz step. We know that there's a huge amount of mileage that you can get from those two fundamental inputs, but that's all you're doing. So somehow anything else that's equivalent to some sort of spectral decomposition and then some sort of Cauchy-Schwarz

should more or less be able to do the same things, but maybe in a much more obscure and complicated language. The Kuznetsov trace formula is essentially just a spectral expansion, and you're essentially doing that with your Poincaré series. The key benefit the whole time is not so much that you have this new method that can now do things that absolutely couldn't be done before; it's just that it's much more transparent about the ways in which you can tweak it, and where you have to pay a price, and where you

have to put effort in and where you don't, which allows you to do new things just because you can see how to play with it. And this sort of more complicated tweaking of how you want to balance it between one side and the other feels very much like you've got something that's fairly transparent, but the actual fundamental work, if you hope to improve this by working somewhere else, should maybe come in some sort of later paper. I guess we only need to make sure that

this loss doesn't lose say okay