hello data scientist welcome to skill gate in this tutorial we shall build a powerful fake news detection model using the pre-trained bird with the help of transfer learning by the way this is part number three to our three-part transfer learning series where we have earlier discussed the intuition behind transfer learning and the refresher on what bert is and how it works so do refer to these earlier parts to reinforce your understanding i'll share the link to these videos in the description box below now let's get started on building a fake news detection model in this third tutorial for introductions we are skill kit and we are on a mission to bring you application based machine learning education we launch new machine learning projects every week so make sure to subscribe to our channel and hit that bell icon so you get notified of all our new ml projects well this is a snapshot of the data set we are using i have also provided a link to this in the description part of this video for your ready reference here we have these two csv files true dot csv and fake dot csv both of these files have the same exact data form we have the title of the news in this column one then the article text followed by the subject which is basically the category of the news article followed by the date on which this article was published for our use case we shall merge both of these files into a single large data set and add an additional column code label that will have true mention against all the observations from this true. csv and fake mention against all the observations from this fake. csv moving on this is our step-by-step plan on building this project first up we load our data set that is the true.

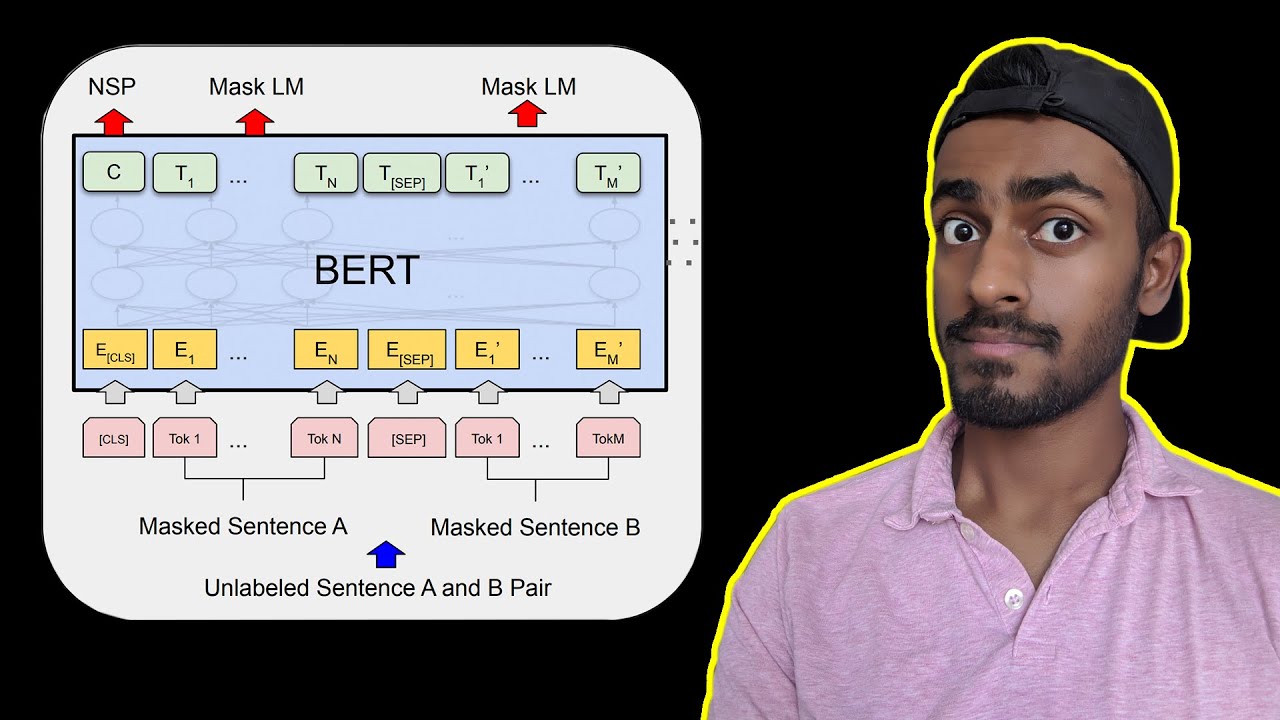

csv and fake. csv files then we merge them into one and generate truefake labels post that we obtain the pre-trained bird model to use it as the base of our fake news detection model as we know by now that bird has insane language comprehension capability so it shall make our fake news detection model better understand news context and hence make intelligent predictions on news being fake or not fake in the next step we define our base model and the overall architecture we shall be using pi torch for defining training and evaluating our neural network models after that we freeze the weights of the starting layers from burt if we don't do this we lose all of the previous training this is something we discussed in the second part of the series then we create our trainable layers generally feature extraction layers are the only knowledge we reuse from the base model to predict the model's specialized task we must add additional layers on top of it on this step we will also define a new output layer as the final output layer of the pre-trained model will almost certainly differ from the output we want for our model which is a binary fake and note fake as last step we fine-tune our model i'll talk more about fine tuning in the next slide and once we are done we move on to make predictions using our fake news detection model on unseen data bert is a big neural network architecture with a huge number of parameters that can range from 100 million to over 300 million and training a bird model from scratch on a small data set would always result in overfitting so it's better to use a pre-trained bird model that was trained on a huge data set as a starting point then we can further train the model on our relatively smaller data set and this process is basically what is known as fine tuning to do this these are the three approaches that we generally adopt first one is to train the entire architecture of our neural network second one is to train some of the layers while freezing others and the third one is to freeze the entire architecture where we basically freeze all the layers of the pre-trained bird model and attach a few neural network layers of our own and train this new model note that the weights of only the attached layers will be updated during modal training as part of this third approach and in this tutorial we will use this third approach where we will freeze all the layers of the bert during fine tuning and append a dense layer and a soft max layer to the architecture we'll talk more on this when we go into the coding stage now let's get started with our fake news detection model building using python this is our project folder on google drive having all the project related files in a single place i'll share a link to this drive folder in the description part below here this b2 fake news detection is our jupiter notebook let's fire it up to get started in my case i have it open already so let me switch tabs by the way to proceed with this tutorial a jupiter notebook environment with a gpu is recommended the same can be accessed through google collaboratory which provides a cloud-based jupyter notebook environment with a free gpu for this tutorial i shall be working on google collab and i highly recommend you to do the same so once we are here on google collab to activate the gpu runtime i click on runtime change runtime type and i select gpu it's already selected in my case and then i connect to the runtime all right now let's go section by section through this code file to do a quick code walkthrough over here first up let's set up the environment here in this first code cell we are installing uh hugging faces transformers library this library lets us import a wide range of transformer based pre-trained models additionally we are also installing pi carrot let's do it all right we are done as next step we import these essential libraries and then we mount google drive to access our project folder using these steps and lastly i set my working directory to the project folder within google drive if you are working in your local machine you may use this line of code all right in the next section we are loading the data set here first up we load true and uh fake csv files as pandas data frame then we create this uh column called target where we put the labels as true and fake against the respective line items and lastly we merge the two data frames into a single data frame called data by random mixing so let's see how the data looks now so this is how our merged data set is like it has uh five columns title text subject data and the new target which has these binary labels fake and true now this target column has string values which a computer wouldn't understand so we need to transform them into a numeric form to do this we use pandas get dummies method to create a new column called label where we put all fake labels as one and true labels as zero to visualize the changes we may use this head function once again so now we have this additional label column with the numeric labels created next up to check if our data is balanced across the two labels we may plot a pie chart so as you may see our data is fairly well balanced alright in the next section we split our data set into training test and validation in 70 15 15 ratio now we come to the bird fine-tuning stage where we shall perform transfer learning here first up we load the bird base model that has 110 million parameters there is an even bigger bert model called bird large that has 345 million parameters for our use case however bird base would do just fine so let's load it along with it we are also importing the bird tokenizer then as next step we prepare our input data to train our model we shall use the title of the news article for sure we could also use the the entire news article text but handling that much data would require more compute resources than what uh collab has an offer and will take a long time so we limit our scope here to just the title of the news article and trust me even with this we shall get a strong accuracy score of something around 90 now before we go to the preparation stage we need to figure out how we standardize the word length of our news titles as it would vary from one article to another for this we plot a word count histogram to understand what the typical word length is across our different titles using this code script here we may see that majority of the titles have word length under 15 loosely speaking so we shall perform padding on all our news titles to limit their word length to 15 words in the tokenization stage which is this next code cell now before we get into the tokenization let me quickly show you how the bird tokenizer works for this i have this dummy list called sample data here which has these two sample sentences basically the elements of the list then i tokenize this sample data by calling the bird tokenizer on it and i'm marking padding to be true let's see what we get as you may see the output is a dictionary of these input ids token token type ids and attention masks here input id contains the integer uh sequences of the input sentences these integer 101 and 102 are special tokens added to both the sentences at their beginning and end and zero represents the padding tokens so we have two zeros here because uh this second element is two word short of the first then attention mask contains uh all zeros and ones it tells the model to pay attention to the tokens corresponding to the mask value of one and ignore the rest token type ids identify which sentence a token belong to when there is more than one sentences available for your further reading i have added this reference link here in this code cell with this understanding now let's go ahead to tokenize our sequences that is titles in our training test and validation sets all right now our train test and validation sets are tokenized using the word tokenizer as next step we convert the integer sequences to tensors and as last step here we create these data loaders for both the train and validation set these data loaders will pass batches of trained data and validation data as input to the model uh during the training phase let's do it next up we freeze pre-trained model weights if you recall earlier i mentioned that in this tutorial i would freeze all the layers of the model before fine tuning it so let's do it now freezing the layers will prevent updating of modal weights during fine tuning if you wish to fine tune even the pre-trained weights of the bird model then you may not execute this code moving on as next step we define our modal architecture are using pi torch for defining training and evaluating our neural network models over here post our birth model which is this we are adding these couple of dense layers one and two followed by the soft max activation function then we define our hyper parameters we are using adam w as our optimizer it is an improved version of the regular adam optimizer then we define our loss function and finally we keep number of epochs as two even with collapse free gpu each epoch might take up to 20 minutes to finish so i'm keeping this number as a low value to not keep waiting on forever so as a quick recap we have defined the modal architecture uh we have specified our optimizer we have uh declared our lows function and uh previously we have also defined our data loaders over here right now as next step we have to define functions to train or rather fine tune and evaluate our fake news detection model this is the train function that we have written and this next one is the evaluate function which uses the validation set data let me run this and finally we can start fine tuning our bird model to learn fake news detection as a training may take around half an hour or so in the interest of time i will be leaving this part to you for this demonstration uh i already have this uh c1 fake news uh weights kept in this drive that i trained a while back before recording this video let me call these weights to our runtime now to continue further with our code review let's do it now let's build a classification report on the test set using our fake news detection model as you may see we are getting a strong 88 percent accuracy both precision and recall for class 1 are quite high uh which means that the model predicts this class pretty well if you look at the recall for class 1 it is a healthy 0. 84 which means that the model was able to correctly classify 84 percent of the fake news as fake precision stands at uh 0.

92 which means that 92 percent of the fake news classification by the model are actually fake news as last step let me also run predictions on these uh sample news titles first two uh here are the fake ones and the next two are the real ones now let's see what our model predicts so quite rightly our model classifies all four of these reviews correctly as fake and not fake guys congratulations to you for making it to this point do give yourself a pat on the back for completing this transfer learning fake news detection project all by yourself to summarize in this tutorial we fine-tuned our pre-trained bird model to perform text classification on a small data set i urge you to fine tune bert on a different data set and see how it performs there for example you may do a sentiment classification or a spam detection model you can even perform multi-class or multi-label classification with the help of bert in addition to that you can even train the entire bird architecture as well if you have a bigger data set in case you have any doubts or you got stuck somewhere leave a comment below and i'll help you out you may write to me over email whatsapp or schedule a free one-on-one by visiting my website scalcade.