Does anyone remember when they were first introduced to the Fibonacci sequence? Did you encounter it while messing around with repeated addition as a child? Was it taught to you in school?

I definitely can't remember. If you somehow ended up here without ever learning what the Fibonacci sequence, is then I'm honoured to be the first place you're introduced to it. The Fibonacci sequence is generated by a very simple recurrence relation: starting with 0 and 1, the subsequent terms of the sequence are given by adding the previous two.

Given its simplicity, the Fibonacci sequence comes up a lot. No, I'm not talking about in nature. I'm a pure mathematician; I don't care about the real world.

It does come up as a simple but non-trivial pedagogical example, though, and you might run into it in your first course in linear algebra, probability, or (of course) combinatorics. It's also not without its own old open problems. For example, how many terms in this sequence are primes?

you can muse over this problem if you like, but I'm more interested in a much simpler question: what's the largest Fibonacci number that I can compute within a second? I mentioned that the Fibonacci sequence is a common pedagogical example in the first course in various fields of maths, but it's also a common example in some first courses in computer science. The mathematical recurrence relation defining the Fibonacci sequence leads to one of the first examples of recursion a CS student learns.

Let's translate this definition into a program. Computing Fibonacci numbers by hand gets tedious really quickly, so imagine how far we can get in just 1 second with the power of my hand-me-down computer! 30.

Just 30. To be fair, the 30th Fibonacci number is. .

. kind of big. Who am I kidding; this is really disappointing.

Despite this being a near direct translation of the very definition of the Fibonacci sequence, the program sucks! This brings us to our first really important life lesson: mathematicians don't know how to program. So why is this doing so poorly?

Well, we can answer this question by looking at its graph. Phew; look at how that runtime just blows up! This innocent looking program has exponential runtime.

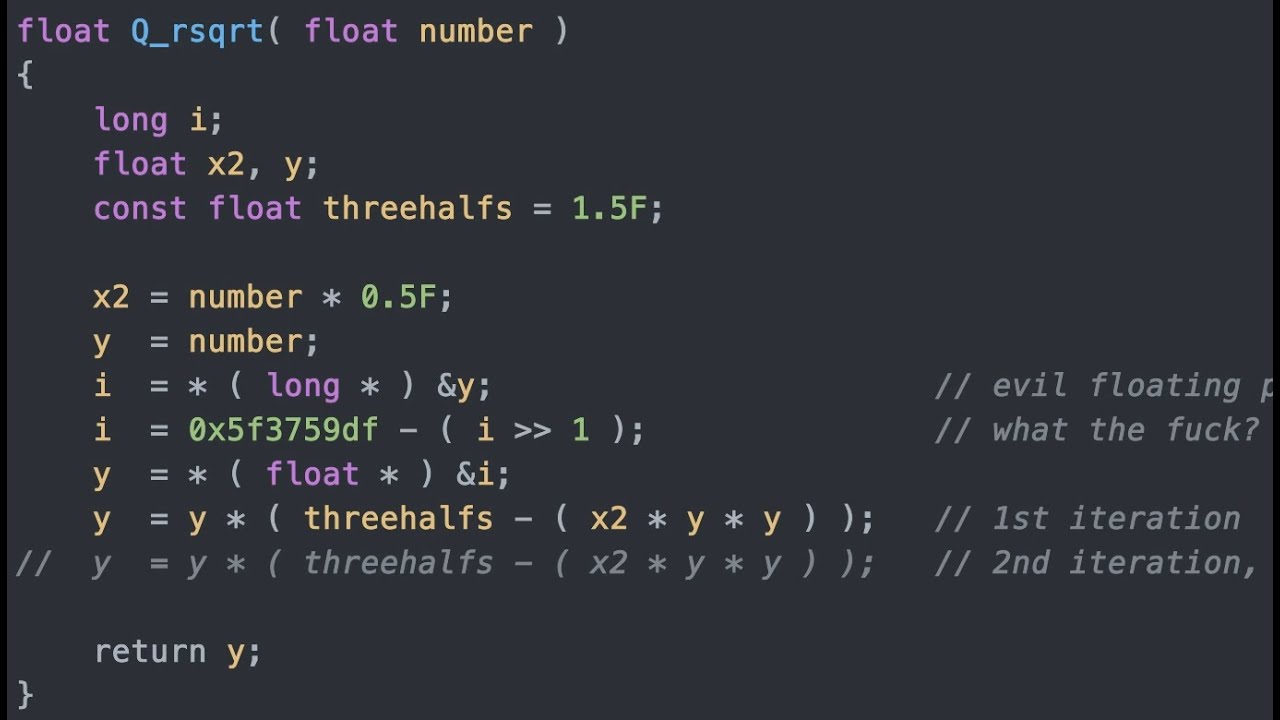

However, this is not the graph I was referring to; I'm referring to its call graph. Suppose you want to compute the fifth Fibonacci number. By definition, this would require us to compute the third and the fourth Fibonacci numbers, so that we can add them together.

Recursively, to compute F_4 we'd likewise have to compute F_2 and F_3, and we'd have to compute F_1 and F_2 to compute F_3. you might notice from this call graph that there is a lot of redundant computation going on: once we calculate F_3 the first time, we don't need to go through the whole recursive computation of F_3 when we need it again later. Therefore, we can modify our naïve program in the following way to save computation: when computing F_n, if we've never seen n as an input before, then use the recursion.

Once you finish this calculation, save the result to some lookup table. Then, the next time you're asked to calculate F_n again, just output the results saved in the lookup table. This optimisation is called memoisation---yeah, that's not a typo.

This is a standard optimisation technique used in dynamic programming, and is taught in most first courses in algorithms. Memoisation is particularly useful if you're going to be computing various Fibonacci numbers in rapid succession. However, memoisation is a little overkill in our case; we're only interested in calculating a single value of F_n, so we don't need the memory overhead of caching previous computation outputs.

Instead, we can just prune the call graph of redundancies in a way that leaves us with a very linear structure. In fact, if you never learned recursion before, this more linear implementation would probably be the first one that comes to your mind. "L-linear?

" I hear you say, sweating, "Like linear algebra? " Don't worry. "Linear" in this case just refers to the fact that the implementation loops n times when computing the nth Fibonacci number.

Since there's also a constant amount of work that gets done before we do the n loops, it might be better to call this an affine implementation. After all. .

. . .

. this truly is a *fine* implementation. Without breaking a sweat, this algorithm can calculate past the 200,000th Fibonacci number.

Our record now has over 43,000 digits! This is the power of using linearly many loops. And you thought I was talking about linear algebra.

What did you think this is, a math channel? As we can see, Fibonacci numbers are determined by a linear recurrence. This is to say that the nth Fibonacci number is a linear combination of the previous two.

. . well, so long as n is at least two.

This means that we can express subsequent Fibonacci terms by applying a matrix to the vector of previous terms. I know what you're thinking: "Since the input to the matrix is a pair of previous terms, it would be better if the output also [is] a pair of terms. " While this observation has been assiduously avoided thus far, it is not unexpected, and thus not beyond a measure of continuation, which leads us---inexorably---to this.

This might seem inconsequential, or even needlessly complicated, but the point is that this equation also accounts for the initial conditions. This leads us to a very concise matrix equation. With an ad hoc 2x2 matrix struct, we get a new fangled algorithm for Fibonacci numbers!

I hope you're as excited as I am to see how far this fancy algorithm will take us. Hmm, it seems like we've taken a step backwards. What's going on here?

Well, this leads us to our next important life lesson: you must look beyond what you see. Let's look back at the first linear algorithm. If you asked someone who just learned runtime analysis what the runtime of this algorithm is, they would probably say that the runtime is O(n), or on the order of n.

Very roughly speaking, this means that the amount of time it takes to execute this algorithm on an input n grows at most as quickly as some linear function [of n]. In even rougher terms, fibonacci(2n) should take up to twice as long to compute as fibonacci(n). If we graph the runtime of an O(n) algorithm, we should expect to see something linear, but that's not what we see; in fact, the graph of this linear algorithm seems much more like the runtime of an O(n^2) algorithm.

. . where is this extra factor of n coming from?

Well, if you were following along with my examples in C++, you probably already know what the problem is. My number class isn't just some meagre typedef: even unsigned long long integers are only 64 bits, meaning that they max out at around 18 quintillian. In particular, my puny 64-bit unsigned integers can't even hold the 94th Fibonacci number.

We need arbitrary-precision unsigned integers for this problem, and that means we can really feel it when we need to perform arithmetic. Imagine if you had to compute the 95th Fibonacci number by hand, and I gave you the 93rd and the 94th. As we learned in elementary school, we start with the least significant digits and add pairwise, digit-by-digit, and propagate carries along the way until we get to the leading digit.

In particular, the number of digitwise sums we need to compute is the same as the number of digits of the summands. This is to say that the addition of two n-digit numbers takes O(n) time to complete. This shows that our linear algorithm is in fact an O(n^2) algorithm after all.

In any case, we haven't really explained why the matrix implementation is slower. you might think it's because this algorithm not only has large number addition, but also has multiplication, and this is so much worse. We're definitely doing some multiplication, but if you unwind the operations, we're really just multiplying with zeros and ones.

In particular, multiplication is not the problem; rather, the issue has to do with the fact that we do four times as many additions, as well as some more copying. However, additions and copies are both O(n) operations, so as far as asymptotic runtime analysis is concerned, this algorithm is also O(n^2), which is why the graph just looks like a larger growing quadratic. you're probably still wondering why we're bothering with matrices if the result is worse.

Well, let's just imagine we're computing the 16th Fibonacci number by hand. Using our matrix algorithm, we can do so by calculating the bottom-left entry of this 16-matrix-long product. While we could certainly calculate all 15 of these multiplications, we can also achieve the same result using just four.

More generally, we can raise a matrix to the power of a power of two significantly faster this way. What if the exponent is not a perfect power of two? No problem: if we write out the exponent in binary, then we can split the exponent into pieces that all have perfect powers of two as exponents.

Since calculating all of A, A^2, A^4, A^8, all the way to A^(2^m), only requires m multiplications from the tactic we just established, this gives us a very efficient alternative. Since computers already encode numbers in binary, this fast exponentiation optimisation can be written really nicely with bitwise operations. So does our hard work finally pay off?

We did it! We now reached past the 600,000th Fibonacci number! We're evidently making some very clear *steps* in the right direction.

you might be wondering why these peculiar jumps happen when the input n is exactly 131072, 262144, 524288. . ?

[Europeans are right to use dots instead of commas to separate thousands when it comes to listing numbers out. ] As is expected of any self-respecting computer scientist, we should all have immediately recognised that these numbers are perfect powers of two! These jumps occur because these are exactly the points where the fast exponentiation algorithm has to do one more loop.

While we have certainly made some decent improvements, we've only made it around three times further than our previous best. This is not what we picture when we hear log n. The log n is definitely great to have, but it came at the cost of us doing actual multiplications in our algorithm.

For example, when computing the 32nd Fibonacci number, we would have to compute this matrix product. Let's just look at how we would compute one of these products: unlike with addition, this computation takes a lot more space. Grade-school multiplication of two n-digit numbers requires O(n) many additions of n-digit numbers, and therefore this is an O(n^2) algorithm.

Now, if we revisit our Fibonacci algorithm, you might think that the runtime is O(n^2 log n), which would be worse than our previous algorithms, but this is actually too pessimistic. To calculate the nth Fibonacci number, we basically need to calculate the (n/2)th Fibonacci number, and then do around four multiplications (and additions). Therefore, the time T(n) it takes to compute the nth Fibonacci number is at most T(n/2) plus some multiple of n^2.

By the so-called master theorem (as though there's only one such theorem) or by just expanding this step-by-step, we see that the fast exponentiation algorithm turns out to be. . .

O(n^2) again. This explains why we don't have huge gains. .

. yet, but we can see where the bottleneck is. All we have to do is multiply more intelligently.

A smart thing we can do to multiply better is to divide . . .

and conquer. Let's revisit our multiplication example. We can try dividing the problem into smaller chunks by splitting the digits up.

If we expand this out, we can compute this large multiplication by doing four non-trivial multiplications that are half as large. However, this division doesn't actually do anything: by the master theorem, or expanding [it] yourself (with some care), we see that the splitting doesn't actually lead to any savings. However, there is a trick that can help us calculate the middle term z_1 using only one multiplication rather than two: if we compute z_0 and z_2, and then we also compute this other product, then we can recover z_1 by this two-fold difference.

This is a trick worked out by Karatsuba. Going down from four half-sized multiplications to three might seem inconsequential, but this compounds when we do it recursively, and we ultimately get an O(n^1. 585) algorithm instead.

Finally, we break the O(n^2) barrier! Let's sit back and enjoy the spectacle as Karatsuba demolishes the other algorithms. Huh, what's going on?

Well, when implementing Karatsuba's multiplication algorithm, the logical flow of computation is much more complicated in each step of the recursion, and you also need to allocate extra workspace to do the separate calculations of z_3 on the side. In my implementation, I allocate eight times as much memory to ensure all of the recursive calls have enough workpace as well (though according to some back-of-the-envelope calculations, six times would have been enough, too). This brings us to another important life lesson: a lot can hide behind the Big O.

Although using Big O notation to summarise algorithmic runtime is convenient it's only a good measure for bounding asymptotic behaviour, the input n just doesn't get large enough in our time frame for Karatsuba's multiplication algorithm to show any benefit over the naïve implementation. It's of course more than likely that I did not implement Karatsuba's algorithm very efficiently, and you're more than welcome to look at my source code and come to this conclusion. The asymptotic runtime of algorithms do *eventually* take centre stage.

To demonstrate this, I calculated the 33,554,431st Fibonacci number using fast exponentiation and both the naïve and Karatsuba multiplication algorithms. The naïve multiplication algorithm completed this calculation in about 30 minutes, whereas the Karatsuba algorithm computed in only 17 minutes. If you're thinking that 33,554,43 is a completely out-of-the-blue choice of an input index, you get an F.

In fact on top of the 1F for you, I'll also give five of your closest friends an F as well. Multiplication really is a heavy burden. .

. wouldn't it be nice if we could just perform the multiplication digit-by-digit just like with addition? Why does the multiplication have to be so.

. . convoluted?

While O(n) digitwise multiplication might seem like a dream, some dreams do come true thanks to to the Fourier transform. I'm not about to give a full-blown lesson on the discrete Fourier transform, but here's the big idea: first think of our numbers x and y as degree less than N polynomial functions in the base variable B. Then, the product x * y is given by plugging in the base B to the degree atmost 2N product polynomial.

Now we have the following facts: first, a degree [less than] 2N polynomial is completely determined by its value at 2N distinct inputs; and secondly, we can calculate f * g at any value B by calculating f(B) and g(B), and then just multiplying that. In this context, the discrete Fourier transform amounts to doing the following: first, calculate f(B) and g(B) on 2N distinct inputs then use these values to calculate f * g on the same inputs finally use these 2N values to reconstruct the polynomial f * g. We perform step one using very particular inputs to these polynomials.

This might look complex. . .

because it is: these numbers are powers of a 2Nth primitive root of unity. The reason for this choice is because it makes recovering the polynomial in step three much easier. Once we recover this polynomial, we can plug in the original choice of base B to get the value of the original product x * y.

Now, let's put this idea into code. Since the DFT and its inverse are actually very similar, we can write them both with the same templated function. With the DFT established, we can follow our three-step recipe for multiplication.

I think I've baited you plenty of times already in this video: while this technique is certainly clever, the result is nothing to write home about. We only get to a measly 16380. .

. so what's going on this time? Well, for one thing, we're back to a quadratic-time algorithm: a DFT has two nested loops each that repeat O(n) times.

But why is the result so much worse than the other quadratic-time algorithms? Well, there's a few reasons: for one thing, we you need to compute two DFTs and one inverse DFT per multiplication. For another, each DFT requires computing an exponential, which is pretty expensive.

Most importantly, all of our complex numbers are encoded by pairs of double-precision floating-point numbers, and this makes all of the arithmetic quite a bit more expensive, at least compared to the integer arithmetic we've been doing so far. At this point, though, I hope that you're already in the frame of mind to trust me that this large step back is wind up for a great leap forward. Cooley and Tukey improved the discrete Fourier transform by a divide-and-conquer technique similar to Karatsuba's algorithm for multiplication.

Again, though, I'm not here to give a full explanation---the video is long enough as it is---but here's a quick rundown for what you do. Looking at the discrete Fourier transform of some even-length sequence of complex numbers, we split the summands based on whether the index is even or odd. Then, notice that an even power of an Mth primitive root is the same as half the power of an (M/2)nd primitive root.

Now, we can view these even and odd portions as discrete Fourier transforms of just the even and odd subsequences of a. This allows us to compute the discrete Fourier transform recursively on even and odd parts. However, this is not enough to give us an asymptotic speed up: the real punch line is that the M-periodicity of the powers of an Mth root of unity allows us to compute the M terms of the discrete Fourier transform of a by only computing half of the even pieces and half of the odd pieces: for the first M/2, we use the recursive formula we just derived; for the remaining m/2, we reuse the E_k and O_k computed in the first half.

Recursively, this means that we can compute the discrete Fourier transform of a length M sequence by computing two discrete Fourier transforms of half the length, and then combining them with O(M) work. Spelling this out, this means that the algorithm calculates a discrete Fourier transform in O(M log M) time. For those who don't know, O(M log M) is faster than O(M^alpha) for every alpha greater than 1, meaning that this is a seriously fast algorithm.

. . asymptotically.

This fast Fourier transform is known as the Cooley-Tukey algorithm, so-call because these insights are due to none other than the same person who discovered the Fourier transforms: Gauss. This brings us to our next important life lesson: a true measure of success is when you manage to unmake a name for yourself. Now, with cautious optimism, let's see if this asymptotic improvement of an O(n log n) algorithm overcomes the costs we incurred by going down the Fourier transform route.

At last, we've reached past the 2 millionth Fibonacci number! So far, we've been focusing on improving our multiplication. However, there's also something we can do about our matrices.

Ultimately, our algorithm is still focused on calculating high powers of a 2x2 matrix. While the computer will put up with whatever tasks we give it---best it can---even small powers of a general 2x2 matrix is something we wouldn't bother to do by hand. However, even high powers of a 2x2 matrix [aren't] so bad if the matrix takes a very special shape: a diagonal matrix.

While the Fibonacci transition matrix is not diagonal, it is diagonalisable: it has two distinct eigenvalues, the golden ratio and. . .

the other golden ratio. We can therefore compute a high power of the Fibonacci transition matrix by diagonalising first, and then undiagonalising afterwards. In particular, this gives us a closed formula for the nth Fibonacci number!

This is known as Binet's formula. While this may seem very compact, it uses irrational real numbers! From a previous video, you may know that the general real numbers are more than a computer can handle.

However, we're only using very particular real numbers in this case; specifically, we're only interested in the real numbers obtained by taking the rational numbers, and then adjoining root five. This is much more computationally manageable, because we can encode these numbers as polynomials in the variable t that satisfies t^2 - 5 = 0. In fact, we don't even need this whole field: we know that every Fibonacci number is an integer.

Since (-phi)^(-n) decays to zero very quickly, we can calculate the Fibonacci numbers instead through an approximation: in particular, we can compute the nth Fibonacci number by calculating the nth power of 1 + sqrt(5), taking the coefficient of sqrt(5), and then dividing it by 2^n (which can be done by a bit shift). If we use pairs of numbers to represent elements of Z[sqrt(5)], then we can create an ad hoc Z[sqrt(5)] struct, and this gives us a way of actually implementing Binet's formula using code! This resulting algorithm is still O(n lgo n) if we use FFTs for multiplication; however, instead of using 2x2 matrices of numbers, we're only using pairs of numbers, so since we're only using half of the memory, this should lead to an improvement.

. . .

. . and there we go: with this optimisation, we've almost doubled the distance travelled, reaching past the 4 millionth Fibonacci number!

This is our gold medalist for the 1 second time-limit! If you're still here, thank you for watching! If you like what you watched, I hope you find an efficient way of expressing that somehow.

If you didn't like what you watched. . .

. . .

you saw right through me, eh? What gave it away? Was it the n log n multiplication algorithm?

The double-precision complex numbers? The dislikers are right; I have to come clean. This brings us to our final life lesson: you can't trust everything you've seen on the internet.

While there were certainly practical reasons for setting the time limit to 1 second, instead of 1 minute or 1 hour, it also hides an ugly truth about our fast Fourier transforms. Let me tell you a bit more about the multiplication algorithm. Large numbers are stored as vectors of unsigned bytes, and are naturally represented in base 256.

These digits are individually cast to complex numbers prior to the fast Fourier transform, after the termwise multiplication and inverse fast Fourier transform, since we know that the result should consist of non-negative integers, the results are then rounded to unsigned 64-bit integers, each of which is folded back into a base 256 representation. Now, let's do some back-of-the-envelope calculations: if we have an N-byte number x, the Fourier transform of its sequence of digits is roughly going to need (8 + log N) bits of precision to be accurately represented. If we take the Fourier transform of two such numbers, then the terms of their product z-hat would require about (16 + 2 log N) bits of precision to be accurate.

Finally, the precision requirement for taking the inverse Fourier transform to recover z is coarsely bounded by 1 bit fewer than the last step. As mentioned before, the complex numbers are encoded as a pair of 64-bit double-precision floats. This means that we have (52+1)-bit mantissas.

Maybe we can forgive one additional bit of [im]precision for rounding, since we know that the final result is going to be an integer, so let's call this 54 bits of precision. From our rough analysis above, this means that the byte-length of our multiplication factor is bounded by around 2^19. This means that our multiplications are fine so long as the Fibonacci number is at most 2^19 bytes long.

Thanks to Binet's formula, we can approximate how far in the sequence we can go while respecting this memory limit. We should be fine so long as we are a healthy distance away from the 6 millionth Fibonacci number. I know this is very coarse number crunching.

You can rest assured that I checked all of the digits of our fast Fourier transform output on the 4 millionth Fibonacci number, and they're all correct. I did so by comparing them with a couple of our slower algorithms. In particular, I haven't lied to you about our gold medal achievement.

However, we are cutting it a bit close. 4 million is close to 2^22, and if we go just one order of magnitude larger, both FFT algorithms fail. I hope this is enough to come clean, but if you're still unhappy with what you watched, it's fine.

Although this video has altered your consciousness, you remain irrevocably human. Ergo, some of my content you will enjoy, and some of it you will not. Concordantly, while your antipathy may be most disheartening, you may or may not realise from the ambience of an ineradicable fan to my back that it is also irrelevant.

![The biggest hand calculation in a century! [Pi Day 2024]](https://img.youtube.com/vi/LIg-6glbLkU/maxresdefault.jpg)