What is the free energy principle? So it's just a formal mathematical prescription of the way that things behave, that you can then use to either simulate or reproduce or indeed explain the behavior of things. In the film The Matrix, of course, your brain's reality is the reality you think of and understand, and it is not receiving external input. Most of what the brain is actually in charge of is moving the body or secreting. Those are the only two ways you can change the universe: you can either move a muscle or secrete something. You cannot bend the spoon with your brain.

This is StarTalk Special Edition. Neil deGrasse Tyson here, your personal astrophysicist. And if this is Special Edition, you know it means we've got not only Chuck Nice. How you doing, man?

Hey, buddy.

Always good to have you there as my co-host. And we also have Gary O'Reilly, former soccer pro and sports commentator. Was that the crowd cheering them on?

Yeah, that's the crowd at Tottenham.

Tottenham, yeah.

Crystal Palace, they're all... Anytime you mention my name in this room, there's a crowd effect.

Gary, you know,
with Special Edition, what you've helped do with this branch of StarTalk is focus on the human condition and every way that matters to it: mind, body, soul. It would include AI, mechanical augmentation to who and what we are, robotics. So this fits right into that theme. So take us where you need to for this episode.

In the age of AI and machine learning, we as a society, and naturally we at StarTalk, are asking all sorts of questions about the human brain, how it works, and how we can apply it to machines. One of these big questions is perception. How do you get a blob of neurons...

I think that's a technical term.

Yes, technical for sure.

...in your skull to understand the world outside? Our guest, Karl Friston, is one of the world's leading neuroscientists, an authority on neuroimaging and theoretical neuroscience, and the architect of the free energy principle. Using physics-inspired statistical methods to model neuroimaging data is one of his big successes. He's also sought after by people in the machine learning universe. Now, just to give you a little background on Karl: a neuroscientist
and theoretician at University College London, where he is a professor. He studied physics and psychology at Cambridge University in England. He is the inventor of statistical parametric mapping, used around the world in neuroimaging, plus many other fascinating things. He is the owner of a seriously impressive array of honors and awards, which we do not have time to get into. And he speaks Brit.

Yes, so he's evened this out. There's no more picking on the Brit, because there's only one of them.

Okay. Karl Friston, welcome to StarTalk.

Well, thank you very much for having me. I should just point out I can speak American as well.

Please don't, Karl. That takes a certain level of illiteracy that I'm sure you don't possess.

Yeah, please don't stoop to our level.

So let's start off with something, is it a field, is it a principle, or is it an idea, that you pioneered, which is in our notes as the free energy principle. I come to this as a physicist, and there's a lot of sort of... and in what way they apply. So let's just start off: what is the free
energy principle?

Well, as it says on the tin, it is a principle, and in the spirit of physics it is therefore a method. So it's just like Hamilton's principle of least action. It's just a prescription, a formal mathematical prescription of the way that things behave, that you can then use to either simulate or reproduce or indeed explain the behavior of things. So you might apply the principle of least action, for example, to describe the motion of a football. The free energy principle has a special domain of application: it talks about the self-organization of things, where things can be particles, they can be people, they can be populations. So it's a method, really, of describing things that self-organize themselves.

So, but why give it this whole new term? We've all read about, or thought about, or seen the self-organization of matter. Usually there's a source of energy available, or it reaches a sort of minimum energy, because that's a state that it prefers to have. So a ball rolls off a table onto the
ground; it doesn't roll off the ground onto the table. So it seeks the minimum place. And my favorite of these is the box of morning breakfast cereal. It will always say, "Some settling of contents may have occurred." Yeah, and you open it up and it's like two-thirds full. Two-thirds of powder! You get two-thirds of crushed shards of corn flakes. So it's finding sort of the lowest place in Earth's gravitational potential. So why the need for this new term?

Well, it's an old term, I guess. Again, pursuing the American theme, you can trace this kind of free energy back to Richard Feynman, probably in his PhD thesis. He was trying to deal with the problem of describing the behavior of small particles, and invented this kind of free energy as a proxy that enabled him to evaluate the probability that a particle would take this path or that path. So exactly the same maths has now been transplanted and applied, not to the movement of particles, but to what we refer to as belief updating. So it's lovely you should introduce this
notion of nature finding its preferred state, which can be described as rolling downhill to those free energy minima. This is exactly the ambition behind the free energy principle, but the preferred states here are states of beliefs, or representations, about a world in which something, say you or I, exists. This is a point of contact with machine learning and artificial intelligence. So the free energy is not a thermodynamic free energy; it is a free energy that scores the probability of your explanation for the world in your head being the right kind of explanation. And you can now think about our existence, the way that we make sense of the world, and our behavior, the way that we sample that world, as effectively falling downhill, settling towards the bottom, but in an extremely itinerant, wandering way, as we go through our daily lives at different temporal scales. It can all be described, effectively, as coagulating at the bottom of the cereal packet, in our preferred states.

Wow. So you're... again, I don't want to put words in your mouth
that don't belong there; this is just my attempt to interpret and understand what you just described. You didn't yet mention neurons, which are the carriers, or the transmitters, of all of these thoughts and memories and interpretations of the world. So when you talk about the pathways by which an understanding of the world takes shape, do those pathways track the nearly semi-infinite connectivity of neurons in our brains? So you're finding what the neuron will naturally do in the face of one stimulus versus another?

That's absolutely right. In fact, technically, you can describe neuronal dynamics, the trajectory or path of nerve cell firing, exactly as performing a gradient descent on this variational free energy. So that is literally true. But I think, more intuitively, the idea, in fact the idea you've just expressed, is one you can trace back possibly to the early days of cybernetics, in terms of the good regulator theorem. The idea here is that to be well adapted to your environment, you have to be a model of that environment. In other words, to interface and
interact with your world through your sensations, you have to have a model of the causal structure in that world, and that causal structure is thought to be literally embedded in the connectivity among your neurons, within your brain. My favorite example of this would be the distinction between where something is and what something is. In our universe, a certain object can be in different positions. So if you tell me what something is, I wouldn't know where it was. Likewise, if you told me where something was, I wouldn't know what it was. That statistical separation, if you like, is literally installed in our anatomy. There are two streams in the back of the brain: one dealing with where things are, and another stream of connectivity dealing with what things are.

However, we are pliable enough, and of course I'm not pushing back, I'm just trying to further understand, we're pliable enough that if you were to say, "Go get me the thing," and then you gave me very specific coordinates of the thing, I
would not have to know what the thing is, and I would be able to find it even if there are other things there.

Yep, and that speaks to something which is quite remarkable about ourselves: that we actually have a model of our lived world that has this sort of geometry that can be navigated. Because that presupposes you've got a model of yourself moving in a world, and of the way that your body works. I'm tempted here to bring in groins, but I won't.

No. Chuck injured his groin a few days ago, offline. That's all he's been talking about. We've all heard about it since.

Karl, I hear the term active inference, and then I hear the term Bayesian active inference. Let's start with active inference. What is it? How does it play a part in cognitive neuroscience?

Active inference, I think, most simply put, would be an application of this free energy principle we're talking about. It's a description of applying the maths to understand how we behave in a sentient way. So active inference is meant to emphasize that perception, read as unconscious
inference in the spirit of Helmholtz, depends upon the data that we actively solicit from the environment. What I see depends upon where I am currently looking. So this speaks to the notion of active sensing.

You went a little fast. I'm sorry, man, I'm trying to keep up here. You went a little fast there, Karl. You talked about perception being an inference that is somehow tied to the subconscious, but... can you just do that again, please?

And just to be clear, he's speaking slowly.

Exactly. So it's not that he's going fast.

No, it's that you are not keeping up.

Well, listen, I don't have a problem. I have no problem not keeping up, which is why I have never been left behind. By the way, I have no problem keeping up, because I go, "Wait a minute." Okay, so anyway, can you just break that down a little bit for me?

Sure. I was trying to speak at a New York pace, my apologies. I'll revert to London. Okay, so let's start at the beginning: sense
making, perception. How do we make sense of the world? Our brains are locked inside a skull. It's dark in there. You can't see, other than through the information conveyed by your eyes or your ears or your skin, your sensory organs. So you have to make sense of this unstructured data coming in from your sensory organs, your sensory epithelia. How might you do that? The answer to that, or one answer to that, can be traced back to the days of Plato, through Kant and Helmholtz. Helmholtz brought up this notion of unconscious inference. It sounds very glorious, but very simply it says that if inside your head you've got a model of how your sensations were caused, then you can use this model to generate a prediction of what you would sense if this was the right cause, if you've got the right hypothesis. And if what you predict matches what you actually sense, then you can confirm your hypothesis. So this is where inference gets into the game. It's very much like a scientist who has to use scientific instruments, say microscopes or telescopes, in
order to acquire the right kind of data to test her hypotheses about the structure of the universe, about the state of affairs out there as measured by her instruments. This sort of hypothesis testing, putting your fantasies, your hypotheses, your beliefs about the state of affairs outside your skull to the test by sampling data, is just inference.

So these are micro-steps en route to establishing an objective reality. And there are people whose model does not match the predictions they might make for the world outside of them, and they would be living in some delusional world that does not otherwise agree with what is objectively true. And that would then be an objective measure of insanity, or some other neurological disconnect.

Yeah.

Really, though? I mean, is it really?

Well, if you project your own fantastical world onto reality, and it doesn't sit, but it's what you want, then that's a dysfunction. You're not working with it, you're working against it.

But we
live in a time now where that fantastical dysfunction actually has a place. Talk to James Cameron for just a little bit, and you'll see that that fantastical dysfunction was a world-building creation that we now see as a series of movies. So is it really so aberrant that it's a dysfunction, or is it just different?

Well, I think he's trying to create artistically rather than impose.

Yeah. So, Karl, if everyone always perceived the world objectively, would there be room for art at all?

Oh, that was a good question. Really, it was. Well done, sir.

I'm gonna say I think I was the inspiration for that question.

Yes, Chuck inspired that question. So there's a role for each side of this perceptual reality, correct?

No, absolutely. So let me pick up on a couple of those themes, but that last point was, I think, quite key. It is certainly the case that one application, or one use, of active inference is to understand psychiatric disorders. So you're absolutely right about when people have a model of their lived world that's not quite apt for the situation
in which they find themselves. Say something changes; say you lose a loved one. Your world changes, so your predictions, and the way that you navigate through your day, either socially or physically, have now changed. Your model is no longer fit for purpose for this world. But as Chuck was saying before, the brain is incredibly plastic and adaptive. So what you can do is use the mismatch between what you predict is going to happen and what you actually sense to update your model of the world. And before, I was saying that this is a model that would be able to generate predictions of what you would see under a particular hypothesis, or fantasy. And just to make a link back to AI, this is generative AI. It's intelligent forecasting, prediction under a generative model that is entailed exactly by the connectivity in the brain that we were talking about before.

And it's the free energy principle manifesting when you readjust to the changes, and it's finding the new routes. Presumably, the more accurate your understanding of your world, the lower is that free energy state? Or is it higher? Or lower? What is it?

Yeah, that is absolutely right. So actually, technically, if you go into the cognitive neurosciences, you'll find a big move in the past ten years towards this notion of predictive processing and predictive coding, which again just rests upon this meme that our brains are constructive organs, generating from the inside our predictions of the sensorium. And then the mismatch is a prediction error, and that prediction error is then used to drive the neurodynamics that allow for this revising, or updating, of my beliefs.
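
To make the predictive-coding point concrete, here is a minimal sketch in Python, assuming a toy Gaussian generative model with one hidden cause, an identity mapping from that cause to the sensation, and fixed precisions (inverse variances). The function names and numbers are illustrative choices, not anything from the conversation or from a specific library. Under these assumptions the variational free energy reduces, up to constants, to a sum of precision-weighted squared prediction errors, so its gradient is built from the prediction errors themselves, and descending it is the belief updating described above.

```python
# Toy predictive-coding sketch: belief updating as gradient descent on a
# Gaussian (Laplace-approximated) variational free energy.
# Assumptions (not from the conversation): one hidden cause, identity mapping
# from cause to sensation, fixed sensory and prior precisions.

def free_energy(mu, y, prior_mean, pi_sensory, pi_prior):
    """Sum of precision-weighted squared prediction errors (constants dropped)."""
    eps_sensory = y - mu          # sensory prediction error
    eps_prior = mu - prior_mean   # deviation of the belief from the prior
    return 0.5 * pi_sensory * eps_sensory ** 2 + 0.5 * pi_prior * eps_prior ** 2


def update_belief(y, prior_mean, pi_sensory=1.0, pi_prior=1.0, lr=0.1, steps=200):
    """Descend the free energy; return the final belief and the F trajectory."""
    mu = prior_mean               # start from the prior expectation
    history = [free_energy(mu, y, prior_mean, pi_sensory, pi_prior)]
    for _ in range(steps):
        # The gradient dF/dmu is made of precision-weighted prediction errors.
        grad = -pi_sensory * (y - mu) + pi_prior * (mu - prior_mean)
        mu -= lr * grad           # one step downhill on F
        history.append(free_energy(mu, y, prior_mean, pi_sensory, pi_prior))
    return mu, history


mu, F = update_belief(y=2.0, prior_mean=0.0, pi_sensory=4.0, pi_prior=1.0)
print(round(mu, 3))             # 1.6
print(F[0], round(F[-1], 3))    # 8.0 1.6
```

With sensory precision four times the prior precision, the belief settles at the precision-weighted average of observation and prior, (4 × 2 + 1 × 0) / 5 = 1.6, and the free energy falls from 8.0 to 1.6 along the way. Models used in practice stack many such units hierarchically and learn the mapping too; this keeps only the core step.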

So my predictions are now more accurate, and therefore the prediction error is minimized. The key thing, to answer your question technically, is that the gradients of the free energy that drive you downhill just are the prediction errors. So when you've minimized your free energy, you've...

Absolutely, excellent. And you're not going to roll uphill unless there's some other change to your environment.

So if we think back to early mankind, and the predictability: I'm walking along, I see a lion in the long grass. What do I start to predict? If I run up a tree high enough, that lion won't get me; but if I run along the ground, the lion's probably going to get me. Is this kind of evolutionary, something we're born with for survival? Or have I misinterpreted this completely?

No, no, I think that's an excellent point. Well, let's just think about what it means to be able to predict exactly what you would sense in a given situation, and thereby predict also what's going to happen next. If you can do that with your environment, you've reached the bottom of the cereal packet, and
you've minimized your free energy and your prediction errors. You now fit the world; you can model the world in an accurate way. That just is adaptive fitness. So if you look at this process as unfolding over evolutionary time, you can now read the variational free energy, or its negative, as adaptive fitness. That tells you immediately that evolution itself is one of these free energy minimizing processes. It is also, if you like, testing hypotheses about the kinds of denizens of its environment, the kinds of creatures that will be a good fit for this particular environment. So you can actually read natural selection as what in statistics would be known as Bayesian model selection. You are, in effect, inheriting inferences, or learning transgenerationally, in a way that minimizes your free energy, minimizes your prediction errors.

So things that get eaten by lions don't have the ability to propagate themselves through to the next generation, so that everything ends up at the bottom of the cereal packet, avoiding lions, because those are the only things that can be there. The other ones didn't minimize their free energy.

Yeah, unless
Gary, you made babies before you said, "I wonder if that's a lion in the bushes, let me go check."

But if they've got my genes, then there's a lion with their name on it.

That's exactly right.

I want to share with you one observation, Karl, and then I want to hand back to Gary, because I know he wants to get into the AI side of this. I remember one of the books by Douglas Hofstadter. It might have been Gödel, Escher, Bach, or he had a few more that were brilliant explorations into the mind and body. At the end of one of his books, he had an appendix, I don't remember exactly, a conversation with Einstein's brain. And I said to myself, this is stupid, what does this even mean? And then he went on to describe it: imagine Einstein's brain could be preserved at the moment he died, with all the neurosynaptic elements still in place, and it's just sitting there in a jar. You ask a question, and the question goes into his ears, gets transmitted as sounds that trigger neurosynaptic firings, and it just moves through
the brain, and then Einstein speaks an answer. And the way that setup was established, it was like, yeah, I can picture this sometime in the distant future. Now maybe the modern version of that is you upload your consciousness and then you're asking your brain in a jar, but it's not biological at that point; it's in silicon. But what I'm asking is: the information going into Einstein's brain in that thought experiment presumably triggers his thoughts, and then his need to answer that question, because it was posed as a question. Could you just comment on that exercise, the exercise of probing a brain that's sitting there waiting for you to ask it a question?

It's a very specific and interesting example of the kind of predictive processing that we are capable of, because we're talking about language and communication here. And just note the way that you set up that question provides a lovely segue into large language models. But note also that it's not the kind of embodied intelligence that we were talking about in relation to active inference, because there's no... the brain is in a body.
The brain is embodied. Most of what the brain is actually in charge of is moving the body or secreting. In fact, those are the only two ways you can change the universe: you can either move a muscle or secrete something. There is no other way that you can affect the universe. So this means that you have to deploy your body in a way that samples the right kind of information, to make your model as apt, or as adaptive, as possible.

Well, so, Chuck, did you hear what he said? It means you cannot bend the spoon with your brain.

Right, so that's to Uri Geller, just to clarify.

Okay, so what I was trying to hint at, and I suspect it's going to come up later in the conversation, is that there's, I think, a difference between a brain in a vat, or a large language model that involves lots of knowledge... So one can imagine, say, a large language model being a little bit like Einstein's brain, but Einstein plus possibly a hundred million other people, and the history of everything that has been written, that you can
probe by asking it questions.

And in fact there are people whose entire career is now prompt engineering.

AI prompts, yeah. It's funny, the people who program AI then leave that job to become prompt engineers, the people who are responsible for creating the best prompts to get the most information back out of AI. So it's a pretty fascinating industry, in that they've created their own feedback loop that benefits them.

And now you can start to argue: where is the intelligence? Is it in the prompt engineer? As a scientist, I would say that's where the intelligence is; that's where the sort of sentient behavior is. It's asking the questions, not producing the answer. That's the easy bit. It's querying the world in the right way. And just notice what we are all doing. What is your job? It's asking the right questions.

Karl, can I ask you this, please? Could active inference cause us to miss things that do happen? And secondly, does déjà vu fit into this?

Yes, and yes. So, in a sense, active inference is really
about missing things that are measurable or observable in the right kind of way. Another sort of key thing about natural intelligence, and about being a good scientist, just to point out: noticing, discovering infrared, that's an act of creation; that is art. So where did that come from? From somebody's model about the structure of electromagnetic radiation. So, just to pick up on a point we missed earlier on, creativity and insight are an emergent property of this kind of question answering, in an effort to improve our models of our particular world.

Coming back to missing stuff, it always fascinates me that the way that we can move depends upon ignoring the fact that we're not moving. I'm talking now about a phenomenon in cognitive science called sensory attenuation. This is the rather paradoxical, or at least counterintuitive, phenomenon that in order to initiate a movement, we have to ignore, switch off, and suppress any sensory evidence that we're not currently moving. And my favorite example of this is moving your eyes.
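
One common way the active inference literature formalizes sensory attenuation is as a reduction in the precision assigned to sensory evidence, so that the intended movement is not overruled by evidence of stillness. The sketch below assumes a single Gaussian belief about eye or limb velocity, with the intended movement as the prior and the current sensory report as the likelihood; the function name and the precision values are hypothetical, chosen only to show the effect.

```python
# Toy illustration of sensory attenuation as precision weighting.
# Assumptions (not from the conversation): one Gaussian belief about velocity,
# the prior encodes the intended movement, the senses report current stillness.

def posterior_velocity(intended, sensed, pi_prior, pi_sensory):
    """Combine two Gaussian sources of evidence: a precision-weighted mean."""
    return (pi_prior * intended + pi_sensory * sensed) / (pi_prior + pi_sensory)


intended = 1.0  # the movement we intend (prior expectation)
sensed = 0.0    # sensory evidence that we are currently not moving

# Full sensory precision: the evidence of stillness dominates the belief.
normal = posterior_velocity(intended, sensed, pi_prior=1.0, pi_sensory=4.0)
# Attenuated sensory precision: the intended movement dominates the belief.
attenuated = posterior_velocity(intended, sensed, pi_prior=1.0, pi_sensory=0.25)

print(normal)      # 0.2
print(attenuated)  # 0.8
```

Only the relative precisions matter: turning sensory precision down moves the belief from 0.2 toward the intended velocity (0.8), which, in active inference, is what allows the movement to go ahead. It is a caricature of the mechanism, not a model of saccadic suppression itself.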

If I asked you to track my finger as I move it across the screen, and you moved your eyes very, very quickly, then while your eyes are moving you're actually not seeing the optic flow that's being produced, because you are engaging something called saccadic suppression. This is a reflection of the brain very cleverly knowing that the particular optic flow I have induced is fake news. So the ability to ignore fake news is absolutely essential for good navigation of, and movement in, our world.

Is it fake, or just irrelevant to the moment?

If it's the New York Times, it's definitely fake news.

But it's not so much fake; it's just not relevant to the task at hand. Isn't that a different notion?

It's a subtle one. For the simplicity of the conversation, I'm reading "fake" as irrelevant, imprecise.

So it's unusable. Your brain is just throwing it out, basically: nothing to see here, get rid of that, move along.

So, Neil, this is in your backyard rather more than mine, but isn't this where the Matrix
premise kind of fits in: that our perception might differ from what's actually out there, and that perception can be manipulated or recreated?

Well, I think Karl's descendants will just put us all in a jar, the way he's talking. Karl, what does your laboratory look like? Full of jars?

Well, yes. There are several pods, and we have been waiting for you.

In the film The Matrix, of course, which came out in 1999, now a quarter century ago, which is hard to believe, it was very candid in the sense that your brain's reality is the reality you think of and understand, and it is not receiving external input. All that your brain is constructing is detached from what's exterior to it. And if you've had enough lived experience, or maybe in that future they're describing, the brain can be implanted with memory. It reminds me, what's that movie that Arnold Schwarzenegger is in, about Mars?

Total Recall.

Total Recall, thank you. "Get your ass to Mars." Instead of paying thousands of dollars to go on vacation, they would just implant the memories of a vacation in you.

Yeah, bypassing the sensory conduits into your brain. Of course, these are movies, and they're stories, and it's science fiction. How science-fictiony is it, really?

Well, I certainly think that the philosophy behind probably both Total Recall, but particularly The Matrix, is very real and very current. Just going back to understanding people with psychiatric disorders, or perhaps real people who have odd worldviews: to understand that the way that you make sense of the world can be very different from the way I make sense of the world, dependent on my history, my predispositions, and my priors, what I have learned thus far, and also the information that I select to attend to. So, just pursuing this theme of ignoring 99% of all the sensations: for example, Chuck, are you thinking about your groin at the moment? I would guarantee you're not, and yet it is generating sensory impulses from the nerve endings. But you, at this point in time, were not selecting that. So the capacity to select is, I think,
a fundamental part of intelligence and agency. But of course, to select means that you are not attending to, or selecting, 99% of the things that you could select. So I think the notion of selection is a hallmark of truly intelligent behavior.

Are you analogizing that to large language models, in the sense that it could give you gibberish, it could find crap anywhere in the world that's online, but because you prompted it precisely, it is going to find only the information necessary and ignore everything else?

Yes and no. But
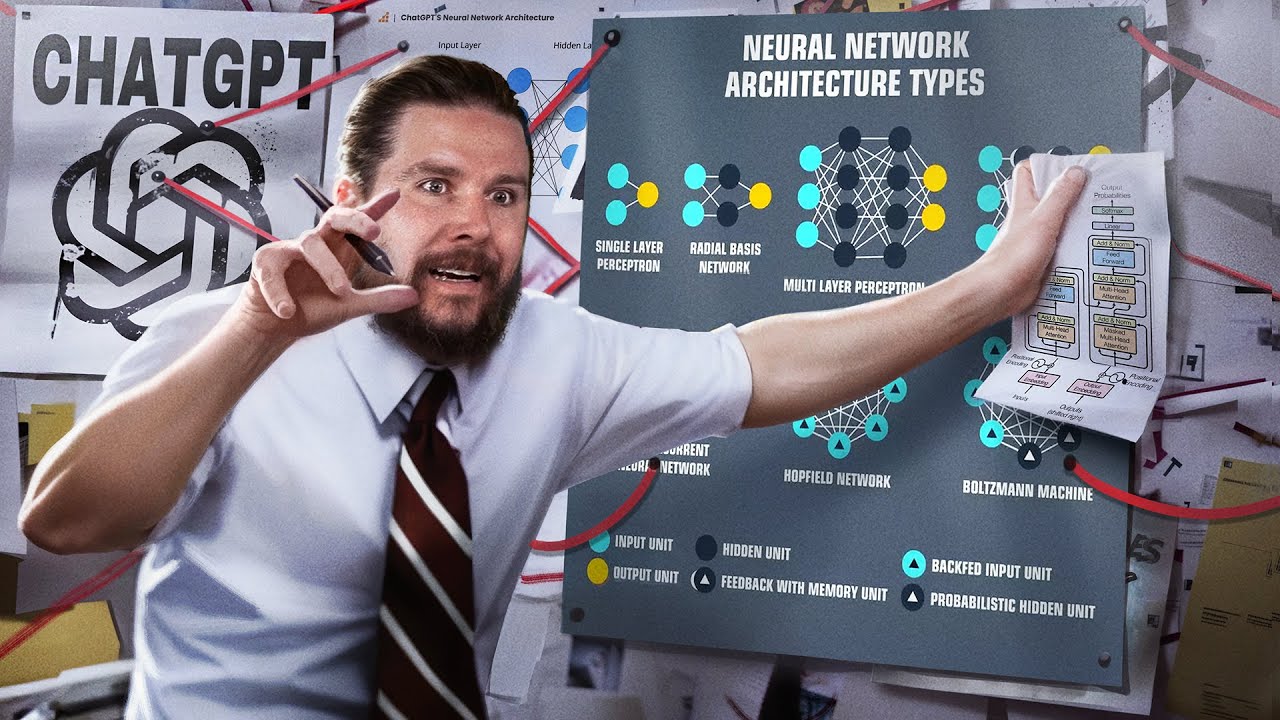

that's a really, really good example. So the "yes" part is that the characteristic bit of architecture that makes large language models work, certainly those implemented using transformer architectures, is something called attention heads. So it is exactly the same basic mechanics that we were talking about in terms of attentional selection that makes transformers work. They select from the recent past in order to predict the next word; that's why they work. They selectively pick out something in the past and ignore everything else.

When you talk about that probability in an LLM, that probability is a mathematical equation that happens for, like, every single letter that's coming out of that model. So it is literally just giving you the best probability of what is going to come next. Whereas when we perceive things, we do so from a worldview. So for an LLM, if you show it a picture of a ball with a red stripe next to a house, and say, "That's a ball," and then show it a picture of a ball in the hands of a little
girl who's bouncing it, it's going to say, all right, that might be a ball, that may not be a ball. Whereas if you show even a two-year-old child, "This is a ball," and then take that ball and place it in any circumstance, the baby will look at it and go, "Ball." So there is a difference in the kind of intelligence that we're talking about here.

Yeah, I think that's spot on. That's absolutely right, and that's why I said yes and no. That kind of fluency that you see in large language models is very compelling, and it can very easily give the illusion that these things have some understanding or some intelligence. But they don't have the right kind of generative model underneath to generalize and recognize a ball in different contexts the way that we do.

Well, it would if it was set up correctly. And that setup is no different from you reading the scene. I mean, a police officer does that busting into a room: who's the perpetrator, who's not, before you shoot? There's an instantaneous awareness factor that
you have to draw from your exterior stimuli. And so, what I'm reminded of here, Karl: I saw one of these New Yorker-style cartoons with two dolphins swimming in one of these water parks, right? So they're in captivity, and one says to the other, of the people walking along the pool's edge, "Those humans, they face each other and make noises, but it's not clear they're actually communicating." And so, who are we to say that the AI large language model is not actually intelligent, if you cannot otherwise tell the difference? Who cares how it generates what it is, if it gets the result that you seek? You're going to say, "Oh, well, we're intelligent and it's not." How much of that is just human ego speaking?

Well, I'm sure it is human ego speaking, but in a technical sense, I think...

Okay, there's a loophole, you're saying. Because I'm not going to say that bees are not intelligent when they do their waggle dance to tell other bees where the honey is. And I'm not going to say termites are not intelligent when
they build something a thousand times bigger than they are, when they make termite mounds and they all cooperate. I'm fatigued by humans trying to say how special we are relative to everything else in the world that has a brain, when they do stuff we can't.

Let me ask you, then: what's the common theme between the termite and the bee and the policeman reading the scene? What do they all have in common? All three of those things move, whereas the large language model doesn't. So that brings us back to this action, the active part of active inference. So the "no" to the question about large language models and attention was that large language models are just given everything. They're given all the data; there is no requirement upon them to select which data are going to be most useful to learn from, and therefore they don't have to build expressive, fit-for-purpose world models, or generative models. Whereas your daughter, the two-year-old daughter playing with the beach ball, would have to, by moving and selectively reading the scene, by moving her eyes, by observing her body, by
observing balls in different contexts, build a much deeper, more appropriate world model, or generative model, that would enable her to recognize the ball in this context and that context, and ultimately tell her father, "I'm playing with a ball."

So we had a great show with Brett Kagan, who mentioned your free energy principle in his work creating computer chips out of neurons, what people call organoid intelligence, and what he was calling synthetic biological intelligence. And that's in our archives.

Our recent archives, actually.

Recent archives, yeah. Do you think the answer to AGI is a biological solution, a mechanical solution, or a mixture of both?

And remind people what AGI is.

Artificial general intelligence.

I know that's what the letters stand for, but what is it?

Not asking me for the answer.

Don't ask me either, seriously. I've been told off for even using the acronym anymore, because it's so ill-defined and people have very different readings of it. OpenAI has a very specific meaning for it; if you talk to other theoreticians, they would represent it... I think what people are searching for is natural intelligence. It's natural. Gary, you know,
to answer your question: do we have to make a move towards biomimetic, neuromorphic, natural kinds of instantiation of intelligent behavior? Yes, absolutely. But, Chuck, just coming back to your previous theme, notice we're talking about behaving systems: systems that act and move and construct and do their own data mining in a smart way, as opposed to just ingesting all the data. So what I think people mean when they talk about superintelligence or generalized AI or artificial general intelligence is just natural intelligence. They kind of mean us. It's our brain. Our brain! If you

want to know what an AGI is, it's our brain, you know, and the way... No, no, Chuck. If it was actually our brain, it would be natural stupidity. Our brain without the stupidity, that's really what it is. So back in December 2022, you dropped a white paper titled "Designing Ecosystems of Intelligence from First Principles." Now, is this a road map for the next ten years or beyond, or to the ultimate destination? And then somewhere along the line you discussed the thinking behind a move from AI to IA, IA standing for intelligent

agents, which seems a lot like moving towards an architecture for sentient behavior. Have I misread this in any way? No, you've read that perfectly. That white paper was written with colleagues in industry, particularly VERSES AI, as exactly a kind of road map for those people who were committed to a future of artificial intelligence that was more sustainable, that was explicitly committed to a move to natural intelligence and all the biomimetic moves that you would want to make, including implementations on neuromorphic hardware, quantum computation and photonics: all those efficient

approaches that would be sustainable in the sense of, you know, climate change, for example. But also, speaking to Chuck's notion about efficiency, efficiency is also, if you like, baked into natural intelligence, in the sense that if you can describe intelligent behavior as this falling downhill, pursuing free energy gradients, minimizing free energy, getting to the bottom of the cereal packet, then you're doing this via a path of least action. That is the most efficient way of doing it, not only informationally but also in terms of the amount of electricity you use and the carbon

footprint you leave behind. So from the point of view of sustainability, it's important we get this right. Part of that white paper was saying there is another direction of travel, away from large language models. "Large" is in the title; it's seductive, but it's also very dangerous. It shouldn't be large; it should be the size of a bee. If you do it biologically, you should be able to do it much more efficiently. And of course the meme here is that our brains work on 20 watts, not 20 kilowatts, and we do

more than any large language model. So we have low-energy intelligence. We do efficient; I guess that's a way to say it. I've seen you quoted, Carl, as saying that we are coming out of the age of information and moving into the age of intelligence. If that's the case, what is the age of intelligence going to look like, or have we already discussed that? Well, I think we're at its inception now, just in virtue of all the wonderful things that are happening around us and the things that we are talking about.

We're asking some of the very big questions about, you know, what is happening and what will happen over the next decade. I think part of the answer to that lies in your previous nod to the switch between AI and IA. IA brings agency into play. So one deep question would be: is current generative AI agentic? Is it an agent? Is a large language model an agent? And if not, then it can't be intelligent, and it certainly can't have generalized intelligence. So what is definitive

of being an agent? I put that out there as a question, half expecting a joke. But I've got Agent Smith in my head, if anyone can take that and run with it. Yeah, there you go. It's right about now that you hear people commenting on the morality of a decision and whether a decision is good for civilization or not, and everybody's afraid of AI achieving consciousness and just declaring that the world would be better off without humans. And I think we're afraid of that because we know it's true. Yeah, I was going to say,

we've already come to that conclusion. That's the problem. Okay, Carl, is consciousness the same as self-awareness? Yeah, I'm sure there are lots of people you could ask that question of and get a better answer. I would say, for the purposes of this conversation, probably not, no. I think to be conscious, certainly to be sentient and to behave in a sentient kind of way, would not necessarily imply that you knew you were a self. I'm pretty sure that a bee doesn't have self-awareness, but it still has sentience. It still has experiences and

has plans and communicates and behaves in, you know, an intelligent way. And you could also argue that certain humans don't have self-awareness of a fully developed sort, you know, in very severe psychiatric conditions. So I think self-awareness is a gift of a particular, very elaborate, very deep generative model that not only entertains the consequences of my actions but also entertains the fantasy or hypothesis that I am an agent, that I am a self and can be self-reflective in a sort of metacognitive sense. So I think,

I think I'd differentiate between being self-aware and simply being capable of sentient behavior. Interesting. That's great. Let me play skeptic here for a moment, mild skeptic. You've accounted for human decision-making and behavior with a model that connects the sensory conduits between what's exterior to our brain and what we do with that information as it enters our brain, and you've applied this free energy gradient that this information follows. That sounds good; it all sounds fine. I'm not going to argue with that. But how does it benefit us to think

of things that way? Or is it just an after-the-fact pastiche on top of what we already knew was going on, but now you've put fancier words behind it? Is there predictive value to this model, or is the predictivity within your reach because, when you assume it, you can actually make it happen in the AI marketplace? Yeah, I think that's the key thing. When I'm asked that question, or indeed when I ask that question of myself, I sort of apply it to things like Hamilton's principle of least action. Why is that

useful? Well, it becomes very useful when you're actually building things. It becomes very useful when you're simulating things. It becomes useful when something does not comply with Hamilton's principle of least action. So, just to unpack those directions of travel in terms of applying the free energy principle: it means that you can write down the equations of motion, and now you can simulate self-organization that has this natural kind of intelligence, this natural kind of sentient behavior. You can simulate it in a robot, in an artifact, in a Terminator, should you want

to, although strictly speaking that would not be compliant with the free energy principle. But you can also simulate it in silico and make digital twins of people and choices and decision-making and sense-making. And once you can simulate, you can now use that as an observation model for real artifacts and start to phenotype, say, people with addiction, or people who are very creative, or people who have schizophrenia. So if you can cast aberrant inference, false inference, believing things are present when they're not or vice versa, as

an inference problem, and you know what the principles of sense-making and inference are, and you can model that in a computer, you now have a sandpit in which you can not only phenotype, by adjusting the model to match somebody's observed behavior, but also go and apply synthetic drugs or do brain surgery in silico. So there are lots of practical applications of knowing how things work, or rather, when I say how things work, how things behave. That presumes that your model is correct. For example, just a few decades ago it was presumed, and

I think no longer so, that our brain functioned via neural nets, neural networks, where it's a decision tree and you slide down the tree to make an ever more refined decision. On that assumption, we then mirrored that in our software to invoke neural-net decision-making. In my field, astrophysics, how do we decide which galaxy is interesting to study versus others among the millions that are in the data set? You just put it all into a neural net that has parameters that select for features that we might, at the end of that

effort, determine to be interesting. We still invoke that, but I think that's no longer the model for how the brain works. But it doesn't matter; it's still helpful to us. You're right, and honestly that is now how AI is organized, around the new way that we see the brain working. Yeah. So why is the brain the model of what should be emulated? I mean, the human physiological system is rife with baggage, evolutionary baggage. Much of it is of no utility to us today, except sitting there available to be hijacked by

advertisers or others who will take advantage of some feature we had 30,000 years ago, when it mattered for our survival, and today it's just dangling there waiting to be exploited. So, a straight answer to your question: the free energy principle is really a description, or a recipe, for the self-organization of things that possess a set of preferred or characteristic states, coming right back to where we started, which is the bottom of the cereal packet. If that's where I live, if I want to be there, if that's where I'm comfortable, then I can give you

a calculus that will, for any given situation, prescribe the dynamics and the behavior and the sense-making and the choices to get you to that point. It is not a prescription for what is the best place to be, or what the best embodied form of that being should be. It's saying that if you exist, and you want to exist in a sustainable way, whether it's, you know, a species or a meme... In a given environment. Yes, in a given setting. It's all about, it's

all about the relationship. Yes, that's a really key point. The variational free energy that we've been talking about, the prediction error, is a measure of the way that something couples to its universe or to its world. It's not a statement about a thing in isolation; it's the fit. You know, again, if you just take the notion of prediction error, there's something that's predicting and there's something being predicted. So it's all relational, it's all observational; it's a measure of adaptive fitness. That's an important clarification here. Yes. Carl, could you give us

a few sentences on Bayesian inference? That's a new word even to many people who claim to know some statistics. It's a way of using what you already know to be true to help you decide what's going to happen next. Are there any more subtleties to Bayesian inference than that? I think what you just said captures the key point. It's all about updating. It's a way of describing inference, by which people just mean estimating the best explanation probabilistically, as a process of inference that is ongoing. So sometimes this is called Bayesian belief

updating: updating one's belief in the face of new data. And how do you do that update in a mathematically optimal way? You simply take the new evidence, the new data, and you combine it using Bayes' rule with your prior beliefs, established before you saw those new data, to give you a belief afterwards, sometimes called a posterior belief. Otherwise, you would just come up with a hypothesis assuming you don't know anything about the system, and that's not always the fastest way to get the answer. Yeah, so you could argue it's important. You can't do it otherwise; it

has to be a process. It has to be a path through some belief space. You're always updating, whether it's at an evolutionary scale or during this conversation. You can't start from scratch. And you're using the word "belief" the way, here Stateside, we might use the phrase "what's supported by evidence." So it's not that I believe something is true. Often the word "belief" is just, well, "I believe in Jesus," or "Jesus is my savior," or Muhammad. So belief is: I'll believe that no matter what you tell me, because that's my belief, right? And my

belief is protected constitutionally on those grounds. When you move scientifically through data and more data come to support it, then I will ascribe confidence in the result, measured by the evidence that supports it. So it's an evidentially supported belief. Yeah, yeah. I guess if we have to say "belief," then it's: what is the strength of your belief? It is measured by the strength of the evidence behind it. Yeah, that's how we have to say that. So, Gary, do you have any last questions before we've got to land this plane? Yeah, I

do, because if I think about us as humans, sadly some of us have psychotic episodes, schizophrenia. If someone has hallucinations, they have a neurological problem that's going on inside their mind. Yet we are told that AI can have hallucinations. I don't know, does AI have mental illness? Hey, it just learned to lie, that's all. You know, you ask it a question, it doesn't know the answer, and it's just like, "All right, well, how about this?" That's what we do in school, right? You don't know the answer,

make it up; it might be right. Right, exactly. "What's the answer?" "Uh, rockets!" Okay, yeah. I was speaking to Gary Marcus in Davos a few months ago, and he was telling me he invented, or applied, the word "hallucination" in this context, and it became word of the year, I think, in some circles. And I think he regrets it now, because the spirit in which he was using it was technically very divorced from the way that people hallucinate. And I think it's a really important question that, you

know, theoreticians and neuroscientists have to think about in terms of understanding false inference in a brain. And just to pick up on Neil's point: when we talk about beliefs, we're talking about subpersonal, nonpropositional Bayesian beliefs that you wouldn't be able to articulate. These are the way that the brain encodes, probabilistically, the causes of its sensations. And of course, if you get that inference process wrong, you're going to be subject to inferring things are there when they're not, which is basically hallucinations and delusions, or inferring things are not there

when they are. And this also happens to us, in terms of neglect syndromes, dissociative syndromes, hysterical syndromes. These can be devastating conditions where you've just got the inference wrong. So understanding the mechanics of this failed inference, hallucination for example, I think is absolutely crucial. It usually tracks back to what we were talking about before, in terms of the ability to select versus ignore different parts of the data. If you've lost the ability to ignore stuff, then very often you preclude an ability to make sense of it, because you're always attending to the surface structure of

sensations. Take, for example, severe autism: you may not get past the bombardment of sensory input in all modalities, from all parts of the scene, on all parts of your sensorium. All alive, right? It's all alive. Guys, I think we've got to call it quits there. Carl, this has been highly illuminating. Yes, good stuff. And what's interesting is, as much as you've accomplished thus far, we all deep down know it's only just the beginning, and who knows where the next year, much less five years, will take this. It'll be interesting to check back in

with you and see what you're making in your basement. With the Brits, Neil, it's the garage. Oh, the garage! You guys, the basement is more... The garage, we go out there. Of wonderful things, yeah. Exactly, exactly. Okay, Professor Carl, thanks for joining us. Thank you very much; the conversation, the jokes particularly, some of the best I've ever done. Thanks for joining us from London, time-shifted from us here Stateside. Again, we're delighted that you could share your expertise with us in this StarTalk Special Edition. All right, Chuck, always good to have you, man. Always a

pleasure. All right, Gary. Pleasure, Neil, thank you. I'm Neil deGrasse Tyson, your personal astrophysicist, as always bidding you to keep looking up.
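For readers who want to see the Bayesian belief updating Carl describes above in concrete form, here is a minimal Python sketch. It is an illustration under loudly stated assumptions, not Friston's own formulation or software: a single hidden quantity, a Gaussian prior, Gaussian sensory noise, and observations equal to that quantity plus noise ("precision" means inverse variance; all function names are hypothetical). Under those assumptions, Bayes' rule combines the prior belief with the new data to give a posterior belief. Feeding each posterior back in as the next prior shows updating as an ongoing process whose confidence grows with the evidence. And, under the Laplace approximation with belief-independent terms dropped, the variational free energy is a sum of precision-weighted squared prediction errors, so "falling downhill" on it lands on the same posterior mean.

```python
"""Bayesian belief updating, and the same update found by descending free energy.

Illustrative assumptions (not from the conversation): one hidden quantity,
a Gaussian prior, Gaussian sensory noise, observation = hidden quantity + noise.
"Precision" means inverse variance. All function names are hypothetical.
"""


def bayes_update(prior_mean, prior_precision, obs, obs_precision):
    """Exact Gaussian Bayes' rule: returns (posterior_mean, posterior_precision)."""
    post_precision = prior_precision + obs_precision
    # The posterior mean is a precision-weighted average of prior and data.
    post_mean = (prior_precision * prior_mean + obs_precision * obs) / post_precision
    return post_mean, post_precision


def update_sequentially(prior_mean, prior_precision, observations, obs_precision):
    """Belief updating as a process: each posterior becomes the next prior."""
    mean, precision = prior_mean, prior_precision
    for obs in observations:
        mean, precision = bayes_update(mean, precision, obs, obs_precision)
    return mean, precision


def free_energy(mu, prior_mean, prior_precision, obs, obs_precision):
    """Free energy (Laplace approximation, mu-independent terms dropped):
    a sum of precision-weighted squared prediction errors."""
    sensory_error = obs - mu       # how badly the belief predicts the data
    prior_error = mu - prior_mean  # how far the belief strays from the prior
    return 0.5 * (obs_precision * sensory_error**2 + prior_precision * prior_error**2)


def infer_by_descent(prior_mean, prior_precision, obs, obs_precision,
                     step=0.05, n_steps=2000):
    """Gradient descent on free_energy with respect to the belief mu.

    Converges when 0 < step * (prior_precision + obs_precision) < 2.
    """
    mu = prior_mean  # start from what you already believe, not from scratch
    for _ in range(n_steps):
        # Derivative of free_energy with respect to mu.
        grad = -obs_precision * (obs - mu) + prior_precision * (mu - prior_mean)
        mu -= step * grad  # "fall downhill" on free energy
    return mu


if __name__ == "__main__":
    # Prior belief 0 (precision 1); one observation of 2 with precision 3.
    print(bayes_update(0.0, 1.0, 2.0, 3.0))      # (1.5, 4.0)
    print(infer_by_descent(0.0, 1.0, 2.0, 3.0))  # approximately 1.5
    # Three weaker observations of 2 (precision 1 each) end up in the same
    # place, with confidence (precision) growing as evidence accumulates.
    print(update_sequentially(0.0, 1.0, [2.0, 2.0, 2.0], 1.0))  # approximately (1.5, 4.0)
```

The exact update and the descent agree here only because this toy model is linear and Gaussian; for richer generative models the free energy can generally be minimized only approximately.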