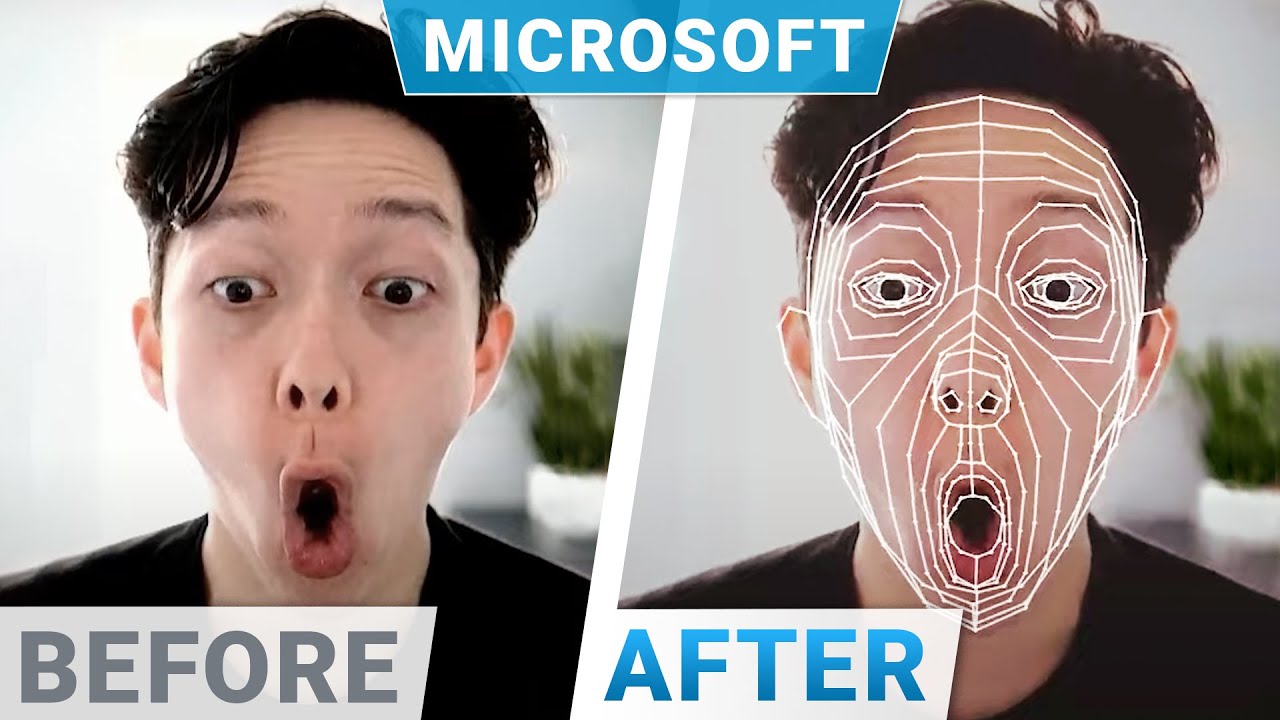

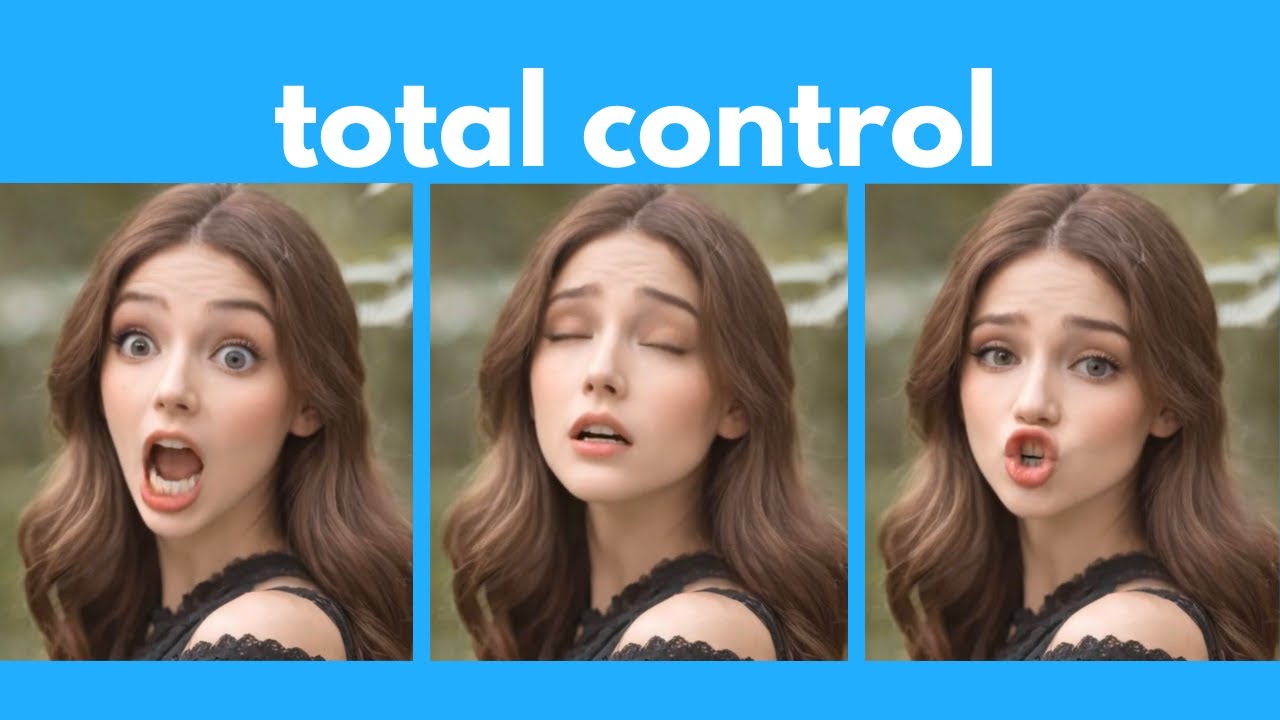

Dear Fellow Scholars, this is Two Minute Papers with Dr Károly Zsolnai-Fehér. Today we are going to make the Mona Lisa and other fictional characters come to life through this DeepFake technology that is able to create more detailed videos than previous techniques. So, what are DeepFakes?

Simple, we record a video of ourselves, as you see me over here doing just that, and with this, we can make a different person speak. What you see here is my favorite DeepFake application where I became these movie characters and tried to make them come alive. Ideally, expressions, eye movements and other gestures are also transferred.

Some of them even work for animated movie characters too. Absolutely lovely. This can and will make filmmaking much more accessible for all of us, which I absolutely love.

Also, when it comes to real people, just imagine how cool it would be to create a more smiling version of an actor without actually having to re-record the scene. Or maybe even make legendary actors appear in a new movie. The band Abba is already doing these virtual concerts where they appear as their younger selves, which is a true triumph of technology.

What a time to be alive! Now, if you look at these results, these are absolutely amazing, but the resolution of the outputs is not the greatest. They don’t seem to have enough fine detail to look truly convincing.

And here is where this new paper comes into play. Now, hold on to your papers and have a look at this. Wow.

Oh my! Yes, you are seeing correctly, the authors claim that these are the first one-megapixel-resolution DeepFakes out there. This means that they showcase considerably more detail than the previous ones.
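To get a feel for what "one megapixel" means, here is a tiny back-of-the-envelope sketch. The two image sizes are assumptions picked purely for illustration (they are not taken from the paper): a 1024×1024 image is roughly one megapixel, and 256×256 stands in for a lower-resolution output.

```python
def pixel_count(width, height):
    """Number of pixels in an image of the given size."""
    return width * height

# Both sizes are illustrative assumptions, not figures from the paper.
low_res = pixel_count(256, 256)      # hypothetical lower-resolution output
high_res = pixel_count(1024, 1024)   # roughly one megapixel

print(high_res)             # total pixels at the higher resolution
print(high_res // low_res)  # how many times more pixels that is
```

Under these assumed sizes, the higher-resolution image has sixteen times as many pixels to fill with detail.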

I love these, the amount of detail compared to previous works is simply stunning. But, as you see, these are not perfect by any means. There is a price to be paid for these results.

And the price is that we need to trade some temporal coherence for these extra pixels. What does that mean? It means that as we move from one image to the next one, the algorithm does not have a perfect memory of what it had done before, and therefore some of these jarring artifacts will appear.
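Here is a minimal sketch of what "memory" between frames buys us. It is a toy illustration, not the method from the paper: frames are plain lists of brightness values, the hypothetical `flicker` function measures how much the picture changes from one frame to the next, and the hypothetical `smooth` function blends each new frame with the previous result.

```python
import random

def flicker(frames):
    """Mean absolute change between consecutive frames (lower means steadier)."""
    total, count = 0.0, 0
    for prev, curr in zip(frames, frames[1:]):
        for a, b in zip(prev, curr):
            total += abs(a - b)
            count += 1
    return total / count

def smooth(frames, memory=0.8):
    """Blend each frame with the running result, giving the output a 'memory'."""
    out = [list(frames[0])]
    for frame in frames[1:]:
        prev = out[-1]
        out.append([memory * p + (1.0 - memory) * c for p, c in zip(prev, frame)])
    return out

rng = random.Random(0)
# Toy stand-in for frame-by-frame generation (an assumption for illustration):
# a static scene where every frame gets its own independent noise.
scene = [0.5] * 16
independent = [[p + rng.uniform(-0.1, 0.1) for p in scene] for _ in range(30)]
smoothed = smooth(independent)

print(flicker(independent))  # frames generated with no memory
print(flicker(smoothed))     # same frames, blended with the past
```

The blended sequence flickers less, but this simple kind of memory is not free either: on moving content it would smear and lag, which is part of why keeping both fine detail and temporal coherence is hard.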

Now, you are all experienced Fellow Scholars, so here, you know that we have to invoke The First Law Of Papers, which says that research is a process. Do not look at where we are, look at where we will be two more papers down the line. So, megapixel results huh?

How long do we have to wait for these? Well, if you have been holding on to your papers so far, now, squeeze that paper because these somewhat reduced examples work in real time. This means that we can point a camera at ourselves, do our thing, and see ourselves become these people on our screens in real time.

Now, I love the film directing aspect of these videos, and also, I am super excited for us to be able to even put ourselves into a virtual world. And clearly, there are also people who are less interested in these amazing applications of these techniques. To make sure that we are prepared and everyone knows about this, I am trying my best to teach political decision makers from all around the world, so that they can make the best decisions for all of us.

And I do this free of charge. As any self-respecting Fellow Scholar would do, you can see me holding on to my papers here at a NATO conference. At such a conference, I often tell these political decision makers that DeepFake detectors also exist, how reliable they are, what the key issues are, and more.

So, what do you think? What actor or musician would you like to see revived this way? Prince or Robin Williams anyone?

Let me know in the comments below! Thanks for watching and for your generous support, and I'll see you next time!
