You might think technology is the great leveler and that ones and zeros don't have racial bias. Google's Photos app apparently automatically labeled a black couple as gorillas. You'd be wrong. It's common knowledge that this algorithm is racially biased. As technology races further and faster ahead, is the world in danger of automating racism? All kinds of structural inequality are reflected in our AI systems. And what can be done to fix it?

[Music]

Yeah, suddenly I deep clean the car. The sanitization of stuff, you know, it's even more important.

For Pa Manjang, keeping his car clean is vital.

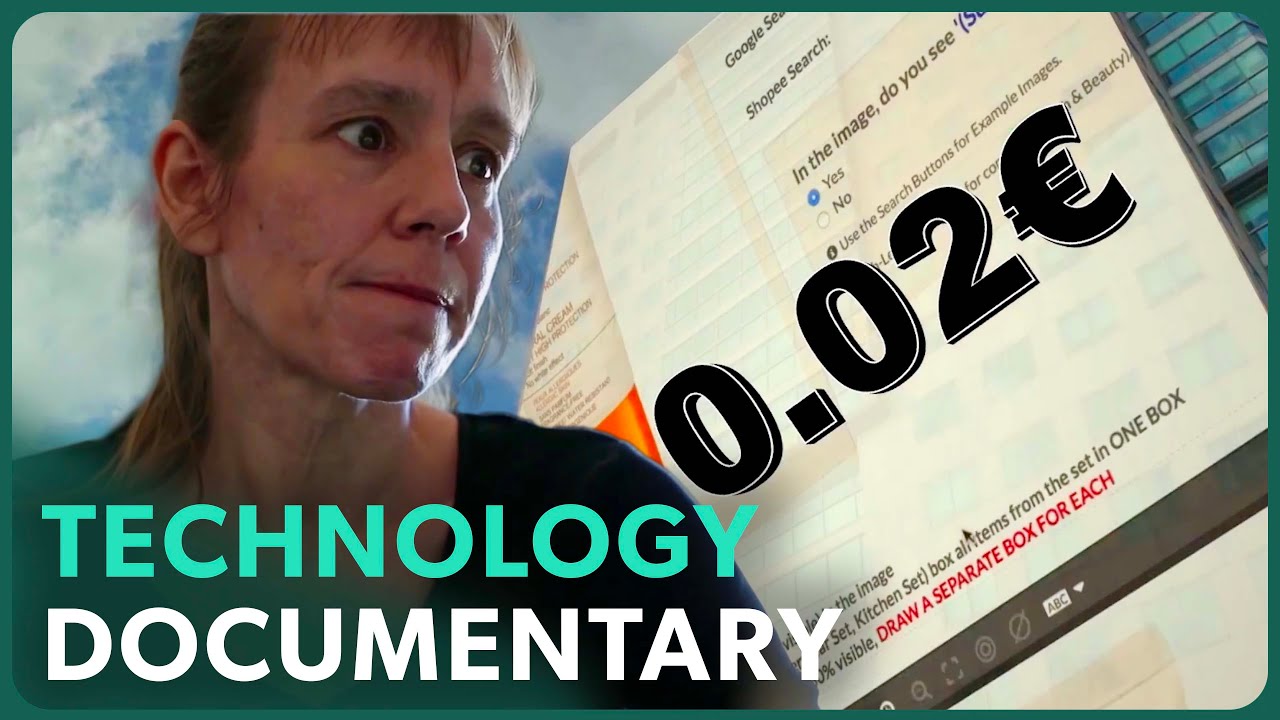

Working as an Uber driver supported his family and relatives back home in the Gambia, until he lost his job. Pa believes he's a victim of racial bias built into Uber's software. Last April he got a message from Uber.

After my shift finished one night, I came home. I was looking at my emails and I saw one that says, like, your account has been permanently deactivated. I thought it was just like a computer mishap.

Following public concerns about customer safety, Uber introduced a new system in 2020 to verify drivers' identities. Periodically, drivers are asked to upload selfies, which

are checked against photos supplied when they first joined Uber. Despite providing numerous selfies, Pa says, Uber's algorithm failed to match them to his profile.

I didn't think much of the whole thing, because I thought if a human being reviews it, it'll be clear. I'll be back online the next morning.

But Pa says his account remained deactivated. He later discovered he was far from the only ethnic minority driver to have experienced this indignity.

I knew from the stories that I come across online that some of the black people have got the same experiences

with the Uber algorithm.

Uber employs at least 70,000 drivers in the UK, 52% of whom are from an ethnic minority. Pa and a handful of other drivers are taking Uber to court for unfair dismissal. They allege Uber's algorithm discriminated against them because of the color of their skin. The company denies these claims and says it uses a robust system of human review to ensure decisions about livelihoods are not made without oversight.

Many technologies have a history of embedded racism. In the 1970s Kodak color film was unable to capture darker skin tones accurately. The company

only fixed this after chocolate makers complained its photos weren't doing their products justice.

The racist history behind facial recognition. Techno-racism.

[Music]

In recent times Microsoft, Facebook and Google have all had high-profile problems with their technology.

Just recently Facebook's AI got it terribly wrong when it put the label "primates" on a video of black men.

So what is going wrong? The simple answer is that technologies are trained using publicly available data sets which do not include sufficient data from ethnic minorities.

The problems of the world include all kinds of structural inequality, and those problems are

reflected in the data that we're using to train our AI systems.

It's commonly believed that technology is neutral and free of bias.

So one of the things that I write about is an idea that I call technochauvinism. It's the idea that technology is superior, that technological solutions are superior.

As the world puts greater faith in technology, embedded biases are affecting black people in all aspects of their lives. During the covid pandemic, pulse oximeters have been essential in measuring blood oxygen levels. But then researchers discovered that they gave flawed readings for black patients.

According

to the researchers who discovered this bias, it was very possible that darker-skinned patients were turned away and sent home to self-monitor because medical practitioners thought that they were doing well. So this is one example of how racial bias can really be a matter of life or death. I don't actually think that the creators intended to be racist. I think that they were probably a group of light-skinned developers who tested it on themselves and said, oh, it works for us, it must work for everybody.

And if you want to buy a house, software might also

discriminate on the basis of your skin color.

Brooklyn native Rachelle Faroul moved to Philadelphia in 2015 hoping to buy a home here.

Research in the US found that older credit-scoring algorithms used by some mortgage lenders favored particular financial behaviors that are more common among white people.

[Music]

Black applicants for home loans were 80 percent more likely to be rejected than white applicants from similar backgrounds.

[Music]

So what can be done to fix the problem of racial bias with computers? Data journalist Meredith Broussard and her colleague Thomas Adams are working on a solution.

Even if

people don't think that race data is being used, the computer may actually be using race data, because AIs are making all these decisions.

They're fighting fire with fire by designing a unique set of software tools to identify bias embedded within technologies.

Once you set the standard, then you can refine the standard. But until you do that, you don't really know what's going on.

I've partnered with O'Neil Risk Consulting in order to come up with what we call a regulatory sandbox, which is a software system that companies are going to be able to use to test

out their algorithms for bias, in order to confirm that companies are not releasing biased algorithms into the world.

However, observers worry that tech companies cannot be trusted to police themselves.

For many companies, reducing racial bias will align with their bottom line. However, in the instances when reducing racial bias does not align with the bottom line, it might be necessary to regulate those companies.

For many, there's only one way to ensure that systemic racism isn't built into the digital future: much tighter regulation.

We're at an interesting inflection point right now. Some of the changes that would

need to happen to combat racial bias include government regulatory agencies having more resources to take action.

But so far only the EU has started to get serious about policing tech companies big and small. In a white paper on artificial intelligence, it's proposing a groundbreaking principle: the higher the risk posed by an AI system to fundamental rights, the stricter the oversight rules.

I do think that the EU's draft regulations are an important step. There still are some concerns, because it assumes that we have a shared understanding of what risk is and how to make those calculations.

Without

effective regulation and more concerted self-policing, the old scourge of racism risks blighting the new digital future.

I'm Tamara Gilkes Borr, US policy correspondent at The Economist. If you'd like to learn more about tech bias, you can read my piece by clicking on the link, and if you'd like to watch more of the Now & Next series, you can click on the other link. Thank you for watching, and please do not forget to subscribe.
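
The transcript mentions software that lets companies test their algorithms for bias, and a finding that black mortgage applicants were 80 percent more likely to be rejected. As a rough illustration only, the Python sketch below shows one simple check such a test might include: comparing rejection rates between two groups. It is not the tool described in the video. The group labels, the data, and the function names `rejection_rates` and `relative_rejection` are all hypothetical, and the sketch does not control for applicants' backgrounds the way the cited research did.

```python
from collections import defaultdict


def rejection_rates(decisions):
    """Return {group: share of applications rejected}.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False. Both names are illustrative assumptions.
    """
    totals = defaultdict(int)
    rejected = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if not approved:
            rejected[group] += 1
    return {group: rejected[group] / totals[group] for group in totals}


def relative_rejection(rates, group, reference):
    """How many times more often `group` is rejected than `reference`."""
    return rates[group] / rates[reference]


# Made-up data: group A is rejected 10 times in 100, group B 18 times in 100.
decisions = (
    [("A", True)] * 90 + [("A", False)] * 10
    + [("B", True)] * 82 + [("B", False)] * 18
)

rates = rejection_rates(decisions)
ratio = relative_rejection(rates, "B", "A")

# A ratio of 1.8 means group B is 80 percent more likely to be rejected.
print(f"{ratio:.2f}")  # prints 1.80
```

A ratio well above 1 does not prove discrimination on its own, but it flags a disparity that a human reviewer or regulator would want explained.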