This is the Genesis project, and it's a bit hard to even put into words the actual extent of what this is, of what this means. So Genesis is a generative AI model, similar to a large language model or an image or video model. It produces something, it generates something, but instead of videos or text or images, it's a generative physics engine able to generate four-dimensional dynamical worlds, powered by a physics simulation platform designed for general-purpose robotics and physical AI applications. We're basically talking about something very close to a full-blown simulation of entire worlds.

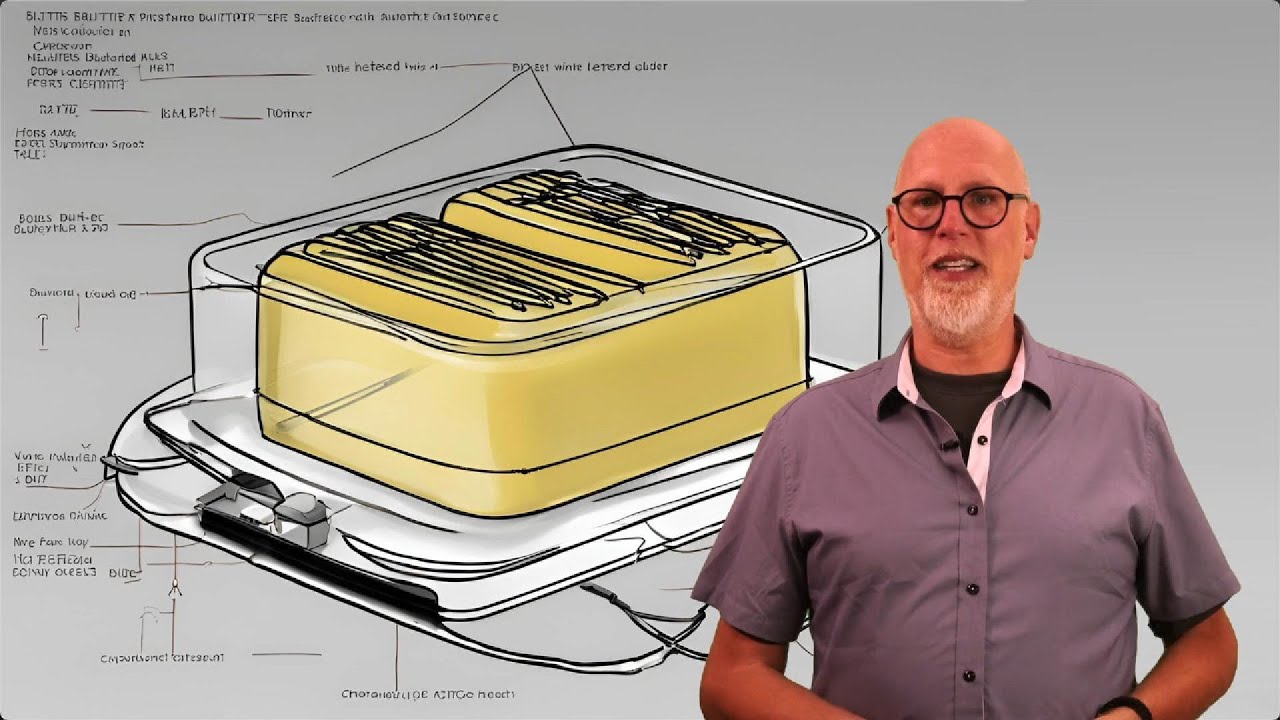

As AI takes over, make this your mantra: let the robots do the work. Subscribe to stay on top of AI news. Interestingly, the Genesis physics engine is developed in pure Python, a very popular programming language, especially when it comes to AI, but it's 10 to 80 times faster than existing GPU-accelerated stacks like Isaac Gym. We've talked about Isaac Gym: it's what Nvidia uses to train robots in simulation. It's powered by GPUs, Nvidia's graphics chips, and some of the Nvidia simulations boast that they deliver a simulation speed of up to 10,000 times

faster than the real world while still retaining all the physics of the real world. Genesis delivers a simulation speed 430,000 times faster than real time, than our world, and it takes only 26 seconds to train a robotic locomotion policy that's transferable to the real world on a single RTX 4090. An RTX 4090 is what I currently have in the computer on which I'm recording this video. They're not cheap, but they're still accessible to regular consumers as at-home consumer hardware, which means that my home computer can run a simulation and train a robot to, let's

say, walk, right? As they say, a robotic locomotion policy. It's able to run a simulation that trains that robot to walk in under 30 seconds. Then I can sort of take those skills and copy and paste them to a real-life physical robot that is able to walk out here in the real world. Keep in mind, since Genesis simulates the physics of our world perfectly, that means that if a robot learns to walk inside that simulation, it has the skills to walk out here in the real world. Oh, by the way, this

is open source, so that means that you can actually do this for free on your computer. And Genesis isn't just one thing. It is, as they call it, a unified simulation framework created all from scratch that combines a lot of different state-of-the-art physics solvers, allowing simulation of the whole physical world in a virtual realm with the highest possible realism. Their goal is to create an all-in-one universal data engine, something that can autonomously create physical worlds and various modes of data: environments, camera motions, robotic task proposals, reward functions, robot policies, character motions, fully interactive 3D scenes, and

much, much more. All this is done for the purpose of completely automated data generation for robots: basically a whole world for robots to run around in and learn how to move around, avoid obstacles, pick up things, open doors, whatever. So Nvidia did a lot of the groundbreaking work when it came to using graphics processing units, right, GPUs, Nvidia cards, using that sort of GPU acceleration for robotic simulation. They increased simulation speed by more than one order of magnitude compared to CPU-based simulations. So whatever we were able to do with basic central processing units, CPUs,

we increased that speed by one order of magnitude. You can think of that as increasing it by 10x, or even close to 100x. This model, Genesis, pushes up the speed by another order of magnitude, and it does so without any compromise in simulation accuracy. So they're not cutting corners, they're not making the simulation less accurate, but they're able to take the speed of the simulation to the next level. Since Genesis is a combination of these physics solvers, these different physics models, it can simulate a wide range of materials: rigid bodies, like a bowling ball;

articulated bodies, like the standard robots that we think of; but it can also do cloth, liquids, deformables, various thin-shell materials, and elastic or plastic bodies. And also, interestingly enough, Genesis is the first-ever platform to provide comprehensive support for soft muscles and soft robots and their interactions with rigid robots. This has been seen as kind of a big breakthrough. Soft robots and soft muscles are notoriously hard to simulate, to make work, but they would allow for a much wider range of things that we could create. And it

works very similarly to how a chatbot would work: you simply type in what you want simulated, and it does so. The generative agents autonomously propose various robotic tasks: cleaning up, moving stuff around, picking stuff up off the floor. They provide the environments, they design the layouts, and they write the reward functions, so actually the code that makes the robot, let's say, want to do certain things, and gives it an attaboy when it does it right or some penalty when it, let's say, drops some fragile item. That ultimately leads to automated generation of robotic policies. Robotic

policies are these skills that robots can execute, first in simulation and then in the real world. Not only that, but Genesis can do a lot of things with character motion, including creating some almost video-game-like characters, characters that can run, dance, fight, stagger. If you look at this robotic arm, there are a lot of ways in which it can fold to be able to manipulate an object, like grasp something or pick something up. The method that's used to calculate all the joint angles is called inverse kinematics, or IK. Not

a trivial task. Here you can see Genesis's GPU-parallelized IK solver. So basically, the calculation of how that arm should fold along all its joints: it's able to solve those calculations for 10,000 of those arms simultaneously in under 2 milliseconds, and again, that's on that RTX 4090, right, consumer-grade hardware. It supports native non-convex collision handling, which is the idea of dealing with some of the more complex shapes that interact with each other, like nuts and bolts or multiple gears that are driving each other. And here's a cute interactive physical Tetris game made with Genesis. I'm

guessing the Tetris pieces are made out of Jell-O. So as you can tell, there's a lot going on here. First of all, we're creating entire four-dimensional dynamical and physical worlds: highly accurate, highly precise physics, very diverse physics across many different sorts of shapes and sizes and material behavior. Not only that, but they have very precise camera motion, allowing for both simulations of these objects as well as visual data gathering if you're training a robot, as well as taking a step forward towards actually autonomously creating these robotic training sessions. This simulation creates these jungle gyms

that begin to train the robots to do the stuff without a lot of human intervention. And of course, we've covered this in some of the previous videos, but there's this idea of sim-to-real, so simulation to the real world. A lot of different companies and research organizations have had a lot of success with that. By training the robots in simulation, they're able to create these skills, these robot policies, a lot faster, a lot cheaper, and in a way that's a lot more scalable than it would be in real life. They don't have to worry about the cost of

electricity and replacing parts, wear and tear, and they're able to make the simulation run, you know, in the case of Isaac Gym, 10,000 times faster; in the case of Genesis, it sounds like they're saying it's almost half a million times faster than the real world. So when we train these robots in the simulation, we find that with this sim-to-real, this idea of taking them out from the simulation into the real world, the knowledge that they gain in the simulation tends to transfer fairly well. One of the things, for example, that Google DeepMind

researchers talked about is creating a little bit more of a chaotic atmosphere in the simulation, so adding some random factors to account for potential variability in the real world. In the real world, some floors might have different friction levels, there might be random gusts of wind, a wire in one hand of the robot might be passing a little bit less current, meaning that there's just a little bit less force that it can generate. So little things like that are added into the simulation. Again, this is from Google DeepMind, but others probably use

similar training tactics. But what that means is that when that knowledge is taken from the simulation into the real world, the robots tend to be fairly robust. They tend to be pretty agile. They tend to be able to recover from various slips and falls: if they lose their footing, they know how to react and adapt and recover from that. The sim-to-real policy transfer can create various backflipping robots, robots that are capable of dragging chairs around, putting chairs in the right places, and many, many other behaviors that we've seen recently. Now, obviously, this has

an infinite number of applications, not only for training various robots but various embodied AI, because it could be training factories or cars or robots. Pretty much anything that moves can be automated using something like this. The fact that this is open source and can be run on an RTX 4090, meaning that most people can run it at home if they have a computer powerful enough, is going to open up a lot of doors for the next generation of people in robotics, both professional researchers and just enthusiasts. We've just reached the point

in the progress of robotics where anybody with a few thousand to invest in a decent computer can begin, for free, to train a robot or any embodied AI, in whatever form factor they choose, to start completing whatever tasks they want it to: cleaning up the house, watering the plants, or a fleet of 24 drones taking off from the ground and performing a flip together. The possibilities are endless. Let me know what you think about this. Is this exciting? A little bit scary? Do you support open-source simulations like this for training robots? Do you think there could

be potentially some pitfalls, some dangers there? Let me know what you think in the comments. The Twitter posts had millions of views. There's a lot of interest in this. There are going to be a lot of people installing this from GitHub and trying it out and seeing what they can do. We're in the middle of the AI revolution right now, and the very next big wave is going to be the robotic revolution. We've already seen kits where you're able to get robots that are not that expensive, thousands of dollars, low tens of thousands. We're, like,

this year able to train them, and once they're trained to do a particular task, you can easily share that task, similar to how you can share an email or a picture. I personally can't wait to get started, but let me know what you think. If you made it this far, thank you so much for watching. My name is Wes Roth, and I'll see you in the next one.
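
To make the "chaotic atmosphere" idea from earlier a bit more concrete (random floor friction, gusts of wind, a slightly weaker motor), here's a minimal sketch in plain Python. This is not Genesis code and not anything published by DeepMind: the `EpisodeParams` class, the `sample_episode_params` function, and every numeric range are made up purely for illustration. It only shows the general shape of the trick, which is to draw a fresh set of slightly perturbed physics settings for every training episode.

```python
import random
from dataclasses import dataclass


@dataclass
class EpisodeParams:
    """Physics settings for one training episode (hypothetical names)."""
    floor_friction: float  # friction coefficient of the floor
    wind_force: float      # sideways gust in newtons, may be negative
    motor_strength: float  # fraction of nominal actuator force (1.0 = nominal)


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw one set of perturbed physics settings.

    The ranges are illustrative guesses, not values from any paper or library.
    """
    return EpisodeParams(
        floor_friction=rng.uniform(0.4, 1.2),
        wind_force=rng.uniform(-5.0, 5.0),
        motor_strength=rng.uniform(0.8, 1.0),
    )


rng = random.Random(0)  # fixed seed so the run is repeatable
episodes = [sample_episode_params(rng) for _ in range(1000)]

# Every episode sees slightly different physics, so a policy trained across
# all of them cannot rely on one exact friction value or motor strength.
weakest = min(e.motor_strength for e in episodes)
print(f"sampled {len(episodes)} episodes, weakest motor at {weakest:.2f} of nominal")
```

In a real training setup, each sampled set of parameters would be handed to the simulator before an episode starts. How that handoff works depends entirely on the simulator, so it's left out here.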

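For a feel of what the inverse kinematics calculation mentioned earlier actually involves, here's a small sketch for the simplest possible case: a flat, two-joint arm, solved with the textbook closed-form formula. This is an assumption-heavy toy, not Genesis's solver, which handles full multi-joint arms and runs in parallel on the GPU. The function names `two_link_ik` and `forward` and the link lengths are invented for the example, and the 10,000 targets are solved one after another in an ordinary Python loop.

```python
import math
import random


def two_link_ik(x, y, l1=1.0, l2=1.0):
    """Return (shoulder, elbow) angles in radians that place the tip of a
    planar two-link arm at (x, y), or None if the target is out of reach."""
    d2 = x * x + y * y
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_elbow) > 1.0:
        return None  # target is too far away or too close to the base
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward(shoulder, elbow, l1=1.0, l2=1.0):
    """Forward kinematics: where the tip ends up for the given joint angles."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


# Build 10,000 reachable targets, one per imaginary arm.
rng = random.Random(0)
targets = []
for _ in range(10_000):
    radius = rng.uniform(0.2, 1.9)  # total reach of the arm is l1 + l2 = 2.0
    angle = rng.uniform(-math.pi, math.pi)
    targets.append((radius * math.cos(angle), radius * math.sin(angle)))

solutions = [two_link_ik(x, y) for x, y in targets]

# Check each answer by running the joint angles back through forward().
max_error = 0.0
for (x, y), (shoulder, elbow) in zip(targets, solutions):
    fx, fy = forward(shoulder, elbow)
    max_error = max(max_error, math.hypot(fx - x, fy - y))

print(f"solved {len(solutions)} arms, worst tip error {max_error:.2e}")
```

A real arm with six or seven joints generally has no tidy formula like this, which is why solvers typically fall back on iterative numerical methods, and why doing 10,000 of them at once is a meaningful benchmark.
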