All right, thank you all for coming today. We're going to get started right on time here; hopefully a few more people will drift in from other sessions. We'll spend the first half hour or so talking in some depth about the F1 FPGA instances: how they're used, what the use cases are, and the development process. I'll move fairly quickly through that part of the presentation because I really want to get to motivating use cases, and we have Eric Stahlberg here from Frederick National Laboratory to talk about the motivators for

accelerated computing. So again, the agenda today: I want to talk briefly about F1, how it differs from other AWS instance types and how it's similar. I want to talk about the programming environment, and I'm going to make an assumption that you have little or no knowledge of FPGA programming. But I'll ask first: how many do have some FPGA programming experience? Well, that's actually a surprising number. Eric, you don't count; George, you don't count. But that's good, so we can go into a little bit of depth, and certainly in Q&A afterwards. I'll also

direct you to some of the resources that are available on GitHub, on the community forum, and so forth. We'll also talk briefly about how applications are delivered: how you create an Amazon FPGA Image, an AFI, and then either deploy it in your own applications or offer it to third parties through a marketplace, and how that works. And then, as I said, I'd like to back up a bit and talk about the real motivators for accelerated computing. Those would apply to GPUs and FPGAs, but specifically FPGAs: what is driving us

to do this on the cloud? So first, the goals of the F1 FPGA instances. We really did want to make FPGA acceleration capabilities accessible to dramatically more end users. There are absolutely applications that have been well proven for FPGA acceleration for many, many years, in areas like genomics processing, high-performance data analytics, image processing, and video. These are applications that have existed for quite some time, and have existed in the form of hardware-accelerated systems, appliances if you will, that are used in a wide variety of industries and in the research sector.

Another important motivator is just to make development of hardware-accelerated systems much, much easier and faster, in part by providing the development tools in the cloud in a very standardized environment that's easy to access and easy to deploy. We also wanted, as part of that, to allow application developers working with the FPGA to really focus on the application itself, on the algorithms, and not so much on the low-level issues of I/O: how do I communicate with RAM, how do I push data around? We want to abstract that to the greatest extent possible

while providing lots of options for different types of application architectures. And then finally, as I said before, providing that marketplace, so now somebody who's an expert, perhaps in image processing, video processing, or genomics, can offer their solution to other customers in the marketplace, as they do today with traditional software. To quickly review the AWS instance types, the EC2 instance types: as you know, we have a variety of these, and over time we've added more and more different types of EC2 instances. Some of these are quite specialized. For example, on the storage side, the I3

instance is quite specialized for extremely high IOPS, locally attached storage types of applications, like scalable NoSQL databases. X1 instances are specialized for very high memory requirements, large in-memory analytics for example. And certainly, on the right side, GPU acceleration has existed in the cloud for some time now, both for graphics acceleration, which is the primary use case for G2, and for compute acceleration. The P2 is a great example of that: released last year, based on NVIDIA K80 GPUs, and specifically designed for compute acceleration with GPUs. Similarly, F1 offers the

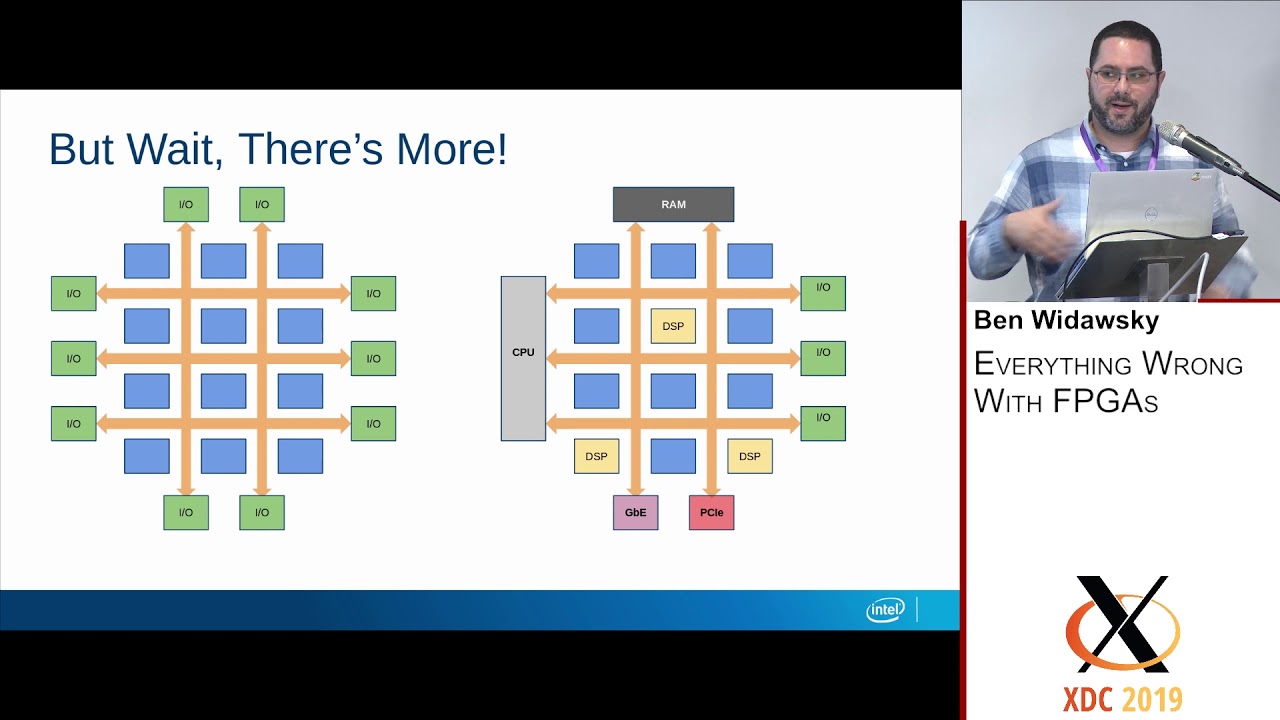

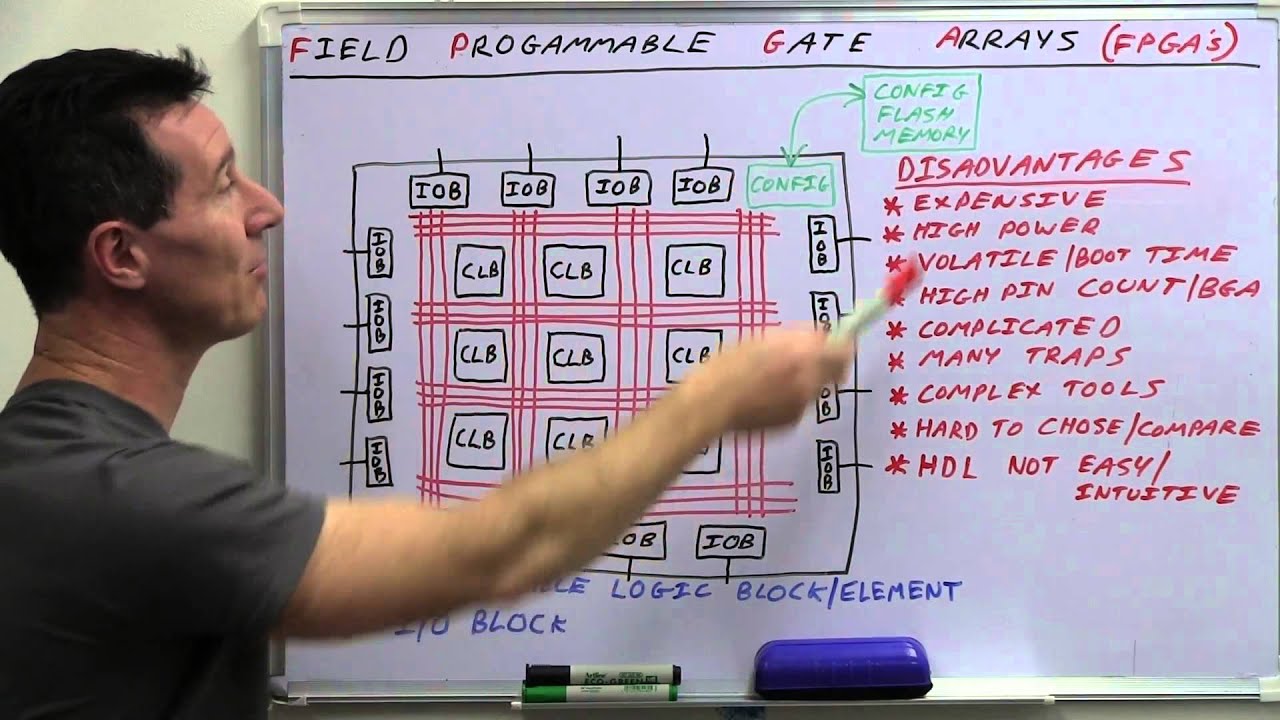

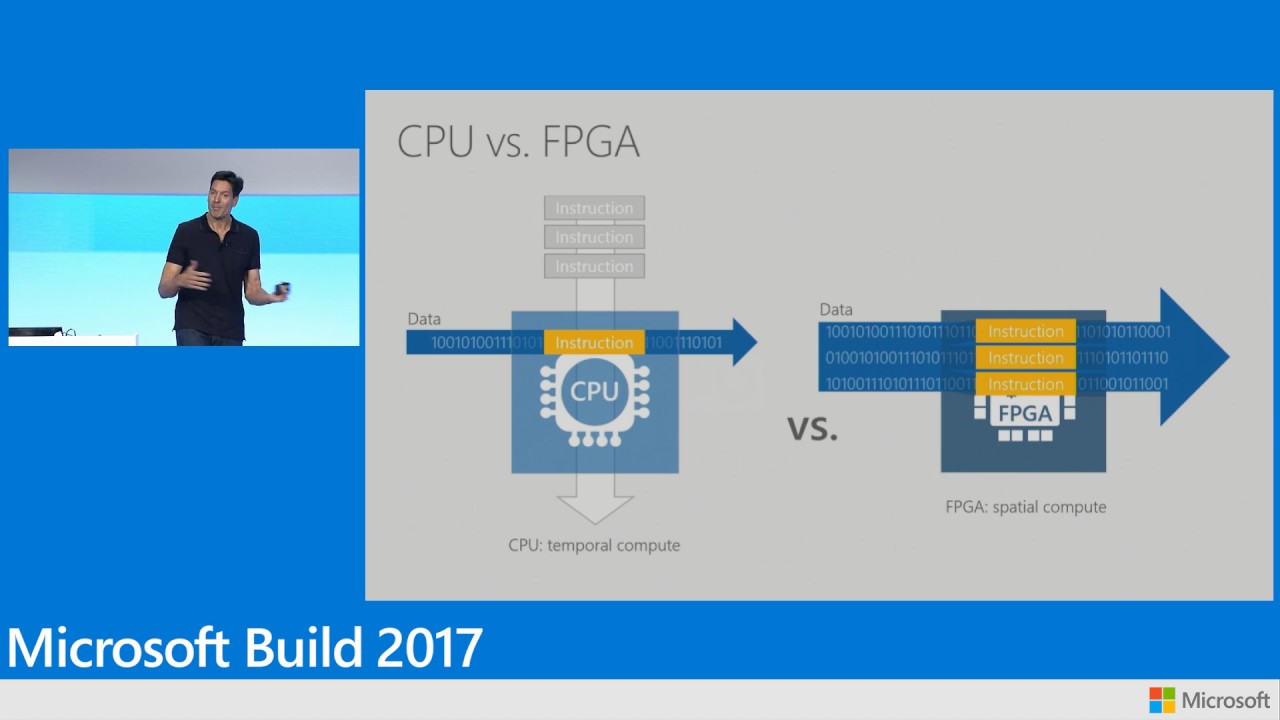

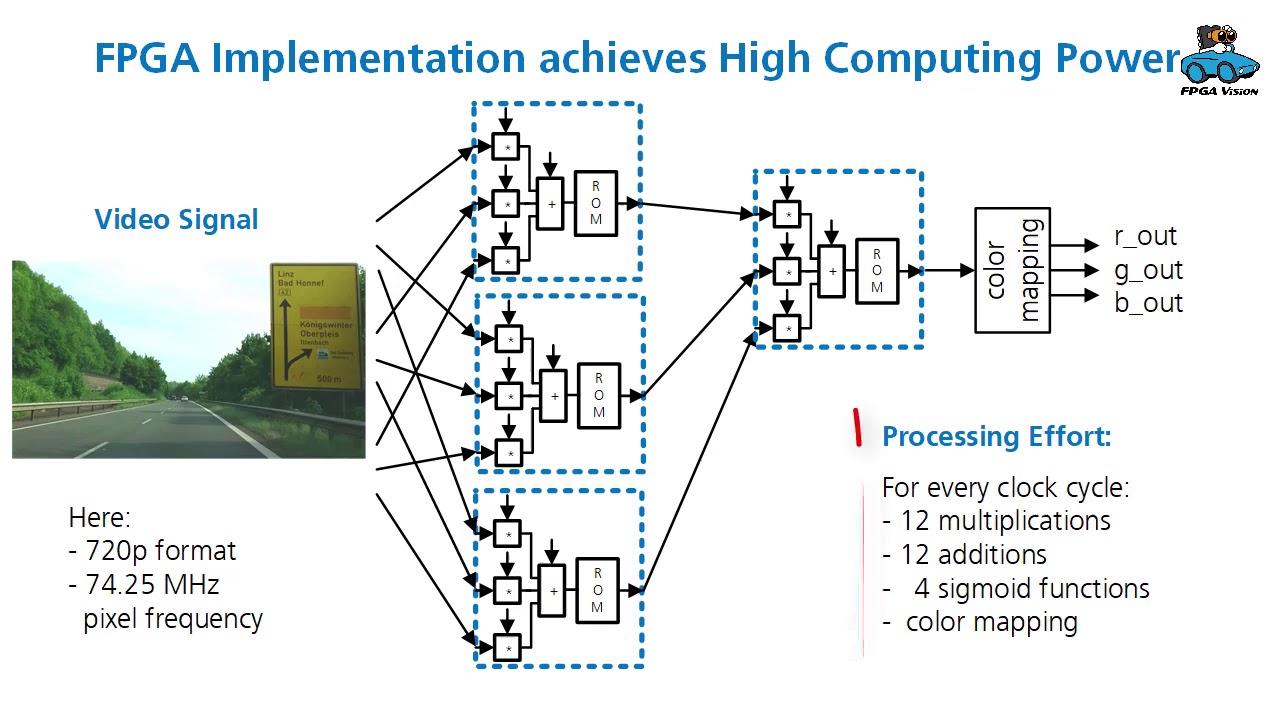

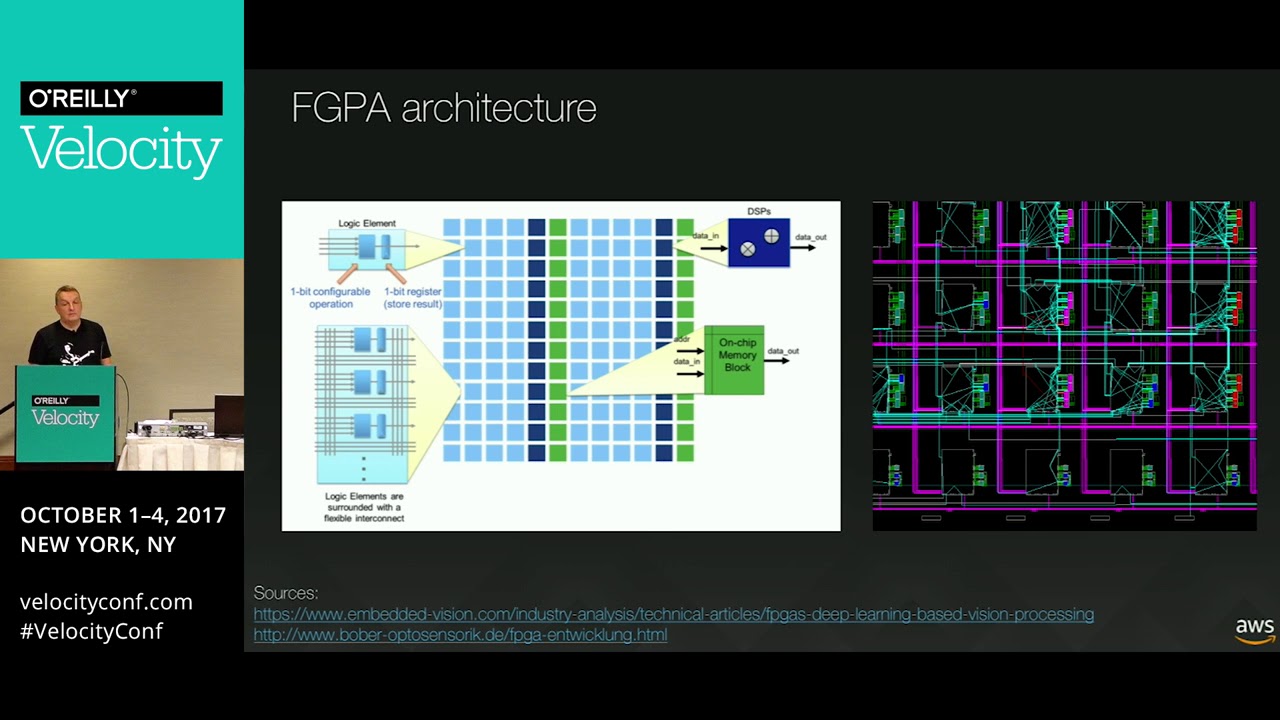

ability to do FPGA acceleration in a manner somewhat akin to what we do with the P2 GPU instances. So, to basically summarize what an FPGA offers, and this applies to some extent to GPUs as well: it allows you to find those application hotspots, those opportunities to do things more efficiently and faster, and push them out to custom hardware. So you want to find maybe the inner code loops, maybe that kernel of computation that needs to be run extremely parallel; unroll the loops, pipeline things, push them out to hardware. This works very, very

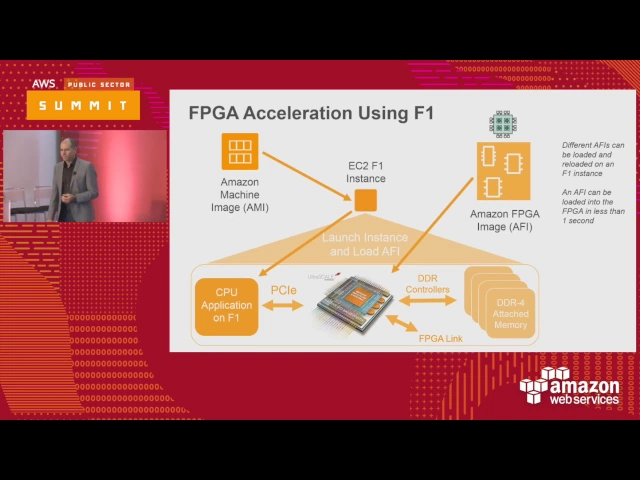

well if you can manage the data back and forth, if you're not bottlenecked, let's say, by tremendous amounts of I/O. Streaming, for example: video processing, genomics processing, streaming analytics. These are extremely good in FPGAs because they're heavily pipelined. So the F1 FPGA instance supports this by providing up to eight high-density Xilinx UltraScale+ FPGAs in a single instance. Each of these FPGAs is on a dedicated PCI Express card. You've got full access to them through the programming environments that I'll describe in a moment, and these are

attached to a highly capable instance type. It's got quite a lot of memory, as you can see, and a lot of vCPUs. It's based on Intel Broadwell processors. It's a very powerful instance type that's augmented by either one or eight FPGAs as PCI Express accelerators. Each of these FPGAs has access to local DDR4 memory, as you can see. So we've got the ability to move data down into the accelerator, put it in DDR4, access that memory, process it, stream the data perhaps from one FPGA to another if you have an 8

FPGA application, and then move that data back to the host. That's the basic model of computation for F1. And we do have quite a lot of use cases that have driven FPGA acceleration in the cloud. This is not speculative; these are actual applications that are known to be accelerated using programmable hardware. And there are a number of partners, listed here, with more of course coming, who are developing applications or who have deployed applications on F1 instances. I see Ryft in the room here today, doing accelerated data analytics; Edico Genome,

for example, doing accelerated genomics. Many of these partners have previous experience or products that are FPGA-based, as appliances for example, and providing a cloud alternative in addition to their on-premises applications, which are typically offered as appliances, can be a great benefit to customers. We'll talk a bit later about the specific applications in life sciences and in cancer research; Eric Stahlberg will help us explain some of the motivators there. But it's important to know that across industries, across use cases, there are many, many opportunities to accelerate applications using FPGAs. I'm going

to very quickly walk through the FPGA programming flow. There are two webinars available on the AWS YouTube channel where you can access more details on this, and, as I'll describe, there are also resources, for example on GitHub, where you can go deeper into this. So I just want to summarize the four basic steps. The first step is to develop the hardware for your FPGA, the accelerator itself, and for that you use FPGA programming tools that are supplied by the FPGA vendor, Xilinx, and that are provided on AWS really free,

in terms of software licensing on AWS. You run those on a standard EC2 instance, and you have many choices, M4, C4, or R4 for example, depending on the amount of memory and compute you need for your particular problem. You develop the application, you run synthesis and place and route, and you simulate it if you need to. Then, when you're ready and you have your custom logic developed with those tools, you deploy that onto an F1 instance using what's called an AFI, an Amazon FPGA Image, similar in concept to the AMI that's used, the Amazon Machine

Image, in an EC2 instance. And then, if you wish, you can take your AMI plus the AFIs that are associated with it and offer those on AWS Marketplace. Again, just to summarize: the AFI, the Amazon FPGA Image, similar to the AMI, is encrypted and uniquely identified. You can track it, you can secure it, offer it to customers, track its usage, and so forth via the marketplace. It's also important to realize that you can have multiple AFIs associated with one application, so very quickly, in less than a second, you can swap out one AFI and

swap in another one, to have a different hardware-accelerated function for your application running in the AMI. So for that first development step I described before, you use the FPGA Developer AMI that we provide. It's available on Marketplace, and we don't charge for it; you will pay for the underlying EC2 instance that you're running the AMI on. But you can choose from a variety of instances. For example, you might want to use a T2 instance, which is very low cost, to do the initial design and simulation, but then when it's time to

do FPGA place and route, which is more memory- and compute-intensive, maybe at that point start to use an M4 or R4 or some other larger instance. So you have choices. You use that developer AMI, whatever it takes to develop your application, using higher-level tools, perhaps OpenCL, which is coming soon, or using traditional VHDL and Verilog methods, to generate your application, your custom logic, to deploy in the AFI. The developer AMI, as I said, is available on Marketplace and we don't charge for it. It includes all of the FPGA tools

that you need, specifically Xilinx Vivado, on AWS with the necessary libraries. You combine that with a hardware development kit, an HDK, that's available on GitHub, to create your applications. That generates a file called a DCP, a design checkpoint, that you can then combine with other pieces that we provide to create your complete AFI. I apologize; I'll go through that in more detail in a moment, speaking slower. The AWS HDK that I just mentioned, and the SDK for the software side of the application, are available on GitHub, and the addresses are there.
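As a rough illustration of the last step mentioned here, going from a design checkpoint to an AFI, the sketch below only assembles the argument list for the AWS CLI `aws ec2 create-fpga-image` call and does not run it. It assumes the DCP has already been packaged and uploaded to S3 in the way the HDK documentation describes; the function name and the bucket, key, and image names are hypothetical placeholders, not values from the talk.

```python
def create_fpga_image_command(name, bucket, dcp_key, logs_key, description=""):
    """Build (but do not execute) an `aws ec2 create-fpga-image` argument list.

    All argument values are caller-supplied placeholders; nothing here
    contacts AWS or checks that the S3 objects exist.
    """
    cmd = [
        "aws", "ec2", "create-fpga-image",
        "--name", name,
        # S3 location of the uploaded design checkpoint archive.
        "--input-storage-location", f"Bucket={bucket},Key={dcp_key}",
        # S3 location where the AFI build logs should be written.
        "--logs-storage-location", f"Bucket={bucket},Key={logs_key}",
    ]
    if description:
        cmd += ["--description", description]
    return cmd


if __name__ == "__main__":
    # Hypothetical names, shown only to make the shape of the command visible.
    print(" ".join(create_fpga_image_command(
        "my-accelerator", "my-bucket", "dcp/my-design.tar", "logs/")))
```

Returning a list rather than a single string keeps the sketch side-effect free and makes it straightforward to hand to `subprocess.run` once credentials and the S3 objects are actually in place.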

So all of that code's available. The documentation continues to evolve and improve, but that makes it much easier to develop your application for F1. Now, you still need to have FPGA development experience, expertise, knowledge; these are somewhat specialized skills. But if you have experience with FPGAs on other platforms, you'll be able to start very quickly with F1. If you don't have significant experience doing FPGA design, you can absolutely learn it as a software developer. One of the things that makes that learning easier is that we take care of a lot of

the abstractions around hardware I/O, which traditionally has been one of the more difficult aspects of developing for FPGAs. It's one thing to take an algorithm, an application, and embody that as a pipelined and parallel hardware implementation using VHDL or Verilog. It's quite another thing to figure out how to talk to all of the I/O interfaces. The FPGA shell that we provide as part of the HDK, and the SDK on the software side to communicate with your application, make that much, much easier. And again, the HDK, the AXI interfaces, the interfaces to

memory, and so forth are all described in the documentation that's in that GitHub link for the HDK I mentioned a few moments ago. If you're familiar with Xilinx Vivado and related development tools, you'll use them in much the same way on AWS. You can use them in interactive mode, as we're showing here, for example to run your HDL, your hardware simulations, or in this case to run even JTAG-type debugging with ChipScope, if you're familiar with that. Those are interactive tools; you can certainly

run those in the cloud just as you do today; remote desktop is basically what you're doing there. It's also possible, if you're interested, to run tools like the Xilinx ChipScope tools locally on your own machine and tunnel up to the FPGA to do things like emulated JTAG debugging from your desktop up into the cloud. That's described as well in the documentation. So at the end of this process, running the Vivado tools, generating that design checkpoint, and submitting that design checkpoint into the tools that we provide for AFI management, you can now

generate that AFI using tools that we provide. That AFI is secured, encrypted, and given a unique ID; now it's ready to be deployed on the FPGA that's attached to your F1 instance. And that deployment... well, this is the build step, so this is actually creating that AFI from the DCP. Again, all of these methods are described in the documentation. You can either do it with the command line, as we see here, or through APIs. But at the end of that you've got the AFI: you've created it, it's encrypted, and now you can download that to the

FPGA. To start an application, it's kind of a two-step process. The first thing you want to do is get your application up and running on the F1 EC2 instance on the software side, and then, through either a command line or an API call, you can select an AFI and download it onto the FPGA. You can, as I said before, load different AFIs over time. So maybe your application, for image processing or video processing, needs to do some filtering, and then maybe you want to swap

that out and put in perhaps a machine learning inference application, or something else completely different, in that FPGA. And you can do that over time without rebooting the host EC2 instance. Now, what makes this possible from the software side is the SDK. The SDK includes APIs, command lines, and functions that allow you to do things like load and unload that AFI into the FPGA, or write data, to get data from your host application down into the FPGA, into the local memory, and actually interact with that

FPGA from an application perspective. So there are management capabilities, like managing the FPGA itself; there are runtimes that are used on the software side for that software communication with the FPGA; and then there's code that we provide that gets executed at the driver level to make it possible to communicate data to and from the FPGA. And again, this is all described in the documentation; I'm just summarizing it briefly here. When you're ready to download that AFI, here's an example of the command lines. There are

two or three of them shown here. The first of these, `fpga-describe-local-image`, is basically asking what's in that FPGA at the moment. It's returning back that there's nothing loaded currently, so under the FPGA image ID nothing is loaded; it's empty. The next command line, `fpga-load-local-image`, is going to take a specific AFI that has a unique identifier and load that down onto the FPGA, and that should take less than a second to do. Now if we do a describe image again, we will see that that image is

successfully loaded in the FPGA; it's ready to execute. Again, these are just some of the SDK functions that are available to you, either as a command line or an API for your application. Communication with other customers, other users of F1, is available via the forums. We've got quite an active developer community, really starting before the public launch, in the preview process, with quite a lot of customers and partners helping each other and communicating back to us. Our documentation, our APIs, our SDKs and HDKs have absolutely improved over time as

a response to customer feedback. It's a very active community; feel free to join in this discussion. I want to talk briefly now about the marketplace and how that fits in, and then really turn it over to Eric to talk, at kind of a higher level, about why we care about accelerated computing in large-scale applications, specifically in research. So the AWS Marketplace has existed for quite some time as a way to allow third-party software providers to offer their solutions to other customers on AWS, and

this includes literally thousands of applications, provided by organizations that range from small startups with very specific applications and specific business needs up to very large, well-known ISVs offering their solutions on the cloud, again to other customers. Those other customers might be research customers or government customers, or they might be large enterprises. But the ability to select applications from Marketplace and deploy those in your own virtual private cloud, your VPC, is a very, very effective way for end customers and third-party ISVs to assemble solutions for their own customers quite quickly. The AFI builds

upon this by allowing you to deliver the AMI, the Amazon Machine Image that encompasses your application, along with the AFI, or one or more AFIs, that actually provide that hardware acceleration for your application. This is very important for FPGA developers, who historically have had challenges in delivering hardware-accelerated solutions to end customers, because not only did the end customer previously have to evaluate the software and the application and its ability to deliver on its promise of acceleration, they also had to qualify and bring in house the hardware itself, which can be quite challenging

from a compliance perspective, or simply from an IT governance perspective. Bringing in custom hardware is not trivial for many organizations, and for the software provider it's an expensive proposition to send out this custom hardware speculatively in order to have it evaluated. Doing this via Marketplace on the cloud just makes it dramatically easier for developers of hardware-accelerated applications to take their solutions to other customers, take them to market, and actually monetize them by various methods, which could be pay-per-hour types of methods, or long-term

subscriptions, or other methods that are supported in Marketplace today. So if you're not familiar with AWS Marketplace and you're interested in developing FPGA-accelerated applications, please go have a look, and we can absolutely help you with that through the partner program. Just one example, and there's certainly more to come: the first application to appear on Marketplace was actually by Mipsology. Mipsology is providing a deep learning inference application, and this is really an example of what an organization can do in the FPGA: in this case, an

image classification algorithm implemented in the FPGA in F1, offered as a complete solution to other customers via Marketplace. And there'll be many more to come. So that was a really fast overview of the F1 instances: what the architectures are and what the development flow looks like. And again, there are absolutely many more details you can find in the GitHub repository I showed earlier, and also in the webinars that we published earlier this year, both an overview and a deeper dive some weeks ago.
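To recap the AFI load step from the overview in concrete form, here is a minimal sketch of the describe, load, describe sequence using the `fpga-describe-local-image` and `fpga-load-local-image` tools from the SDK. It only builds the command lines and does not execute them. The helper names are hypothetical, the `-S`, `-I`, and `-H` flags and the `agfi-` identifier prefix follow the SDK documentation as I understand it rather than anything shown in the talk, and the ID in the usage line is a made-up placeholder.

```python
def describe_command(slot=0):
    """Command line that reports which AFI (if any) is loaded in an FPGA slot."""
    return ["fpga-describe-local-image", "-S", str(slot), "-H"]


def load_command(agfi_id, slot=0):
    """Command line that loads the given AFI into an FPGA slot."""
    # Assumption: the load tool takes the global AFI ID, which starts with "agfi-".
    if not agfi_id.startswith("agfi-"):
        raise ValueError(f"expected a global AFI ID starting with 'agfi-', got {agfi_id!r}")
    return ["fpga-load-local-image", "-S", str(slot), "-I", agfi_id]


def afi_swap_sequence(agfi_id, slot=0):
    """Describe the slot, load the AFI, then describe again to confirm the load."""
    return [describe_command(slot), load_command(agfi_id, slot), describe_command(slot)]


if __name__ == "__main__":
    # Placeholder ID; on an F1 instance each list could be passed to subprocess.run.
    for cmd in afi_swap_sequence("agfi-0123456789abcdefg"):
        print(" ".join(cmd))
```

On a multi-FPGA instance the same sequence would be repeated per slot, which is why the slot number is a parameter rather than a constant.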

I'd like now to turn it over to Eric Stahlberg of Frederick National Laboratory to talk about the role of accelerated computing and why this is so important for computing today and in the future. Eric has a long history with FPGAs and knows them well, and accelerators of all sorts, but I won't steal his thunder by providing too much information about Eric and his role. So thank you, Eric, for being here. Thank you. Are we live? Thank you, David, for the opportunity to share with you several of the things that are underway at Frederick National

Lab to accelerate cancer research. A lot of this is becoming increasingly accessible to you all, as cancer research is taking full advantage of the opportunities that the cloud presents, both in sharing data and, increasingly, in taking advantage of high-performance computing. To give you a quick overview (you'll see these slides, these points, again) and a little bit of context: as we mentioned, we're working to accelerate cancer research. Cancer is a devastating disease. It affects us all in many different ways, and so we want to accelerate the research in

cancer in order to find solutions more quickly. We deal with rapidly growing volumes of data, which require advanced computing to address, incorporate, and take advantage of the information that's within that data. A few observations about how things are today. Increasingly, the environments are heterogeneous: FPGAs are a component, GPUs are a component, CPUs are a component, and certainly there are architectures to come that we haven't yet thought of that we'll have to incorporate if we really want to accelerate cancer research. We have issues with moving data around; presumably these are themes that you're all familiar with and may have

experienced yourselves. Keeping the data stable, and increasingly, as we start building a knowledge network for cancer, how do we get stability of the knowledge that's maintained within that data? And then, as a summary point, the technologies continue to move rapidly. We need standards and representations that allow us to share our information and insight. And this is where you all come in: innovations are key. So one of the things that has been part of my job for the past couple of years is to recognize that we can accelerate cancer

research with the increasing levels of high-performance computing. We have a data glut and we have a personnel shortage, so all of you have an opportunity, potentially, to take your insights and your expertise and apply them in a cancer research context. All right, this is how you get connected. One of the priorities of the Precision Medicine Initiative, which is what PMI stands for, is open science: supporting open access and getting open data and even open source. So this theme of openness is what's making cancer research

accessible to you. Standardization of various pieces, getting interoperability, getting common vocabularies, ways that we can describe the data consistently, but then also services and infrastructure that make it possible. These, again, are themes that resonate with what we can do in the cloud. And what we can do in the cloud is take advantage of it to do these types of things: come up with new treatments and ways to discover new drugs, take advantage of the data, and validate and find effective preclinical models that are increasingly personal, taking advantage of your own DNA, your own

genetic context, to understand how best to treat you. That applies not only in cancer but in precision health. And in doing that, we understand biology even more completely, and through that we can actually identify new drugs, with new data coming through new techniques, and ultimately transform cancer care by taking advantage of advanced computation, affecting the population and the load that cancer puts on all of us. So in the context of the Precision Medicine Initiative, working with the National Cancer Institute, they have four priority areas. In the upper right-hand side, you'll see

one of the big issues is overcoming drug resistance. Cancer can be initially treated, but then overcoming resistance over time and keeping that cancer in check becomes a challenge, because cancer evolves. In the lower left, you'll see a reference to models: new ways to screen new drugs to affect the cancer, both initially and as it evolves, are priorities. And in the lower right, you'll see something that we're all familiar with: building a network of information. So part of what the NCI is doing in the context of precision oncology is building that network of information all the

way from the molecular level to the patient level. Motivating all of this, what you see in the upper left-hand corner, is developing new treatments that would be effective for the individuals who might be unfortunate enough to be affected by cancer. This all leads to generating lots of information. So if we look at the genomic information, you can see it's basically on a hockey-stick curve. The computers are becoming more effective, and we're using more automation in the generation of the information, essentially accelerating the flow of information. These are all good insights into the complexity of biology, which

is extremely complex. It is one of the most difficult software problems you could ever imagine: the software is continuing to change, your DNA is continuing to change how it operates, and trying to understand that is going to take an awful lot of information. And so you see this rapid rise in information that will be available to you to take advantage of and understand that complexity. But today we see that traditionally HPC has been distinct from big data. Bringing them together has been a priority of the National Strategic Computing Initiative, and one of the observations there is

that the data and the HPC have to come together. The applications that we saw David refer to earlier are all applicable when we start looking at cancer: there are engineering challenges, big data challenges, curation challenges; all of those things apply. We have to deal with limits on how we can transmit and store data, and we have to develop complicated analyses and share that insight, which ultimately gets us into collaborations, which require standards and other ways to work together. So, getting back to the observations as we start looking at exascale: very large systems and big data are key,

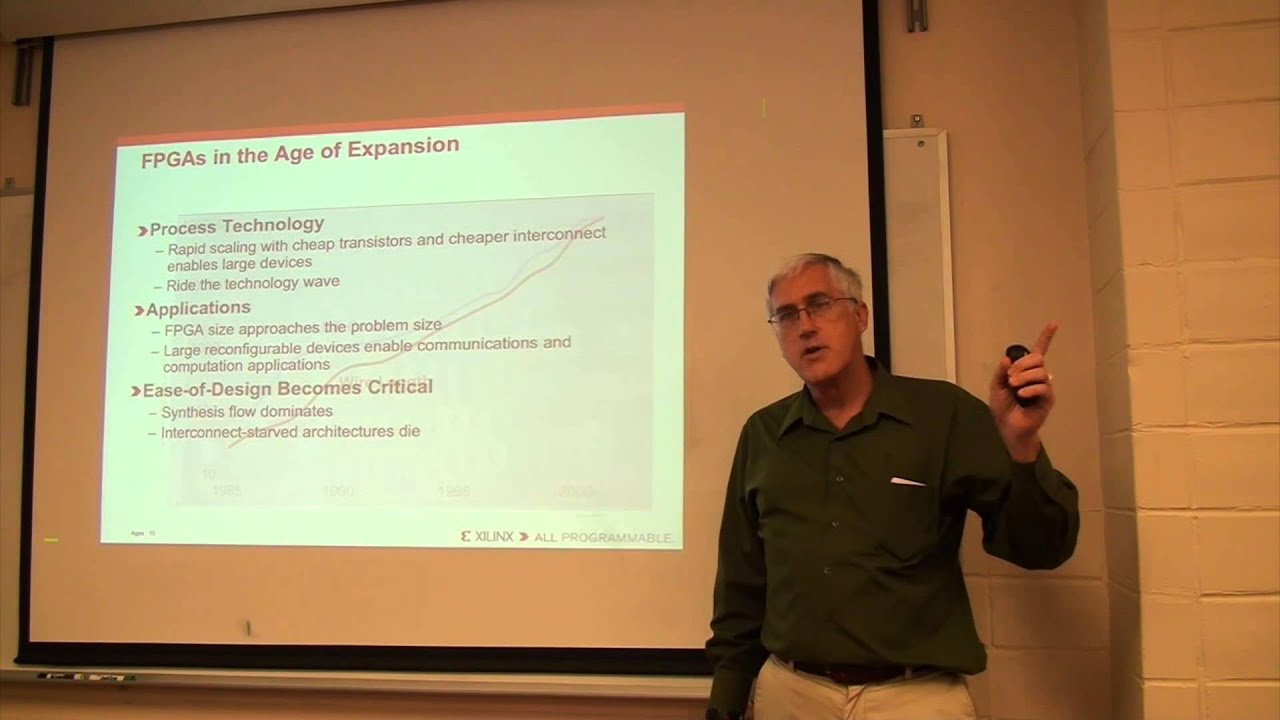

large problems, large analyses, complex, running things in ensembles and doing multiple trials to have confidence in the solution, in what's predicted. Research and development are also very important. This is where new algorithms and software come into play, and where the FPGA is having a resurgence. It's a technology that's been around for years, which we'll see in just a moment, but we're using it in new ways to accelerate the research, recognizing that we also have mobile health options and low-power mobile technologies. Clouds and analytics come into play, ultimately taking advantage of the marketplace and so

forth, of the entire scientific community, to come up with new ways to solve these particular problems. So we start looking at FPGAs and recognize that it's not a small market: it's 7.3, excuse me, 7.2 billion by 2022, which means it's been established for a while. FPGAs are around; you just don't see them. What's happening now is they're becoming much more accessible, and actually becoming much more needed, as we try to achieve that high-performance computing edge while maintaining efficiency. You see them in computer, storage, and medical applications, which is particularly applicable to

cancer, but then again, all of these things begin to apply when we look at cancer. So on that road to efficiency and heterogeneity, we recognize that all the pieces have to work together. The FPGA has a role, the GPU has a role, the CPU has a role, and we all have a role. One of the things that's happening right now is there's been a resurgence in artificial intelligence. AI has been around for a while, but we have the new context of deep learning, using graphics processors to train large-scale neural networks that will

allow us to get value and information out of the data. That provides opportunities where we can actually become more efficient, both in our storage and in our computations, by using different accelerators and even different representations of the data. And so there are three key takeaway points. Heterogeneity is with us, and it's going to continue to be with us as we press forward. Selective precision is important, because if we always use the same level of precision, we're not necessarily being as efficient or as effective as we can be. And adaptability is going to be key, supporting

those innovations that we have yet to see. So there are several challenges, and you can look up the source here; it's Communications of the ACM: data management issues, programming models, ensuring correctness of the result, which is important for scientific repeatability and reproducibility, optimizing, and even software engineering challenges. So these are all opportunities as well. One of the things that's clear is that every challenge is actually an opportunity waiting to be found and framed, because if we didn't see it as a challenge, we wouldn't know that there was value in overcoming it. So those

are application-focused, and you can see several of those that are hardware-focused: energy efficiency, high-performance interconnect. These are all possibilities that apply with an FPGA. So we see the opportunities for acceleration here. We have to recognize that we have large volumes of data: how do we work with that, how do we store it? Compression becomes one of those application spaces that an FPGA is particularly suited for. And again, I'm not biased towards FPGAs at all, because all the technologies that we have can accelerate it; it's just using them in the right blend or

in the right combination, and that's kind of the context for innovation. Data security is important: preserving the privacy of the information, but also the integrity of the information, is key to making the progress that we want to see in cancer research. Increasingly, there's data reduction at the edge. You saw the spike in the amount of data that's generated; we're not necessarily going to be able to store everything, so at some point we have to reduce down to the essential information that we would want to retain out of those instruments. That becomes increasingly important as

we start looking at mobile health: the ability to monitor your biological response to different types of conditions and capture that information. CERN, the collider over in Europe, has been using FPGAs for a long time to deal with the data reduction problem, so it's a proven use case; it's just applied now in a medical and even a health context. And ultimately, data processing and transformation is taking these large data sets and getting the value out of them, changing their representation, changing their forms. Image processing is a key one in particular. You'll notice in the

middle that FPGAs are being used in artificial neural networks, in the inference engines. We've already seen an example of that in David's presentation. So FPGAs are very valuable in that context, and GPUs are effective in training, but we have to put all this together and get the data together, ultimately getting back down to what you see in the lower part, which is reproducibility and repeatability. Because nothing accelerates faster than learning from others, and not recreating the mistakes, not recreating and redoing the work. So ultimately, in the summary, it's just pulling together how

do we accelerate cancer research? It's building partnerships that are interdisciplinary, working together, looking at new technologies, and getting new insights from scalable frameworks, cutting-edge predictive models, and even advances in machine learning. So there are efforts underway to take advantage of this. Revisiting some of these key points: accelerating cancer research, the data volumes continue to grow, and we have advanced computing technologies that we need to take advantage of to deal with that growing data, which we're using to make progress on a very complex challenge. The observations: heterogeneous

computing, moving the computing to the data, data stability, and knowledge are all key. When we start looking at an FPGA, what's very nice is that once you create that image, that image is stable. It's not necessarily going to be affected by libraries that would be loaded in, and so it can improve the repeatability and the reproducibility in that particular context. And we recognize that technologies are continuing to advance, and we can't have chaos with everything advancing at the same time, so we need some elements of stability to manage and keep things going forward, not having

to redo and standards and representations and common sharing are important so Innovations are key and that's where you all come back in to actually take advantage of the opportunities that are presented as the data becomes much more open and available to take the insights that you may have and if there's ways that you can actually make contributions to what we're doing to actually accelerate the research on cancer so thank you David thank you Eric again we're uh quite ahead of schedule um we blew through the early part of this quite quickly because we

really do want to focus on the use cases for those that are not experienced fpga developers I wanted to reemphasize uh something that we covered earlier and that Eric covered as well and that's the idea that heterogeneous Computing is here it's been here for quite some time it's here to stay uh it's relatively new uh to the cloud but fpgas have been around a long time the use cases are well known those application developers who can get access to fpgas learn to use fpgas deploy fpga based solutions they have a tremendous advantage in creating

cost effective high performance solutions right and one of the reasons to think about this in the cloud context as Eric said is that we need to bring the data together with the compute and in areas like life sciences genomics research uh personalized medicine and other areas as well uh it's very very important deep learning training is a great example of this it's very important that you have access to vast amounts of data and so when deploying fpga based Solutions on AWS the fact that you have access to things like public data sets right ranging from satellite imagery

to genomics data sets is very very powerful the fact that you have access to other types of compute and higher level services to use alongside your fpga application is very very powerful so rather than the previous approach for fpga computing where it really was appliance-based purpose-built Hardware that had to be inserted into a Data Center and used in the context of other applications that may not play well with that Appliance um it's going to be much much easier to deploy these types of Solutions in a cloud context so I want to point you to

a few uh resources online if you're interested in more details around the F1 instances uh the F1 landing page uh on the AWS website has a fair amount of information about the specifics of the instance type I covered those briefly the ec2 instance type the Broadwell based uh CPUs the RAM and so forth we didn't cover in detail the interconnect between the fpgas which is provided in a couple of different forms a PCI Express Fabric and a streaming interface uh those are covered in some detail on that landing page if you're not

familiar with the marketplace that I described before uh dig into that a bit deeper because that is the place where you will go to get for example your AMI uh the developer AMI to develop the fpga applications that's also where you as a developer can offer your solutions to other customers either free uh right or as uh something that you're monetizing you're creating a business around and the AMI and the AFI together Encompass your complete application and the marketplace is a great way to deliver that to end customers track

it monetize it and so forth if you're in the research Community whether you're in the educational sector or research we have a couple of programs of interest uh that have been covered certainly in other sessions as well AWS Educate uh which has uh programs uh credits for example for individual students for instructors and for institutions different types of programs uh to make it easier for you to develop courseware around fpga programming around F1 instances in AWS in general and then AWS research which is uh more of a grants type of a program right

so if you have an interesting project uh you want to request some funding for that uh put in a grant proposal there's a process for that on the AWS research page and we're very very interested in hearing from the fpga community around applications that you'd like to uh to try out to deploy on the cloud as I mentioned before the public data sets are very important so if you're doing uh maybe high resolution image processing applications uh you might be interested in the satellite uh imagery data sets that are out there if you're

doing uh genomics workloads we have 1000 Genomes and other um genomics data sets available on AWS uh today you can access those and there's many other data sets as well Common Crawl for example to do analytics types of workloads and a link here to Eric's team and what they're doing at Frederick National Labs all kinds of interesting research and research like that is highly compelling right from a research perspective from a uh you know from an application perspective and just to see what Hardware acceleration can do for us um in a

very very important sector right of research as I mentioned before uh Eric has a long background on fpgas uh he's also been involved in the past in uh reconfigurable Computing organizations OpenFPGA and whatnot uh so we really appreciate him coming today and offering some perspective uh on why fpgas are so important uh in Computing today and in the future heterogeneous Computing so thank you again Eric yeah please so the question is does it play nice with Hadoop type of networking which is more like a grid uh opportunity so one of the benefits

of running in the cloud of course for any instance type including F1 is that you can scale out and deploy many many uh F1 instances now within the largest uh F1 instance f1.16xlarge I believe it's called you have eight fpgas those are interconnected directly with PCI Express Fabric and with streaming interface to go from one F1 instance to another to create a cluster a grid of them that's going to go across the same high performance networking that we have for other ec2 instances for example for the P2 instances so those that are doing

things like deep learning training on P2 GPU instances at scale it's similar concept to what you might do with the fpgas yeah yes consider support so that's a great question so will we be supporting partial reconfiguration of the fpgas in the flow in the future uh well actually uh today with the fpga shell and the custom logic that you insert into that shell that is actually taking advantage of PR partial reconfiguration today in the Xilinx flow um you know whether you can do that uh at a more granular level with the tools

uh we're interested in your feedback on the applications how we might support that better but partial reconfiguration is supported in the flow today and we take advantage of that to combine the shell and the custom logic in the middle hope that answers your question we can yeah thank you yes please oh opencl support So opencl support uh is coming uh We've announced that already and that is part of the Xilinx tool set through SDAccel that will be supported in F1 in the future time frame for uh I don't have a time frame

to share today but if you come and talk to one of the product managers perhaps we can shed a little more insight into that thank you other questions about uh fpgas in general specifically around F1 yes please uh we're quite transparent about exactly what that chip is that is a Xilinx UltraScale+ VU9P uh you can certainly uh acquire development boards and systems from Xilinx and other partners that use that device um so yes the short answer is yes you can use that same device yourself now the development tools that

we offer the fpga shell and so forth those are all designed and optimized for use with the F1 instance in the cloud so um you know there will be obviously some differences in in using a physical fpga board versus what you're doing in the cloud but you can get access to that same device it's commodity uh I don't yeah I don't know this so actually programming from the F1 instance creating an application and running it yeah that's uh not something we really have as a use case but if you're interested in that let us know

um what that use case might look like and we're happy to to listen and see if there's something we can do to support that um as Eric mentioned earlier compute at the edge with fpga based devices in combination with compute in the cloud with fpga enabled applications is a very interesting use case we're interested in hearing more about what you might want to do in that area yes compare gpus yeah comparing and contrasting gpus and fpgas I'll take a shot at that and then Eric maybe you can supply some experience as well but you really

have to think of gpus as being um built to take the same kernel of computation and parallelize it massively for the original use case of course shaders that are used in graphics right so it is a SIMD machine as they call it single instruction multiple data means you're just replicating that same thing now over time gpus have become more complex more control logic and so forth uh but fundamentally that's what they are a GPU has a fixed instruction set it has a fixed data width it's convenient to program the instruction set means you can

compile to it and so forth if you look at an fpga an fpga has no instruction set it's flexible in terms of you can create any arbitrary pipeline of operations in it and it doesn't have a fixed size of data and Eric mentioned this as well it doesn't have a fixed numeric Precision so if your application is optimized around 32 or 64 bits as is traditional in the CPU world that may actually not be the most optimal data width for the problem you're trying to solve certainly genomics right you know where you're doing

uh basically four types you could get away with far less bit Precision in doing comparison of two genomic sequences right for example uh or if you're doing image processing deep learning inference you may only need very reduced precision and so the ability to custom craft the instruction set and the Precision you need and to create massively pipelined operations is really how fpgas are different fundamentally different from gpus but at the end of the day they're both accelerating an application that otherwise would have been run in a more sequential manner Eric any other no I

would I would say the same thing in that particular context you know the gpus obviously have their Niche and the things that they can do well and they continue to innovate and do more things well but the fpga itself as David mentioned is essentially a blank slate for Innovation creative new ways to come up with composites of what you might want to do combining encryption decryption compression and doing those things efficiently where it's not strictly a floating Point operation and having that essentially variable Precision that allows you to take away and utilize

not necessarily double but potentially even quadruple if you needed to go to one extreme or even down to bitwise and still being able to do various types of analytics in that particular context there's algorithms out there that will take advantage of essentially single bit representations for graphs you're just looking at edges and doing analysis in that way and so if you were to actually be using a GPU um all the extra Precision would essentially be unnecessary right so there's opportunities to find that balance about what is going to be the most optimal solution

for your particular problem where the GPU provides essentially acceleration in a very stable configuration in a particular programming model and the fpga can be very complementary to that to give you even further acceleration that you might not otherwise be able to achieve that's right at the end of the day you know CPUs gpus fpgas and whatever are to come in the future are very different they serve different needs um there is some overlap of course but the types of applications that are best suited to a GPU could be quite different from the applications

best suited for an fpga they're really complementary parts of that ecosystem there's various ways that you start looking at different programming models and how the fpgas can be deployed as we mentioned it's not an immature industry it's been around for years in telecommunications and so forth and as the data and the big data problems become increasingly Central to what we're trying to do that transmission that curation that validation of the data the movement of the data which fpgas have been doing for a long time becomes increasingly Central to the problem and so we

have yet to actually really achieve that real fusion that we bring the floating Point capabilities of the GPU together with that data uh manipulation aspect of the fpga but that's where a lot of that Innovation comes into play uh and asking essentially all of you to go look see what you can come up with find those use cases that are important and compelling and see what you can do right thank you all so much it's been an enjoyable session appreciate the great questions
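
[Editor's note: the point made in the Q&A about genomics needing far fewer bits than a 32 or 64 bit word can be illustrated with a small software sketch. This is not code from the talk or from any AWS or Xilinx tool. The 2-bit mapping for the four bases and the function names `pack` and `mismatches` are assumptions chosen for illustration. It only shows the data-width idea in Python; an fpga design would express the same comparison as custom-width logic rather than integer arithmetic.]

```python
# Illustrative assumption: each of the four bases gets a 2-bit code.
BASE_CODES = {"A": 0b00, "C": 0b01, "G": 0b10, "T": 0b11}


def pack(seq: str) -> int:
    """Pack a DNA string into one integer, 2 bits per base (first base in the high bits)."""
    value = 0
    for base in seq:
        value = (value << 2) | BASE_CODES[base]
    return value


def mismatches(a: str, b: str) -> int:
    """Count the positions where two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    if not a:
        return 0
    # XOR leaves a non-zero 2-bit field wherever the bases differ.
    diff = pack(a) ^ pack(b)
    # Fold each field's high bit onto its low bit, then keep only the low bits.
    low_bits = int("01" * len(a), 2)
    differing = (diff | (diff >> 1)) & low_bits
    return bin(differing).count("1")


if __name__ == "__main__":
    print(mismatches("GATTACA", "GACTATA"))
```

[With this packing a 7-base sequence occupies 14 bits, against 56 bits as 8-bit characters, and the whole comparison reduces to an XOR, a shift, a mask and a bit count.]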
