Well, welcome everyone. I'm glad you all made it to our talk on accelerating time to value with the Azure OpenAI Service. I'm Chris Hoder, and we're going to have a great 45 minutes talking about how to use the Azure OpenAI Service and manage it at scale. We also have some great announcements coming; we didn't use them all up yesterday, so we have a few more today. Just to hop into it: more than 60,000 companies are using Azure AI and Azure OpenAI today for their mission-critical workloads. They're depending on

these services and the generative capabilities to drive tremendous growth and business value across horizontals, verticals, and industries. It's things like Ontada, which we're going to talk about, being able to analyze previously unanalyzed data and get value from data that was just sitting around; BMW, helping cars drive more efficiently; and Thomson Reuters, which we're also going to see, being able to launch new capabilities and drive efficiencies in their business. And Microsoft itself is powered by Azure OpenAI. You can see where we sit in kind

of the layer cake of offerings: all of the announcements you saw on things like Copilot and Copilot Studio are powered by these underlying services. So we're learning how to run those services at scale, learning what's important for both performance and quality, and then bringing it to you all within the Azure OpenAI Service. For those that are new to the space, the Azure OpenAI Service aims to provide OpenAI's cutting-edge AI models on Microsoft Azure as a first-party service. What that means is you get all of Azure's

standards and the typical services that you get within an Azure environment: you get managed identity, virtual networks, privacy, data security, and regional controls. All of that comes out of the box, fully integrated within the Azure OpenAI Service. So you get OpenAI's latest and most powerful models, whether they be the o1 series or the GPT-4o series, plus fine-tuning, image generation, and Whisper for transcription and translation, on the service as a first-party offering. And just to kick it off with some great announcements, you guys here will be the first ones

to know that we are announcing today a new version of GPT-4o, the 2024-11-20 version, that includes great improvements in quality around instruction following, creative writing, and deeper insights from uploaded files. This was just announced this morning, and we'll be rolling it out to the service in the coming weeks. So it's super exciting, and we can't wait to see what everyone's going to start building. Other announcements that we're making: we've brought the batch service, which is the ability to process bulk amounts

of data, to GA earlier this year. This provides a way to process hundreds of thousands to billions of tokens in a cost-efficient way, at 50% off what you pay in the standard environment. We've also announced and shipped Azure data zones. This is a really exciting new way to help alleviate a couple of constraints that we see from customers around getting the model access and throughput they need for their applications while also maintaining the right data boundaries for their compliance and regulations. Data zones sits in the middle between

a global offering and a regional offering, where you can be assured that your data is going to remain within the defined zone. Today we have two: the US and the European Union. So you can be assured you'll get the model access and the throughput to grow and build your applications without having to bounce around different regions, which was a typical pain point we heard from customers in the past. So if we take a step back and now look at what the service offerings in Azure

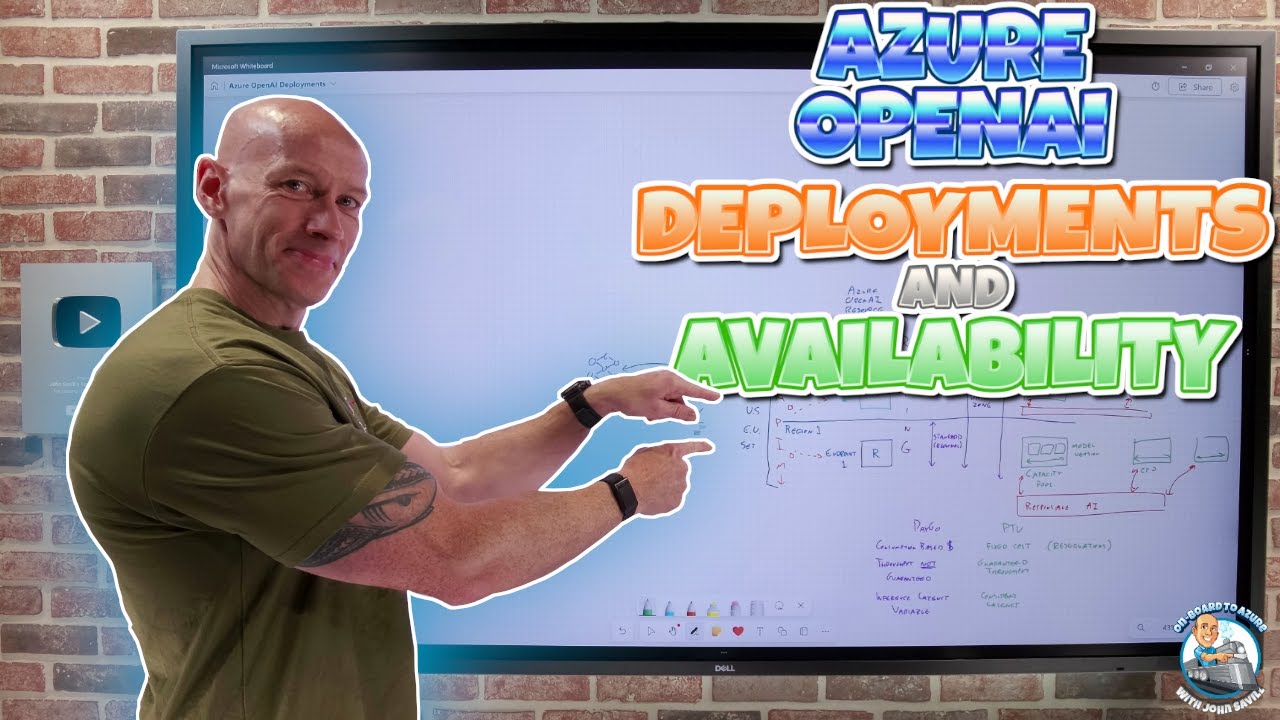

OpenAI look like, we've actually expanded this a lot. Over the last year we've really been working to provide the right knobs and functionality to allow customers to optimize their solution to their specific needs. That works out to really two variables: the offer and the deployment type. I think about the offer as how you want to optimize the performance of the model; you're going to trade off things like latency and throughput and have a different way to use the service. So you

have standard, which is your pay-per-call offering and your easiest environment to get started; batch, which is asynchronous with very large throughput but can take, say, 24 hours to complete; and provisioned, which provides your high-scale, high-volume workload capability. Once you choose the performance and approach that you want, you then choose what type of data structuring and controls you want in place. So we have global, data zones, and regional. Global gives the highest throughput and best access to models, but we're going to process that anywhere in the world, leveraging Microsoft's backbone to really provide you the

best access and the highest throughput, but your data is going to be routed around the world to allow for that inference. Data zones will keep it within a data zone, so you'll still get more throughput and more model access than regional, but maybe not as high throughput as global. And then regional is going to be the most constrained; that's your classic Azure data promise. So just to hop into these offers in a little bit more detail and talk through them: the standard offering, this is your go-to offering

if you don't know what you want. You want to start with standard. It's pay-per-use and free to set up, so you can start your development activities and your experimentation without incurring a ton of cost, especially when you're not really making high volumes of calls. But at the same time it can scale to tremendous volumes: we have some very, very large customers running entirely on standard who are perfectly happy and able to drive all the value that they want to their customers. So it does have that high performance. It's also ease

of management, in the sense that you're only paying for what you use. And if you do have regional requirements, this will be a little bit more regionally limited than the provisioned service, which we'll talk about in a second. So the use cases for standard: this is really the ideal for most use cases. This is where we expect all customers to start building and growing, and then once they optimize, they might choose to move to the other deployment types. It's going to be really cost-effective, especially if you're at

low to medium, even large but not extra-large scale, or you have a very intermittent and spiky workload. So it's good for getting started, but it's also very good for production. Now, provisioned provides some additional guarantees. It's a per-hour billing model: when you go in and deploy it, we provision the amount of capacity for the throughput that you need, and we ensure that it's there when you need it, whether you're using it or not. So it provides that reserved model processing capacity, and

that becomes really critical if you have a super mission-critical application where you have to ensure that your throughput is there when you need it. With standard there is no performance guarantee, so if there's a very busy or constrained time on the system (you could imagine Black Friday, with a lot of shoppers coming to the systems and a lot of systems trying to offer value to customers), there might be a little bit of interaction there and your performance may vary a little bit more, whereas provisioned comes with our latency SLA, so

you're going to be guaranteed a certain performance response rate compared to standard. And if you're able to use this at a consistent high volume, you can start to look at cost savings through reservations. As I mentioned, we announced earlier this month that we added the latency SLA to provisioned, which means you are getting that guarantee on predictable generation speed from the service, so you'll get that low latency along with the throughput. We also announced a couple of changes to lower the entry point to provisioned. In the

past we heard a lot of "hey, I want these guarantees, but it's just too expensive; I'm not going to utilize it enough." So we announced at the beginning of November that we were dropping the entry point for global (for GPT-4o, for example) from 50 to 15 PTUs and the increments from 50 to 5. That's a 10x drop in the increment size for scaling up your solution, so you can better align it to your actual needs. With data zones we're going to mirror that as well. And so with these

wider offerings, you're seeing that when you go to global or to data zones, you get more flexibility and more tooling to optimize your solution, and you trade that off against the data processing guarantees. We also announced a bunch of cost savings with Azure reservations. We dropped the hourly price on global and introduced data zones with a similarly low price. So the hourly price was cut in half, and the monthly reservation price remains the same at $260 per PTU. A couple of things to note: the

hourly price is not where we recommend customers land. It's ideal for some very specific use cases: if you want to test the service, see how it scales, see how it responds, or maybe you have an intermittent spike and need the guarantees. But if you are going to be using provisioned on a consistent basis, the cost value comes from monthly and yearly reservations. $260 a month works out to about 35 or 36 cents an hour, so it's about 3x cheaper than the $1 per hour rate and 5x cheaper than the $2 per hour rate. What do you want

to use provisioned for? This is really about production workloads. It's about getting access to really high throughput that is also predictable, with consistent latency on the generation speeds. It really becomes cost-effective and management-effective when you are running very large workloads in production. So we like to think about starting in standard, learning how to develop and learning what you're building, then scaling up to provisioned as you get to very high workloads. Now I want to jump into a demo of this and show you guys a little bit

more detail on how provisioned runs. So what I have... actually, let me jump back to main; I jumped to the intro on the slide. All right, the scenario and setup here. We're not going to dive into the actual data, but you could imagine a scenario where you want to ask questions about documentation. This is a pretty common one; lots of customers have it. You might have a bunch of contracts you want to load into the system and summarize or ask questions about, or you might have support cases where you want to understand the

past trend with a customer so you can respond better in the current case. The result is you typically have very large prompts and very small generations. In this case we're going to send about 15,000 tokens on each call and generate about 50 to 250. And because you're asking questions about documents, we expect some amount of repetitiveness, so we're going to show how getting to a prompt cache match rate of 50% affects your scaling and your cost optimization. Prompt cache match rate, for

those that aren't familiar, is basically a measure of repetitiveness in your prompt. If your prompt looks the same on each call, we hold the computations for it temporarily for your service, so we don't have to reload that into the model each time. You get a faster response time, and we also offer discounts: it's 50% off in the standard environment and actually up to 100% off in provisioned. So when you combine provisioned deployments with prompt caching, you can get tremendous value, and that's what I want to show

you guys today. So what I have here is my Azure environment with a couple of resources set up. I'm simulating the production environment using Azure Load Testing, which is just running a bunch of calls into the service, and I'm streaming data about what's happening from Azure Monitor into an Azure Managed Grafana dashboard. You can see here this GPT-4o mini deployment is currently doing about 220 requests per minute, and we're driving about 3.3 million tokens per minute through our service for this production scenario. And

you can also scroll down here and see that our prompt tokens are roughly what I said, about 15,000 to 15,400 tokens, and our generation window is actually pretty narrow at about 100. So this is a great candidate for provisioned: I have a consistent workload of about 200 calls per minute, and in our case we're going to run it 24/7. So let's show how I could move this to provisioned-managed and get some additional value. What you would do is go into your studio, so I'm in the Azure

AI Foundry, focused on Azure OpenAI. In my deployments pane I can go create a new deployment, go to GPT-4o mini, and choose my deployment option, and you're going to see those nine options that we talked about earlier in the list. I'm going to do global provisioned-managed. Here you don't just set your TPM like you do with a standard deployment. What we have is this concept of a provisioned throughput unit, or PTU, and we add a little bit of complexity to give you a lot

of flexibility. We have one billing meter for PTUs (that $2 an hour or $260 per month), and that's across all the models. So once you have figured that out, you can switch your models without changing your billing mechanism, and you can separate your development activities from managing your costs once you're set up. But you do need to figure out how many TPM you get per PTU, which varies by model. To do that we have some handy calculators, where I just need to know

how many TPM I'm running in my system today. So if we go back to our dashboard, I'm doing that 3.3 million prompt tokens and 21,600 generated tokens per minute; let me just fill that in. All right, you can see I need about 95 PTUs to deploy. I'm not going to deploy that here because I actually have one running, and you can see I have a provisioned-managed deployment (sorry, wrong line) running with 95 PTUs. Using the same Azure load test but running against this one, we can hop back to our managed Grafana dashboard

and take a look at it. Let me jump back an hour here, because we're going to show a couple of optimizations. You can see that at the beginning I have about the same 200; I'm actually running a little bit higher here, 254 calls per minute at 3.88 million tokens per minute. So I'm able to satisfy that throughput with my 95 PTUs, as the calculator said, and we're using the same workload definition. We don't have the cache match rate yet. We're also working on improving

observability so you can further optimize. You can see here I'm running this at 112% utilization, so I'm fully maxing out this PTU instance and getting all the value that I can. My calls are taking about 1,300 milliseconds to complete (it's not streaming, and it's a fairly large window), and you can see I'm generating about 200 tokens per second, so it's really fast generation speed. If you're familiar with GPT-4o mini, the SLA for GPT-4o mini is 33 tokens per second. Now, we talked about that repetitive prompt, so let's imagine

we're able to optimize that and get our cache match rate up to 50%. Let's see what that does to the service. If I zoom back out (I've got to go a little further), that's exactly what we did right around here: you can see we scaled up to about a 50% cache match rate. And if I zoom in on this time window, we can see that we're now doing 437 requests per minute on the same deployment, with the same number of PTUs, on the same workload. So I was able to nearly double my throughput by

ensuring that 50% cache match rate was occurring. I'm now getting 6.68 million tokens per minute, and you can see we have the same token distribution and calls. We're also still running at 100%. You can see my time to last byte has dropped from about 1,400 to 1,100 milliseconds, so you get about a 30% benefit to your speed as well. So with prompt cache matching you get better value and better speed. And finally, just to conclude, the other value you'll get: you can see I'm basically calculating off of these values how much

this would cost me per minute based on standard list price; it's about $1.01. With that I will hop back to the slides to do some summaries. So at that $1.01 cost (I forgot to update this slide), if you calculate for a 50% cache match rate, half of your prompt tokens are billed at 50% off, so you pay 75% of that, which works out to about 77 cents. The generation cost was three cents per minute. Multiply that out to a monthly cost and it's about $34,000 a month, versus for those same 95 PTUs

we could pay $260 per PTU per month with reservations, for $24,700. So the combination of running these at consistent throughput with a high cache match rate is just going to continue to increase the value you get from the service, and you actually start to save a tremendous amount of money over the standard price. Just to recap the demo, because I know I went very quickly through a lot of items: we ran a production workload on global standard using Azure Load Testing. We then used the Azure Monitor metrics that are provided out of the

box and streamed them into a managed Grafana dashboard, which allowed us to easily size the PTUs and deploy our global provisioned-managed deployment. We migrated the traffic in Azure Load Testing and then optimized to a 50% cache match rate to show how you can get that value benefit. This also included a bunch of incremental changes that maybe are not newsworthy enough for the announcement slides but highlight our focus on continuing to make this easier and better for customers over the coming months. So we've done things like increased the throughput per PTU for GPT-4o mini earlier in the summer, and we added the latency SLA.

We've also added a lot of observability metrics, with a lot more coming over the following months: we added time to last byte, generation speed, and the cache match rate. These are all new things that we're adding into the service to really help you scale and optimize your services once you get to production. So that was a really quick example of how you can use provisioned-managed to optimize your service and scale it. I

want to now invite vene radua up to the stage to talk about how he's getting value from these deployments. Hey, welcome. So tell me about your use case and scenario.

Sure. Good afternoon, everyone. This is vopa. I lead the platform engineering team at Thomson Reuters. For folks who don't know Thomson Reuters, we are a technology company focused on the legal, tax, and accounting space. I'm sure each of you has heard of Copilot; that's a big word out here

at Microsoft. But I wanted to leave you with another term: CoCounsel. That's something we've been working on for the last year or so. It's the GenAI capability for all of our legal, tax, and accounting space, and it's something we're proud of.

So tell me a little bit about how you're using Azure OpenAI.

Sure. You talked about PTUs. Being an early adopter, we did have to go through a little bit of pain on

the cost aspect, the adoption aspect, and everything else. That's where this journey began of how we optimize our LLM usage, with what we call the TR LLM smart orchestrator. I've just listed out the what, the why, and the how. It's a centralized API system that allowed our business units to adopt LLM services in a more mature, more optimized way. It was built to accelerate adoption, and not only adoption, but keeping cost in mind. That's where our initial journey kind

of started, but we expanded that into other capabilities as well. And I'm happy to say that, on the how part, this was all built on the Azure platform in partnership with Microsoft, so we certainly appreciate that.

Cool. And I think what's great about this is you were able to use these services and then figure out how to build on them, getting around some of those challenges around managing them and the high entry costs, to build this orchestration engine that allowed the company as a whole to take advantage of

it when maybe individual applications were not large enough.

It did.

And the other cool thing is, once you had this up, I think it expanded into a lot more services to create more of a holistic solution, so I wonder if you could talk more about that.

Yeah. I'm sure you folks have heard about Azure AI Foundry, which is being announced as well and which addresses a lot of things we were going to start working on. The extension of it

is, I've just listed out some of the LLM smart orchestrator capabilities. As I said, it started off as an optimized way of consuming our PTUs, but it soon expanded into capacity management: when we procure PTUs, making sure of efficient usage across different LLMs and routing the traffic to different regions. That's where capacity management comes in. Along with this, there's the standards and security aspect; automated engineering for easy adoption; and a single API entry point for all of our business units, so that consumption becomes a

lot easier and adoption becomes a lot easier. A big part is cost and usage reports. I did see that Foundry gives a view on your cost and usage as well, and that's something we built out internally too. There are centralized contracts to make sure that folks use our PTUs in a more efficient way. Also, TR is working towards our own SLM, a custom model, infusing different data sets into our own custom models, and I think that

became an avenue as well. Smart routing I talked about: routing the traffic across different models, our custom models, and different regions. Life cycle management of the models, which is probably a big thing for most organizations, with around 1,600 models in the portfolio out there. And also cost optimization. So I think the TR LLM orchestrator has gotten us all the way here, but we do look forward to continuing the partnership with Microsoft. I've been a part of the

CAB as well, trying to give some input on a few other things that AI Foundry can build towards and offer as capabilities.

Yeah, I love it, because I think we've been learning a lot about where the pains are so that we can bring that into the solutions and help make it simpler for everyone. And I love this use case as well, because we see this a lot with our customers: a solution in the generative AI space is not just

Azure OpenAI. It's all of these things: life cycle management, cost optimization, usage tracking, security and standards. That's going to require a full solution, which you can get with Azure. I did want to go a little bit deeper and learn more about the orchestration layer and how you put that together in Azure.

Sure. Proud to say that the solution was built out initially using Azure, and these are some of the Azure components we used in order to stand up

this TR LLM orchestrator. Azure API Management, which is the APIM layer, is what we used. Authentication is again Azure, with Entra ID. Azure OpenAI Service for sure, and then Azure Container Apps, Azure Functions, and Key Vault. These were some of the components we used to bring the TR LLM orchestrator together. From a reporting standpoint, we use Power BI extensively as well to build out this entire system. There's a high-level chart out there to give a

view of how this was all built out, but I'm happy to talk more.

Awesome. It shows you the complexity of really scaling and managing a full solution. And finally, we talked a lot about the what and the how; tell me a little bit about the impact it had on your business.

Yeah, so the benefits of the smart orchestrator. I think it's evident; we talked about the cost optimization. But improved performance, making sure that we get that performance through PTUs, is a big thing: making sure

that when we put our CoCounsel product out in the market, it gave the right performance. So that's the improved request performance. Better distribution among different workloads: as I said, we have a legal entity, and we have tax and accounting entities, and each of these product teams is using the AI services, so it's about making sure they get the right performance during their peak hours or business times. Then enhanced utilization and optimization, security across all of

our workloads, and also easy adoption, making sure that it's up and running quickly. Those became the main benefits. It's been well received across the organization, and we've seen an accelerated path of our AI services growing at TR. I look forward to building more.

Cool, awesome. Well, thank you for joining us. Appreciate it.

Thank you. [Applause]

Cool. I would next like to invite up Steve Sweetman to talk a little bit more about the batch service.

Fantastic. Yeah, welcome. Thank you

very much, Chris, and let's give Chris a round of applause too. That was awesome to see. Chris and V did a great job of talking about the benefits you can get from standard to get started, and then how provisioned can be a great way to scale your applications and, when used to the maximum, can actually save you real dollars as well. And I love Thomson Reuters' example of drilling in and showing us how you then scale that to the enterprise: not just one application, but every single application across your

enterprise, by building that front-end service on top of APIM that aggregated all the applications and all the capabilities you need behind a single endpoint, delivering that end-to-end solution and service. So that was fantastic. Now I'm going to go into a little bit more detail on Azure OpenAI batch. As Chris mentioned at the beginning, our fleet operates not only services for you as customers but all of our internal Copilot applications as well. Why that's important is that during off-peak times, like in the evenings or on the weekends, we have a

lot of fallow capacity, capacity that is functionally underutilized, and we offer that capacity back to you through this batch service. The way the batch service works is that it allows you to submit a job, which is queued to run when there's excess capacity available in the system. We offer a 24-hour guarantee on when that job will be processed, and we handle all the processing for you. So that job gets submitted, queued, and returned, often well within the 24-hour period. And the benefit to you is that

it's 50% off. So take the lowest rates that you already see on our standard rate chart and halve them, and that's what you get with batch processing. It provides truly low cost for processing a large amount of data. It really lets you scale to billions of tokens at a time that you can submit into your queue, and it becomes really cost-effective for large-scale processing. But rather than just me telling you about it, I'd love to invite another customer on stage who's going to share how they used it to drive

innovations within their business. So Wanmei, why don't you come and join me on stage here. Fantastic. So Wanmei, why don't you start by telling me a little bit about the amazing work that Ontada is doing and how you're revolutionizing the healthcare space.

Sounds great. Thank you, Steve, and thank you to the audience for giving me an opportunity to share our work. Before that, I would like to give some background about who Ontada is. You may have heard of a healthcare conglomerate called McKesson Corporation. Ontada is one of the

business units within the McKesson conglomerate. We have a number of sister organizations united together to fight against cancer, including The US Oncology Network, helping practices reduce administrative burdens, and Sarah Cannon Research Institute, which helps to effectively put patients into cutting-edge clinical trials. And we, Ontada and Genospace, focus on the space in between: the technology, and turning the data into insight.

What an important and powerful mission. I'm sure we all know someone, I certainly do in my

life, who's been impacted by cancer, and it's amazing the work you're doing. So tell me, how are you leveraging AI as you're doing that research?

Absolutely. We have a number of AI use cases, since we sit in this unique position between healthcare providers, patients, and life science organizations when it comes to data insight. One set of use cases is about digging into the previously unanalyzable, unstructured data in the longitudinal patient record that we do not quite use today, but they

have accumulated historically, sitting there for a long time. That is what we call AI for real-world evidence. The second bundle is very similar to the first, but for online use; if I put it in terms of the offerings, it's more on the provisioned (PTU) side and the pay-as-you-go standard offering. It is still about understanding those unstructured documents, but in real time, to help our providers reduce the documentation burden. And finally, as we continue the journey of AI, and as

AI continues to mature in its reasoning capability, it will lead to using AI for clinical decision support. Think about it: there is so much longitudinal information about the patient. Some is structured, some is unstructured, some is imaging, so it's multimodal. It's very hard for a physician seeing 20 to 30 patients per day to remember every single thing and work out the best next treatment given the history of what this patient has been through. So we see the opportunity that, going forward, AI is also going to help us reduce this

cognitive burden and provide the best treatment alternatives.

What an amazing vision. So just to reflect that back, what I'm hearing you say is that you look forward to a future where AI can even help doctors in their decision-making by using the patterns that come from data. And even today you're using real-time information from patient histories to help bring together all of the data that a physician needs to make a decision at that time.

Yes.

And going back to the beginning, there's all of this history from years of patient studies and records

that you're processing through this batch service. So tell me, how much data are we talking about?

Do you want to make a guess?

Okay, go for it.

You want to make a guess how much data we have?

I have no idea. How much data are you processing?

Okay, we have 150 million documents.

Wow.

And that is only a look back of 10 years; it would be more if we looked back over a longer history. We have about two million patients coming to see our practices every year, and we have

looking back longitudinally, different types of documents. Some of them are there because our providers are making notes to themselves, and second, because during the patient journey the patient actually goes to see many different services, a lot of them outside of our providers' four walls. As a result of that, you can imagine a lot of reports, like surgical reports, like genomic reports, come in as PDFs. So we have 90 million PDFs and 60 million progress notes, so together 150 million, and that is where we really use the batch

API service. That's amazing. What an amazing use case, and I couldn't think of anything more impactful that our service is able to be part of. So thank you for bringing us on that journey with you. That's awesome. Thank you very much. [Applause]

So this is just one example of the amazing services and value that we're seeing customers get on batch. In fact, batch is now our fastest-growing offering that we have in OpenAI. It accounts for over 10% of the total tokens being used, and it was only launched back in August, just a couple of months

ago, and so it's growing incredibly, incredibly quickly. So you've heard about our portfolio, and Chris really summarized it well at the beginning: our goal is to ensure that you can create value no matter what stage of the application development life cycle you're at. And we recognize that within your institution and organization you may have multiple projects at different stages of the life cycle, and so we want to bring all of these capabilities together for you: from standard, the place to get started, the place to begin your development and even start to find product

market fit, and a place to run your applications, particularly if you have peaky workloads that only have usage at one point in time during the day. And then you've got provisioned, which, as Chris demonstrated, gives you that consistent, sustained high throughput with performance and latency SLA guarantees. That's something that you can bank on across your organization. And we've got batch, which offers you tremendous value when time is less important but you do need to process a large number of documents: you want to do historical research on cancer and you want to

actually analyze all of those files. And across all those offerings we then give you the choice around data residency and control, starting with global, which again gives you the highest throughput. With global, the data is processed anywhere in the world, but it still remains resident in the location of your Azure resource when it's stored. So any data, for example, that you're uploading to a batch service would be stored in the region of your choosing, but just be processed around the world. Then we have the data zones. These are brand new, still coming for provisioned

and coming for batch in December, but these allow you to keep not only the data at rest with residency, but also the processing with residency around a region. And the two geographic regions we have today are across the US and around European Union member states. So if you create a data zone when you're in one of the European Union regions, you will automatically get the European Union data zone, and if you create a data zone when you're in one of the US regions, you'll get the US data zone. And then we do have 28 regions around the

world, more regions than any other generative AI provider today, where you can actually deploy and maintain your data in that specific region, like East, or East 2, or West 3, or Japan, or Korea, or the US Government federal space, where we've just gone GA with our regions as well. And so you can keep your data isolated in that specific region. Now, there will be more constraints there: you won't get access to as many models or as much throughput. But if that specific geographic, regional residency is something you need, we have that for you too. And

so as you're thinking about these, don't think about any of them as a one-size-fits-all, but really think about them as a portfolio, where our goal is to help you achieve the most value you can. We want to ensure that you can go to production in the way that suits your business, in the way that suits your workload, and every one of those, we know, is different and tailored to you. And that's our goal with our offering: ensuring that you have the maximum flexibility to go into production whenever you want to. And overall,

we think about the deployment options as starting with global. So I talked about starting with standard, and I also recommend, when you can, starting with global. Global will give you the greatest flexibility. It'll give you access to the newest models, since we always launch our models on global first, and it'll give you access to the most capacity and the lowest cost for getting started. But we recognize that some of you have constraints from regulators, with specific scenarios where you need to have those controls around data residency, and that's where you can start to then focus

on, okay, where do I need a specific data zone, and where do I need a specific region? And again, I doubt that, across your portfolio, any one of these by itself will be the solution. It's really the mix and match for your specific applications and what you need, tailored to your offerings. And so with that, I'm really pleased to say that the Azure OpenAI Service is aiming to deliver these capabilities to you, from the state-of-the-art models that we're shipping on the same day as OpenAI. As we said, OpenAI announced today

the latest update to GPT-4o, and actually it'll be available on our service in a couple of weeks. We're in a no-deployment freeze right now because of Black Friday, but as soon as that's through, we're going to make that available on our platform too. Our goal is to be the best-performing platform on the market, and we know that performance is so important to you because it impacts your application performance as well. And so we're constantly benchmarking ourselves against our competitors in the market and aiming to be the best: to

offer the best latency, to offer the best throughput, and the best value with that. And that really comes to cost efficiency too. Our goal is to be on par with the leaders in the market, offering the best models at the lowest cost. We're constantly looking for that trade-off between cost and value and ensuring that we can deliver that through offerings like batch, and we're constantly exploring new business models too, where we can continue to offer you greater and greater value. But we recognize that that's not enough. We also need to ensure that your data is

secure and your application is secure, and that's where building on Azure makes sense. We offer things like Microsoft Entra ID and managed identity integrated within the stack. We just announced the network security perimeter offering, which is going to be coming to Azure OpenAI soon, and which actually lets you create data boundaries and data exfiltration protections to ensure that your data is never exfiltrated from your environment. We offer private networking for the Azure OpenAI Service, so you can securely connect over your private network to the endpoint of your choosing, so the data never leaves your network

in a virtual sense. And we're here to offer as much capacity as you need. We want to ensure that you have access to the highest capacity that's available and the highest throughput limits, so that you can scale to whatever size of application you need. We have some massive-scale customers on our platform. You just saw how Ontada was shipping over 150 million PDF files, processing through our service at tremendous scale, and we make that scale available to you all, with maximum flexibility as well. And these are some of the promises that we have for you

in Azure OpenAI. And so the actions that I want you to take away are these. First of all, go to the AI Foundry portal and get started. Start to play with the service if you haven't already. Start with standard, start with global, and explore the latest models that are available there in the catalog. Those include the Azure OpenAI models and models from over 1,800 other providers as well, through our agreements with Hugging Face, with Meta, with Mistral, with Cohere, and others as well. And then start to scale in production: choose the deployment

that fits your needs and begin to scale there. We look forward to going on that scaling journey with you. And so with that, I will open up the floor. Thank you for the round of applause there. I will open up the floor for questions. There are some follow-up sessions too, so if you do leave, I'll encourage you to go to some of these other sessions, where you can learn more about other innovations that our customers are having. The McKesson team, Ontada, are going to do a deep dive into how their solution was

built and what they're doing to forward cancer research and innovation within their industry. We're seeing some amazing solutions in the assistant space, and you can hear from DocuSign about how they're creating intelligent agreements with Azure as well. So thank you very much, and thanks for coming to our session today. Thank you, everyone.