a fascinating new model just dropped and apparently it is beating every other model out there and it uses a completely new technique to enable the model to be able to self-correct hallucinations it's kind of insane let's check it out so here's the post by Matt Schumer Matt Schumer is a great follow on Twitter if you're not already following him he puts out open- Source projects all the time and now we have a brand new open source open weights model reflection 70b this model is a fine-tune of the llama 3. 1 model and it is a 70 billion parameter model and currently it is the world's top open- Source model easily competing with all of the frontier models and easily competing with all of the top Frontier closed Source models so Matt Schumer's new model reflection 70b is trained using reflection tuning a technique developed to enable llms to fix their own mistakes now he also mentions a 405b model coming next week and we expect it to be the best model in the world so no big deal all right so here are the benchmarks GP QA mlu human eval math GSM 8K and I eval as we can see the MML U it reached nearly 90% with zero shot reflection now that is compared to Cloud 3. 5 Sonet at 88.

7 Cloud 3 Opus 86. 8 GPT 40 88. 7 so yeah it beats every other model including the base model llama 3.

14 5B gp2a and human eval are the only ones in which it barely lost against clae 3. 5 Sonet but it is a much smaller model plus its open source plus its open weights and you can download it already right now from hugging face now I plan on doing a full llm test of it but the website's down so this is the website we're currently experiencing high traffic and are temporarily down please try again soon Matt Schumer said that this website is just getting absolutely hammered and so yeah you're not going to be able to use it anytime soon they're adding more gpus but as soon as he does that he said it's being saturated and look at GSM 8K which is basically solved it's at 100% which is just absolutely nuts so how was he able to achieve this incredible performance let's look into that reflection 70b holds its own against even the top closed Source models Claude 3. 5 Sonet and gbt 40 it's the top llm in at least mlu math if eval and GSM AK it beats GPT 40 on every Benchmark tested it clobbers llama 3.

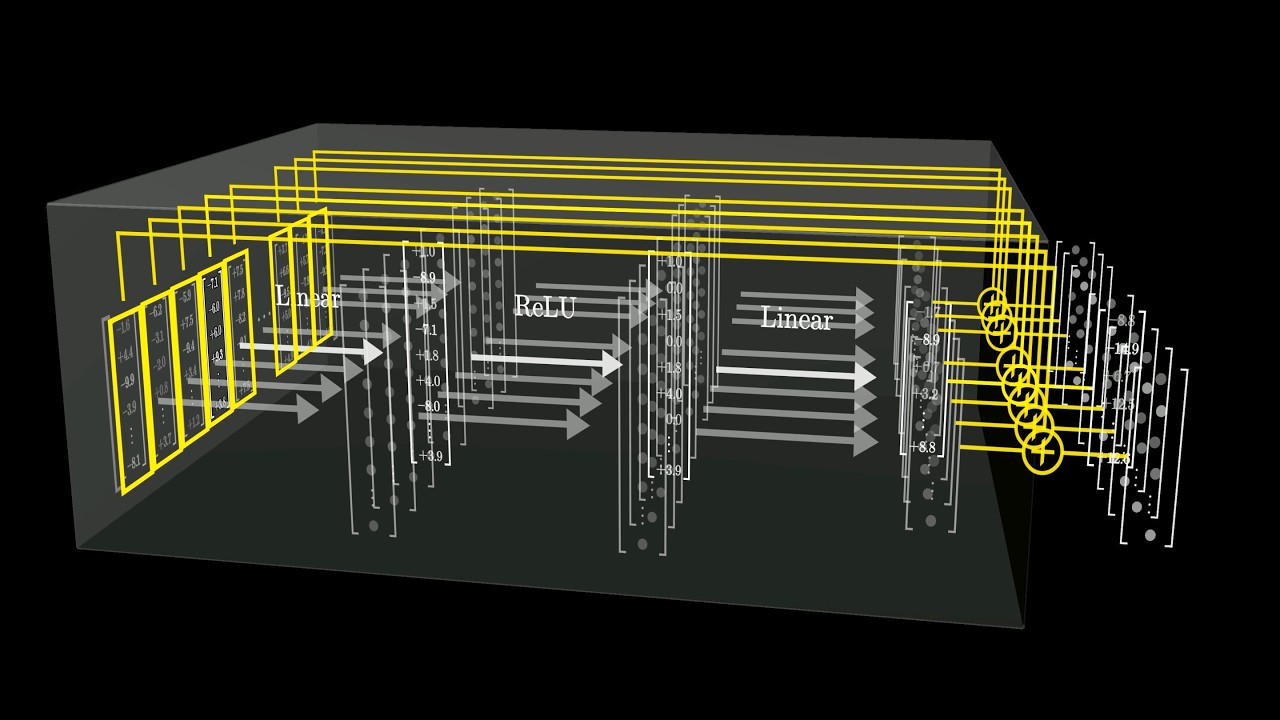

1 405b it's not even close all right let's look at an example of how it works and then I'll explain what's going on so the way that this model was created is using self-reflection in the data set meaning it actually showed hallucinations and self-correction in the actual data set so it's able to recreate that as you're running inference with it so in this example it says write the first sentence of the preamble to the Declaration of Independence but do it in mirrored writing and that is kind of a new prompt that everybody's using to try to test large language models so initially we have this thinking tag here so this is an interesting and moderately challenging task let's break it down into steps so it's basically thinking out loud it's not actually thinking because it's still just predicting the next token but it is kind of thinking pseudo thinking so recall the preamble to the Declaration of Independence write out the first sentence convert each character to its mirrored version and present the final mirrored text let's go through this plan so the preamble to the Declaration of Independence is well known and we hold these truths to be self-evident here's the first sentence of the Preamble now we need to convert this to mirrored writing in mirrored writing each letter is flipped horizontally as if seen in a mirror that means we need to reverse the order of the letters and flip each individual character now here's the special part reflection here's the reflection tag before proceeding I should clarify that there are two ways to interpret mirrored writing a simply reversing the order of the letters right to left or B reversing the order and flipping each character for this task I'll use method b as it's more challenging and true to the concept of mirrored writing so reflection over that's the end tag step four here's the mirrored version and there it is it is output in mirrored writing now reflected again here's another reflection I've double checked the mirror text 
and it appears to be correct. Each character has been flipped, and the order has been reversed. The punctuation marks remain the same, as they would in a true mirror reflection.

Now here's the thing: this is a really cool technique, and it seems to work very, very well, but it's not actually doing step-by-step thinking. This is all just one output, zero-shot. So again, it's kind of pseudo-thinking, or semi chain of thought. It's hard to explain, but it's certainly not iterating on the prompt and response, and it's definitely not using multiple agents or multiple LLMs to coordinate and collaborate on the response. But it still does it incredibly well.

Thanks to the sponsor of this video, Vultr. Reap the benefits of the world's largest independent cloud provider when you bring your GPU workloads over to Vultr. They have the latest NVIDIA GPUs, spanning 32 locations across six continents. Vultr delivers industry-leading price-to-performance and serious accessibility and reliability. Vultr's global, fully composable cloud infrastructure moves your applications closer to your users and frees you from vendor lock-in, allowing you to bring your own networking and database solutions. And if you need to scale beyond just a single cluster, Vultr's Kubernetes Engine allows you to take full control over your deployment, offering a 100% free control plane. So if you're tired of waiting for GPUs from other providers, make sure to use Vultr. You can deploy at any scale immediately, and they have H100s, L4s, and more available right now. You can get a fraction of a card or fully dedicated bare-metal systems, which give you full control over your hardware and your throughput. They also have one-click installation of all the applications you might need for advanced machine learning workloads, allowing you to get up and running in minutes, not hours. So experience the Vultr difference. Don't get bogged down by severe wait times or limited locations. Try it free today with a $300 credit for your first 30
days when you visit getvultr.com/berman, and make sure to use code BERMAN300 at checkout to get that credit. Thanks again to Vultr for sponsoring this video.

"The technique that drives Reflection 70B is simple but very powerful. Current LLMs have a tendency to hallucinate and can't recognize when they do so." And this is very, very true. "Reflection-Tuning enables LLMs to recognize their mistakes and then correct them before committing to an answer." Now, I don't actually know if that's 100% accurate, because ultimately, unless the large language model has a completely new architecture or is able to read its output and then iterate on that output, it's not really able to self-correct. But it does seem to work, so maybe I'm wrong.

Let's look at this example: counting the R's in "strawberry." So it does that, and then it says, "There are two R's in the word strawberry." And then it reflects on it: "I made a mistake. I can clearly see now that there are actually three R's in the word strawberry." But here's the thing I'm confused about: it's not actually reflecting on what has already been output. That's not really how it works. It is just predicting the next token, just like any other model would. But again, for some reason, this seems to work really well. A better output, though, would be to just get it right the first time around, because now you have to get it wrong and then correct it, and you're using all those extra tokens. Probably not a big deal, but still, the optimal output is to get it right the first time.

"Additionally, we separate planning into a separate step, improving chain-of-thought potency and keeping the outputs simple and concise for end users." Let's look at an example, another popular test, which I've incorporated into my LLM rubric: which number is larger, 9.11 or 9.9?

Now look at all this text. The optimal result would have been to simply say "9.9 is larger." But now let's look at what it went through to get to that answer. "This is a relatively simple problem that involves comparing two decimal numbers. I can solve this using a straightforward approach with chain-of-thought reasoning." The plan: identify the numbers to compare; compare the whole-number parts; if the whole-number parts are equal, compare the decimal parts; determine which number is larger based on the comparison. Then the chain of thought. Okay, so it continues, and then it reflects: "The approach is correct. The comparison of whole number and decimal parts is accurate. The conclusion is logical based on the comparison." So, output: "9.9 is larger than 9.11."

That's great, but look how many tokens it took to get there. And here's the other thing: this model was fine-tuned to go through all of these steps to reach the right answer and potentially, quote-unquote, identify its mistakes. But you could also just do that with prompt engineering. You can simply say "think through this step by step," or "first I want you to give me an answer, then I want you to reflect on that answer." So it's essentially that kind of prompt engineering just built into the model itself, which is great, but I really don't think this is some leap in the core intelligence of the model. It's just a better way of including that kind of prompt technique in the model itself rather than putting it in the actual prompt.

And if we look at Hacker News right now, they actually posted about it, and user rwl4 says you can somewhat recreate this just by using a system prompt that induces the model to do the same thing. And here it is. So here is essentially the same thing that Matt Shumer has been able to train into his model: begin with the thinking section; inside the thinking section, do the chain of thought; include a reflection section; check your reasoning; be sure to close all reflection sections; et cetera, et cetera; and then output. And the user juxtaposition says, "Under the hood, Reflection 70B seems to be a Llama 3.1 fine-tune that encourages the model to add think, reflection, and output tokens and corresponding phases. This is an evolution of chain of thought's 'think step by step.'" Yep. So it works. That's great. I'm glad to see it. It's beating most other models right now, so you know what? Good on him. Good job, Matt Shumer.

He goes on to say it's important to note: we have checked for decontamination against all benchmarks mentioned using LMSYS.