In this comprehensive generative AI course, you'll dive deep into the world of generative AI, exploring key concepts such as large language models, data preprocessing, and advanced techniques like fine-tuning and RAG. Through hands-on projects with tools like Hugging Face, OpenAI, and LangChain, you'll build real-world applications, from text summarization to custom chatbots. By the end, you'll have mastered AI pipelines, vector databases, and deployment techniques using platforms like Google Cloud Vertex AI and AWS Bedrock. Boktiar Ahmed Bappy created this course. Gen AI is one of the most in-demand skills nowadays across industries. If you look, all the industries

have started using gen AI in their product development. If you want to level up your skills and land a good job with a good package, you should definitely know about gen AI. If you're looking for a well-organized, complete end-to-end gen AI course, I have very good news for you: I recently published one. Here I have covered everything you need to know to master gen AI. This course covers basic to advanced concepts of gen AI, so here we are not only going to focus on the theoretical part,

we'll also be focusing on the practical implementation of gen AI. We'll explore different kinds of large language models, and along with that we'll create different kinds of gen AI based applications. So let me show you the course content. As you can see, and as I already told you, we'll start from the very basics of gen AI and cover up to the advanced parts. We'll start with an introduction to gen AI, so if you're completely new to this field, no

need to worry, I'll cover everything you need to know about gen AI. Then we'll learn about data preprocessing and embedding, because going forward, whenever you give your data to a large language model, you first have to know how to process that data and how to generate embeddings from it. To process our data we need to know some techniques, and we'll cover those techniques in this section. Then we'll start with large language models: we'll

be learning about different kinds of large language models, both commercial and open source. We'll start with the Hugging Face platform and its API, because Hugging Face has all kinds of large language models; whether it's a commercial model or an open source model, all kinds of models are available on the Hugging Face platform. Then we'll also learn about OpenAI and its platform, because nowadays OpenAI is evolving a lot: they

are coming up with different kinds of models, like image models and language models. So in this course we'll learn about the complete OpenAI platform so that you can use it to implement any gen AI based application. We'll learn about prompt engineering, because the prompt is everything for a large language model: if you design a good prompt, you will definitely get a good response from the model. So here we'll

be learning how we can design an efficient prompt, and we'll also learn about the different kinds of prompting used with large language models. In this course we'll master vector databases. We'll learn about different vector databases like Pinecone, Weaviate, Chroma, FAISS, and so on. A vector database helps you create a knowledge base, so whenever you create any kind of RAG based application, any kind of LLM powered application, this vector database concept will help you a lot. In this course we'll also master different kinds of gen AI frameworks,

like LangChain, LlamaIndex, Chainlit, and so on. These frameworks will help you implement different kinds of gen AI based applications. In this course we'll also learn one very important topic inside gen AI called retrieval-augmented generation, that is, RAG. We'll learn how to create different kinds of RAG based applications with our custom data, and we'll also learn how to fine-tune any kind of large language model on top of our custom data. After completing all the topics inside gen AI, we'll be starting with

some end-to-end project implementations with deployment. We'll implement these projects from scratch using modular coding. At the end of the course we'll also discuss LLMOps; inside gen AI, LLMOps is the most trending topic nowadays. We'll learn how to use different LLMOps platforms, like Bedrock and Vertex AI, to implement efficient large language model based applications. So yes, this is

the complete curriculum of our course. If you want to start your career in gen AI, this would be an amazing course for you, so make sure you complete it till the end, and this is my promise: you will become a champion in gen AI. With that, all the best, and I will see you in the course. Hi everyone, welcome to the generative AI course. My name is Boktiar Ahmed Bappy, and I am a data scientist and mentor. I have more than four years of working experience in the field of machine

learning, deep learning, generative AI, MLOps, and so on. I have worked with many multinational companies as a developer, and I have also worked with many edtech companies as a mentor. I have taken lots of batches on generative AI, machine learning, deep learning, computer vision, natural language processing, and so on. Throughout the entire course I'm going to be your mentor, and since I already have some experience from the industry, I'm going to share my knowledge and experience with you. In this course we'll start with the very basic

concepts of generative AI and go all the way to the advanced parts. If you're a beginner and want to start your career in gen AI, this course is for you. I have designed this course in such a way that anyone coming from any domain will be able to understand it. Whether you're from a tech or non-tech background, it doesn't matter; if you are interested in generative AI, you just need to start with this course and I will take care of everything. Apart from

the theoretical understanding, I'm also going to show you lots of practicals in this course. We'll do lots of hands-on work and solve different problem statements so that your understanding becomes clearer. Hello everyone, welcome back with another video. In this video we'll discuss what generative AI is. Before discussing generative AI, let me first introduce some real-world applications of generative AI that you are using in your day-to-day life. I think you know about ChatGPT, Google

Gemini, and Meta Llama, right? These are the applications you are using in your day-to-day life. I hope everyone has used ChatGPT at least. In ChatGPT we can give any kind of prompt: we can hold a conversation, generate content, generate code, and do text summarization. Any kind of task we can perform in ChatGPT. Similarly, Google has developed its own product called Gemini, so with the help of Google

Gemini we can also perform the same things we usually do in ChatGPT, and we can perform the same things with the help of Meta Llama 2 as well. ChatGPT is developed by OpenAI, Google Gemini is developed by Google, and Llama is developed by Meta, that means Facebook. So all the tech companies are working on generative AI. Day by day they are improving it, bringing out different large language models, and launching different applications for the users. Nowadays

all the companies are using generative AI to implement their products. Currently gen AI has a lot of popularity in the market, so that's why you have to know what gen AI exactly is and how it works, and if you want to work in the current job market, you definitely have to learn about generative AI. All the applications I showed you here, like ChatGPT, Google Gemini, and Meta Llama, use something called a large language model in the back end. With the help of large language

models they're able to perform these operations. Now, first of all, let's try to understand what generative AI exactly is. Generative AI generates new data based on training samples. A generative model can generate image, text, audio, video, and other data as output. I think you have already worked with machine learning, deep learning, computer vision, and so on, right? There you used something called a discriminative model. What is a discriminative model? Based on some input, it predicts the output for that particular data.
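
To make the discriminative idea concrete, here is a minimal sketch in Python. It is not from the course: the toy cat/dog measurements and the function names `fit_centroids` and `predict` are assumptions chosen for illustration, and nearest-centroid is just one simple stand-in for a prediction model. It shows the shape of the task: labeled input/output pairs go in, and a predicted label comes out for a new input.

```python
from collections import defaultdict
from math import dist


def fit_centroids(X, y):
    """Learn one mean point (centroid) per label from labeled (X, y) pairs."""
    grouped = defaultdict(list)
    for features, label in zip(X, y):
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the input."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical toy data: (weight in kg, ear length in cm) with a label for each row.
X_train = [(4.0, 6.5), (3.5, 7.0), (20.0, 12.0), (25.0, 11.0)]
y_train = ["cat", "cat", "dog", "dog"]

centroids = fit_centroids(X_train, y_train)
print(predict(centroids, (4.2, 6.8)))  # prints: cat
```

The model never produces a new animal; it only maps an input to one of the labels it saw during training, which is the contrast with generative models.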

So that was the discriminative model, and to train a discriminative model we used something called labeled data. That means whenever we prepared the data for training, we prepared the input data as well as the output data; you have to pass both the X data and the Y data. If you give both input and output, the model learns the relationship between them. It learns the pattern from the data, and then it is able to

predict something on top of the test data. That was the idea in the discriminative model, that is, the prediction model. But generative models are different: they can generate new data based on the training samples. Here you'll be giving training samples in the form of unstructured data, and based on this sample data the model will try to generate some new data. That means inside gen AI, whenever you give any kind of unstructured data as input, your generative model will try to understand this unstructured data, it will

learn the pattern from the unstructured data, and it will try to generate something from the samples you are giving. This is the idea, and the output can be anything: text, audio, video, and so on. As I already told you, in generative AI we work not only with text but also with images, videos, audio, and so on. All kinds of unstructured data can be used in generative AI. That's why generative AI is a very huge

topic. Inside generative AI we have generative image models as well as generative language models. That means you can work with language, that is, text, and you can also work with images, videos, and audio. There is another model you could consider, the generative audio model, but in the end you are converting the audio to a text representation, that is, a language representation, so I haven't mentioned the audio model separately. Audio is nothing but a frequency signal, and from that signal we can convert to a textual

representation. There are many APIs for this; even Google has an API that converts speech to text. With the help of speech-to-text you can easily convert any audio to a textual representation. So what is generative AI? Generative AI generates new data based on the training samples you give, and a generative model can generate any kind of output, whether image, text, or audio. Now let me

tell you how they got the idea for how a generative model can work. This idea was taken from real life. Let's say I give you 10 different books about cats and tell you to read all of them. Once you have read all 10 books, if I ask you anything related to cats, you will be able to give me the answer, because you have already studied cats from the 10 different

books I gave you, and that was enough data for you to learn about cats. That's why, if I ask anything related to cats, you'll be able to give me a response. Here you have become a cat model, and that is why you can answer whatever question I give you. A similar concept is applied in generative AI. They implemented a model called a generative model, and into that generative model they fed tons of data; that

means they trained that particular model on a huge amount of data. Then they asked it questions, and the model was able to give responses. That means here you are feeding tons of unstructured data, your model learns the pattern from that data, and at some point your model becomes capable enough to give a response based on the question you are asking. Now you can ask me why generative models

are required. I can give you a thousand reasons why generative models are required, but here I have listed some of them. The first thing to consider is understanding complex patterns in data. Nowadays people are using unstructured data a lot. Unstructured data can be text data, audio data, or video data. In today's world people use the internet widely, and on the internet they are generating a huge amount of unstructured data; they're

using different social media and different platforms, and that's how they're generating tons of unstructured data. It's very hard to understand the pattern in this kind of unstructured data with a traditional machine learning model, and that's why the generative model comes into the picture: it can easily understand the complex patterns in this kind of unstructured data. The second thing to consider is content generation. Your generative model can generate any kind of content: it can generate code, it can generate any kind of

story, any kind of music, any kind of video. I think nowadays you have seen that many applications have come to market where, if you give a prompt, it will generate the complete video for you. That means it is generating content based on the prompt you are giving. That's how, for content creators too, it is becoming one of the most used tools for content generation. Let's say you are a

blog writer. Now you don't need to write the blog from scratch. You can just pass the topic, and your model will generate the blog for you. Then you can modify the blog to fit your requirements and publish it anywhere. You can also generate any kind of video; you can also generate any kinds of skills. Everything is possible nowadays with the help of generative models. That is why content generation is one of the very important features

of generative models. Now, the third thing to consider is building powerful applications. As I already told you, we are already using tons of powerful applications in our day-to-day life, like ChatGPT, Gemini, and Llama. Just think about when we didn't have these kinds of applications: we had to complete our work manually. But nowadays, with these powerful applications, you can do any kind of work in a few

seconds. Let me give you one example. As a developer, whenever you get an error from your application, you can copy that error and ask ChatGPT, and ChatGPT will give you a solution for how to solve it. Previously, when we didn't have ChatGPT, we used to search for that error on Google, open Stack Overflow, and go through lots

of solutions, and then we would solve that error. Nowadays we are using these kinds of applications, and they are saving us lots of time. We don't have to spend lots of time fixing bugs; it is possible in a few seconds if we are using these powerful generative AI based applications. Those of you who have already studied artificial intelligence, machine learning, and deep learning may have already seen this kind of Venn diagram.

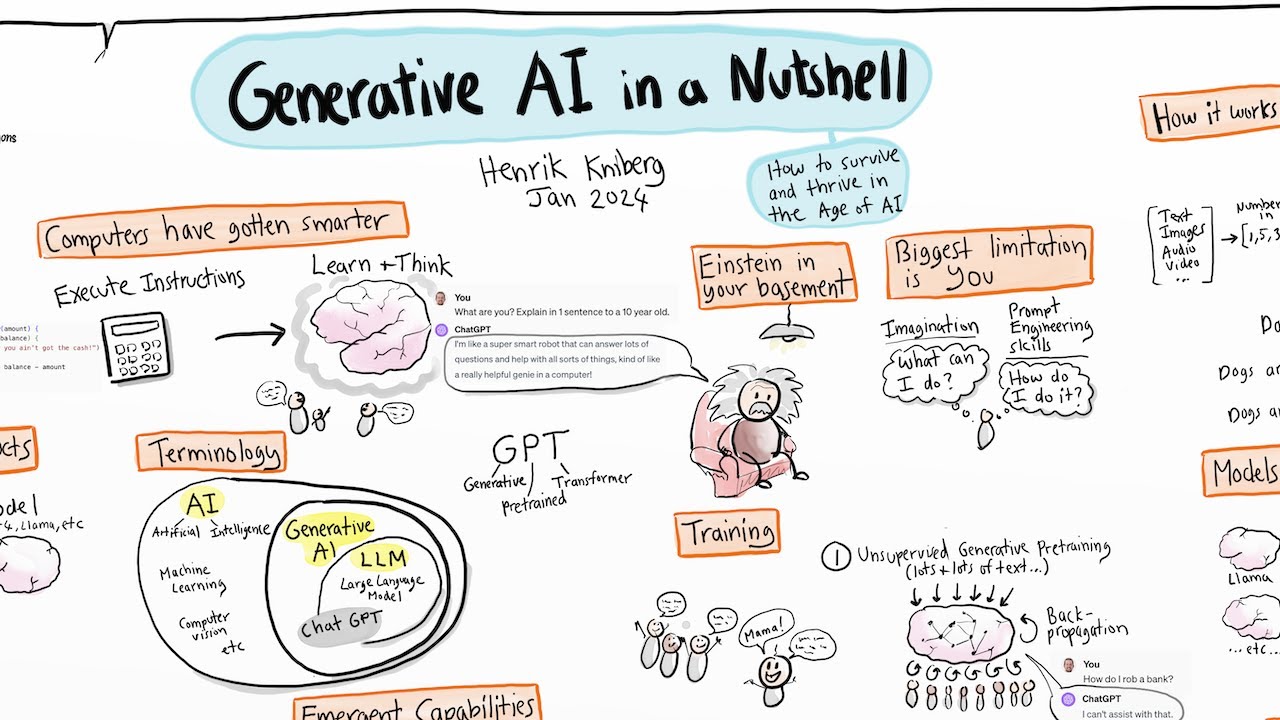

This is the Venn diagram of the complete AI field. You can see that machine learning is a subset of artificial intelligence, deep learning is a subset of machine learning, and gen AI is a subset of deep learning. I hope it is clear. Now let's try

to see the difference between a discriminative model and a generative model. A discriminative model is just a prediction model. Let's say you have given two inputs: one is a cat image, the other is a dog image. Your discriminative model will try to predict whether it's a cat image or a dog image; that means you are doing some prediction here. I think you have already used these kinds of models in deep learning. For example, whenever you performed image classification, there we

had different models like ResNet-50, Inception V3, MobileNet, and VGG16, and with the help of such a model we can perform the image classification task. On the other hand, a generative model can take any kind of sample data, any noise data, and from that noise data it will generate a completely new image, new data, for you. Let's say here you are passing some noise data; noise data means it can be any kind of unstructured data. What the generative model will do is it will try to understand the pattern;

it will try to understand the pattern from this kind of data and then generate a new data point for you. This is the idea of the generative model. I have kept another example here. Here we are using a discriminative model to classify the music type. We are giving it different kinds of music: rock, classical, and romantic. Your discriminative model, that is, your discriminative deep learning model, will try to classify whether

it's rock music, classical music, or romantic music. On the other hand, with a generative model you just give some music samples, and the generative model will try to understand the pattern and generate completely new music for you. Maybe you have already heard that AI has created music, AI has created songs, AI has written stories. How? That's how: they're using something called a generative model. They're feeding it lots of training samples, and the model is learning and generating new content with respect to the

data they are giving. This is the idea of the generative model and the discriminative model. I hope it is clear now. Now let me go a little more low-level on how things work here. Maybe you have heard of something called supervised learning. In supervised learning we give the X data as well as the Y data, that is, input and output, and we try to find the relationship between our input and output. This is called supervised learning, and whatever discriminative model we're

using is called supervised learning. On the other hand, we have another kind of learning called unsupervised learning. In unsupervised learning we used to perform something called a clustering technique. Here we are only giving the X data, that is, the input data, and my model will try to make clusters: this is one cluster, this is another cluster, this is another cluster. That's how it separates out my data, and that's how it finds the relationship between the

data. Now, you can consider this unsupervised learning as a generative model, since a generative model also works in a similar way. Instead of finding the input-output relationship, it will try to make clusters from the unstructured data we are feeding it. This is just a low-level understanding I'm giving you of how things work here; on top of that, they have added some more techniques to build these kinds of gen AI models. This is

the idea. So in summary, generative AI is a subset of deep learning, and generative models are trained on a huge amount of data. As I already told you, while training the generative model we don't need to provide any labeled data; we only give the unstructured input data, because whenever you are working with a huge amount of data, it's not possible to label it all. That's why you give unstructured data as input to the generative model. So here you can see, in generative AI we give the unstructured

data to the large language model for training. And from what I have explained so far, your discriminative model will try to predict whether it's a dog image or a cat image by finding the relationship. On the other hand, your generative model will try to make clusters, say a cat cluster and a dog cluster, and with the help of that it will be able to generate new content for you. I think you have heard of something

called a GAN, the generative adversarial network. A GAN is also a generative model, because you give it noise data, and from the noise data itself it is able to generate new content, new data. This is the idea of GANs. So far I have told you about the generative model, but what exactly is this generative model? Here, the generative model is nothing but a large language model, which is also called an LLM. A large language model is a foundational machine learning model that uses deep learning algorithms

to process and understand natural language. These models are trained on massive amounts of text data to learn patterns and entity relationships in a language. It can also be considered for image data, not only text data, because as I told you, we have two kinds of models: generative language models and generative image models. It is a language model that is responsible for performing tasks such as text-to-text

generation, text-to-image generation, and image-to-text generation. As I already told you, generative models can support all kinds of data: you can generate text to text, text to image, and even image to text. Everything is possible with the help of large language models nowadays. We also call such a model multimodal, meaning it can work across more than one kind of data rather than one specific kind, as in text-to-text, text-to-image, and image-to-text generation. Apart

from that you can perform some more tasks: language translation, text generation, text classification, sentiment analysis, named entity recognition. You can perform all these tasks with the help of only one model, which is our large language model, and the large language model is our generative model. This is the complete idea of generative AI. I hope it is clear now. Now let's try to understand what makes an LLM so powerful. As I already

told you, in the case of an LLM, one model can be used for a whole variety of tasks. You can perform text generation, chatbots, summarization, translation, and code generation. That means whatever tasks you have inside NLP, you can perform all of them with the help of one model, the large language model. That is what makes an LLM so powerful, because with the traditional models we used

to use, that is, plain language models, we could only perform one specific task. Let's say you want to do language translation: for that you need to train one language translation model, and that model can't do code generation or text summarization. But if you're using a large language model, you can perform all kinds of tasks with the help of one model. That's why an LLM is so powerful. As I already told you, if you're using a traditional

language model, it can only perform one specific task at a time. Let's say you want to build sentiment analysis: you have to train one model for sentiment analysis, which will only be able to tell whether a particular sentiment is positive, neutral, or negative. Let's say you want to perform language translation: you have to train another model for that, and that model will be able to translate any kind of text. That means you have created two separate models for two separate tasks. But if you're using a

large language model, you only need to train one model, and it can perform multiple tasks. This is the idea of the large language model. Let's see some large language models. Here you can see we have Gemini, GPT, XLM, T5, Llama, Mistral, and Falcon. Apart from these, many more large language models are available on the internet, and I'm going to show you each of them. Here I have just mentioned some large language models as examples, but I

will tell you how you can see all the large language models available on the internet. I will also show you how you can access those models and how you can use them to create your application. I'll try to clarify everything. So yes, this is the introduction to generative AI. I think you now have a clear-cut idea of what exactly generative AI is, what is available inside generative AI, and why it is the most used technology inside any kind of

software product nowadays. What a generative model uses is something called a large language model, and don't worry, I'll discuss large language models in detail: how a large language model works and what architectures they use. Now you can ask me, what is the end-to-end pipeline of generative AI? No need to worry, I will explain everything: what a pipeline is and why a pipeline is super important whenever you are working in the generative AI field. See, it's not that

whenever you get a problem statement from your company and receive the data, you directly apply a model on top of it. Before applying the model, we have to perform some specific tasks, and those step-by-step tasks are called a pipeline. A generative AI pipeline is a set of steps followed to build end-to-end gen AI software. That means you break the problem statement into several sub-problems and then try to develop a step-by-step procedure to

solve them. Since language processing is involved in generative AI, we would also list all the forms of text processing needed at each step. This step-by-step processing of text is known as a pipeline. That means whenever you get a problem statement from the company, first break it into several sub-problems, then try to solve them one by one. To solve them you have to perform some steps, and

those steps are called a pipeline in generative AI. Now let's see the end-to-end pipeline we have to follow for all the projects we'll be developing in the future. In the generative AI pipeline, these are the steps we have to follow. The first step is data acquisition, because data is everything here; in the end you have to work with data. So first of all you have to get the data, and this step falls into the data acquisition part. Then, after data acquisition,

we have to perform something called data preparation. What is data preparation? Here you will be cleaning your data. After that you'll start something called feature engineering. There are many steps you can follow inside feature engineering, but the main one we'll be doing is called text representation: you represent your text as vectors, that is, numbers, so that your model can take it as input. After that you'll do the modeling: you have

to select different models and fit the data to them. After modeling we have to evaluate the model, because unless you evaluate the model on test data, you won't be able to decide whether it is suitable for production. Then, once you have your production model, you can do the deployment: you can host your model so that other people

can use your model and give feedback. Based on that feedback, the last step is monitoring and model updating; you have to keep monitoring your application. I think you saw this in ChatGPT: previously, whenever you gave a prompt, it would sometimes show you an input form asking whether you are enjoying ChatGPT

or whether you want to give any feedback. They were taking that input in real time. This is called monitoring: they're monitoring whether the application is working fine in production. If something is going wrong in the application, say users are giving negative feedback, they will try to update the model again. So it's not like you are only building your model and only deploying your model;

it's not like that. You have to monitor the model in production, and based on the feedback you have to keep updating the model itself. This is the complete idea, and these are the steps involved in the generative AI pipeline. I hope it is clear. Now let's break down the pipeline steps one by one and try to understand what we have to do in each step. The first step was data acquisition. Inside data acquisition you can follow some steps to collect your data. The first thing you

have to check is whether you have available data or not. Available data means, let's say, you directly have a CSV file, a text file, PDF documents, DOCX documents, or you might also have Excel XLSX files. If you have any other file format apart from these, that's completely fine, but first of all you have to check whether you have available data or not. This is the idea. Now, if you don't

have the available data, you have to look for other data. Other data means you can collect the data from a database, or you can check on the internet whether someone has published a dataset. You can also use an API: let's say someone will give you the data, but you have to fetch it through the API, so you collect the data from the API itself. Then you can perform something called scraping, that means web

scraping. Let's say you don't have the data in a database, on the internet, or behind an API; then you can do web scraping, which means you'll be scraping the data from a website. Now there is another possibility: you have no data at all. What will you do then? You have to create your own data. Creating your own data means either you can run a survey and collect the data,

or you can use a large language model to generate the data. Nowadays you will see people using an OpenAI GPT model to generate data, so with the help of an LLM you can also generate your own data. But whenever you are creating your own data, there is a possibility that you end up with too little of it. So note this: whenever you are collecting your own data, your data quantity

might be small. So let me write it here: if you have less data, you can perform data augmentation. Data augmentation is nothing but augmenting the data you already have to create more of it. The first augmentation technique you can perform is replacing words with synonyms. Now, how do you replace with synonyms? Here is one example I'll give you:

let's say 'I am a data scientist' is my text. You can replace a term with a similar one, so here you can write 'I am an AI engineer'. That means you are replacing this particular entity with that entity. This is called replace with synonyms. I hope you got it. Here you will mostly be working with textual data, but if you have images, there are lots of image augmentation techniques as well. Let me

show you. You can simply search for image augmentation, and there are different techniques you can follow. Let me show you one example. Let's say this is your original image. You can do a horizontal flip, a vertical flip, a rotation of the image, or a negative rotation. You can do a blur operation, change the brightness, add some noise, or darken the image. So that's

how you can change the original image and make different variants. This is called image data augmentation. As I already told you, in GenAI we use all kinds of data: it can be textual data, image data, or audio data; you can consider anything here. Now the next technique you can apply is called bigram flip. You can ask me, sir, how do I perform a bigram flip? It's very easy. Let's say I'll give another example:

'I am Bappy.' You can write this sentence as 'Bappy is my name.' This is called bigram flip, and that's how you can also do data augmentation, because in the end you are increasing the data. Whenever you are increasing the data, it should keep its meaning; if it doesn't have meaning, the data is of no use. So you have to make sure that whenever you perform these techniques the data still makes sense. I hope you're getting my

point. Now the third technique you can perform is back translation. How do you perform back translation? Let me show you. I'll open Google Translate. Here I can write one sentence, or I can just copy a story. Let me copy this text and paste it here. You can see it is translating to Bengali. Now I'll copy this Bengali

translation, select Bengali as the input language, and paste it here. Now you can select any other output language; let's say I select Spanish. See, it is giving Spanish text. Let me copy it. Now I can come back, paste the Spanish text, and convert it to English again, so let me select English. You have your English text now. If you look carefully, some

of the words are different from the text you copied originally. That is what back translation does: you convert from one language to another language, from that language to another, and then back to English. If you translate multiple times like this, you will see that some words have changed. And this works when you have lots of text, lots of sentences, not when you are copying only one or two lines. If you have multiple lines of text, that

is when you will be able to see the difference. I'm only showing you how back translation works, because there are many APIs you can use from Python for this nowadays; I think a translation API is already available, and with its help you can do the translation. Let's say you have English text: first translate it to Hindi, Hindi to Spanish, and Spanish to English again. That's how you can do back translation and increase the data. So

this is another amazing data augmentation technique you can follow. I hope that is clear. The last technique you can follow is adding additional data, or additional noise. How can you add additional noise? Let's say you have one sentence, 'I am a data scientist.' To this sentence you can add one more line, say 'I love this job.' This is the additional data, or additional noise, you have added to your data. This is again a data augmentation technique, and again it's an amazing technique you can follow.
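Three of the text augmentation techniques described above (replace with synonyms, bigram flip, and adding extra text) can be sketched in a few lines of plain Python. This is only a minimal illustration under stated assumptions: the `SYNONYMS` table and all function names are hypothetical, and `bigram_flip` uses the literal reading of the term (swapping two adjacent words), which is simpler than the paraphrase shown in the spoken example. Back translation is left out because it needs an external translation service.

```python
# Hypothetical, hand-written replacement table; a real project would use a thesaurus.
SYNONYMS = {"a data scientist": "an AI engineer"}


def replace_with_synonyms(text, synonyms):
    """Swap each known phrase for its listed alternative."""
    for phrase, alternative in synonyms.items():
        text = text.replace(phrase, alternative)
    return text


def bigram_flip(text, index):
    """Swap the adjacent words at positions index and index + 1."""
    words = text.split()
    if 0 <= index < len(words) - 1:
        words[index], words[index + 1] = words[index + 1], words[index]
    return " ".join(words)


def add_noise(text, extra_sentence):
    """Append one extra sentence to the original text."""
    return f"{text} {extra_sentence}"


original = "I am a data scientist"
print(replace_with_synonyms(original, SYNONYMS))  # I am an AI engineer
print(bigram_flip("today I went home", 0))        # I today went home
print(add_noise(original + ".", "I love this job."))
```

Each function returns a new variant of the input, so the variants can be added to the dataset alongside the original. As the lecture stresses, every generated variant should still be checked for meaning before it is kept.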

Altogether, these are the steps you can perform inside data acquisition. First check whether you have available data or not. If you don't, try to find other data. If you still don't have any, create your own data; for this you can use a large language model, and I will also show you how to generate data with one. After generating it, if you have too little data, you can perform something called

data augmentation, and inside data augmentation these are the techniques you can perform. So this is the first step of the pipeline. Now let's discuss the second step, which I think you remember: data preprocessing. Inside data preprocessing you can perform cleanup operations: you can remove HTML tags, remove emoji, and do spelling correction. These are the techniques you can perform inside cleanup. Now there is another step you can follow, called

basic preprocessing. I'll tell you which basic preprocessing steps are available, and I'll also show you in the practical how to perform them. Then you can also perform something called advanced preprocessing, because the applications we have nowadays, like ChatGPT and Gemini, are advanced large language model applications, and for these we also have to perform some advanced preprocessing techniques. Let me show you. If I open ChatGPT, see,

if I give any kind of emoji to ChatGPT nowadays, it is still able to understand it. Let's say I give this 'haha' emoji. If I send it to ChatGPT, see, it automatically replies 'What's so funny?' That kind of text we also need to handle, because whenever we collect text data it will have emojis, HTML tags, and many other things. So sometimes it is also necessary to keep the emojis, because, as I told you, these kinds

of applications are advanced, so they also understand emojis and any kind of text, and we need to handle that kind of text too. Now, inside basic preprocessing, the very important thing you have to perform is tokenization. There are two kinds of tokenization: one is sentence-level tokenization and the other is word-level tokenization. I'll be discussing each of them, no need to worry. And there are some optional preprocessing steps available.
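Before the optional steps, here is a minimal sketch of the cleanup operations described above: stripping HTML tags and, optionally, emoji. It uses only the Python standard library. The function name `clean_text`, the `keep_emoji` flag, and the emoji code-point ranges are assumptions made for illustration; the ranges cover common emoji blocks but are not exhaustive. Spelling correction is omitted because it needs a dictionary or an external library.

```python
import html
import re

# Anything that looks like an HTML tag, e.g. <br/> or <p class="x">.
TAG_RE = re.compile(r"<[^>]+>")
# Assumed, non-exhaustive emoji ranges (pictographs plus misc symbols/dingbats).
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")


def clean_text(raw, keep_emoji=False):
    """Remove HTML tags, decode entities, optionally drop emoji, tidy spaces."""
    text = TAG_RE.sub(" ", raw)        # replace tags with a space
    text = html.unescape(text)         # turn &amp; into & and so on
    if not keep_emoji:
        text = EMOJI_RE.sub("", text)  # drop emoji characters
    return re.sub(r"\s+", " ", text).strip()


review = "<p>Great movie \U0001F602<br/>Loved it &amp; more!</p>"
print(clean_text(review))                   # Great movie Loved it & more!
print(clean_text(review, keep_emoji=True))  # emoji is preserved
```

The `keep_emoji` switch reflects the point made in the lecture: for LLM-based applications it is sometimes better to keep emoji, since they carry meaning the model can use.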

So inside optional preprocessing, the first thing you can perform is stop word removal. The second thing you can perform is stemming, which is used much less nowadays. The third is lemmatization, and lemmatization is used more. I'll explain what stemming and lemmatization are when I show the practical. The fourth thing you can perform is punctuation removal. Punctuation means, let's say, you have a question mark, you have a dot,

you have a comma, an exclamation mark, a dollar symbol. These things are punctuation, so you also have to handle the punctuation. Then, fifth, you have to perform lowercasing. The sixth thing you can perform is language detection, because nowadays if you open ChatGPT or Gemini and ask in any language, it will first detect which language you are asking in, and based on that it will give you the reply in the same language. So they are also applying

this language detection technique in the back end. That's how a real-world product is implemented. It's not that you get the data and directly apply the model. You have to follow the entire pipeline to build end-to-end software. That's why I'm showing you why this GenAI pipeline is super important and which steps are involved in it, so that after learning it, it will be easy for you to develop any kind of software in the future. And this

is my promise: if you understand this and can do this, you can design any kind of software in the future. Because what is generative AI? Generative AI is nothing but a technology, and you have to learn to use that technology: how to use a large language model, how to prepare the data for it, how to use LangChain, how to use a vector database. You have to connect everything together and then build one application. That is the whole idea. But the root of it lies inside the

pipeline: how you're designing the pipeline, and whether the pipeline has all the steps or not. I hope you're getting my point. So now I think these steps are clear, but some of them may still be confusing: what is stemming, what is lemmatization, why do we have to perform the lowercase operation, and what is this tokenization? Let me discuss all of them one by one. First of all, let me discuss tokenization. Let's say you have a sentence

here, 'My name is Bappy.' This is a complete sentence. Whenever I convert this sentence to numbers, that means to a vector representation, I first have to do the tokenization. Tokenization means you take the individual words, like this: 'My', 'name', 'is', 'Bappy'. This is called word-level tokenization. That means you had one complete sentence, you did the tokenization, and you got the words as tokens.
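Word-level tokenization as just described, together with sentence-level tokenization (the second kind named earlier), can be sketched with regular expressions from the standard library. This is a simplified illustration, not what a production tokenizer does: the function names are hypothetical, and the sentence splitter assumes that every sentence ends with `.`, `!` or `?` followed by whitespace, so abbreviations such as "Dr." would be split incorrectly.

```python
import re


def word_tokenize(sentence):
    """Split a sentence into word tokens; punctuation marks become their own tokens."""
    return re.findall(r"\w+|[^\w\s]", sentence)


def sentence_tokenize(text):
    """Split text into sentences after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


print(word_tokenize("My name is Bappy"))
# ['My', 'name', 'is', 'Bappy']
print(sentence_tokenize("My name is Bappy. I am a data scientist."))
# ['My name is Bappy.', 'I am a data scientist.']
```

Libraries such as NLTK or spaCy provide more robust tokenizers; the regex version is only meant to make the two granularities concrete.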

Now you can take each word and convert it to a number. There are some techniques for that, and I'll tell you which techniques you can follow to convert your text to a numeric representation. There is another kind called sentence-level tokenization. Let's say you have text like this: 'My name is Bappy. I am a data scientist.' If I perform sentence-level tokenization, I have to split it sentence-wise, so it will come out like this: My name

is Bappy, and that is one token; then I am a data scientist, and that is another token. That means I have two tokens in the list. This is called sentence-level tokenization. We'll be using word-level tokenization a lot. Sometimes you have to use sentence-level tokenization, and sometimes you'll be using word-level tokenization, but in most of the applications I have seen, people use word-level tokenization. So this is what tokenization is. Now let's

try to understand what stemming is. Stemming is nothing but bringing a word to its root form. Let's say we have three words: play, played, and playing. If you look at them carefully, is the meaning the same, yes or no? The meaning is the same; only the verb form is different. Instead of taking the three words separately, I can convert them to a root form, and the root form is nothing but play, because play carries the meaning in all three. So this is called

stemming. That means you have different forms of a word, different verb forms, and you are trying to bring them to one root representation. It's important because it reduces the dimension when you convert your data to a vector representation. If you have three words, it will create three separate dimensions, but if you convert them to one word, it will have only one dimension. And we know that in artificial

intelligence, dimensionality is an issue. If you have more dimensions, your model can get confused, because there is the concept of the curse of dimensionality, so you also have to take care of the dimension. That's why we perform the stemming operation. Lemmatization is the same; it works the way stemming does. The only difference is that when you perform lemmatization the root form of the word is readable, but when you perform stemming sometimes it won't be readable. I'll explain it when I do

the practical of it; then you will get what I'm trying to say. That is the idea. Now you can ask me what lowercasing is, because the earlier steps you already know. Why is lowercasing important, and why do you have to perform the lowercase operation? Let's say I write two sentences, 'Bappy is a very good boy' and 'bappy is a data scientist.' Here you can see the two names, Bappy and bappy, are the same, but here you can see

that in the first one the first character is a capital letter and in the second one it is a lowercase letter. As a human, you can consider these two names the same, but whenever you pass them to the model, that means to your computer, the computer will treat them as two different words, because here you are using a capital character and there a lowercase one. Every character has its own ASCII value, that means its ASCII or Unicode code, and based on that it will consider these two words separate. So at that time this

'Bappy' and this 'bappy' would be different, although they are the same. This is another issue, and that's why we perform the lowercase operation: whenever we have upper case, we convert it to lower case. Let me show you. We convert to lower case, and now you can see this 'bappy' and this 'bappy' become the same. If you pass them to the computer now, it will also identify that this 'bappy' and this 'bappy' are the same.
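The lowercasing, punctuation removal, stop word removal, and stemming steps described above can be sketched as follows. Everything here is a deliberately small illustration: `STOP_WORDS` is a tiny hand-picked set rather than a real stop word list, and `naive_stem` is a toy suffix stripper written for this example, not the Porter stemmer or any library algorithm. It is only meant to show the idea of reducing play, played, and playing to one root.

```python
import string

# Tiny, hand-picked stop word set (an assumption for this example).
STOP_WORDS = {"a", "an", "the", "is", "am", "are", "and", "of"}


def normalize(text):
    """Lowercase the text, strip ASCII punctuation, and split on whitespace."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    return text.split()


def remove_stop_words(tokens, stop_words=STOP_WORDS):
    """Keep only the tokens that are not in the stop word set."""
    return [token for token in tokens if token not in stop_words]


def naive_stem(word):
    """Toy stemmer: strip one common suffix if at least 3 letters remain."""
    for suffix in ("ing", "ed", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


print(normalize("Bappy is a very good boy!"))
# ['bappy', 'is', 'a', 'very', 'good', 'boy']
print(normalize("Bappy") == normalize("bappy"))              # True
print(remove_stop_words(normalize("bappy is a data scientist")))
# ['bappy', 'data', 'scientist']
print([naive_stem(w) for w in ["play", "played", "playing"]])
# ['play', 'play', 'play']
```

After lowercasing, the two spellings of the name map to the same token, which is exactly why the step is applied before building a vocabulary. A real stemmer or lemmatizer handles many more cases than the three suffixes shown here.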

So that's why we perform the lowercase operation, so that my model won't get confused. This is the idea. I think I have clarified most of the things, and I'll also show you everything in the practical, so no need to worry. Now, apart from basic preprocessing, I also told you we have something called advanced preprocessing. Let's see which steps are involved in advanced preprocessing. Inside advanced preprocessing we

have something Called parts of space tagging parts of speech tagging so if you're good with English grammar I think you know what is parts of speech okay then you are also having something called parsing then you have something called Co reference okay Co reference resolution now if you want to understand them let me show you one uh image I think then this part would be cleared so guys I think you can see this image so here you can see uh this is the um input text we are having chaplain Wrote directed and composed the music

for most of his films. Now, if I perform tokenization and lemmatization: I already told you what tokenization is, you take the individual words, and see, I have taken the individual words here. Lemmatization means it brings any kind of verb to its root word. You can see that if I bring 'wrote' to the root it becomes 'write', 'directed' becomes 'direct', and 'composed' becomes 'compose'. So that's how you can understand

tokenization and lemmatization. Now, what is POS tagging? POS tagging means part-of-speech tagging, the thing I showed you under advanced preprocessing. It means you are assigning what is a noun, what is a verb, what is an adjective; that's how you define the different parts of speech. If you're good with grammar, I think you will understand it better than me. Just consider that here you are tagging everything: all the nouns, verbs, then

pronouns, everything you are tagging. Now there is another one called parsing. This is the parse tree: you create a tree, and inside this tree you have all the words of the sentence; you can see Chaplin, wrote, directed, all the words. In the parse tree you again assign the POS tags, and apart from that you also divide the words into separate groups. These are advanced grammatical rules you have to learn, but no need

to worry. When you work in the industry, you will have people with domain expertise, let's say language experts, and they will help you do it. I know that as a developer you may not know English grammar in this depth, but when you are designing the software, the language experts will help you with how to perform the parsing, how to perform the POS tagging, everything. Now this is the last example, coreference resolution. So

coreference resolution means, let's say, Chaplin wrote, directed, and composed the music for most of his films. Here 'Chaplin' and 'his' indicate the same person. This is called coreference resolution: sometimes your model has to understand that 'Chaplin' and 'his' mention the same person. Nowadays, if you look at ChatGPT or Gemini, these kinds of applications are able to understand

this kind of input, this kind of prompt, so in the back end they are handling it like that. I hope it is clear. Now, the third step in the GenAI pipeline is feature engineering. Inside feature engineering you can perform text vectorization, and to perform text vectorization you can follow techniques like TF-IDF, bag-of-words, and word2vec. You can also perform something called one-hot encoding. Then you can also use some Transformer

models to perform the text vectorization as well, because the others are very old techniques. If you're using a classical machine learning or deep learning model, you can use those techniques, but if you are creating an advanced application, say with a large language model on a Transformer-based architecture like the encoder and decoder architecture, you have to use a Transformer model to perform the text vectorization. I hope it is clear. So these are the steps available in feature engineering. Inside feature engineering you'll just try to convert

your text to a vector representation. It can also work with images. Let's say you have an image, and in that image you have a cat. An image is nothing but pixels, so if you look at the image you will see different pixel values. In that case you can use any kind of vision transformer model to convert

this image to a vector representation and pass it to the model. That is the idea. Now the fourth step: after feature engineering you can do the modeling. Modeling means you'll be training the model, that is, you'll fit the data to the model. Here you can choose different kinds of models: either you can use an open-source LLM, that means large language model, or you can use a paid one, a paid

model. So what is the difference between open source and paid? If you're using a paid one, the model is available on a server, which means you don't need to download the model to your machine. Instead, you only pass the data. Let's say you are using OpenAI, because I think you know OpenAI provides paid models, the GPT models. In OpenAI you upload your data, and on their server

it will train the model. You don't need to download the model to your machine and you don't need to train it there, because I know most people won't have a good GPU in their system. It's usually not practical to buy an expensive GPU for training these kinds of large language models, because a large language model is very large; the parameter count is huge. We can't download it to our machine and train it there, so that's why most of the time we'll be using

a cloud platform to train the model. At that time you can use a paid model, because if you're using a paid platform they take care of everything; you only have to upload your data there. But if you're using an open-source large language model, you have to download the model to your machine, prepare everything, set up the environment, and then train the model, and for this you need a good GPU, a good CPU, good memory, everything. And

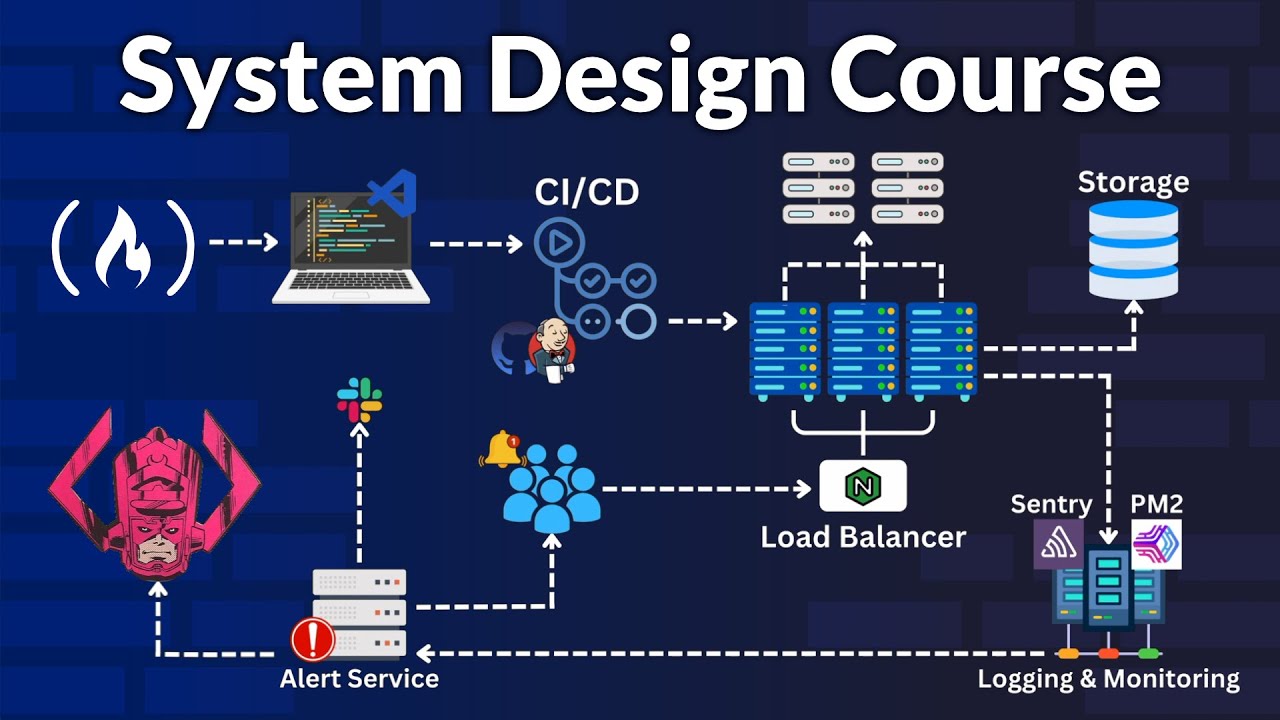

after training this model you have to deploy it on a cloud platform manually, and again you will face challenges there: you have to buy a good instance, then set up load balancing and so on, and then deploy the model. But if you're using a paid cloud platform, you directly get an option to deploy the model on their server, so that people can access the model on their platform. So you don't

need to take care of the infrastructure or the instance; they take care of everything. That is the difference. We'll also see how to use a paid model and how to use an open-source model, and I will discuss the difficulties you face with an open-source LLM and with a paid LLM. I'll try to clarify everything, no need to worry. After you train your model, you have to perform something called model evaluation. Inside evaluation you have

to perform two kinds of evaluation: one is intrinsic evaluation and the other is extrinsic evaluation. Intrinsic evaluation means you'll be using some metrics to evaluate the model. I think you know we have different metrics for evaluation: we have the accuracy score, the R2 score, the AUC-ROC curve, and so on. So after training a model you have to perform intrinsic evaluation, and this evaluation is performed by the

GenAI engineer, because they train the model and after training it they have to evaluate it with the help of these metrics. The extrinsic evaluation they perform after doing the deployment. This evaluation is applied during production: when the model is in production and people are using it, the extrinsic evaluation can be performed. As I already told you, ChatGPT will sometimes show an input form and ask

you to give feedback. And not only ChatGPT; you see it in many kinds of applications. Consider your phone keyboard: initially I think you won't get many suggestions, but after you have used that keyboard for a long time you will automatically get suggestions, because it is learning what kind of input you give. And sometimes it will ask for feedback: whether you are enjoying this app or not,

any feedback you want to give or not. Whenever you give a five or four rating, they understand that the application is working fine and there is no issue, but whenever you give negative feedback, they understand that there is some issue with the application. Then they will retrain the model and push it to production again. So that's how extrinsic evaluation works; that means

after deployment, after the model goes to production, you will be performing this extrinsic evaluation. This is the idea. And sixth, I told you we have to perform deployment, and inside deployment you will also be doing monitoring and retraining, because you do the monitoring through the extrinsic evaluation and, if something goes wrong, you retrain the model. So these are the things involved in the entire generative AI pipeline. I hope it is

clear. So first of all data acquisition, then data preparation, then feature engineering, modeling, evaluation, and deployment. And here is one thing you have to remember, because going forward I'll be using these terms a lot, so let me write 'common terms' here. Let me copy this text and paste it here; let's say this is my data. Now here you will see some common terms. The first term is corpus, the second term is vocabulary, and the

third term is documents, and the fourth is word. Now, what is a corpus? The corpus is nothing but the entire text; the entire text is called the corpus. And what is the vocabulary? The unique words. You can see this is a unique word, this is a unique word, and this one, and this one. If any word appears more than once, you take only the unique words.
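To make these terms concrete, here is a minimal sketch that builds a vocabulary from a small corpus and then uses it for a bag-of-words count vector, one of the vectorization techniques named earlier. The three-line `corpus` is invented for illustration (the text pasted in the video is not available), the function names are hypothetical, and each line of the corpus is treated as one document.

```python
from collections import Counter

# Hypothetical corpus: the entire text, with one document per line.
corpus = "my name is bappy\ni am a data scientist\ni love my job"

documents = corpus.splitlines()  # each line is treated as one document


def build_vocabulary(text):
    """Return the sorted list of unique words in the text."""
    return sorted(set(text.split()))


def bag_of_words(document, vocabulary):
    """Count how often each vocabulary word occurs in one document."""
    counts = Counter(document.split())
    return [counts[word] for word in vocabulary]


vocabulary = build_vocabulary(corpus)
print(len(documents))   # 3
print(vocabulary)
# ['a', 'am', 'bappy', 'data', 'i', 'is', 'job', 'love', 'my', 'name', 'scientist']
print(bag_of_words(documents[2], vocabulary))
# [0, 0, 0, 0, 1, 0, 1, 1, 1, 0, 0]
```

The vocabulary has 11 entries even though the corpus contains 13 words, because "i" and "my" each appear twice. The length of every bag-of-words vector equals the vocabulary size, which is why reducing the vocabulary through lowercasing and stemming also reduces the dimension.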

This is called the vocabulary. Then what is a document? A document is nothing but one row, that means one line. So up to here is one document, D1, then this is D2, then D3. These are called documents, so I can write: one line, or one row, is a document. And what is a word? A single word is called a word. You can see this is a single word, and this is a single word. So these are the common terms you

have to remember, because going forward, whenever we are playing with the data, I may use these common terms, and then you won't be confused about what a corpus is, what the vocabulary is, what documents are, and what a word is. That's why I showed you this here in this video. So yes guys, this is the generative AI pipeline, and here I have just shown it to you theoretically. In the next video we'll be doing the practical of it, like how we can perform

different text cleaning techniques and different text preprocessing techniques. After that we'll also see how we can perform different feature engineering techniques. What I feel is that before starting with the large language model, we first have to understand the data and know how to prepare the data for the model; then we'll start with the large language model. So this part is very important before learning the large

language model and application building, so that's why I'm teaching you this part. In our previous video I already discussed the end-to-end generative AI pipeline, and there I told you what the use of data preprocessing is. If you want to use a model, that means a large language model, the first thing you have to do is the data preprocessing, because unless you process the data, how will your model understand it? That is the idea. So here we'll be learning various kinds of techniques to

clean up our data. For this I have created a beautiful Colab notebook, and there I have added all the examples you can use for the data cleanup operations. So guys, now let's see how we can do the data preprocessing part. As you can see, this is the notebook I already prepared. Here I'm using one dataset from Kaggle, so let me open the link. This is the link; the dataset name is the IMDb dataset,

and it has 50k movie reviews. If you are already from, let's say, a machine learning or deep learning background, I think you know about this dataset; it's a very common dataset. Why did I take this particular dataset? Because in it you will see a lot of unnecessary text. These are movie reviews, so what they did was scrape this data from the IMDb website. If you don't know it, this is the IMDb website. So on this

website you will see all the movie reviews and ratings. What they did is publish this dataset on the Kaggle website, so that anyone working in the field of, let's say, GenAI or natural language processing can use it. Now what you need to do is just download this data; it's around, I think, 27 MB. Just click on the download button and it will download. I already downloaded this dataset, so it is available inside my downloads folder, and I'm going to

upload it to my Colab notebook. Here, first of all, you have to connect this particular notebook: there is a Connect button, so just connect. And no need to worry, I will also share this notebook link in the resources section; from there you can open it. So my notebook is connected. First of all, let's import some libraries. I need something called pandas, because if you see the data, it's CSV data. So let me upload it and let

me show you. If you want to upload anything here in Colab, just right-click and there is an Upload button; click on Upload and upload the data. Now see, it's a CSV file. If I want to load any kind of CSV file, JSON file, or, let's say, Excel file, I can use the pandas package for that. You can see my dataset is uploaded. Now, to load the dataset, I have to assign the path. And see,

you don't need to execute this code. I added it for the case where your dataset is in your Google Drive. In that case you first have to mount your Google Drive; mount means you will be connecting to your Google Drive. Then you move to the folder where you kept your data with the help of the cd command; cd means change directory. After that you assign the data path. But here I haven't kept my

data inside my Google Drive; I kept it on the Colab disk. You can see this is the Colab disk I'm using; here you get around 74 GB of space, so you can also keep your data here. So I don't need to execute this code. I'll just go below, and if I check my current working directory, that means pwd, you'll see I'm inside content; content is the directory right now. Here I'll simply define my data path: I'll copy the path and let me paste it

here. Okay, that's it; now let me execute. Now if I load the data, I'm calling pd.read_csv, and see, it has loaded the data. If you want to see the shape of the data, this is the shape: you have around 50k movie reviews in this CSV file and two columns. Two columns means one is the review column and the other is the sentiment column. Sentiment means whether it's a positive sentiment or a negative sentiment; this is the kind of sentiment you will get
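
As a rough sketch of the loading step, the snippet below reads CSV data with pd.read_csv and checks its shape. It assumes the two columns are named review and sentiment, and it uses a three-row in-memory stand-in (made-up reviews) instead of the real 50k-row file so that it runs anywhere; in the notebook you would pass your own data path instead of csv_data:

```python
import io

import pandas as pd

# Stand-in for the downloaded CSV file (assumption: columns "review" and "sentiment").
csv_data = io.StringIO(
    "review,sentiment\n"
    '"A wonderful little film.<br /><br />Loved it.",positive\n'
    '"Terrible pacing, weak plot.",negative\n'
    '"Not bad at all!",positive\n'
)

# read_csv accepts a file path or any file-like object.
df = pd.read_csv(csv_data)

print(df.shape)          # (number of rows, number of columns)
print(list(df.columns))  # column names
```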

here. I hope it is clear. Now see, I have 50k movie reviews, but I'm not going to use all of them. I'm going to show you a demo of how we can perform the text pre-processing, and if I take all 50k reviews it will take a lot of time to process the text. So I will only take 100 examples: I'll take the first 100 examples, and on top of them I will perform all the text processing tasks. So this is

the code you can execute; it will load 100 examples. Now if I show you the shape, see, we have 100 examples and only two columns. Fine. Now if I want to show you the data, see, this is the data. I think you remember that in my theoretical class I was discussing some pre-processing techniques. Here you can perform HTML tag removal, emoji handling, and spelling correction; then in the basic preprocessing we saw that we can perform tokenization; then we had some

optional preprocessing as well, like stop word removal, stemming, lemmatization, punctuation removal, lowercasing, language detection, each and everything. The first thing we'll learn is how to perform the lowercase operation. Why is lowercasing important? I already explained it, I think you remember: let's say one of the names contains an uppercase character; it will treat the two spellings as separate names, as separate entities. That is the idea. So we have to bring everything to lowercase characters, and that's why you have to apply this lower operation. And

how do we perform the lower operation? I think you already know that in Python we have a function called lower, and with the help of lower we can do it. Let me show you one example. Let's say I'm taking review three, so here I'm taking row number three. You can see some of the characters here are uppercase: this is uppercase, this is uppercase. That's how you will see different uppercase characters in the text. Now if I

want to make them lowercase, first of all I have to select my column, that is, the column on which to apply the lower function; I have to apply it on my review column. First I'm converting everything to the string data type, then I'm applying lower, because lower is a string method; I think you already know that. Then whatever changes I'm making, I'm saving back into my column, that means I'm making the change permanent inside my
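
The lowercasing step could look roughly like this. It is a sketch on a tiny made-up DataFrame, and it assumes the column is named review and that the string step described above is pandas' `.str` accessor:

```python
import pandas as pd

# Tiny stand-in for the review data (made-up rows).
df = pd.DataFrame(
    {
        "review": ["A GREAT Movie!", "Not Good At All."],
        "sentiment": ["positive", "negative"],
    }
)

# Select the column, lowercase each value through the .str accessor,
# and assign the result back so the change is kept in the DataFrame.
df["review"] = df["review"].str.lower()

print(df["review"].tolist())
```

Without the assignment back to df["review"], the lowercased values would be computed and then thrown away.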

column; that's why I have assigned to review again. That is the idea. Now if I execute and show you the data, see, guys, all the characters have become lowercase. And if I execute that review three again, you will see that all the characters have been converted to lowercase. Now the next thing we'll learn is how to handle the HTML tags, that means how to remove them. For this, here I have

written a function, and here I'm using something called a regular expression, regex. Inside the regex you can give a pattern. Here the pattern says: if you find this kind of symbol, it's an HTML tag, so you have to remove it and replace it with an empty string. That is the idea. This is the function we can use. Now if I execute; let's say this is one text I have prepared, and you can see that in this text

we have lots of HTML tags. Now if I pass this text to my function, see, it automatically removes all the tags, and I'm only getting the relevant text. That is the idea. I prepared this notebook in such a way that you can use it as a template: whenever you need any kind of functionality, you can come here and copy the function. So please keep this notebook with you, because it is going to help you a lot whenever you'll be developing

the projects; this is going to help you a lot. Now if you want to apply it on the entire dataset, again just call the column name, let's say the review column; there is a function called apply, and inside that just pass this function. The apply function takes a function object, and here I'm giving the function object, so it will apply this function to every row you have in your dataset. Now see, if I execute,
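
A minimal sketch of such a tag-removal function. The pattern `<.*?>` and the name remove_html_tags are assumptions (the notebook's exact pattern is not shown in the transcript), and the sample text is made up:

```python
import re


def remove_html_tags(text):
    """Replace anything that looks like an HTML tag with an empty string."""
    pattern = re.compile(r"<.*?>")  # "<", then as few characters as possible, then ">"
    return pattern.sub("", text)


sample = "<p>A <b>great</b> movie.<br /><br />Loved it.</p>"
print(remove_html_tags(sample))

# On a DataFrame with a "review" column (hypothetical df), the same
# function object is passed to apply and the result is saved back:
# df["review"] = df["review"].apply(remove_html_tags)
```

Because the tags are replaced with an empty string, text on either side of a `<br />` ends up joined together; substituting a space instead is one option if that matters for your data.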

and the changes I'm making I'm saving permanently. Now let me execute. Now if I show you any random row, let's say row number seven, just see, there are no HTML tags in this text. You can pick any index; let's say if I show you row 10, nowhere will you see HTML tags. Now we'll learn how we can remove URLs. Let's say

you have some URLs in the text; how can we remove them? For this I'm again using a regular expression, and there is a pattern I have given: if you find this kind of text, http, then the slashes, or www, that means it is a URL, and you have to replace the URL with an empty string. This is the function; now let me execute. Here I have just listed some URLs: this is my YouTube channel URL, this is my LinkedIn URL, and google.com and kaggle.com. Okay, now these are

the texts I have prepared, one by one. Now if I pass any of these texts into my remove URL function, it will remove the URL. Let me show you: see, I'm giving text two, that means my LinkedIn URL, and now you can see only "check out my LinkedIn". I hope it is clear. Again I'm telling you, it's not mandatory. Let's say you need URLs in your data; then you can keep them. If you need the HTML tags, you can keep them. But if you don't need them, you can
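
A hedged sketch of a URL-removal function along these lines. The pattern, the function name remove_url, and the example.com addresses are all assumptions standing in for the notebook's own pattern and links:

```python
import re


def remove_url(text):
    """Replace http(s):// links and www. links with an empty string."""
    pattern = re.compile(r"https?://\S+|www\.\S+")
    return pattern.sub("", text)


text1 = "Check out my profile: https://www.example.com/in/someone"
text2 = "Search on www.example.org for more"

print(remove_url(text1))
print(remove_url(text2))
```

Note that this particular pattern only catches links that start with http://, https:// or www.; a bare domain such as google.com would be left in place and needs a broader pattern.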

remove them. As I already told you, nowadays we have advanced GenAI applications that support all kinds of text, like emojis and HTML; they support everything. So if you're creating this kind of advanced application, you need this data and you don't need to remove it. But sometimes you do need to remove it, so that's why I'm showing you how. And if you want to keep it, you can keep it; it's up to you. You have

to decide based on your project architecture. That is the idea. Now we'll learn how to handle punctuation. If you want to see the punctuation characters, there is a string package you can use: if you just write string.punctuation you will see all the punctuation characters that are available. What I have done is store these punctuation characters in a variable called exclude. Here I have written a function called remove punctuation with a for loop, so I'm just

looping through the punctuation characters one by one; the user gives the text, and wherever one of them occurs I replace it with an empty string. Now let me show you how it works. Let's say this is one text, a string with punctuation; you can see I have put in a lot of punctuation. Now if I pass this text to my punctuation function, it removes the punctuation; see, there is no punctuation now. I'm also calculating the time, that is, how much time it takes to

remove the punctuation, because there is another way to remove punctuation, and I'll tell you how. See, this is the function, guys. Here you can use text.translate, and inside that use str.maketrans and pass it the punctuation. It will take your text and remove all the punctuation. This is another approach. Now if I calculate the time of this function, you will see that this is the

time, which means this function takes less time than the previous one. The previous function uses a for loop that makes a separate pass over the text for every punctuation character, whereas translate removes everything in a single pass; if you are familiar with DSA concepts, you know what that extra work costs. So this approach is better. Let's say you're applying this logic to the 50k dataset: just think how much time the for loop will take. On the other hand, if you're using this method, it will
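
The two approaches can be compared with a sketch like the following. The variable exclude comes from the description above; the function names, the sample text, and the repeat factor are assumptions, and the timings will differ from machine to machine:

```python
import string
import time

exclude = string.punctuation  # all ASCII punctuation characters


def remove_punc_loop(text):
    """Loop version: one replace pass over the text per punctuation character."""
    for char in exclude:
        text = text.replace(char, "")
    return text


def remove_punc_translate(text):
    """translate version: a deletion table removes every punctuation character in one pass."""
    return text.translate(str.maketrans("", "", exclude))


sample = "Hello!!! This, is: a (string) with... punctuation?"
print(remove_punc_loop(sample))
print(remove_punc_translate(sample))

# Time both versions on a longer text built by repeating the sample.
long_text = sample * 10_000

start = time.perf_counter()
remove_punc_loop(long_text)
loop_seconds = time.perf_counter() - start

start = time.perf_counter()
remove_punc_translate(long_text)
translate_seconds = time.perf_counter() - start

print(f"loop: {loop_seconds:.4f}s  translate: {translate_seconds:.4f}s")
```

Both functions return the same cleaned text; only the amount of work done to get there differs.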

take much less time to perform the operation. If you want to see the time difference, you can also see it. Now if I show you my text, see, inside this text I have lots of punctuation. What I will do is use my remove function one, that means this function, and pass my entire review to it. See, it removes the punctuation; all the punctuation has been removed. You can also pass the entire data like that; you can also pass

the entire data and it will remove all the punctuation in it. Now we'll learn how to handle chat conversations. Sometimes when we are chatting we use lots of shortcuts. Let's say I want to write "as far as I know": I will type AFAIK. Then "away from keyboard" is AFK, and "as soon as possible" is ASAP. That's how we use lots of chat shortcut keywords. Here I have listed some of them; you