Welcome, everyone. I'm joined here by three Google Fellows, experts who've shaped some of the most critical technologies that power everything we do at Google. Our pace of innovation here at Google Cloud is rapid, but it's important to note that our speed today is the result of investments we've been making for decades. Today you're going to get a rare look behind the curtain at our approach to innovation as we learn from three of the Google Fellows who have been the architects of some of the incredible work over the past few decades. And we're truly

doing some remarkable things. We operate one of the largest terrestrial and subsea fiber networks, more than 2 million miles of fiber. That is over 10 times more than the next cloud provider, which enables us to deliver quality of service and performance that outpaces everyone else. Our next-generation firewall has 20 times the threat efficacy of other cloud service providers. With our internal systems Borg and Colossus, we built a disaggregated compute and storage architecture, which enables near-arbitrary scaling, best performance, and lowest costs. From the beginning, we have viewed our mission to organize the world's information

and to make it universally accessible and useful as a problem in artificial intelligence. This lens gave us a head start on understanding the fundamental problems in the space. It is the Google Fellows and technical leads like the ones we will be speaking to today that led breakthroughs like Transformers and AI accelerators like TPUs. The result of this is somewhat magical, but it's really just evidence of what the best of human ingenuity working in collaboration can achieve. Today we'll take you a little bit behind the scenes. It's an honor to welcome three of the brightest minds

in computing to our stage today. Each of them has made profound contributions to the world of technology, not just within Google but across the broader industry. Jeff Dean has been at the cutting edge of large-scale distributed systems and search for more than two decades and at the forefront of AI for more than 10 years, leading the development of critical infrastructure like TensorFlow and Pathways. Jeff has truly witnessed and led the evolution of AI from its early days to its current transformative power. Eric Brewer's career has spanned everything from pioneering distributed systems, responsible for

some of the earliest work on cluster-based scale-out computing, to ultimately laying the foundation for Kubernetes, truly the foundation of modern container orchestration. Carrie Grimes Bostock has been a cornerstone of Google's infrastructure for over 20 years, building and scaling systems like Borg and our data centers, which are at the heart of nearly every innovation we have at Google today. We're excited to hear from these experts as they share their perspectives on Google Cloud's journey. The dramatic impact of generative AI really can't be overstated, right? This is a once-in-a-generation inflection point, and it's impacting

all of us. It feels a bit sudden, but we know the technology behind it has been the result of decades of technology advancements. So can you each take me back to your early days at Google and share some of those early decisions and how they're shaping us today? Jeff, let's start with you. Sure. I think you're right: a lot of the things we're doing today in AI rely on the infrastructure and systems that we've built up and improved over many decades. You know, I think

in the earliest days of Google we were essentially a single-product search engine company, and we were working on how you scale that system up: how do you build systems that can crawl large amounts of the web, index it, and serve an ever-growing number of queries per second, or queries per day, however you want to measure it. At the same time we were trying to scale up the size of our index, because searching more pages is generally better for search quality, handling more

queries faster, so lower latency, and also updating the index more often. That combination of factors is really one of the exciting scaling challenges in computing. I think now we're at a similar point, where we're working on how you build really highly scalable AI systems at scales the world has not really attempted before. There are always things that go wrong and go right about that, but I think it's an exciting time in computing, and we now have these systems

that can see and understand language better than anything we've built before, and that's exciting for what we can do with computers. Yeah, the parallels with web search are actually really interesting, and you've seen this in terms of indexing and serving and the transition there. Going through that arc a second time is fascinating. Carrie, what do you think? Yeah, I completely agree with that. When we first were doing web search there were so many things we really had to come to terms with, right?

You know, it has to be a good-quality product, right? You can index everything in the world, which was a challenge in and of itself, but you have to be able to produce something sensible out of it that people can rely on, answers they think are high quality. You have to deal with spam and all kinds of junk that shows up and people trying to game the system. I think the challenges associated with that are really similar to some of the things that we're confronting with AI today: how do we make it, and then

how do we use it as a product? I think the second thing that really came up is just the uncertainty. When we first tried machine-learning-assisted index selection, that transition was a huge challenge, because there was so much institutional knowledge built up in the existing systems. We had to be ready to take a risk: we were not just going to use an algorithm to choose the index, we were going to do it online, all the time, trillions of pages

a day, and we had to be convinced that over minutes, hours, and days those decisions were the right decisions. That was a huge challenge, and I think we're dealing with something similar with AI today. There's the huge scale piece, which we have to get right, but there's also: how do you turn it into a product? How do you make it something someone wants to use? How do you manage all the data that you're moving around and make sure it's the right data in the right place at the right time? Yeah, the quality signals

and extracting those quality signals has been really important, and I think your point on explainability is also key; our lessons there have been really valuable to us over the years. Eric, how about yourself? What do you think here? Well, I view this through the same lens I always have, which is that I want to build large-scale systems that are easy to program and can solve really large problems. In fact, I was working on search even before Google existed, because it was the first problem we had

in the world that really required giant infrastructure, and that made it super appealing. That work in some sense indirectly led to Borg. But really what happened is that Google is very much focused on containers and processes and APIs, and all our software is built that way, so it's natural to ask how far you can push that model. It's a great model for giant-scale systems; it's really the only model that's been working well. That really led to Kubernetes, and its 10th

anniversary was recently, so that was a lot of fun. Now we're seeing Kubernetes used very widely for AI. I can't name the customers, but all the large players in this industry are building on top of Kubernetes; I think that's a fair statement. So it's great to see that transition, and GKE is by far the best way to do that today and is evolving very quickly in that direction. The growth of GKE and Kubernetes for AI workloads has been stunning. It's just been off the

charts. So I want to talk a little bit more about some of these early days at Google, and you represent a lot of experience here. I know there were some real challenges that we had to solve. What did we have to overcome? Maybe we'll start with you, Carrie. What are some of the hard problems you remember? Well, talking about Borg and our computing infrastructure: Borg started off as a way to replace babysitting your machines. The thing before it was actually called the Babysitter, and

it pretty much let you send your work out into the world and made sure that if a computer died or something happened, the work got restarted and the machine got sent for repairs. That was pretty much the scope of it; everything else was on you. That's a very simplified statement, but that's where we were, and Borg replaced that. But maybe 10 or 11 years ago we hit an inflection point, because Borg was really managing these high-availability workloads like web search, where you

go to the server and you need a response fast. We tried to rethink that and make it better, but, as with many systems projects, some things went better than others, and we had a chance to take stock of where we were going with this. I think it actually turned out to be a great opportunity for us, because we had to ask: what value does the system provide to us? How do we make this add more value? And one of the

realizations is that we were managing these massive data pipelines, which were not high availability at all. They were, but they weren't: they needed to run, and they needed to process a lot of data at the same time. But we realized, even accounting for special stuff like indexing, that when you looked at our clusters we were clearly doing work that was different from the canonical computer science work that people talked about in scheduling. And so we realized that to be cost effective and to do the work that needed to be done and

really respect the things that our internal developers wanted (they said, "I just want to send my job out and know it'll come back by Friday"; they had these different requirements), we needed to blend traditional high-availability scheduling with other options. To do that, we really had to be able to run our fleet at a much higher utilization, and we needed to be able to manage jobs much more tightly. As much as it was a moment when we

had to reevaluate what we were doing, it was a really important thing that led to the next 10 years and really supported some of our large data applications, our AI pipelines, our more nuanced scheduling, and also cost effectiveness as a whole as we went forward. Yep, very helpful. Eric, how about yourself? This brings up memories of many little problems we had to solve, but I think the one that probably plays best for this audience is from the early days of Kubernetes, when you're trying to get

people to use it and explain why they should use it. The argument that often carried the day was utilization: at the time a typical VM would be about 10% utilized, because either it's not the right size for the job it's doing or no one's looked at it recently, so it was the right size before but now it's not. When we got people to just move on to Kubernetes and put containers on machines instead of jobs on machines, suddenly it was easy to get to 50% utilization, and that ended up

being a great sales technique for us, obviously, because that's easy to sell. But it was not actually what we wanted to sell; we wanted to sell APIs and processes and containers. It doesn't matter, though: what people bought was efficiency, not surprisingly, and flexibility. Running different workloads on the same hardware is a hallmark of Borg, and it's another kind of efficiency you get from these systems. That's still continuing to this day, and AI is just a new kind of very complicated workload, but yet

again just another workload. Yeah, the evolution from Borg to Kubernetes, and now AI workloads running synchronously at scale, has been incredible to see. Jeff, you always have great war stories about the early days and the experiences we've gained. Yeah, I think a lot of the systems we've built over the years really are about different ways of managing data, transforming it, and getting derived information that you compute from that data. You can think of that as some simple MapReduce-style computation where you're

running on thousands of machines to compute some derived information, but you can also view modern AI workloads, training large models, that way: you're trying to take a large pile of raw data and train a model on it, in the most effective AI model architecture you can come up with, so that it can absorb whatever information you want to train it on and extract interesting insights from it. So I kind of view it that way, and even Google Search is sort of transforming

the raw pages of the web into something that you can all of a sudden run queries on and get useful information out of. I think that thread runs through a lot of the work we've done over many years, and it's an exciting thread. Transforming the world's information into an intermediate format that is easily queryable: that theme seems to underlie 25-plus years of work, represented by multiple aspects of this group. Really amazing. Jeff, I'll stay with you. I

want to talk about some of the early work that led to the observations that motivated TPUs. Can you give us some background on how you identified the need for TPUs and some of the early design principles that we came up with? Sure. Maybe I'll go back even further. I got introduced to neural networks in 1990, when I was a senior at the University of Minnesota. I had a class segment of a couple of lectures on the approach, and I thought it sounded

kind of interesting, so I decided to do a senior thesis on parallel training of neural networks. At that time people were excited about the ability of neural nets to solve really teeny problems in ways that other approaches couldn't, but they didn't really solve real-world-scale problems. So I thought, wow, if we could just have the 32-processor machine in the department work on this instead of one processor, it would be amazing. I spent a few months on that and came up with a couple of different approaches to parallelizing

neural network training. You could get them to scale a little bit, but they still couldn't solve real-world problems. I felt like the abstraction was the right one, though. Fast forward to about 2011, and I bumped into Andrew Ng in a micro-kitchen at Google. He's a Stanford faculty member, and he was spending a day a week at Google. I'm like, oh, what are you doing here? He's like, oh, I haven't figured it out yet, but at Stanford

my students are starting to get good results on neural networks using GPU cards. I'm like, oh, that's interesting. So we got to talking, and eventually I decided we should really try to scale up training of neural networks again, back to my undergrad thesis. We decided to use thousands of computers, because accelerators like GPUs or TPUs didn't exist yet in our data centers. But you could get 2,000 machines, 16,000 cores, all working together to train a single model, and pretty interesting things emerged,

where the model could, in an unsupervised way, learn high-level representations of things like cat faces or human faces or the backs of people walking, without ever being told what those were. We started to work on speech recognition models as well, and we were getting really good results using neural networks there, but they were computationally pretty expensive compared to what we were using in our production speech systems then. So I started to say, well, if the speech system gets a lot better in quality, people will start to

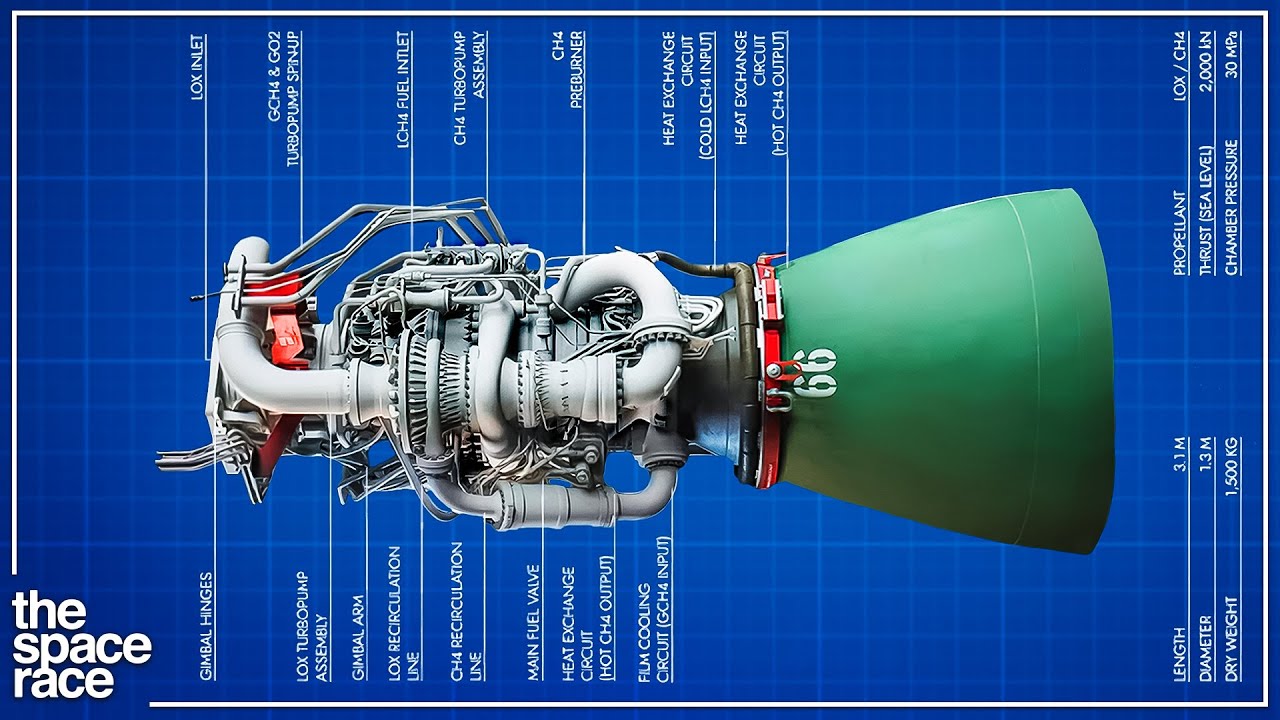

use it more, and if they use it three minutes a day and we have 100 million people doing that, the computational cost of just launching this improved neural-network-based speech system instead of the current production one would require us to double the number of computers Google had in our data centers. So we realized there was a better way, and it turns out we took advantage of two features. One is that neural network computations are actually quite specialized: they're essentially lots of different compositions of linear algebra. And they're also very tolerant to

low precision. So you can build computers like TPUs, tensor processing units, that are essentially very low-precision linear algebra machines. That's right. And you get really incredible performance advantages. When we did the TPU v1, the improvements over CPUs and GPUs of the day were about 30 to 80x. Unbelievable improvements. And we've had many generations of TPUs after that, but that was the genesis: that thought experiment of what happens if everyone starts using speech recognition or image models at Google. It's really unbelievable, Jeff. I mean, you can't escape

your youth: from your senior year and your senior thesis to bumping into someone in the micro-kitchen to building out image recognition and speech. You never know when your senior thesis will come in handy again. Stay in school, I think, is maybe the takeaway here. Eric, Jeff mentioned some of the design principles around TPUs and the lasting impact. What were some of the early design principles you've leveraged, and have they remained consistent throughout your journey? I think there are some that are deeply part of Google. The most obvious one is, you know,

stateless processes are easier to manage than stateful ones, and separating state management out from the rest of the infrastructure. I think there's a lot to be said for the value we get from hiding things behind load balancers. That is how we scale things out; it's also how we deal with a lot of high availability and fault tolerance. Google historically takes its machines down every month, all of them, right? That requires a lot. Not all at once, carefully coordinated, but nonetheless. You talk to

most modern cloud users and they would say, no, you can't take my machine down; it's got to stay up forever. That's my mental model of my machine. And Google's wisdom before I got here was that that's an unreasonable promise to expect, so let's assume that machines will go down all the time and build our higher-level systems to make up for that. I think that principle still applies very widely in many parts of Google today, and I think that was a good choice, and certainly one I

still advocate for. But it's not the default choice for people who grew up running their own babysat machines, and in clouds where it's kind of like, oh, I have one of these, it's very special, I've got to make it work forever. Those don't go too well together. One little point I'd add on that, which has been interesting: when we tried to build VMs on top of processes that get shot down every month, that would normally take the VM out too. So that's also how

we had to develop live migration. One of my first big projects at Google was how to live migrate these things so that we could move them around every 30 days rather than shoot them. That's still how Google Cloud works. I remember I learned this from you, actually, when I was a graduate student, Eric. In one of your early ventures you showed how you would do rolling reboots of machines every few days to keep them safe, reliable, and actually up, and this was 20-something years ago. Yeah, that

was driven mostly by the fact that there were large parts of the software stack, including the OS, that we couldn't control, and it would leak kernel memory. People weren't used to making applications that had lots and lots of network connections, and the network stack would leak memory. So you were going to run out of memory in way less than 30 days, and so you had to reboot. Same thing: the load balancers hide the fact that we're changing the machines all the time. But yeah, there was a period where every 3 days we had to reboot all

the machines. Yeah, that was definitely counterintuitive, but it's been the pattern. The OS... but of course that requires help from the vendor, right? Right. Very, very interesting. Carrie, along these exact lines, you've spoken about how Google's rapid evolution of products and infrastructure meant that the traditional CRUD approach (create, read, update, delete) was no longer practical. How did this environment shape your approach to Borg and data centers, and what were some of the design choices you made to accommodate this rapid change? Yeah, I mean, we've spent a lot of time looking

backward, but I actually think this question is reversed. There's an approach where, when we talk about developers, we talk about workflow: what's the workflow and what's the use case? That's a really important way to look at things. But when we think about the kinds of evolution we've needed for things like AI, to make major product changes, I actually think we have to go back to that older model and talk about what the data structures are and what the simple APIs are, like the CRUD

APIs, that we need, because we don't know what's coming. By the time we fully understand what the workflow looks like, it's too late. There are definitely cases where we need to do that, but I think about the work we do in AI right now, where the primary thing I work on is trying to get these new models into products: how do we make them something that's operating in production, has all the stuff it needs, and really works for a product? And we don't know. People

ask what we are doing for 2025, and the answer is we're doing what we need to do. I think we have some great ideas, and we think we're doing what we need to do, and I've really been encouraging the teams to assume that everything will change. You have to pick some kinds of basic functionality, some parts of the API that you believe are important, and you have to collect that data, and someone will come with a new use for it. So it's really about thinking in modular pieces rather than end-to-end workflow. Yes,

you want to support that workflow, but how do you do that in a way that gives you components you can play with when the next great idea shows up? Because you don't have six months from the time someone has the next great idea to make something that really is going to work. You have 10 days, three weeks, maybe six weeks, but you have to have pieces you can work with. You have to have APIs that you can use for new use cases, and you have to have data structures that you can repurpose, right?
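The point about small pieces that can be repurposed can be illustrated with a minimal Python sketch. Everything in it is hypothetical and is not taken from the discussion or from any Google system: the `Record` type, the `ALLOWED_LICENSES` policy, and the `audit` and `filtered_batches` helpers are assumptions chosen only to show one single-purpose check serving two different callers.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Iterator, List


@dataclass(frozen=True)
class Record:
    """Hypothetical data record; the fields are illustrative only."""
    text: str
    license_tag: str


# Hypothetical policy: only these license tags count as compliant.
ALLOWED_LICENSES = frozenset({"cc0", "cc-by"})


def is_compliant(record: Record) -> bool:
    """Small, single-purpose check that knows nothing about its callers."""
    return record.license_tag in ALLOWED_LICENSES


def audit(records: Iterable[Record],
          check: Callable[[Record], bool]) -> Dict[str, int]:
    """First use: report how many records pass or fail the check."""
    counts = {"passed": 0, "failed": 0}
    for record in records:
        counts["passed" if check(record) else "failed"] += 1
    return counts


def filtered_batches(records: Iterable[Record],
                     check: Callable[[Record], bool],
                     batch_size: int) -> Iterator[List[Record]]:
    """Later use: apply the same check while batching data for a consumer."""
    batch: List[Record] = []
    for record in records:
        if not check(record):
            continue  # drop records that fail the check
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch  # emit the final partial batch
```

Because `is_compliant` is a plain function over a plain record, a caller can pass it to `audit` for a report or to `filtered_batches` for enforcement without changing the check itself.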

I built this thing to tell me if a data set is compliant, but I actually need to use it to enforce, during the training loop, the things that I want to have happen. New use cases come all the time, so we have to think carefully about how we build the underlying pieces, things like Pathways, bits and pieces that we can use to really shape the next idea into something that's a real product. Yeah. So the journey here has been astounding. I mean, I think

it's safe to say, though, that the journey forward over the next few years is probably going to be even more exciting. So, looking forward, what do you all think are going to be some of the most promising, but also concerning, implications of generative AI and its impact? Carrie, why don't we start with you? Well, I think the thing that I worry about is: how do we use generative AI to access information, to provide information, to support things like captioning or interacting with people in remote locations

or being able to really summarize data? I think there are some fantastic use cases, like the things we do with images and medical imaging. But how do we not use it to replace things that really require human judgment as well? For me that's something I really think about: how do we find these really compelling use cases for it? How do we engineer it so we really know that we're delivering high-quality data, we have users who trust it, and we really understand what's happening, to the extent that we can, with a

new technology? But at the same time, how do we pick the use cases where it really enables new things, helps people do their jobs, helps people interact with the world, find information, and get the best results, but doesn't necessarily replace all the things that need to be generated as new information in the world and need humans working with them and exercising judgment? Because it's a very different kind of loop that happens in that process. Yeah, the judicious application of the technology is going to be super important. Jeff, how about yourself?

What do you think is promising, and concerning? Yeah, on the promising side, I think there's so much potential in many, many areas of endeavor. If you think about education, we're close to having the ability to put in some material in a variety of different modalities, be it text, chapters of a textbook, 20 research papers you're trying to understand, or a video of a lecture, and then have a system that can help you, in the way

you learn best, learn from that material. I think of the NotebookLM feature that was launched maybe a month or so ago, where you can put in material in one form and get out a two-person AI-generated podcast about it. Some people don't learn very well from a PDF; they learn better from a conversation, and this could be great for that kind of learning style. Other learning styles should be adapted to and dealt with as well. So I think education, healthcare. Yes. The

ability to help people get things done that they want at a high level: can you tell an AI agent, either a virtual one or eventually a physical robotic one, to go off and do something that you care about, and it does a bunch of actions on your behalf, and maybe comes back and asks you some questions, like did you mean this or that, or would you prefer this or that, but enables people to do more? I think that's the aspiration we should have for AI: to really figure out how to

maximize this potential to help us all accomplish more. On the worry side, I think there are a bunch of concerns, and one has to be pretty careful about how one deploys AI systems. Take misinformation: misinformation could always be generated, but now you can generate it in a turbocharged way that's very personal and even more realistic in some ways. So that's certainly a concern, particularly this year, with so many people going to the polls in countries around the world. Yes.

That's certainly one area of concern. I think deploying systems in ways that are not biased by the world as it is, but instead aspire to the world that we want it to be, is what we should do. Yeah, very insightful. Eric, what do you think? Well, I've done a lot of work in developing countries, and one thing that's always struck me, that I think we can address, is that to first order developing countries don't have enough specialists. I remember Rwanda had one guy who could read an MRI, and if he was

out of the country then they had zero. We've done some of this already; there's been quite good work around things like diabetic retinopathy, getting assistance reading those images, X-rays, things like that. But I feel like if you just broaden the category, we need specialists in places where there are no specialists, and the combination of internet access and AI seems like a pretty complete solution to that for so many specialties. That's what I'd love to see happen, because it's empowering to those areas. On the worry

side, I tend to think about it the way I think about databases, where we separate things that are read-only and presenting information from things where you start to make transactions or changes. Those are not too bad either, because at least you can undo those in a database system, so undoable changes are not so bad. And then there are irreversible changes, and before we have automated systems making irreversible decisions, I think we need some thought. Yeah. And the risk we're in is not

so much at Google, for example, where I don't worry about this; it's that so many people can try so many control systems over the next decade, and not all of them will understand all of these trade-offs. So we will have some badly designed control systems that make bad decisions, and that's going to be a learning decade, I would say. But that's one I definitely worry about. Yeah, we're going to be living with the positives and the concerning outcomes over the coming decade, and there'll be lots of experience and policy that

we're going to have to develop. Keeping the focus on the future, and Eric, staying with you to begin with: what are you working on next? What are you most excited about right now? Lots of things, but I would say the ones I'm working on most are about what's next for Kubernetes, and AI is a big part of that story. It is a good foundation for AI, but it does nothing particularly special to enable AI or make AI workloads easier to do, especially given how much different stuff

is going on under that single label. It's quite complicated. That actually is a place where a little more structure could help applications be easier to write and easier to use, so we'll see if that can go somewhere. I'm still doing a lot of work on open source and open source security. We depend on open source at Google, but we also depend on it for all of our critical systems, whether that's water utilities, pipelines, or electrical grids, and those are now subject to nation-state attacks. So it would be nice if we could fix that.

Right? Not trivial, but I think it's an important problem that I'm also working on, and a few things related to that. But that's probably a good place to stop; I think those are two big ones. Absolutely, very good. How about yourself, Carrie? What are you excited about? Well, I have some stuff that is a continuation of Borg and compute work and just the scaling that Jeff's been talking about: how do we really get there? You know, people are thinking about

having these big monolithic supercomputer models versus being able to be much more flexible and take advantage of all the great chips and all the stuff that's out there. But also, just opportunistically, how do we train models? How do we go out there and say, okay, I've got room today, how can I make this better, and have that fit in really seamlessly? And the other thing that I've been very interested in, on the data side, is that we're moving to more multimodal: you put in text, you get speech; you

put in speech you get video you put in video you get who knows what it maybe whatever is most appropriate right and and I think figuring out how to manage all that data make sure we're producing um results that really are good quality in all the ways we think about quality right that that are helpful to people that help them with whatever they're doing but also you know that respect kind of the opportunity of not producing fake images and and what data we have on hand and how we use it and how we get it

to the right place is is a big part of that process as well a lot ahead here Jeff how about yourself what what are you seeing as next um I mean I guess a few things so one is I'm one of three technical leads for the Gemini model building effort respons um which is like an exciting project with lots and lots of amazing people working on improving our models giving them new capabilities um one I'm particularly excited about is is the long context capability we introduced in Gemini 1.5 where instead of you know a few

tens of thousands of tokens the models could you know get inputs that were as long as you know a million or two million tokens which is like you know a thousand pages of text or a two hour video or 20 hours of audio or something um and I think the interesting thing about material in the input to the model is it's got a very clear view of that so it tends to hallucinate less and it's able to sort of refer back to like these paragraphs or extract like all the results from the 20 papers you

put in and like give transform them and put them in a useful form for you based on on what you asked the model to do so I'm excited about how do we push that to the next you know level which would be not just here's 20 papers you give the model but how can you actually get you know potentially all information in the context window in some useful form that the model can pay attention to yeah uh and then the other thing I'm spending a bit of time on is like interesting new approaches to Hardware

starting to think about that continuing in the in the tradition yeah lot lot of opportunity there ahead okay let's um I think maybe compose the two the past and the future so what have been some of the most surprising aspects of the way that Google has grown over the past two decades maybe we'll go back to you Eric well obviously entering the cloud space was a big transition and I think it's one that Google was probably not as ready for as it thought it was I think the reason comes back to this fact that Google

has a especially then a very unique architecture that's great for large scale systems but it's not great for babysitting lots of pets which is kind of what VMs and clouds were at the time and so uh you know that's a two-way street we have to learn how to support the workloads that people wanted to run and that took a while uh and uh other Enterprises are not like Google right just because we know what Google wants does not mean we understand what cloud customers want right so it took us a while to learn that uh

but that being said I think uh there's now built a large capacity to understand and serve Enterprise customers that didn't exist before and that whole transformation has been great part to be fun of great part to be great fun to be part of let's try that again uh so that was certainly entertaining at times but also I think transformative for Google yeah that I think has been an incredible journey and the success that we have and also the AI opportunity really comes back to our strengths and that's been also interesting to see uh as

this sort of AI Revolution and infrastructure has taken hold going back to some of our core capabilities whether it's tpus Borg kubernetes and how that really placed the heart of AI and cloud has been exciting um Jeff let's go to your word I mean um you see the future clearly so but what's what's been surprising um you know I think one thing that I really like and appreciate is how Timeless the company's mission statement has been um you know I think we've been working on this journey for how do we take information and make it

uh universally accessible and useful yes and you know the early days of search is you know an iteration of that uh the most recent AI models that can you know learn from lots of information in the world and transform it transform it across modalities and also how much is left to do that still fits in that mission you know yes uh why can't we we should make all information available in all languages in the world that's right or be able to transform any information into the right modality for the the person who wants that

information regardless of what its source modality is and I think these are really hard computer science and AI problems and there's plenty to do but when we accomplish some of these things you know users will benefit and that's going to be amazing yep yep now the the timelessness has been absolutely surprising and invigorating I think Carrie what do you think what's been surprising for you I mean I think it's been surprising how applicable some of the things you know as Jeff said that we did earlier turned out to be to other products even in cases

where you know we didn't even understand at the time that that was going to be true right and and I think we see now some of the the pieces that were built for traditional web indexing right that that labeled documents that labeled images for web indexing and suddenly we're we're putting all this load on them right where we're using them for all these new things in the AI space to make sure we have the right results to be really fast with some of this and in some cases we're feeding some of the new generative AI

back into those pieces to improve the existing products right and and I think it's been both you know having been around for a long time not as long as Jeff but you know quite a while um quite a while I think you know initially a lot of these things were sort of built on the spot right we knew that we didn't understand how to respond in certain languages or we didn't understand what aspects of the web made a page really good in India versus what it would look like in North America or something like that

and we had to tackle some of these problems just from you know experimental results but now as we move into other areas whether it's something with photos it's something with a customer in Google Cloud who has a specific need or it's some of the generative AI work we're seeing that we can actually you know feed through some of that expertise but also we can feed back in the new things that we're we're bringing in the new models and they actually improve some of that underlying very basic stuff at the core right and I think that's

been a pretty exciting opportunity to to bring it all together um and I hope that that's sort of the direction we go with some of this work too yeah the timelessness has been uh fantastic uh look I've loved this conversation it's been a privilege to have you all here uh thank you Jeff Carrie and Eric for joining us today it's been really amazing to hear firsthand how your work and that of countless others of course at Google laid the foundation for today's pivotal AI moment [Applause] [Music]
