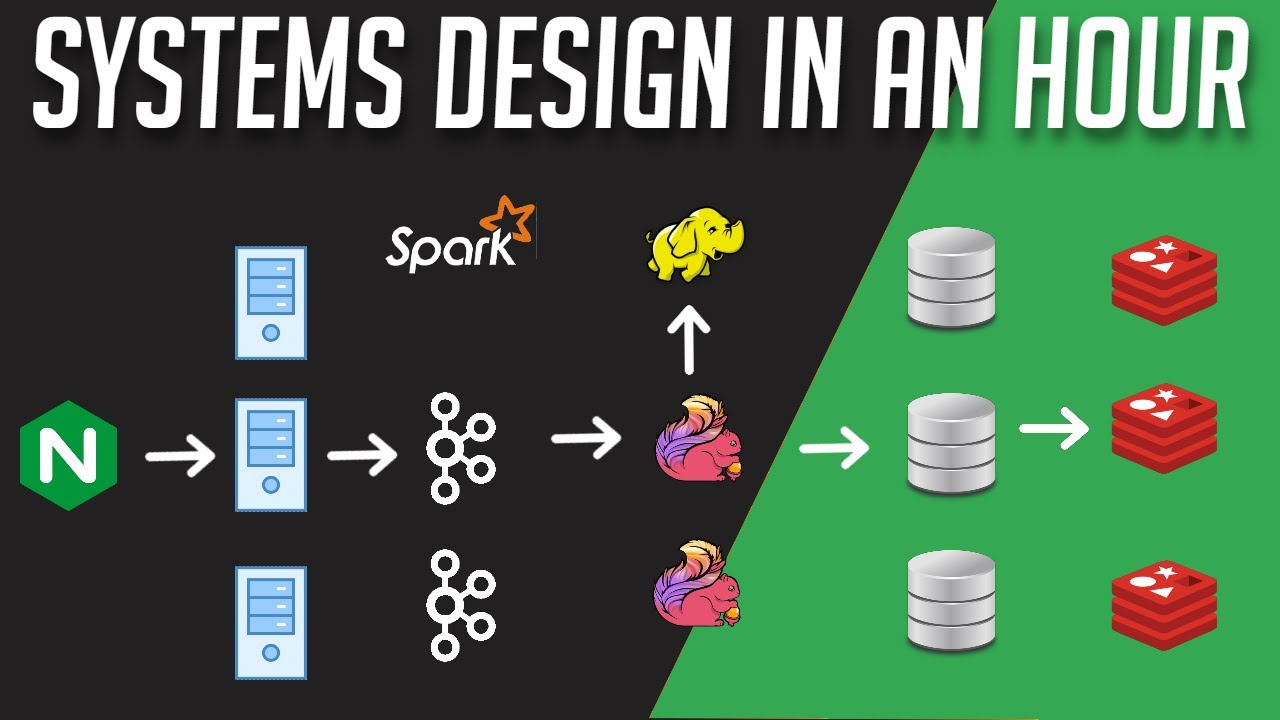

"All right, I'm going to push this data pipeline to production." "Okay, go for it." "Wait, team, what's happening? Why are we being charged $1.2 million already?" "Uh, what?"

Calvin, a software engineer at Shopify, and his team were tasked with building a data pipeline for a new marketing tool for Shopify merchants. This data pipeline would be rolled out to a small, selected group of merchants as part of the early release. The merchant data was sent to Kafka and ingested using Apache Flink to perform various calculations. Kafka is a distributed streaming platform that is used for building

real-time data pipelines and streaming applications. It uses a publish-subscribe model: producers publish data to a topic, and any system subscribed to that topic can read the data once it is available. Topics in Kafka serve as logical containers for data, acting as categories or feeds where records are written by producers and read by consumers. Topics can be partitioned into multiple segments spread across the cluster's brokers. The brokers are responsible for receiving data from producers, storing it in topics, and serving it to consumers, while also managing replication and partitioning for

high availability and fault tolerance. Using Kafka as an intermediate layer between the data sources and Apache Flink provides several advantages for distributed, real-time data processing. One, it decouples the producers and the consumers, which allows for flexibility and scalability because producers and consumers can write and read data at their own pace. Two, it provides buffering and helps with backpressure: bursts of data or temporary load spikes can happen, and Kafka can buffer them to avoid overwhelming Apache Flink. Three, data retention and replayability, which help to recover from

failures. Apache Flink is a framework designed for processing streams of data. Upon receiving the data, Apache Flink performs various calculations and uses RocksDB, a key-value store optimized for fast storage, to store state. This stateful approach enables Flink to maintain historical context or patterns of the data it has processed. This worked with the very small, limited subset of merchants. However, they were already ingesting over 1 billion rows of data even with just this small subset. A quick Google search shows that Shopify has over 1 million merchants across the globe. Therefore, this data pipeline would not be

sustainable after the initial release. They aimed to streamline their workflow by outsourcing certain parts of the Apache Flink pipeline to an external SQL-based data warehouse. In this setup, Flink would simply submit queries to the data warehouse, which in turn would write the results directly to Google Cloud Storage. This essentially means that data ingestion could be removed entirely from the pipeline, allowing the external data warehouse to manage it. This approach aimed to boost the overall throughput of Apache Flink for the general release. When deciding which data warehouse to use, it needed to meet three criteria. One,

automatically load the data set daily. Two, handle 60 requests per minute with ease. Three, export the results to Google Cloud Storage. The data warehouse they ultimately chose was BigQuery, a platform specifically designed for large-scale data processing and analytics. It can easily store petabytes of data. I don't actually even know how big that is. Damn, that's big. What's even more impressive is that you can query a very large data set in a matter of seconds. Shopify already had an internal tool that Calvin and his team could use to load their initial billion rows

of data into BigQuery. After the data load was completed, they ran their first query and got back a very interesting log message: a single query was scanning through 75 gigabytes of data. Yikes. Remember how one of their criteria was to be able to handle 60 requests per minute? Why don't we perform some calculations: 60 requests per minute times 60 minutes per hour times 24 hours a day times 30 days in a month equals 2,592,000 queries per month. Multiply that by 75 gigabytes, and that equals 194,400 terabytes of data scanned

each month. According to BigQuery's on-demand pricing at the time, that would have cost them $949,218.75 USD per month. That's almost enough to buy a box to live in in Toronto, but still, that's insane. Okay, what's going on? While the exact query is not known, it likely resembles a scenario where they select everything from a table based on certain conditions, such as timestamp and geography. If the data in BigQuery isn't sorted by these criteria, the platform needs to scan through countless rows to identify all the data meeting the specified WHERE conditions.
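
If you want to sanity-check that monthly bill yourself, here is a minimal Python sketch of the arithmetic. The price of 5 USD per terabyte scanned and the conversion of 1,024 gigabytes per terabyte are my assumptions, since the video only refers to the on-demand pricing at the time. The function names are made up for illustration. With those assumptions, 60 requests per minute at 75 GB per query lands on the figure quoted above.

```python
# Rough sketch of the monthly on-demand scan cost from the video.
# ASSUMPTIONS (not stated in the video): 5 USD per TB scanned,
# and 1 TB counted as 1024 GB. Function names are hypothetical.

ASSUMED_USD_PER_TB = 5.0
GB_PER_TB = 1024


def queries_per_month(requests_per_minute: int, days: int = 30) -> int:
    """Queries issued in a month at a constant request rate."""
    return requests_per_minute * 60 * 24 * days


def monthly_scan_cost_usd(
    requests_per_minute: int,
    gb_scanned_per_query: float,
    usd_per_tb: float = ASSUMED_USD_PER_TB,
) -> float:
    """Monthly cost if every query scans the same amount of data."""
    total_gb = queries_per_month(requests_per_minute) * gb_scanned_per_query
    return total_gb / GB_PER_TB * usd_per_tb


if __name__ == "__main__":
    print(f"{queries_per_month(60):,} queries per month")
    print(f"{monthly_scan_cost_usd(60, 75):,.2f} USD per month")
```

The only input that matters for the bill is how many gigabytes each query scans, so you can plug in a different value for `gb_scanned_per_query` to see how quickly the cost moves.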

They knew that they had to cluster their tables. Clustering means sorting your data based on one or more columns in your table. These columns are usually the fields used in the WHERE condition. For example, using the previous query, we can cluster on the timestamp and geography columns. By doing so, BigQuery will only scan the relevant data within the specified conditions, resulting in a significant reduction in the amount of data being scanned. After applying clustering to their tables, the log for the exact same query showed only 108.3 megabytes of data scanned. Isn't

that amazing? Let's redo our cost calculation: 2,592,000 queries per month times only 0.1 GB equals 259,200 gigabytes of data scanned per month. According to Shopify, this optimization brought their cost down to only $1,370 USD per month. While clustering resulted in a big W for them, they didn't stop there. They realized that there are additional ways to optimize cost. One, avoid using the SELECT * statement: only select the columns that you need, to limit what the engine scans. Two, partition your tables: if you can determine how you're querying your data, partitioning your data can

significantly reduce the amount of scanning being done. Three, don't run queries to explore or preview data, as this can result in unnecessary cost. BigQuery provides a preview option to view your data for free instead. This is what I love about software engineering and system design: this concept of partitioning our data and clustering it can be applied in real life and can produce amazing results. As seen in this example, the data pipeline went from costing almost $1 million USD to about 1K per month. I hope that you were able to learn something from this video

and maybe even apply it at your job. Let's start the year strong together, and as always, thank you for watching and see you in the next one.